Introduction: When Machines Began to Learn

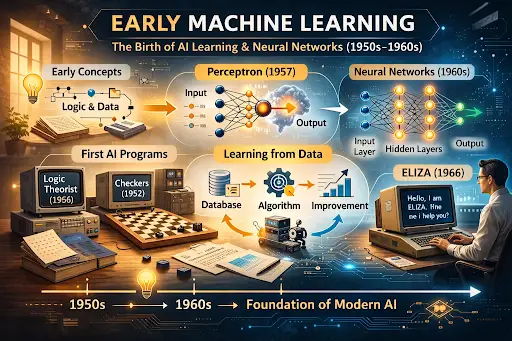

Early machine learning is one of the most fascinating chapters in the history of artificial intelligence. While ideas about intelligent machines date back to Alan Turing's foundational work and the landmark 1956 Dartmouth Conference, it was during the 1950s and 1960s that computers began to perform tasks that could be described as “learning.”

Early machine learning experiments marked the transition from theoretical AI concepts to programs capable of improving their performance through experience. From mathematical problem-solving to pattern recognition and basic neural networks, these programs laid the groundwork for the AI systems we rely on today.

What is Early Machine Learning?

Machine learning is the science of creating algorithms that allow computers to learn from data rather than following strictly programmed instructions. In the early days, researchers focused on simple models that could recognize patterns or solve logical problems, long before today’s deep learning revolution.

Early machine learning was not about vast datasets or sophisticated neural networks. Instead, it was about experimenting with the first AI programs and testing the limits of what computers could do with structured reasoning and small amounts of data.

Pre-Machine Learning Ideas

Before computers could learn, scientists explored ways to model intelligence mathematically. Some of the foundational concepts include:

- Pattern Recognition: The ability to identify structures or regularities in data, which later influenced early AI experiments.

- Statistical Learning: Simple probabilistic models were used to make predictions based on observed outcomes.

- Logic and Reasoning: Inspired by Alan Turing's work on machine intelligence, researchers asked whether logical reasoning could be simulated by a machine.

These theoretical frameworks paved the way for the first attempts to program computers that could adapt or “learn” from examples.

The Perceptron: The First Learning Machine

One of the most iconic early machine learning experiments was the Perceptron, developed in 1957 by Frank Rosenblatt at the Cornell Aeronautical Laboratory.

- Purpose: The perceptron was designed to recognize visual patterns, such as letters and shapes.

- How it worked: It simulated a simplified neuron, taking inputs, computing a weighted sum, and adjusting its weights whenever its output was wrong. This is an early form of supervised learning.

- Impact: The perceptron demonstrated that machines could improve over time through experience, an idea that directly influenced the later development of neural networks.

Though limited in complexity, the perceptron proved that computers could move beyond fixed instructions and adapt to data.
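To make the weight-adjustment idea concrete, here is a minimal sketch of a perceptron-style learning rule in modern Python. It is an illustration only, not a reconstruction of Rosenblatt's original system: the function names, the learning rate, and the logical AND training set are all assumptions chosen for brevity.

```python
def train_perceptron(samples, epochs=20, lr=1.0):
    """Train a single threshold unit on (inputs, target) pairs with 0/1 targets.

    Illustrative sketch: names and defaults are assumptions, not historical.
    """
    n_inputs = len(samples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for inputs, target in samples:
            activation = sum(w * x for w, x in zip(weights, inputs)) + bias
            prediction = 1 if activation > 0 else 0
            error = target - prediction  # -1, 0, or +1
            if error != 0:
                # Nudge each weight toward the correct answer.
                weights = [w + lr * error * x for w, x in zip(weights, inputs)]
                bias += lr * error
                mistakes += 1
        if mistakes == 0:  # every sample classified correctly
            break
    return weights, bias


def predict(weights, bias, inputs):
    """Return 1 if the weighted sum exceeds the threshold, else 0."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


# Hypothetical toy data: the logical AND of two binary inputs.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_DATA]
```

Because AND is linearly separable, the loop stops once a full pass produces no mistakes. A single unit like this cannot learn patterns that are not linearly separable, which is the kind of limitation the phrase "limited in complexity" refers to.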

Early Learning Programs Beyond Perceptrons

Around the same period as the perceptron, several other early AI experiments explored learning in different contexts:

- Nearest Neighbor Methods: Algorithms that classified data points based on similarity to known examples.

- Checkers Programs: Arthur Samuel created checkers-playing software that improved through repeated play, establishing games as an early testing ground for machine learning.

- Rote Learning Systems: Simple programs that memorized input-output pairs, demonstrating early supervised learning techniques.

These experiments were foundational, showing that learning could be applied in a range of domains, from games to pattern recognition.
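Two of these ideas, nearest neighbor classification and rote learning, are simple enough to sketch in a few lines of modern Python. The snippet below is a hedged illustration rather than a historical implementation: the names `nearest_neighbor` and `RoteLearner`, the Euclidean distance measure, and the sample data are all assumptions made for the example.

```python
import math


def nearest_neighbor(examples, query):
    """Return the label of the stored example closest to the query point.

    `examples` is a list of (point, label) pairs; distance is Euclidean,
    which is an assumption made for this sketch.
    """
    _, label = min(examples, key=lambda ex: math.dist(ex[0], query))
    return label


class RoteLearner:
    """Memorize input-output pairs and recall them exactly (no generalization)."""

    def __init__(self):
        self.memory = {}

    def learn(self, situation, response):
        self.memory[situation] = response

    def recall(self, situation, default=None):
        # Unseen situations fall back to the default: rote learning
        # only helps when the exact input has been stored before.
        return self.memory.get(situation, default)


# Hypothetical labeled points forming two loose clusters.
EXAMPLES = [
    ((1.0, 1.0), "A"),
    ((1.5, 2.0), "A"),
    ((5.0, 5.0), "B"),
    ((6.0, 5.5), "B"),
]
label_near_a = nearest_neighbor(EXAMPLES, (1.2, 1.1))
label_near_b = nearest_neighbor(EXAMPLES, (5.5, 5.0))

learner = RoteLearner()
learner.learn("position-1", "move-left")  # hypothetical situation and response
known = learner.recall("position-1")
unknown = learner.recall("position-2", default="no stored answer")
```

The contrast is the point of the example: the rote learner answers only for inputs it has already seen, while the nearest neighbor method generalizes to new inputs by comparing them with stored examples.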

Early Neural Network Concepts

While perceptrons were among the first practical learning machines, researchers soon began exploring more sophisticated neural network structures:

- Layered networks: Concepts for multiple layers of artificial neurons were proposed to handle complex tasks.

- Learning rules: Early algorithms focused on adjusting connection weights based on performance, an idea central to modern backpropagation.

- Applications: Researchers tested these networks on character recognition, pattern classification, and basic problem-solving.

These early neural concepts were pivotal in transforming AI from purely symbolic reasoning to systems capable of learning from experience.

Key Figures in Early Machine Learning

Several pioneering researchers played critical roles:

- Frank Rosenblatt: Developed the perceptron and introduced the idea of adaptive weights.

- Arthur Samuel: Created self-improving checkers programs, demonstrating practical learning algorithms.

- Allen Newell & Herbert Simon: Their early AI programs, such as the Logic Theorist and the General Problem Solver (GPS), inspired learning-based experiments.

- Marvin Minsky: Critically analyzed the limits of early perceptrons, notably with Seymour Papert in the 1969 book Perceptrons, and helped shape later AI research.

These figures bridged the theoretical foundations of the Dartmouth Conference and the practical experiments of early machine learning.

How Early Machine Learning Influenced AI Development

The impact of early machine learning experiments was profound:

- Foundation for Modern Algorithms: Concepts like supervised learning, pattern recognition, and neural connections shaped modern AI.

- Practical Demonstrations: Programs like the perceptron and checkers software proved that computers could improve performance over time.

- Bridging Theory and Experiment: Early experiments connected Alan Turing's ideas about machine intelligence with real-world computing systems and the programming languages of the time.

These programs formed a bridge from early AI concepts to modern machine learning and deep learning approaches.

Why Early Machine Learning Matters Today

Understanding early machine learning is critical for several reasons:

- It reveals the step-by-step evolution of AI from imagination to practical learning systems.

- It highlights the importance of experimentation in AI research.

- It demonstrates how foundational ideas, like perceptrons and nearest neighbor methods, still influence neural network research and contemporary machine learning.

By studying these origins, we can appreciate how far AI has come and what lessons from early experiments continue to guide research today.

Frequently Asked Questions (FAQs)

1. What was the first machine learning program?

The perceptron, developed by Frank Rosenblatt in 1957, is widely recognized as one of the first practical learning machines, alongside Arthur Samuel's checkers program from the same decade.

2. How does early machine learning relate to AI?

Early machine learning experiments turned theoretical AI concepts into programs that could adapt and improve, laying the foundation for modern AI.

3. Who were key figures in early machine learning?

Frank Rosenblatt, Arthur Samuel, Allen Newell, Herbert Simon, and Marvin Minsky were pivotal contributors.

4. How did early machine learning programs work?

They used simple algorithms to recognize patterns, adjust behavior based on feedback, or memorize input-output pairs.

5. Why is studying early machine learning important?

It helps us understand the evolution of AI, from simple adaptive programs to today’s advanced machine learning systems.

Conclusion

The history of early machine learning tells a story of imagination, experimentation, and incremental progress. From the first AI programs, like the Logic Theorist and Samuel's checkers software, to the perceptron and early neural networks, these pioneering experiments shaped the history of neural networks and the future of AI.

By studying these foundations, we see how AI evolved step by step, from the theoretical ideas of the Dartmouth Conference to practical learning machines, and how that work laid the groundwork for the powerful AI systems in use today.