Introduction

When diving into the vast and complex world of artificial intelligence, one foundational concept stands out as the natural starting point: the perceptron. Long before we had massive language models and deep neural networks capable of generating art or driving cars, scientists had to figure out how to teach a computer to make a simple, binary decision. The perceptron was one of the first answers to that challenge.

As the cornerstone of artificial neural networks, the perceptron is a simplified digital model of a single biological neuron. It takes in information, weighs each piece according to its learned importance, and fires an output. Understanding how this simple algorithm operates is essential for anyone looking to master machine learning fundamentals. In this guide, we will explore what the perceptron is, break down its core components, trace its history, and examine both its mathematical elegance and its historic limitations.

What is a Perceptron in Machine Learning?

At its core, a perceptron is the simplest form of an artificial neural network: a single layer of weighted connections that acts as a linear classifier. But what does that mean in plain English? Imagine drawing a straight line on a piece of paper to separate a group of red dots from a group of blue dots. If a single straight line can perfectly divide the two groups, the data is “linearly separable,” and a perceptron can learn to draw such a line.

In technical terms, the perceptron is a supervised learning algorithm. This means it learns from a training dataset in which every example is already labeled with the correct answer. You feed it data, it makes a guess, and if the guess is wrong, it adjusts its internal parameters so it is more accurate next time. It is primarily used for binary classification, meaning it sorts inputs into one of two possible groups (e.g., “Yes” or “No”, “Spam” or “Not Spam”, “0” or “1”). Because it uses only a single layer of processing nodes, it is the most basic building block of neural network models.

Components of the Perceptron

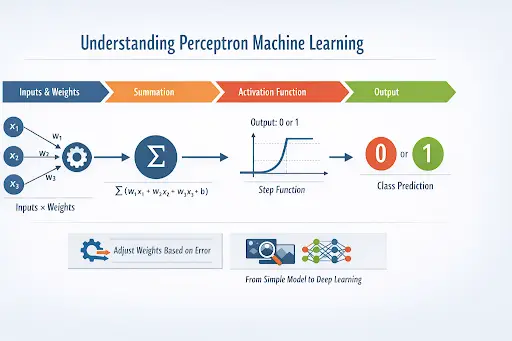

To understand how a perceptron makes a decision, we have to look under the hood at its individual mathematical components. The architecture mimics a biological neuron, taking multiple signals and consolidating them into a single action.

A standard perceptron consists of the following primary parts:

- Input Values: These are the features of the data you are feeding into the model, expressed as numbers. If you are predicting whether a fruit is an apple or an orange, the inputs might be the fruit’s mass and a numeric score for its color.

- Weights: Every input is multiplied by a corresponding weight. Weights determine the “importance” of a specific input. If color matters more than mass in telling the two fruits apart, the color input will end up with a weight of larger magnitude.

- Bias: Bias is an extra learned value added to the weighted sum. It shifts the decision boundary away from the origin. Without it, the boundary would always have to pass through the origin, and an input whose features are all zero would always produce the same output.

- Net Sum (Weighted Sum): The perceptron algorithm calculates the sum of all inputs multiplied by their weights, and then adds the bias.

- Activation Function: Finally, the net sum is passed through an activation function. In a classic perceptron, this is a “step function.” If the net sum is above a threshold (conventionally zero, since the bias already shifts the firing point), the perceptron outputs a 1 (fires). Otherwise, it outputs a 0 (does not fire).
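In symbols, a perceptron with inputs x1, ..., xn, weights w1, ..., wn, and bias b computes the net sum z = w1·x1 + ... + wn·xn + b and outputs 1 if z > 0, otherwise 0. The short Python sketch below shows that forward pass. The feature values, weights, and bias are hypothetical numbers chosen for illustration (imagine the two fruit features scaled to the 0 to 1 range), and the strict “greater than zero” firing rule is one common convention; some texts also fire when the sum is exactly zero.

```python
def step(z: float) -> int:
    """Step activation: fire (1) only when the net sum is above zero."""
    return 1 if z > 0 else 0


def predict(inputs: list[float], weights: list[float], bias: float) -> int:
    """Forward pass of a single perceptron."""
    # Net sum: multiply each input by its weight, add them up, then add the bias.
    net_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return step(net_sum)


# Hypothetical fruit features, already scaled to 0..1: [mass, color score].
# These weights and this bias are made up for illustration, not learned from data.
weights = [0.2, 0.9]
bias = -0.5

print(predict([0.4, 0.8], weights, bias))  # 0.08 + 0.72 - 0.5 = 0.30 -> prints 1
print(predict([0.5, 0.1], weights, bias))  # 0.10 + 0.09 - 0.5 = -0.31 -> prints 0
```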

The History of the Perceptron Algorithm

The story of the perceptron is deeply intertwined with the very dawn of computing. The theoretical groundwork was laid during the era of Alan Turing’s research into machine intelligence in the 1940s and 50s, a time when mathematicians first began asking whether machines could mimic human thought. However, the field of AI didn’t officially get its name until the famous 1956 Dartmouth Conference, where visionary scientists gathered to explore “thinking machines.”

Shortly after, in 1957, a psychologist named Frank Rosenblatt, working at the Cornell Aeronautical Laboratory, invented the perceptron. Unlike today’s software-based models, Rosenblatt’s design was realized as a physical machine. The “Mark I Perceptron” was custom-built hardware wired with potentiometers and motors, designed for image recognition. It was one of the first AI systems that could tangibly learn through trial and error, adapting its own weights without human intervention.

During this exciting period of early machine learning, the media heralded the perceptron as the embryonic precursor to a truly conscious computer. The history of perceptron research reflects the ambitious optimism of the 1950s and 60s, and it established foundational concepts that we still rely on today.

How Does the Perceptron Work?

Understanding the inner mechanics of this model requires looking at the perceptron learning rule. The beauty of the perceptron lies in its simplicity and its ability to learn from its mistakes iteratively.

Here is the step-by-step process of how it learns:

- Initialization: The algorithm starts by setting all the weights and the bias to zero (or small random numbers).

- Forward Pass: A data point from the training set is fed into the perceptron. It multiplies the inputs by their current weights, adds the bias, and applies the step function to generate a prediction (0 or 1).

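- Error Calculation: The algorithm compares its prediction against the actual, correct label. The error is the label minus the prediction: 0 when the guess is right, and +1 or -1 when it is wrong.

- Weight Update: This is where the magic of the perceptron learning rule happens. If the prediction is correct, the weights remain unchanged. If the prediction is wrong, each weight is adjusted by a small step size (the learning rate) multiplied by the error and by that weight’s input, and the bias is adjusted by the learning rate multiplied by the error. This nudges the decision boundary in the direction that would have made the prediction correct.

- Iteration: The algorithm repeats this process for every data point in the dataset (one full pass is called an epoch) and keeps running epochs until it makes no more mistakes, or until a set maximum number of epochs is reached.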
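The five steps above can be sketched in plain Python as follows. This is a minimal illustration under a few assumptions of our own: labels are 0 or 1, all weights start at zero, and the names `train_perceptron`, `learning_rate` (0.1), and `max_epochs` (100) are hypothetical choices for this example rather than a standard API.

```python
def predict(weights, bias, x):
    """Forward pass: weighted sum plus bias, then a step function."""
    net_sum = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if net_sum > 0 else 0


def train_perceptron(samples, labels, learning_rate=0.1, max_epochs=100):
    """Perceptron learning rule. Returns (weights, bias, epochs_run, converged)."""
    weights = [0.0] * len(samples[0])  # Initialization: start every weight at zero
    bias = 0.0
    for epoch in range(1, max_epochs + 1):
        mistakes = 0
        for x, target in zip(samples, labels):
            error = target - predict(weights, bias, x)  # 0 if correct, +1 or -1 if wrong
            if error != 0:
                mistakes += 1
                # Weight update: move each weight by learning_rate * error * input
                weights = [w + learning_rate * error * xi for w, xi in zip(weights, x)]
                bias += learning_rate * error
        if mistakes == 0:  # a full epoch with no mistakes: stop early
            return weights, bias, epoch, True
    return weights, bias, max_epochs, False  # hit the epoch cap without converging


# Toy example: learn the AND gate, which is linearly separable.
X = [[0, 0], [0, 1], [1, 0], [1, 1]]
y = [0, 0, 0, 1]
weights, bias, epochs, converged = train_perceptron(X, y)
print(converged, [predict(weights, bias, x) for x in X])  # True [0, 0, 0, 1]
```

Because the weights start at zero and the step function compares against zero, the learning rate in this sketch only rescales the weights and bias; mathematically, it does not change which predictions the perceptron makes. The step size matters more when weights start at small random values.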

Applications of Perceptron in Machine Learning

While modern data science relies on much more complex systems, perceptron machine learning still holds practical value, particularly in scenarios requiring rapid, binary decisions on linearly separable data.

In the real world, single-layer perceptrons are used as simple classifiers. For example, they can be utilized in basic logic gates (like simulating AND/OR functions in hardware), straightforward sentiment analysis (categorizing a short sentence as positive or negative based on keyword presence), and rudimentary spam filters. While a single perceptron cannot handle the nuances of human language, it remains an excellent educational tool and a lightweight solution for straightforward, two-class sorting problems where computational efficiency is the priority.
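The logic-gate use case can be illustrated without any training at all: with hand-picked weights, a single perceptron reproduces AND and OR. The weights (1.0, 1.0) and biases (-1.5 and -0.5) below are one possible choice among many, picked for readability, and the function names are illustrative.

```python
def perceptron(x1: int, x2: int, w1: float, w2: float, bias: float) -> int:
    """Two-input perceptron with a step activation."""
    return 1 if w1 * x1 + w2 * x2 + bias > 0 else 0


def and_gate(x1: int, x2: int) -> int:
    # Only 1 + 1 - 1.5 = 0.5 clears zero, so it fires only for (1, 1).
    return perceptron(x1, x2, 1.0, 1.0, -1.5)


def or_gate(x1: int, x2: int) -> int:
    # Any single active input gives 1 - 0.5 = 0.5 > 0, so it fires.
    return perceptron(x1, x2, 1.0, 1.0, -0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND:", and_gate(a, b), "OR:", or_gate(a, b))
```

Changing only the bias turns AND into OR, which echoes the earlier point that the bias shifts where the perceptron fires.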

Limitations of the Perceptron Algorithm

Despite the initial hype surrounding Frank Rosenblatt’s invention, the perceptron had a fatal flaw: it is strictly a linear classifier. This means it can only separate data that can be divided by a straight line (or a flat plane, or hyperplane, in higher dimensions).

In 1969, Marvin Minsky and Seymour Papert published a highly influential book titled Perceptrons, which mathematically proved these limitations. They demonstrated that a single-layer perceptron could not solve the “XOR (Exclusive OR) problem,” a very simple non-linear logic puzzle. The result dampened the hopes of researchers and funding agencies, and the loss of financial support and academic interest that followed contributed to a period of stagnation in artificial intelligence research, known today as one of the infamous AI winters.

Evolution: Multi-Layer Perceptrons and Deep Learning

The solution to the perceptron’s limitations was conceptually simple but mathematically complex to execute: stack them together. This realization shaped the evolution of machine learning algorithms. By connecting multiple perceptrons into distinct layers (an input layer, one or more “hidden” layers, and an output layer), researchers created the Multi-Layer Perceptron (MLP).

Adding hidden layers allowed the network to bend and fold the decision boundaries, solving non-linear problems like the XOR puzzle. However, training these multi-layered networks required a new mathematical approach. The popularization of the “backpropagation” algorithm in the 1980s allowed errors to be propagated backward through the hidden layers, updating weights across the entire network. Because backpropagation relies on gradients, it also required replacing the step function, whose gradient is zero almost everywhere, with smooth activation functions such as the sigmoid. This evolution from a single-layer perceptron to multi-layered networks is the foundation of modern deep learning, powering everything from facial recognition to generative AI today.
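To see why stacking helps, the sketch below wires three perceptrons into a tiny two-layer network that computes XOR: a hidden layer containing an OR unit and a NAND unit, followed by an output unit that ANDs their results. The weights are set by hand purely for illustration (in a trained network, backpropagation would learn them instead), and the function names are hypothetical.

```python
def perceptron(inputs, weights, bias):
    """Single perceptron: weighted sum plus bias, then a step function."""
    return 1 if sum(x * w for x, w in zip(inputs, weights)) + bias > 0 else 0


def xor_network(x1: int, x2: int) -> int:
    # Hidden layer: two perceptrons, each drawing its own straight line.
    h_or = perceptron([x1, x2], [1.0, 1.0], -0.5)     # OR: fires if any input is 1
    h_nand = perceptron([x1, x2], [-1.0, -1.0], 1.5)  # NAND: fires unless both are 1
    # Output layer: fires only when both hidden units fire (an AND).
    return perceptron([h_or, h_nand], [1.0, 1.0], -1.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "XOR:", xor_network(a, b))
```

Each hidden unit on its own is still a linear classifier; it is the combination of their two boundaries that carves out the non-linear XOR region.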

Frequently Asked Questions (FAQs)

1. What is the difference between a perceptron and a neural network?

A perceptron is the simplest building block of a neural network: a single artificial neuron with a single layer of weights. A full neural network, such as a Multi-Layer Perceptron (MLP), consists of many such neurons organized into multiple interconnected layers (input, hidden, and output layers).

2. Is the perceptron algorithm supervised or unsupervised?

It is strictly a supervised learning algorithm. It requires a labeled training dataset to calculate its errors and adjust its weights during the learning process.

3. Why can’t a single layer perceptron solve the XOR problem?

The XOR problem represents data points that cannot be separated by a single straight line (they are not linearly separable). Because a single perceptron can only draw one straight decision boundary, it mathematically cannot classify XOR data correctly.
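The geometric argument can also be checked with a little algebra. With the step rule “output 1 when w1·x1 + w2·x2 + b > 0,” the point (0, 0) must output 0, which forces b ≤ 0, while (0, 1) and (1, 0) must output 1, which forces w2 + b > 0 and w1 + b > 0. Adding those two inequalities gives w1 + w2 + b > -b ≥ 0, so (1, 1) would also output 1, contradicting XOR. The Python sketch below is a sanity check rather than a proof: it tries every weight and bias on an arbitrary coarse grid (-2 to 2 in steps of 0.5, our own assumption) and counts how many settings classify all four points correctly.

```python
import itertools


def classifies_all(points, labels, w1, w2, b):
    """True if the line w1*x1 + w2*x2 + b = 0 puts every point on the correct side."""
    return all(
        (1 if w1 * x1 + w2 * x2 + b > 0 else 0) == label
        for (x1, x2), label in zip(points, labels)
    )


def count_separating_lines(points, labels):
    """Count (w1, w2, b) settings on a coarse grid that classify every point."""
    grid = [i * 0.5 for i in range(-4, 5)]  # -2.0, -1.5, ..., 2.0
    return sum(
        classifies_all(points, labels, w1, w2, b)
        for w1, w2, b in itertools.product(grid, repeat=3)
    )


points = [(0, 0), (0, 1), (1, 0), (1, 1)]
print("AND:", count_separating_lines(points, [0, 0, 0, 1]))
print("XOR:", count_separating_lines(points, [0, 1, 1, 0]))
```

On this grid, AND is satisfied by at least one setting (for example w1 = w2 = 1.0 and b = -1.5), while XOR is satisfied by none, just as the algebra predicts.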

4. Does the perceptron always find a solution?

If the data provided to the perceptron is linearly separable, the Perceptron Convergence Theorem guarantees that the algorithm will find a separating boundary after a finite number of updates and classify the training data perfectly. If the data is not linearly separable, the algorithm will never converge: its weights keep changing from epoch to epoch, so it will loop endlessly unless stopped, typically by capping the number of epochs.

Conclusion

Understanding perceptron machine learning is not just a history lesson; it is an essential step in comprehending how modern artificial intelligence truly functions. From its early days as a physical machine reading simple shapes to its evolution into the massive, deep neural networks of the modern era, the perceptron remains a marvel of computer science. By grasping how a simple set of inputs, weights, and a step function can “learn” from data, you lay a solid foundation for exploring the incredible, ever-expanding world of artificial intelligence and machine learning.