Introduction

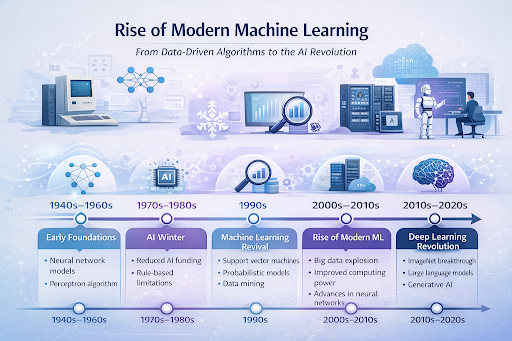

The technological landscape of the 21st century has been reshaped by artificial intelligence, but this transformation did not happen overnight. At the heart of it is the rise of modern machine learning. Unlike early computer programs that relied on rigid, hand-coded rules to perform specific tasks, today’s systems are designed to learn, adapt, and improve from experience. This shift from instruction-driven programming to data-driven learning has allowed computers to see, hear, understand, and predict far more accurately than hand-written rules typically allowed.

The machine learning revolution is a story of theoretical breakthroughs, hardware advances, and vast accumulations of digital data. By understanding the history of modern machine learning, we can better appreciate how algorithms evolved from academic concepts into the engines driving much of today’s global economy. From the foundational ideas of the mid-20th century to the deep learning systems of today, this article traces the journey of machine learning within artificial intelligence.

Early Foundations of Machine Learning

The quest to create intelligent machines has intrigued scientists and mathematicians for generations. The formal beginning of the field is widely attributed to the 1956 Dartmouth Conference, a summer workshop whose 1955 proposal introduced the term “artificial intelligence.” During this era, researchers were highly optimistic about the potential to simulate human intelligence using logic and computational power.

Following this gathering, the first AI programs focused heavily on symbolic AI, where programmers attempted to manually encode human knowledge into machine-readable rules. However, the idea of a machine learning on its own was already brewing. Pioneers like Arthur Samuel, who developed a checkers-playing program during the 1950s and popularized the term “machine learning” in a 1959 paper, demonstrated that a computer could be programmed to learn from its past games and improve its performance over time. This laid the conceptual groundwork for what would eventually become modern machine learning, showing that computers could do more than execute pre-written commands.

The Challenges of Early Artificial Intelligence

Despite the early enthusiasm, creating machines that could genuinely learn proved to be very difficult. In the late 1950s, Frank Rosenblatt introduced the perceptron, an early artificial neuron designed to recognize simple patterns. While it was initially hailed as a breakthrough, it had a hard mathematical limit: as Marvin Minsky and Seymour Papert showed in their 1969 book Perceptrons, a single-layer perceptron can only solve linearly separable problems, so it cannot learn even a function as simple as XOR. This finding severely dampened excitement and funding for neural network research for more than a decade.
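To make the linear-separability limit concrete, here is a minimal Python sketch of a single perceptron trained with the classic error-correction update. The function names, learning rate, and epoch count are illustrative assumptions, not details of Rosenblatt’s original design. The same code fits the AND function, which is linearly separable, but can never fit XOR, which is not.

```python
def train_perceptron(samples, epochs=50, lr=1.0):
    """Train one artificial neuron on ((x1, x2), target) pairs with 0/1 targets."""
    w = [0.0, 0.0]  # one weight per input
    b = 0.0         # bias term
    for _ in range(epochs):
        errors = 0
        for (x1, x2), target in samples:
            predicted = 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0
            delta = target - predicted
            if delta != 0:
                # Nudge the decision boundary toward the misclassified point.
                w[0] += lr * delta * x1
                w[1] += lr * delta * x2
                b += lr * delta
                errors += 1
        if errors == 0:  # every sample classified correctly, so stop early
            break
    return w, b


def accuracy(samples, w, b):
    """Fraction of samples the trained neuron classifies correctly."""
    correct = 0
    for (x1, x2), target in samples:
        predicted = 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0
        correct += int(predicted == target)
    return correct / len(samples)


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

for name, data in [("AND", AND_DATA), ("XOR", XOR_DATA)]:
    w, b = train_perceptron(data)
    print(name, accuracy(data, w, b))  # AND reaches 1.0; XOR stays below 1.0
```

No single straight line separates the two XOR classes, so no choice of weights and bias can classify all four points, however long the training runs.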

As researchers hit a wall trying to scale symbolic logic and early algorithms to complex, real-world problems, the field entered prolonged periods of reduced funding and deep skepticism, known as the AI winters. During these downturns, progress on machine learning algorithms seemed to stagnate. The computational power of the time was too weak, and the available data too scarce, to train models effectively. It also became clear that hand-coding human intelligence was impractical given the nearly unlimited variability of the real world. A new approach was needed.

The Shift Toward Data-Driven AI in the 1990s

The true turning point in machine learning development occurred in the 1990s. Researchers began to step away from trying to build generalized artificial intelligence through symbolic logic and instead focused on solving specific, practical problems using statistics and probability. This era marked the rise of data-driven AI, where algorithms were designed to analyze datasets, find patterns, and make predictions without being explicitly programmed for the task.

Support Vector Machines

During this period, Support Vector Machines (SVMs) became widely used. SVMs are supervised learning models used for classification and regression. By finding the hyperplane that separates different categories of data with the widest possible margin, SVMs provided a robust and mathematically sound way to categorize complex datasets, and they were a leading method for tasks like image categorization and text classification before the deep learning era.
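The idea of a separating hyperplane can be sketched in a few lines of Python. This is a simplified illustration, assuming a linear SVM trained by subgradient descent on the regularized hinge loss rather than the quadratic-programming solvers that SVM libraries typically use. The toy data, function names, and hyperparameters are all made up for the example.

```python
def train_linear_svm(points, labels, reg=0.01, lr=0.1, epochs=200):
    """Fit a 2-D hyperplane w.x + b = 0 using the regularized hinge loss."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), y in zip(points, labels):
            margin = y * (w[0] * x1 + w[1] * x2 + b)
            # The regularization term always shrinks w, which favors a wide margin.
            w[0] -= lr * reg * w[0]
            w[1] -= lr * reg * w[1]
            # Points inside the margin (or misclassified) add a hinge-loss correction.
            if margin < 1:
                w[0] += lr * y * x1
                w[1] += lr * y * x2
                b += lr * y
    return w, b


def predict(w, b, point):
    """Return +1 or -1 depending on which side of the hyperplane the point falls."""
    score = w[0] * point[0] + w[1] * point[1] + b
    return 1 if score >= 0 else -1


# Two hypothetical, well-separated classes labeled +1 and -1.
points = [(2.0, 2.0), (3.0, 3.0), (3.0, 2.0), (-2.0, -2.0), (-3.0, -3.0), (-2.0, -3.0)]
labels = [1, 1, 1, -1, -1, -1]

w, b = train_linear_svm(points, labels)
print(predict(w, b, (4.0, 4.0)), predict(w, b, (-4.0, -4.0)))
```

Only the points closest to the boundary trigger the hinge-loss correction once training settles, which mirrors the role of support vectors in a full SVM.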

Probabilistic Models

Another major advancement was the widespread adoption of probabilistic models such as Bayesian networks. These models use the rules of probability to calculate how likely various outcomes are, given prior knowledge and new evidence. By representing uncertainty explicitly, probabilistic models allowed machines to make well-founded educated guesses, which proved invaluable in fields like medical diagnosis and early spam filtering.
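The core calculation behind these models is Bayes’ rule, which combines prior knowledge with new evidence. The sketch below applies it to a hypothetical diagnostic test; the prevalence, sensitivity, and false positive rate are invented numbers, and this is a single application of the rule rather than a full Bayesian network.

```python
def posterior(prior, sensitivity, false_positive_rate):
    """P(condition | positive test) computed with Bayes' rule."""
    true_positives = sensitivity * prior
    false_positives = false_positive_rate * (1 - prior)
    return true_positives / (true_positives + false_positives)


# Hypothetical numbers: 1% of patients have the condition, the test detects
# 90% of real cases and wrongly flags 5% of healthy patients.
after_one_test = posterior(prior=0.01, sensitivity=0.9, false_positive_rate=0.05)
print(round(after_one_test, 3))  # 0.154

# A second positive result updates the belief again. This assumes the two
# tests are conditionally independent, which is a simplification.
after_two_tests = posterior(prior=after_one_test, sensitivity=0.9, false_positive_rate=0.05)
print(round(after_two_tests, 3))  # 0.766
```

Even with a fairly accurate test, one positive result leaves the probability at about 15 percent because the condition is rare to begin with. That interplay between prior and evidence is what these models capture.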

Data Mining

As digital record-keeping became the norm, companies found themselves sitting on massive databases of untapped information. This led to the explosion of data mining: the process of using statistical and machine learning techniques, often unsupervised ones, to discover hidden patterns, anomalies, and correlations within large datasets. Data mining bridged the gap between raw data and actionable business intelligence, demonstrating the commercial viability of these algorithms.
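One common pattern-discovery technique is clustering. As a minimal sketch, the Python code below runs k-means, a standard unsupervised algorithm, on a handful of made-up two-dimensional points. The data, the starting centroids, and the function name are assumptions chosen for illustration.

```python
import math


def kmeans(points, initial_centroids, max_iters=100):
    """Group points around centroids by alternating assignment and update steps."""
    centroids = list(initial_centroids)
    labels = []
    for _ in range(max_iters):
        # Assignment step: attach each point to its nearest centroid.
        new_labels = [
            min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        if new_labels == labels:  # assignments stopped changing, so we are done
            break
        labels = new_labels
        # Update step: move each centroid to the mean of its assigned points.
        for c in range(len(centroids)):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return labels, centroids


# Hypothetical records with two features each; no labels are provided.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
labels, centroids = kmeans(points, initial_centroids=[points[0], points[-1]])
print(labels)  # [0, 0, 0, 1, 1, 1]
```

The algorithm is never told which group a record belongs to; the two groups emerge from the distances between the points alone.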

The Impact of Big Data and Computing Power

The growth of machine learning accelerated dramatically at the turn of the 21st century, fueled by two catalysts: the explosion of the internet and the rapid increase in computational power. The digital age generated unprecedented amounts of information: text, images, video, and user behavior logs. This abundance, commonly called big data, provided the “fuel” that data-driven algorithms needed to thrive.

Simultaneously, the gaming industry drove the development of Graphics Processing Units (GPUs). Although GPUs were originally designed to render complex 3D graphics, researchers realized that they were well suited to the parallel processing required to train large machine learning models. This alignment of massive datasets and accessible, high-performance computing power set the stage for one of the most significant leaps in modern computing history.

The Deep Learning Breakthrough

With abundant data and immense computing power at their disposal, researchers revisited the old concept of artificial neural networks, leading to a modern renaissance. This era was defined by the rise of neural networks with multiple hidden layers, an approach known today as deep learning.

Unlike older algorithms that required human engineers to manually extract features from data, deep learning networks could automatically discover the representations needed for classification directly from raw data. In 2012, a deep neural network called AlexNet won the ImageNet computer vision competition by a wide margin, with a top-5 error rate of about 15 percent against roughly 26 percent for the runner-up. This watershed moment showed that deep neural networks could vastly outperform older methods when given enough data and processing power. The breakthrough cemented the rise of modern machine learning, shifting much of the tech industry’s focus toward deep learning architectures.

Real-World Applications of Modern Machine Learning

Today, the theories and algorithms developed over the past few decades are seamlessly integrated into our daily lives. The practical applications of modern machine learning are vast, transforming nearly every industry on the planet.

Search Engines

Search engines like Google rely heavily on complex machine learning models to understand user intent, rank web pages, and deliver highly relevant results within milliseconds. These algorithms continually analyze signals such as user clicks, dwell times, and search patterns to refine the results they return.

Recommendation Systems

Whether you are browsing Netflix, shopping on Amazon, or scrolling through social media, recommendation systems are working behind the scenes. These systems combine supervised and unsupervised learning algorithms to analyze your past behavior and compare it with that of millions of other users, predicting which movies, products, or posts will keep you engaged.
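The idea of comparing one user’s behavior with that of others can be sketched with user-based collaborative filtering. The Python example below is a toy illustration, not how any particular service works: the users, item names, and ratings are invented, and cosine similarity is just one of several possible similarity measures.

```python
import math

# Hypothetical ratings on a 1-5 scale; every name here is made up.
ratings = {
    "ana": {"sci_fi_film": 5, "heist_film": 4, "cartoon_one": 1},
    "ben": {"sci_fi_film": 5, "heist_film": 5, "noir_film": 5},
    "cal": {"cartoon_one": 5, "cartoon_two": 5, "sci_fi_film": 1},
}


def cosine_similarity(a, b):
    """Similarity between two users' rating dictionaries (0 when nothing is shared)."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[item] * b[item] for item in shared)
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    return dot / (norm_a * norm_b)


def recommend(user, all_ratings, top_n=1):
    """Rank items the user has not rated, weighting each rating by user similarity."""
    scores = {}
    for other, other_ratings in all_ratings.items():
        if other == user:
            continue
        similarity = cosine_similarity(all_ratings[user], other_ratings)
        for item, rating in other_ratings.items():
            if item not in all_ratings[user]:
                scores[item] = scores.get(item, 0.0) + similarity * rating
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


print(recommend("ana", ratings))  # ['noir_film']
```

Ana’s ratings resemble Ben’s far more than Cal’s, so the item Ben liked outranks the one Cal liked. Production systems apply the same intuition to far larger and sparser data, usually with more sophisticated models.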

Speech Recognition

Virtual assistants like Siri, Alexa, and Google Assistant are powered by deep learning models capable of processing natural language. These systems convert audio into text, interpret the meaning of the words, and generate appropriate, context-aware responses in real time.

Computer Vision

Machine learning has given computers the ability to “see.” Through convolutional neural networks, machines can now identify objects, faces, and anomalies in images and videos with a high degree of accuracy. This technology is increasingly used in healthcare to analyze X-rays and MRI scans, in some cases flagging signs of disease earlier than human doctors might.

Autonomous Vehicles

Perhaps the most ambitious application of machine learning is the pursuit of self-driving cars. Autonomous vehicles process massive streams of data from cameras, lidar, and radar sensors in real time. Complex AI algorithms make split-second decisions about steering, braking, and navigation to move safely through unpredictable real-world environments.

Machine Learning and the Future of Artificial Intelligence

The evolution of machine learning is far from over. As we look to the future, the focus is shifting toward creating more efficient, transparent, and generalized models. Current research is exploring areas like reinforcement learning, where agents learn by interacting with environments, and generative AI, which can create entirely new text, images, and music based on human prompts.

Furthermore, the industry is grappling with the ethical implications of these powerful systems. Ensuring that machine learning models are free from bias, respect user privacy, and operate transparently will be the defining challenge of the next decade. As hardware continues to improve and algorithms become even more refined, the line between human cognitive abilities and machine processing will continue to blur.

Frequently Asked Questions (FAQs)

1. What is the difference between artificial intelligence and machine learning?

Artificial intelligence is the broader concept of machines being able to carry out tasks in a way that we would consider “smart.” Machine learning is a specific subset of AI based on the idea that systems can learn from data, identify patterns, and make decisions with minimal human intervention.

2. Why did machine learning suddenly become so popular in the 2010s?

The surge in popularity was driven by the deep learning revolution, which was made possible by the availability of massive datasets (big data) and the use of powerful GPUs that could train large neural networks much faster than traditional CPUs.

3. What is the difference between supervised and unsupervised learning?

In supervised learning, the algorithm is trained on labeled data, meaning the “right answer” is provided during training to help the machine learn the relationship between input and output. In unsupervised learning, the machine is given unlabeled data and must find hidden structures, patterns, or groupings on its own.

4. Will machine learning replace human jobs?

While machine learning will automate certain repetitive and data-heavy tasks, it is also creating new industries and roles. Historically, technological shifts change the nature of work rather than simply eliminating it, emphasizing the need for humans to adapt and work alongside AI tools.

Conclusion

The journey from the theoretical concepts of the mid-20th century to the powerful algorithms running today’s digital world is a testament to human ingenuity. The rise of modern machine learning has fundamentally altered how we interact with technology, moving us from an era of strict programming to an era of dynamic, data-driven intelligence. By harnessing the power of vast datasets, statistical mathematics, and neural networks, we have built machines capable of solving problems that were once thought impossible. As we stand on the brink of even more advanced AI breakthroughs, understanding this history provides crucial context for navigating the exciting, machine-augmented future that lies ahead.