Introduction to Reinforcement Learning

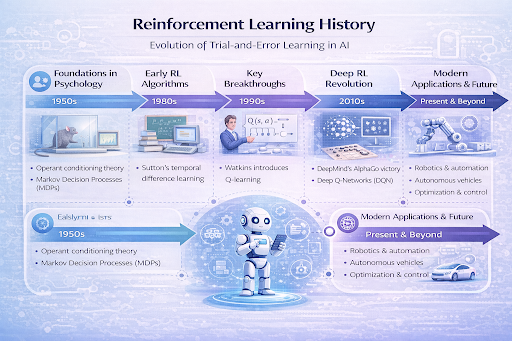

The history of reinforcement learning mirrors how biological organisms learn to navigate their environment. Unlike supervised learning, which relies on labeled datasets, reinforcement learning (RL) is built on reward-based learning: an agent improves by interacting with its surroundings and observing the consequences. Over several decades, the field moved from simple mathematical theories to sophisticated systems capable of defeating world champions in complex games and controlling high-precision robots.

Understanding this history is useful for anyone looking to master modern AI training methods. At its core, RL involves an “agent” that takes actions in an “environment” to maximize a cumulative “reward.” This framework, while simple in concept, took decades of interdisciplinary research to refine. The evolution of the field is itself a testament to persistent trial-and-error learning.

Early Foundations in Psychology (1950s)

To understand when reinforcement learning was invented, one must look beyond computer science and into behavioral psychology. The story begins with the study of animal behavior. In the early 20th century, Edward Thorndike’s “Law of Effect” proposed that responses followed by satisfaction become more likely to recur.

During the 1950s, researchers began to formalize these psychological observations into mathematical models. The first AI programs emerged in this era, and ideas from B.F. Skinner’s work on operant conditioning shaped how researchers thought about learning from consequences. The Dartmouth Conference in 1956 promoted the conjecture that every aspect of learning and intelligence could, in principle, be described precisely enough for a machine to simulate it, including the ability to learn from consequences. In the late 1950s, Richard Bellman introduced dynamic programming and the Markov decision process (MDP), providing the mathematical structure on which later reinforcement learning theory was built.

The Development of Reinforcement Learning in AI (1980s)

After a period of relative quiet often associated with the AI winters, the 1980s brought a significant resurgence. In this decade the thread of psychology finally merged with the threads of optimal control and computer science. Researchers such as Richard Sutton and Andrew Barto began to synthesize these ideas into a unified field.

A major milestone of this period was “Temporal Difference” (TD) learning, which Sutton formalized in 1988. TD methods let an agent update its value estimates after every step instead of waiting for the final outcome of an episode. The key idea is “bootstrapping”: updating one estimate using another estimate. This era also saw a shift away from expert systems, which relied on hard-coded rules, toward systems that could discover their own strategies through interaction.
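The bootstrapping idea can be sketched in a few lines. The example below is a minimal illustration, not code from any historical system. It assumes a hypothetical three-state chain in which the agent always moves right and receives a reward of 1 on reaching the end. The function name `td0_chain` and the values of `alpha` (step size) and `gamma` (discount factor) are assumptions chosen for illustration.

```python
def td0_chain(num_states=3, episodes=200, alpha=0.5, gamma=0.9):
    """TD(0) value estimation on a hypothetical deterministic chain."""
    # One extra slot for the terminal state, whose value stays 0.
    values = [0.0] * (num_states + 1)
    for _ in range(episodes):
        for state in range(num_states):
            next_state = state + 1
            # Reward of 1 only on the transition into the terminal state.
            reward = 1.0 if next_state == num_states else 0.0
            # Bootstrapping: the target uses the current estimate of the
            # next state's value rather than the final outcome.
            td_target = reward + gamma * values[next_state]
            values[state] += alpha * (td_target - values[state])
    return values[:num_states]


values = td0_chain()
print([round(v, 3) for v in values])
```

With these settings the estimates approach 0.81, 0.9, and 1.0. In the first episode only the last state learns anything; earlier states pick up value in later episodes by bootstrapping from their successors.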

Key Algorithms and Breakthroughs (1990s)

The 1990s were a decade of technical refinement. The best-known algorithm of the period is Q-learning, which Chris Watkins introduced in 1989 and which gained wide adoption in the early 1990s, after Watkins and Peter Dayan published a convergence proof in 1992. Q-learning lets an agent learn the value of each action in each state directly from experience, without needing a model of the environment.
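A tabular version of the Q-learning update can be sketched as follows. This is a minimal illustration under stated assumptions, not Watkins’s original formulation: the corridor environment, the function name `q_learning_corridor`, and the parameter values are all hypothetical. The agent explores purely at random, never consults a model of the environment, and still learns which action is best in each state.

```python
import random


def q_learning_corridor(num_states=5, episodes=500, alpha=0.5,
                        gamma=0.9, max_steps=10_000, seed=0):
    """Tabular Q-learning on a hypothetical corridor; the last state is the goal."""
    rng = random.Random(seed)
    goal = num_states - 1
    # q[state][action]: action 0 moves left, action 1 moves right.
    q = [[0.0, 0.0] for _ in range(num_states)]
    for _ in range(episodes):
        state = 0
        for _step in range(max_steps):  # step cap so an episode always ends
            action = rng.randrange(2)   # purely random exploration
            move = 1 if action == 1 else -1
            next_state = min(max(state + move, 0), goal)
            reward = 1.0 if next_state == goal else 0.0
            # The goal is terminal, so nothing is bootstrapped from it.
            future = 0.0 if next_state == goal else max(q[next_state])
            q[state][action] += alpha * (reward + gamma * future - q[state][action])
            state = next_state
            if state == goal:
                break
    return q


q_table = q_learning_corridor()
# Greedy action per non-goal state (1 means "move right").
greedy_policy = [row.index(max(row)) for row in q_table[:-1]]
print(greedy_policy)
```

After training, the greedy policy chooses “right” in every non-goal state, even though the agent only ever acted at random. That separation between the behavior used to gather experience and the policy being learned is what makes Q-learning an off-policy method.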

During the revival of artificial intelligence in the 1990s, reinforcement learning began to show its potential. One early system that captured wide attention was Gerald Tesauro’s TD-Gammon. This program combined TD learning with a neural network, trained largely through self-play, to play backgammon at a level close to the best human players in the world. It was a pivotal moment because it showed that RL could scale to complex, non-trivial problems using neural networks as function approximators, long before “deep learning” became a buzzword.

Rise of Reinforcement Learning in Machine Learning (2000s)

As the new millennium began, attention shifted toward the “curse of dimensionality”: the number of possible states grows exponentially with the number of variables that describe an environment, so it quickly becomes impossible for a computer to track each state individually. The 2000s focused on integrating machine learning methods with RL to handle continuous spaces and more complex policy learning.
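A quick calculation shows how fast the state count grows. The sketch below is an illustration only: the helper `table_size_digits` is hypothetical, and the 84x84 grayscale frame with 256 intensity levels is an assumed input size chosen for the example. It counts the decimal digits in the number of distinct states a lookup table would need to cover.

```python
import math


def table_size_digits(num_variables, values_per_variable):
    """Decimal digits in values_per_variable ** num_variables."""
    return math.floor(num_variables * math.log10(values_per_variable)) + 1


# A toy problem described by a single variable with 5 values: 5 states.
toy_digits = table_size_digits(1, 5)

# One assumed 84x84 grayscale frame: 7056 pixels, 256 levels each.
frame_digits = table_size_digits(84 * 84, 256)

print(toy_digits, frame_digits)
```

The count of possible frames has roughly 17,000 digits, so storing one value per state is out of the question. This is why methods that generalize across similar states became the focus.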

Researchers explored least-squares methods and policy gradient methods, which made training smoother and more stable in many settings. This early integration with machine learning laid the groundwork for modern AI training methods. The decade was also characterized by a push toward theoretical convergence guarantees: researchers proved that, under stated assumptions, certain algorithms converge to an optimal or locally optimal solution given enough time and data. This academic rigor helped move RL from a niche interest toward a mainstream tool for large-scale optimization.

Deep Reinforcement Learning Revolution (2010s)

The most explosive chapter started in 2013, when DeepMind published its paper on playing Atari games with Deep Q-Networks (DQN). This marked the beginning of the deep reinforcement learning era. By combining deep neural networks with RL, researchers were finally able to process raw sensory input, such as pixels from a screen, and turn it into high-level decisions.

A landmark followed in 2016 with AlphaGo. By defeating world champion Lee Sedol, AlphaGo demonstrated that deep reinforcement learning, combined with tree search, could master a game with more possible positions than there are atoms in the observable universe. It was a defining moment, showing that reward-based learning could produce play that looked like “superhuman” intuition and creativity. The rise of neural networks had provided the “brain” that reinforcement learning needed to tackle far larger problems.

Modern Applications of Reinforcement Learning

Today, the field continues to expand through practical applications. No longer confined to board games, RL systems appear in many modern AI applications.

Robotics

In robotics, RL is used to teach machines complex motor skills, such as walking and grasping fragile objects, and it is being studied for assisting with surgical tasks. Trial-and-error learning allows robots to adapt to physical variations in their environment that would be impractical to program by hand.

Autonomous Vehicles

RL is also being applied in the automotive industry. It can help autonomous vehicles make split-second decisions about lane changes, merging, and navigating complex intersections while anticipating the behavior of other drivers.

Game AI

Games were the original testing ground for RL, and they remain a major application. Game developers can use RL to create non-player characters (NPCs) that adapt to a player’s style, making for a more challenging and immersive experience.

Recommendation Systems

Streaming services and social media platforms use reinforcement learning to optimize user engagement. By treating user clicks as rewards, these systems learn to suggest content that keeps users on the platform longer, continually refining their policies based on real-time feedback.
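The clicks-as-rewards idea can be illustrated with a simple epsilon-greedy bandit. This is a deliberately simplified sketch, not how any particular platform works: it ignores user state entirely, and the function name `epsilon_greedy_recommender`, the click probabilities, and the value of `epsilon` are all made-up assumptions for the example.

```python
import random


def epsilon_greedy_recommender(click_probs, steps=10_000, epsilon=0.1, seed=0):
    """Learn which item to recommend from simulated click feedback."""
    rng = random.Random(seed)
    num_items = len(click_probs)
    pulls = [0] * num_items        # how often each item was shown
    estimates = [0.0] * num_items  # running average click rate per item
    for _ in range(steps):
        if rng.random() < epsilon:
            item = rng.randrange(num_items)         # explore a random item
        else:
            item = estimates.index(max(estimates))  # exploit the best so far
        # Simulated user: a click (reward 1) with the item's hidden probability.
        reward = 1.0 if rng.random() < click_probs[item] else 0.0
        pulls[item] += 1
        # Incremental update of the sample mean.
        estimates[item] += (reward - estimates[item]) / pulls[item]
    return estimates, pulls


estimates, pulls = epsilon_greedy_recommender([0.02, 0.05, 0.6])
best_item = estimates.index(max(estimates))
print(best_item, pulls)
```

The recommender never sees the hidden click probabilities, yet it ends up showing the most-clicked item far more often than the others. The small `epsilon` keeps it occasionally trying alternatives in case preferences differ from its current estimates.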

Industrial Optimization

In energy management and supply chain logistics, learning-based controllers search for efficient ways to distribute resources. Google, for instance, reported that a machine learning control system developed with DeepMind reduced the energy used to cool its data centers by up to 40%, illustrating the economic value of this kind of optimization.

Future of Reinforcement Learning

The future of reinforcement learning will likely focus on “sample efficiency”: teaching AI to learn from fewer examples, much as a human does. There is also growing interest in “Offline RL,” where agents learn from pre-existing datasets rather than live interaction, which makes the approach safer to apply in areas such as healthcare and finance.

Another exciting frontier is Multi-Agent Reinforcement Learning (MARL), where many AI agents learn to cooperate or compete. Looking back, the field appears to be moving toward more general-purpose systems that can assist humans with complex problems, from climate change to drug discovery.

Frequently Asked Questions (FAQs)

When was reinforcement learning invented?

While the psychological roots go back to the early 1900s, the computational history of reinforcement learning began in the late 1950s with the formalization of Markov decision processes and early trial-and-error computer programs.

What is the difference between Q-learning and Deep RL?

Traditional Q-learning uses a table to store the value of every state-action pair, which only works for small environments. Deep reinforcement learning replaces that table with a neural network that estimates those values, allowing the AI to handle inputs with an enormous number of possible states, such as the pixels in a video game.

Why is reinforcement learning harder than other types of AI?

RL is challenging because the “reward” is often delayed. An agent might make a brilliant move now but not receive the “point” until much later, making it difficult to know exactly which action deserves the credit. This is known as the credit assignment problem.
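One common way to spread a delayed reward back over earlier steps is the discounted return. The sketch below is a minimal illustration; the function name `discounted_returns`, the reward sequence, and the discount factor `gamma` are assumptions chosen for the example.

```python
def discounted_returns(rewards, gamma=0.9):
    """Return G_t = r_t + gamma * G_{t+1} for every step t."""
    returns = [0.0] * len(rewards)
    running = 0.0
    # Work backward so each step can reuse the return of the step after it.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


# Three moves with no immediate reward, then a single point at the end.
returns = discounted_returns([0.0, 0.0, 0.0, 1.0])
print([round(g, 3) for g in returns])
```

Here the final point is credited to the earlier moves as 0.729, 0.81, and 0.9, with less credit the further a move is from the reward. Discounting does not solve credit assignment on its own, but it gives every step a learning signal.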

Conclusion

In conclusion, the history of reinforcement learning is a story of persistence. From early behavioral studies of pigeons and rats to the modern era of DeepMind and OpenAI, the field has evolved into one of the most powerful branches of artificial intelligence. By mimicking the biological process of reward-based learning, researchers have created systems that can teach themselves to solve problems once thought to be reserved for human intellect. As this history continues to unfold, its impact on technology and society is likely to keep growing.