Introduction

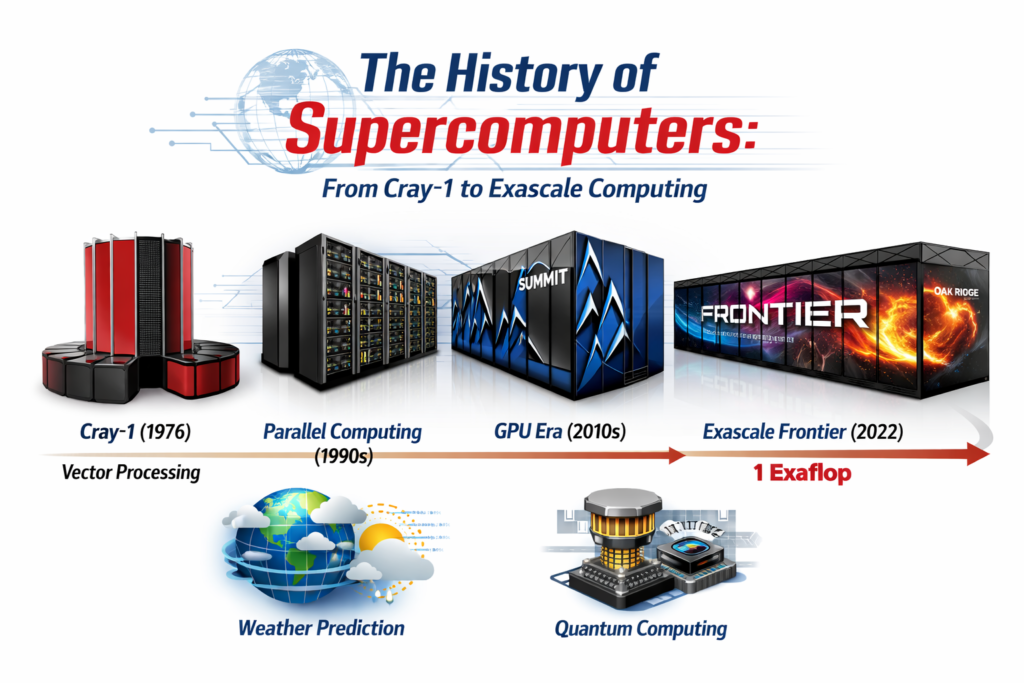

The history of supercomputers represents one of the most fascinating technological journeys in modern science. From room-sized machines consuming enormous power to cutting-edge exascale systems capable of performing quintillions of calculations per second, supercomputers have revolutionized fields such as climate science, medicine, astrophysics, and artificial intelligence.

Supercomputers are designed for high-performance computing (HPC) tasks that demand extreme computational power. Unlike conventional computers, these machines operate using thousands or even millions of interconnected processors working together through parallel processing. Their performance is measured in FLOPS (floating-point operations per second), a unit that expresses how quickly they can work through complex numerical problems.
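To make the FLOPS figure more concrete, here is a minimal sketch of how a theoretical peak is usually estimated: multiply the number of processors by their clock rate and by the operations each one can finish per cycle. The function names and the cluster in the example are hypothetical, chosen only for illustration, and real machines rarely sustain their theoretical peak.

```python
def peak_flops(nodes: int, cores_per_node: int, clock_hz: float, flops_per_cycle: int) -> float:
    """Theoretical peak: every core completes `flops_per_cycle` operations each clock tick."""
    return nodes * cores_per_node * clock_hz * flops_per_cycle


def format_flops(flops: float) -> str:
    """Render a FLOPS value with the largest SI prefix that fits."""
    prefixes = [(1e18, "exa"), (1e15, "peta"), (1e12, "tera"), (1e9, "giga"), (1e6, "mega")]
    for scale, name in prefixes:
        if flops >= scale:
            return f"{flops / scale:.2f} {name}flops"
    return f"{flops:.0f} flops"


# Hypothetical cluster (not a real system): 1,000 nodes, 64 cores each,
# a 2 GHz clock, and 16 floating-point operations per core per cycle.
example = peak_flops(nodes=1_000, cores_per_node=64, clock_hz=2.0e9, flops_per_cycle=16)
print(format_flops(example))
```

The same arithmetic explains why performance can grow either by making each processor faster or by adding more of them, a theme that runs through the rest of this history.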

The story of supercomputing spans more than half a century and involves innovations in vector processors, cluster computing, interconnects, and cooling technologies. It also intersects with developments in semiconductor engineering, particularly the evolution of transistors, which enabled processors to become faster and more efficient.

Understanding the history of supercomputers reveals how computational power evolved from early mainframes to modern exascale machines capable of modeling the universe, predicting natural disasters, and accelerating breakthroughs in artificial intelligence.

A. Seymour Cray and the Giant Mainframes (1960 – 1976)

The early history of supercomputers begins with Seymour Cray, often referred to as the father of supercomputing. During the 1960s and 1970s, Cray pioneered revolutionary designs that dramatically increased computing power.

At a time when computers were mainly used for business and administrative tasks, Cray focused on machines capable of solving highly complex scientific problems. These machines laid the foundation for modern supercomputer architecture.

The CDC 6600: Establishing the Concept of Supercomputing

Released in 1964, the CDC 6600 is widely considered the world’s first supercomputer. Designed by Seymour Cray at Control Data Corporation, the system was capable of performing around three million calculations per second.

What made the CDC 6600 revolutionary was its innovative architecture. Rather than pushing every operation through one monolithic arithmetic unit, its central processor contained ten independent functional units that could work simultaneously, while separate peripheral processors handled input and output. This early form of parallelism significantly boosted performance.

The CDC 6600 was primarily used for scientific simulations, including nuclear research and aerospace engineering. Its success established the idea that specialized machines could outperform general-purpose computers in complex numerical tasks.

This era also saw significant advancements in computer hardware and semiconductors, closely tied to the evolution of transistors, which enabled processors to operate faster and consume less power.

The Cray-1: Iconic Design and Vector Processing Power

In 1976, Seymour Cray introduced the Cray-1, a machine that became an icon in the history of supercomputers. With its distinctive circular design and padded seating surrounding the base, the Cray-1 looked futuristic even by modern standards.

The system utilized vector processors, which allowed it to perform operations on large sets of data simultaneously. This architecture dramatically increased efficiency for scientific calculations involving matrices and vectors.
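The idea behind vector processing can be sketched with a simplified instruction-count model. The code below is an illustration only, not a description of how the Cray-1 was programmed: it assumes one instruction per element on a scalar machine and one instruction per chunk on a vector machine. The chunk size of 64 is an illustrative parameter, and the function names are hypothetical.

```python
VECTOR_LENGTH = 64  # illustrative: elements handled by one vector instruction


def scalar_add(a, b):
    """Add element by element, counting one instruction per element."""
    result, instructions = [], 0
    for x, y in zip(a, b):
        result.append(x + y)
        instructions += 1
    return result, instructions


def vector_add(a, b, vector_length=VECTOR_LENGTH):
    """Add in chunks, counting one instruction per chunk of up to `vector_length` elements."""
    result, instructions = [], 0
    for start in range(0, len(a), vector_length):
        chunk_a = a[start:start + vector_length]
        chunk_b = b[start:start + vector_length]
        result.extend(x + y for x, y in zip(chunk_a, chunk_b))
        instructions += 1
    return result, instructions


a = list(range(1000))
b = list(range(1000, 2000))
scalar_result, scalar_count = scalar_add(a, b)
vector_result, vector_count = vector_add(a, b)
print(scalar_count, vector_count)
```

Both functions produce the same sums; what changes in this model is how many instructions have to be issued. Python still loops inside each chunk, so the sketch shows the bookkeeping rather than any real speedup.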

The Cray-1 achieved a peak performance of about 160 megaflops and quickly became the gold standard for supercomputing. Institutions such as NASA and national laboratories relied on the system for advanced simulations.

B. Vector Processing and Parallel Computing (1976 – 1995)

The period from the late 1970s to the mid-1990s marked a major shift in the history of supercomputers, as engineers began experimenting with new ways to scale computing performance.

Rather than relying solely on faster processors, researchers began developing architectures that used multiple processors working together.

Scaling Beyond Single Processors: The Birth of Parallelism

During this period, the concept of parallel processing gained prominence. Instead of one processor executing instructions sequentially, multiple processors could work simultaneously on different parts of a problem.
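A minimal sketch of this divide-and-combine pattern follows. It splits one large sum into slices, hands each slice to a worker, and adds up the partial results. The function names are hypothetical, and threads stand in for processors purely to show the structure: in standard CPython, threads share one interpreter lock, so this version will not run faster than a plain loop.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_sum_of_squares(bounds):
    """Work assigned to one worker: sum i*i over the half-open range [start, stop)."""
    start, stop = bounds
    return sum(i * i for i in range(start, stop))


def split_range(n, workers):
    """Divide [0, n) into `workers` contiguous slices of nearly equal size."""
    step, extra = divmod(n, workers)
    slices, start = [], 0
    for w in range(workers):
        stop = start + step + (1 if w < extra else 0)
        slices.append((start, stop))
        start = stop
    return slices


def parallel_sum_of_squares(n, workers=4):
    """Scatter the slices to the workers, then combine their partial results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(partial_sum_of_squares, split_range(n, workers)))


total = parallel_sum_of_squares(1_000_000)
print(total)
```

The pattern works here because each slice can be computed without knowing anything about the others. Problems whose parts must constantly exchange data are much harder to parallelize, which is one reason interconnects matter so much in supercomputer design.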

This approach led to the development of massively parallel systems. Machines like the Thinking Machines Connection Machine utilized thousands of processors connected through specialized interconnects.

Parallel computing dramatically increased computing power and paved the way for petaflops-scale systems decades later.

These advancements also influenced other computing fields, including distributed systems and networking, areas closely tied to the history of computer networking.

The Remarkable History of Supercomputers in Weather Prediction

One of the most impactful applications of supercomputers during this period was weather forecasting.

Meteorological models require the analysis of massive datasets, including atmospheric pressure, ocean temperatures, and wind patterns. Without high-performance computing, accurate forecasts would be impossible.

Supercomputers enabled scientists to run advanced climate models and simulate long-term environmental changes. These simulations became essential for predicting hurricanes, understanding climate change, and improving disaster preparedness.

C. Clusters and Commodity Hardware (1995 – 2010)

The next major phase in the history of supercomputers occurred when engineers began using clusters of ordinary computers instead of building specialized machines.

This approach dramatically reduced costs and increased scalability.

Beowulf Clusters: Turning Standard PCs into Supercomputers

In the mid-1990s, researchers developed the Beowulf cluster architecture. This approach connected multiple commodity PCs through high-speed networks to form a single computational system.

Each computer acted as a node in the cluster, working together to solve complex problems.

This method transformed supercomputing because it allowed universities and research institutions to build powerful systems at a fraction of the cost of traditional supercomputers.

The rise of clusters also coincided with improvements in storage technology, enabling massive datasets to be stored and accessed efficiently.

A few years earlier, in 1993, the TOP500 list had begun ranking the world’s most powerful supercomputers using the LINPACK benchmark.

IBM Deep Blue vs. Kasparov: A Milestone for Artificial Intelligence

In 1997, IBM’s Deep Blue defeated world chess champion Garry Kasparov in a historic match.

Deep Blue was a specialized supercomputer designed to evaluate about 200 million chess positions per second. While it relied heavily on brute-force calculations, the achievement demonstrated the potential of computational intelligence.

The victory represented a turning point in AI research and highlighted the growing capabilities of high-performance computing systems.

D. The Heterogeneous Era and GPU Integration (2010 – 2020)

The decade between 2010 and 2020 introduced another major transformation in the history of supercomputers.

Engineers began integrating graphics processing units (GPUs) into supercomputers, creating heterogeneous architectures that combined CPUs and GPUs for improved efficiency.

Summit and Sierra: Utilizing NVIDIA GPUs for Massive Compute

Two of the most powerful systems of this era were Summit and Sierra, built by IBM and deployed at U.S. national laboratories: Summit at Oak Ridge National Laboratory and Sierra at Lawrence Livermore National Laboratory.

These machines utilized thousands of NVIDIA GPUs to accelerate calculations. GPUs are particularly effective at handling highly parallel tasks such as machine learning and molecular simulations.

Summit had a theoretical peak performance of about 200 petaflops, making it one of the most powerful computers ever built at the time.

This period also saw major improvements in computer processors, which played a critical role in supporting large-scale HPC systems.

Why Cooling Systems Became the Biggest Engineering Challenge

As supercomputers became more powerful, heat generation became a serious challenge.

Modern systems consume megawatts of power, generating enormous amounts of heat that must be dissipated efficiently.

Engineers began using liquid cooling and advanced thermal management systems to keep processors within safe operating temperatures.

These innovations allowed supercomputers to scale beyond petaflops without overheating.

E. The Exascale Frontier and Beyond (2020 – 2026)

The most recent chapter in the history of supercomputers is defined by the race toward exascale computing.

An exascale system can perform one exaflop: one quintillion (10¹⁸) floating-point operations per second.
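To put that number in perspective, the short calculation below estimates how long the machines mentioned earlier would need to complete one quintillion operations. The rates are the rounded figures quoted in this article, and the calculation assumes each machine could sustain its quoted rate indefinitely, which is a simplification.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600

# Rounded rates quoted in this article, in operations per second.
machines = {
    "CDC 6600 (1964)": 3e6,
    "Cray-1 (1976)": 160e6,
    "Summit (about 200 petaflops)": 200e15,
    "Exascale system (1 exaflop)": 1e18,
}

WORKLOAD = 1e18  # one quintillion operations


def seconds_needed(rate, workload=WORKLOAD):
    """Time to finish `workload` operations at a sustained `rate`."""
    return workload / rate


for name, rate in machines.items():
    seconds = seconds_needed(rate)
    years = seconds / SECONDS_PER_YEAR
    print(f"{name}: {seconds:.3g} s ({years:.3g} years)")
```

Under these assumptions, work that an exascale system finishes in one second would have kept the CDC 6600 busy for roughly ten thousand years and the Cray-1 for about two centuries.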

Frontier: Breaking the Exaflop Barrier at Oak Ridge

In 2022, the Frontier supercomputer at Oak Ridge National Laboratory became the first machine to officially break the exaflop barrier.

Frontier uses AMD CPUs and GPUs connected through ultra-fast interconnects and achieves performance levels exceeding 1 exaflop on the LINPACK benchmark.

This achievement stands as one of the most significant milestones in the timeline of the world's most powerful computers.

Frontier enables breakthroughs in climate modeling, nuclear research, and drug discovery.

Quantum Supercomputing: The Next Leap in Processing Speed

Looking ahead, researchers are exploring quantum computing as the next major step in computational power.

Unlike classical supercomputers that rely on binary bits, quantum systems use qubits, which can exist in a superposition of states rather than holding a single definite 0 or 1.

This capability could allow quantum computers to solve problems that are currently impossible even for the most advanced HPC systems.

While still in its early stages, quantum computing represents a potential revolution in computing.

Frequently Asked Questions (FAQs)

What is a supercomputer?

A supercomputer is an extremely powerful computer designed to perform complex calculations at very high speeds, with performance typically measured in FLOPS.

What is exascale computing?

Exascale computing refers to systems capable of performing at least one exaflop (10¹⁸ calculations per second).

Why are GPUs used in supercomputers?

GPUs excel at parallel processing, making them ideal for scientific simulations, machine learning, and large-scale computations.

What industries use supercomputers?

Supercomputers are used in weather forecasting, pharmaceutical research, aerospace engineering, artificial intelligence, and nuclear physics.

How is supercomputer performance measured?

Performance is typically measured using benchmarks like LINPACK, which times how quickly a system solves a large, dense set of linear equations and reports the result as floating-point operations per second.
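The principle can be shown with a toy version in plain Python. This is a simplified sketch, not the actual benchmark code used for supercomputer rankings: it solves a random dense system by Gaussian elimination with partial pivoting, times the solve, and divides the conventional operation count of (2/3)n³ + 2n² by the elapsed time. The function names are hypothetical, and the solver assumes the matrix is not singular.

```python
import random
import time


def solve_dense(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting (works on copies)."""
    n = len(b)
    a = [row[:] for row in a]
    b = b[:]
    for col in range(n):
        # Pick the row with the largest pivot to limit rounding error.
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution on the resulting upper-triangular system.
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        tail = sum(a[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (b[row] - tail) / a[row][row]
    return x


def measured_flops(n, seed=0):
    """Time one solve of a random n x n system and convert it to operations per second."""
    rng = random.Random(seed)
    a = [[rng.random() for _ in range(n)] for _ in range(n)]
    b = [rng.random() for _ in range(n)]
    start = time.perf_counter()
    solve_dense(a, b)
    elapsed = time.perf_counter() - start
    operations = (2 / 3) * n**3 + 2 * n**2  # conventional LINPACK operation count
    return operations / elapsed


print(f"{measured_flops(150):.3g} flops")
```

The number printed depends entirely on the machine running it, and interpreted Python will report a rate far below what the hardware can actually deliver. The point is the method: a fixed amount of numerical work divided by the time taken.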

Conclusion

The history of supercomputers demonstrates the relentless pursuit of computational power. From Seymour Cray’s pioneering machines in the 1960s to today’s exascale systems, each generation of supercomputers has expanded the boundaries of what technology can achieve.

Advancements in processor design, interconnect technology, cluster computing, and cooling systems have all contributed to the evolution of these powerful machines. As exascale computing becomes more widespread and quantum computing continues to advance, the future of supercomputing promises even greater breakthroughs.

Supercomputers will remain essential tools for solving humanity’s most complex challenges, from climate change to medical discovery. Their remarkable history is not only a testament to engineering innovation but also a glimpse into the limitless potential of computing technology.