Introduction to Bayesian Networks

The world is full of uncertainty. Will it rain tomorrow? Is this symptom a sign of a serious illness? Will a customer default on their loan? Traditional algorithms struggle with these questions because they demand clean, deterministic answers. Bayesian networks offer a remarkably powerful alternative. They provide an elegant framework for modeling uncertainty, capturing complex relationships between variables, and making intelligent predictions even when information is incomplete.

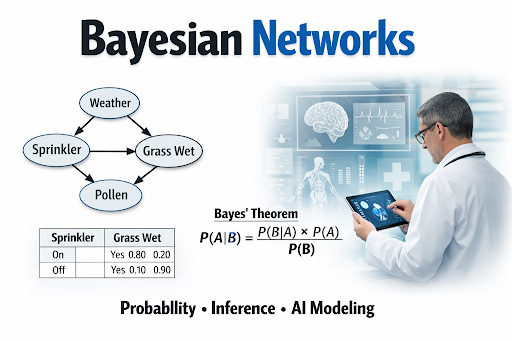

At their heart, Bayesian networks are a type of probabilistic graphical model. They combine graph theory with probability theory to represent knowledge about an uncertain domain. Each variable in the network is a node, and the connections between nodes represent probabilistic dependencies. This structure allows Bayesian networks to reason about cause and effect, update beliefs as new evidence arrives, and handle missing data gracefully.

The name honors Thomas Bayes, an 18th-century statistician who developed Bayes’ theorem. This theorem provides the mathematical foundation for how Bayesian networks update probabilities when new information becomes available. This approach to learning from data shares similarities with self-supervised learning in artificial intelligence, where algorithms learn useful representations without explicit labels. Understanding Bayesian networks opens doors to more sophisticated AI applications, from medical diagnosis to fraud detection.

Core Components of a Bayesian Belief Network

A Bayesian belief network consists of two main components: a structure and a set of parameters. The structure captures the relationships between variables, while the parameters quantify the strength of those relationships.

Directed Acyclic Graphs (DAGs) Simply Explained

The structure of a Bayesian network is a Directed Acyclic Graph, often abbreviated as DAG. Let us break down this term. Directed means the connections between nodes have arrows that point in a specific direction, indicating the direction of influence. Acyclic means there are no cycles or loops in the graph. You cannot follow the arrows and return to where you started.

The acyclic property is crucial. It ensures that Bayesian networks represent a coherent flow of influence without circular reasoning. For example, rain influences whether the ground gets wet, but the ground being wet does not influence whether it rains. Acyclicity also guarantees that multiplying the local conditional probabilities yields a valid joint distribution, which keeps the mathematics tractable and the interpretations meaningful. The evolution of machine learning algorithms shows how probabilistic methods like this became essential as AI moved from toy problems to real-world complexity.
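To make the acyclic requirement concrete, here is a minimal sketch of a cycle check based on Kahn's topological sort. The function name `is_acyclic` and the Sprinkler node are illustrative assumptions, not part of any particular library.

```python
from collections import deque

def is_acyclic(nodes, edges):
    """Return True if the directed graph has no cycles (Kahn's algorithm)."""
    indegree = {n: 0 for n in nodes}
    children = {n: [] for n in nodes}
    for parent, child in edges:
        children[parent].append(child)
        indegree[child] += 1

    # Start from nodes that have no incoming arrows.
    queue = deque(n for n in nodes if indegree[n] == 0)
    visited = 0
    while queue:
        node = queue.popleft()
        visited += 1
        for child in children[node]:
            indegree[child] -= 1
            if indegree[child] == 0:
                queue.append(child)

    # A cycle leaves some nodes with incoming arrows that are never cleared.
    return visited == len(nodes)

# Hypothetical three-node example.
nodes = ["Rain", "Sprinkler", "WetGrass"]
valid_edges = [("Rain", "WetGrass"), ("Sprinkler", "WetGrass")]
cyclic_edges = valid_edges + [("WetGrass", "Rain")]

print(is_acyclic(nodes, valid_edges))   # True
print(is_acyclic(nodes, cyclic_edges))  # False
```

Adding the arrow from WetGrass back to Rain creates exactly the kind of loop a Bayesian network forbids, and the check rejects it.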

Understanding Nodes and Edges

In Bayesian networks, nodes represent random variables. A variable could be anything you want to model: temperature, stock price, disease status, or customer behavior. Nodes can represent discrete outcomes, like sunny or rainy, or continuous values, like exact temperature readings.

Edges, the arrows connecting nodes, represent direct probabilistic dependencies. An arrow from node A to node B means A directly influences B. In probabilistic terms, B is conditionally dependent on A. Understanding these node dependencies is key to interpreting the network.

The absence of a direct edge between two nodes also carries information: the two variables can be made independent by conditioning on some set of other variables. More precisely, each node is conditionally independent of its non-descendants given its parents. This property makes Bayesian networks efficient. Instead of storing probabilities for every possible combination of variables, the network exploits independence to reduce complexity. This is what makes Bayesian networks practical for real-world machine learning problems with many variables.
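A rough way to see the savings is to count the numbers that must be stored. The sketch below assumes every variable is binary and uses a hypothetical five-node network; the node names and helper functions are illustrative only.

```python
def full_joint_parameters(num_binary_vars):
    """Free parameters in an unrestricted joint table over binary variables."""
    return 2 ** num_binary_vars - 1

def network_parameters(parents):
    """Free parameters in a Bayesian network over binary variables.

    Each node needs one probability per combination of its parents' values.
    """
    return sum(2 ** len(p) for p in parents.values())

# Hypothetical network: each node maps to the list of its parents.
parents = {
    "Smoking": [],
    "Pollution": [],
    "Disease": ["Smoking", "Pollution"],
    "Cough": ["Disease"],
    "Fever": ["Disease"],
}

print(full_joint_parameters(len(parents)))  # 31
print(network_parameters(parents))          # 10
```

The gap widens quickly: the full joint table doubles with every added variable, while the network grows only with the number of parents per node.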

How to Read Conditional Probability Tables (CPTs)

The parameters of a Bayesian network are stored in Conditional Probability Tables, or CPTs. For each node, the CPT specifies the probability of each possible outcome given every combination of its parent nodes.

Consider a simple Bayesian network with two nodes. A node called Rain has no parents. Its CPT simply lists the prior probability of rain, say 20 percent. A node called Wet Grass has Rain as its parent. Its CPT specifies the probability of wet grass given rain and given no rain. For example, the probability of wet grass is 90 percent when rain occurs but only 10 percent when rain does not occur.

Reading a CPT requires understanding conditional probability. The table answers questions like “what is the probability of the grass being wet given that it is raining?” This conditional relationship is the building block of probabilistic inference in Bayesian networks.
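The Rain and Wet Grass tables can be written down directly as nested dictionaries. This is only one possible representation, sketched here for illustration; the variable and function names are assumptions.

```python
# Prior for Rain (a node with no parents), using the 20 percent figure above.
p_rain = {True: 0.2, False: 0.8}

# CPT for WetGrass: outer key is the value of Rain, inner key is the value of WetGrass.
p_wet_given_rain = {
    True: {True: 0.9, False: 0.1},
    False: {True: 0.1, False: 0.9},
}

def prob_wet(wet):
    """Marginal P(WetGrass = wet), summing over both values of Rain."""
    return sum(p_rain[r] * p_wet_given_rain[r][wet] for r in (True, False))

# Each row of a CPT is a probability distribution, so it must sum to 1.
for row in p_wet_given_rain.values():
    assert abs(sum(row.values()) - 1.0) < 1e-9

print(p_wet_given_rain[True][True])  # P(WetGrass | Rain) = 0.9
print(round(prob_wet(True), 2))      # 0.26
```

Reading a cell of the table answers a conditional question, while summing across the parent's values answers the unconditional one: overall, the grass is wet 26 percent of the time.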

The Math Behind the Model: A Quick Look at Bayes’ Theorem

At the mathematical heart of Bayesian networks lies Bayes’ theorem. This elegant formula, developed by Thomas Bayes, describes how to update the probability of a hypothesis as new evidence arrives. The theorem is fundamental to Bayesian networks because it enables probabilistic inference.

Bayes’ theorem is expressed mathematically as:

P(A|B) = P(B|A) × P(A) / P(B)

Let us break down each component. P(A|B) is the posterior probability, the probability of event A occurring given that event B has occurred. P(B|A) is the likelihood, the probability of observing evidence B if A is true. P(A) is the prior probability, our initial belief about A before seeing any evidence. P(B) is the marginal probability, the overall probability of observing evidence B.

In the context of Bayesian networks, Bayes’ theorem allows us to reverse the direction of reasoning. If we know the probability of symptoms given a disease, the theorem tells us the probability of the disease given the symptoms. This reversal is what makes Bayesian networks so valuable for diagnosis and prediction. As we look toward what comes next, the future of artificial intelligence technology will likely see even more sophisticated applications of Bayesian reasoning.
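As a worked example, the theorem can be applied to the Rain and Wet Grass numbers from the CPT section to answer the reverse question: given wet grass, how likely is it that rain occurred? The helper below is a minimal sketch with an illustrative name, and it expands P(B) using the law of total probability.

```python
def posterior(prior, likelihood, likelihood_if_false):
    """P(A | B) from P(A), P(B | A), and P(B | not A) via Bayes' theorem."""
    evidence = likelihood * prior + likelihood_if_false * (1 - prior)  # P(B)
    return likelihood * prior / evidence

# A = Rain, B = WetGrass: P(A) = 0.2, P(B | A) = 0.9, P(B | not A) = 0.1.
p_rain_given_wet = posterior(prior=0.2, likelihood=0.9, likelihood_if_false=0.1)
print(round(p_rain_given_wet, 3))  # 0.692
```

Seeing wet grass raises the belief in rain from 20 percent to roughly 69 percent, since 0.18 / 0.26 ≈ 0.692.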

How Probabilistic Inference Works

Inference is the process of computing the probability of one or more variables given observed evidence about other variables. Bayesian networks support several types of inference, each suited to different scenarios.

Exact Inference vs. Approximate Inference

Exact inference computes probabilities precisely using algorithms that leverage the network’s structure. The most common exact inference algorithm is variable elimination. This method sums over hidden variables to compute the desired probability distribution. For small to medium-sized Bayesian networks, exact inference is feasible and provides exact results.
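The idea of summing over hidden variables can be sketched with plain enumeration, the brute-force version of what variable elimination does more efficiently. The chain Cloudy → Rain → WetGrass and all of its numbers except the Wet Grass table are hypothetical.

```python
# Hypothetical chain Cloudy -> Rain -> WetGrass; numbers are illustrative.
p_cloudy = {True: 0.5, False: 0.5}
p_rain_given_cloudy = {True: {True: 0.8, False: 0.2}, False: {True: 0.2, False: 0.8}}
p_wet_given_rain = {True: {True: 0.9, False: 0.1}, False: {True: 0.1, False: 0.9}}

def joint(cloudy, rain, wet):
    """Joint probability from the network factorization."""
    return (p_cloudy[cloudy]
            * p_rain_given_cloudy[cloudy][rain]
            * p_wet_given_rain[rain][wet])

def query_cloudy(wet_observed):
    """P(Cloudy | WetGrass = wet_observed), summing out the hidden Rain node."""
    unnormalized = {
        cloudy: sum(joint(cloudy, rain, wet_observed) for rain in (True, False))
        for cloudy in (True, False)
    }
    total = sum(unnormalized.values())  # equals P(WetGrass = wet_observed)
    return {cloudy: p / total for cloudy, p in unnormalized.items()}

print(round(query_cloudy(True)[True], 2))  # 0.74
```

Enumeration touches every combination of hidden values, which is why it only scales to small networks. Variable elimination reaches the same answer while reusing intermediate sums.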

However, exact inference becomes computationally expensive as the network grows. The problem of inference in Bayesian networks is NP-hard, meaning exact solutions can require exponential time in the worst case. For large, densely connected networks with hundreds or thousands of nodes, exact inference may be impractical.

Approximate inference offers a practical alternative. Instead of computing exact probabilities, these methods estimate probabilities through sampling or other approximation techniques. Markov Chain Monte Carlo, or MCMC, is a popular approximate inference method. It generates random samples from the posterior distribution and uses these samples to estimate probabilities.
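To show the sampling idea in its simplest form, the sketch below uses rejection sampling rather than MCMC. It is a simpler relative that also estimates a posterior from random samples. It reuses the Rain and Wet Grass numbers from the CPT section; the function name and the fixed seed are assumptions made for reproducibility.

```python
import random

def estimate_rain_given_wet(num_samples, seed=0):
    """Estimate P(Rain | WetGrass = True) by rejection sampling."""
    rng = random.Random(seed)
    accepted = 0
    rainy = 0
    for _ in range(num_samples):
        # Sample parents before children, following the arrows of the graph.
        rain = rng.random() < 0.2
        wet = rng.random() < (0.9 if rain else 0.1)
        if not wet:
            continue  # Reject samples that contradict the evidence.
        accepted += 1
        rainy += rain
    return rainy / accepted

estimate = estimate_rain_given_wet(100_000)
# The estimate should land near the exact value 0.18 / 0.26 (about 0.692).
print(round(estimate, 2))
```

Rejection sampling wastes every sample that disagrees with the evidence, which becomes costly when the evidence is rare. Methods such as MCMC are designed to avoid that waste.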

The tradeoff between exact and approximate inference is speed versus accuracy. Exact inference guarantees correct results but may be slow. Approximate inference is usually faster but introduces some error. Choosing the right approach depends on your specific problem, the size of your Bayesian network, and your tolerance for approximation error. The rise of modern machine learning has seen Bayesian methods become increasingly important for handling uncertainty.

Key Advantages of Using Bayesian Networks

Bayesian networks offer several compelling advantages that make them attractive for real world AI applications.

Handling Uncertainty Gracefully is perhaps their greatest strength. Unlike deterministic algorithms that demand complete information, Bayesian networks thrive on uncertainty. They represent probabilities explicitly and update beliefs as new evidence arrives. This makes them ideal for medical diagnosis, where symptoms are ambiguous, or for fraud detection, where evidence is often incomplete.

Transparency and Interpretability set Bayesian networks apart from black-box models like deep neural networks. The graph structure provides a clear visual representation of how variables depend on one another. Stakeholders can see which variables influence which outcomes and understand the reasoning behind predictions. The expert systems tradition in artificial intelligence emphasized this kind of transparency, and Bayesian networks continue that legacy.

Combining Domain Knowledge with Data is another major advantage. You can build a Bayesian network purely from expert knowledge, purely from data, or from a combination of both. This flexibility is valuable when data is scarce or expensive. Experts can specify the network structure based on their understanding, then the data can refine the probability tables.

Handling Missing Data Naturally distinguishes Bayesian networks from many other algorithms. When some variables are unobserved, the network simply marginalizes over them. You do not need to impute missing values or discard incomplete records. The probabilistic framework accounts for uncertainty about missing information.
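Here is a minimal sketch of marginalizing over a missing value. It assumes a hypothetical Disease node with two independent parents, Smoking and Pollution, and all probabilities are made up for illustration.

```python
# Hypothetical numbers: Disease has two parents, Smoking and Pollution.
p_pollution = {True: 0.3, False: 0.7}
p_disease = {  # P(Disease = True | Smoking, Pollution)
    (True, True): 0.30,
    (True, False): 0.10,
    (False, True): 0.05,
    (False, False): 0.01,
}

def disease_risk(smoking, pollution=None):
    """P(Disease = True) given Smoking, with Pollution observed or missing."""
    if pollution is not None:
        return p_disease[(smoking, pollution)]
    # Pollution is missing: average over its possible values, weighted by
    # its prior (valid here because Smoking and Pollution are independent roots).
    return sum(p_pollution[p] * p_disease[(smoking, p)] for p in (True, False))

print(round(disease_risk(True, pollution=True), 2))  # 0.3
print(round(disease_risk(True), 2))                  # 0.16
```

The record with the missing pollution reading still yields a usable prediction. The answer simply reflects the extra uncertainty.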

Causal Reasoning is possible with Bayesian networks when the graph structure reflects true causal relationships. You can ask what-if questions, such as “what would happen to the probability of disease if we administered this treatment?” This causal perspective is invaluable for decision making and planning.
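The difference between observing and intervening can be sketched with the Rain and Wet Grass example, assuming the arrow from Rain to Wet Grass is truly causal. The `do(...)` notation in the comments comes from the causal inference literature and is not used elsewhere in this article; the function names are illustrative.

```python
p_rain = 0.2
p_wet_given_rain = {True: 0.9, False: 0.1}

def rain_given_wet_observed():
    """P(Rain | WetGrass = True): seeing wet grass is evidence about rain."""
    numerator = p_wet_given_rain[True] * p_rain
    evidence = numerator + p_wet_given_rain[False] * (1 - p_rain)
    return numerator / evidence

def rain_given_wet_forced():
    """P(Rain | do(WetGrass = True)): we wet the grass ourselves.

    Intervening cuts the arrow from Rain into WetGrass, so the grass no
    longer carries information about rain and the prior is unchanged.
    """
    return p_rain

print(round(rain_given_wet_observed(), 3))  # 0.692
print(rain_given_wet_forced())              # 0.2
```

Observing wet grass makes rain more likely, but hosing down the lawn does not change the weather. That asymmetry is what a causal reading of the graph adds.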

Practical Applications of Bayesian Networks

Bayesian networks have found success across numerous domains. Their ability to model uncertainty and reason probabilistically makes them particularly valuable in high stakes applications.

Medical Diagnosis

Medical diagnosis is perhaps the classic application of Bayesian networks. A doctor observes symptoms and test results but rarely has complete information. There is always uncertainty. Bayesian networks help navigate this uncertainty by modeling the probabilistic relationships between diseases, symptoms, and risk factors.

Consider a simple diagnostic Bayesian network for a respiratory illness. Nodes might include smoking status, air pollution exposure, presence of coughing, fever, chest pain, and the underlying disease. The network captures how smoking influences disease risk, how the disease influences symptoms, and how symptoms co-occur.

When a patient presents with coughing and fever, the Bayesian network computes the posterior probabilities of each possible disease. As more test results arrive, the network updates these probabilities. This dynamic updating mirrors how doctors think, refining diagnoses as evidence accumulates.
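The step-by-step updating can be sketched with two successive applications of Bayes’ theorem. All numbers below are hypothetical, and the sketch assumes the two symptoms are independent given the disease, which a real diagnostic network may not.

```python
def update(prior, p_evidence_if_disease, p_evidence_if_healthy):
    """One Bayes update of P(Disease) after observing a positive finding."""
    numerator = p_evidence_if_disease * prior
    return numerator / (numerator + p_evidence_if_healthy * (1 - prior))

# Hypothetical numbers; symptoms are assumed independent given the disease.
belief = 0.01                       # prior P(Disease)
belief = update(belief, 0.8, 0.1)   # patient is coughing
after_cough = belief
belief = update(belief, 0.7, 0.05)  # patient also has a fever
after_fever = belief

print(round(after_cough, 3))  # 0.075
print(round(after_fever, 3))  # 0.531
```

Each finding moves the belief further: a cough alone leaves the disease unlikely, while cough and fever together make it the more probable explanation under these assumed numbers.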

The history of AI in healthcare documents how probabilistic models like Bayesian networks have influenced medical practice. They can help reduce diagnostic errors, guide test selection, and provide quantitative risk assessments that support clinical decision making.

Risk Management and Fraud Detection

Financial institutions face constant threats from fraud and default risk. Bayesian networks help manage these risks by modeling the probabilistic relationships between customer attributes, transaction patterns, and fraudulent behavior.

For credit risk assessment, a Bayesian network might include nodes for credit score, income, debt level, employment status, and loan default. The network captures how these factors interact. A low credit score combined with high debt might indicate high default risk, while high income might offset some of that risk.

For fraud detection, the network models normal transaction patterns. When a transaction occurs, the Bayesian network computes the probability that it is fraudulent given the observed features. Unusual patterns trigger alerts for investigation. AI recommendation systems show how similar probabilistic methods power personalization and security across the web.

The advantage of Bayesian networks for risk management is their transparency. Regulators and auditors can examine the network structure and understand why certain transactions were flagged. This explainability is increasingly important as financial institutions face stricter oversight.

Frequently Asked Questions

1. What is the difference between Bayesian networks and neural networks?

Bayesian networks are probabilistic graphical models that represent uncertainty explicitly and are highly interpretable. Neural networks are connectionist models that learn patterns from data but operate as black boxes. Bayesian networks work well with small data and expert knowledge, while neural networks excel with large datasets.

2. How do Bayesian networks handle missing data?

Bayesian networks handle missing data naturally through marginalization. When a variable is unobserved, the network sums over all possible values of that variable, effectively averaging over the uncertainty.

3. Can Bayesian networks represent cycles or feedback loops?

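No, Bayesian networks must be acyclic. With a directed cycle, the product of conditional probabilities would no longer define a consistent joint distribution. For systems with feedback loops, dynamic Bayesian networks offer an alternative: they unroll the system over time steps, so feedback appears as edges from one time slice to the next and the graph itself stays acyclic.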

4. What is the Markov blanket in a Bayesian network?

The Markov blanket of a node consists of its parents, children, and other parents of its children. This set of nodes completely shields the node from the rest of the network, making it conditionally independent of all other variables given its Markov blanket.

5. Are Bayesian networks only for discrete variables?

No, Bayesian networks can handle both discrete and continuous variables. Continuous variables are often modeled with Gaussian distributions, leading to Gaussian Bayesian networks.

6. How do I learn the structure of a Bayesian network from data?

Structure learning algorithms search through possible graph structures to find one that fits the data well. Common approaches include score-based methods, which optimize a scoring function, and constraint-based methods, which test conditional independencies.
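The Markov blanket described in question 4 can be read directly off the graph. The sketch below assumes the network is stored as a dictionary mapping each node to its parents; the network itself and the function name are hypothetical.

```python
def markov_blanket(node, parents):
    """Parents, children, and the children's other parents of `node`."""
    children = [n for n, ps in parents.items() if node in ps]
    blanket = set(parents[node]) | set(children)
    for child in children:
        blanket |= set(parents[child])
    blanket.discard(node)  # A node is not part of its own blanket.
    return blanket

# Hypothetical network: each node maps to the list of its parents.
parents = {
    "Smoking": [],
    "Pollution": [],
    "Disease": ["Smoking", "Pollution"],
    "Cold": [],
    "Cough": ["Disease", "Cold"],
    "Fever": ["Cold"],
}

print(sorted(markov_blanket("Disease", parents)))
# ['Cold', 'Cough', 'Pollution', 'Smoking']
```

Cold appears in the blanket of Disease because both are parents of Cough, while Fever is left out because it is connected to Disease only through Cold.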

Conclusion

Bayesian networks represent a remarkably elegant approach to modeling uncertainty in artificial intelligence. By combining the visual clarity of graphs with the mathematical rigor of probability theory, they offer a framework that is both powerful and interpretable. From medical diagnosis to fraud detection, Bayesian networks have proven their value in high stakes applications where understanding the reasoning behind predictions matters as much as the predictions themselves.

The mathematical foundation of Bayesian networks, rooted in Bayes’ theorem, provides a principled way to update beliefs as new evidence arrives. This dynamic reasoning mirrors how humans think, refining understanding incrementally. The graph structure captures causal relationships and conditional independencies, making the models efficient and transparent.

As you explore the broader landscape of artificial intelligence, you will find that Bayesian networks complement other approaches. The history of knowledge representation in artificial intelligence shows how representing uncertainty has been a long-standing challenge. Bayesian networks provide one of the most successful solutions. The modern artificial intelligence applications we see today, from self-driving cars to virtual assistants, increasingly rely on probabilistic reasoning to navigate an uncertain world.

Understanding Bayesian networks is not just learning another algorithm. It is gaining a new way to think about uncertainty, causality, and probabilistic reasoning. These skills are essential for anyone serious about building intelligent systems that operate in the real world. The fascinating history of the Turing test reminds us that intelligence requires handling ambiguity. Bayesian networks give us the tools to do exactly that.