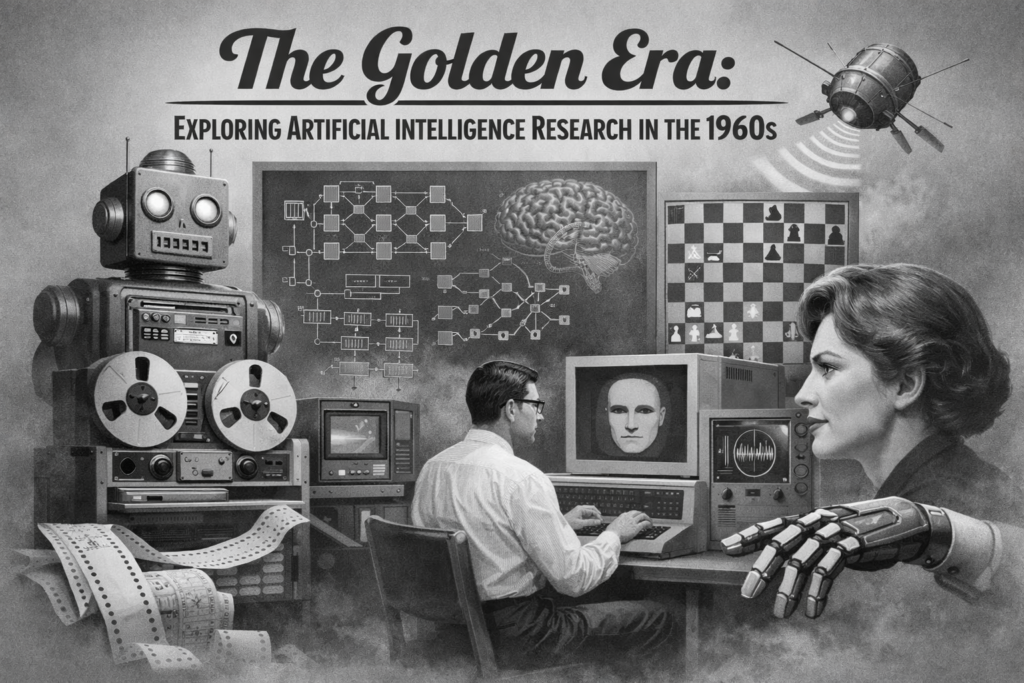

Artificial intelligence research in the 1960s was a bold and optimistic period that shaped the future of modern computing. The decade is often called the golden era of AI, a time when leading scientists believed machines could soon match human intelligence.

Fueled by government funding, academic curiosity, and new ideas, researchers explored symbolic reasoning, early natural language processing, and robotics. Although many predictions proved overly ambitious, the foundations laid during this time continue to influence today’s AI technologies.

AI research in the 1960s was not just about building machines; it was about redefining intelligence itself.

The Visionaries: McCarthy, Minsky, and the Dartmouth Legacy

Research in the 1960s was driven by pioneering thinkers such as John McCarthy and Marvin Minsky. Their ideas, which grew out of the famous 1956 Dartmouth conference, shaped the direction of AI research for decades.

Symbolic AI: The Logic-Based Approach to Thinking Machines

One of the dominant approaches of the decade was symbolic AI.

Symbolic AI focused on representing knowledge using logic, rules, and symbols. Researchers believed that intelligence could be replicated by encoding reasoning processes into machines.

This approach led to systems like the General Problem Solver, which attempted to mimic human reasoning.

Symbolic reasoning is also the foundation of expert systems in artificial intelligence, where knowledge is represented through rules and logical structures.

Although powerful in controlled environments, symbolic AI struggled with ambiguity and real-world complexity.
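To make the idea of rules and symbols concrete, here is a minimal sketch of forward chaining, one common way a rule-based system derives new facts. The rule base and fact names are hypothetical and chosen only for illustration; they do not come from any historical system.

```python
# Hypothetical rule base: each rule is (set of premises, conclusion).
RULES = [
    ({"has_feathers"}, "is_bird"),
    ({"is_bird", "can_fly"}, "can_migrate"),
]


def forward_chain(facts, rules):
    """Apply the rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known
```

Starting from `{"has_feathers", "can_fly"}`, the sketch derives `is_bird` and then `can_migrate`. A fact worded slightly differently, such as `"feathered"`, matches no rule at all, which hints at why this style of system struggled with ambiguity.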

LISP: Creating the Language of Artificial Intelligence

To support AI development, John McCarthy created LISP in 1958, one of the first programming languages designed with AI research in mind.

LISP treated both programs and data as lists of symbols, which made it well suited to manipulating symbolic expressions and representing knowledge.

It became the backbone of AI research in the 1960s and remained influential for decades.
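The kind of symbol manipulation LISP encouraged can be sketched in a few lines. The example below is written in Python rather than LISP, and uses nested tuples in prefix form, such as `("*", "x", "x")`, as a stand-in for LISP lists. The function name `diff` and the two supported operators are assumptions made for this illustration.

```python
def diff(expr, var):
    """Differentiate a prefix expression such as ("+", "x", 3) with respect to var."""
    if isinstance(expr, (int, float)):
        return 0  # the derivative of a constant is zero
    if isinstance(expr, str):
        return 1 if expr == var else 0  # a symbol is either var or another variable
    op, left, right = expr
    if op == "+":
        # Sum rule: (u + v)' = u' + v'
        return ("+", diff(left, var), diff(right, var))
    if op == "*":
        # Product rule: (u * v)' = u' * v + u * v'
        return ("+", ("*", diff(left, var), right), ("*", left, diff(right, var)))
    raise ValueError(f"unsupported operator: {op!r}")
```

Calling `diff(("*", "x", "x"), "x")` returns `("+", ("*", 1, "x"), ("*", "x", 1))`. The result is another symbolic expression, not a number, and the sketch makes no attempt to simplify it.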

Many early AI programs were written in LISP, enabling rapid experimentation and innovation.

This period also saw the first AI programs, as researchers began implementing early intelligent systems.

Major Milestones of the Sixties

The decade produced several milestones that demonstrated the potential of intelligent machines.

ELIZA: The World’s First Glimpse of Conversational AI

One of the most famous innovations was ELIZA, an early chatbot created by Joseph Weizenbaum at MIT in the mid-1960s.

Its best-known script, DOCTOR, simulated a Rogerian psychotherapist by matching keywords in the user's input and reflecting them back as questions.

Although it lacked true understanding, ELIZA created the illusion of conversation and surprised many users.
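The pattern-matching idea can be sketched with a few regular expressions. The rules and replies below are hypothetical and are not taken from Weizenbaum's original script; they only show how a keyword match can be turned into a reply without any understanding of the input.

```python
import re

# Hypothetical patterns and reply templates, for illustration only.
PATTERNS = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."


def respond(text):
    """Return the reply for the first matching pattern, or a stock phrase."""
    cleaned = text.strip().rstrip(".!?")
    for pattern, template in PATTERNS:
        match = pattern.search(cleaned)
        if match:
            # Echo the captured words back inside the reply template.
            return template.format(*match.groups())
    return DEFAULT_REPLY
```

For example, `respond("I am tired.")` returns `"Why do you say you are tired?"`, while an input that matches no pattern, such as `"Hello"`, falls back to `"Please go on."`.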

This milestone is part of the history of AI assistants and chatbots, a field in which conversational AI is still evolving today.

ELIZA showed that even simple systems could mimic human-like interaction.

Shakey the Robot: Merging Computer Vision and Navigation

Another remarkable achievement of the decade was Shakey the Robot.

Developed at the Stanford Research Institute between 1966 and 1972, Shakey combined perception, reasoning, and action.

It used early computer vision techniques to navigate its environment and make decisions.

Shakey is often considered the first mobile robot capable of reasoning about its actions.

This innovation contributed to the broader history of robotics and artificial intelligence, where machines interact with the physical world.

Shakey demonstrated that AI could extend beyond abstract reasoning into real-world applications.

Government Funding and the DARPA Influence

The rapid progress of AI research in the 1960s was largely driven by government funding, particularly from ARPA (the Advanced Research Projects Agency, renamed DARPA in 1972).

The Lighthill Report and the Impending Funding Crisis

Despite early optimism, progress was slower than expected.

The Lighthill Report, published in the United Kingdom in 1973, criticized the lack of real-world results from AI research.

This report contributed to reduced funding, especially in Britain, and marked the beginning of the first AI winter, a period in which enthusiasm for AI declined.

The challenges highlighted in this report exposed the limitations of early AI systems.

High Hopes: The Prediction of Human-Level AI by 1980

During the 1960s, many researchers predicted that machines would achieve human-level intelligence within two decades. Herbert Simon, for example, predicted in 1965 that machines would be capable, within twenty years, of doing any work a man can do.

These predictions fueled investment but also set unrealistic expectations.

When these goals were not met, disappointment followed.

However, the ambition of this era pushed the boundaries of what was possible and inspired future generations of researchers.

The First AI Winter: Lessons from the 1960s Failures

The optimism of the 1960s eventually gave way to a period of disillusionment in the 1970s known as the first AI winter.

The Limitation of Rule-Based Systems

Rule-based systems were powerful but rigid.

They struggled with:

Handling ambiguity in language

Adapting to new situations

Scaling to complex real-world problems

These limitations highlighted the need for more flexible approaches.

This realization eventually led to the rise of neural networks, in which machines learn from data rather than relying solely on rules.

Why 1960s Research is Still Relevant to Modern LLMs

Despite its challenges, AI research from the 1960s remains relevant today.

Modern Large Language Models (LLMs) are built on neural networks rather than hand-written rules, but they tackle problems first framed during this era, such as language understanding, reasoning, and structured knowledge representation.

The history of large language models builds on these early ideas.

Additionally, modern approaches such as self-supervised learning in artificial intelligence continue the shift toward learning-based systems.

The legacy of the 1960s proves that early failures can lead to long-term success.

Additional Breakthrough: The General Problem Solver

One milestone that is often overlooked is the General Problem Solver (GPS).

Developed by Allen Newell, J. C. Shaw, and Herbert Simon, this system aimed to simulate human problem-solving strategies.

It used means-ends analysis, a heuristic search method that repeatedly picks an action to reduce the difference between the current state and the goal.

Although limited in scope, it represented a major step toward understanding human cognition and machine reasoning.
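The heuristic search behind the General Problem Solver can be illustrated with a heavily simplified sketch of means-ends analysis. This is not the original implementation: the `Operator` structure, the `achieve` function, the depth limit, and the coffee-buying example are all assumptions made for illustration.

```python
from typing import NamedTuple


class Operator(NamedTuple):
    name: str
    needs: tuple    # conditions that must hold before the action
    adds: tuple     # conditions the action makes true
    removes: tuple  # conditions the action makes false


def achieve(state, goal, operators, depth=8):
    """Return (new_state, plan) that makes `goal` true, or None if no plan is found."""
    if goal in state:
        return state, []
    if depth == 0:
        return None  # give up rather than recurse forever
    for op in operators:
        if goal not in op.adds:
            continue  # this operator does not reduce the difference
        current, plan = state, []
        for need in op.needs:
            result = achieve(current, need, operators, depth - 1)
            if result is None:
                break
            current, steps = result
            plan = plan + steps
        else:
            # Check that achieving one precondition did not undo another.
            if all(need in current for need in op.needs):
                current = (current - set(op.removes)) | set(op.adds)
                return current, plan + [op.name]
    return None


# Hypothetical operators for a toy errand.
OPERATORS = [
    Operator("walk to shop", ("at home",), ("at shop",), ("at home",)),
    Operator("buy coffee", ("at shop", "has money"), ("has coffee",), ("has money",)),
]
```

Starting from `frozenset({"at home", "has money"})` with the goal `"has coffee"`, the sketch returns the plan `["walk to shop", "buy coffee"]`. Without `"has money"` in the starting state it returns `None`, because no operator can supply the missing condition.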

The Influence on Modern AI Development

AI research in the 1960s continues to influence modern AI in several ways:

It introduced foundational concepts in symbolic reasoning

It established programming tools like LISP

It inspired advancements in robotics and language processing

Its limitations highlighted the importance of learning-based approaches

Today’s AI systems are far more advanced, but they still build on these early ideas.

The progress we see today is part of a long journey that began during this golden era.

Researchers now explore the future of artificial intelligence technology, in which systems become more adaptive and capable.

Frequently Asked Questions (FAQs)

What was Artificial Intelligence Research in the 1960s?

It was a foundational period in AI where researchers explored symbolic reasoning, early chatbots, and robotics, laying the groundwork for modern AI systems.

Who were the key figures in 1960s AI research?

Key figures included John McCarthy, Marvin Minsky, Allen Newell, and Herbert Simon.

What is symbolic AI?

Symbolic AI is an approach that represents knowledge using logical rules and symbols to simulate human reasoning.

Why did the first AI winter happen?

The first AI winter occurred due to unrealistic expectations, limited computational power, and the inability of early systems to solve complex real-world problems.

How did the 1960s influence modern AI?

The concepts, tools, and lessons from the 1960s continue to shape modern AI, including machine learning, robotics, and natural language processing.

Conclusion

AI research in the 1960s represents a remarkable chapter in technological history.

It was a time of bold ideas, groundbreaking innovations, and ambitious goals that shaped the future of artificial intelligence.

Although many early systems fell short of expectations, their contributions laid the foundation for modern AI breakthroughs.

From symbolic reasoning to robotics and language processing, the legacy of this era continues to influence today’s technologies.

The journey that began in the 1960s reminds us that innovation often requires both success and failure, and that even the most ambitious dreams can eventually become reality.