Introduction to Computer Vision

The history of computer vision in artificial intelligence is a story of human ambition, scientific breakthroughs, and the persistent effort to teach machines how to “see.” For humans, vision feels almost effortless: our brains process light, recognize shapes, and interpret complex scenes in a fraction of a second. Replicating that ability in machines, however, has proven to be one of the most formidable challenges in computer science. Tracing this history shows how far the field has come, from struggling to recognize basic geometric shapes to building systems that, on some specific diagnostic tasks, have been reported to match specialists at reading medical scans.

At its core, the history of computer vision is the story of turning pixels into meaning. The field has advanced alongside broader gains in computing power and algorithm design. Today, computer vision is not just a theoretical research area; it underpins much of the digital transformation reshaping our daily lives.

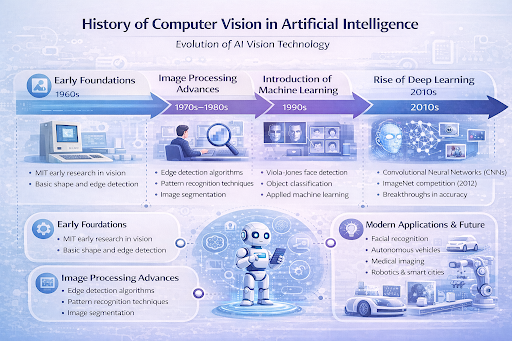

Early Foundations of Computer Vision (1960s)

The 1960s mark the ambitious, if naive, beginnings of the field. Early researchers believed that visual perception could be solved quickly, perhaps as a single summer project. Their optimism owed much to the 1956 Dartmouth Conference, the workshop widely credited with founding artificial intelligence as a field.

Early computer vision systems of this era focused heavily on “block worlds.” Pioneers like Larry Roberts, often considered the father of computer vision, created programs capable of extracting 3D geometric information from 2D views of solid blocks. In 1966, MIT ran the “Summer Vision Project,” outlined in a memo by Seymour Papert and often associated with Marvin Minsky, which tasked a group of students with building a significant part of a visual system in just a few months. While the project fell far short of that goal, it helped establish computer vision as a dedicated and highly complex subfield of AI.

Development of Image Processing Techniques (1970s–1980s)

The 1970s and 1980s brought a shift toward rigorous mathematics and image processing. Researchers realized that before a computer could “understand” an image, it needed robust ways to process the raw visual data. This led to fundamental image processing techniques such as edge detection (finding sharp changes in brightness), optical flow (estimating apparent motion between frames), and image segmentation (dividing an image into coherent regions).

This period was a critical stepping stone. Neuroscientist David Marr proposed a framework for vision that progressed from a 2D “primal sketch” through an intermediate “2.5D sketch” to a full 3D model, bridging the gap between biological vision and machine perception. Neural network ideas descended from the perceptron of the late 1950s were also being explored, though computing power was far too limited to make them viable for complex imagery. Together, this work laid the essential groundwork: machines could now detect boundaries, lines, and curves, and the field moved from simple block analysis toward interpreting the messy reality of natural images.
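To make edge detection concrete, here is a minimal sketch of the Sobel operator, one classic gradient-based edge detector. It is written in plain Python for readability, not speed; the function name `sobel_magnitude` and the toy image are illustrative assumptions, not taken from any particular library.

```python
# Standard 3x3 Sobel kernels: approximate the horizontal (X) and
# vertical (Y) intensity gradient around each pixel.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def sobel_magnitude(image):
    """Return the gradient magnitude for a 2D grayscale image (list of rows).

    Border pixels are left at 0.0 because the 3x3 window does not fit there.
    """
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = gy = 0
            for dy in range(3):
                for dx in range(3):
                    pixel = image[y + dy - 1][x + dx - 1]
                    gx += SOBEL_X[dy][dx] * pixel
                    gy += SOBEL_Y[dy][dx] * pixel
            out[y][x] = (gx * gx + gy * gy) ** 0.5
    return out


# Hypothetical toy image: dark left half, bright right half.
toy_image = [[0, 0, 0, 255, 255, 255] for _ in range(5)]
edges = sobel_magnitude(toy_image)
```

In this toy case, the response is large only in the two columns where dark meets bright and zero in the flat regions, which is exactly the "boundary" signal that later stages of a vision system build on.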

Machine Learning and Computer Vision (1990s)

The field took a sharp turn in the 1990s with the adoption of statistical methods and early machine learning algorithms. Instead of relying solely on rigid, hand-crafted rules to detect features, scientists began using data to train models. Progress accelerated as researchers applied techniques like Support Vector Machines (SVMs) and Principal Component Analysis (PCA) to visual tasks.

This period stands out for achieving real-world viability. The statistical approach of the 1990s culminated shortly after the decade ended, in 2001, with the Viola-Jones object detection framework. It allowed for real-time face detection on basic hardware, changing the way machines interacted with human subjects. Machine learning shifted the paradigm from explicitly programming a computer how to see to teaching it to recognize patterns from large numbers of examples.
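One reason Viola-Jones could run in real time is the integral image (summed-area table), which lets the sum of any rectangle of pixels be read with four lookups. The sketch below illustrates that idea only; it is not the full detector, which also involves boosted classifiers arranged in a cascade. The function names and toy data are assumptions made for this example.

```python
def integral_image(image):
    """Build a (h+1) x (w+1) summed-area table with a zero top row and left column."""
    height, width = len(image), len(image[0])
    table = [[0] * (width + 1) for _ in range(height + 1)]
    for y in range(height):
        row_sum = 0
        for x in range(width):
            row_sum += image[y][x]
            table[y + 1][x + 1] = table[y][x + 1] + row_sum
    return table


def rect_sum(table, top, left, height, width):
    """Sum of the pixels inside a rectangle, using only four table lookups."""
    bottom, right = top + height, left + width
    return (table[bottom][right] - table[top][right]
            - table[bottom][left] + table[top][left])


def two_rect_feature(table, top, left, height, width):
    """Haar-like feature: sum of the right half minus sum of the left half."""
    half = width // 2
    left_sum = rect_sum(table, top, left, height, half)
    right_sum = rect_sum(table, top, left + half, height, half)
    return right_sum - left_sum


# Hypothetical 4x4 patch: dark on the left, bright on the right.
toy = [[1, 1, 9, 9],
       [1, 1, 9, 9],
       [1, 1, 9, 9],
       [1, 1, 9, 9]]
table = integral_image(toy)
total = rect_sum(table, 0, 0, 4, 4)
contrast = two_rect_feature(table, 0, 0, 4, 4)
```

Because each rectangle sum costs the same regardless of its size, a detector can evaluate thousands of such contrast features per window cheaply, which is what made basic hardware sufficient.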

Deep Learning Revolution in Computer Vision (2010s)

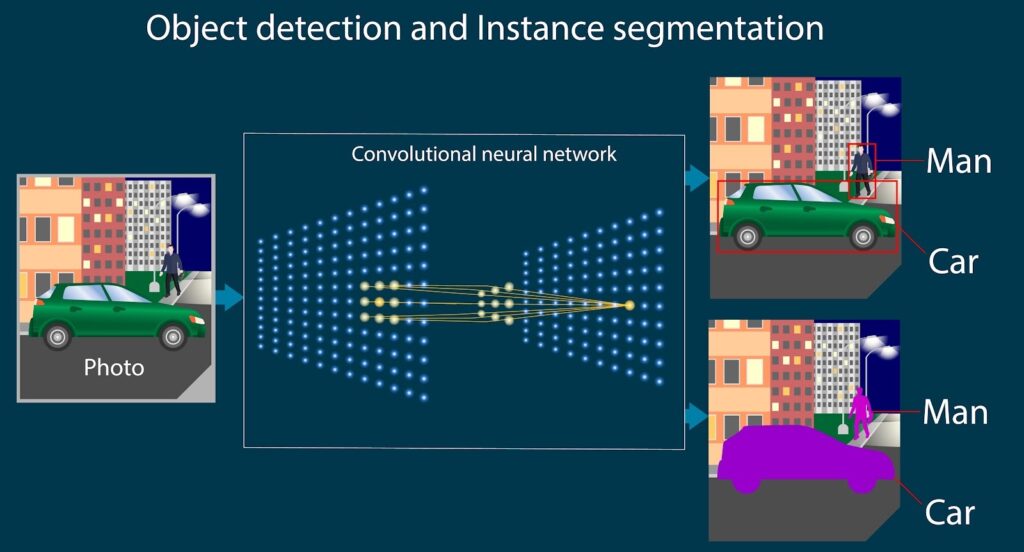

The decisive turning point arrived in the 2010s with the deep learning revolution. While neural networks had been around for decades, the combination of massive datasets (like ImageNet) and the parallel processing power of modern GPUs created a perfect storm for deep learning in computer vision.

In 2012, a Convolutional Neural Network (CNN) called AlexNet won the ImageNet Large Scale Visual Recognition Challenge by a wide margin, with a top-5 error rate of about 15% against roughly 26% for the runner-up. By stacking multiple layers of artificial neurons that automatically learn hierarchical features, from simple edges to complex object parts, convolutional neural networks made rapid progress on problems that had stumped researchers for decades. In the years that followed, image classification, object detection, and semantic segmentation reached unprecedented levels of accuracy, matching and sometimes surpassing human performance on specific benchmark tasks.
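The building block behind those hierarchical features can be sketched in a few lines: a convolution, a ReLU nonlinearity, and a pooling step. This is a plain-Python illustration with a hand-picked kernel, not AlexNet itself; in a real CNN the kernel weights are learned from data, and the names below are assumptions for the example.

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image with no padding and stride 1.

    Like most deep learning libraries, this computes cross-correlation
    (the kernel is not flipped).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(kernel[i][j] * image[y + i][x + j]
                 for i in range(kh) for j in range(kw))
             for x in range(out_w)]
            for y in range(out_h)]


def relu(feature_map):
    """Zero out negative responses, keeping only positive activations."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool_2x2(feature_map):
    """Non-overlapping 2x2 max pooling; an odd trailing row or column is dropped."""
    h = len(feature_map) // 2 * 2
    w = len(feature_map[0]) // 2 * 2
    return [[max(feature_map[y][x], feature_map[y][x + 1],
                 feature_map[y + 1][x], feature_map[y + 1][x + 1])
             for x in range(0, w, 2)]
            for y in range(0, h, 2)]


# Hand-picked kernel that responds to dark-to-bright vertical edges.
# In a trained CNN these weights would be learned, not written by hand.
vertical_edge_kernel = [[-1, 1],
                        [-1, 1]]

# Hypothetical 5x5 image with a bright vertical stripe.
toy_image = [[0, 0, 1, 1, 0] for _ in range(5)]

features = max_pool_2x2(relu(conv2d_valid(toy_image, vertical_edge_kernel)))
```

Stacking several such layers is what lets later layers respond to combinations of edges (corners, textures, object parts) rather than to raw pixels.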

Modern Applications of Computer Vision

The practical results of this history are now everywhere you look. What started as an academic exercise is now woven into consumer products and enterprise solutions. The modern applications below show just how capable and transformative this technology has become.

Facial Recognition

Facial recognition is perhaps the most widely recognized application today. Used for everything from unlocking our smartphones to securing international borders, modern facial recognition algorithms map the geometry of a human face with astonishing precision. By comparing these maps against massive databases, systems can identify individuals instantly, streamlining security and identity verification protocols worldwide.
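The matching step can be sketched as a nearest-neighbor search. This example assumes each face has already been converted into a numeric vector (often called an embedding) by some upstream model; the vectors, names, and threshold below are made up for illustration and do not reflect any real system.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def identify(probe, gallery, threshold=0.9):
    """Return the best-matching name, or None if no score reaches the threshold."""
    best_name, best_score = None, threshold
    for name, embedding in gallery.items():
        score = cosine_similarity(probe, embedding)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical enrolled faces; real embeddings have hundreds of dimensions.
gallery = {
    "alice": [0.9, 0.1, 0.3],
    "bob": [0.1, 0.8, 0.5],
}

match = identify([0.88, 0.12, 0.31], gallery)    # close to alice's vector
unknown = identify([-0.5, 0.2, -0.7], gallery)   # unlike anyone enrolled
```

The threshold is the key design choice: set it too low and strangers are matched to enrolled identities, set it too high and genuine users are rejected.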

Autonomous Vehicles

The dream of self-driving cars relies heavily on advanced vision systems. Vehicles equipped with arrays of cameras, LiDAR, and radar use computer vision to interpret their surroundings in real time. These systems must reliably identify pedestrians, read traffic lights, detect lane markings, and anticipate the movements of other vehicles, all while traveling at high speeds.

Medical Imaging

In the healthcare sector, computer vision is helping to save lives. Algorithms trained on large collections of X-rays, MRIs, and CT scans can now flag anomalies like tumors, fractures, and internal bleeding. In many cases, these AI models act as a second pair of eyes for radiologists, catching subtle details that the human eye might miss and speeding up the diagnostic process.

Retail and Shopping

Retailers are leveraging computer vision to revolutionize the shopping experience. Amazon Go stores, for instance, use ceiling-mounted cameras and object detection to track which items a customer picks up, automatically charging them as they walk out the door. Furthermore, AI vision is used for inventory management, ensuring shelves are stocked and identifying misplaced items without manual intervention.

Industrial Automation

In manufacturing and industrial settings, computer vision ensures quality control on assembly lines. High-speed cameras inspect products for microscopic defects, ensuring that faulty components never make it to the consumer. Additionally, vision-guided robotic arms can pick, pack, and sort items with a level of precision and tireless efficiency that humans simply cannot match.

Future of Computer Vision

The next chapter of computer vision is being written today. We are witnessing a shift toward Edge AI, where vision processing happens directly on local devices (like smart cameras or drones) rather than on cloud servers. This reduces latency and enhances privacy. Furthermore, Vision Transformers (ViTs), which split an image into patches and use attention to relate every patch to every other, are challenging the dominance of CNNs and promise better understanding of context across entire images.
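The first step of a Vision Transformer, turning an image into a sequence of patch "tokens," can be sketched as follows. This covers only the patch-splitting stage under simplified assumptions (a single-channel image stored as a list of rows); the learned projection, position embeddings, and attention layers that follow are omitted, and the function name is hypothetical.

```python
def image_to_patches(image, patch_size):
    """Split a 2D image into non-overlapping square patches.

    Each patch is flattened row by row. Patches are ordered left to right,
    then top to bottom, giving the token sequence a transformer would consume.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [image[top + dy][left + dx]
                     for dy in range(patch_size)
                     for dx in range(patch_size)]
            patches.append(patch)
    return patches


# Hypothetical 4x4 image whose pixel values are simply 0..15.
toy_image = [[row * 4 + col for col in range(4)] for row in range(4)]
tokens = image_to_patches(toy_image, 2)
```

Here a 4x4 image becomes four tokens of four values each. Because attention compares every token with every other, a patch in one corner can directly influence how a patch in the opposite corner is interpreted.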

Innovation will continue to shape the field. We will likely see more seamless multimodal AI, where computer vision is combined with natural language processing, allowing you to ask an AI a complex question about a live video feed and receive a conversational, accurate answer. However, the future will also require us to tackle ethical concerns, such as algorithmic bias in facial recognition and the societal impacts of deepfakes.

Frequently Asked Questions

What is the most important milestone in the history of computer vision in artificial intelligence?

While there are many, AlexNet's victory in the 2012 ImageNet competition is widely considered the modern watershed moment. It showed that deep neural networks, trained on GPUs, could handle complex visual tasks better than the methods that came before.

How does computer vision differ from image processing?

Image processing involves manipulating an image to enhance it or extract basic information (like sharpening a blurry photo or finding edges). Computer vision goes a step further by using AI to understand and make decisions based on that image (like recognizing that the edges form a picture of a cat).

Why did it take so long for computer vision to become accurate?

Human vision is enormously complex, the product of millions of years of evolution. Early computers lacked the processing power (later supplied by GPUs), the vast amounts of labeled training data, and the sophisticated algorithms (like CNNs) required to approximate this level of perception.

Conclusion

In summary, computer vision has evolved from an overly optimistic summer project in the 1960s into a foundational pillar of modern technology. We have journeyed through the block worlds of early researchers, the mathematical rigor of the 1970s and 1980s, the statistical shift of the 1990s, and the explosive deep learning revolution of the 2010s.

This history shows that machines can indeed be taught to perceive our world. As algorithms become more sophisticated and hardware continues to improve, the gap between human and machine vision will continue to narrow, unlocking possibilities we have only just begun to imagine.