The ability of computers to understand, interpret, and generate human language is one of the most remarkable achievements in modern computing. The history of natural language processing spans several decades, moving from rigid, rule-heavy systems to the fluent, data-driven artificial intelligence we interact with today. By exploring that evolution, we can better understand how machines learned to “speak” our language and what the future might hold for human-computer interaction.

History of Natural Language Processing

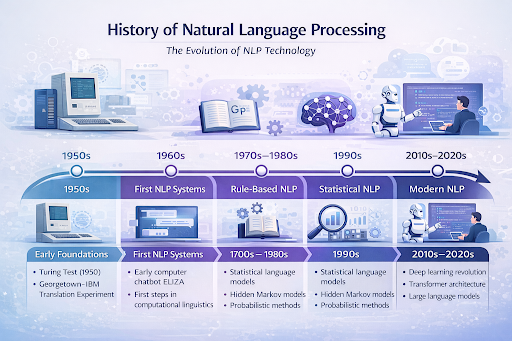

Understanding the history of natural language processing requires looking back at the intersection of computer science, linguistics, and cognitive psychology. In its earliest days, the field overlapped heavily with what came to be called computational linguistics, and it focused largely on automating the translation of text from one language to another. Over time, the NLP timeline shows a dramatic shift from humans explicitly programming grammar rules into machines to machines learning the complexities of language from massive amounts of data. That history is not a straight line of progress; it is filled with ambitious beginnings, periods of stagnation, and eventual paradigm shifts that redefined what computers could achieve.

Introduction to Natural Language Processing

Natural Language Processing (NLP) is a branch of artificial intelligence concerned with enabling computers to process, interpret, and generate written and spoken language. NLP has grown rapidly within artificial intelligence, narrowing the gap between human communication and what computers can work with. Today, we rely on NLP for everyday tasks, from searching the web to using voice assistants on our smartphones. Getting to this point, however, required decades of development, from rudimentary translation attempts to sophisticated systems that can model context, tone, and intent.

Early Foundations of NLP (1950s)

The 1950s laid the theoretical groundwork for what would eventually become modern NLP. During this era, researchers were highly optimistic about the potential of machine translation. A landmark event in machine translation history was the 1954 Georgetown-IBM experiment, which translated more than 60 carefully selected Russian sentences into English using a vocabulary of only about 250 words and six grammar rules. This demonstration led to early, overly optimistic predictions that the machine translation problem would be completely solved within a few years.

A pivotal figure during this time was Alan Turing. His 1950 paper, “Computing Machinery and Intelligence,” proposed what is now called the Turing Test as a practical criterion for machine intelligence. The test depends on a computer’s ability to process and generate natural language well enough that a human evaluator cannot reliably tell it apart from a person. A few years later, John McCarthy and his colleagues introduced the term “artificial intelligence” in their 1955 proposal for the 1956 Dartmouth workshop, setting the stage for the first AI programs that attempted to tackle complex language tasks.

The First NLP Systems (1960s)

In the 1960s, researchers began building the first working natural language processing systems. One of the most famous was the ELIZA chatbot, created by Joseph Weizenbaum at MIT between 1964 and 1966. ELIZA simulated conversation through pattern matching and substitution, and its best-known script mimicked a Rogerian psychotherapist.
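The pattern matching and substitution idea can be sketched in a few lines of Python. This is only an illustration of the technique: the rules, the `REFLECTIONS` table, and the function names are invented here and are not taken from Weizenbaum's original script.

```python
import re

# Hypothetical rules for illustration; these are not ELIZA's original script.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]

# Swap first- and second-person words so the echoed fragment reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}


def reflect(fragment: str) -> str:
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(w, w) for w in words)


def respond(utterance: str) -> str:
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Substitute the captured fragment into the canned reply.
            return template.format(reflect(match.group(1)))
    return "Please go on."  # fallback when no pattern matches


print(respond("I need a break from my job."))
```

With these rules, the input "I need a break from my job." produces "Why do you need a break from your job?". Nothing in the program models meaning; it only rearranges the user's own words.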

While ELIZA was highly influential in the history of NLP research, it had no real understanding of the conversation. Another major milestone, begun at the end of the decade, was SHRDLU, a program developed by Terry Winograd at MIT between 1968 and 1970 that could understand and execute natural language commands within a simulated “blocks world.” These systems demonstrated that machines could interact using human language, even though each worked only within a narrow, hand-built domain.

Rule-Based NLP Era (1970s–1980s)

During the 1970s and 1980s, the field shifted heavily toward rule-based NLP. Linguists and computer scientists worked together to painstakingly encode the grammatical rules and vocabularies of human languages into computer algorithms. This era saw the rise of expert systems, which were designed to solve complex problems by reasoning through bodies of knowledge, represented mainly as “if-then” rules.
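To make the “if-then” idea concrete, here is a minimal forward-chaining sketch. The rules and fact names are hypothetical and chosen only for illustration; real expert systems of the period were far larger and often used more elaborate inference strategies.

```python
# Hypothetical rules: each maps a set of required facts to one conclusion.
RULES = [
    ({"has_fever", "has_cough"}, "possible_flu"),
    ({"possible_flu", "short_of_breath"}, "refer_to_doctor"),
]


def forward_chain(facts):
    """Apply the rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in RULES:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


derived = forward_chain({"has_fever", "has_cough", "short_of_breath"})
```

Every conclusion the system can reach has to be anticipated by a hand-written rule, which hints at why this approach was hard to stretch across open-ended language.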

While expert systems found success in very specific, constrained medical or corporate domains, they struggled immensely with the inherent ambiguity and flexibility of everyday human language. The fragility of these rule-based systems, combined with a lack of computational power, contributed to periods of diminished funding and reduced interest in AI research, widely known as the AI winters. Despite these setbacks, the work done during these decades provided crucial structural insights that would inform the later evolution of natural language processing.

Statistical NLP Revolution (1990s)

The limitations of hand-coded rules eventually paved the way for a revival of artificial intelligence in the 1990s. Instead of trying to teach computers the strict rules of linguistics, researchers began to feed computers large amounts of text and let them find patterns themselves. This marked the rise of statistical natural language processing.

Probabilistic Language Models

Instead of defining what was grammatically correct, probabilistic language models calculated the mathematical likelihood of a specific sequence of words occurring. This allowed systems to make educated “guesses” about text, significantly improving speech recognition and translation accuracy by relying on data rather than rigid programming.
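A bigram model is one of the simplest instances of this idea: it estimates the probability of each word given the word before it by counting. The sketch below uses a tiny made-up corpus and unsmoothed maximum-likelihood estimates; the corpus and function names are assumptions for illustration only.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, purely for illustration.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "the cat chased the dog",
]

# Count how often each word follows each preceding word.
bigram_counts = defaultdict(Counter)
for sentence in corpus:
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    for prev, curr in zip(tokens, tokens[1:]):
        bigram_counts[prev][curr] += 1


def bigram_prob(prev: str, curr: str) -> float:
    """Maximum-likelihood estimate of P(curr | prev), with no smoothing."""
    total = sum(bigram_counts[prev].values())
    return bigram_counts[prev][curr] / total if total else 0.0


def sentence_prob(sentence: str) -> float:
    """Probability of a sentence as the product of its bigram probabilities."""
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    prob = 1.0
    for prev, curr in zip(tokens, tokens[1:]):
        prob *= bigram_prob(prev, curr)
    return prob


plausible = sentence_prob("the cat sat on the rug")
scrambled = sentence_prob("rug the on sat cat the")
```

Here the unseen but well-formed sentence receives a small non-zero probability, while the scrambled word order receives zero. Practical systems add smoothing so that unseen word pairs are not ruled out entirely.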

Hidden Markov Models

Hidden Markov Models (HMMs) became the backbone of many NLP tasks during this time, particularly in part-of-speech tagging and speech recognition.

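An HMM assumes that each observed word is generated by a hidden state, such as a part-of-speech tag, and that each hidden state depends only on the state before it. Given these transition and emission probabilities, an algorithm can decode the most likely sequence of hidden linguistic labels from the observable text.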
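As a rough sketch of how decoding works in a part-of-speech tagger, the tags can be treated as hidden states and the words as observations, with the Viterbi algorithm recovering the most probable tag sequence. Every probability below is invented for the example, and the function assumes a non-empty list of words.

```python
# Toy HMM for part-of-speech tagging; every probability below is invented.
states = ["NOUN", "VERB"]
start_p = {"NOUN": 0.7, "VERB": 0.3}
trans_p = {
    "NOUN": {"NOUN": 0.3, "VERB": 0.7},
    "VERB": {"NOUN": 0.8, "VERB": 0.2},
}
emit_p = {
    "NOUN": {"dogs": 0.5, "chase": 0.1, "cats": 0.4},
    "VERB": {"dogs": 0.1, "chase": 0.8, "cats": 0.1},
}


def viterbi(words):
    """Return the most probable hidden tag sequence for the observed words."""
    # best[t][s] = (probability of the best path ending in state s at step t,
    #               the previous state on that path)
    best = [{s: (start_p[s] * emit_p[s].get(words[0], 0.0), None) for s in states}]
    for word in words[1:]:
        column = {}
        for s in states:
            prob, prev = max(
                (best[-1][p][0] * trans_p[p][s] * emit_p[s].get(word, 0.0), p)
                for p in states
            )
            column[s] = (prob, prev)
        best.append(column)
    # Trace back from the most probable final state.
    state = max(states, key=lambda s: best[-1][s][0])
    path = [state]
    for column in reversed(best[1:]):
        state = column[state][1]
        path.append(state)
    return list(reversed(path))


tags = viterbi(["dogs", "chase", "cats"])
```

With these toy numbers, "dogs chase cats" is tagged NOUN, VERB, NOUN. In a real tagger the probabilities would be estimated from an annotated corpus rather than written by hand.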

Corpus-Based Linguistics

This statistical revolution was powered by corpus-based linguistics—the study of language as expressed in large collections of real-world text (corpora). With the advent of the internet and increased digital storage, researchers suddenly had access to massive datasets, allowing statistical models to become increasingly accurate and robust.

Machine Learning and NLP (2000s)

The 2000s brought a deeper integration of machine learning into NLP. While the roots of neural computing date back to older concepts like the perceptron of the late 1950s, the 2000s saw a much broader range of machine learning algorithms applied to language. Techniques like Support Vector Machines (SVMs), decision trees, and logistic regression became standard tools for NLP tasks such as text classification and sentiment analysis.

During this decade, machine learning models moved beyond simple word counts. They learned how strongly different words and other hand-designed features were associated with specific topics or labels. This era bridged the gap between purely statistical counting methods and the neural architectures that were on the horizon, and it raised the accuracy that practitioners came to expect.

Deep Learning and Modern NLP (2010s–Present)

The 2010s witnessed the rise of neural networks, which transformed the field. Deep learning drastically reduced the need for manual feature engineering, allowing models to automatically discover the representations needed for language understanding. This era gave birth to the large language models that dominate tech news today.

Word Embeddings

A massive leap forward occurred in 2013 with the introduction of Word2Vec. This technique popularized word embeddings, which map words to dense numerical vectors. Words with similar meanings are placed close together in a multi-dimensional space, allowing computers to capture semantic relationships and nuances far better than earlier representations.
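“Close together” is usually measured with cosine similarity between vectors. The sketch below uses hand-made three-dimensional vectors purely for illustration; real embeddings are learned from text and typically have hundreds of dimensions.

```python
import math

# Hand-made 3-dimensional vectors for illustration only; these values are
# invented and do not come from Word2Vec or any trained model.
embeddings = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


royal = cosine_similarity(embeddings["king"], embeddings["queen"])
fruit = cosine_similarity(embeddings["king"], embeddings["apple"])
```

With these toy values, "king" scores much higher against "queen" than against "apple", which is the kind of relationship a trained embedding model is expected to surface on its own.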

Recurrent Neural Networks

Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks became the standard for handling sequential data like text. Because they possessed a form of “memory,” they could process a sentence word by word while retaining the context of earlier words, leading to massive improvements in machine translation and text generation.
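The “memory” in a plain recurrent network is a hidden vector that is updated once per word. The following sketch shows a single Elman-style update with invented weights and invented two-dimensional word vectors; it is not an LSTM, which adds gating on top of this basic loop, and a trained network would learn these values from data.

```python
import math

# Invented weights and 2-dimensional word vectors, for illustration only.
W_x = [[0.5, -0.3], [0.2, 0.8]]   # input-to-hidden weights
W_h = [[0.1, 0.4], [-0.6, 0.3]]   # hidden-to-hidden weights (the "memory" path)
word_vectors = {"not": [1.0, 0.0], "good": [0.0, 1.0]}


def matvec(matrix, vector):
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def rnn_step(hidden, x):
    """One Elman-style update: h_t = tanh(W_x x_t + W_h h_{t-1})."""
    pre = [a + b for a, b in zip(matvec(W_x, x), matvec(W_h, hidden))]
    return [math.tanh(p) for p in pre]


def encode(words):
    """Read the words in order, carrying the hidden state forward."""
    hidden = [0.0, 0.0]
    for word in words:
        hidden = rnn_step(hidden, word_vectors[word])
    return hidden


forward = encode(["not", "good"])
reverse = encode(["good", "not"])
```

Because each step mixes the new word with the previous hidden state, the two word orders end in different final states, which is what lets a recurrent model be sensitive to sequence.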

Transformer Models

In 2017, Google researchers introduced the Transformer architecture, a paradigm-shifting innovation.

Transformers rely on “attention mechanisms,” allowing the model to look at an entire sentence at once and weigh the importance of every word in relation to every other word. This architecture is the foundation for almost all modern, state-of-the-art large language models, including BERT and the GPT series, driving unprecedented leaps in language comprehension and generation.
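The core computation can be sketched as scaled dot-product self-attention. This simplified version uses the same invented token vectors as queries, keys, and values; actual Transformer models apply learned projections, multiple attention heads, and many stacked layers, none of which are shown here.

```python
import math


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a whole sequence at once."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this token against every token in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of every value vector.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Invented 2-dimensional token vectors, used as queries, keys and values alike.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
```

Each row of `weights` sums to one and records how much a token draws on every other token, so no word has to wait for information to be passed along step by step as in a recurrent network.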

Applications of Natural Language Processing

The culmination of this long history is a world where NLP is seamlessly integrated into our daily lives and business operations.

Machine Translation

Modern translation tools like Google Translate use neural machine translation (NMT) to provide highly accurate, context-aware translations across hundreds of languages instantly, a far cry from the word-for-word dictionary translations of the past.

Chatbots and Virtual Assistants

From Siri and Alexa to sophisticated customer service bots, modern virtual assistants use advanced NLP to process spoken or typed natural language, determine user intent, and execute commands or provide helpful, human-like responses.

Sentiment Analysis

Businesses rely on sentiment analysis to automatically process thousands of social media posts, reviews, and customer feedback forms to determine public opinion. The models can accurately detect whether a piece of text is positive, negative, or neutral.

Information Retrieval

Every time you type a query into a search engine, complex NLP algorithms go to work. They interpret your misspelled words, understand the semantic meaning behind your search, and retrieve the most relevant documents from billions of web pages.

Text Generation

Perhaps the most visible modern application, text generation allows AI to write essays, summarize long reports, write code, and draft emails. Using very large neural networks, these systems generate coherent, contextually appropriate text that is often difficult to distinguish from human writing.

FAQs

1. What was the earliest application of Natural Language Processing?

The earliest primary focus of NLP was machine translation. In the 1950s, particularly during the Cold War, researchers heavily invested in systems meant to automatically translate Russian text into English.

2. Why did rule-based NLP ultimately fail to scale?

Rule-based systems required linguists to manually write out every grammatical rule and exception. Because human language is constantly evolving, highly ambiguous, and full of slang and context-dependent meanings, it became impossible to manually code enough rules to cover every linguistic scenario.

3. How did deep learning change NLP?

Deep learning shifted the burden of learning from human programmers to the machine itself. Instead of writing rules, developers train large neural networks on very large text corpora, often hundreds of gigabytes to terabytes in size. The models learn the patterns, grammar, and semantics of language from that data, resulting in much higher accuracy and fluency.

4. What is a Large Language Model (LLM)?

An LLM is a type of AI system built on deep learning (specifically Transformer architectures) that has been trained on vast amounts of text data. These models can understand, summarize, translate, predict, and generate human language at an incredibly sophisticated level.

Conclusion

The history of natural language processing is a testament to the perseverance of researchers over the past seven decades. From the early, rigid rules of the 1950s to the statistical shift of the 1990s, and finally to the deep learning explosion of today, the journey has completely redefined how machines interact with human language. As we continue to refine large language models and explore new frontiers in AI, NLP will only become more deeply woven into the fabric of our daily technology.