Introduction to Speech Recognition Technology

The human desire to communicate with machines using natural voice is older than the modern computer itself. The history of speech recognition in artificial intelligence is a story of technological breakthroughs, shifting paradigms, and relentless innovation. At its foundation, speech recognition is the ability of a machine or program to identify words and phrases in spoken language and convert them into a machine-readable format.

Understanding the history of speech recognition provides critical context for how today’s intelligent systems operate. In the early days, teaching a machine to understand a single spoken digit was considered a monumental triumph. Today, the technology has given us systems capable of real-time translation, context-aware digital assistants, and seamless voice-to-text dictation. That trajectory traces a transition from hard-coded, rule-based systems to statistical models and, eventually, to algorithms that learn from data. Examining it shows how linguistics, computer science, and acoustic engineering intersect to make modern voice technology possible.

Early Experiments in Speech Recognition (1950s)

The 1950s marked the beginning of speech recognition research. During this decade, the groundwork was laid for what would eventually become a major subfield of computer science. The era also included the famous Dartmouth Conference of 1956, which popularized the term “Artificial Intelligence” and spurred early researchers to dream of machines that could mimic human cognitive faculties, including hearing and understanding.

The first notable achievement in voice recognition came in 1952 at Bell Labs with the creation of the “Audrey” system. Audrey (Automatic Digit Recognizer) was a bulky machine, filling a six-foot-high relay rack, that could recognize spoken digits from zero to nine. However, it had severe limitations: it could only understand a single designated speaker, and it required pauses between each number. Despite its constraints, Audrey proved that acoustic signals could be mechanically translated into actionable data, effectively launching the earliest speech recognition research.

Development of Early Speech Recognition Systems (1960s–1970s)

Building on the primitive success of the 1950s, researchers spent the next two decades tackling the complexities of larger vocabularies and continuous speech. In 1962, IBM introduced “Shoebox” at the Seattle World’s Fair. Shoebox could understand 16 spoken words, including digits and basic arithmetic commands, allowing users to operate a calculator using only their voice.

In the 1970s, the U.S. Department of Defense’s DARPA ran the Speech Understanding Research (SUR) program. This heavily funded initiative aimed to develop a machine that could understand continuous speech with a vocabulary of 1,000 words. The most successful outcome of this program was the “Harpy” system developed at Carnegie Mellon University. Harpy was a major milestone because it could understand entire sentences: it represented the word sequences it was allowed to hear as a network and searched that network for the most likely interpretation of a spoken phrase. This era laid crucial architectural foundations for later speech recognition systems.
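To make the idea of searching a network of word sequences more concrete, here is a minimal Python sketch of a beam search over a tiny word network. It is an illustration under stated assumptions, not a reconstruction of Harpy itself: the `NETWORK` table, the per-segment `scores`, and the `beam_search` function are all hypothetical names and numbers invented for this example.

```python
import math

# Hypothetical toy network: which word may follow which ("<s>" marks the start).
NETWORK = {
    "<s>": ["show", "give"],
    "show": ["me"],
    "give": ["me"],
    "me": ["chess", "news"],
    "chess": [],
    "news": [],
}


def beam_search(network, acoustic_scores, beam_width=2):
    """Return the most likely word sequence that the network allows.

    acoustic_scores[t][word] is an assumed probability that the t-th spoken
    segment sounds like `word`; words that are not listed get a tiny floor.
    """
    beam = [(0.0, ["<s>"])]  # (log-probability, path so far)
    for scores in acoustic_scores:
        candidates = []
        for logp, path in beam:
            for word in network[path[-1]]:
                p = scores.get(word, 1e-6)
                candidates.append((logp + math.log(p), path + [word]))
        if not candidates:
            raise ValueError("no path through the network matches the input")
        # Prune: keep only the best few partial sentences.
        beam = sorted(candidates, key=lambda c: c[0], reverse=True)[:beam_width]
    best_logp, best_path = beam[0]
    return best_path[1:], best_logp


# Made-up acoustic evidence for three spoken segments.
scores = [
    {"show": 0.6, "give": 0.4},
    {"me": 0.9},
    {"chess": 0.3, "news": 0.7},
]
words, logp = beam_search(NETWORK, scores)
print(" ".join(words), math.exp(logp))
```

The pruning step is the important design choice: rather than scoring every possible sentence, the search only carries forward the few partial sentences that look most promising so far, which keeps the work manageable as the vocabulary grows.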

Statistical Models and Speech Recognition (1980s–1990s)

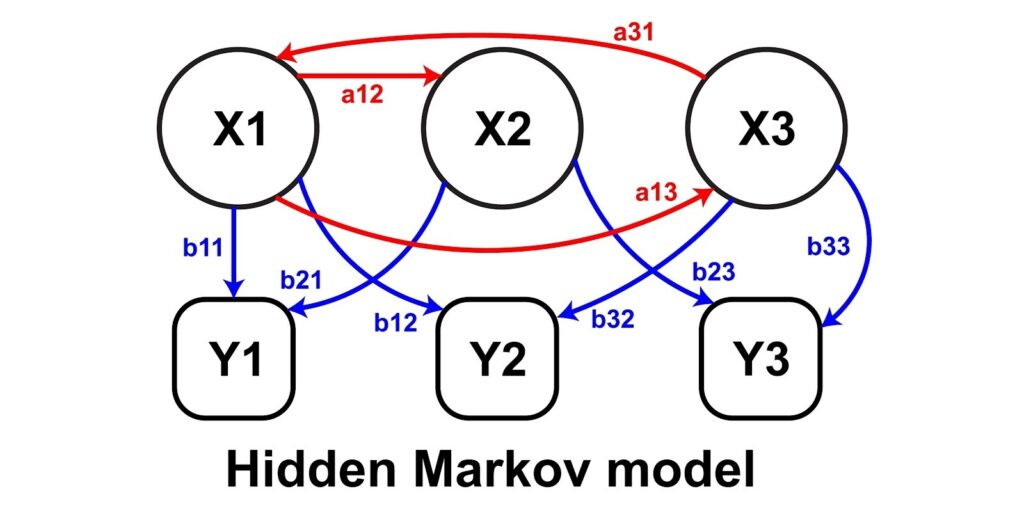

The 1980s and 1990s brought a major paradigm shift in speech recognition. Researchers largely abandoned attempts to teach computers human linguistics and grammar rules, opting instead for a mathematical approach. This era was defined by the widespread adoption of Hidden Markov Models (HMMs), a statistical method that revolutionized acoustic modeling.

Instead of looking for exact sound matches, HMMs allowed computers to calculate the probability that an unknown sound was a particular phoneme. This statistical approach, combined with the revival of artificial intelligence research in the 1990s, drastically improved accuracy. Dragon Systems released “Dragon Dictate” in 1990, the first consumer speech recognition product, though it required users to pause between every word. By the late 1990s, continuous speech recognition software finally hit the commercial market, marking a major leap forward in voice recognition technology.
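A small worked example may help show what "calculating probabilities" means in practice. The Python sketch below runs the Viterbi algorithm, a standard way to find the most likely hidden state sequence in an HMM, on a toy model. Everything in the model is an assumption made up for illustration: the two phoneme states (`s`, `iy`), the two frame types (`hiss`, `tone`), and all of the probabilities.

```python
# Hypothetical toy model: two phoneme states and two kinds of acoustic frame.
STATES = ["s", "iy"]
START = {"s": 0.8, "iy": 0.2}
TRANS = {"s": {"s": 0.6, "iy": 0.4}, "iy": {"s": 0.1, "iy": 0.9}}
EMIT = {"s": {"hiss": 0.9, "tone": 0.1}, "iy": {"hiss": 0.2, "tone": 0.8}}


def viterbi(frames):
    """Return (probability, path) for the most likely phoneme sequence."""
    # best[state] = (probability of the best path ending in state, that path)
    best = {s: (START[s] * EMIT[s][frames[0]], [s]) for s in STATES}
    for frame in frames[1:]:
        step = {}
        for s in STATES:
            # Pick the best predecessor state for arriving in state s.
            prob, path = max(
                ((best[prev][0] * TRANS[prev][s], best[prev][1]) for prev in STATES),
                key=lambda item: item[0],
            )
            step[s] = (prob * EMIT[s][frame], path + [s])
        best = step
    return max(best.values(), key=lambda item: item[0])


prob, path = viterbi(["hiss", "hiss", "tone", "tone"])
print(path, prob)
```

Notice that the model never demands an exact match. A "tone" frame can still come from the `s` state, just with low probability, so noisy or unusual pronunciations lower the score of a path instead of ruling it out.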

Machine Learning and Speech Recognition (2000s)

As the new millennium dawned, speech recognition experienced another surge forward, driven largely by the evolution of machine learning algorithms. The internet boom provided researchers with something they desperately needed: massive amounts of digital audio data.

During the 2000s, automated speech recognition began to integrate more advanced machine learning techniques to process this data. DARPA once again pushed the envelope with the PAL (Personalized Assistant that Learns) program, which laid the groundwork for modern digital assistants. Over the same period, speech recognition shifted toward cloud computing. By offloading the heavy computation to remote servers, mobile devices could offer robust voice recognition capabilities. This transition paved the way for voice-controlled systems to enter the consumer mainstream, setting the stage for the mobile revolution.

Deep Learning Revolution in Speech Recognition (2010s)

The 2010s were a golden age for speech recognition, defined by the rise of neural networks. While HMM-based systems had hit a performance plateau, deep learning pushed accuracy well past previous barriers.

By using multi-layered neural networks, specifically Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks, machines could finally process sequential data such as audio with far greater precision. This deep learning breakthrough allowed systems to handle context, accents, and background noise better than ever before. In 2011, Apple introduced Siri, bringing AI speech technology into the pockets of millions. Amazon’s Alexa followed in 2014 and Google Assistant in 2016. This era transformed voice from a clunky novelty into a primary user interface for modern computing.
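For readers curious about how an LSTM carries information across a sequence, here is a minimal sketch of a single LSTM time step in plain Python. It is deliberately simplified: the input, hidden state, and cell state are single numbers rather than vectors, and the `WEIGHTS` are hand-picked, hypothetical values, whereas a real speech model learns large weight matrices from data.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, w):
    """One LSTM time step for scalar input, hidden state, and cell state.

    w maps each gate name to (input weight, recurrent weight, bias).
    """
    def gate(name, activation):
        wx, wh, b = w[name]
        return activation(wx * x + wh * h_prev + b)

    f = gate("forget", sigmoid)        # how much old memory to keep
    i = gate("input", sigmoid)         # how much new information to admit
    g = gate("candidate", math.tanh)   # the new information itself
    o = gate("output", sigmoid)        # how much memory to expose
    c = f * c_prev + i * g             # updated cell state (long-term memory)
    h = o * math.tanh(c)               # updated hidden state (output)
    return h, c


# Hypothetical hand-picked weights; a real model learns these from data.
WEIGHTS = {
    "forget": (0.0, 0.0, 2.0),
    "input": (1.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
    "output": (0.0, 0.0, 2.0),
}

h, c = 0.0, 0.0
for x in [1.0, 0.0, 0.0]:  # one "event" followed by silence
    h, c = lstm_step(x, h, c, WEIGHTS)
    print(round(h, 3), round(c, 3))
```

With these weights the cell state stays positive after the input drops back to zero, fading only gradually. That lingering memory is what lets a network relate a sound it is hearing now to sounds it heard earlier in the utterance.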

Modern Applications of Speech Recognition

Today, we are witnessing the full realization of decades of research. Through modern artificial intelligence applications, voice technology has been woven into the fabric of our daily lives. The current state of speech recognition is characterized by ubiquitous integration and high-fidelity natural language processing.

Here are the most prominent ways this technology is utilized today:

Voice Assistants

Voice assistants like Siri, Alexa, and Google Assistant are the most visible face of modern AI voice recognition technology. They utilize advanced natural language processing to not only transcribe words but to understand user intent, allowing them to answer questions, set reminders, and manage daily tasks with conversational fluency.

Smart Home Systems

The modern smart home relies heavily on voice-controlled systems. From adjusting thermostats and dimming lights to locking doors and playing music, smart speakers act as central hubs. These systems use on-device (edge) speech models to listen for “wake words” before executing more complex commands.

Customer Service Automation

In the enterprise sector, speech recognition has transformed customer service. Interactive Voice Response (IVR) systems now use conversational AI to understand frustrated customers, route calls intelligently, and resolve basic queries without human intervention, which can significantly reduce support costs.

Medical Transcription

Healthcare has benefited immensely from speech-to-text systems. Doctors and clinicians use highly specialized automated speech recognition software to dictate patient notes, surgical reports, and prescriptions directly into Electronic Health Records (EHR). These systems are trained on complex medical terminology, reducing administrative burden and minimizing transcription errors.

Accessibility Technologies

Perhaps the most impactful application of speech recognition is its role in accessibility. For individuals with motor disabilities or visual impairments, voice technology offers a crucial lifeline. It allows them to navigate computers, write emails, and control their environments independently, proving that AI can profoundly improve human quality of life.

Future of Speech Recognition Technology

As we look toward the horizon, the next chapter of speech recognition is already being written. The technology will likely move beyond simple transcription and intent recognition toward emotional intelligence. Researchers are currently developing systems that aim to detect a user’s mood, stress level, and health status by analyzing the micro-tremors and acoustic variations in their voice.

Furthermore, ongoing development will focus heavily on bridging the language divide. Real-time, low-latency universal translators are moving closer to reality, promising to break down global communication barriers. We are also seeing early research that combines voice AI with brain-computer interfaces, hinting at a future where the machine understands the speech you intend to make before you even vocalize it. The story of speech recognition is far from over; it is simply entering its most advanced and integrated phase yet.

A Few Important FAQs

What was the first speech recognition machine?

The first major milestone was “Audrey,” created by Bell Labs in 1952. It was capable of recognizing spoken digits from a single voice, marking the beginning of speech recognition systems history.

How did Hidden Markov Models change speech recognition AI development?

Widely adopted in the 1980s, Hidden Markov Models shifted the industry from rule-based linguistics to statistical probability. Instead of looking for exact sound matches, systems calculated the likelihood of sounds forming specific words, drastically improving accuracy.

What caused the massive leap in voice AI accuracy in the 2010s?

The leap in accuracy during the 2010s was driven by deep learning and neural networks. These architectures allowed systems to learn from vast amounts of data, model context, and cope with background noise far better than earlier statistical methods.

What is the difference between speech recognition and natural language processing (NLP)?

Speech recognition is the process of converting spoken audio into text. Natural language processing (NLP) is the subsequent step where the AI analyzes that text to understand its meaning, context, and intent.
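The two-stage split described in this answer can be sketched in a few lines of Python. This is only an illustration under loud assumptions: `transcribe` is a stand-in that returns canned text for hypothetical clip names instead of decoding real audio, and `understand` uses simple hand-written patterns where a production assistant would use learned language models.

```python
import re


def transcribe(audio_id):
    """Stand-in for the speech recognition stage (hypothetical lookup table).

    A real recognizer would decode an audio waveform; canned text is returned
    here so that the example runs without any model.
    """
    canned = {
        "clip-1": "set a timer for ten minutes",
        "clip-2": "what is the weather in paris",
    }
    return canned[audio_id]


# Deliberately simple rule-based patterns, chosen only for illustration.
INTENT_PATTERNS = [
    ("set_timer", re.compile(r"set a timer for (?P<duration>.+)")),
    ("get_weather", re.compile(r"weather in (?P<city>\w+)")),
]


def understand(text):
    """NLP stage: map transcribed text to an intent and its parameters."""
    for intent, pattern in INTENT_PATTERNS:
        match = pattern.search(text)
        if match:
            return {"intent": intent, "slots": match.groupdict()}
    return {"intent": "unknown", "slots": {}}


for clip in ["clip-1", "clip-2"]:
    text = transcribe(clip)          # stage 1: audio -> text
    print(text, "->", understand(text))  # stage 2: text -> meaning
```

Keeping the stages separate means either one can be improved or swapped out independently, for example by replacing the pattern matching with a stronger language model while leaving the recognizer untouched.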

Conclusion

The long history of speech recognition is a remarkable testament to human persistence. What began in the 1950s as a bulky machine struggling to understand a single number has evolved into an invisible, ubiquitous intelligence that powers our phones, homes, and hospitals. We have journeyed through the early struggles of rule-based programming, the mathematical elegance of statistical acoustic modeling, and the explosive power of modern deep learning.

By tracing the development of speech recognition AI, we gain a deeper appreciation for the underlying technologies that make voice assistants and real-time transcription possible today. As neural networks become more sophisticated and computing power continues to scale, the next era promises to bring us even closer to seamless conversation between humans and machines. Voice is our most natural form of communication, and thanks to AI, the machines are finally listening and understanding.