What is K-Means Clustering?

Imagine you have a massive pile of unlabeled data. No categories, no predefined groups, no answers at the back of the book. How do you make sense of it all? This is where k-means clustering comes in. This unsupervised learning algorithm groups similar data points together without any prior labels, surfacing patterns and natural groupings that are hard to spot by eye.

The appeal of k-means clustering lies in its simplicity. The algorithm partitions your data into K distinct clusters based on similarity, so that data points within the same cluster are more similar to each other than to points in other clusters. Think of it like organizing a library where books arrange themselves into sections based on their content, without anyone telling them which section they belong to. The evolution of machine learning algorithms shows how clustering methods like this emerged as essential tools for discovering hidden patterns.

K-means clustering has become a cornerstone of unsupervised learning because it is intuitive, efficient, and effective across a wide range of applications. From segmenting customers to compressing images, it delivers useful results with little complexity.

How the K-Means Algorithm Works

K-means clustering works by alternating between two steps: assignment and update. The algorithm aims to partition n data points into K clusters while minimizing the sum of squared distances between each point and its assigned cluster center. Understanding this process is useful for anyone serious about self-supervised learning in artificial intelligence, as clustering underpins several modern approaches.

Step 1: Choosing the Number of Clusters (K)

Before running k-means clustering, you must decide how many clusters you want the algorithm to find. This number, called K, is the most critical parameter. Choosing the right K is not always obvious. Sometimes domain knowledge guides your choice. Other times, you must experiment to find a suitable value.

The value of K determines how granular your cluster analysis becomes. A small K creates broad, general groups. A large K creates many specific, detailed clusters. There is no universally correct K. The best value depends on your specific problem and what insights you hope to gain.

Step 2: Initializing Centroids

Once you choose K, the k-means clustering algorithm randomly places K points called centroids somewhere in your feature space. These centroids serve as the initial heart of each cluster. Think of them as flags planted in the ground, declaring “this is where our cluster begins.”

The initial placement of centroids matters because k-means clustering can converge to different results depending on where the centroids start. Poor initialization can lead to suboptimal clusters. Modern implementations use techniques like k-means++, which spreads the starting centroids apart by favoring points far from the centroids already chosen, reducing the chance of getting stuck in bad solutions.
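The seeding idea behind k-means++ can be sketched in a few lines. This is a minimal illustration using only the standard library, not a library's implementation; the function names `squared_distance` and `kmeans_plus_plus`, the `seed` parameter, and the sample points are all assumptions made for this example.

```python
import random

def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def kmeans_plus_plus(points, k, seed=0):
    """Pick k starting centroids, favoring points far from those already chosen."""
    rng = random.Random(seed)
    centroids = [rng.choice(points)]  # first centroid: uniformly at random
    while len(centroids) < k:
        # Squared distance from every point to its nearest chosen centroid.
        weights = [min(squared_distance(p, c) for c in centroids) for p in points]
        # Draw the next centroid with probability proportional to that distance,
        # so already-chosen points (weight 0) are not picked again.
        centroids.append(rng.choices(points, weights=weights, k=1)[0])
    return centroids

# Hypothetical toy data: two loose groups.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
starts = kmeans_plus_plus(points, k=2)
print(starts)
```

Because distant points get more weight, the second centroid will most often land in the group the first one missed, though this is a probability and not a guarantee.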

Step 3: Assigning Data Points

With centroids in place, k-means clustering assigns every data point to its nearest centroid. The algorithm calculates the distance between each point and each centroid. The most common distance metric is Euclidean distance. For two points x and y in n-dimensional space, it is:

$$d(x, y) = \sqrt{\sum_{i=1}^{n} (x_i - y_i)^2}$$

Each data point joins the cluster whose centroid is closest. After this assignment, the data is partitioned into K groups. Some groups may have many points. Others may have few. This assignment step is the heart of how k-means clustering organizes your data.
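The assignment step can be sketched as follows. This is an illustrative example, assuming points and centroids are plain tuples of numbers; `assign_points` is a hypothetical helper name, while `math.dist` is the standard library's Euclidean distance function (Python 3.8+).

```python
import math

def assign_points(points, centroids):
    """Return, for each point, the index of its nearest centroid."""
    labels = []
    for p in points:
        distances = [math.dist(p, c) for c in centroids]
        labels.append(distances.index(min(distances)))
    return labels

# Hypothetical toy data and centroid positions.
points = [(1, 1), (2, 1), (8, 9), (9, 8)]
centroids = [(0, 0), (10, 10)]

print(math.dist((0, 0), (3, 4)))         # 5.0
labels = assign_points(points, centroids)
print(labels)                            # [0, 0, 1, 1]
```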

Step 4: Updating Centroids and Iterating

After assigning all points, k-means clustering recalculates each centroid as the mean of all points in its cluster. For a cluster C containing m points, the new centroid is the average position of every point in that cluster:

$$\mu = \frac{1}{m} \sum_{x \in C} x$$

Then the algorithm repeats steps 3 and 4. Points are reassigned to the nearest updated centroids, and the centroids are recalculated again. This iterative process continues until convergence, meaning centroids stop moving or move very little between iterations. At convergence, k-means clustering has found a stable grouping of your data.
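Steps 3 and 4 together can be sketched as one loop. This is a minimal sketch under stated assumptions, not a production implementation: the starting centroids are passed in by the caller, an empty cluster simply keeps its old centroid, and the names `update_centroids`, `kmeans`, `max_iter`, and `tol` are chosen for this example.

```python
import math

def update_centroids(points, labels, centroids):
    """Move each centroid to the mean of the points assigned to it."""
    updated = []
    for j, old in enumerate(centroids):
        members = [p for p, label in zip(points, labels) if label == j]
        if members:
            updated.append(tuple(sum(axis) / len(members) for axis in zip(*members)))
        else:
            updated.append(old)  # assumption: an empty cluster keeps its centroid
    return updated

def kmeans(points, centroids, max_iter=100, tol=1e-9):
    centroids = [tuple(c) for c in centroids]
    for _ in range(max_iter):
        # Step 3: assign each point to its nearest centroid.
        labels = [min(range(len(centroids)), key=lambda j: math.dist(p, centroids[j]))
                  for p in points]
        # Step 4: recompute centroids and measure how far they moved.
        new_centroids = update_centroids(points, labels, centroids)
        shift = max(math.dist(a, b) for a, b in zip(centroids, new_centroids))
        centroids = new_centroids
        if shift <= tol:  # converged: centroids stopped moving
            break
    return centroids, labels

# Hypothetical toy data: two square groups of four points each.
points = [(1, 1), (1, 2), (2, 1), (2, 2), (8, 8), (8, 9), (9, 8), (9, 9)]
centroids, labels = kmeans(points, centroids=[(1, 1), (9, 9)])
print(centroids)  # [(1.5, 1.5), (8.5, 8.5)]
print(labels)     # [0, 0, 0, 0, 1, 1, 1, 1]
```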

How to Find the Optimal “K” Value

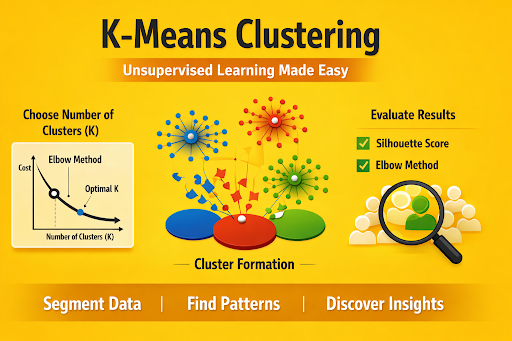

Choosing the right K is one of the biggest challenges when using k-means clustering. Fortunately, several techniques help you make this decision systematically rather than guessing.

Using the Elbow Method

The elbow method is the most popular technique for choosing K in k-means clustering. Here is how it works. You run the algorithm with different K values and calculate the within-cluster sum of squares (WCSS) for each: the sum of squared distances from every point to its assigned centroid. This metric measures how compact your clusters are, with lower values indicating tighter, more cohesive groups.

As you increase K, the within-cluster sum of squares tends to decrease, because more clusters mean each point is closer to its centroid. The elbow method looks for the point where adding more clusters stops providing meaningful improvement. This bend in the curve resembles an elbow, giving the technique its name.

Plot K against the within-cluster sum of squares. Look for the K where the curve bends from a steep decline to a gentle slope. That bend point is a good candidate for K. The elbow method is intuitive and visual, making it accessible even to those new to k-means clustering.
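The elbow curve can be computed with a short script. This is a sketch under assumptions: to keep the output reproducible it uses a deterministic "farthest-first" initialization (a stand-in chosen for this example, not the random or k-means++ seeding described earlier), and the names `sq_dist`, `farthest_first`, and `kmeans_wcss` are hypothetical.

```python
def sq_dist(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def farthest_first(points, k):
    """Deterministic seeding (assumption): start at the first point, then
    repeatedly add the point farthest from all centroids chosen so far."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(sq_dist(p, c) for c in centroids)))
    return centroids

def kmeans_wcss(points, k, max_iter=100):
    """Run k-means and return the within-cluster sum of squares."""
    centroids = farthest_first(points, k)
    for _ in range(max_iter):
        labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j])) for p in points]
        updated = []
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            updated.append(tuple(sum(x) / len(members) for x in zip(*members))
                           if members else centroids[j])
        if updated == centroids:
            break
        centroids = updated
    return sum(sq_dist(p, centroids[label]) for p, label in zip(points, labels))

# Hypothetical toy data: three tight pairs of points.
points = [(0, 0), (0, 1), (10, 0), (10, 1), (20, 0), (20, 1)]
curve = {k: kmeans_wcss(points, k) for k in range(1, 5)}
for k, value in curve.items():
    print(k, round(value, 2))
```

On this toy data the WCSS falls from 401.5 at K = 1 to 1.5 at K = 3 and barely improves at K = 4, so the elbow sits at K = 3, matching the three pairs in the data.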

Evaluating with the Silhouette Score

The silhouette score offers a more sophisticated way to evaluate k-means clustering results. This metric measures how similar each point is to its own cluster compared to other clusters. Silhouette scores range from -1 to +1.

A score near +1 means points are well matched to their own cluster and poorly matched to neighboring clusters. A score near 0 indicates overlapping clusters. A score near -1 suggests points may belong in the wrong cluster.

To choose K, compute the average silhouette score across all points for different K values (the score is only defined for K of 2 or more). Choose the K that produces the highest average silhouette score. The silhouette score often confirms the elbow method’s suggestion or provides a compelling alternative when the elbow is ambiguous.
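The average silhouette score can be computed directly from points and cluster labels. The sketch below is illustrative: for each point it takes a, the mean distance to the other points in its own cluster, and b, the mean distance to the nearest other cluster, and scores the point as (b - a) / max(a, b). The function name, the zero score for single-point clusters, and the toy data are assumptions of this example.

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette over all points; needs at least two clusters."""
    clusters = {}
    for p, label in zip(points, labels):
        clusters.setdefault(label, []).append(p)
    scores = []
    for p, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # convention used here for single-point clusters
            continue
        # a: mean distance to the other members of the point's own cluster
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)
        # b: mean distance to the members of the nearest other cluster
        b = min(sum(math.dist(p, q) for q in members) / len(members)
                for other, members in clusters.items() if other != label)
        scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores)

# Hypothetical toy data: two well-separated pairs.
points = [(0, 0), (0, 1), (10, 0), (10, 1)]
good = silhouette_score(points, [0, 0, 1, 1])  # neighbors grouped together
bad = silhouette_score(points, [0, 1, 0, 1])   # neighbors split apart
print(round(good, 2), round(bad, 2))
```

The sensible grouping scores close to +1, while the grouping that splits neighbors apart scores below zero.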

Advantages and Limitations of K-Means

Every algorithm has strengths and weaknesses. K-means clustering is no exception. Understanding both sides helps you apply it effectively. The rise of modern machine learning has highlighted how clustering methods balance simplicity with power.

Advantages

Remarkable Speed makes k-means clustering attractive for large datasets. The cost of each iteration scales linearly with the number of data points (as well as with K and the number of features), meaning it remains practical even when you have millions of samples.

Simplicity and Interpretability mean you can explain k-means clustering to non-technical stakeholders. The concept of grouping similar items together is intuitive and easy to understand.

Guaranteed Convergence ensures the algorithm always terminates. While it may find a locally optimal solution rather than the global optimum, you will always get a result.

Versatility Across Domains has made k-means clustering a favorite in industries from marketing to medicine. The algorithm applies wherever items can be described by numerical features and compared by distance.

Limitations

Requires Predefined K is the biggest challenge. You must choose the number of clusters before seeing the results. The elbow method and silhouette score help, but no perfect automatic method exists.

Sensitive to Initial Centroids means different runs can produce different results. Poor initialization leads to suboptimal clusters. Running the algorithm multiple times with different starting points is standard practice.

Assumes Spherical Clusters, so it works poorly when clusters have complex shapes or varying sizes. K-means clustering struggles with elongated or nested clusters that other algorithms handle better.

Affected by Outliers because centroids are means. Extreme values pull centroids away from the true cluster center, distorting results. Outlier removal or robust alternatives may be necessary.

Not Suitable for Categorical Data without transformation. K-means clustering expects numerical features. Categorical variables require encoding before the algorithm can process them.
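The outlier limitation listed above is easy to see with a tiny numeric example. The values below are made up for illustration, and the median is shown only as one example of a statistic that resists extreme values, not as a replacement for the k-means update.

```python
from statistics import mean, median

# Hypothetical one-dimensional cluster, then the same cluster plus one extreme value.
values = [10, 11, 12, 13, 14]
with_outlier = values + [100]

center_before = mean(values)         # 12
center_after = mean(with_outlier)    # about 26.67: pulled far from the bulk of the data
robust_center = median(with_outlier) # 12.5: barely moves

print(center_before, round(center_after, 2), robust_center)
```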

Top Use Cases for K-Means Clustering

The practical applications of k-means clustering span nearly every industry. Here are three of the most common use cases. As we look toward what comes next, the future of artificial intelligence technology will likely see even more sophisticated applications of clustering methods.

Customer Segmentation

Businesses live and die by their understanding of customers. K-means clustering helps companies discover natural customer segments within their data. An online retailer might find clusters of bargain hunters, luxury shoppers, and occasional browsers.

Once segments are identified, marketing teams tailor messages to each group. Bargain hunters receive discount offers. Luxury shoppers see premium product recommendations. This data segmentation drives higher conversion rates and stronger customer loyalty.

Customer segmentation with k-means clustering works with various data types. Purchase history, browsing behavior, demographic information, and engagement metrics can all feed into the algorithm once they are expressed as numerical features. The resulting clusters can reveal patterns you might not have suspected. AI recommendation systems show how clustering can power personalized experiences across the web.

Anomaly Detection

Finding unusual patterns in data is critical for security, quality control, and fraud prevention. K-means clustering supports anomaly detection by identifying points that do not fit naturally into any cluster.

Consider credit card fraud detection. Normal transactions form tight clusters based on typical spending patterns. Fraudulent transactions, being unusual, often fall far from any cluster center. By measuring the distance from each point to its nearest centroid, you can flag suspicious activity for investigation.

Similarly, manufacturing quality control uses k-means clustering to spot defective products. Normal products cluster together based on sensor readings. Defects deviate from these clusters, triggering inspection alerts.
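The distance-to-nearest-centroid check can be sketched as follows. This example assumes the centroids have already been found by an earlier k-means run; the centroid values, the data points, and the `threshold` of 3.0 are all hypothetical, and in practice the threshold would need to be tuned to your data.

```python
import math

def nearest_centroid_distance(point, centroids):
    return min(math.dist(point, c) for c in centroids)

def flag_anomalies(points, centroids, threshold):
    """Return the points that lie farther than `threshold` from every centroid."""
    return [p for p in points if nearest_centroid_distance(p, centroids) > threshold]

# Assumed output of an earlier k-means fit on "normal" data.
centroids = [(1.5, 1.5), (8.5, 8.5)]
points = [(1, 2), (9, 8), (5, 5), (2, 1), (20, 20)]

anomalies = flag_anomalies(points, centroids, threshold=3.0)
print(anomalies)  # [(5, 5), (20, 20)]
```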

Image Compression

K-means clustering can dramatically reduce the size of digital images through a technique called color quantization. A 24-bit color image can use any of about 16.7 million colors, and a photo often contains thousands of distinct ones. Many of these colors are visually similar and redundant.

The algorithm groups similar colors into K clusters, then replaces every pixel’s color with its cluster centroid’s color. Instead of storing a full color value for every pixel, the image stores only K palette colors plus a small index for each pixel. This compression reduces file size while keeping the image visually close to the original.

For example, reducing a photo from 24-bit color to just 16 colors using k-means clustering means each pixel needs a 4-bit index instead of 24 bits, which cuts the raw pixel data by roughly 83 percent while keeping the image recognizable. This technique balances storage efficiency with acceptable quality loss. The history of computer vision in artificial intelligence shows how clustering has been essential for image processing since the early days.
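Color quantization can be sketched on a handful of pixels. This is a toy illustration, assuming pixels are RGB tuples and that the starting palette is supplied by the caller; the names `quantize` and `index_bits` are hypothetical, and a real image would first need to be loaded into such a list with an imaging library.

```python
import math

def quantize(pixels, palette, max_iter=20):
    """Run k-means over RGB tuples, starting from the given palette colors."""
    palette = [tuple(color) for color in palette]
    for _ in range(max_iter):
        labels = [min(range(len(palette)), key=lambda j: math.dist(p, palette[j]))
                  for p in pixels]
        updated = []
        for j, old in enumerate(palette):
            members = [p for p, label in zip(pixels, labels) if label == j]
            updated.append(tuple(sum(ch) / len(members) for ch in zip(*members))
                           if members else old)
        if updated == palette:
            break
        palette = updated
    # Round the centroid colors back to whole channel values.
    return [tuple(round(ch) for ch in color) for color in palette], labels

def index_bits(k):
    """Bits needed per pixel to index a palette of k colors."""
    return max(1, math.ceil(math.log2(k)))

# Hypothetical four-pixel "image": two reddish and two bluish pixels.
pixels = [(250, 10, 10), (240, 20, 20), (10, 10, 250), (20, 20, 240)]
palette, labels = quantize(pixels, palette=[pixels[0], pixels[2]])
compressed = [palette[label] for label in labels]

print(palette)  # [(245, 15, 15), (15, 15, 245)]
print(labels)   # [0, 0, 1, 1]

# Per-pixel saving for a 16-color palette versus 24-bit color (palette overhead ignored).
saving = 1 - index_bits(16) / 24
print(round(saving, 3))  # 0.833
```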

Frequently Asked Questions

1. What does the K in K-Means Clustering represent?

K represents the number of clusters you want the algorithm to find. You must choose this value before running the algorithm.

2. How do I know if my K-Means Clustering results are good?

Use the silhouette score or elbow method to evaluate cluster quality. A high average silhouette score and a clear elbow in the within-cluster sum of squares curve indicate good results.

3. Does K-Means Clustering work with categorical data?

Not directly. K-means clustering requires numerical data. You must encode categorical variables into numbers before applying the algorithm.
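One common way to encode a categorical variable is one-hot encoding, sketched below. The helper name `one_hot` and the sample values are made up for this example, and whether Euclidean distance on one-hot columns is meaningful for your data is a judgment call this sketch does not settle.

```python
def one_hot(values):
    """Turn a list of category labels into rows of 0/1 indicator columns."""
    categories = sorted(set(values))
    rows = [[1.0 if value == category else 0.0 for category in categories]
            for value in values]
    return rows, categories

# Hypothetical categorical feature.
rows, categories = one_hot(["red", "blue", "red"])
print(categories)  # ['blue', 'red']
print(rows)        # [[0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]
```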

4. Why do I get different results each time I run K-Means Clustering?

Random initialization of centroids can lead to different final clusters. Run the algorithm multiple times and select the result with the lowest within-cluster sum of squares.
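The effect of the starting centroids, and the "keep the best run" remedy, can be seen on four points. This is an illustrative sketch: instead of random restarts it tries every pair of points as starting centroids so the output is reproducible, and the names `sq_dist` and `kmeans` are chosen for this example.

```python
from itertools import combinations

def sq_dist(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def kmeans(points, centroids, max_iter=100):
    """Plain k-means from the given starting centroids; returns (centroids, WCSS)."""
    centroids = list(centroids)
    k = len(centroids)
    for _ in range(max_iter):
        labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j])) for p in points]
        updated = []
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            # An empty cluster keeps its previous centroid (an assumption of this sketch).
            updated.append(tuple(sum(x) / len(members) for x in zip(*members))
                           if members else centroids[j])
        if updated == centroids:
            break
        centroids = updated
    wcss = sum(sq_dist(p, centroids[label]) for p, label in zip(points, labels))
    return centroids, wcss

# Hypothetical toy data: corners of a wide rectangle.
points = [(0, 0), (0, 1), (4, 0), (4, 1)]

# Stand-in for random restarts: try every pair of points as the starting centroids.
runs = [kmeans(points, start) for start in combinations(points, 2)]
for run_centroids, run_wcss in runs:
    print(run_centroids, run_wcss)

best_centroids, best_wcss = min(runs, key=lambda run: run[1])
print(best_centroids, best_wcss)  # [(0.0, 0.5), (4.0, 0.5)] 1.0
```

Two of the six starts converge to a poor split with a WCSS of 16.0, while the others reach 1.0, which is why keeping the lowest-WCSS run matters.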

5. Is K-Means Clustering supervised or unsupervised?

K-means clustering is an unsupervised learning algorithm. It discovers patterns in unlabeled data without any predefined target variables.

6. How does scaling affect K-Means Clustering?

Scaling is essential when features are measured on different scales. Features with larger ranges dominate distance calculations, so scale your data before applying k-means clustering.
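One common way to scale is standardization: subtract each feature's mean and divide by its standard deviation. The sketch below uses only the standard library; the helper name `standardize` and the age and income figures are hypothetical.

```python
from statistics import mean, pstdev

def standardize(rows):
    """Rescale each column to zero mean and unit standard deviation."""
    columns = list(zip(*rows))
    means = [mean(column) for column in columns]
    # Fall back to 1.0 for constant columns to avoid dividing by zero.
    stds = [pstdev(column) or 1.0 for column in columns]
    return [tuple((value - m) / s for value, m, s in zip(row, means, stds))
            for row in rows]

# Hypothetical customers: (age in years, income in dollars).
rows = [(25, 30000), (35, 60000), (45, 90000)]
scaled = standardize(rows)
for row in scaled:
    print(tuple(round(value, 3) for value in row))
```

Before scaling, the income column would dominate any distance calculation; after scaling, both columns span the same range and contribute equally.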

Conclusion

K-means clustering stands as one of the most elegant and practical algorithms in the machine learning toolkit. Its ability to discover hidden patterns in unlabeled data opens doors to insights that would otherwise remain invisible. From segmenting customers to detecting anomalies and compressing images, this technique delivers value across many applications.

The algorithm’s beauty lies in its simplicity. Iterative assignment and updating, guided by the principle of minimizing distances, produce surprisingly effective results. While k-means clustering has limitations, including sensitivity to initialization and the need to preselect K, these challenges are manageable with techniques like repeated runs, the elbow method, and the silhouette score.

As you explore the broader landscape of artificial intelligence, you will find that k-means clustering often works alongside other techniques. The history of AI in healthcare and modern artificial intelligence applications both show how clustering continues to drive real-world innovation. Whether you are analyzing customer behavior, cleaning sensor data, or building recommendation systems, k-means clustering deserves a prominent place in your analytical arsenal. Understanding k-means clustering is not just learning an algorithm; it is gaining a superpower for discovering the hidden structure within your data.