Introduction

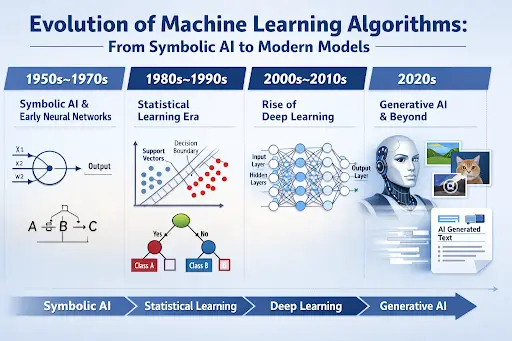

The evolution of machine learning algorithms is a fascinating journey through decades of experimentation, setbacks, breakthroughs, and renewed optimism. What began as theoretical ideas about thinking machines has transformed into powerful systems capable of recognizing speech, diagnosing diseases, recommending products, and even generating human-like text.

To truly understand modern artificial intelligence, we must look at how machine learning developed step by step. Each generation of algorithms built upon earlier discoveries. From symbolic reasoning and early neural experiments to support vector machines and deep learning, the story is one of persistence and reinvention.

In this article, we’ll walk through the evolution of machine learning algorithms chronologically, explore key milestones, examine real examples, and understand why each phase mattered.

The Foundations: Early Ideas and Neural Models (1940s–1960s)

The roots of machine learning trace back to early work in artificial intelligence. Inspired by the human brain, researchers in the 1940s began modeling artificial neurons.

In 1943, Warren McCulloch and Walter Pitts introduced a mathematical model of a neuron. It was simple, but revolutionary. Their work suggested that machines could mimic logical reasoning using networks of artificial neurons.
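To make the idea concrete, here is a minimal sketch of a threshold unit in the spirit of that model. The function names, weights, and thresholds are illustrative assumptions, not the original 1943 notation.

```python
def threshold_neuron(inputs, weights, threshold):
    """Return 1 ("fire") when the weighted sum of binary inputs reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def logical_and(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return threshold_neuron([a, b], weights=[1, 1], threshold=2)


def logical_or(a, b):
    # A single active input is enough to reach a threshold of 1.
    return threshold_neuron([a, b], weights=[1, 1], threshold=1)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

Changing only the threshold turns the same unit from an AND gate into an OR gate, which hints at how networks of such units could express logical reasoning.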

These ideas fed directly into early artificial intelligence research, in which scientists imagined machines capable of learning from experience.

The Perceptron

In 1958, Frank Rosenblatt introduced the perceptron, one of the first learning algorithms. The perceptron could classify inputs into categories by adjusting its weights whenever it made an error. It was an early form of supervised learning.

For example, if shown images labeled “cat” and “not cat,” the perceptron gradually learned to separate them using mathematical boundaries.
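The error-driven weight update can be sketched in a few lines. This is a simplified illustration rather than Rosenblatt's original formulation: the toy data (the logical AND function standing in for two categories), the learning rate, and the epoch count are all assumptions chosen to keep the example small.

```python
def train_perceptron(samples, labels, learning_rate=1.0, epochs=20):
    """Learn weights and a bias with the classic perceptron update rule."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in zip(samples, labels):
            activation = sum(w * xi for w, xi in zip(weights, x)) + bias
            prediction = 1 if activation > 0 else 0
            error = target - prediction  # 0 when correct, +1 or -1 when wrong
            # Nudge the boundary toward misclassified examples only.
            weights = [w + learning_rate * error * xi for w, xi in zip(weights, x)]
            bias += learning_rate * error
    return weights, bias


def predict(weights, bias, x):
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


# Toy, linearly separable data: label 1 only when both features are 1.
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [0, 0, 0, 1]

weights, bias = train_perceptron(samples, labels)
predictions = [predict(weights, bias, x) for x in samples]
print(predictions)
```

Because the two classes here can be separated by a straight line, the update rule settles on weights that classify every example correctly.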

However, there was a problem. A single-layer perceptron can only solve linearly separable problems; it cannot, for example, learn the XOR function. When Marvin Minsky and Seymour Papert highlighted these limitations in their 1969 book Perceptrons, interest in neural networks declined sharply.

This marked one of the early slowdowns in machine learning development.

Statistical Learning and Classical Algorithms (1970s–1990s)

After neural networks lost momentum, researchers shifted toward statistical and rule-based approaches. This period introduced many classical machine learning algorithms that remain influential today.

Decision Trees

Decision trees became popular for classification tasks. They split data into branches based on feature values.

Imagine diagnosing a disease:

- Is the patient’s temperature high?

- Are there respiratory symptoms?

- Has there been recent travel?

Each answer leads down a different branch. This simple yet powerful method became foundational in applied machine learning.
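The questions above map naturally onto nested conditions. The sketch below is a hand-written tree for illustration only: the field names and the outcomes at the leaves are hypothetical, and a real decision tree algorithm would choose its splits from training data rather than have them written by hand.

```python
def diagnose(patient):
    """Walk a small hand-written decision tree; each `if` is one branch point."""
    if patient["high_temperature"]:
        if patient["respiratory_symptoms"]:
            if patient["recent_travel"]:
                return "check for travel-related infection"
            return "suspect common respiratory infection"
        return "investigate other causes of fever"
    return "no fever: routine follow-up"


patient = {
    "high_temperature": True,
    "respiratory_symptoms": True,
    "recent_travel": False,
}
print(diagnose(patient))
```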

k-Nearest Neighbors (k-NN)

Another key development was k-nearest neighbors. Instead of building a complex model, k-NN simply compares new data points to the closest existing examples.

If most nearby examples are labeled “spam,” the new email is likely spam too.
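A minimal sketch of that voting process follows. The two features (link count and exclamation-mark count), the example emails, and the choice of k = 3 are assumptions made up for the illustration.

```python
import math
from collections import Counter


def knn_predict(training_data, query, k=3):
    """Label a query point by majority vote among its k closest training examples."""
    by_distance = sorted(training_data, key=lambda item: math.dist(item[0], query))
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Hypothetical features per email: (number of links, number of exclamation marks)
emails = [
    ((8, 5), "spam"),
    ((7, 6), "spam"),
    ((6, 4), "spam"),
    ((1, 0), "ham"),
    ((0, 1), "ham"),
    ((2, 1), "ham"),
]

print(knn_predict(emails, (7, 5)))
print(knn_predict(emails, (1, 1)))
```

Note that there is no training step at all: the "model" is simply the stored examples plus a distance function.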

This approach illustrated an important shift: algorithms didn’t need to “understand” data symbolically. They could rely on mathematical proximity and probability.

Support Vector Machines (SVMs)

In the 1990s, support vector machines emerged as powerful classification tools. SVMs find the optimal boundary between categories by maximizing the margin: the distance between the boundary and the nearest training points of each class.
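To see what the margin measures, the sketch below computes it for two hand-picked linear boundaries that both separate the same toy data. It does not train an SVM; the points and the candidate boundaries are assumptions chosen for illustration, and an actual SVM solver would search for the boundary with the largest margin.

```python
import math


def geometric_margin(w, b, points, labels):
    """Smallest signed distance from any point to the boundary w.x + b = 0.

    Labels are +1 or -1. A negative result means at least one point
    lies on the wrong side of the boundary.
    """
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(
        y * (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm
        for x, y in zip(points, labels)
    )


points = [(1, 1), (2, 1), (4, 4), (5, 4)]
labels = [-1, -1, 1, 1]

# Two candidate boundaries; both classify every point correctly.
margin_a = geometric_margin((1.0, 0.0), -3.0, points, labels)  # vertical line x = 3
margin_b = geometric_margin((1.0, 1.0), -5.5, points, labels)  # diagonal x + y = 5.5

print(margin_a, margin_b)
```

Both boundaries separate the classes, but the diagonal one leaves more room on either side, so a margin-maximizing method would prefer it over the vertical line.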

These methods were particularly effective in high-dimensional spaces and became widely used in text classification, image recognition, and bioinformatics.

During this period, supervised and unsupervised learning methods became clearly defined branches of machine learning.

The Backpropagation Breakthrough (1980s Revival)

Although neural networks struggled in the 1970s, they didn’t disappear entirely.

In 1986, David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized the backpropagation algorithm. Backpropagation allowed multi-layer neural networks to adjust weights efficiently by propagating errors backward through the network.

This was a major turning point in the evolution of machine learning algorithms.

With backpropagation, neural networks could learn complex patterns that earlier models could not.
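The backward flow of error can be sketched on a very small network. This is a minimal illustration, assuming a network with two inputs, two hidden units, and one output, sigmoid activations, and a squared-error loss; the starting weights, the single training example, and the learning rate are arbitrary values.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Return the hidden activations and the output of the 2-2-1 network."""
    hidden = [
        sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(params["W1"], params["b1"])
    ]
    output = sigmoid(sum(w * h for w, h in zip(params["W2"], hidden)) + params["b2"])
    return hidden, output


def loss(params, x, target):
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2


def backward(params, x, target):
    """Compute loss gradients by passing the output error back through the layers."""
    hidden, output = forward(params, x)
    # Error signal at the output unit (chain rule through the sigmoid).
    delta_out = (output - target) * output * (1.0 - output)
    # Error signal at each hidden unit, carried back through the W2 weights.
    delta_hidden = [
        delta_out * w * h * (1.0 - h) for w, h in zip(params["W2"], hidden)
    ]
    return {
        "W1": [[d * xi for xi in x] for d in delta_hidden],
        "b1": delta_hidden,
        "W2": [delta_out * h for h in hidden],
        "b2": delta_out,
    }


def gradient_step(params, grads, learning_rate):
    """Return new parameters moved a small step against the gradient."""
    return {
        "W1": [
            [w - learning_rate * g for w, g in zip(w_row, g_row)]
            for w_row, g_row in zip(params["W1"], grads["W1"])
        ],
        "b1": [b - learning_rate * g for b, g in zip(params["b1"], grads["b1"])],
        "W2": [w - learning_rate * g for w, g in zip(params["W2"], grads["W2"])],
        "b2": params["b2"] - learning_rate * grads["b2"],
    }


params = {
    "W1": [[0.5, -0.4], [0.3, 0.8]],
    "b1": [0.1, -0.2],
    "W2": [0.7, -0.6],
    "b2": 0.05,
}
x, target = [1.0, 0.0], 1.0

grads = backward(params, x, target)
loss_before = loss(params, x, target)
loss_after = loss(gradient_step(params, grads, learning_rate=0.5), x, target)
print(loss_before, loss_after)
```

The key point is in `backward`: the hidden-layer error is derived from the output error, so every weight in the network receives a correction from a single pass in each direction.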

Still, limitations remained:

- Limited computational power

- Small datasets

- Hardware constraints of the era

As a result, neural networks remained promising but not dominant.

The Big Data Era and Algorithm Expansion (2000s)

The early 2000s changed everything.

Three major forces converged:

- Explosive growth of digital data

- Faster processors and GPUs

- Improved algorithmic techniques

This environment allowed machine learning algorithms to scale in ways previously impossible.

Ensemble Methods

Algorithms like Random Forests and Gradient Boosting became widely adopted. These methods combine multiple models to improve accuracy.

Instead of relying on one decision tree, Random Forests build many trees, often hundreds, and combine their predictions by averaging (for regression) or majority vote (for classification).

This phase showed that combining simple models could outperform complex individual ones.
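The effect of combining weak models can be shown with a deliberately tiny example. This sketch is not a Random Forest: three hand-written one-feature rules stand in for trees, and the data is constructed so that each rule errs on different examples. A real Random Forest would instead train each tree on a random resample of the data.

```python
from collections import Counter


def make_stump(feature_index):
    """A one-question 'tree': predict 1 when the chosen feature is positive."""
    return lambda sample: 1 if sample[feature_index] > 0 else 0


def majority_vote(models, sample):
    votes = Counter(model(sample) for model in models)
    return votes.most_common(1)[0][0]


def accuracy(predict, samples, labels):
    return sum(predict(s) == y for s, y in zip(samples, labels)) / len(samples)


# Toy data: the label is 1 when at least two of the three features are 1.
samples = [(1, 1, 0), (1, 0, 1), (0, 1, 1), (0, 0, 1), (0, 1, 0), (1, 0, 0)]
labels = [1, 1, 1, 0, 0, 0]

stumps = [make_stump(i) for i in range(3)]
stump_scores = [accuracy(stump, samples, labels) for stump in stumps]
ensemble_score = accuracy(lambda s: majority_vote(stumps, s), samples, labels)

print(stump_scores, ensemble_score)
```

Each rule alone misclassifies some examples, but because their mistakes do not overlap, the vote corrects them.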

Reinforcement Learning Advances

Reinforcement learning gained practical traction during this time. Instead of learning from labeled examples, agents learned through rewards and penalties.

For example:

- A game-playing AI improves by receiving positive rewards for winning moves.

- A robotic system learns balance through trial and error.
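Learning from rewards can be sketched with tabular Q-learning, one common reinforcement learning method. The environment here is an assumption invented for the example: a five-position corridor where the agent starts at the left end and is rewarded only on reaching the right end. The agent explores by choosing actions at random, and the learning rate, discount factor, and episode count are illustrative.

```python
import random

N_STATES = 5      # positions 0..4 in a corridor; position 4 is the goal
ACTIONS = (0, 1)  # 0 = step left, 1 = step right


def step(state, action):
    """Move the agent and return (next_state, reward, done)."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def q_learning(episodes=300, alpha=0.5, gamma=0.9, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = rng.choice(ACTIONS)  # trial and error: explore at random
            next_state, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[next_state])
            # Move the estimate toward reward plus discounted future value.
            q[state][action] += alpha * (reward + gamma * best_next - q[state][action])
            state = next_state
    return q


q_table = q_learning()
# Greedy policy for every non-goal position: pick the higher-valued action.
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
print(policy)
```

No one tells the agent that moving right is correct. The preference emerges because reward received at the goal gradually propagates back to earlier positions.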

These developments set the stage for dramatic breakthroughs.

The Deep Learning Revolution (2010s)

If we map the timeline of machine learning, the 2010s stand out as transformative.

Deep learning reshaped the entire AI landscape.

Why Deep Learning Succeeded

Earlier neural networks were held back mainly by limited data and hardware. By 2012:

- Massive datasets were available.

- GPUs accelerated matrix computations.

- Improved training techniques stabilized learning.

The breakthrough moment came in 2012, when AlexNet, a deep convolutional neural network, dramatically outperformed competitors in the ImageNet image recognition competition.

From there, progress accelerated rapidly.

Convolutional Neural Networks (CNNs)

CNNs became dominant in computer vision tasks:

- Face recognition

- Medical imaging

- Autonomous driving systems
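The operation that gives CNNs their name is sliding a small filter across an image. Here is a minimal sketch of that step; the 4x4 image and the hand-picked edge filter are assumptions for illustration, whereas a trained CNN learns its filter values from data.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding) and sum the elementwise products."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(k_rows)
                for j in range(k_cols)
            )
            for c in range(out_cols)
        ]
        for r in range(out_rows)
    ]


# A tiny image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Responds where brightness increases from left to right.
vertical_edge = [[-1, 1], [-1, 1]]

feature_map = convolve2d(image, vertical_edge)
for row in feature_map:
    print(row)
```

The output is nonzero only in the column where the dark and bright regions meet, which is how a filter turns raw pixels into a map of where a feature occurs.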

Recurrent Neural Networks (RNNs)

RNNs handled tasks involving sequential data, such as:

- Speech recognition

- Language translation

Transformers

Later, transformer architectures, introduced in 2017, revolutionized natural language processing. They enabled large-scale generative AI models capable of writing, summarizing, and answering questions.

The evolution of machine learning algorithms had entered a new era.

Modern Trends: Generative AI and Explainability

Today, machine learning continues evolving rapidly.

Generative Models

Generative adversarial networks (GANs) and transformer-based systems can create:

- Realistic images

- Music

- Human-like text

This represents one of the most visible stages in machine learning history.

Explainable AI (XAI)

As algorithms grow more powerful, understanding how they make decisions becomes critical. Explainable AI focuses on transparency, ensuring that models are interpretable and accountable.

This reflects a shift from pure performance toward responsible innovation.

Why the Evolution of Machine Learning Algorithms Matters

Understanding this progression reveals three key insights:

- Progress is cyclical. Neural networks fell out of favor before returning stronger.

- Hardware and data availability shape algorithm success.

- No single method dominates forever.

Each stage — from the perceptron model to deep learning — contributed essential building blocks.

The journey from early machine learning experiments to modern AI systems highlights decades of persistence, collaboration, and scientific curiosity.

Frequently Asked Questions

What is the evolution of machine learning algorithms?

The evolution of machine learning algorithms refers to the historical development of methods that enable computers to learn from data — from early neural models and statistical classifiers to modern deep learning systems.

What were the first machine learning algorithms?

Early examples include the perceptron, decision trees, and nearest neighbor methods. These laid the groundwork for more advanced neural networks and ensemble models.

How did deep learning change machine learning?

Deep learning introduced multi-layer neural networks capable of learning complex patterns automatically. It significantly improved performance in vision, speech, and language tasks.

What is the difference between classical machine learning and deep learning?

Classical machine learning algorithms often rely on manually engineered features and can work well with smaller datasets. Deep learning models learn features automatically using layered neural networks, but typically need large datasets to do so.

Are machine learning algorithms still evolving?

Yes. Research continues in reinforcement learning, generative AI models, and explainable AI, pushing the boundaries of what machines can achieve.

Conclusion

The evolution of machine learning algorithms is not a straight line but a dynamic progression shaped by theory, experimentation, failure, and reinvention.

From early neural network experiments and classical machine learning algorithms to deep learning breakthroughs and generative AI, each stage expanded what machines could learn and accomplish.

Understanding this history provides valuable perspective. It shows that innovation often requires patience — and that today’s limitations may become tomorrow’s breakthroughs.

As machine learning continues to evolve, its future will likely be as surprising and transformative as its past.