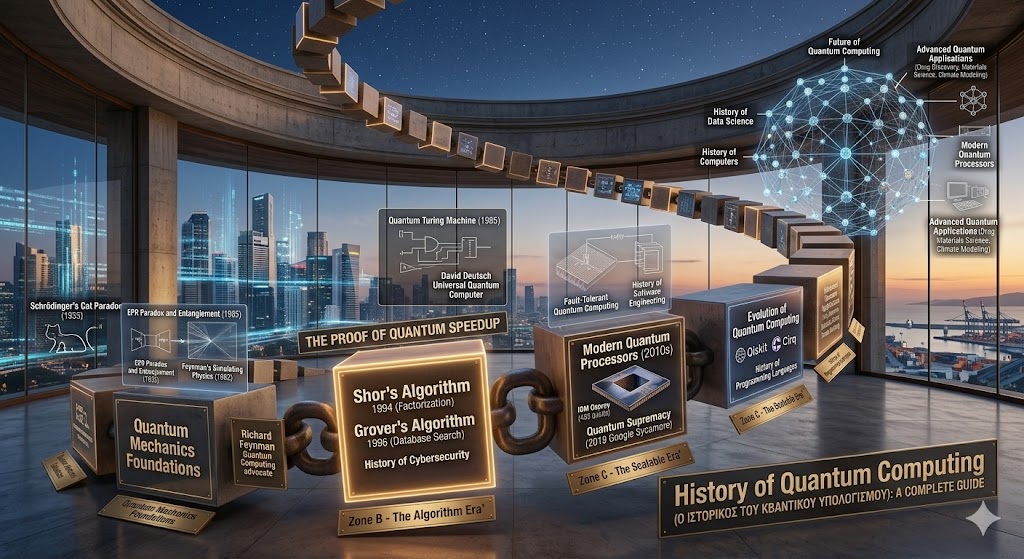

For nearly a century, the history of computers has been defined by the binary world of bits: ones and zeros. However, as silicon approaches its physical limits, a new paradigm has emerged. The history of quantum computing represents a shift from classical physics to the strange, probabilistic world of subatomic particles. A classical computer manipulates bits that are each definitely 0 or 1; a quantum computer uses superposition and entanglement to work with many possible states at once, then relies on interference to make the correct answers more likely when the result is measured. This journey from abstract theory to functional hardware is one of the most ambitious chapters in computing, promising to solve certain problems that would take classical supercomputers billions of years to crack.

Quantum Mechanics Foundations – 1920s–1930s

The history of quantum computing did not begin with a circuit board, but with a chalkboard. In the early 20th century, physicists like Max Planck, Albert Einstein, and Niels Bohr realized that at the atomic level, the laws of classical physics break down. The foundations of quantum mechanics established during this era introduced concepts like superposition (where a particle exists in a combination of multiple states at once) and entanglement (where particles remain correlated regardless of the distance between them).

During this time, data science did not exist as a field, and the idea of using these “spooky” behaviors for calculation would have seemed like science fiction. However, these mathematical foundations were essential. Without the work of Erwin Schrödinger and Werner Heisenberg, the “qubit” (the fundamental unit of quantum information) could never have been conceptualized.

Early Quantum Computing Theories – 1980s

The 1980s marked the moment when physics and computer science finally collided. In 1981, during a conference co-sponsored by MIT and IBM, the legendary physicist Richard Feynman argued that classical computers could never efficiently simulate quantum systems. He famously stated, “Nature isn’t classical, dammit, and if you want to make a simulation of nature, you’d better make it quantum mechanical.”

Following Feynman’s challenge, David Deutsch published his theory of a universal quantum computer in 1985. Deutsch described a mathematical model for a quantum Turing machine, showing that a quantum computer could, in theory, perform any task a classical computer could, and suggesting that it might do so much faster for specific problems. This also mattered for the history of programming languages, as it suggested that we would eventually need an entirely new way to write instructions for these machines.

First Quantum Algorithms and Experimental Devices – 1990s

In the 1990s, quantum computing moved from “if” to “how.” The decade saw the birth of algorithms that proved quantum computers were more than just theoretical curiosities. In 1994, Peter Shor developed Shor’s algorithm, which showed that a quantum computer could factor large numbers in polynomial time, exponentially faster than the best known classical methods.

This sent shockwaves through the cybersecurity world, as widely used public-key encryption such as RSA relies on the difficulty of factoring large numbers. If a sufficiently large quantum computer could factor them quickly, much of the world’s digital security would be at risk. Shortly after, in 1996, Lov Grover introduced Grover’s algorithm, which searches an unsorted database of N items in roughly √N steps, a quadratic speedup over the N steps a classical search needs in the worst case. These discoveries turned the evolution of quantum computing into a high-stakes race for national and corporate security.
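To make Grover’s speedup concrete, here is a minimal sketch that simulates the algorithm on an ordinary computer by tracking every amplitude in a plain Python list. The function name `grover_search` and its parameters are illustrative assumptions, not part of any quantum library, and a classical simulation like this gains no speed advantage; it only shows how the oracle and diffusion steps concentrate probability on the marked item.

```python
import math

def grover_search(n_items, marked):
    """Simulate Grover's algorithm and return the measurement probabilities.

    Hypothetical helper for illustration: the state is a list of real amplitudes.
    """
    # Start in the uniform superposition: every index has amplitude 1/sqrt(N).
    amps = [1.0 / math.sqrt(n_items)] * n_items
    # Roughly (pi/4) * sqrt(N) iterations maximise the marked item's probability.
    iterations = int(math.floor(math.pi / 4 * math.sqrt(n_items)))
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amps[marked] = -amps[marked]
        # Diffusion: reflect every amplitude about the mean amplitude.
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]
    # Measurement probabilities are the squared amplitudes.
    return [a * a for a in amps]

probs = grover_search(n_items=16, marked=11)
print(f"Probability of measuring the marked item: {probs[11]:.3f}")
```

With 16 items the loop runs only 3 times, yet the marked item ends up with a probability of roughly 96%, whereas a classical search would need to check up to 16 entries.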

Early Quantum Computers – 2000s

The turn of the millennium brought the first physical proof that these theories could work in reality. In 2001, IBM researchers used a 7-qubit quantum computer to run Shor’s algorithm, successfully factoring the number 15 into 3 and 5. It was a small step for math, but a giant leap for quantum computing.
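The arithmetic behind that result can be sketched classically. Shor’s algorithm reduces factoring to finding the order r of a number a modulo N (the smallest r with a^r ≡ 1 mod N); the quantum hardware speeds up only that order-finding step. The sketch below finds the order by brute force instead, so it is not a quantum program, and the function names `find_order` and `factor_from_order` are assumptions made for illustration.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step a quantum computer would accelerate; here it is
    done classically and only works quickly for tiny n.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(a, n):
    """Use the order of a modulo n to get two non-trivial divisors of n.

    Returns None when this choice of a does not work and another
    value of a would have to be tried.
    """
    if gcd(a, n) != 1:
        return None  # a already shares a factor with n; not the case shown here
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # an odd order cannot be split in half
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # a**(r/2) is -1 mod n, which yields only trivial divisors
    return tuple(sorted((gcd(half - 1, n), gcd(half + 1, n))))

factors = factor_from_order(7, 15)
print(factors)  # (3, 5)
```

For a = 7 and N = 15 the order is 4, so the divisors come from gcd(7² − 1, 15) and gcd(7² + 1, 15), which are 3 and 5.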

Throughout the 2000s, scientists experimented with various qubit technologies, from trapped ions to superconducting loops. This era was critical, as engineers realized how difficult it is to keep qubits stable (maintaining “coherence”); superconducting qubits, for example, must be cooled to temperatures colder than deep space. The timeline during these years was a series of incremental battles against “noise,” the environmental interference that causes quantum information to vanish.

Scalable Quantum Computing and Commercial Interest – 2010s

The 2010s saw the entry of “Big Tech” into quantum computing. Companies like Google, IBM, Microsoft, and Intel began investing heavily in quantum processors. The goal was no longer just to prove a point, but to achieve quantum supremacy: the moment a quantum computer performs a task that no classical computer could complete in a reasonable amount of time.

In 2019, Google claimed to have reached this milestone with its Sycamore processor, performing a calculation in 200 seconds that they estimated would take the world’s fastest supercomputer 10,000 years. IBM disputed that estimate, arguing that an optimized classical simulation could finish in days. This decade also influenced data science, as researchers began developing “quantum machine learning” models, hoping to use quantum hardware to find patterns in data that classical methods struggle to detect.

Modern Quantum Processors and Future Prospects – 2020s

As we navigate the 2020s, quantum computing has entered the era of “NISQ” (Noisy Intermediate-Scale Quantum) devices. Modern quantum processors, such as IBM’s Osprey (433 qubits) and Condor (over 1,000 qubits), are pushing the boundaries of what is physically possible.

The current focus is on error correction. Because qubits are so fragile, scientists are combining hardware and software techniques to create “logical qubits”: groups of physical qubits that work together to detect and correct errors. This is widely seen as the main hurdle before we reach the “fault-tolerant” era. In the future, quantum computers are expected to transform material science, drug discovery, and climate modeling. Programming tools are also evolving, with frameworks like Qiskit and Cirq allowing developers to write quantum code today in preparation for the machines of tomorrow.
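The idea of grouping several fragile units into one more reliable unit can be illustrated with a classical analogy: a three-bit repetition code decoded by majority vote. This is only a sketch under simplifying assumptions. Real quantum error correction cannot copy a qubit and instead measures error syndromes, and the helper names below are invented for the example.

```python
import random

def encode(bit):
    """Store one logical bit as three physical copies."""
    return [bit, bit, bit]

def apply_bit_flips(bits, p, rng):
    """Flip each physical bit independently with probability p (the noise)."""
    return [b ^ 1 if rng.random() < p else b for b in bits]

def decode(bits):
    """Recover the logical bit by majority vote."""
    return 1 if sum(bits) >= 2 else 0

def logical_error_rate(p, trials, seed=0):
    """Estimate how often the decoded bit is wrong after noise."""
    rng = random.Random(seed)
    errors = sum(
        decode(apply_bit_flips(encode(0), p, rng)) != 0 for _ in range(trials)
    )
    return errors / trials

p = 0.05
estimate = logical_error_rate(p, trials=100_000)
# Decoding fails only when two or three of the copies flip.
predicted = 3 * p**2 * (1 - p) + p**3
print(f"physical error rate: {p}")
print(f"logical error rate:  {estimate:.4f} (predicted {predicted:.4f})")
```

With a 5% physical error rate, the encoded bit fails well under 1% of the time, which is the basic trade that logical qubits make: more physical resources in exchange for a lower effective error rate.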

Frequently Asked Questions (FAQs)

What is a Qubit?

A qubit (quantum bit) is the basic unit of quantum information. Unlike a classical bit, which is either 0 or 1, a qubit can exist in a superposition of both states at the same time.
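As a rough illustration, a single qubit can be modeled as a pair of amplitudes whose squares give the probability of measuring 0 or 1. The sketch below uses plain Python rather than a quantum framework, and the names `hadamard` and `probabilities` are chosen for this example only.

```python
import math

# The state |0>: amplitude 1 for outcome 0, amplitude 0 for outcome 1.
ZERO = [1.0, 0.0]

def hadamard(state):
    """Apply the Hadamard gate, which turns |0> into an equal superposition."""
    a, b = state
    s = 1 / math.sqrt(2)
    return [s * (a + b), s * (a - b)]

def probabilities(state):
    """Probability of each measurement outcome: the squared amplitude."""
    return [abs(x) ** 2 for x in state]

plus = hadamard(ZERO)
print(probabilities(plus))   # both outcomes are about equally likely

# Applying the gate again makes the amplitudes interfere and restores |0>.
back = hadamard(plus)
print(probabilities(back))
```

The second step is what separates a qubit from a random coin flip: the amplitudes can cancel or reinforce each other, so the state returns to a definite 0.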

Will quantum computers replace my laptop?

No. Quantum computers are specialized machines designed for specific, complex tasks. For everyday activities like browsing the web or word processing, classical computers are expected to remain more efficient and cost-effective.

What is Quantum Supremacy?

It is the point where a quantum computer can solve a problem that the most powerful classical supercomputer cannot solve in a reasonable timeframe.

How cold do quantum computers need to be?

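Superconducting quantum processors, such as those built by IBM and Google, typically run at around 15 millikelvin, far colder than outer space (about 2.7 kelvin), to prevent the qubits from losing their quantum state due to heat. Other designs, such as trapped ions, have different cooling requirements.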

Why is quantum computing important for medicine?

Quantum computers are expected to simulate the behavior of molecules at the atomic level, a task that becomes intractable for classical computers as molecules grow larger. This could lead to the discovery of new drugs and materials in a fraction of the current time.

Conclusion

The history of quantum computing is a journey from the impossible to the inevitable. What began as a series of strange discoveries in quantum mechanics in the 1920s has evolved into a global race to define the next century of technology. From the theoretical genius of Richard Feynman’s proposals to the quantum processors of the 2020s, we are on the verge of a computational revolution. As quantum computing continues to evolve, it will reshape computing and cybersecurity, unlocking a future where the only limit is our imagination. The quantum age is no longer a dream; it is being built, one qubit at a time.