What is the Random Forest Algorithm?

Imagine you have a critical decision to make. Would you trust a single advisor or a committee of experts working together? The random forest algorithm takes the wisdom of the committee approach and applies it to machine learning. This remarkably powerful ensemble method combines hundreds or even thousands of decision trees to produce predictions that are far more accurate and stable than any single tree could achieve alone.

The random forest algorithm has become a go-to tool for data scientists across industries. Its popularity stems from three key strengths: strong accuracy, built-in protection against overfitting, and ease of use. Whether you are predicting customer behavior, diagnosing diseases, or detecting fraud, random forests often deliver strong results with minimal tuning. The idea of combining multiple models sits alongside other approaches such as self-supervised learning in artificial intelligence, where algorithms learn useful representations without explicit labels.

The Basics of Decision Trees

To understand the random forest algorithm, you first need to understand decision trees. A decision tree is a simple flowchart structure that asks a series of questions about your data. Each question splits the data into smaller groups. The process continues until the algorithm reaches a final answer, which is stored in a leaf node.
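The flowchart idea can be made concrete with a minimal sketch. The tree below is written by hand, and its feature names, thresholds, and labels are invented purely for illustration; a real tree would be learned from data.

```python
# A hand-written toy tree. Features, thresholds, and labels are hypothetical.
toy_tree = {
    "feature": "income",
    "threshold": 50_000,
    "left": {"leaf": "deny"},            # income <= 50,000
    "right": {                            # income > 50,000
        "feature": "late_payments",
        "threshold": 2,
        "left": {"leaf": "approve"},     # late_payments <= 2
        "right": {"leaf": "deny"},       # late_payments > 2
    },
}


def predict(node, sample):
    """Follow the questions from the root until a leaf node is reached."""
    while "leaf" not in node:
        go_left = sample[node["feature"]] <= node["threshold"]
        node = node["left"] if go_left else node["right"]
    return node["leaf"]


print(predict(toy_tree, {"income": 80_000, "late_payments": 1}))  # approve
```

Each internal node asks one question, and the answer stored in the leaf is the prediction.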

Single decision trees have a serious flaw. They are highly unstable. If you change the training data slightly, the tree structure can change dramatically. This instability leads to overfitting, where the model memorizes noise in the training data rather than learning general patterns. The evolution of machine learning algorithms shows how researchers recognized this limitation and sought better approaches.

How Ensemble Learning Solves Overfitting

Ensemble learning is the secret weapon behind the random forest algorithm. Instead of relying on a single model, ensemble methods combine multiple models to produce a superior result. The logic is simple: many weak learners working together can create one strong learner.

Think of a crowd guessing the number of jellybeans in a jar. Individual guesses may be wildly inaccurate, but the average of all guesses is often remarkably close to the true number. The random forest algorithm applies this same principle to decision trees. By building many trees and averaging their predictions, random forests cancel out much of the error and noise that plague individual trees. As we look toward what comes next, the future of artificial intelligence technology will likely build upon these ensemble principles.
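The jellybean intuition can be checked with a small simulation. This is only a sketch: the true count, the noise level, and the assumption that guesses are independent and unbiased are all made up for the example.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

TRUE_COUNT = 1000  # hypothetical number of jellybeans in the jar
# Each guess is the truth plus independent noise (standard deviation of 300).
guesses = [random.gauss(TRUE_COUNT, 300) for _ in range(500)]

typical_individual_error = statistics.mean(abs(g - TRUE_COUNT) for g in guesses)
crowd_error = abs(statistics.mean(guesses) - TRUE_COUNT)

print(f"typical individual error: {typical_individual_error:.1f}")
print(f"error of the averaged guess: {crowd_error:.1f}")
```

The averaged guess lands much closer to the truth than a typical individual guess. The gain is largest when the errors are independent, which is why a random forest works to keep its trees from all making the same mistakes.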

Core Mechanics: How a Random Forest Operates

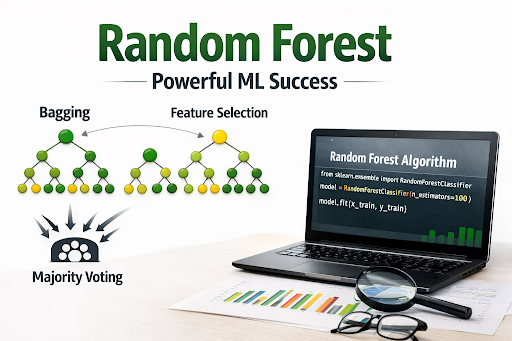

The random forest algorithm introduces two key innovations that make it so effective: bagging and feature randomness. These techniques work together to create diverse, weakly correlated trees that collectively produce strong predictions.

Bagging (Bootstrap Aggregating) Explained

Bagging, short for bootstrap aggregating, is the first pillar of the random forest algorithm. Here is how it works. The algorithm creates multiple bootstrap samples of the original training data, each typically the same size as the original, by sampling with replacement. This means the same data point can appear more than once in a sample, while some data points may be left out of that sample entirely.
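A minimal sketch of bootstrap sampling, using only the standard library. The ten integers stand in for ten training rows, and the helper name `bootstrap_sample` is chosen for this example.

```python
import random

random.seed(42)

data = list(range(10))  # stand-in for ten training rows, identified by index


def bootstrap_sample(rows):
    """Draw len(rows) rows with replacement."""
    return random.choices(rows, k=len(rows))


for tree_id in range(3):
    sample = bootstrap_sample(data)
    left_out = sorted(set(data) - set(sample))
    print(f"tree {tree_id}: sample={sorted(sample)} left_out={left_out}")
```

Each printed sample typically repeats some rows and omits others, so every tree would see a slightly different view of the data.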

For each subset, the algorithm trains a full decision tree without pruning. Because each subset is slightly different, each tree learns slightly different patterns. The trees are grown deep, allowing them to capture complex relationships, but the magic happens when they are combined.

For classification tasks, the random forest algorithm uses majority voting. Each tree casts a vote for a class, and the class with the most votes wins. For regression tasks, the algorithm averages the predictions from all trees to produce the final output.
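The two aggregation rules can be sketched in a few lines. The per-tree predictions below are invented for illustration, and the function names are chosen for this example.

```python
from collections import Counter
from statistics import mean


def majority_vote(class_predictions):
    """Return the class predicted by the most trees."""
    return Counter(class_predictions).most_common(1)[0][0]


def average_prediction(numeric_predictions):
    """Return the mean of the trees' numeric predictions."""
    return mean(numeric_predictions)


tree_votes = ["spam", "not spam", "spam", "spam", "not spam"]
tree_estimates = [310.0, 295.0, 330.0, 305.0]

print(majority_vote(tree_votes))            # spam
print(average_prediction(tree_estimates))   # 310.0
```

Some libraries aggregate differently: scikit-learn's classifier, for example, averages the class probabilities predicted by each tree rather than counting hard votes.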

The Role of Feature Randomness

Bagging alone is not enough. If every tree can choose among all features at every split, a few strong predictors tend to dominate the top splits of every tree, and the trees become highly correlated. Correlated trees make similar errors, which defeats the purpose of ensemble learning. The random forest algorithm solves this problem with feature randomness.

At each split in every tree, the algorithm randomly selects a subset of features to consider. Only these randomly chosen features are eligible for the split. This forced randomness ensures that the trees are diverse. Some trees may focus on certain features while others focus on different features.
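The per-split feature draw might look like the sketch below. The feature names are hypothetical, and the subset size uses the square root of the feature count, which is one common choice rather than a fixed rule.

```python
import math
import random

random.seed(7)

# Hypothetical feature names for a customer dataset.
feature_names = [
    "age", "income", "tenure", "region", "plan",
    "visits", "spend", "tickets", "referrals",
]


def candidate_features(features, k):
    """Pick k distinct features that one split is allowed to consider."""
    return random.sample(features, k)


k = round(math.sqrt(len(feature_names)))  # 3 of the 9 features
for split_id in range(3):
    print(f"split {split_id}: {candidate_features(feature_names, k)}")
```

Because a fresh subset is drawn at every split, a dominant feature is often unavailable, which pushes different trees toward different structures.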

The result is a collection of trees whose errors are only weakly correlated. When you average their predictions, much of the error cancels out, leaving you with a highly accurate and stable model. This breakthrough was a significant moment in the rise of modern machine learning.

Out-of-Bag Evaluation

Here is a clever feature of the random forest algorithm. Because each tree is trained on a bootstrap sample, approximately one third of the original data (about 37% for large datasets) is left out of that tree’s training set. These left-out samples are called out-of-bag data.

The algorithm can test each tree on its out-of-bag data without needing a separate validation set: each sample is predicted using only the trees that did not see it during training. The resulting out-of-bag error rate provides a nearly unbiased estimate of the model’s generalization error. This built-in validation saves time and helps you detect overfitting.
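The "approximately one third" figure follows from the sampling itself. A given row is missed by one draw with probability 1 - 1/n, so it is missed by all n draws with probability (1 - 1/n)^n, which approaches 1/e (about 0.368) as n grows. The sketch below compares that value with a simulated bootstrap sample; the dataset size is arbitrary.

```python
import random

random.seed(1)


def out_of_bag_fraction(n_rows):
    """Fraction of rows that never appear in one bootstrap sample of size n_rows."""
    drawn = set(random.choices(range(n_rows), k=n_rows))
    return 1 - len(drawn) / n_rows


n = 10_000
simulated = out_of_bag_fraction(n)
theoretical = (1 - 1 / n) ** n  # chance a given row is missed n times in a row

print(f"simulated: {simulated:.3f}, theoretical: {theoretical:.3f}")
```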

Random Forest Classifier vs. Random Forest Regressor

The random forest algorithm comes in two main flavors: classifier and regressor. Both share the same underlying mechanics but differ in how they produce final predictions.

Random Forest Classifier

The random forest classifier is used when your target variable is categorical. You might want to predict whether an email is spam or not, whether a tumor is malignant or benign, or which product category a customer will purchase.

For classification, each tree in the forest produces a class prediction. The algorithm collects all these votes and selects the class that receives the majority. This majority voting approach is robust and works well even when individual trees are uncertain.

The classifier uses splitting criteria like Gini impurity or entropy to build each tree. Gini impurity measures how mixed the classes are within a node. A pure node, where all samples belong to one class, has a Gini impurity of zero. The formula for Gini impurity is:

Gini = 1 - Σ p_k^2 (1 minus the sum of squared class probabilities), where p_k is the fraction of samples in the node that belong to class k

When the algorithm splits a node, it chooses the feature and split point that most reduce the weighted average of Gini impurity across the child nodes.
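A short sketch of the Gini calculation and the weighted score used to compare candidate splits. The labels are toy data, and the helper names are chosen for this example.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def weighted_gini(left, right):
    """Impurity of a split: child impurities weighted by child size."""
    n = len(left) + len(right)
    return len(left) / n * gini(left) + len(right) / n * gini(right)


parent = ["spam"] * 4 + ["ham"] * 4
mixed = ["spam", "spam", "ham", "ham"]

print(gini(parent))                              # 0.5
print(weighted_gini(["spam"] * 4, ["ham"] * 4))  # 0.0
print(weighted_gini(mixed, mixed))               # 0.5
```

The split that separates the classes perfectly scores 0.0, while the split that leaves both children as mixed as the parent scores 0.5 and would not be chosen.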

Random Forest Regressor

The random forest regression model is used when your target variable is continuous. You might want to predict house prices, temperature readings, or stock market values.

For regression, each tree produces a numerical prediction. The random forest algorithm averages all these predictions to produce the final output. This averaging smooths out the extreme predictions that individual trees might make, resulting in more stable and accurate estimates.

The regressor uses variance reduction as its splitting criterion. Variance measures how spread out the target values are within a node. The formula for variance is the average of squared differences from the mean. The algorithm splits nodes in a way that minimizes the variance in the child nodes.
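Variance reduction can be sketched the same way. The six prices below are invented, and `weighted_variance` is a helper name chosen for this example.

```python
from statistics import pvariance


def weighted_variance(left, right):
    """Variance of a split: child variances weighted by child size."""
    n = len(left) + len(right)
    return len(left) / n * pvariance(left) + len(right) / n * pvariance(right)


# Hypothetical house prices (in thousands), sorted by some feature.
prices = [100.0, 110.0, 120.0, 300.0, 310.0, 320.0]

parent_variance = pvariance(prices)
good_split = weighted_variance(prices[:3], prices[3:])  # separates the two price groups
poor_split = weighted_variance(prices[:1], prices[1:])  # isolates a single house

print(f"parent: {parent_variance:.1f}")
print(f"good split: {good_split:.1f}")
print(f"poor split: {poor_split:.1f}")
```

The split between the cheap and expensive houses leaves far less variance in the children, so it is the one a regression tree would prefer.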

Pros and Cons of Using Random Forests

Every algorithm has strengths and weaknesses. The random forest algorithm offers many advantages but also comes with limitations.

Advantages

Exceptional Accuracy is the primary reason to choose random forests. By combining many trees, the algorithm achieves performance that rivals and often exceeds more complex models.

Resistance to Overfitting sets random forests apart from single decision trees. The ensemble approach naturally regularizes the model, making it robust to noise in the training data.

Handles High-Dimensional Data gracefully. The random forest algorithm works well even when you have thousands of features. It performs implicit feature selection by splitting more often on informative features.

No Scaling Required saves preprocessing time. Unlike algorithms that rely on distance calculations, random forests are unaffected by feature scales. You can feed numeric features into the model without standardizing or normalizing them.

Feature Importance Scores help you understand which features drive your predictions. This insight is valuable for feature selection and for explaining your model to stakeholders.
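As one illustration of feature importance scores, the sketch below uses scikit-learn, which the article does not name; it is simply a widely used implementation. The data is synthetic, generated so that only the first three columns carry signal.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic data: with shuffle=False, the first 3 columns are the informative
# ones and the remaining 5 are pure noise.
X, y = make_classification(
    n_samples=500,
    n_features=8,
    n_informative=3,
    n_redundant=0,
    shuffle=False,
    random_state=0,
)

forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, y)

# Impurity-based importances, normalized to sum to 1.
for index, score in enumerate(forest.feature_importances_):
    print(f"feature {index}: {score:.3f}")
```

These impurity-based scores are convenient but can favor features with many distinct values, so it is worth cross-checking them against another method before drawing firm conclusions.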

Disadvantages

Less Interpretable than a single decision tree. While you can visualize one tree, visualizing a forest of hundreds of trees is impractical. You lose the clear decision path that makes individual trees so appealing.

Memory Intensive because the algorithm stores every tree in memory. Large forests with deep trees can consume significant RAM, which may be problematic on resource-constrained systems.

Slower Predictions compared to simpler models. When you make a prediction, the random forest algorithm must pass your data through every tree and aggregate the results. This takes longer than a single decision tree.

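Can Overfit with Noisy Data despite its robustness. If you have extremely noisy data or let very deep trees grow on a small dataset, random forests can still overfit. Adding more trees does not by itself cause overfitting, but it does not fix it either, so proper hyperparameter tuning is essential.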

Real-World Applications (Finance, Healthcare, and E-commerce)

The random forest algorithm has proven its value across diverse industries. Its combination of accuracy, robustness, and ease of use makes it a favorite for real-world deployments.

Finance

Banks and financial institutions rely on random forest machine learning for critical decisions. Credit scoring models use random forests to predict loan default risk. The algorithm’s ability to handle many features, including credit history, income, employment status, and demographic information, makes it ideal for this application.

Fraud detection systems employ random forests to identify suspicious transactions in real time. The algorithm learns patterns of legitimate behavior and flags transactions that deviate from these patterns. The history of AI recommendation systems shows how similar ensemble methods have transformed financial services.

Stock market prediction, while notoriously difficult, benefits from random forests’ ability to capture nonlinear relationships between market indicators and price movements.

Healthcare

The medical field has embraced random forest machine learning for diagnosis and treatment planning. The algorithm helps doctors detect diseases from medical images, predict patient outcomes, and identify risk factors for chronic conditions.

In cancer research, random forests analyze genetic data to identify mutations associated with specific tumor types. This application requires handling thousands of genetic markers, a task for which random forests are well suited. The history and evolution of AI in healthcare shows how machine learning continues to transform medical practice.

Electronic health records contain vast amounts of patient data. Random forests mine this data to predict hospital readmission risks, medication responses, and disease progression.

E-commerce

Online retailers use the random forest algorithm to personalize shopping experiences. Recommendation engines predict which products a customer is likely to purchase based on browsing history, past purchases, and demographic information.

Customer churn prediction helps companies identify at-risk customers before they leave. Random forests analyze engagement patterns, support interactions, and purchase behavior to flag customers who may be considering switching to competitors.

Inventory management benefits from demand forecasting. Random forests predict how many units of each product will sell, helping retailers optimize stock levels and reduce waste.

Best Practices for Hyperparameter Tuning

Getting the most from the random forest algorithm requires thoughtful hyperparameter tuning. Here are proven strategies to optimize your model.

Start Simple

Begin with a baseline model using default hyperparameters. Most implementations provide sensible defaults that work reasonably well. Establish a baseline performance metric before attempting any tuning.

Tune Number of Trees

The number of trees is the easiest parameter to tune. Monitor the out-of-bag error rate as you increase the number of trees. The error will decrease quickly at first, then plateau. Choose the point where additional trees provide diminishing returns. This is often between 100 and 500 trees.
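One way to watch the out-of-bag error level off is sketched below with scikit-learn, chosen here as an example implementation. The dataset is synthetic and the tree counts are arbitrary, so the numbers will differ on real data.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic classification data for illustration only.
X, y = make_classification(
    n_samples=1000, n_features=20, n_informative=5, random_state=0
)

oob_errors = {}
for n_trees in (50, 100, 200, 400):
    forest = RandomForestClassifier(
        n_estimators=n_trees, oob_score=True, random_state=0
    )
    forest.fit(X, y)
    # For classifiers, oob_score_ is out-of-bag accuracy, so error = 1 - accuracy.
    oob_errors[n_trees] = 1 - forest.oob_score_

for n_trees, error in oob_errors.items():
    print(f"{n_trees:>4} trees: OOB error = {error:.3f}")
```

Once the error stops moving by more than a small amount between settings, extra trees mostly add training and prediction cost.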

Optimize Maximum Features

The maximum features parameter controls the randomness in your forest. Smaller values create more diverse trees but may sacrifice individual tree quality. Use cross-validation to find the sweet spot. A common starting point is the square root of the number of features for classification and one third of the features for regression.
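The starting-point rule of thumb translates into a few lines of arithmetic. The function name is hypothetical, and the result is only an initial value to refine with cross-validation.

```python
import math


def starting_max_features(n_features, task):
    """Rule-of-thumb number of features to consider at each split."""
    if task == "classification":
        return max(1, round(math.sqrt(n_features)))
    if task == "regression":
        return max(1, n_features // 3)
    raise ValueError(f"unknown task: {task!r}")


print(starting_max_features(100, "classification"))  # 10
print(starting_max_features(30, "regression"))       # 10
```

Library defaults do not always match this rule, so it is worth checking what your implementation uses before assuming either value.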

Control Tree Depth

Limiting tree depth can reduce overfitting and speeds up training. Start with a moderate depth such as 10 or 20 and gradually increase it while monitoring validation performance. Stop when performance stops improving.

Use Cross-Validation

Grid search with cross-validation systematically explores combinations of hyperparameters. While computationally expensive, this approach finds the best configuration among the candidates you specify for your dataset. Focus on the most influential parameters: number of trees, maximum features, and maximum depth.
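A grid search over the three parameters named above could be sketched as follows, again using scikit-learn as an example implementation. The grid values and the synthetic dataset are placeholders chosen to keep the run short, not recommended settings.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV

# Small synthetic dataset so the search finishes quickly.
X, y = make_classification(
    n_samples=400, n_features=12, n_informative=4, random_state=0
)

# Placeholder candidate values for the three most influential parameters.
param_grid = {
    "n_estimators": [50, 100],
    "max_features": ["sqrt", 0.5],
    "max_depth": [5, 10, None],
}

search = GridSearchCV(
    RandomForestClassifier(random_state=0),
    param_grid,
    cv=3,                # 3-fold cross-validation for each combination
    scoring="accuracy",
)
search.fit(X, y)

print(search.best_params_)
print(f"best cross-validated accuracy: {search.best_score_:.3f}")
```

This grid has 12 combinations, each fitted three times. The cost grows multiplicatively with every parameter you add, which is why it pays to keep the grid focused.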

Frequently Asked Questions

1. How many trees should I use in a random forest?

Start with 100 trees. Monitor the out-of-bag error rate. If it continues decreasing significantly, add more trees. Diminishing returns typically set in between 100 and 500 trees.

2. Is a random forest better than a single decision tree?

Yes, for most problems. Random forests reduce overfitting, improve accuracy, and provide more stable predictions. The tradeoff is reduced interpretability and longer training times.

3. Does random forest require feature scaling?

No. Random forests are based on decision trees, which use splitting criteria like Gini impurity or variance reduction rather than distance calculations. Because a split depends only on the ordering of values within a feature, feature scales do not affect the algorithm.
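A small sketch makes the scaling point concrete: the best Gini split on a feature sends the same rows left whether the feature is measured in dollars or in thousands of dollars. The data and helper names are invented for this example.

```python
from collections import Counter


def gini(labels):
    """Gini impurity of a non-empty list of labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_partition(values, labels):
    """Return the row indices sent left by the best single-threshold split."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    best_score, best_left = float("inf"), None
    for cut in range(1, len(order)):
        left, right = order[:cut], order[cut:]
        score = (
            len(left) * gini([labels[i] for i in left])
            + len(right) * gini([labels[i] for i in right])
        ) / len(order)
        if score < best_score:
            best_score, best_left = score, frozenset(left)
    return best_left


incomes_usd = [20_000, 35_000, 50_000, 80_000, 120_000]
labels = ["deny", "deny", "approve", "approve", "approve"]
incomes_thousands = [value / 1000 for value in incomes_usd]

same = best_partition(incomes_usd, labels) == best_partition(incomes_thousands, labels)
print(same)  # True
```

Only the threshold value changes with the units. The grouping of rows, and therefore the prediction, stays the same.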

4. How does random forest compare to gradient boosting?

Both are ensemble methods. Random forests build trees independently and average them. Gradient boosting builds trees sequentially, with each tree correcting the errors of previous trees. Gradient boosting often achieves higher accuracy but requires more careful tuning.

5. Can random forests handle missing values?

Many basic implementations do not handle missing values, so you must impute or remove missing data before training. Some implementations include built-in methods for handling missingness.

6. What is out-of-bag error?

Out-of-bag error is the error rate calculated using data not included in each tree’s bootstrap sample. It provides a nearly unbiased estimate of generalization error without needing a separate validation set.

Conclusion

The random forest algorithm represents a powerful advancement in machine learning. By combining the simplicity of decision trees with the robustness of ensemble learning, it delivers strong accuracy across a wide range of problems. The algorithm’s resistance to overfitting, ability to handle high-dimensional data, and minimal preprocessing requirements make it an ideal choice for both beginners and experienced practitioners.

Understanding the random forest algorithm opens doors to more advanced ensemble methods. The concepts of bagging, feature randomness, and out-of-bag evaluation appear throughout modern machine learning. Mastering these fundamentals provides a foundation for exploring gradient boosting, stacking, and other sophisticated techniques.

Whether you are predicting customer behavior, diagnosing diseases, or detecting fraud, the random forest algorithm deserves a place in your toolkit. Its combination of power and usability is difficult to match. The history of computer vision in artificial intelligence and modern artificial intelligence applications both demonstrate how random forests continue to drive real-world innovation. For reliable, accurate, and robust predictions, few algorithms rival the random forest.