In supervised learning, one algorithm stands out for its simplicity, interpretability, and effectiveness: the decision tree. While more complex models like neural networks often steal the spotlight, the decision tree remains a workhorse of predictive modeling and data mining, and it is the building block of ensemble methods such as random forests. Whether you are a beginner taking your first steps into data science or an experienced professional looking to understand the fine details, this guide covers what you need to use decision trees well in your machine learning projects.

We will explore every facet of this non-parametric model, from its anatomical structure to the mathematics behind its decisions. Understanding the decision tree algorithm is not just about writing code; it is about grasping the logic that drives classification and regression tasks.

What is a Decision Tree Algorithm?

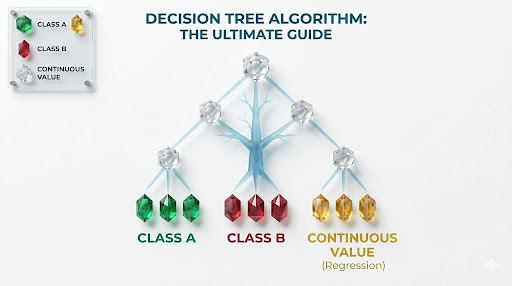

At its core, the decision tree algorithm is a supervised learning algorithm that can be used for both classification and regression tasks. It is essentially a flowchart-like structure where each internal node denotes a test on an attribute, each branch represents an outcome of that test, and each leaf node (terminal node) holds a class label or a continuous value. The algorithm mimics human decision-making, using recursive partitioning to break a dataset into smaller, more homogeneous subsets until a decision can be reached.

Definition and Importance in Data Science

In data science, the decision tree algorithm is a widely used predictive modeling tool, most often applied to classification. Its purpose is to build a model that predicts the value of a target variable by learning simple decision rules inferred from the data features.

The importance of the decision tree algorithm stems from its transparency. In the first AI programs, decision logic was explicit. The decision tree continues this tradition of clear reasoning, which is increasingly valuable for debugging models and explaining predictions to stakeholders.

Real World Examples of Tree Based Logic

The decision tree algorithm is intuitive, which makes it easy to map onto real-world scenarios. The simple if-else logic it employs appears everywhere. For instance:

- Email Spam Detection: Does the subject line contain ‘winner’? (Yes/No). If yes, is the sender known? (Yes/No).

- Credit Risk Assessment: A bank uses a decision tree algorithm to determine if a loan applicant is risky based on credit score and income.

- Medical Diagnosis: A classic application in healthcare AI is guiding doctors through diagnosis paths, one question at a time.
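The spam example above can be written out as plain if-else logic. This is only a hand-written sketch: the function name `classify_email` and the rule outcomes are assumptions made for illustration, whereas a trained tree would learn its tests and outcomes from labeled data.

```python
def classify_email(subject: str, sender_known: bool) -> str:
    """Toy, hand-written rules that mirror the spam example (not a trained model)."""
    # Root test: does the subject line contain the word "winner"?
    if "winner" in subject.lower():
        # Second test, reached only on the "yes" branch: is the sender known?
        if sender_known:
            return "not spam"
        return "spam"
    # "No" branch of the root test ends in a leaf immediately.
    return "not spam"


print(classify_email("You are a WINNER!", sender_known=False))
print(classify_email("Meeting notes", sender_known=True))
```

Each `if` corresponds to a decision node and each `return` to a leaf.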

Anatomy of a Decision Tree

A decision tree isn’t a complex, ethereal entity; it is structured logically with specific components, much like an inverted biological tree.

Root Nodes: Where the Split Begins

Every decision tree starts from a single point: the root node. The root node represents the entire population or dataset and is the topmost decision node in the hierarchy. Its test is chosen by finding the feature that gives the best split of the full dataset under the chosen splitting criterion.

Decision Nodes vs. Leaf Nodes

Once the root node splits, it creates decision nodes and potentially leaf nodes.

- Decision Nodes: These are internal nodes that occur after the root node. A decision node represents a point where a choice must be made, splitting the data based on categorical features or numerical features.

- Leaf Nodes (Terminal Nodes): These are the end points of the tree branches. A leaf node contains the final decision or classification result.
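One possible way to represent these two node types in code is sketched below. The class names, the `<=` threshold convention, and the credit-risk thresholds are illustrative assumptions, not something prescribed by any particular library.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Leaf:
    prediction: str  # final decision stored at the end of a branch


@dataclass
class DecisionNode:
    feature: str      # name of the feature being tested
    threshold: float  # samples with value <= threshold go left
    left: Union["DecisionNode", Leaf]
    right: Union["DecisionNode", Leaf]


def predict(node: Union[DecisionNode, Leaf], sample: dict) -> str:
    """Walk from the root down to a leaf, following one branch per test."""
    while isinstance(node, DecisionNode):
        node = node.left if sample[node.feature] <= node.threshold else node.right
    return node.prediction


# Hypothetical credit-risk tree; the thresholds are made up for illustration.
tree = DecisionNode(
    feature="credit_score",
    threshold=650,
    left=DecisionNode("income", 40_000, Leaf("risky"), Leaf("review")),
    right=Leaf("safe"),
)

print(predict(tree, {"credit_score": 700, "income": 30_000}))
print(predict(tree, {"credit_score": 600, "income": 30_000}))
```

The outermost `DecisionNode` plays the role of the root; the nested one is an ordinary decision node.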

Sub trees and Branches

A branch connects a node to one of its children and corresponds to one outcome of that node's test. A subtree is a section of the entire decision tree, viewed in isolation. Examining subtrees is useful during analysis or when performing tree pruning, as it allows practitioners to isolate and evaluate the effectiveness of specific decision pathways.

How Decision Trees Make Decisions (Splitting Criteria)

The effectiveness of a decision tree depends largely on how it selects features for splitting. Tree induction is a greedy search: at each node it picks the single attribute and split that look best at that step, without revisiting earlier choices.

Entropy and Information Gain (ID3 Algorithm)

Entropy measures the uncertainty or impurity in a group of examples. For a node whose examples fall into $c$ classes, with $p_i$ the proportion belonging to class $i$, the entropy $H$ is:

$$H = -\sum_{i=1}^{c} p_i \log_2(p_i)$$

Information Gain (IG) measures the effective reduction in entropy after a dataset is split on an attribute ($A$):

$$IG(A) = H(\text{parent}) - \sum_{\text{children}} \left( \frac{\text{size of child}}{\text{size of parent}} \times H(\text{child}) \right)$$
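The two formulas translate almost directly into code. The sketch below uses only the standard library; the function names and the toy labels are assumptions chosen for illustration.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """H = -sum(p_i * log2(p_i)) over the classes present in `labels`."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def information_gain(parent, children):
    """Parent entropy minus the size-weighted entropy of the child groups."""
    total = len(parent)
    weighted = sum(len(child) / total * entropy(child) for child in children)
    return entropy(parent) - weighted


# Toy split: 10 examples divided into two groups of 5.
parent = ["yes"] * 5 + ["no"] * 5
children = [["yes"] * 4 + ["no"], ["yes"] + ["no"] * 4]

print(f"parent entropy:   {entropy(parent):.3f}")
print(f"information gain: {information_gain(parent, children):.3f}")
```

A perfectly balanced two-class parent has entropy 1; the gain is larger the purer the children become.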

Chi Square and Variance Reduction

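Gini impurity and entropy are criteria for classification; Gini impurity is defined as $1 - \sum_{i=1}^{c} p_i^2$ and, like entropy, is zero for a pure node. Chi-square splitting, used by the CHAID family of trees, instead tests whether the class distribution in the child nodes differs significantly from that of the parent. Regression trees employ variance reduction: the decision tree algorithm splits the data where the weighted sum of variance in the child nodes is minimized.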
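As a rough sketch of these criteria, the snippet below computes Gini impurity (using its standard definition, one minus the sum of squared class proportions) and the variance reduction of a candidate regression split. The function names and numbers are illustrative assumptions.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 - sum(p_i ** 2) over the classes in `labels`."""
    total = len(labels)
    return 1.0 - sum((n / total) ** 2 for n in Counter(labels).values())


def variance(values):
    """Population variance of the target values in one node."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def variance_reduction(parent, children):
    """Parent variance minus the size-weighted variance of the children."""
    total = len(parent)
    weighted = sum(len(child) / total * variance(child) for child in children)
    return variance(parent) - weighted


# Toy regression targets split into a "low" and a "high" group.
parent = [1, 2, 3, 10, 11, 12]
children = [[1, 2, 3], [10, 11, 12]]

print(gini(["a", "a", "b", "b"]))
print(variance_reduction(parent, children))
```

A regression tree would evaluate many candidate splits this way and keep the one with the largest reduction.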

Types of Decision Tree Algorithms

ID3 (Iterative Dichotomiser 3)

The ID3 algorithm is a greedy search algorithm that grows a decision tree in a top-down manner. At each node it uses entropy and information gain to pick the best split available at that step, but it is limited to categorical features.
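A compact sketch of the ID3 idea on categorical data follows. It is a simplified illustration, not a reference implementation: the nested-dict tree format, the majority-vote stopping rule, and the toy weather rows are all assumptions.

```python
from collections import Counter
from math import log2


def entropy(rows, target):
    counts = Counter(row[target] for row in rows)
    return -sum(n / len(rows) * log2(n / len(rows)) for n in counts.values())


def id3(rows, features, target):
    labels = [row[target] for row in rows]
    # Stop when the node is pure or no features remain; predict the majority label.
    if len(set(labels)) == 1 or not features:
        return Counter(labels).most_common(1)[0][0]

    def gain(feature):
        groups = {}
        for row in rows:
            groups.setdefault(row[feature], []).append(row)
        remainder = sum(len(g) / len(rows) * entropy(g, target) for g in groups.values())
        return entropy(rows, target) - remainder

    # Greedy step: split on the feature with the highest information gain.
    best = max(features, key=gain)
    remaining = [f for f in features if f != best]
    tree = {best: {}}
    for value in sorted({row[best] for row in rows}):
        subset = [row for row in rows if row[best] == value]
        tree[best][value] = id3(subset, remaining, target)
    return tree


# Hypothetical toy dataset with two categorical features.
rows = [
    {"outlook": "sunny", "windy": "no", "play": "yes"},
    {"outlook": "sunny", "windy": "yes", "play": "yes"},
    {"outlook": "sunny", "windy": "yes", "play": "yes"},
    {"outlook": "rainy", "windy": "no", "play": "yes"},
    {"outlook": "rainy", "windy": "yes", "play": "no"},
    {"outlook": "rainy", "windy": "yes", "play": "no"},
]

tree = id3(rows, ["outlook", "windy"], "play")
print(tree)
```

Each feature is used at most once along a path, and one branch is created per observed category value, which is why this scheme only fits categorical inputs.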

C4.5: The Successor to ID3

C4.5 is a significant improvement on ID3: it can handle both categorical and numerical features and can cope with missing data values. These additions made tree learning practical on the messier datasets typical of real applications.

CART: Classification and Regression Trees

CART is the algorithm behind scikit-learn's tree estimators, which use an optimized version of it. CART produces binary splits only and works for both classification and regression tasks.
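To make the binary-split idea concrete, here is a small sketch of how a CART-style search might pick a threshold for one numeric feature by minimizing weighted Gini impurity. It is a simplified illustration, not scikit-learn's actual implementation; the function names and the credit-score data are assumptions.

```python
from collections import Counter


def gini(labels):
    total = len(labels)
    return 1.0 - sum((n / total) ** 2 for n in Counter(labels).values())


def best_binary_split(values, labels):
    """Return (threshold, weighted_gini) for the best split `value <= threshold`."""
    pairs = list(zip(values, labels))
    unique = sorted(set(values))
    best_threshold, best_score = None, float("inf")
    # Candidate thresholds are midpoints between consecutive distinct values.
    for low, high in zip(unique, unique[1:]):
        threshold = (low + high) / 2
        left = [y for x, y in pairs if x <= threshold]
        right = [y for x, y in pairs if x > threshold]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(pairs)
        if score < best_score:
            best_threshold, best_score = threshold, score
    return best_threshold, best_score


# Hypothetical applicants: credit score and a risk label.
credit_scores = [580, 600, 640, 700, 720, 760]
risk = ["risky", "risky", "risky", "safe", "safe", "safe"]

threshold, score = best_binary_split(credit_scores, risk)
print(threshold, score)
```

Every split has exactly two children, so a feature with many distinct values is handled by repeated binary tests further down the tree.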

Overfitting and the Need for Pruning

A serious limitation of single decision trees is their tendency to overfit. Left unconstrained, a tree can keep growing until every leaf node is perfectly pure, capturing noise in the training data rather than patterns that generalize. Hand-built rule bases in expert systems faced a similar problem: rules tuned too closely to known cases broke down on new ones.

Pre Pruning (Setting Max Depth)

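Pre-pruning stops the tree-building process early. This is done by setting hyperparameters such as max_depth (the maximum number of levels) or min_samples_split (the minimum number of samples a node needs before it may be split).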
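A minimal sketch of pre-pruning with scikit-learn, assuming the bundled iris dataset as stand-in data; the specific values 2 and 10 are arbitrary choices for illustration, not recommendations.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)

# Unconstrained tree: grows until the leaves are pure.
full_tree = DecisionTreeClassifier(random_state=0).fit(X, y)

# Pre-pruned tree: growth stops at depth 2, and nodes with fewer
# than 10 samples are never split.
pruned_tree = DecisionTreeClassifier(
    max_depth=2, min_samples_split=10, random_state=0
).fit(X, y)

print("full tree:  ", full_tree.get_depth(), "levels,", full_tree.get_n_leaves(), "leaves")
print("pruned tree:", pruned_tree.get_depth(), "levels,", pruned_tree.get_n_leaves(), "leaves")
```

In practice these values would usually be chosen with cross-validation rather than fixed by hand.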

Post Pruning (Removing Weak Branches)

Post-pruning allows the tree to grow fully and then removes the subtrees that contribute least to predictive power, trading a small loss of training accuracy for a simpler tree. In scikit-learn this is available as minimal cost-complexity pruning, controlled by the ccp_alpha parameter.
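One way to try post-pruning in scikit-learn is minimal cost-complexity pruning through the `ccp_alpha` parameter. The sketch below assumes the bundled breast cancer dataset and an arbitrary `ccp_alpha=0.01`; candidate values can also be listed with `cost_complexity_pruning_path` and compared by cross-validation.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Fully grown tree (ccp_alpha defaults to 0.0, meaning no pruning).
full_tree = DecisionTreeClassifier(random_state=0).fit(X_train, y_train)

# Same tree, but subtrees whose benefit does not justify their
# complexity at alpha=0.01 are collapsed into leaves.
pruned_tree = DecisionTreeClassifier(ccp_alpha=0.01, random_state=0).fit(X_train, y_train)

for name, model in [("full", full_tree), ("pruned", pruned_tree)]:
    print(name, model.get_n_leaves(), "leaves, test accuracy",
          round(model.score(X_test, y_test), 3))
```

Larger values of `ccp_alpha` prune more aggressively; at some point the tree collapses to a single node.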

Implementation: Building a Decision Tree in Python

Preparing Your Dataset with Scikit Learn

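Before training with scikit-learn, you must encode categorical features, transforming text-based values into numerical vectors. A decision tree generally does not require feature scaling, because each split compares a single feature against a threshold and rescaling that feature does not change the ordering of its values.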

Training the DecisionTreeClassifier

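Training is straightforward with the scikit-learn library: create a DecisionTreeClassifier, call fit on the training data, and evaluate on held-out data. We recommend setting max_depth during initialization to limit tree size and improve generalization.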
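A short training sketch, assuming the bundled iris dataset as a stand-in for your own data; `max_depth=3` and the split sizes are illustrative choices.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)

# Hold out a quarter of the data to estimate generalization.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

# Limit the depth at initialization to restrain overfitting.
clf = DecisionTreeClassifier(max_depth=3, random_state=42)
clf.fit(X_train, y_train)

accuracy = clf.score(X_test, y_test)
print(f"test accuracy: {accuracy:.3f}")
```

Fixing `random_state` makes the result reproducible, since ties between equally good splits are otherwise broken randomly.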

Visualizing the Tree Output

A major advantage of the decision tree algorithm is the ability to visualize the model: the fitted tree can be drawn as a diagram or printed as a set of nested rules. This transparency is valuable in applications where predictions must be explained or audited.
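As a sketch of one way to inspect a fitted tree, scikit-learn's `export_text` prints the learned rules as indented text; `plot_tree` from the same module draws a diagram when matplotlib is installed. The iris data and `max_depth=2` are assumptions for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier, export_text

iris = load_iris()
clf = DecisionTreeClassifier(max_depth=2, random_state=0)
clf.fit(iris.data, iris.target)

# Render the tree as nested if-else style rules, one line per node.
rules = export_text(clf, feature_names=list(iris.feature_names))
print(rules)
```

Each indented line is a test on a feature, and lines beginning with `class:` are the leaves.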

Pros and Cons of Decision Trees

Advantages: Interpretability and No Scaling Required

- Interpretability: You can easily visualize the decision rules.

- Minimal Data Preparation: Trees need little preprocessing and no feature scaling.

- Automatic Feature Selection: The algorithm implicitly performs feature selection.
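The implicit feature selection mentioned above can be inspected through the `feature_importances_` attribute of a fitted scikit-learn tree. This sketch again assumes the iris dataset; features never used in a split receive an importance of zero.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier

iris = load_iris()
clf = DecisionTreeClassifier(max_depth=3, random_state=0)
clf.fit(iris.data, iris.target)

# Importances are normalized impurity reductions and sum to 1.
importances = clf.feature_importances_
for name, importance in sorted(
    zip(iris.feature_names, importances), key=lambda pair: pair[1], reverse=True
):
    print(f"{name:20s} {importance:.3f}")
```

These scores are computed on the training data, so they are best treated as a rough guide rather than a definitive ranking.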

Disadvantages: Instability and High Variance

- Instability: Small variations in the data can change the chosen splits and produce a very different tree.

- Overfitting: They naturally grow complex trees that overfit noise.

Frequently Asked Questions (FAQs)

Can a decision tree algorithm handle both numerical and categorical features?

Yes, though implementations like scikit-learn require categorical variables to be encoded numerically beforehand.

Does the decision tree algorithm work for regression?

Yes, using variance reduction instead of Gini or Entropy.
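A brief regression sketch using scikit-learn's `DecisionTreeRegressor` with its default criterion. The step-shaped toy data is an assumption chosen so that a single split separates the two target levels.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Toy data: the target is 1.0 for x < 5 and 3.0 otherwise.
X = np.arange(0, 10, 0.5).reshape(-1, 1)
y = np.where(X.ravel() < 5, 1.0, 3.0)

reg = DecisionTreeRegressor(max_depth=2, random_state=0)
reg.fit(X, y)

# Each leaf predicts the mean target of the training samples it contains.
pred = reg.predict([[2.0], [8.0]])
print(pred)
```

Because predictions are leaf averages, a regression tree produces a piecewise-constant function and cannot extrapolate beyond the target range seen in training.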

How do you choose the root node?

By evaluating candidate splits on every feature and choosing the one with the highest information gain or the lowest weighted Gini impurity.

Conclusion: From Single Trees to Random Forests

The decision tree algorithm is a testament to the value of simplicity. Its structure provides a clear, understandable pathway from data to decision. While single trees suffer from instability, they are the building blocks of random forests, which average many trees to reduce that variance. Mastering these concepts gives you a solid base for building models that are both accurate and explainable.