What is Few Shot Learning?

Imagine teaching a child to recognize a zebra after showing just one picture. The child sees the stripes, the shape, the mane, and from that single example can identify zebras in future photos. Conventional deep learning struggles to do this: standard models typically require thousands or even millions of labeled examples to learn a new concept. Few shot learning addresses this gap by enabling AI to learn from just a handful of examples.

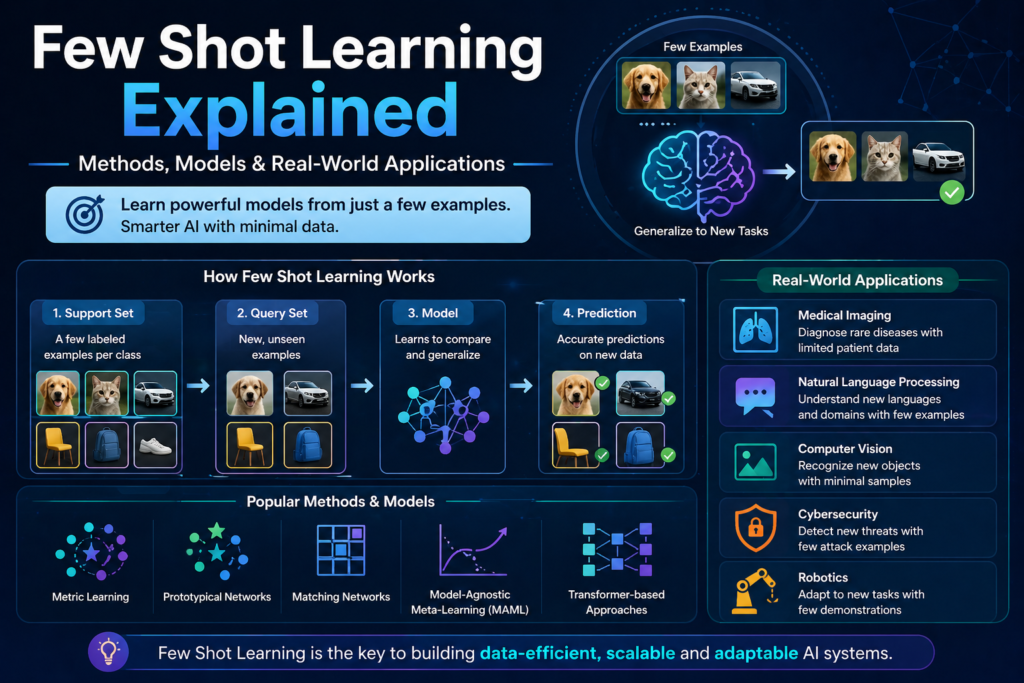

Few shot learning is a subfield of machine learning that focuses on building models that can generalize from very limited training data. The “few shot” refers to the small number of examples, typically between one and five, provided for each new class or task. This capability is incredibly valuable because real world scenarios rarely come with abundant labeled data.

The importance of few shot learning continues to grow as AI expands into new domains. Medical imaging lacks large labeled datasets because expert radiologists are expensive and time constrained. Rare species identification cannot rely on thousands of photos because the animals are seldom seen. Personalized user interfaces cannot train on millions of examples because each user is unique. Few shot learning addresses all these challenges by dramatically reducing the data requirements for building effective AI systems.

Definition and Concept

Few shot learning in machine learning refers to the ability of a model to learn new concepts from a very small number of training examples. The standard formulation involves support sets and query sets. The support set contains the few labeled examples for each new class. The query set contains unlabeled examples that the model must classify based on the support set.

The mathematical formulation of few shot learning typically involves learning a similarity function. Given a support set S containing examples of novel classes, and a query example x, the model predicts the class of x by comparing it to the support examples of each class. Writing S_k for the support examples of class k, the prediction is:

$$\text{Prediction}(x) = \arg\max_{k} \ \text{similarity}(x, S_k)$$

Here, similarity is a learned metric that captures how close two examples are in feature space; the score for a class combines the similarities between x and that class's support examples. The goal of few shot learning algorithms is to learn this similarity function from many meta training tasks, each with its own support and query sets.
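The prediction rule above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: a fixed cosine similarity stands in for the learned metric, each class is scored by its best-matching support example, and the names `cosine_similarity`, `predict`, and the toy feature vectors are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def predict(query, support):
    """Return the class whose support examples are most similar to the query.

    support maps each class label to its list of feature vectors.
    Assumption: a class is scored by its single best-matching example.
    """
    def class_score(examples):
        return max(cosine_similarity(query, e) for e in examples)
    # arg max over classes of the class score
    return max(support, key=lambda label: class_score(support[label]))

# Toy 2-way 2-shot support set (made-up feature vectors).
support = {
    "zebra": [[0.9, 0.1], [0.8, 0.3]],
    "horse": [[0.1, 0.9], [0.2, 0.8]],
}
print(predict([0.7, 0.2], support))  # zebra
```

In a real system the similarity function would be learned during meta training rather than fixed in advance.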

Why Data Scarcity Matters

Data scarcity is a serious problem in many real world applications of artificial intelligence. Traditional supervised learning assumes abundant labeled data, an assumption that rarely holds outside of tech companies with massive crowdsourcing operations.

In healthcare, privacy regulations limit data sharing. Rare diseases have few documented cases. Expert labeling requires years of medical training. Few shot learning offers a solution by enabling diagnostic models trained on just a handful of examples per condition.

In manufacturing, defect detection systems need to identify rare production errors. Collecting thousands of defective product images is impractical because defects happen infrequently. Few shot learning allows quality control systems to learn from the few examples that exist.

In natural language processing, low resource languages lack the massive text corpora needed for traditional deep learning models. Few shot learning in NLP enables language technologies for languages spoken by millions but with limited digital text.

The rise of modern machine learning has highlighted data scarcity as a key bottleneck, and few shot learning directly addresses this limitation.

How Few Shot Learning Works

The mechanics of few shot learning differ significantly from traditional machine learning. Instead of learning to classify from many examples, few shot learning learns how to learn from few examples.

Meta-Learning Approach

Meta learning is often called “learning to learn.” The core idea is to train a model on many different tasks so that it becomes good at adapting to new tasks with minimal data. Each meta training task is itself a few shot learning problem.

During meta training, the model sees many episodes. Each episode contains a support set and a query set drawn from the same classes. The model tries to classify the query examples based on the support set. The loss is computed, and the model updates its parameters to perform better on future episodes.
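As a rough sketch of how one such episode might be drawn, the function below samples a few classes and splits their examples into a support set and a query set. The function name `sample_episode`, its parameters (`n_way`, `k_shot`, `n_query`), and the toy dataset are assumptions made for illustration, not part of any particular library.

```python
import random

def sample_episode(dataset, n_way, k_shot, n_query, rng):
    """Draw one episode from a dataset mapping class label -> examples.

    Returns (support, query): lists of (example, label) pairs that share
    the same n_way classes but contain disjoint examples.
    """
    classes = rng.sample(sorted(dataset), n_way)
    support, query = [], []
    for label in classes:
        # Sample without replacement so support and query never overlap.
        examples = rng.sample(dataset[label], k_shot + n_query)
        support += [(x, label) for x in examples[:k_shot]]
        query += [(x, label) for x in examples[k_shot:]]
    return support, query

# Toy dataset: 6 classes with 10 placeholder examples each.
dataset = {f"class_{c}": [f"img_{c}_{i}" for i in range(10)] for c in range(6)}
rng = random.Random(0)
support, query = sample_episode(dataset, n_way=3, k_shot=2, n_query=4, rng=rng)
print(len(support), len(query))  # 6 12
```

During meta training, a loop would call a sampler like this repeatedly, compute the loss on the query predictions, and update the model after each episode.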

After meta training, the model can be applied to novel classes it has never seen before. It takes a support set of just a few examples for these new classes and can classify query examples accurately. This ability to generalize to entirely new classes is what makes few shot learning so powerful.

Transfer Learning Role

Transfer learning and few shot learning are closely related but distinct. Transfer learning takes a model pre-trained on a large dataset and fine-tunes it on a small target dataset. Few shot learning typically requires even less data, often just one to five examples per class.

However, transfer learning plays an important role in many few shot learning approaches. A model pre-trained on a large dataset like ImageNet learns useful feature representations. These features can then be used as a starting point for few shot learning algorithms.

The combination of transfer learning and few shot learning is particularly powerful. The pre-trained model provides general visual knowledge. The few shot learning algorithm provides the ability to rapidly adapt to new classes. Together, they achieve strong performance with minimal labeled data.

Embedding Techniques

Few shot classification methods rely heavily on learning good embeddings. An embedding is a vector representation of an input that captures its semantic meaning. Similar inputs should have similar embeddings. Different inputs should have different embeddings.

The mathematical goal is to learn an embedding function f that maps inputs to a d dimensional space such that the distance between embeddings reflects semantic similarity. The most common choices for comparing embeddings are Euclidean distance and cosine similarity.

During meta training, the embedding function is learned so that embeddings of examples from the same class are close together, while embeddings from different classes are far apart. Once the embedding function is learned, classifying a new query example involves computing its embedding and comparing it to the embeddings of support examples.
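The two comparison measures mentioned above are simple to write down. The sketch below assumes embeddings are plain lists of floats that have already been produced by f; the example vectors are made up to show that same-class embeddings should end up closer than different-class ones.

```python
import math

def euclidean_distance(a, b):
    """Straight-line distance between two embeddings (smaller = more similar)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def cosine_similarity(a, b):
    """Cosine of the angle between two embeddings (larger = more similar)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up embeddings: two from the same class, one from a different class.
same_class_a = [1.0, 0.0]
same_class_b = [0.9, 0.1]
other_class = [0.0, 1.0]

print(euclidean_distance(same_class_a, same_class_b)
      < euclidean_distance(same_class_a, other_class))  # True
print(cosine_similarity(same_class_a, other_class))  # 0.0
```

Note the opposite conventions: a good match has a small Euclidean distance but a large cosine similarity.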

Few Shot Learning Models

Several successful few shot learning algorithms have been developed, each with distinct architectures and approaches.

Siamese Networks

Siamese networks are among the earliest and most intuitive few shot learning models. The architecture consists of two identical neural networks that share weights. Each network processes one input, and the outputs are combined to produce a similarity score.

During training, Siamese networks learn to output high similarity for pairs of inputs from the same class and low similarity for pairs from different classes. The loss function is typically contrastive loss or triplet loss.
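To make the two losses concrete, here is a minimal sketch of one common variant of each, written as functions of precomputed embedding distances. The margin value of 1.0 and the function names are assumptions; published formulations differ in details such as constant factors or whether distances are squared.

```python
def contrastive_loss(distance, same_class, margin=1.0):
    """Pull same-class pairs together; push different-class pairs
    apart until they are at least `margin` away from each other."""
    if same_class:
        return distance ** 2
    return max(0.0, margin - distance) ** 2

def triplet_loss(d_anchor_positive, d_anchor_negative, margin=1.0):
    """Require the anchor to be closer to the positive (same class)
    than to the negative (different class) by at least `margin`."""
    return max(0.0, d_anchor_positive - d_anchor_negative + margin)

print(contrastive_loss(0.5, same_class=True))   # 0.25
print(contrastive_loss(2.0, same_class=False))  # 0.0
print(triplet_loss(0.2, 1.5))                   # 0.0
print(triplet_loss(1.0, 0.5))                   # 1.5
```

A loss of zero means the pair or triplet already satisfies the margin, so it contributes no gradient.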

For few shot classification, a support set of examples is provided for each new class. To classify a query example, the Siamese network compares it to each support example and assigns the class of the most similar support example.

The simplicity of Siamese networks makes them an excellent starting point for understanding few shot learning. They directly learn a similarity metric that generalizes to new classes.

Prototypical Networks

Prototypical networks take a different approach. Instead of comparing a query to each support example individually, they compute a prototype for each class by averaging the embeddings of its support examples. The prototype represents the “center” of the class in embedding space.

The prototype for class k is computed as:

$$\text{prototype}_k = \frac{1}{|S_k|} \sum_{x \in S_k} f(x)$$

where S_k is the support set for class k and f is the embedding function. To classify a query example, the model computes its embedding and finds the closest prototype using Euclidean distance:

$$\text{Prediction} = \arg\min_{k} \ \text{distance}(f(\text{query}), \text{prototype}_k)$$

Prototypical networks are elegant and effective. They have become a standard baseline for few shot learning research.
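The prototype computation and nearest-prototype rule can be sketched as follows. The example assumes the embeddings f(x) have already been computed and are given as lists of floats; the function names and toy values are hypothetical.

```python
import math

def prototype(embeddings):
    """Average a class's support embeddings, dimension by dimension."""
    n = len(embeddings)
    return [sum(dim) / n for dim in zip(*embeddings)]

def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def classify(query_embedding, support_embeddings):
    """Return the label of the prototype closest to the query embedding.

    support_embeddings maps each class label to its list of embeddings.
    """
    prototypes = {label: prototype(vs) for label, vs in support_embeddings.items()}
    # arg min over classes of the distance to the class prototype
    return min(prototypes,
               key=lambda label: euclidean_distance(query_embedding, prototypes[label]))

# Toy 2-way 2-shot support set of precomputed embeddings.
support_embeddings = {
    "cat": [[1.0, 1.0], [3.0, 1.0]],
    "dog": [[8.0, 8.0], [10.0, 8.0]],
}
print(prototype(support_embeddings["cat"]))       # [2.0, 1.0]
print(classify([3.0, 2.0], support_embeddings))   # cat
```

Because each class is reduced to a single vector, the cost of classifying a query grows with the number of classes rather than the number of support examples.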

Matching Networks

Matching networks share ideas with both Siamese networks and prototypical networks. They use an attention mechanism to compare a query example to support examples, weighting the contribution of each support example based on its similarity to the query.

The prediction for a query is a weighted sum of support labels, where the weights are computed using a softmax over similarities. This allows the model to attend to the most relevant support examples for each query.
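A simplified sketch of this attention step is shown below. It assumes precomputed embeddings and uses cosine similarity as the comparison, and it leaves out everything else a full matching network would include; the function names and toy vectors are hypothetical.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def matching_predict(query, support):
    """Return class probabilities as an attention-weighted sum of labels.

    support is a list of (embedding, label) pairs.
    """
    weights = softmax([cosine_similarity(query, emb) for emb, _ in support])
    class_probs = {}
    for weight, (_, label) in zip(weights, support):
        class_probs[label] = class_probs.get(label, 0.0) + weight
    return class_probs

support = [([1.0, 0.0], "cat"), ([0.9, 0.1], "cat"), ([0.0, 1.0], "dog")]
probs = matching_predict([1.0, 0.1], support)
print(max(probs, key=probs.get))  # cat
```

Unlike a nearest-neighbor rule, every support example contributes to the prediction, with its influence set by the attention weight.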

Matching networks also use a technique called full context embedding, where the embedding of each support example depends on other support examples. This allows the model to understand the relationships within the support set.

Applications of Few Shot Learning

Few shot learning has found applications across diverse domains where labeled data is scarce.

Image Classification

Few shot learning in computer vision is the most heavily researched application. Standard image classification requires thousands of labeled images per class. Few shot learning reduces this to one to five images for each new class.

In medical imaging, few shot learning enables diagnostic models for rare diseases where few labeled scans exist. A radiologist might provide just a few examples of a rare tumor type, and the model learns to detect it in new patients.

In wildlife conservation, few shot learning helps identify endangered species from camera trap images. Conservationists cannot label millions of images, but they can provide a handful of examples for each species.

The history and evolution of AI in healthcare shows how few shot learning is accelerating medical AI by reducing data requirements.

NLP Tasks

Few shot learning in NLP has transformed how language models handle new tasks. Large language models like GPT-3 demonstrate remarkable few shot learning abilities through in context learning. The model is given a few examples of a task in its prompt, and it generalizes to new examples without any parameter updates.
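To illustrate what "a few examples in the prompt" can look like, the sketch below assembles a few shot prompt for sentiment classification as a plain string. The prompt layout, the `Review:`/`Sentiment:` field names, and the function name are assumptions chosen for illustration; no model is called, and real systems may format prompts differently.

```python
def build_few_shot_prompt(instruction, examples, new_input):
    """Assemble an instruction, labeled examples, and one unlabeled input."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final item is left unlabeled for the model to complete.
    lines.append(f"Review: {new_input}")
    lines.append("Sentiment:")
    return "\n".join(lines)

examples = [
    ("The battery lasts all day.", "positive"),
    ("The screen cracked within a week.", "negative"),
]
prompt = build_few_shot_prompt(
    "Classify the sentiment of each review.",
    examples,
    "Setup was quick and painless.",
)
print(prompt)
```

The labeled pairs play the role of the support set and the final unlabeled review plays the role of the query, but the model's parameters stay fixed.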

Text classification benefits from few shot learning when labeled data is limited. Sentiment analysis for niche domains, intent classification for specialized chatbots, and topic labeling for small document collections can all be accomplished with just a handful of labeled examples.

Named entity recognition, the task of identifying names, dates, and locations in text, also benefits from few shot learning. A user can provide a few examples of the entities they care about, and the model learns to find similar entities in new text.

Speech Recognition

Few shot learning for speech recognition enables personalized voice interfaces. Traditional speaker adaptation can require hours of transcribed audio from each user. Few shot learning reduces this to just a few spoken phrases.

Accent adaptation is another important application. A speech recognition system trained on standard English can adapt to a new accent using just a few minutes of transcribed speech from a speaker with that accent.

Keyword spotting, the task of detecting specific wake words like “Hey Siri” or “OK Google,” can be personalized using few shot learning. A user says their chosen wake word a few times, and the system learns to recognize it.

Challenges and Limitations

Despite its power, few shot learning faces several challenges that limit its practical application.

Overfitting Issues

Overfitting is perhaps the biggest challenge in few shot learning. With only a handful of examples, models can easily memorize the training data rather than learning generalizable patterns. This is especially problematic when the support examples are not representative of the class distribution.

Regularization techniques help but cannot completely solve the problem. Dropout, weight decay, and data augmentation all reduce overfitting, but the fundamental issue remains: very little data provides very little signal.

Meta learning approaches to few shot learning are themselves prone to overfitting to the meta training tasks. If the meta training tasks are not sufficiently diverse, the model may not generalize to novel tasks.

Data Quality Problems

Few shot learning is extremely sensitive to data quality. If the few provided examples are noisy, mislabeled, or unrepresentative, the model’s performance suffers dramatically. Traditional machine learning can average out noisy examples when many are available. Few shot learning has no such luxury.

Outliers are a serious problem. If one of the few support examples is an outlier, the model’s understanding of the entire class can be distorted. Robust few shot learning methods attempt to detect and downweight outliers, but this remains an open research challenge.
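A small numeric example shows how much a single outlier can distort a class representation built by averaging. As one simple illustration of a more robust summary, the sketch compares the mean with a coordinate-wise median; the median is an assumption chosen for this example, not a method attributed to any specific few shot learning algorithm, and the embeddings are made up.

```python
import statistics

def mean_prototype(embeddings):
    """Class summary by averaging each dimension (sensitive to outliers)."""
    return [statistics.mean(dim) for dim in zip(*embeddings)]

def median_prototype(embeddings):
    """Class summary by the per-dimension median (less sensitive to outliers)."""
    return [statistics.median(dim) for dim in zip(*embeddings)]

# Four well-behaved support embeddings plus one outlier.
clean = [[1.0, 1.0], [1.2, 0.8], [0.8, 1.2], [1.0, 1.0]]
with_outlier = clean + [[9.0, 9.0]]

print(mean_prototype(with_outlier))    # pulled toward the outlier, roughly [2.6, 2.6]
print(median_prototype(with_outlier))  # [1.0, 1.0]
```

With only five support examples, one bad example moves the mean far from the rest of the class, which is why the choice of support examples matters so much.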

Class imbalance is another issue. Some classes may have more support examples than others, or some classes may be inherently more variable. Handling these imbalances requires careful algorithm design.

Frequently Asked Questions

1. What is few shot learning in simple terms?

Few shot learning is a method that allows AI models to learn new concepts from just one to five examples, similar to how humans can recognize new objects after seeing them once.

2. What is the difference between few shot learning and zero shot learning?

Zero shot learning requires no examples, using semantic descriptions instead. Few shot learning requires one to five examples per new class.

3. What are some few shot learning examples?

Recognizing a new bird species from one photo, adapting a speech recognizer to a new accent from a few phrases, or classifying a new document type from a handful of examples.

4. What are Siamese networks in few shot learning?

Siamese networks are twin neural networks that learn to compare inputs and output similarity scores, enabling classification by comparing query examples to support examples.

5. Can few shot learning work with one example per class?

Yes, one shot learning is a subset of few shot learning where exactly one example is provided per new class.

6. How does few shot learning differ from transfer learning?

Transfer learning fine tunes a pre-trained model on a small dataset. Few shot learning typically requires even less data and often uses meta learning to learn how to learn from few examples.

Conclusion

Few shot learning is one of the more significant advances in modern artificial intelligence. By enabling models to learn from just a handful of examples, it addresses a fundamental limitation of traditional machine learning: the need for large amounts of labeled data. From medical imaging to personalized voice interfaces, few shot learning is unlocking AI applications that were previously impractical.

The journey of few shot learning from research curiosity to practical tools mirrors the broader evolution of AI toward more efficient, human like learning. The evolution of machine learning algorithms shows a steady push to reduce the data needed to build effective models. Few shot learning takes this trend close to its limit: learning from only a handful of examples.

For practitioners, few shot learning offers a practical path to building models in data scarce domains. The modern artificial intelligence applications we see emerging, from rare disease diagnosis to low resource language translation, increasingly rely on few shot learning techniques.

To explore complementary approaches for learning with limited data, review the history of AI agents and how autonomous systems adapt to new environments. Additionally, the self supervised learning in artificial intelligence resource provides insight into learning from unlabeled data, which pairs naturally with few shot learning for labeled examples.

Whether you are a researcher pushing the boundaries of few shot learning algorithms or a practitioner building real world systems, mastering few shot learning is essential for the data constrained future of artificial intelligence.