Introduction

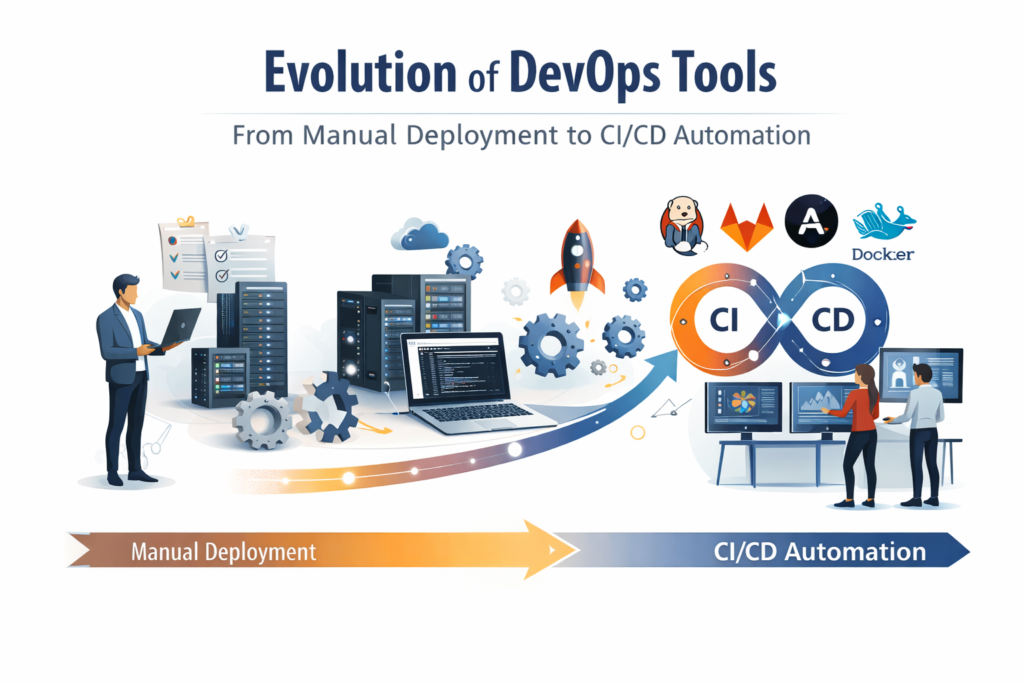

The software development landscape has changed dramatically over the past two decades. At the heart of this change lies the evolution of DevOps tools, a journey that has redefined how teams build, test, and deploy applications. Work that once took days of error-prone manual effort can now be completed in minutes through automated pipelines. This shift from traditional deployment methods to modern CI/CD automation is one of the most significant advances in software engineering. Understanding this journey helps developers appreciate the tools that make continuous delivery possible today.

The Dark Ages Before Automation (2000 – 2005)

Before DevOps tooling gained momentum, software deployment was often a nightmare. System administrators manually copied files to production servers. They ran shell scripts that often broke. Teams used spreadsheets to track deployment steps. A single deployment could take six to eight hours. If something failed, the entire team scrambled to fix it without proper rollback mechanisms. Continuous deployment was not yet an established practice. Developers threw code over the wall to operations, who then struggled to make it run. This painful process created friction, delayed releases, and frustrated everyone involved.

During this era, many companies released software once every few months. The development lifecycle lacked integration between development and operations teams. Deployments on Friday afternoons became infamous for ruining weekends. Without build automation, every step required human intervention. The software delivery pipeline was essentially a checklist on a whiteboard. This manual approach could not scale as applications grew more complex. Something had to change.

The First Spark: Version Control Revolution (2005)

The evolution of DevOps tools began with version control systems. Centralized systems such as Subversion (SVN) were already popular by 2005, but the real game changer arrived that year with Git. Linus Torvalds created Git for Linux kernel development. Unlike centralized systems, Git offered distributed version control. Every developer had a complete history on their local machine. Branching and merging became routine operations rather than painful ordeals. Developers could experiment freely without breaking the main codebase.

Git did not just track changes. It enabled collaboration at an unprecedented scale. Teams could work on features simultaneously. Code reviews became practical. The ability to revert changes quickly reduced the fear of breaking things. This foundation proved essential for everything that followed. Without reliable version control, automated testing and deployment pipelines would have been impractical. Git remains the de facto standard for source code management in modern DevOps toolchains.

The Rise of Continuous Integration (2006 – 2009)

Continuous integration emerged as the next critical milestone. Developers realized that integrating code daily prevented the nightmare of “merge hell”. Hudson entered the scene in the mid-2000s, and in 2011 the project forked into Jenkins. Jenkins provided an open source automation server that could build and test code automatically whenever someone committed changes. This was a major leap forward in the evolution of DevOps tools.

With Jenkins, teams configured build jobs that ran automated unit tests every time code changed. If tests failed, the team received immediate notifications. No more waiting until release day to discover broken functionality. Jenkins plugins extended functionality to support almost any programming language or tool. Agile methodologies found a natural companion in Jenkins. Short development cycles paired with immediate feedback loops made rapid iteration possible. Jenkins became the workhorse of CI/CD for years and is still widely used today.

Configuration Management Takes Hold (2008 – 2012)

As infrastructure grew more complex, manually configuring servers became unsustainable. The story of Puppet and Chef begins in this period. Puppet, released in 2005 but gaining traction by 2008, used a declarative language to define server states. Chef, launched in 2009, took a more procedural approach using Ruby. Both tools introduced configuration management to mainstream IT operations. Administrators could write code that described exactly how servers should look. Running that code on any machine was meant to produce the same configuration, no matter how many times it ran.
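
The declarative idea can be illustrated with a toy example. The sketch below is not how Puppet or Chef work internally: the `converge` function, the package names, and the versions are all hypothetical, and a plain dictionary stands in for a real server. It only aims to show the key property, namely that applying the same desired state a second time changes nothing.

```python
def converge(current: dict[str, str], desired: dict[str, str]) -> list[str]:
    """Bring `current` in line with `desired` and return the actions taken."""
    actions = []
    # Install or upgrade anything that differs from the desired version.
    for name, version in sorted(desired.items()):
        if current.get(name) != version:
            actions.append(f"install {name}={version}")
            current[name] = version
    # Remove anything the desired state does not mention.
    for name in sorted(set(current) - set(desired)):
        actions.append(f"remove {name}")
        del current[name]
    return actions


# Hypothetical desired state and a server that has drifted from it.
desired = {"nginx": "1.24", "openssl": "3.0"}
server = {"nginx": "1.18", "telnet": "0.17"}

first_run = converge(server, desired)   # three corrective actions
second_run = converge(server, desired)  # nothing left to change
```

After the first run the server matches the description, so the second run returns an empty list. That idempotence is what makes it safe to run the same configuration code repeatedly across many machines.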

This shift from “ClickOps” to code-driven infrastructure marked a major chapter in the evolution of DevOps tools. Teams relied less on golden images and manual setup instructions. They stored server configurations in Git alongside application code. Infrastructure as Code (IaC) became a real practice, though the term would gain popularity later. Puppet and Chef reduced environment inconsistencies dramatically. Development, testing, and production servers finally resembled each other. The dreaded “it works on my machine” excuse began losing its power.

The Container Revolution (2013)

The year 2013 changed everything. Docker launched its container platform in March 2013. Containers were not entirely new (Linux containers existed before), but Docker made them accessible and developer friendly. Docker containers packaged applications with all their dependencies into lightweight, portable units. A container ran the same way on a laptop, a test server, or a production cloud instance. This greatly reduced the environment drift problem.

The evolution of DevOps tools accelerated dramatically with Docker. Developers spent far less time setting up local environments. Operations teams spent far less time debugging missing library errors. Containers typically started in seconds or less, unlike virtual machines that could take minutes. The ability to version container images enabled reproducible deployments. Docker images stored in registries like Docker Hub became the standard artifact for application delivery. Microservices architecture became practical because containers provided isolation without excessive overhead. Each service could run in its own container with its own dependencies.

Orchestration Masters the Chaos (2014 – 2015)

Running a few containers worked well. Running hundreds or thousands of containers across multiple servers required orchestration. Kubernetes emerged from Google in 2014, built on years of internal experience with the Borg and Omega systems. Google later donated Kubernetes to the Cloud Native Computing Foundation (CNCF). Kubernetes provided automated container scheduling, scaling, and management. It handled failed containers, load balancing, and rolling updates.

The combination of Docker and Kubernetes represented a peak moment in the evolution of DevOps tools. Teams could deploy microservices across clusters of machines with declarative configuration files. Kubernetes managed much of the complexity automatically. If a node failed, Kubernetes rescheduled containers elsewhere. If traffic spiked or dropped, Kubernetes could scale replicas up or down. Rolling updates deployed new versions without downtime. Kubernetes became the operating system of the cloud native world. Today, many large organizations run production workloads on Kubernetes.
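
The behavior described above follows from a control loop that keeps comparing desired state with observed state. The following is a deliberately simplified sketch of that idea, not Kubernetes code: the `Cluster` class, the `reconcile` function, the `web-N` pod names, and the least-loaded placement rule are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    healthy_nodes: set[str]
    # Maps a pod name to the node it runs on (hypothetical model).
    pods: dict[str, str] = field(default_factory=dict)
    next_id: int = 0


def reconcile(cluster: Cluster, desired_replicas: int) -> None:
    """One pass of a control loop: make observed state match desired state."""
    if not cluster.healthy_nodes:
        raise RuntimeError("no healthy nodes to schedule on")
    # Forget pods whose node is no longer healthy.
    cluster.pods = {p: n for p, n in cluster.pods.items()
                    if n in cluster.healthy_nodes}
    # Scale up: place each new pod on the least loaded healthy node.
    while len(cluster.pods) < desired_replicas:
        load = {n: 0 for n in cluster.healthy_nodes}
        for node in cluster.pods.values():
            load[node] += 1
        target = min(sorted(load), key=load.get)
        cluster.pods[f"web-{cluster.next_id}"] = target
        cluster.next_id += 1
    # Scale down: remove the most recently created pods first.
    while len(cluster.pods) > desired_replicas:
        cluster.pods.popitem()


cluster = Cluster(healthy_nodes={"node-a", "node-b"})
reconcile(cluster, 4)
before_failure = dict(cluster.pods)   # two pods on each node

cluster.healthy_nodes.discard("node-b")  # simulate a node failure
reconcile(cluster, 4)
after_failure = dict(cluster.pods)    # four pods, all on node-a

reconcile(cluster, 2)                 # traffic dropped, scale down
after_scale_down = dict(cluster.pods)
```

The same function handles node failure, scale-up, and scale-down because it never reasons about what happened, only about the gap between what is wanted and what exists.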

Infrastructure as Code Matures (2014 – 2016)

While Puppet and Chef focused on configuration management, Infrastructure as Code (IaC) evolved to include provisioning. Ansible versus Terraform became a common debate starting around 2014. Ansible, later acquired by Red Hat, used simple YAML playbooks for automation. It required no agents on target machines, using SSH instead. Terraform, released by HashiCorp in 2014, specialized in provisioning infrastructure across cloud providers. Terraform could create AWS EC2 instances, Google Cloud storage buckets, and Azure databases using declarative configuration files.

DevOps teams embraced both tools for different purposes. They used Terraform to provision cloud resources and Ansible to configure those resources after creation. The immutable infrastructure pattern, in which servers are never changed after deployment, gained popularity. Instead of modifying servers in place, teams replaced them entirely with new versions. This approach fit naturally with containers and cloud environments. Infrastructure as Code brought the same benefits to operations that version control brought to code: repeatability, auditability, and automation.

The CI/CD Pipeline Takes Shape (2015 – 2018)

Continuous integration evolved into continuous delivery and continuous deployment. CI/CD pipelines automated the entire journey from code commit to production deployment. GitHub Actions, announced in 2018, brought CI/CD directly into the GitHub ecosystem. Developers could define workflows in YAML files stored alongside their code. Every push could trigger automated builds, tests, and deployments. GitHub Actions integrated closely with GitHub repositories, reducing the need for separate CI servers.

DevOps tooling reached a new level of accessibility. Small teams could implement sophisticated pipelines without managing Jenkins servers. The software delivery pipeline became a first-class citizen in development platforms. Build tools like Maven, Gradle, and Webpack integrated naturally into these pipelines. Shift-left testing meant running tests earlier in the pipeline, catching defects before they reached production. Deployment frequency increased from monthly to daily or even hourly for many organizations.
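
The fail-fast behavior of such a pipeline can be sketched in a few lines. This is a minimal illustration rather than the way any particular CI system is implemented; the `run_pipeline` function and the stage names are hypothetical, and each stage is reduced to a function that returns `True` on success.

```python
from typing import Callable

# A stage is a name plus a step that reports success or failure.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> list[str]:
    """Run stages in order and stop at the first failure."""
    log = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'passed' if ok else 'failed'}")
        if not ok:
            break  # later stages, including deploy, never run
    return log


# Hypothetical pipeline in which the unit tests catch a defect.
stages: list[Stage] = [
    ("build", lambda: True),
    ("unit tests", lambda: False),
    ("deploy", lambda: True),
]
log = run_pipeline(stages)
```

Because the tests sit before the deploy stage, the defect stops the run and the deploy step is never reached. Moving checks earlier in the list is the essence of shifting left.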

Monitoring and Observability (2012 – 2016)

Automated deployment pipelines required automated monitoring. Prometheus entered the scene around 2012, when it was started at SoundCloud as an open source project. It introduced a multidimensional data model, in which each time series is identified by a metric name and a set of key-value labels, and a query language called PromQL. It scraped metrics from instrumented services and stored them as time series data. Combined with Grafana for visualization, Prometheus became a standard monitoring stack for cloud native environments.
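
To make the label-based data model concrete, here is a toy in-memory version. It is only a sketch: the `select` function is hypothetical, the metric name and values are invented, and real PromQL supports far more than exact label matching. The one detail taken from Prometheus itself is that the metric name is stored as a label called `__name__`.

```python
# Each sample is a set of labels plus a value (hypothetical data).
Sample = tuple[dict[str, str], float]

samples: list[Sample] = [
    ({"__name__": "http_requests_total", "method": "GET", "status": "200"}, 1027.0),
    ({"__name__": "http_requests_total", "method": "GET", "status": "500"}, 3.0),
    ({"__name__": "http_requests_total", "method": "POST", "status": "200"}, 214.0),
]


def select(data: list[Sample], matchers: dict[str, str]) -> list[Sample]:
    """Return the samples whose labels equal every given matcher."""
    return [
        (labels, value)
        for labels, value in data
        if all(labels.get(key) == wanted for key, wanted in matchers.items())
    ]


# Roughly what the selector http_requests_total{method="GET"} expresses.
get_series = select(samples, {"__name__": "http_requests_total", "method": "GET"})
get_total = sum(value for _, value in get_series)

# Slice the same metric along a different dimension.
server_errors = select(samples, {"status": "500"})
```

The point of the model is that one metric can be sliced along any label without defining a new metric for each combination.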

DevOps practice came to include observability as a core discipline. The three pillars of observability (metrics, logs, and traces) enabled teams to understand system behavior without guessing. Tools like the ELK Stack (Elasticsearch, Logstash, Kibana) aggregated logs. Jaeger and Zipkin provided distributed tracing. Alerts configured in Prometheus notified teams of problems, ideally before customers noticed. Monitoring transformed from reactive firefighting to proactive optimization. Deployment frequency could increase safely because monitoring provided rapid feedback on production health.

Microservices and Toolchain Integration (2016 – 2019)

Microservices architecture gained widespread adoption during this period. Breaking monoliths into small, independently deployable services increased complexity but also increased agility. Toolchain integration became essential. Development teams needed to stitch together version control, CI/CD, container registries, orchestration, monitoring, and logging. The ecosystem produced dozens of specialized tools. The CNCF landscape diagram grew to hundreds of projects.

Service meshes like Istio and Linkerd added another layer to the DevOps toolchain. These tools handled service-to-service communication, retries, timeouts, and security without changing application code. API gateways like Kong and Traefik managed external traffic. Secret management tools like HashiCorp Vault secured credentials. The ecosystem matured rapidly, offering solutions for most common needs. However, complexity became a real challenge. Teams struggled to choose the right combination of tools.

GitOps and Declarative Everything (2017 – Present)

GitOps emerged as an evolution of Infrastructure as Code. Weaveworks popularized GitOps in 2017. The core idea: use Git as the single source of truth for both application code and infrastructure configuration. Changes happen through pull requests. Tools like Argo CD and Flux continuously synchronize live environments with Git repositories. If someone manually changes a production setting, the GitOps tool can revert it automatically.

DevOps tooling reached a point where the entire software delivery process lived in Git. Developers changed code, opened pull requests, ran CI pipelines, and merged changes. The GitOps operator applied those changes to production automatically. This approach improved security, auditability, and reliability. Every change had a corresponding commit. Rollbacks meant reverting a commit. The path from manual deployment checklists to GitOps shows how far automation has come.
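
A toy model can make the "Git as source of truth" idea concrete. The sketch below is not how Argo CD or Flux are implemented: a Python list stands in for the Git history, a dictionary stands in for the live environment, and `apply_head`, `revert_last`, and the image tags are hypothetical names chosen for illustration.

```python
# Hypothetical commit history: each entry is the full declared state.
history = [
    {"image": "shop:1.4.1", "replicas": 3},
    {"image": "shop:1.4.2", "replicas": 3},
]
live: dict = {}


def apply_head(history: list[dict], live: dict) -> None:
    """Make the live environment match the latest commit, discarding manual edits."""
    live.clear()
    live.update(history[-1])


def revert_last(history: list[dict]) -> None:
    """Roll back by adding a new commit that restores the previous state."""
    history.append(dict(history[-2]))


apply_head(history, live)
live["replicas"] = 10        # someone edits production by hand
apply_head(history, live)    # the next sync undoes the drift
replicas_after_sync = live["replicas"]

revert_last(history)         # rollback is just another commit
apply_head(history, live)
image_after_rollback = live["image"]
```

Note that the rollback grows the history instead of rewriting it, so the audit trail records both the bad release and the decision to undo it.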

The Future of DevOps Tools

Current trends in DevOps tooling point toward platform engineering. Internal developer platforms abstract away infrastructure complexity. Developers interact with self-service portals rather than raw Kubernetes or cloud APIs. Backstage, originally developed at Spotify and donated to the CNCF, provides a framework for building these platforms. Another trend is AI-powered operations. Tools use machine learning to detect anomalies, predict failures, and suggest remediation steps. AI is beginning to affect how we operate systems.

Serverless computing reduces operational burden further. AWS Lambda, Google Cloud Functions, and Azure Functions run code without requiring teams to manage servers. The history of cloud computing shows a progression from physical servers to virtual machines to containers to serverless. Each layer adds abstraction and reduces toil. DevOps tools will likely continue toward more automation, more intelligence, and less manual intervention. The goal remains the same: deliver software faster, more safely, and more reliably.

Frequently Asked Questions (FAQs)

Q1: What is the difference between Continuous Integration, Continuous Delivery, and Continuous Deployment?

Continuous Integration (CI) means automatically building and testing every code change. Continuous Delivery (CD) means keeping code in a deployable state but requiring manual approval for production releases. Continuous Deployment (CD) means automatically deploying every change that passes tests directly to production without human intervention. Most organizations start with CI, add Continuous Delivery, and eventually adopt Continuous Deployment for low risk services.
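
The three practices differ mainly in where the automation stops. The sketch below encodes that distinction as a small decision function; the `Mode` enum, the `outcome` function, and the result strings are hypothetical and exist only to mirror the definitions above.

```python
from enum import Enum


class Mode(Enum):
    CONTINUOUS_INTEGRATION = "continuous integration"
    CONTINUOUS_DELIVERY = "continuous delivery"
    CONTINUOUS_DEPLOYMENT = "continuous deployment"


def outcome(mode: Mode, tests_pass: bool, approved: bool = False) -> str:
    """Describe what happens to one change under the given practice."""
    if not tests_pass:
        return "rejected"  # every practice stops a change that fails its tests
    if mode is Mode.CONTINUOUS_INTEGRATION:
        return "built and tested"
    if mode is Mode.CONTINUOUS_DELIVERY:
        return "released" if approved else "ready, awaiting approval"
    return "released"  # continuous deployment needs no manual approval


# The same passing change, without manual approval, under each practice.
results = {mode.value: outcome(mode, tests_pass=True) for mode in Mode}
```

Under these assumptions, the only practice in which a passing but unapproved change reaches production is continuous deployment.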

Q2: Which DevOps tool should I learn first?

Start with version control (Git) because everything else depends on it. After Git, learn Jenkins or GitHub Actions for CI/CD automation. Then add Docker containers to understand packaging and isolation. Finally, learn Kubernetes for orchestration if you work with microservices. This learning path follows the historical evolution of DevOps tools and builds foundational knowledge progressively.

Q3: How do I choose between Ansible and Terraform?

Use Terraform for provisioning cloud infrastructure like networks, load balancers, and virtual machines. Use Ansible for configuration management tasks like installing software, managing users, and deploying application code. Many teams use both tools together. Terraform provisions the server, then Ansible configures it. For container-based environments, you may need less configuration management because container images embed much of the configuration.

Q4: What is the role of monitoring in CI/CD pipelines?

Monitoring validates that deployments actually work in production. Even with thorough automated unit tests and staging environments, production surprises happen. Monitoring tools such as Prometheus track metrics like error rates, latency, and resource usage. Teams use this data to verify successful deployments and detect problems early. Modern pipelines can automatically roll back deployments when monitoring detects abnormal conditions.
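
An automated rollback decision can be as simple as comparing the new version's error rate with a baseline. The function below is a minimal sketch under stated assumptions: the name `should_roll_back`, the tolerance factor of 2, and the minimum of 100 requests are hypothetical defaults, not values taken from any specific tool.

```python
def should_roll_back(errors: int, requests: int, baseline_rate: float,
                     tolerance: float = 2.0, min_requests: int = 100) -> bool:
    """Roll back when the error rate exceeds `tolerance` times the baseline."""
    if requests < min_requests:
        return False  # too little traffic to judge the new version
    return errors / requests > baseline_rate * tolerance


# Hypothetical baseline: 0.5% of requests failed before the deployment.
baseline = 0.005

bad_release = should_roll_back(errors=12, requests=1000, baseline_rate=baseline)
good_release = should_roll_back(errors=6, requests=1000, baseline_rate=baseline)
too_early = should_roll_back(errors=5, requests=20, baseline_rate=baseline)
```

The minimum request count guards against rolling back on noise right after a deployment, when a handful of failures can look like a large error rate.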

Q5: Is Jenkins still relevant in 2026?

Yes, Jenkins remains widely used despite newer alternatives. Jenkins has a large plugin ecosystem and works with almost any technology. However, many teams prefer GitHub Actions or GitLab CI for new projects because these platforms offer tighter integration with version control and simpler maintenance. The DevOps tool landscape offers multiple valid options. Choose based on your team’s existing expertise and specific requirements.

Q6: How does microservices architecture affect DevOps tool choices?

Microservices architecture increases the number of deployable components. This amplifies the need for automation. You need robust CI/CD pipelines for each service. Container orchestration with Kubernetes becomes almost mandatory. Service meshes help manage communication. Distributed tracing tools supplement simple logging. In short, microservices require more sophisticated DevOps tooling than monolithic applications.

Q7: What are the biggest challenges in implementing DevOps tools?

Cultural resistance often exceeds technical challenges. Teams accustomed to manual processes may resist automation. A lack of Agile practices undermines DevOps benefits. Tool sprawl creates complexity without value. Security integration often lags behind. Start with small wins, automate one pain point at a time, and demonstrate value before expanding. The technical aspects of DevOps tooling are well understood, but people and process changes require patience.

Conclusion

The journey from manual deployment scripts to modern CI/CD pipelines represents one of the most significant transformations in technology. The evolution of DevOps tools has delivered major improvements in deployment frequency, failure recovery time, and developer productivity. Tools like Git, Jenkins, Docker, Kubernetes, Terraform, Prometheus, and GitHub Actions have each contributed essential capabilities. Organizations that embrace these tools and practices are well placed to outperform competitors who rely on outdated methods. The future promises even more automation and intelligence, making software delivery faster and safer than ever before.