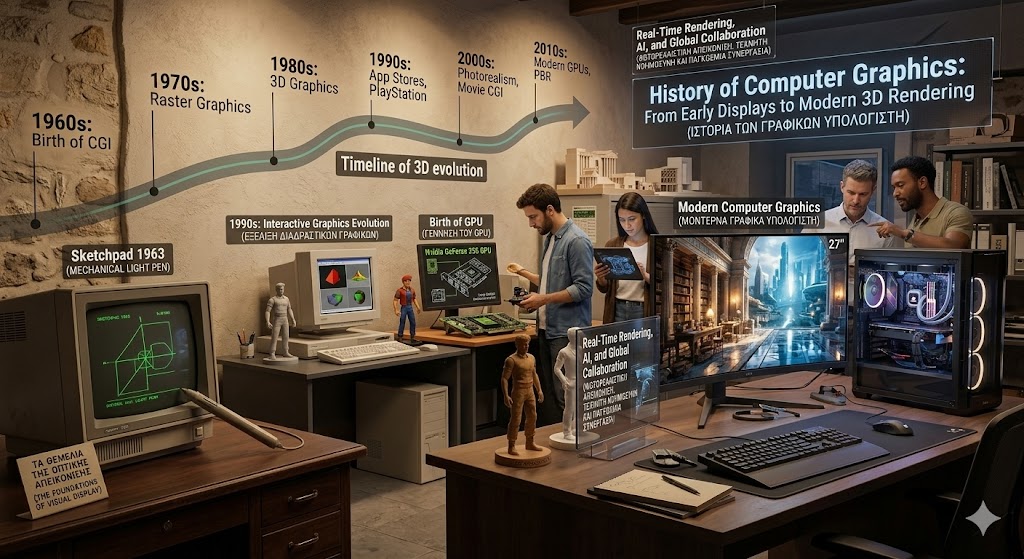

The history of computer graphics is a journey from flickering dots on a cathode-ray tube to the near-photorealistic digital worlds of today. It is a story of how we taught machines not just to calculate numbers, but to “see” and “draw.” Much like the history of computer hardware, which provided the raw processing power for these visual leaps, the evolution of computer graphics has redefined how humans interact with data, entertainment, and each other. Today, we don’t just use computers; we inhabit the visual spaces they create.

The Birth of Computer Graphics – 1960s

The 1960s marked the era of “vector graphics,” in which images were composed of lines drawn between points. The most significant milestone of the decade was Sketchpad, created by Ivan Sutherland in 1963. It is widely regarded as the first program that let a user draw and manipulate images directly on a display, using a light pen. Sketchpad anticipated the Graphical User Interface (GUI) long before operating systems adopted the windows and icons we use today.

During this period, graphics were incredibly expensive and reserved for government research or elite universities. However, these early experiments proved that computers could be more than just giant calculators; they could be canvases for human creativity.

The Rise of Raster Graphics – 1970s

In the 1970s, the technology shifted from drawing lines to filling a grid of pixels, an approach known as raster graphics. This change was revolutionary because it allowed for the display of solid shapes and varying colors. This was also the decade that gave birth to the video game industry with titles like Pong.

As memory became cheaper, computers could store “frame buffers,” blocks of memory that hold the color of every pixel on the screen. This period was essential for the history of computers, as it turned the display from a simple output device into a dynamic, colorful medium. It was during this time that researchers at places like the University of Utah began developing algorithms for shading and hidden-surface removal, which are still used in 3D graphics today.
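To make the frame buffer idea concrete, here is a minimal sketch in Python. It is an illustration only, not how any historical system was built: the 8x4 resolution, the 1-bit colors, and the helper names (`make_framebuffer`, `set_pixel`, `fill_rect`, `show`) are all assumptions chosen for readability.

```python
# A tiny frame buffer: one stored color value per pixel on the screen.
WIDTH, HEIGHT = 8, 4          # hypothetical, very small display
BLACK, WHITE = 0, 1           # hypothetical 1-bit color values


def make_framebuffer(width, height, fill=BLACK):
    """Return a height x width grid with every pixel set to `fill`."""
    return [[fill for _ in range(width)] for _ in range(height)]


def set_pixel(fb, x, y, color):
    """Write one pixel, ignoring coordinates that fall off-screen."""
    if 0 <= y < len(fb) and 0 <= x < len(fb[0]):
        fb[y][x] = color


def fill_rect(fb, x0, y0, x1, y1, color):
    """Fill a solid rectangle covering columns x0..x1-1 and rows y0..y1-1."""
    for y in range(y0, y1):
        for x in range(x0, x1):
            set_pixel(fb, x, y, color)


def show(fb):
    """Render the buffer as text, one character per pixel."""
    return "\n".join("".join("#" if p else "." for p in row) for row in fb)


fb = make_framebuffer(WIDTH, HEIGHT)
fill_rect(fb, 2, 1, 6, 3, WHITE)   # a solid shape, which line-drawing displays could not fill
print(show(fb))
```

Because the buffer persists between writes, the display hardware can redraw the screen from memory on every refresh without the program having to redraw each shape.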

3D Graphics and Graphics Hardware – 1980s

The 1980s were the decade in which 3D graphics truly accelerated. We saw the transition from flat, 2D shapes into wireframe 3D models. This era brought “rendering,” the process of turning a 3D mathematical model into a 2D image, into much wider use. To handle these intense calculations, specialized graphics hardware began to emerge, moving the burden away from the central processor.
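The core of turning a 3D model into a 2D image can be sketched with a simple perspective projection. The example below is a minimal illustration under stated assumptions: a pinhole camera at the origin looking down the positive z axis, a hypothetical `project` helper, and a cube placed 3 units in front of the camera. Real renderers add clipping, viewport mapping, and hidden-surface removal.

```python
def project(point, focal_length=1.0):
    """Perspective-project a 3D point (x, y, z) onto the image plane.

    Points farther away (larger z) land closer to the image center,
    which is what produces the sense of depth.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    return (focal_length * x / z, focal_length * y / z)


# Eight corners of a cube with side 2, centered 3 units in front of the camera.
cube = [(x, y, z + 3.0) for x in (-1, 1) for y in (-1, 1) for z in (-1, 1)]

# Two corners share an edge when they differ in exactly one coordinate.
edges = [
    (i, j)
    for i in range(len(cube))
    for j in range(i + 1, len(cube))
    if sum(a != b for a, b in zip(cube[i], cube[j])) == 1
]

# A wireframe "render": each 3D edge becomes a 2D line segment.
segments_2d = [(project(cube[i]), project(cube[j])) for i, j in edges]

for start, end in segments_2d:
    print(start, "->", end)
```

Drawing the resulting 2D segments gives a wireframe image of the cube, with the far face appearing smaller than the near face.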

This decade also saw the first major instances of computer graphics in movies, such as the light cycle sequence in Tron (1982). Companies like Silicon Graphics (SGI) began building workstations specifically designed for visual effects, paving the way for the professional digital artist.

Interactive Graphics and Multimedia – 1990s

The 1990s brought computer graphics to the masses. With the release of the Sony PlayStation and the rise of PC gaming, interactive graphics advanced at a rapid pace. The decade closed with the arrival of the “GPU” (Graphics Processing Unit): Nvidia released the GeForce 256 in 1999, marketing it as the world’s first GPU.

Multimedia became the buzzword of the era. Computers were no longer just for word processing; they were for watching videos, playing immersive 3D games like Quake, and exploring the early web. Open standards also played a role here: OpenGL, an openly specified graphics API, allowed developers to write graphics code that could run on many different types of hardware.

High-Quality Rendering and Realistic Visuals – 2000s

By the 2000s, the goal of computer graphics had shifted toward “photorealism.” This era was defined by complex lighting models, such as Global Illumination, which accounts for light bouncing between surfaces, and Subsurface Scattering, which makes digital skin look real by simulating how light travels through it.

Computer graphics in movies reached a tipping point with films like Avatar (2009), which used motion capture and advanced rendering to create an entire alien world. On the software side, automation practices of the kind later associated with DevOps began to influence how large-scale render farms managed the massive amounts of data required to “crunch” these high-fidelity frames, helping thousands of servers work together.

Modern Computer Graphics and GPUs – 2010s

The 2010s saw the rise of GPU rendering as the standard for both professional and consumer applications. GPUs were no longer just for games; they were being used for scientific visualization, medical imaging, and cryptocurrency mining.

3D graphics in this decade were dominated by the move toward 4K resolution and Virtual Reality (VR). We saw the maturation of “Physically Based Rendering” (PBR), which models digital materials such as metal, glass, and wood so that they respond to light in a consistent, physically plausible way. This was also the era in which mobile graphics narrowed the gap, bringing near-console-quality visuals to our pockets.

Real-Time Rendering, AI, and Global Collaboration – 2020s

As we navigate the 2020s, the focus has shifted to real-time rendering and global collaboration. For interactive applications, effects that once took hours per frame to compute can now be approximated on the fly: hardware-accelerated ray tracing simulates the path of light in real time, producing accurate reflections and shadows at interactive frame rates.
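At the heart of ray tracing is a geometric test: does a ray of light hit an object, and if so, where? The sketch below shows that test for a single sphere. It is a simplified illustration, not how GPU ray-tracing hardware is implemented; the function name `ray_sphere_hit` and the scene values are assumptions made for the example.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance along the ray to the nearest hit, or None on a miss.

    Solves |origin + t * direction - center|^2 = radius^2 for t,
    which is a quadratic equation a*t^2 + b*t + c = 0.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    a = sum(d * d for d in direction)
    b = 2 * sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius

    discriminant = b * b - 4 * a * c
    if discriminant < 0:
        return None                      # the ray misses the sphere

    root = math.sqrt(discriminant)
    for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)):
        if t > 0:                        # ignore hits behind the ray origin
            return t
    return None


camera = (0.0, 0.0, 0.0)
sphere_center, sphere_radius = (0.0, 0.0, 5.0), 1.0

print(ray_sphere_hit(camera, (0.0, 0.0, 1.0), sphere_center, sphere_radius))
print(ray_sphere_hit(camera, (0.0, 1.0, 0.0), sphere_center, sphere_radius))
```

A full ray tracer repeats this kind of test for every pixel and every object, then traces further rays from each hit point toward lights and reflective surfaces. That volume of work is why dedicated hardware was needed to do it in real time.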

AI-driven graphics are currently the biggest trend. AI upscaling (such as Nvidia’s DLSS) allows computers to render at a lower resolution and use deep learning to “fill in” the missing pixels, delivering higher performance with little visible loss of quality. Furthermore, generative AI can now create textures and 3D models from simple text prompts, fundamentally changing the workflow of digital artists.

Frequently Asked Questions (FAQs)

1. What is the difference between Raster and Vector graphics?

Vector graphics use mathematical paths (lines and curves) and can be scaled infinitely without losing quality. Raster graphics use a grid of pixels; if you zoom in too far, the image becomes “pixelated.”
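The difference can be shown with a short Python sketch. The helper names (`rasterize_line`, `upscale_nearest`) and the coordinate convention (a line described in a 0-to-1 unit square) are assumptions made for this illustration. A vector line is re-sampled cleanly at any resolution, while enlarging an already rasterized image only makes its pixels bigger.

```python
def rasterize_line(p0, p1, width, height):
    """Sample a vector line (endpoints in 0..1 coordinates) onto a pixel grid."""
    grid = [["." for _ in range(width)] for _ in range(height)]
    (x0, y0), (x1, y1) = p0, p1
    steps = 2 * max(width, height)       # enough samples to leave no gaps
    for i in range(steps + 1):
        t = i / steps
        x = x0 + t * (x1 - x0)
        y = y0 + t * (y1 - y0)
        px = min(int(x * width), width - 1)
        py = min(int(y * height), height - 1)
        grid[py][px] = "#"
    return ["".join(row) for row in grid]


def upscale_nearest(rows, factor):
    """Enlarge a raster image by repeating each pixel `factor` times."""
    out = []
    for row in rows:
        wide = "".join(ch * factor for ch in row)
        out.extend([wide] * factor)
    return out


line = ((0.0, 0.0), (1.0, 1.0))          # the "vector" description: two points

small = rasterize_line(*line, 4, 4)      # raster image at low resolution
large = rasterize_line(*line, 8, 8)      # same vector, re-drawn at 8x8
blown_up = upscale_nearest(small, 2)     # low-res raster stretched to 8x8

print("\n".join(large))
print()
print("\n".join(blown_up))
```

The re-drawn 8x8 line stays one pixel thick, while the stretched 4x4 image turns into a staircase of 2x2 blocks, which is the “pixelated” look described above.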

2. What does a GPU do in computer graphics?

The GPU (Graphics Processing Unit) is a specialized processor designed to handle many mathematical tasks simultaneously. This makes it much faster than a standard CPU at drawing the millions of pixels required for 3D images.

3. What was the first movie to use CGI?

While Westworld (1973) used 2D digital imagery, Tron (1982) is often cited as the first major film to use extensive 3D CGI. Toy Story (1995) was the first feature-length film to be entirely computer-animated.

4. How is AI used in modern graphics?

AI is used for “denoising” (cleaning up grainy images), upscaling (making low-res images look high-res), and even generating realistic textures or animations automatically.

Conclusion

The history of computer graphics is a testament to the human desire to blur the line between the digital and the physical. From the humble beginnings of Sketchpad in 1963 to the striking realism of modern AI-driven rendering, we have turned the computer into a window into other worlds. As hardware continues to evolve, we are moving toward a future where “graphics” are no longer something we look at on a screen, but something we experience all around us. The journey from pixels to presence is well under way.