What is a Markov Decision Process (MDP)?

Imagine you are teaching a robot to navigate a maze. Every step matters. Each action leads to a new position. Some paths bring rewards. Others lead to dead ends. How does the robot learn the best sequence of actions? The answer lies in the Markov decision process (MDP). This mathematical framework provides the foundation for sequential decision making under uncertainty and forms the backbone of modern reinforcement learning.

A Markov decision process is a mathematical model of situations where outcomes are partly random and partly under the control of a decision maker. It provides a structured way to think about problems where you need to make a sequence of decisions over time, each affecting future states and rewards. From teaching robots to walk to optimizing investment portfolios, the Markov decision process is the engine that drives intelligent sequential decision making.

The name honors Andrey Markov, a Russian mathematician who studied sequences of random variables where the future depends only on the present, not on the past. This memoryless property, which we will explore in detail, is what makes Markov decision processes both powerful and tractable. Understanding Markov decision processes is essential for anyone serious about reinforcement learning. The history of reinforcement learning shows how these mathematical foundations enabled breakthroughs in game playing, robotics, and autonomous systems.

The Core Components: States, Actions, and Rewards

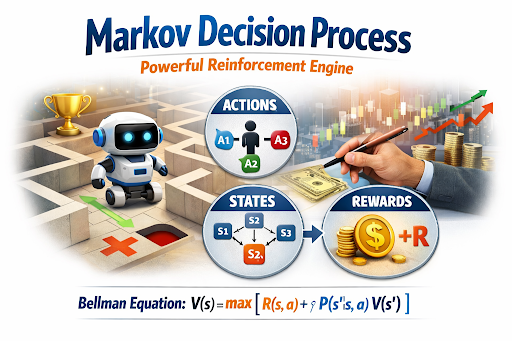

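Every Markov decision process is built around three core components: states, actions, and rewards. Together with the transition probabilities that describe how actions move the agent from one state to another, these elements can model a wide range of sequential decision problems.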

States represent all possible situations the agent can be in. In a maze, states are the positions the robot can occupy. In a game of chess, states are all possible board configurations. The set of all possible states is called the state space. A well defined state space captures everything relevant to the decision problem.

Actions are the choices available to the agent in each state. From a given state, the agent can select from a set of possible actions. In a maze, actions might include moving north, south, east, or west. In financial trading, actions might include buying, selling, or holding an asset.

Rewards provide immediate feedback. When the agent takes an action and transitions to a new state, it receives a numerical reward. Positive rewards encourage behaviors. Negative rewards discourage them. In a maze, reaching the goal might give a large positive reward. Hitting a wall might give a negative reward.
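
The three components can be sketched in a few lines of Python for the maze example. This is a minimal illustration, not a standard API: the 2x2 grid, the reward values, and the names `STATES`, `ACTIONS`, `GOAL`, and `step` are all assumptions made for the example, and the moves are deterministic to keep it short.

```python
# Hypothetical 2x2 maze: states are (row, col) cells, the goal is the bottom-right cell.
STATES = [(0, 0), (0, 1), (1, 0), (1, 1)]
ACTIONS = {"north": (-1, 0), "south": (1, 0), "east": (0, 1), "west": (0, -1)}
GOAL = (1, 1)


def step(state, action):
    """Return (next_state, reward) for one deterministic move."""
    d_row, d_col = ACTIONS[action]
    candidate = (state[0] + d_row, state[1] + d_col)
    if candidate not in STATES:
        return state, -1.0      # hit a wall: stay in place, negative reward
    if candidate == GOAL:
        return candidate, 10.0  # reached the goal: large positive reward
    return candidate, 0.0       # ordinary move: no reward


print(step((0, 0), "north"))  # ((0, 0), -1.0)
print(step((0, 1), "south"))  # ((1, 1), 10.0)
```

A real maze would usually add randomness to the moves, which is where transition probabilities come in.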

The goal of solving a Markov decision process is to find a strategy, called a policy, that maximizes the total cumulative reward over time. This simple framework of states, actions, and rewards captures a wide variety of real world problems. IBM's Deep Blue chess computer demonstrated how sequential decision making could achieve superhuman performance in complex strategic environments.

Understanding the “Memoryless” Markov Property

The Markov property is the defining characteristic of a Markov decision process. It states that the future depends only on the current state, not on the history of how the agent arrived there. In mathematical terms, the probability of transitioning to a future state depends only on the current state and the action taken.
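
Written out, the property can be stated as follows. The time-indexed symbols are notation assumed here for illustration: s_t is the state at step t and a_t is the action taken at step t.

$$
P(s_{t+1} \mid s_t, a_t, s_{t-1}, a_{t-1}, \dots, s_0, a_0) = P(s_{t+1} \mid s_t, a_t)
$$

Conditioning on the full history gives the same distribution over the next state as conditioning on the current state and action alone.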

This property is often called the memoryless property. Once you know the current state, all previous states, actions, and rewards become irrelevant for predicting the future. This might seem like a strong assumption, but many real world systems satisfy it or can be approximated with it.

For example, the future position of a moving car depends on its current position, velocity, and the control inputs. You do not need to know where it was five seconds ago to predict where it will be in one second, provided you know its current state. The Markov property is what makes Markov decision processes computationally tractable, enabling efficient algorithms for finding optimal policies.

How MDPs Power Reinforcement Learning

Markov decision processes are the mathematical engine that powers reinforcement learning. Most reinforcement learning problems are formalized as a Markov decision process that the agent must learn to solve through interaction.

The Role of Policies and Value Functions

In a Markov decision process, a policy is a strategy that tells the agent what action to take in each state. Policies can be deterministic, mapping each state to a single action, or stochastic, mapping states to probability distributions over actions. The goal of reinforcement learning is to find the optimal policy that maximizes expected cumulative reward.
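
The difference between the two kinds of policy is easy to see in code. The sketch below assumes a tiny hypothetical problem with states named "start" and "corridor"; the dictionaries and the helper `sample_action` are illustrative names, not part of any library.

```python
import random

# Deterministic policy: each state maps to exactly one action.
deterministic_policy = {"start": "east", "corridor": "south"}

# Stochastic policy: each state maps to a probability distribution over actions.
stochastic_policy = {
    "start": {"east": 0.8, "south": 0.2},
    "corridor": {"south": 1.0},
}


def sample_action(policy, state, rng):
    """Draw one action from a stochastic policy for the given state."""
    actions = list(policy[state])
    weights = [policy[state][a] for a in actions]
    return rng.choices(actions, weights=weights, k=1)[0]


rng = random.Random(0)  # seeded so repeated runs draw the same actions
print(deterministic_policy["start"])                   # east
print(sample_action(stochastic_policy, "start", rng))  # "east" about 80% of the time
```

A deterministic policy is a special case of a stochastic one in which a single action has probability 1, as in the "corridor" state.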

Value functions measure how good it is to be in a given state or to take a given action in a state. The state value function, often denoted V(s), estimates the expected total reward starting from state s and following a given policy. The action value function, denoted Q(s,a), estimates the expected total reward starting from state s, taking action a, then following the policy.
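
To make V(s) and Q(s,a) concrete, the sketch below evaluates a fixed policy on a hypothetical two-state problem by repeatedly applying the expected one-step backup until the values stop changing. Everything here is an assumption for illustration: the states "A" and "B", the transition table, the discount of 0.5, and the choice to attach rewards to individual transitions and to discount future rewards.

```python
GAMMA = 0.5  # assumed discount applied to future rewards

# TRANSITIONS[(state, action)] = list of (probability, next_state, reward)
TRANSITIONS = {
    ("A", "stay"): [(1.0, "A", 0.0)],
    ("A", "go"): [(0.5, "B", 1.0), (0.5, "A", 0.0)],
    ("B", "stay"): [(1.0, "B", 2.0)],
    ("B", "go"): [(1.0, "A", 0.0)],
}
POLICY = {"A": "go", "B": "stay"}  # the fixed policy being evaluated


def q_value(values, state, action):
    """Expected reward for taking `action` in `state`, then following the policy."""
    return sum(p * (r + GAMMA * values[s2]) for p, s2, r in TRANSITIONS[(state, action)])


def evaluate_policy(policy, tol=1e-10):
    """Iterative policy evaluation: sweep the states until values converge."""
    values = {s: 0.0 for s in policy}
    while True:
        delta = 0.0
        for s in policy:
            new_v = q_value(values, s, policy[s])
            delta = max(delta, abs(new_v - values[s]))
            values[s] = new_v
        if delta < tol:
            return values


V = evaluate_policy(POLICY)
print(round(V["A"], 4), round(V["B"], 4))  # 2.0 4.0
print(round(q_value(V, "A", "stay"), 4))   # 1.0
```

Here Q("A", "stay") is lower than V("A"), which says that deviating from the policy by staying in "A" for one step is worse than following it.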

These value functions are central to solving MDPs with value iteration and other dynamic programming methods. By estimating values accurately, an agent can make decisions that maximize long term rewards rather than just immediate gratification. The 1997 match between Garry Kasparov and Deep Blue, which captured the world's attention, showed how search combined with position evaluation could achieve what was once thought impossible.

Decoding the Bellman Equation for Optimization

The Bellman equation is the cornerstone of Markov decision process theory. It expresses the relationship between the value of a state and the values of its successor states. This recursive formulation enables efficient computation of optimal policies.

The Bellman optimality equation for the state value function is:

$$
V(s) = \max_{a} \left[ R(s,a) + \gamma \sum_{s'} P(s' \mid s,a) \, V(s') \right]
$$

Let us break this down. V(s) is the value of being in state s when the agent acts optimally from then on. R(s,a) is the immediate reward received after taking action a in state s. γ, the discount factor between 0 and 1, determines how much future rewards matter relative to immediate rewards. P(s'|s,a) is the transition probability, the probability of moving to state s' after taking action a in state s.

The Bellman equation says that the value of a state equals the best possible combination of immediate reward plus the discounted value of future states, averaged according to transition probabilities. This elegant equation transforms the problem of finding optimal strategies into a system of equations that can be solved through iterative methods.
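
One such iterative method is value iteration, which applies the Bellman update to every state until the values stop changing. The sketch below runs it on a hypothetical two-state problem. The states, the reward table `R`, the transition table `P`, and the discount of 0.9 are all assumptions chosen for illustration.

```python
GAMMA = 0.9  # assumed discount factor
STATES = ["A", "B"]
ACTIONS = ["stay", "go"]

# R[(s, a)]: immediate reward; P[(s, a)]: distribution over next states.
R = {("A", "stay"): 0.0, ("A", "go"): 1.0, ("B", "stay"): 2.0, ("B", "go"): 0.0}
P = {
    ("A", "stay"): {"A": 1.0},
    ("A", "go"): {"B": 0.8, "A": 0.2},
    ("B", "stay"): {"B": 1.0},
    ("B", "go"): {"A": 1.0},
}


def backup(V, s, a):
    """Right-hand side of the Bellman equation for one state-action pair."""
    return R[(s, a)] + GAMMA * sum(p * V[s2] for s2, p in P[(s, a)].items())


def value_iteration(tol=1e-9):
    """Apply the Bellman update to all states until the largest change is below tol."""
    V = {s: 0.0 for s in STATES}
    while True:
        new_V = {s: max(backup(V, s, a) for a in ACTIONS) for s in STATES}
        if max(abs(new_V[s] - V[s]) for s in STATES) < tol:
            return new_V
        V = new_V


V = value_iteration()
# The optimal policy picks, in each state, the action that achieves the max.
policy = {s: max(ACTIONS, key=lambda a: backup(V, s, a)) for s in STATES}
print(policy)  # {'A': 'go', 'B': 'stay'}
```

Because the discount factor is below 1, each sweep shrinks the error by at least that factor, which is why the loop is guaranteed to terminate.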

With the rise of modern machine learning, the Bellman equation became central to algorithms such as Q-learning and policy iteration. These algorithms have powered some of the most impressive AI achievements, from mastering complex games to controlling robotic systems.
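
As a rough illustration of how Q-learning uses the Bellman idea without knowing the transition probabilities, here is a tabular sketch on a hypothetical four-state corridor where only the rightmost state gives a reward. The environment, the hyperparameters, and the function names are assumptions for this example, and ties between equal Q-values are broken at random so that early exploration is unbiased.

```python
import random

N_STATES = 4      # states 0..3 on a line; state 3 is the goal and ends the episode
ACTIONS = [0, 1]  # 0 = move left, 1 = move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # learning rate, discount, exploration rate


def step(state, action):
    """Deterministic corridor dynamics; reward 1.0 only on reaching the goal."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def greedy(Q, state, rng):
    """Pick an action with the highest Q-value, breaking ties at random."""
    best = max(Q[state])
    return rng.choice([a for a in ACTIONS if Q[state][a] == best])


def q_learning(episodes=500, seed=0):
    rng = random.Random(seed)
    Q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            # Epsilon-greedy: explore occasionally, otherwise act greedily.
            if rng.random() < EPSILON:
                action = rng.choice(ACTIONS)
            else:
                action = greedy(Q, state, rng)
            next_state, reward = step(state, action)
            # Sampled Bellman target: reward plus discounted best next value.
            target = reward + GAMMA * max(Q[next_state])
            Q[state][action] += ALPHA * (target - Q[state][action])
            state = next_state
    return Q


Q = q_learning()
learned_policy = [max(ACTIONS, key=lambda a: Q[s][a]) for s in range(N_STATES - 1)]
print(learned_policy)  # expected to prefer moving right (1) in every non-goal state
```

The update never consults a transition model. It relies only on the sampled next state and reward, which is what separates this kind of learning from dynamic programming methods that need the full model.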

Real-World Applications of Markov Decision Processes

The practical applications of Markov decision processes span diverse industries. Their ability to model sequential decisions under uncertainty makes them invaluable for complex, real world problems.

Robotics and Autonomous Navigation

Robotics is perhaps the most natural application of Markov decision processes. A robot navigating an environment must make decisions at every moment. Where should it move? What obstacles should it avoid? How does it reach its goal efficiently?

Each decision affects future possibilities. The robot's current position and sensor readings define the state. Actions control movement. Rewards encourage progress toward goals and penalize collisions. The Markov decision process captures this sequential decision problem naturally.

Research on autonomous vehicles uses MDP-based reinforcement learning frameworks to handle complex traffic scenarios. The vehicle must decide when to accelerate, brake, or change lanes while accounting for uncertainty about other drivers' intentions. The history of artificial intelligence in autonomous vehicles shows how MDP-based approaches have helped vehicles handle increasingly complex environments.

Robotic manipulation tasks also rely on Markov decision processes. A robot arm trying to grasp an object must decide how to move its joints, what force to apply, and how to adjust based on sensor feedback. Each action changes the state and influences the success of the overall task.

Finance, Trading, and Portfolio Management

Financial markets are inherently sequential and uncertain. Every investment decision affects future wealth. Market conditions change unpredictably. Markov decision processes provide a powerful framework for optimizing trading strategies.

Consider portfolio management. The state might include current asset prices, portfolio composition, and market volatility indicators. Actions include buying, selling, or holding various assets. Rewards represent returns or risk adjusted performance. The Markov decision process models how current decisions affect future investment opportunities.

Algorithmic trading systems use MDP-based approaches to execute large orders without moving prices unfavorably. They decide when to split orders, what timing to use, and how to respond to market movements. Healthcare offers a parallel application in medical treatment planning, where doctors must make sequential decisions about patient care.

Risk management also benefits from Markov decision processes. Financial institutions model how current lending decisions affect future default probabilities. Insurance companies optimize pricing strategies by considering how current premiums influence customer retention and future claims.

Frequently Asked Questions

1. What is the Markov property in simple terms?

The Markov property means the future depends only on the present, not on the past. Given your current situation, history provides no additional information for predicting what happens next.

2. How does a Markov decision process differ from a Markov chain?

A Markov chain describes random transitions between states without any decisions. A Markov decision process adds actions and rewards, allowing an agent to influence outcomes through choices.

3. What is the discount factor in an MDP?

The discount factor, between 0 and 1, determines how much future rewards matter relative to immediate rewards. A factor near 0 focuses on short term gains. A factor near 1 values long term outcomes.
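
A short calculation shows the effect. The reward sequence below is made up for illustration: three small rewards followed by one large delayed reward.

```python
def discounted_return(rewards, gamma):
    """Sum of rewards where the reward at step t is weighted by gamma ** t."""
    return sum(gamma ** t * r for t, r in enumerate(rewards))


rewards = [1.0, 1.0, 1.0, 10.0]  # hypothetical rewards; the large one arrives last

print(round(discounted_return(rewards, 0.1), 3))  # 1.12
print(round(discounted_return(rewards, 0.9), 3))  # 10.0
```

With a factor of 0.1 the delayed reward of 10 contributes only 0.01 to the total, while with 0.9 it contributes 7.29 and dominates.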

4. How do you solve a Markov decision process?

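Common solution methods include value iteration, policy iteration, and linear programming. Value iteration and policy iteration compute optimal policies by iteratively improving value estimates and policy decisions, while linear programming expresses the same optimality conditions as a single optimization problem.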

5. Can MDPs handle continuous state spaces?

Yes, but the exact solution becomes challenging. Approximate methods like function approximation, discretization, or deep reinforcement learning extend MDP principles to continuous domains.
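
Discretization is the simplest of these to sketch: each continuous variable is cut into a fixed number of bins, and the tuple of bin indices becomes the discrete state. The ranges and bin counts below are arbitrary assumptions for a hypothetical state made of a position and a velocity.

```python
def discretize(value, low, high, n_bins):
    """Map a continuous value in [low, high] to a bin index in 0..n_bins-1."""
    clipped = min(max(value, low), high)  # clamp out-of-range readings
    index = int((clipped - low) / (high - low) * n_bins)
    return min(index, n_bins - 1)         # the upper edge falls in the last bin


def to_discrete_state(position, velocity):
    """Combine per-variable bins into one discrete state (assumed ranges)."""
    return (
        discretize(position, 0.0, 10.0, 5),  # position in [0, 10], 5 bins
        discretize(velocity, -1.0, 1.0, 4),  # velocity in [-1, 1], 4 bins
    )


print(to_discrete_state(3.7, 0.0))   # (1, 2)
print(to_discrete_state(10.0, 1.0))  # (4, 3)
```

The number of discrete states grows as the product of the bin counts, which is why this approach becomes impractical in high dimensions and function approximation takes over.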

6. What is the relationship between MDPs and reinforcement learning?

Reinforcement learning solves MDPs when the transition probabilities and reward functions are unknown. The agent learns optimal behavior through interaction and experience rather than explicit modeling.

Conclusion

The Markov decision process stands as one of the most elegant and powerful frameworks in artificial intelligence. It captures the essence of sequential decision making under uncertainty, providing a mathematical language for describing how agents should act to achieve long term goals. From robots navigating unknown terrain to traders optimizing investment portfolios, MDPs underpin some of the most sophisticated AI systems in existence.

Understanding Markov decision processes is essential for anyone working with reinforcement learning. The concepts of states, actions, rewards, and the Bellman equation form the foundation upon which modern reinforcement learning algorithms are built. The AlphaGo breakthrough demonstrated how MDP-based reinforcement learning could achieve superhuman performance in complex games, marking a milestone in AI history.

As artificial intelligence continues to advance, Markov decision processes will remain central to systems that must make decisions over time. Whether you are building autonomous vehicles, optimizing business operations, or developing intelligent assistants, the principles of MDPs provide a reliable guide. Many of the artificial intelligence applications we see today owe much to this mathematical framework that transforms sequential decisions into solvable optimization problems.