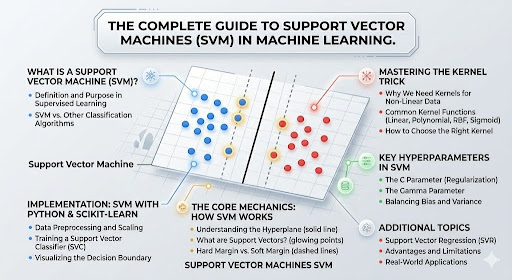

Introduction to Support Vector Machines

In machine learning, few algorithms are as well regarded as support vector machines (SVMs). They apply elegant mathematical theory to practical problems and provide a robust framework for classification and regression, often delivering high accuracy even on complex datasets. For anyone serious about predictive modeling, the algorithm is well worth mastering. The broader evolution of machine learning algorithms provides useful context for where SVMs fit in the history of artificial intelligence.

What is a Support Vector Machine?

At its core, a support vector machine is a supervised learning model used for classification and regression. Imagine you have a set of points plotted on a two-dimensional plane, belonging to two distinct categories. The support vector classifier works by finding the best line, or in higher dimensions a hyperplane, that separates these categories. What makes it special is that it does not settle for any separating line: it looks for the optimal one, which maximizes the distance between the line and the nearest points from each group. These critical points that define the boundary are called support vectors, giving the algorithm its name.

Where Does SVM Fit in Supervised Learning?

Within the hierarchy of machine learning, support vector machines are a cornerstone of supervised learning. This means they learn from labeled training data to make predictions on new, unseen data. Unlike unsupervised learning, which finds hidden patterns, supervised learning with SVM relies on the explicit relationship between input features and output labels. SVM is a foundational algorithm that sits between classical statistical learning and more complex neural approaches. It excels in binary classification, the task of distinguishing between two classes, but through extensions it also handles multiclass problems and regression tasks, the latter known as Support Vector Regression (SVR). Its approach to defining an optimal decision boundary makes it a formidable tool in the data scientist’s arsenal.

Core Mechanics: How SVM Works

To appreciate the sophistication of support vector machines, one must understand the mechanics that drive their decision making. It is an interplay of geometry, algebra, and optimization. Compared with early linear models such as the perceptron, SVM improved upon simpler concepts by introducing the principle of margin maximization.

Understanding Hyperplanes and Decision Boundaries

The fundamental concept in an SVM is the hyperplane. In a feature space with n dimensions, a hyperplane is a flat (n-1)-dimensional surface that acts as a decision surface. For a 2D feature space, this hyperplane is simply a line. For 3D, it is a plane. The goal of the SVM algorithm is to find the hyperplane that best separates the data. Mathematically, this hyperplane is defined by the equation:

w · x + b = 0

Here, w is the weight vector normal to the hyperplane, x is the input vector, and b is the bias term. The optimal decision boundary is the one that creates the largest possible separation between the two classes.
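As a minimal sketch of how the hyperplane equation is used to classify a point, the snippet below evaluates w · x + b and takes its sign. The weight vector, bias, and sample points are made-up values for illustration, not the result of any training.

```python
def decision_value(w, x, b):
    """Return w · x + b, the signed score of point x relative to the hyperplane."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def classify(w, x, b):
    """Assign +1 or -1 depending on which side of the hyperplane x falls."""
    return 1 if decision_value(w, x, b) >= 0 else -1


# Hypothetical hyperplane in 2D: 2*x1 - x2 - 1 = 0
w = [2.0, -1.0]
b = -1.0

print(classify(w, [3.0, 1.0], b))  # score 2*3 - 1 - 1 = 4, so class +1
print(classify(w, [0.0, 2.0], b))  # score 0 - 2 - 1 = -3, so class -1
```

Points with a score of exactly zero lie on the hyperplane itself; the sketch arbitrarily assigns them to the +1 class.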

What Are Support Vectors?

Support vectors are the most critical elements of the model. They are the data points that lie closest to the hyperplane, and they alone define the position and orientation of the optimal hyperplane. If you move any other data point, the hyperplane does not change as long as the point stays outside the margin. Altering a support vector, however, directly shifts the decision boundary. This property makes a trained SVM compact: a kernel SVM only needs to store the support vectors and their coefficients to make predictions, not the entire training set.
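The following sketch illustrates this with scikit-learn's SVC on a tiny, hand-made dataset (the points and the large C value are assumptions chosen so the problem behaves like a hard-margin one). It fits a linear SVM, inspects the support vectors, then removes a point far from the boundary and refits.

```python
import numpy as np
from sklearn.svm import SVC

# Toy, linearly separable data (illustrative values only).
X = np.array([[0, 0], [0, 1], [1, 1], [3, 3], [4, 3], [4, 4]], dtype=float)
y = np.array([-1, -1, -1, 1, 1, 1])

# A large C approximates a hard margin on separable data.
clf = SVC(kernel="linear", C=1000.0).fit(X, y)
print(clf.support_vectors_)        # the points closest to the boundary
print(clf.coef_, clf.intercept_)   # w and b of the fitted hyperplane

# Drop a point that is not a support vector and refit.
keep = np.ones(len(X), dtype=bool)
keep[0] = False                    # remove (0, 0), which lies far from the boundary
clf_reduced = SVC(kernel="linear", C=1000.0).fit(X[keep], y[keep])

# Compare the two hyperplanes (equal up to solver tolerance).
print(np.allclose(clf.coef_, clf_reduced.coef_, atol=1e-2))
```

In this toy setup the nearest opposing points are (1, 1) and (3, 3), so those are the ones expected to appear in `support_vectors_`.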

The Importance of Maximizing the Margin

The margin is the distance between the hyperplane and the closest points on either side, the support vectors. The SVM’s primary objective is to maximize this margin. A larger margin is associated with lower generalization error, meaning the model is less likely to overfit the training data. This idea is closely tied to the principle of structural risk minimization.

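For a linearly separable dataset, we aim for a hard margin, where no points are allowed to violate the margin. Because the width of the margin is 2 / ||w||, maximizing the margin is equivalent to minimizing the norm of the weight vector. In practice the squared norm is used, since it gives the same solution and is easier to optimize:

Minimize (1/2) ||w||^2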

This minimization is performed under a key constraint: every data point must be correctly classified and lie at least a certain distance from the hyperplane. In mathematical terms, for each data point i, we require that y_i times (w · x_i + b) is at least 1. Here, y_i represents the class label (either +1 or -1), ensuring that the point is on the correct side of the margin.
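To make the constraint concrete, here is a small sketch in plain Python that checks y_i (w · x_i + b) >= 1 for every point and computes the geometric width of the margin band, 2 / ||w||. The hyperplane and the data points are hypothetical values picked for illustration.

```python
import math

# Hypothetical separating hyperplane and toy data (illustrative values only).
w = [0.5, 0.5]
b = -2.0
points = [([0, 0], -1), ([0, 1], -1), ([1, 1], -1),
          ([3, 3], 1), ([4, 3], 1), ([4, 4], 1)]


def functional_margin(x, y):
    """y * (w · x + b); the hard-margin constraint requires this to be >= 1."""
    return y * (sum(wi * xi for wi, xi in zip(w, x)) + b)


margins = [functional_margin(x, y) for x, y in points]
all_satisfied = all(m >= 1 for m in margins)

# Geometric width of the margin band between the two classes.
margin_width = 2 / math.sqrt(sum(wi * wi for wi in w))

print(margins)
print(all_satisfied, round(margin_width, 3))
```

The points whose value is exactly 1, here (1, 1) and (3, 3), sit on the edge of the margin and play the role of support vectors.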

However, real-world data is messy. To handle non-separable data, we introduce a soft margin, which allows some points to fall inside the margin or on the wrong side of the hyperplane. Each violation is measured by a slack value, and a regularization parameter, often denoted C, sets how heavily the total slack is penalized. The resulting optimization problem is usually solved using Lagrange multipliers, which transform it into its dual formulation.
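A common way to write the soft-margin objective is (1/2) ||w||^2 plus C times the sum of hinge losses max(0, 1 - y_i (w · x_i + b)). The sketch below evaluates that expression in plain Python for a fixed, hypothetical hyperplane; it does not solve the optimization, and the function name and data are assumptions made for illustration.

```python
def soft_margin_objective(w, b, data, C):
    """0.5 * ||w||^2 + C * sum of hinge losses max(0, 1 - y * (w · x + b))."""
    regularizer = 0.5 * sum(wi * wi for wi in w)
    slack = [max(0.0, 1.0 - y * (sum(wi * xi for wi, xi in zip(w, x)) + b))
             for x, y in data]
    return regularizer + C * sum(slack), slack


w, b = [0.5, 0.5], -2.0
# The last point is deliberately placed on the wrong side to act as noise.
data = [([1, 1], -1), ([3, 3], 1), ([2.5, 2.5], -1)]

objective, slack = soft_margin_objective(w, b, data, C=1.0)
print(slack)      # only the noisy point has non-zero slack
print(objective)
```

Raising C makes the noisy point's slack more expensive, which is what pushes the optimizer toward a narrower margin that fits the training data more tightly.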

Types of Support Vector Machines

The adaptability of support vector machines is evident in their various forms, designed to tackle different kinds of data challenges.

Linear SVM (For Linearly Separable Data)

A linear SVM is the simplest form of the classifier. It is used when the data is linearly separable, meaning a straight line or a flat hyperplane can effectively separate the classes without any misclassification. This form is computationally efficient and works remarkably well for high dimensional data like text classification, where the feature space is so vast that the data often becomes linearly separable. It relies on a linear kernel and is a great starting point for any machine learning classification task.

Non-Linear SVM (For Complex Datasets)

Most real-world datasets are not linearly separable. Consider a circular pattern where one class is a cluster in the center and the other surrounds it; no straight line can separate them. This is where the non-linear SVM shines. It uses a mathematical technique called the kernel trick to implicitly map the original feature space into a higher-dimensional space where the data becomes linearly separable. This allows the algorithm to fit complex, non-linear boundaries without explicitly computing the coordinates in the higher-dimensional space, which saves considerable computation.
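The circular pattern described above can be reproduced with scikit-learn's make_circles generator. This sketch compares a linear kernel with an RBF kernel on held-out data; the sample size, noise level, and random seeds are arbitrary choices for illustration.

```python
from sklearn.datasets import make_circles
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# One class forms an inner ring, the other an outer ring around it.
X, y = make_circles(n_samples=400, factor=0.3, noise=0.05, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

linear_acc = SVC(kernel="linear").fit(X_train, y_train).score(X_test, y_test)
rbf_acc = SVC(kernel="rbf").fit(X_train, y_train).score(X_test, y_test)

print(f"linear kernel accuracy: {linear_acc:.2f}")
print(f"RBF kernel accuracy:    {rbf_acc:.2f}")
```

The linear kernel is limited to a straight boundary, so it should score well below the RBF kernel on this kind of data.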

Mastering the Kernel Trick

The kernel trick is what allows support vector machines to solve highly complex problems. It turns the algorithm from a linear classifier into a capable non-linear modeling tool.

What is a Kernel Function?

A kernel function is a function that takes input vectors from the original feature space and computes their dot product in a higher dimensional feature space, without ever having to perform the transformation. In essence, it allows the SVM to learn a complex decision surface in the original space by performing the computation as if it were a simple linear separation in a transformed space. The choice of kernel directly impacts the model’s ability to capture patterns.
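A small numeric check can make this less abstract. The sketch below uses the degree-2 polynomial kernel (x · z)^2 on 2D inputs and compares it with the dot product of an explicit 3D feature map; the function names and input values are chosen here for illustration.

```python
import math


def poly2_kernel(x, z):
    """Degree-2 polynomial kernel (x · z)^2, computed in the original 2D space."""
    return (x[0] * z[0] + x[1] * z[1]) ** 2


def phi(x):
    """Explicit feature map into 3D whose dot product equals poly2_kernel."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)


x, z = (1.0, 2.0), (3.0, 4.0)

via_kernel = poly2_kernel(x, z)
via_mapping = sum(a * b for a, b in zip(phi(x), phi(z)))

print(via_kernel, via_mapping)  # both equal 121 up to floating-point rounding
```

The kernel gets the same number with a single 2D dot product, which is the saving the kernel trick relies on; for kernels such as RBF the implicit space is infinite-dimensional, so the explicit route is not even available.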

The Linear Kernel

The linear kernel is the simplest kernel function, defined as the dot product of two input vectors. It is equivalent to a linear SVM and is the default choice when the number of features is very high. In such cases, mapping to a higher dimension is unnecessary. It offers fast training and a model that is easy to interpret.

The Polynomial Kernel

The polynomial kernel is a powerful tool for capturing interactions between features. It introduces parameters for the degree of the polynomial, a scaling coefficient, and a constant offset term. This kernel can create decision boundaries that are curved and non-linear. However, with high-degree polynomials the model becomes prone to overfitting, making careful tuning essential.

The Radial Basis Function (RBF) Kernel

The radial basis function kernel, or RBF kernel, is the most popular and versatile kernel used in support vector machines. It is defined as an exponential function of the squared distance between two points: K(x, z) = exp(-gamma * ||x - z||^2). It is a local kernel whose influence is limited to a specific region, making it well suited to complex, non-linear relationships. The gamma parameter defines how far the influence of a single training example reaches. Low gamma values create a smooth boundary, while high gamma values create a tightly fit, more complex boundary around the data points.
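To see how gamma changes the reach of a single point, this plain-Python sketch evaluates the RBF kernel exp(-gamma * ||x - z||^2) for one pair of points at several gamma values. The points and gamma values are arbitrary illustrations.

```python
import math


def rbf_kernel(x, z, gamma):
    """K(x, z) = exp(-gamma * ||x - z||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * sq_dist)


x, z = (0.0, 0.0), (1.0, 1.0)   # squared distance between them is 2

for gamma in (0.1, 1.0, 10.0):
    print(gamma, rbf_kernel(x, z, gamma))
```

With a low gamma the two points still look similar to the kernel, so each training example influences a wide area; with a high gamma the similarity drops to nearly zero and the influence becomes very local.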

Beyond Classification: Support Vector Regression (SVR)

While celebrated for classification, the principles of SVM extend elegantly to regression tasks. Support vector regression (SVR) flips the objective of the algorithm.

Adapting SVM for Continuous Variables

Instead of finding a hyperplane that separates classes, SVR aims to find a function that deviates from the actual target values by no more than a specified margin, denoted as epsilon. It attempts to fit as many points as possible within this epsilon-insensitive tube. Points that lie on the edge of the tube or outside it become the support vectors, driving the model’s optimization. This approach makes SVR a robust method for predictive modeling of continuous variables, as it focuses on the errors that matter and ignores small, acceptable deviations.
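The effect of epsilon can be observed with scikit-learn's SVR. The sketch below fits two linear SVR models to synthetic data (a straight line with a small wiggle, generated here purely for illustration) and counts how many training points end up as support vectors for a narrow and a wide tube.

```python
import numpy as np
from sklearn.svm import SVR

# Synthetic data: a line with a small deterministic wiggle (illustrative only).
X = np.linspace(0, 5, 40).reshape(-1, 1)
y = 2.0 * X.ravel() + 0.05 * np.sin(7 * X.ravel())

narrow = SVR(kernel="linear", C=10.0, epsilon=0.01).fit(X, y)
wide = SVR(kernel="linear", C=10.0, epsilon=0.5).fit(X, y)

print("support vectors with epsilon=0.01:", len(narrow.support_))
print("support vectors with epsilon=0.5: ", len(wide.support_))
```

A wider tube swallows more of the points, so fewer of them remain as support vectors and the model becomes sparser, at the cost of tolerating larger errors.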

Step-by-Step: Building an SVM Model

Building a successful support vector machine model is a methodical process. It involves careful preparation and strategic decision making to unlock its full potential.

Preprocessing Your Data

Preprocessing is arguably the most critical step. Because support vector machines rely on distance calculations between points, features on larger scales can dominate those on smaller scales, biasing the model. Therefore, scaling is essential. When using the scikit-learn SVM implementation, a scaler such as StandardScaler or MinMaxScaler should be applied to all features, fitted on the training data only. This keeps features with large numeric ranges from dominating the decision boundary. Missing value imputation and outlier detection are also important preparatory steps.
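As a rough illustration of why scaling matters, the sketch below compares an RBF SVM with and without StandardScaler on the wine dataset bundled with scikit-learn, whose features span very different ranges. The dataset choice and the 5-fold setup are assumptions made for this example.

```python
from sklearn.datasets import load_wine
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_wine(return_X_y=True)   # features span very different numeric ranges

unscaled = SVC(kernel="rbf")
# The pipeline refits the scaler on each training fold, avoiding data leakage.
scaled = make_pipeline(StandardScaler(), SVC(kernel="rbf"))

unscaled_acc = cross_val_score(unscaled, X, y, cv=5).mean()
scaled_acc = cross_val_score(scaled, X, y, cv=5).mean()

print(f"without scaling: {unscaled_acc:.2f}")
print(f"with scaling:    {scaled_acc:.2f}")
```

Wrapping the scaler and the classifier in one pipeline is a design choice worth keeping: it guarantees that the same transformation learned from the training data is applied at prediction time.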

Selecting the Right Kernel

The choice of kernel is driven by the nature of your data. If your data is roughly linear or has a very high number of features, start with the linear kernel. For non-linear data, the RBF kernel is an excellent default due to its flexibility. The polynomial kernel can be useful for image processing or when you expect complex feature interactions. Cross-validation is the most reliable way to empirically select the best kernel for your specific problem.

Training the Model and Tuning Hyperparameters

Once the kernel is selected, the focus shifts to hyperparameter tuning. In scikit-learn, the key parameters are C for regularization, gamma for the RBF kernel, and epsilon for SVR.

The C parameter controls the trade-off between a wide margin and a low training error. A small C produces a softer margin: the margin is wider and more training points may be misclassified, which helps to avoid overfitting. A large C approaches a hard margin with minimal training misclassifications, which can lead to overfitting.

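The gamma parameter defines the influence of a single training example. Low gamma means the influence reaches far, giving a smoother boundary; high gamma means the influence is local, giving a boundary that follows individual points closely.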

The best combination of these parameters is typically found with a cross-validated search such as GridSearchCV in scikit-learn, which systematically tests a range of values and keeps the combination with the best cross-validated score.
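A minimal sketch of such a search is shown below, again on the bundled wine dataset. The grid values are illustrative assumptions rather than recommended ranges, and the `svc__` prefix comes from the step name that make_pipeline assigns to the SVC estimator.

```python
from sklearn.datasets import load_wine
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_wine(return_X_y=True)

pipeline = make_pipeline(StandardScaler(), SVC(kernel="rbf"))

# Illustrative grid; the ranges are assumptions, not recommendations.
param_grid = {
    "svc__C": [0.1, 1, 10, 100],
    "svc__gamma": [0.001, 0.01, 0.1, 1],
}

search = GridSearchCV(pipeline, param_grid, cv=5)
search.fit(X, y)

print(search.best_params_)
print(f"best cross-validated accuracy: {search.best_score_:.2f}")
```

For an honest estimate of generalization performance, the selected model should still be evaluated on data that was held out from the search.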

Pros and Cons of Using SVMs

Like any algorithm, support vector machines come with a unique set of strengths and weaknesses that determine their suitability for a given task.

Advantages (High Dimensionality, Memory Efficiency)

The SVM algorithm offers several notable advantages. It is effective in high-dimensional spaces, making it a strong choice for text and image classification where the number of features can dwarf the number of samples. It is also memory efficient because the trained model relies only on a subset of training points (the support vectors). Furthermore, margin maximization makes it comparatively robust to overfitting, especially on smaller datasets where models such as neural networks can struggle. Neural networks have introduced alternative approaches, but SVM remains competitive for many structured data problems.

Disadvantages (Training Time, Scalability Issues)

Despite their power, support vector machines have notable limitations. On very large datasets (hundreds of thousands to millions of samples), training can be prohibitively slow, because the cost of solving a kernel SVM grows at least quadratically with the number of samples. The algorithm’s performance is also highly dependent on proper hyperparameter tuning, which can be time-consuming. Additionally, the model is less interpretable than a decision tree, as the optimal decision boundary in a transformed feature space is not easily understood by humans.

Real-World Applications of SVM

The theoretical strength of support vector machines translates into success across a diverse range of real-world applications. The examples below show how SVM continues to power systems across industries.

Image and Object Recognition

Support vector machines have historically been a dominant force in image recognition. They perform well at tasks like handwritten digit recognition, face detection, and object categorization. By using the RBF kernel or polynomial kernel, an SVM can learn to distinguish complex patterns of pixels and edges that define different objects, and it remains a strong option on small to medium-sized datasets.

Text Classification and Sentiment Analysis

In the domain of natural language processing, support vector machines are a classic and highly effective tool. They are widely used for text classification, such as spam detection and topic categorization. For sentiment analysis, an SVM can learn to classify text as positive or negative based on the frequency and co-occurrence of specific words. The linear kernel is particularly effective here because text data is naturally high-dimensional and often linearly separable.
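A toy version of spam detection might look like the sketch below, which turns text into TF-IDF word-frequency features and fits a linear-kernel SVM. The six messages and their labels are invented for illustration; a real classifier would need a far larger labeled corpus.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.pipeline import make_pipeline
from sklearn.svm import SVC

# Tiny made-up corpus, purely for illustration.
texts = [
    "win free prize now",
    "free money win cash",
    "claim your free prize",
    "meeting schedule for monday",
    "project report attached",
    "lunch with the team monday",
]
labels = ["spam", "spam", "spam", "ham", "ham", "ham"]

# TF-IDF maps each message to a sparse, high-dimensional vector of word weights.
model = make_pipeline(TfidfVectorizer(), SVC(kernel="linear"))
model.fit(texts, labels)

predictions = model.predict(["free cash prize", "team meeting monday"])
print(predictions)
```

Each distinct word becomes its own feature, which is why even a small vocabulary yields a feature space where a linear boundary is usually enough.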

Bioinformatics and Protein Classification

The biological sciences present some of the most challenging classification problems, such as identifying which proteins belong to a specific family or classifying cancer cells based on gene expression. The ability of support vector machines to handle high dimensional data with relatively few samples makes them a perfect fit for bioinformatics. They are used for protein classification, identifying functional sites in DNA, and even in outlier detection for identifying rare but significant biological anomalies.

Frequently Asked Questions (FAQs)

1. Is SVM only for binary classification?

No. SVM handles multiclass problems using one-vs-one or one-vs-rest (also called one-vs-all) strategies, where multiple binary SVM models are trained and their outputs combined.

2. What is the difference between hard margin and soft margin SVM?

Hard margin requires perfect separation with no errors. Soft margin, controlled by parameter C, allows some misclassifications to create a more generalized model.

3. How do I choose the right kernel?

Start with a linear kernel for high-dimensional data. If performance is poor, switch to the RBF kernel. Use cross-validation to confirm your choice.

4. Is feature scaling necessary for SVM?

Yes, absolutely. SVM relies on distance calculations, so unscaled features with larger ranges will dominate the model.

5. What does the C parameter control?

C controls the tradeoff between a wider margin and correct classification. Small C prioritizes margin width. Large C prioritizes classifying every training point correctly.

Conclusion

In the ever-evolving landscape of machine learning, support vector machines remain a testament to the power of mathematical elegance. From their foundational principle of margin maximization to the kernel trick, they offer a robust, versatile, and effective approach to classification and regression. While they require careful tuning and can be slow on massive datasets, their accuracy and efficiency in high-dimensional spaces keep them an essential algorithm. For data scientists and machine learning practitioners, a deep understanding of support vector machines is a powerful asset for tackling challenging predictive modeling tasks.