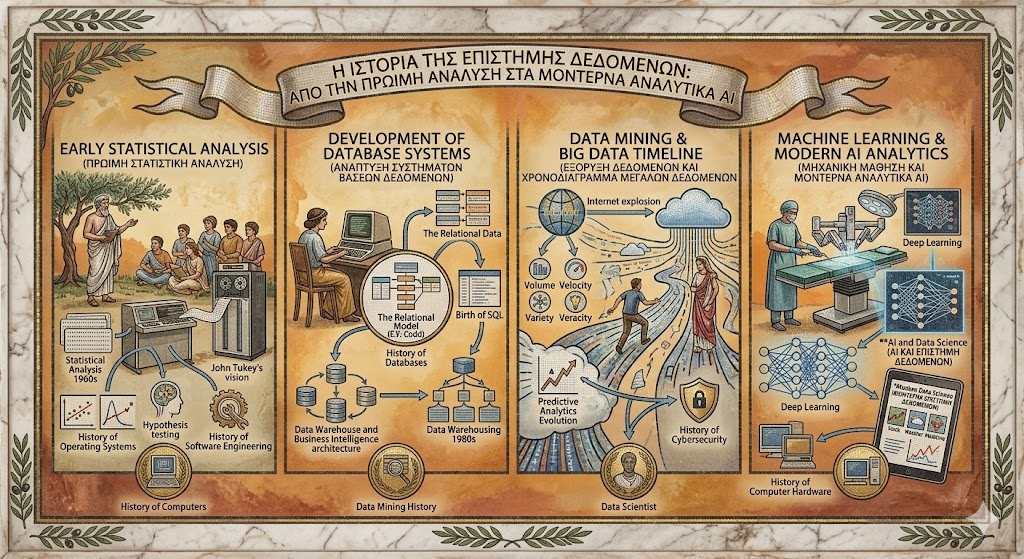

The history of data science is a journey of turning raw information into a form of “digital gold.” It traces how humanity has used logic, math, and machines to understand the past and predict the future. Much like the history of computers, which provided the physical vessels for calculation, data science has provided the intellectual framework for making sense of the massive amounts of data those computers generate. Today, data science is the engine behind everything from personalized movie recommendations to life-saving medical diagnoses.

Early Statistical Analysis – 1960s

In the 1960s, what we now call data science was rooted in statistical analysis. During this era, statisticians used mainframe computers to process census data and economic trends. John Tukey, a visionary mathematician, argued in his 1962 paper “The Future of Data Analysis” that the analysis of data was a discipline in its own right, one that would draw on computing as much as on mathematical statistics.

At this stage, operating systems were in their infancy, and data processing was a slow, batch-oriented task. Researchers were limited by the physical constraints of memory and storage, but foundational statistical methods such as regression and hypothesis testing were being implemented in software for the first time.
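As a rough illustration of the kind of calculation that was moving onto computers in this era, ordinary least-squares regression fits in a few lines of modern Python. This is only a sketch: the function name `fit_line` and the sample data are made up for illustration and are not drawn from any historical program.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance-like and variance-like sums (unnormalized)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data that lies exactly on the line y = 2x + 1
xs = [0, 1, 2, 3, 4]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)
print(slope, intercept)
```

On a 1960s mainframe the same arithmetic would have been submitted as a batch job, with results returned hours later.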

Development of Database Systems – 1970s

The 1970s marked a major shift as the focus moved from mere calculation to storage and retrieval. This period is vital in the history of databases: in 1970, Edgar F. Codd introduced the relational model, which organizes data into tables of rows and columns and makes it much easier to query and manipulate.

As software engineering matured, the ability to store structured data meant that businesses could start tracking inventory and customers with digital precision. The decade also saw the birth of SQL (Structured Query Language), developed at IBM in the mid-1970s, which remains a primary tool for data scientists roughly fifty years later.
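To make the idea of tables and queries concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table names, column names, and rows are hypothetical, and SQLite is a modern engine chosen for convenience, not one of the systems of the 1970s.

```python
import sqlite3

# In-memory database so the example leaves no files behind
conn = sqlite3.connect(":memory:")

# Two related tables: each order points at a customer by id
conn.executescript("""
CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE orders (
    id INTEGER PRIMARY KEY,
    customer_id INTEGER REFERENCES customers(id),
    amount REAL
);
INSERT INTO customers VALUES (1, 'Ada'), (2, 'Grace');
INSERT INTO orders VALUES (1, 1, 20.0), (2, 1, 15.0), (3, 2, 40.0);
""")

# Join the tables and total the order amounts per customer
rows = conn.execute("""
SELECT c.name, SUM(o.amount) AS total
FROM customers AS c
JOIN orders AS o ON o.customer_id = c.id
GROUP BY c.name
ORDER BY c.name
""").fetchall()

print(rows)
conn.close()
```

The query states what result is wanted (totals per customer) and leaves it to the database to decide how to compute it, which is a large part of why the relational approach proved so durable.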

Data Warehousing and Business Intelligence – 1980s

By the 1980s, corporations were sitting on mountains of data but lacked a way to see the “big picture.” This led to the era of data warehousing. Specialized systems were built to pull data from various departments into a central “warehouse” for analysis.

This gave rise to the field of Business Intelligence (BI). Executives could now look at quarterly reports and year-over-year trends drawn from a single source to make informed decisions. While it wasn’t yet “predictive,” it marked a shift toward using data strategically across entire organizations to drive profit and efficiency.

Data Mining and Predictive Analytics – 1990s

The 1990s saw the term “Data Science” begin to gain traction in professional circles. This decade was defined by data mining, in which algorithms were used to “mine” large datasets for hidden patterns that weren’t obvious to the human eye.

Predictive analytics took shape here, as credit card providers and similar companies started using data to predict which customers were likely to default. As cybersecurity emerged as a major concern, data mining was also used to detect fraudulent patterns and unauthorized access. This was the moment data moved from being a historical record to a tool for forecasting.

Big Data and Advanced Analytics – 2000s

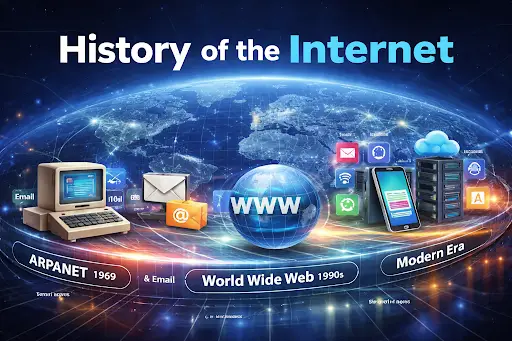

The new millennium brought the “Internet Explosion,” and with it, a volume of data that traditional databases could no longer handle. This was the start of the Big Data era. Google described its MapReduce programming model in 2004, and Hadoop, an open-source implementation developed heavily at Yahoo, followed soon after. These technologies made it practical to process “unstructured” data: everything from emails and social media posts to GPS signals.
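The MapReduce idea can be sketched on a single machine as a word count. This is a toy illustration in plain Python, not Hadoop's or Google's API; the function names `map_phase`, `shuffle`, and `reduce_phase` and the sample documents are assumptions made for this example.

```python
from collections import defaultdict


def map_phase(document):
    """Emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle(pairs):
    """Group all emitted values by key, as the framework would between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Combine the values for each key into a single count."""
    return {key: sum(values) for key, values in grouped.items()}


# Hypothetical input; in a real cluster each document could sit on a different server
documents = ["big data is big", "data is everywhere"]

pairs = [pair for doc in documents for pair in map_phase(doc)]
counts = reduce_phase(shuffle(pairs))
print(counts)
```

Because each call to `map_phase` depends only on its own document, and each key is reduced independently, both phases can be spread across many machines, which is what made the model a good fit for very large datasets.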

During this time, computer hardware reached a point where thousands of cheap servers could be linked together to act as one giant analytical machine. The job title “Data Scientist” is commonly dated to around 2008 and describes a professional who combines the skills of a programmer, a statistician, and a storyteller.

Machine Learning and AI Integration – 2010s

The 2010s were the decade of the algorithm. Machine learning took a massive leap forward as “Deep Learning,” built on neural networks loosely inspired by the human brain, became commercially viable. AI and data science became inseparable, as models were trained on the massive datasets collected during the previous decade.

This era transformed industries. Netflix used machine learning to suggest what you should watch next; Amazon used it to recommend products you were likely to buy. Data science was no longer just about reporting; it was about automation and autonomous decision-making.

Modern Data Science and Predictive Analytics – 2020s

As we navigate the 2020s, modern data science is defined by “Real-Time Analytics.” We no longer wait for weekly reports; in many systems, data is processed and acted upon within moments of being generated. Predictive analytics is now applied to stock market fluctuations, weather patterns, and even potential disease outbreaks, although how accurate those forecasts are varies considerably by domain.

The current landscape also focuses heavily on “Ethical AI” and data privacy. Data scientists are now tasked with ensuring that algorithms are fair and transparent. With the integration of Generative AI, data science is now being used to create new data, simulate complex scenarios, and help humans solve the world’s most difficult problems in energy, medicine, and climate change.

Frequently Asked Questions (FAQs)

What is the difference between Data Science and Statistics?

While both involve analyzing data, statistics is primarily concerned with the mathematical theory of collecting data, analyzing it, and drawing inferences from it. Data science incorporates statistics but adds computer science, programming, and Big Data technologies to build and deploy predictive models at scale.

When was the term “Big Data” first used?

While the concept existed earlier, the term “Big Data” gained widespread popularity in the early 2000s to describe datasets so large that they required specialized software and hardware to process.

Why is Python the most popular language for Data Science?

Python is popular because it has a simple syntax and a massive ecosystem of libraries (like pandas, scikit-learn, and TensorFlow) that make it easier to perform complex data analysis and machine learning.

What is a Data Warehouse?

A Data Warehouse is a central repository where data from different sources (like sales, HR, and marketing) is cleaned and stored specifically for analysis and reporting.

Conclusion

The history of data science is a testament to the human spirit’s desire for clarity in a complex world. We have moved from the statistical analysis of the 1960s to a world where AI-driven data science influences nearly every aspect of our lives. By combining the rigorous logic of software engineering with the vast storage capabilities of modern systems, we have created a discipline that allows us to see the invisible and predict the unknown. As we look forward, data science will continue to be a primary engine of human innovation, turning the vast ocean of digital information into a map for our future.