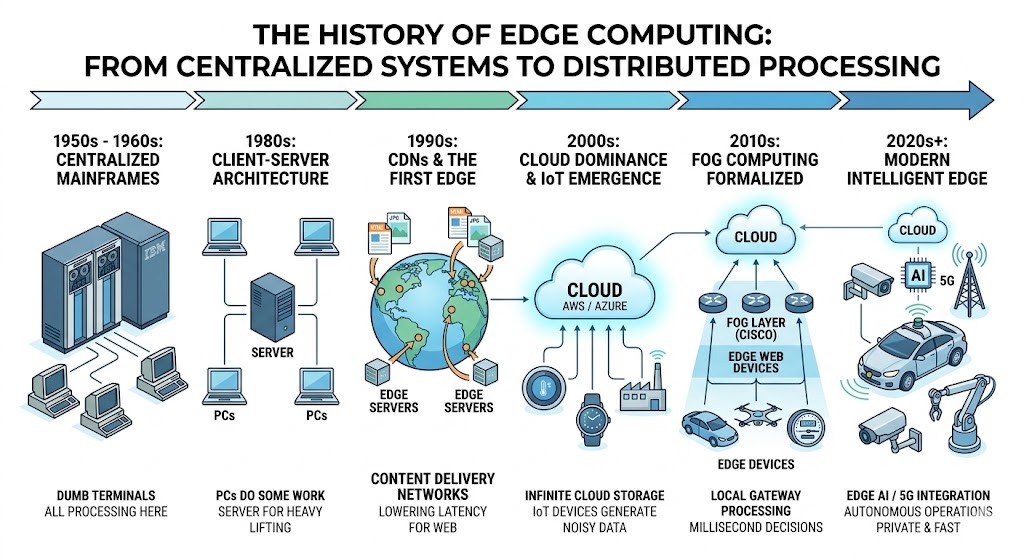

The history of edge computing reflects the evolution of how data is processed across computing systems. In the early decades of computing, nearly all data processing happened in centralized mainframe computers. As digital technologies spread and the number of internet-connected devices grew, the need for faster and more efficient data processing drove the development of distributed architectures.

Today, edge computing processes data closer to where it is generated—whether on smartphones, sensors, gateway devices, or local servers. This reduces latency and enables faster responses for applications like autonomous vehicles, smart cities, and real-time data analytics.

The development of edge computing is closely connected with the broader evolution of computing infrastructure discussed in history of computers, networking systems described in history of computer networking, and the rapid growth of connected devices explained in history of the internet of things (IoT).

Understanding the history of edge computing reveals how modern decentralized infrastructure emerged from decades of technological innovation.

A. The Era of Extreme Centralization – 1950–1970

The starting point in the history of edge computing is the 1950s and 1960s, when computing relied almost entirely on centralized systems.

During this era, organizations used large mainframe computers that processed all data in a single location. In this mainframe architecture, users had to connect to the central computer through terminals.

Although this approach worked for early applications, it had clear drawbacks: limited scalability, long waits as every job competed for the same machine, and heavy dependence on a single central resource.

These early computing environments are explored in greater detail in history of computer hardware, which highlights the technological foundations of modern computing systems.

Centralized systems dominated computing for several decades, but as technology advanced, new architectures began to emerge.

B. The Shift to Client-Server Architecture – 1980–1990

A major transformation in the history of edge computing occurred during the 1980s, when the client-server model began replacing centralized mainframe systems.

In this architecture, client devices handled user interaction while servers managed data storage and processing. This model significantly improved scalability and enabled businesses to build distributed applications.

Because work was split between clients and servers, the model also spread workloads across multiple systems instead of concentrating them on one central machine, making more efficient use of network and computing resources.

Software development methodologies described in history of software engineering also contributed to this transition, enabling developers to design more modular and scalable applications.

This shift represented an important milestone in the evolution of distributed processing, setting the stage for modern distributed architectures.

C. Content Delivery Networks (CDNs) and the First “Edge” – 1990–2000

During the 1990s, the rapid growth of the internet created new challenges in delivering digital content quickly and efficiently.

One solution was the development of content delivery networks (CDNs), which distributed servers across multiple geographic locations.

This innovation represented one of the first practical implementations of edge computing concepts.

Akamai and the Birth of CDNs

Akamai, founded in 1998, built one of the first large-scale CDNs. Its network cached copies of web content on servers close to users, reducing latency and improving website performance.
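The caching behavior described here can be sketched in a few lines. This is a toy illustration under simplifying assumptions, not how Akamai's network actually works: the `EdgeCache` class, the `fetch_from_origin` callback, and the fixed time-to-live (TTL) are hypothetical. Real CDNs also honor cache-control headers, evict old entries, and route each user to a nearby node.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class EdgeCache:
    """Toy CDN edge node: serves cached copies and contacts the origin only on a miss."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes], ttl_seconds: float = 60.0):
        self.fetch_from_origin = fetch_from_origin  # hypothetical slow call to the distant origin server
        self.ttl_seconds = ttl_seconds              # how long a cached copy counts as fresh
        self._store: Dict[str, Tuple[bytes, float]] = {}  # url -> (content, time it was cached)
        self.hits = 0
        self.misses = 0

    def get(self, url: str, now: Optional[float] = None) -> bytes:
        now = time.monotonic() if now is None else now
        entry = self._store.get(url)
        if entry is not None and now - entry[1] < self.ttl_seconds:
            self.hits += 1  # fresh copy at the edge: no round trip to the origin
            return entry[0]
        self.misses += 1    # missing or stale: fetch once from the origin and keep a copy
        content = self.fetch_from_origin(url)
        self._store[url] = (content, now)
        return content


# Usage: explicit timestamps (in seconds) keep the example deterministic.
def origin(url: str) -> bytes:
    return b"page:" + url.encode()


edge = EdgeCache(origin, ttl_seconds=60)
edge.get("/index.html", now=0)    # miss: fetched from the origin
edge.get("/index.html", now=10)   # hit: served from the edge
edge.get("/index.html", now=120)  # stale after 60 s: fetched again
print(edge.hits, edge.misses)     # 1 2
```

Only the first request and the request after the copy expires travel to the origin; every other request is answered nearby, which is where the latency savings come from.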

The development of CDNs marked an important chapter in the history of edge computing, as it demonstrated how distributed servers could improve speed and reliability.

This milestone also connects to the growth of global connectivity explained in history of internet.

CDNs introduced the idea of processing and delivering content closer to users—an idea that would later become central to edge computing.

D. Cloud Dominance and the Emergence of IoT – 2005–2010

The mid-2000s saw the rapid rise of cloud computing platforms. Cloud systems allowed businesses to store and process large volumes of data using centralized data centers.

However, the rapid growth of IoT and edge devices created new challenges. Billions of connected sensors, cameras, and smart devices generated enormous amounts of data.

Sending all this data to centralized cloud servers increased network congestion and processing delays.

This problem exposed the limitations of cloud-only architectures and accelerated the development of edge computing.

E. Fog Computing – 2012–2015

As cloud limitations became evident, researchers introduced new distributed architectures designed to process data closer to the source.

One of the most influential ideas during this stage of the history of edge computing was fog computing.

Fog computing extended cloud capabilities by placing processing resources closer to network devices.

Cisco and Fog Computing – 2012

In 2012, Cisco formally introduced the concept of fog computing.

Fog systems use gateway devices and intermediate computing layers to process data between the cloud and end devices.

This architecture reduced network latency, making it well suited to applications such as autonomous vehicles, industrial automation, and smart cities.
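To make the gateway's role concrete, here is a minimal sketch, assuming a gateway that receives a window of raw temperature readings and forwards only a summary upstream. The function `summarize_window`, the alert threshold, and the sample values are hypothetical; the sketch shows the general pattern (process locally, send less), not Cisco's fog platform.

```python
from statistics import mean
from typing import Dict, List


def summarize_window(readings: List[float], alert_threshold: float) -> Dict[str, object]:
    """Reduce one window of raw sensor readings to a compact summary for the cloud.

    Intended to run on a (hypothetical) fog gateway: the raw samples stay local,
    and only this summary, including any threshold alert, travels upstream.
    """
    peak = max(readings)
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": peak,
        # The alert is decided at the gateway, without waiting on a cloud round trip.
        "alert": peak > alert_threshold,
    }


# Ten temperature samples from one sensor over a short window (made-up values).
window = [21.0, 21.2, 21.1, 21.3, 21.2, 21.4, 21.3, 29.8, 21.2, 21.1]
summary = summarize_window(window, alert_threshold=28.0)
print(summary)
```

Ten raw readings become one small record, and the alert decision happens next to the sensor. Applied across thousands of sensors, this kind of local filtering is one way fog and edge layers ease the congestion that cloud-only designs ran into.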

Fog computing became an important milestone in the history of edge computing, bridging the gap between centralized cloud systems and distributed edge processing.

F. The Modern Edge and 5G Integration – 2018–2024

The most recent phase in the history of edge computing began in the late 2010s, with the rapid development of 5G network architecture and high-performance edge devices.

Technologies such as multi-access edge computing (MEC) allow telecom providers to deploy computing resources directly within network infrastructure.

These systems enable low-latency, real-time data analytics, which is essential for applications like autonomous vehicles, smart manufacturing, and augmented reality.

Edge computing also supports modern artificial intelligence applications, in which AI models process data locally instead of relying entirely on remote cloud servers.

Edge processing also complements the large-scale analytics systems described in history of big data: filtering and summarizing data near its source reduces the volume that must be moved to central data stores.

G. The Future: Toward a Seamless Continuum – 2025 and Beyond

Looking ahead, edge computing is expected to evolve into a seamless continuum of cloud, edge, and device-level computing.

Future systems will likely integrate:

- decentralized infrastructure for large-scale distributed processing

- advanced edge AI for intelligent autonomous systems

- improved bandwidth optimization across networks

- enhanced real-time analytics for smart cities and industrial automation

Edge computing will become a critical foundation for the next generation of digital infrastructure.

Frequently Asked Questions (FAQs)

What is edge computing?

Edge computing is a distributed computing model where data processing occurs closer to the data source rather than in centralized cloud servers.

Why is edge computing important?

Edge computing reduces latency, improves bandwidth efficiency, and enables real-time data processing for connected devices.

How is edge computing different from cloud computing?

Cloud computing relies on centralized data centers, while edge computing processes data locally, on or near the devices that generate it.

What technologies support edge computing?

Technologies include fog computing, multi-access edge computing, IoT devices, and high-speed 5G networks.

Conclusion

The history of edge computing illustrates the transformation of computing infrastructure from centralized mainframe systems to highly distributed architectures. Over several decades, innovations such as client-server models, content delivery networks, fog computing, and 5G integration have enabled faster and more efficient data processing.

As digital ecosystems continue expanding with billions of connected devices, edge computing will play a vital role in enabling intelligent applications, decentralized infrastructure, and real-time analytics across industries worldwide.