Introduction

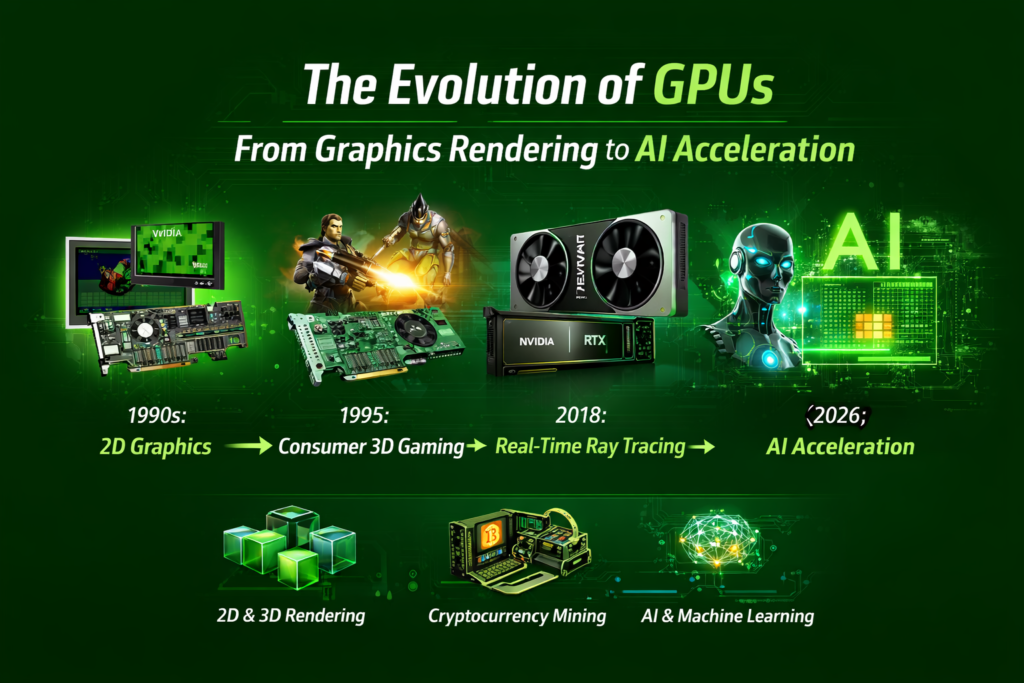

The evolution of GPUs is one of the most consequential technological journeys in modern computing. Graphics Processing Units (GPUs) began as simple chips designed to accelerate image rendering for video games. Today, they are massively parallel processors that power artificial intelligence, scientific simulations, and deep learning models.

Originally built to improve frame rates and visual effects in gaming, GPUs evolved into processors optimized for parallel workloads. Unlike CPUs, which are optimized for fast sequential execution on a small number of cores, GPUs excel at running thousands of threads simultaneously. This capability makes them well suited to workloads such as neural networks, data analysis, and high-performance computing.

The evolution of GPUs has been driven by innovations in semiconductor engineering, graphics algorithms, and software frameworks. Early graphics cards relied on rasterization and simple fixed pipelines, whereas modern GPUs integrate tensor cores, ray tracing hardware, and AI-assisted rendering techniques such as DLSS.

This transformation is closely connected with advancements in computing architecture and chip design. Progress in transistor technology, traced in the evolution of transistors, has allowed GPUs to pack billions of transistors onto a single chip, dramatically increasing performance, which is commonly measured in TFLOPS (trillions of floating-point operations per second).
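To make the TFLOPS figure concrete, here is a rough sketch of how a theoretical peak is commonly estimated: cores times clock speed times operations per cycle. It assumes each core completes one fused multiply-add (counted as two floating-point operations) per cycle. The core count and clock speed are hypothetical and do not describe a real product.

```python
def peak_tflops(cores, clock_ghz, flops_per_core_per_cycle=2):
    """Estimate theoretical peak throughput in TFLOPS.

    Assumption: every core retires one fused multiply-add
    (2 floating-point operations) on every clock cycle.
    """
    flops_per_second = cores * clock_ghz * 1e9 * flops_per_core_per_cycle
    return flops_per_second / 1e12


# Hypothetical GPU: 10,000 cores running at 2.0 GHz.
print(peak_tflops(10_000, 2.0))  # 40.0
```

Real workloads rarely reach this peak, because memory bandwidth and scheduling limits usually get in the way first.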

From driving arcade machines to powering ChatGPT and Gemini, the evolution of GPUs is a remarkable story of technological innovation and growing computing power.

A. The Early Days of 2D Graphics Accelerators (1970 – 1995)

During the earliest stage of the evolution of GPUs, computers lacked dedicated hardware for graphics rendering. Instead, CPUs handled most visual calculations, which limited graphical performance.

Before the GPU: Framebuffers and Video Display Controllers

In the 1970s and early 1980s, computers used framebuffers and video display controllers to generate images on screens. A framebuffer stored pixel data in memory (the forerunner of today’s VRAM), and the display controller read that data out and transmitted it to the display device.
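The idea of a framebuffer can be sketched in a few lines: pixels live in a flat block of memory, and a "display controller" reads them out row by row. The tiny resolution, the one-byte-per-pixel layout, and the function names are assumptions made for illustration only.

```python
WIDTH, HEIGHT = 8, 4  # tiny hypothetical display

# One byte per pixel, stored row by row (row-major order).
framebuffer = bytearray(WIDTH * HEIGHT)


def set_pixel(x, y, value):
    """Write one pixel's intensity (0-255) into the framebuffer."""
    framebuffer[y * WIDTH + x] = value


def scan_out():
    """Read memory row by row, as a display controller would,
    and render each row as text ('#' for lit, '.' for dark)."""
    rows = []
    for y in range(HEIGHT):
        row = framebuffer[y * WIDTH:(y + 1) * WIDTH]
        rows.append("".join("#" if v else "." for v in row))
    return rows


set_pixel(2, 1, 255)
set_pixel(3, 1, 255)
print("\n".join(scan_out()))
```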

At the time, rendering images required significant CPU resources. As graphical interfaces became more complex, developers realized that specialized hardware was necessary to accelerate rendering tasks.

The first dedicated graphics chips focused primarily on 2D acceleration tasks such as drawing lines, filling shapes, and handling windowed interfaces. These innovations laid the groundwork for the modern GPU pipeline.
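Line drawing is a good example of the work that moved from the CPU into hardware. Below is a minimal sketch of Bresenham's line algorithm, a classic integer-only method for choosing which pixels to light. It is shown here as an illustration of the task, not as a description of any particular chip.

```python
def bresenham_line(x0, y0, x1, y1):
    """Return the integer pixel coordinates along a line,
    using Bresenham's algorithm (integer arithmetic only)."""
    points = []
    dx = abs(x1 - x0)
    dy = -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1   # step direction on the x axis
    sy = 1 if y0 < y1 else -1   # step direction on the y axis
    err = dx + dy               # running error term
    while True:
        points.append((x0, y0))
        if x0 == x1 and y0 == y1:
            break
        e2 = 2 * err
        if e2 >= dy:            # step horizontally
            err += dy
            x0 += sx
        if e2 <= dx:            # step vertically
            err += dx
            y0 += sy
    return points


print(bresenham_line(0, 0, 3, 3))  # [(0, 0), (1, 1), (2, 2), (3, 3)]
```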

This stage in the evolution of GPUs also coincided with developments in the history of computer graphics, where researchers experimented with rasterization techniques and early texture mapping concepts.

Arcade Cabinets and the First 3D Hardware Pushes

The gaming industry played a major role in the early evolution of GPUs. Arcade cabinets in the late 1980s introduced hardware capable of rendering primitive 3D graphics.

Companies like Sega and Namco developed specialized chips designed to accelerate polygon rendering and texture mapping. These systems demonstrated the potential of hardware acceleration in gaming.

Although primitive by today’s standards, these early machines paved the way for consumer graphics cards capable of handling 3D environments.

B. The 3D Gaming Boom and 3dfx Voodoo (1995 – 1999)

The mid-1990s marked a turning point in the evolution of GPUs as 3D gaming became increasingly popular.

The Incredible Evolution of GPUs in Consumer 3D Gaming

During this era, PC gaming experienced explosive growth thanks to titles such as Quake and Unreal. These games required advanced graphics hardware capable of rendering complex environments in real time.

In 1996, 3dfx introduced the Voodoo Graphics card, which dramatically improved performance by accelerating texture mapping, shading, and depth calculations in hardware.

These cards utilized specialized hardware components such as Texture Mapping Units (TMUs) to improve rendering efficiency.

The rapid growth of gaming hardware also influenced the broader history of computer hardware, shaping the direction of PC component development.

NVIDIA GeForce 256: Defining the Term “GPU”

In 1999, NVIDIA introduced the GeForce 256, a graphics card it marketed as the world’s first “GPU,” popularizing the term.

This chip integrated hardware transformation and lighting (T&L), enabling the graphics card to perform complex geometry calculations previously handled by CPUs.
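To show what "transformation and lighting" means in practice, here is a minimal sketch of both steps: moving a vertex with a 4x4 matrix, and computing a simple diffuse (Lambertian) brightness from a surface normal and a light direction. The function names and sample values are assumptions for illustration, and the lighting model is the textbook one rather than the GeForce 256's exact implementation.

```python
def transform(matrix, vertex):
    """Multiply a 4x4 matrix by a homogeneous vertex (x, y, z, w)."""
    return tuple(
        sum(matrix[r][c] * vertex[c] for c in range(4)) for r in range(4)
    )


def diffuse_intensity(normal, light_dir):
    """Lambertian diffuse term: the dot product N . L clamped to [0, 1].
    Assumes both vectors are already normalized."""
    dot = sum(n * l for n, l in zip(normal, light_dir))
    return max(0.0, min(1.0, dot))


# Hypothetical model transform: translate by 2 units along x.
translate = [
    [1, 0, 0, 2],
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 1],
]

print(transform(translate, (1, 1, 1, 1)))        # (3, 1, 1, 1)
print(diffuse_intensity((0, 0, 1), (0, 0, 1)))   # surface facing the light
print(diffuse_intensity((0, 0, 1), (0, 0, -1)))  # surface facing away
```

Every vertex in a scene goes through this kind of arithmetic each frame, which is why moving it off the CPU mattered.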

The GeForce 256 significantly improved performance and established the modern GPU architecture that continues to influence graphics hardware today.

C. Programmable Shaders and the Birth of CUDA (1999 – 2010)

The next stage in the evolution of GPUs introduced programmable graphics pipelines, enabling developers to write custom shader programs.

Moving from Fixed-Function to Programmable Graphics

Earlier GPUs relied on fixed-function pipelines that executed predefined operations. However, the introduction of programmable shaders allowed developers to control rendering operations through custom code.

Shaders enabled advanced visual effects such as dynamic lighting, reflections, and complex materials.
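Conceptually, a pixel (fragment) shader is a small function that runs once per pixel and returns a color. The sketch below imitates that model in plain Python with a hypothetical gradient shader. Real shaders are written in languages such as HLSL or GLSL and run in parallel on the GPU, and this loop assumes a width and height greater than 1.

```python
def gradient_shader(u, v):
    """Hypothetical 'fragment shader': maps normalized coordinates
    (0..1) to an (R, G, B) color."""
    return (int(255 * u), int(255 * v), 128)


def render(width, height, shader):
    """Run the shader once per pixel. A GPU would execute these
    invocations in parallel; here they run one after another."""
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            u = x / (width - 1)
            v = y / (height - 1)
            row.append(shader(u, v))
        image.append(row)
    return image


image = render(4, 4, gradient_shader)
print(image[0][0], image[3][3])  # (0, 0, 128) (255, 255, 128)
```

Swapping in a different shader function changes the whole image without touching the rendering loop, which is the flexibility that fixed-function pipelines lacked.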

These innovations greatly expanded the capabilities of GPUs and played a crucial role in the evolution of 3D rendering.

GPGPU: Using Graphics Cards for General Math Problems

One of the most groundbreaking developments in the evolution of GPUs occurred when researchers realized GPUs could perform general mathematical computations.

This concept, known as General Purpose GPU (GPGPU), allowed graphics cards to accelerate scientific simulations and data analysis.

In 2006, NVIDIA introduced CUDA, a programming platform that enabled developers to write GPU-accelerated applications using extensions to familiar programming languages such as C.

CUDA opened the door for GPUs to power applications beyond gaming, including machine learning and scientific computing.
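The GPGPU programming model can be sketched without a GPU: a "kernel" function describes the work of one thread, and a launch runs it across many thread indices. The Python below is a sequential stand-in that mimics this idea. The `launch` helper and kernel signature are hypothetical and are not part of the CUDA API.

```python
def saxpy_kernel(thread_id, a, x, y, out):
    """Work done by one logical thread: out[i] = a * x[i] + y[i]."""
    if thread_id < len(x):  # bounds check, as real kernels typically do
        out[thread_id] = a * x[thread_id] + y[thread_id]


def launch(kernel, num_threads, *args):
    """Sequential stand-in for a GPU launch. On real hardware the
    threads would run concurrently rather than in a loop."""
    for thread_id in range(num_threads):
        kernel(thread_id, *args)


x = [1.0, 2.0, 3.0, 4.0]
y = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(x)

# Launching more threads than elements is harmless thanks to the bounds check.
launch(saxpy_kernel, 8, 2.0, x, y, out)
print(out)  # [12.0, 24.0, 36.0, 48.0]
```

Because no thread depends on another thread's result, the same kernel scales from four elements to millions.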

This transition also influenced areas such as the history of data science, where GPUs dramatically accelerated large-scale data processing.

D. Ray Tracing and the Cryptomining Craze (2010 – 2020)

The decade between 2010 and 2020 introduced dramatic advancements in GPU architecture and performance.

Real-Time Ray Tracing: Simulating Light Photorealistically

One of the most exciting developments in the evolution of GPUs was real-time ray tracing.

Ray tracing simulates how light interacts with objects in a virtual environment, producing highly realistic reflections, shadows, and lighting effects.
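At the heart of ray tracing is a simple question asked billions of times: does this ray hit this object, and where? The sketch below answers it for a sphere by solving the standard quadratic equation. It assumes the ray direction is a unit vector, and the function name is illustrative only.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the nearest positive hit distance along the ray,
    or None if the ray misses the sphere.

    Assumption: `direction` is a unit vector, so the quadratic's
    leading coefficient is 1.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = 2.0 * sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    discriminant = b * b - 4.0 * c
    if discriminant < 0:
        return None  # no real roots: the ray misses
    root = math.sqrt(discriminant)
    for t in ((-b - root) / 2.0, (-b + root) / 2.0):
        if t > 0:
            return t  # nearest intersection in front of the origin
    return None


# Ray from the origin along +z toward a unit sphere centered at z = 5.
print(ray_sphere_hit((0, 0, 0), (0, 0, 1), (0, 0, 5), 1.0))  # 4.0
```

A full renderer repeats tests like this for every pixel, every bounce, and every object, which is the workload that dedicated ray tracing hardware is built to speed up.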

NVIDIA’s RTX series, launched in 2018, introduced dedicated ray tracing cores capable of accelerating these complex calculations.

Combined with AI-based technologies like DLSS, ray tracing dramatically improved graphical realism in modern video games.

Why GPUs Became the Backbone of Blockchain Technology

Another surprising chapter in the evolution of GPUs was the rise of cryptocurrency mining.

Proof-of-work blockchain networks rely on repeated cryptographic hash calculations, and each candidate hash can be computed independently of the others. GPUs proved ideal for this workload due to their ability to perform thousands of calculations simultaneously.
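A simplified proof-of-work search shows why this workload parallelizes so well: each nonce is tested on its own, so many can be tried at once. The sketch below uses SHA-256 and a "leading zeros" target as a stand-in. Ethereum's mining used a different, memory-hard algorithm (Ethash), and the block data and difficulty here are made up.

```python
import hashlib


def mine(block_data, difficulty, max_nonce=1_000_000):
    """Search for a nonce whose SHA-256 hash (as hex) starts with
    `difficulty` zero digits. Returns (nonce, digest) or None."""
    target_prefix = "0" * difficulty
    for nonce in range(max_nonce):
        # Each attempt depends only on its own nonce, so a GPU could
        # test many nonces in parallel instead of looping.
        digest = hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()
        if digest.startswith(target_prefix):
            return nonce, digest
    return None


result = mine("example block", difficulty=3)
print(result)
```

Each extra zero digit makes the search roughly 16 times harder on average, which is how difficulty scales with available hardware.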

As a result, GPUs became essential tools for mining cryptocurrencies like Ethereum, which remained GPU-minable until its 2022 switch to proof of stake.

This phenomenon connected GPU technology to the history of blockchain technology, demonstrating how graphics processors could be repurposed for entirely different computational tasks.

E. Tensor Cores and the AI Revolution (2020 – 2026)

The most recent chapter in the evolution of GPUs is driven by artificial intelligence and machine learning.

Large Language Models: Why GPUs Power ChatGPT and Gemini

Modern AI models require enormous computational power to train and operate.

GPUs are particularly well-suited for deep learning because neural networks rely heavily on parallel matrix operations.

Technologies such as tensor cores accelerate these operations, enabling GPUs to process massive datasets quickly.
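The core operation behind this is a matrix multiply-accumulate, D = A x B + C, which tensor cores perform on small tiles in hardware. The plain-Python sketch below shows the arithmetic only. The function name is illustrative, and it makes no attempt to model tile sizes or number formats used by real tensor cores.

```python
def matmul_accumulate(a, b, c):
    """Compute D = A x B + C for matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    d = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            # Each output element depends only on one row of A and one
            # column of B, so all of them can be computed in parallel.
            acc = c[i][j]
            for k in range(inner):
                acc += a[i][k] * b[k][j]
            d[i][j] = acc
    return d


a = [[1.0, 2.0], [3.0, 4.0]]
b = [[5.0, 6.0], [7.0, 8.0]]
c = [[1.0, 1.0], [1.0, 1.0]]
print(matmul_accumulate(a, b, c))  # [[20.0, 23.0], [44.0, 51.0]]
```

A neural network layer is largely this operation repeated at enormous scale, with weights as one matrix and activations as the other.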

This is why systems powering ChatGPT, Gemini, and other AI platforms rely heavily on GPU clusters.

The evolution of GPUs has therefore become a central factor in modern AI hardware milestones.

Integrated vs. Discrete GPUs in the Era of Shared Memory

Today’s computing landscape includes two primary types of GPUs: integrated GPUs and discrete GPUs.

Integrated GPUs are built into CPUs and share system memory, making them efficient for everyday tasks.

Discrete GPUs, on the other hand, include dedicated VRAM and significantly more processing cores, making them ideal for gaming, AI training, and scientific computing.

Advancements in memory systems, alongside the rise of storage technology, have also contributed to the increasing efficiency of GPU architectures.

Frequently Asked Questions (FAQs)

What is a GPU?

A GPU (Graphics Processing Unit) is a specialized processor designed to accelerate graphics rendering and parallel computations.

Why are GPUs important for artificial intelligence?

GPUs excel at parallel processing, making them ideal for training neural networks and processing large datasets.

What is the difference between GPU and CPU?

CPUs handle sequential tasks efficiently, while GPUs are optimized for parallel workloads involving thousands of simultaneous operations.

What company invented the GPU?

NVIDIA popularized the term GPU in 1999 with the release of the GeForce 256.

Why are GPUs used for cryptocurrency mining?

Cryptocurrency mining requires massive parallel calculations, which GPUs can perform much faster than CPUs.

Conclusion: The AI Acceleration Era and GPU Innovation (2020 – 2026)

The evolution of GPUs has transformed graphics processors from simple rendering devices into powerful engines driving modern computing innovation.

From early 2D accelerators to cutting-edge AI accelerators, GPUs have revolutionized industries including gaming, scientific research, machine learning, and blockchain technology. Today’s GPUs contain billions of transistors and thousands of cores (CUDA cores, in NVIDIA’s terminology) capable of performing trillions of calculations per second.

Modern GPUs power everything from photorealistic graphics using ray tracing to large language models that require enormous parallel workload processing. Technologies like tensor cores, neural engines, and AI-based rendering have dramatically improved performance and efficiency.

The next stage in the evolution of GPUs will likely involve chiplet architectures, improved VRAM bandwidth, and deeper integration with CPUs through shared memory systems. As artificial intelligence continues to grow, GPUs will remain essential for powering deep learning systems, data centers, and advanced simulations.

Ultimately, the evolution of GPUs demonstrates how a technology originally designed for video games became one of the most critical components in the future of computing.