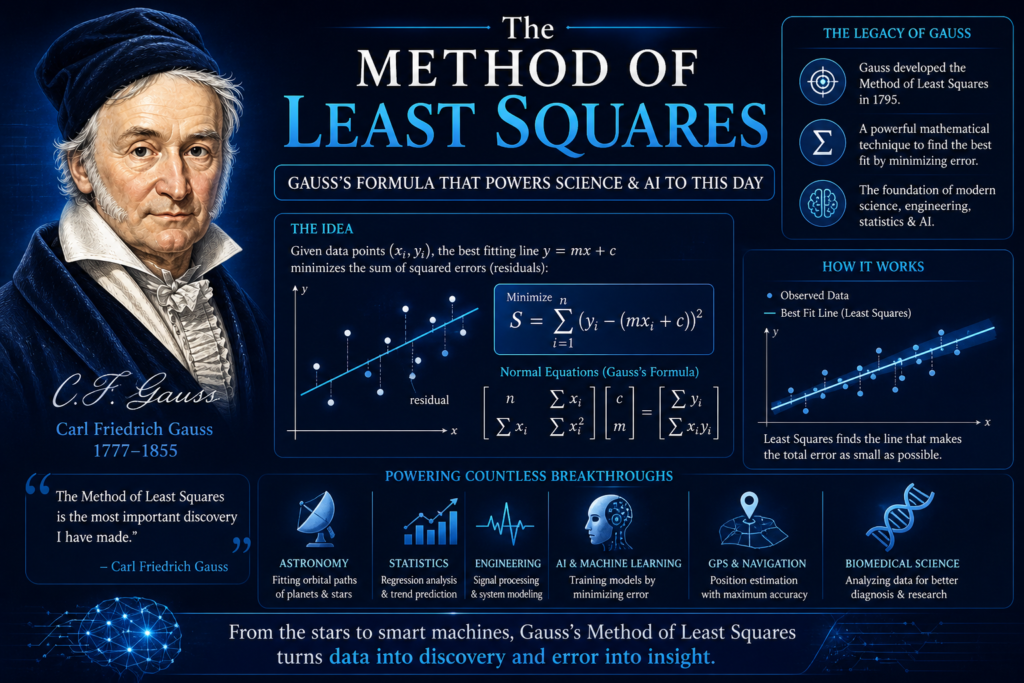

Every day, without knowing it, you trust a mathematical formula. When your GPS estimates your position, when Netflix recommends a movie, or when a lab calibrates a blood test, the underlying software often relies on the same brilliant idea. That idea is the method of least squares. It sounds technical, but its logic is simple and beautiful. How do you find the truth when every measurement contains a little error? How do you draw the best line through a cloud of messy data points? A German mathematician, the prince of mathematics, solved this puzzle over two centuries ago. His solution did not just change astronomy; it laid the foundations of machine learning and modern artificial intelligence. Let us explore the astonishing power of the method of least squares and why it remains the quiet engine of the digital age.

The Problem Every Scientist Faces

Imagine you are trying to measure the speed of sound. You perform ten experiments. Every time, you get a slightly different number because of wind, temperature, or human reaction time. Which number is correct? Do you trust the first, the last, or the average? Before 1805, scientists had no rigorous, published answer. They guessed. They argued. They spent years debating which measurement was “pure.” The world needed a mathematical rule to turn messy residuals (the differences between observed and predicted values) into reliable knowledge. This problem appears everywhere: in regression analysis, in data fitting, and in statistical estimation. The answer came from a young mathematician obsessed with minimizing errors. His name was Carl Friedrich Gauss, and his tool was the method of least squares.

The Birth of a Genius Formula

The story of the method of least squares begins with a lost planet. After the dwarf planet Ceres was discovered in 1801 and then lost in the glare of the Sun, astronomers needed to predict its orbit from limited data. Gauss realized that every astronomical observation contained small, random errors. He assumed that small errors are more likely than large errors and that positive and negative errors are equally likely. From these reasonable assumptions, he derived a revolutionary conclusion: the best estimate of the true value is the one that minimizes the sum of the squared errors. In mathematical notation, if you have a set of observed values $y_1, \dots, y_n$ and you want to find the predicted values $\hat{y}_1, \dots, \hat{y}_n$, you minimize:

$$S = \sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2$$

For linear regression, where you are fitting a straight line to data, the method of least squares finds the slope and intercept that make $S$ as small as possible. This simple equation changed everything. It turned residuals from a nuisance into a precise mathematical target.
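To make the idea concrete, here is a minimal sketch of the simplest case, where every prediction is the same single number (one estimate for a repeated measurement). The readings are made-up values for illustration, and the helper name `sum_squared_errors` is hypothetical. The sketch checks numerically that the plain average is the estimate with the smallest sum of squared errors.

```python
from statistics import mean

def sum_squared_errors(observations, estimate):
    """Sum of squared differences between each observation and one candidate estimate."""
    return sum((y - estimate) ** 2 for y in observations)

# Hypothetical speed-of-sound readings in m/s (made up for illustration).
readings = [343.2, 342.8, 343.9, 342.5, 343.6]

# The least squares estimate of a single repeated quantity is the average.
best = mean(readings)

# Brute-force check: scan candidate estimates on a 0.01 m/s grid around the data
# and keep the one with the smallest sum of squared errors.
candidates = [342.0 + 0.01 * k for k in range(201)]
grid_best = min(candidates, key=lambda c: sum_squared_errors(readings, c))

print(f"average of readings:      {best:.2f}")
print(f"best candidate on a grid: {grid_best:.2f}")
```

The two printed values should agree to within the grid spacing, which is one way to see why averaging repeated measurements is a special case of least squares.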

How the Mathematics Actually Works

Let us go deeper into the math behind the method of least squares. Suppose you have three data points $(x_1, y_1)$, $(x_2, y_2)$, $(x_3, y_3)$ that roughly follow a line $y = ax + b$, with slope $a$ and intercept $b$. You want to find the line that best fits these points. For each point $(x_i, y_i)$, the vertical distance between the point and the line is called the residual $r_i = y_i - (a x_i + b)$. Some residuals are positive (point above the line), some are negative (point below the line). If you simply added them up, the positives and negatives would cancel, giving a false impression of accuracy. Gauss’s brilliant move was to square each residual. Squaring makes all residuals non-negative and heavily penalizes large errors. The cost function (the quantity we want to minimize) becomes:

$$S(a, b) = \sum_{i=1}^{n} r_i^2 = \sum_{i=1}^{n} \bigl(y_i - (a x_i + b)\bigr)^2$$

To find the best a and b, you take partial derivatives of this cost function with respect to a and b, set them to zero, and solve the resulting equations. This produces the famous “normal equations.” For a straight line they can be solved by hand. For very large datasets, solving them directly can become computationally expensive, so iterative algorithms like gradient descent are often used instead. This is the core of optimization algorithms and predictive modeling.
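The derivative step above can be sketched in a few lines. This assumes the line is written as y = a*x + b, with a as the slope and b as the intercept (the text names a and b, but that orientation is an assumption here). Setting the two partial derivatives to zero gives a closed-form slope and intercept. The function name `fit_line` and the three data points are hypothetical, chosen only for illustration.

```python
def fit_line(xs, ys):
    """Return (a, b) minimizing sum((y - (a*x + b))**2), from the two normal equations."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Spread of x around its mean, and co-variation of x with y.
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical; the slope is undefined")
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    a = sxy / sxx            # slope, from dS/da = 0
    b = y_bar - a * x_bar    # intercept, from dS/db = 0
    return a, b

# Three made-up points that roughly follow a line.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.1, 5.9]
a, b = fit_line(xs, ys)
residuals = [y - (a * x + b) for x, y in zip(xs, ys)]

print(f"slope a = {a:.3f}, intercept b = {b:.3f}")
print("residuals:", [round(r, 3) for r in residuals])
```

One detail worth noticing: the residuals of the fitted line sum to zero up to rounding. That is a direct consequence of the intercept equation, not a coincidence of this data.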

Gauss vs. Legendre: A Battle for Credit

The history of the method of least squares contains a famous rivalry. In 1805, the French mathematician Adrien-Marie Legendre published the method in an appendix to a book on determining the orbits of comets. He was the first to call it the “method of least squares” and showed how to apply it. When Gauss published his own account in 1809, he referred to the method as “our principle” and stated that he had been using it since 1795, a full decade before Legendre’s book. Legendre was deeply offended by this claim of priority. Many historians now accept that Gauss probably did arrive at the method first, and he is commonly credited with using it to predict the orbit of Ceres in 1801. However, Legendre published first. Today, both men receive credit. But the mathematical world acknowledges that Gauss connected the method of least squares to probability theory, explaining why it works so well. Legendre presented it as a practical technique; Gauss saw it as a fundamental law of statistical estimation.

The Deep Connection to Probability

Why does squaring the errors produce the best result? Why not use absolute values or cube the errors? Gauss provided a justification using probability theory. He assumed that the errors follow the normal (Gaussian) distribution, the famous bell curve. He then asked: what values of the unknown parameters make the observed data most probable? This is called maximum likelihood estimation. When Gauss solved this problem for normally distributed errors, the method of least squares popped out naturally. In other words, the method of least squares is not an arbitrary rule. It is the maximum likelihood method when your errors are independent and normally distributed with zero mean and constant variance. This connection between regression analysis and probability turned the method of least squares from a useful trick into a cornerstone of inferential statistics.
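The step from the bell curve to squared errors can be written out briefly. This is a sketch under stated assumptions: each observation is $y_i = f(x_i; \theta) + \varepsilon_i$, the errors $\varepsilon_i$ are independent and normal with mean zero and a common variance $\sigma^2$, and the symbols $f$, $\theta$, and $\sigma$ are introduced here for illustration.

```latex
% Likelihood of the data under independent normal errors
L(\theta) = \prod_{i=1}^{n} \frac{1}{\sqrt{2\pi\sigma^2}}
            \exp\!\left( -\frac{\bigl(y_i - f(x_i;\theta)\bigr)^2}{2\sigma^2} \right)

% Taking the logarithm turns the product into a sum
\log L(\theta) = -\frac{n}{2}\log\!\left(2\pi\sigma^2\right)
                 - \frac{1}{2\sigma^2} \sum_{i=1}^{n} \bigl(y_i - f(x_i;\theta)\bigr)^2

% The first term does not depend on \theta, so
\arg\max_{\theta} \log L(\theta)
  = \arg\min_{\theta} \sum_{i=1}^{n} \bigl(y_i - f(x_i;\theta)\bigr)^2
```

Maximizing the likelihood is therefore the same as minimizing the sum of squared residuals. If the errors followed a different distribution, the same argument would lead to a different cost function, which is why the normality assumption matters.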

From Astronomy to Everywhere

The method of least squares spread rapidly through 19th century science. In geodesy, Gauss used his own method in the survey of the Kingdom of Hanover, adjusting networks of triangulation measurements made over the curved surface of the Earth. In physics, researchers used the method of least squares to estimate fundamental constants such as the speed of light and, later, the charge of the electron. In biology, Francis Galton’s studies of heredity described regression toward the mean, which is where the term regression analysis comes from. By the early 20th century, the method of least squares had become a standard tool for curve fitting in laboratories around the world. It handled overdetermined systems (where you have more equations than unknowns) with elegance and efficiency. Few other methods offered the same combination of simplicity, speed, and mathematical rigor.

The Mathematics of Overdetermined Systems

Let us look at a classic overdetermined system. Suppose you have 100 measurements but only 2 unknown parameters (like the slope and intercept of a line). You cannot satisfy all 100 equations perfectly because the measurements contain errors. The method of least squares finds the best compromise. In matrix notation, you write the system as $X\beta = y$, where $X$ is a matrix of data, $\beta$ is the vector of unknown parameters, and $y$ is the vector of observations. Because the system has no exact solution, you instead solve the “normal equations”:

$$X^{\top} X \beta = X^{\top} y$$

The solution is $\hat{\beta} = (X^{\top} X)^{-1} X^{\top} y$, provided $X^{\top} X$ is invertible. This formula is taught in virtually every university statistics course. It is the foundation of linear regression and predictive modeling. Without the method of least squares, building predictive models from noisy data would be far harder. Every time a data scientist types lm(y ~ x) in R or fits sklearn.linear_model.LinearRegression in Python, they are solving the same least squares problem Gauss posed 200 years ago.
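As an illustration of the matrix form, here is a minimal pure-Python sketch that builds and solves the normal equations for 100 measurements and 2 unknowns. It writes the data matrix as `X`, the parameter vector as `beta`, and the observations as `y`; those names, the helper functions, and the synthetic data are assumptions made for this example. Numerical libraries usually avoid forming the product of X-transpose and X explicitly and prefer QR or SVD factorizations for stability, so treat this as a teaching sketch rather than production code.

```python
def solve_linear_system(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(A)
    # Augmented matrix, so row operations update both sides together.
    M = [list(map(float, row)) + [float(rhs)] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular or nearly singular")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x

def least_squares(X, y):
    """Return beta solving the normal equations (X^T X) beta = X^T y."""
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve_linear_system(xtx, xty)

# 100 made-up measurements of y = 1 + 2x with 2 unknowns (intercept, slope).
# The "noise" is a deterministic alternating +/-0.5 so the example is reproducible.
xs = [i / 10 for i in range(100)]
ys = [1.0 + 2.0 * x + (0.5 if i % 2 == 0 else -0.5) for i, x in enumerate(xs)]
X = [[1.0, x] for x in xs]  # the column of ones carries the intercept
beta = least_squares(X, ys)

print(f"intercept = {beta[0]:.3f}, slope = {beta[1]:.3f}")
```

No single line passes through all 100 points, yet the recovered intercept and slope land close to the values 1 and 2 used to generate the data.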

How the Method of Least Squares Powers AI

The connection between the method of least squares and modern artificial intelligence is deep and direct. Most machine learning algorithms are built on the concept of a cost function. The algorithm tries to minimize the difference between its predictions and the true answers. For linear regression (the simplest form of machine learning), the cost function is exactly the sum of squared errors. For more complex models like neural networks, the cost function is often the mean squared error or a related loss derived from the same maximum likelihood reasoning. Gradient descent, the family of algorithms that trains most deep learning models, dates back to Cauchy in 1847 and minimizes such functions step by step instead of solving equations in closed form. The method of least squares thus provides much of the theoretical foundation for how models are fitted and evaluated. When you hear about “training data” and “loss functions,” you are hearing echoes of Gauss.
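To show how an iterative learner arrives at the same answer as the classical formula, here is a minimal sketch of gradient descent on the mean squared error of a straight line. It assumes the model y = a*x + b. The function name, learning rate, step count, and the three data points are illustrative choices, not values taken from any particular library.

```python
def gradient_descent_line(xs, ys, learning_rate=0.05, steps=5000):
    """Fit y ~ a*x + b by gradient descent on the mean squared error."""
    n = len(xs)
    a, b = 0.0, 0.0  # start from a flat line through the origin
    for _ in range(steps):
        # Prediction errors (predicted minus observed) under the current parameters.
        errors = [(a * x + b) - y for x, y in zip(xs, ys)]
        # Partial derivatives of (1/n) * sum(errors**2) with respect to a and b.
        grad_a = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2.0 / n) * sum(errors)
        # Step downhill along the negative gradient.
        a -= learning_rate * grad_a
        b -= learning_rate * grad_b
    return a, b

# Three made-up points that roughly follow a line.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.1, 5.9]
a, b = gradient_descent_line(xs, ys)

print(f"slope a = {a:.4f}, intercept b = {b:.4f}")
```

For this data the closed-form least squares line has slope 1.95 and intercept 0.1, and the iterative result converges to the same values. The learning rate matters: if it is too large for the data, the updates diverge instead of settling.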

Real World Applications You Use Daily

You encounter the method of least squares many times every day, usually without noticing. When your smartphone predicts the next word you will type, a statistical model trained by minimizing a loss function (a direct descendant of the least squares idea) made that guess. When Amazon suggests products you might like, predictive modeling algorithms, some of them based on least squares, analyzed your purchase history. When weather forecasters predict tomorrow’s temperature, they run numerical models that use optimization algorithms descended from Gauss’s work. Even the regression models and covariance estimates used in portfolio optimization on Wall Street draw on the method of least squares. It is hard to overstate how deeply this formula is woven into the fabric of modern technology. From regression analysis in medical trials to curve fitting in satellite trajectories, the method of least squares is the quiet hero.

Common Misunderstandings and Limitations

Despite its power, the method of least squares has limitations. It is very sensitive to outliers (extreme data points). A single bad measurement can completely distort the result. That is why data scientists often clean their training data before applying least squares. The method also assumes that the errors are independent and have constant variance. If these assumptions are violated (for example, in financial data where volatility changes over time), least squares can give misleading results. In those cases, statisticians use robust regression or weighted least squares. But for the vast majority of scientific and engineering problems, the method of least squares remains the gold standard. Its computational efficiency is hard to beat.
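The outlier problem and the weighted least squares remedy can be seen in a small sketch. The data below is made up: four points lie exactly on y = 2x + 1 and the fifth is a gross error. The function name `fit_line_weighted` is hypothetical, and setting the outlier's weight to zero is an illustrative extreme. In practice, weights are often chosen as the inverse of each measurement's variance.

```python
def fit_line_weighted(xs, ys, weights):
    """Return (a, b) minimizing sum(w * (y - (a*x + b))**2) over the data."""
    w_total = sum(weights)
    # Weighted means replace the ordinary means of the unweighted formula.
    x_bar = sum(w * x for w, x in zip(weights, xs)) / w_total
    y_bar = sum(w * y for w, y in zip(weights, ys)) / w_total
    sxx = sum(w * (x - x_bar) ** 2 for w, x in zip(weights, xs))
    sxy = sum(w * (x - x_bar) * (y - y_bar) for w, x, y in zip(weights, xs, ys))
    a = sxy / sxx
    return a, y_bar - a * x_bar

# Made-up data on y = 2x + 1, except the last point, which is a gross outlier.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 30.0]

# Equal weights reproduce ordinary least squares.
ordinary = fit_line_weighted(xs, ys, [1.0, 1.0, 1.0, 1.0, 1.0])
# Weight 0 on the last point removes its influence entirely.
downweighted = fit_line_weighted(xs, ys, [1.0, 1.0, 1.0, 1.0, 0.0])

print(f"ordinary least squares: slope = {ordinary[0]:.2f}, intercept = {ordinary[1]:.2f}")
print(f"outlier down-weighted:  slope = {downweighted[0]:.2f}, intercept = {downweighted[1]:.2f}")
```

A single bad point pulls the ordinary fit to a slope of 6.2 instead of 2, while the weighted fit recovers the underlying line. Deciding which points deserve less weight is the hard part, and it is the question robust regression methods try to answer automatically.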

Frequently Asked Questions (FAQs)

What exactly is the method of least squares in simple terms?

The method of least squares is a mathematical way to find the best fitting line or curve through a set of data points. It works by minimizing the sum of the squared vertical distances between each data point and the line. The “squaring” step ensures that errors on both sides of the line count equally and that large errors are penalized more heavily than small ones. It is the standard tool for data fitting and regression analysis.

Why do we square the errors instead of using absolute values?

Squaring the errors has several advantages. First, it makes all residuals positive, so they do not cancel each other out. Second, squaring penalizes large errors much more than small errors, which pushes the solution to avoid extreme mistakes. Third, the square function is smooth and differentiable, allowing mathematicians to use calculus to find the minimum. Using absolute values creates a sharp V shape that is harder to solve analytically. The method of least squares is mathematically elegant and computationally efficient.

Who invented the method of least squares?

The invention is credited to both Carl Friedrich Gauss (the prince of mathematics) and Adrien-Marie Legendre. Legendre published first, in 1805. However, Gauss stated that he had been using the method since 1795, and he is commonly credited with applying it to the orbit of Ceres in 1801. Many historians accept that Gauss probably discovered it first, but Legendre named it and published it first. Today, both receive recognition.

How is the method of least squares used in machine learning?

The method of least squares is the foundation of linear regression, which is the simplest form of supervised machine learning. In neural networks and deep learning, the cost function that the network tries to minimize is often the mean squared error or a closely related loss. The gradient descent algorithm used to train these networks minimizes the same kind of mathematical function that Gauss studied, only iteratively instead of in closed form. So a great many AI models trace part of their lineage to Gauss’s ideas.

What are the limitations of the method of least squares?

The main limitations are sensitivity to outliers and reliance on assumptions. If your data contains extreme errors (outliers), the method of least squares can give very poor results because squaring amplifies the influence of those outliers. The method also assumes that the errors are independent and have constant variance, and its interpretation as the most probable fit further assumes that they follow a normal (Gaussian) distribution. When these assumptions are violated, other methods like robust regression, weighted least squares, or quantile regression may perform better.

Conclusion

The method of least squares is far more than a dusty equation from a 19th century textbook. It is a living, breathing tool that powers some of the most advanced technologies of our time. From the recovery of Ceres to the training of deep neural networks, this simple idea of minimizing squared errors has proven to be one of the most fertile concepts in the history of science. Carl Friedrich Gauss, the prince of mathematics, gave us a way to find truth inside noise. He showed us that even when the universe gives us messy, imperfect data, we can still extract reliable knowledge. His work on the normal distribution, non-Euclidean geometry, and the Gauss-Weber telegraph would have been enough for ten ordinary scientists. But the method of least squares stands out as perhaps his most practical gift to the world. Just as ancient Greek scientists shaped modern science by creating geometry and logic, Carl Friedrich Gauss gave us the mathematics of uncertainty and data. Every line of best fit, every prediction from an AI model, and every fitted scientific curve is a quiet tribute to his enduring genius.