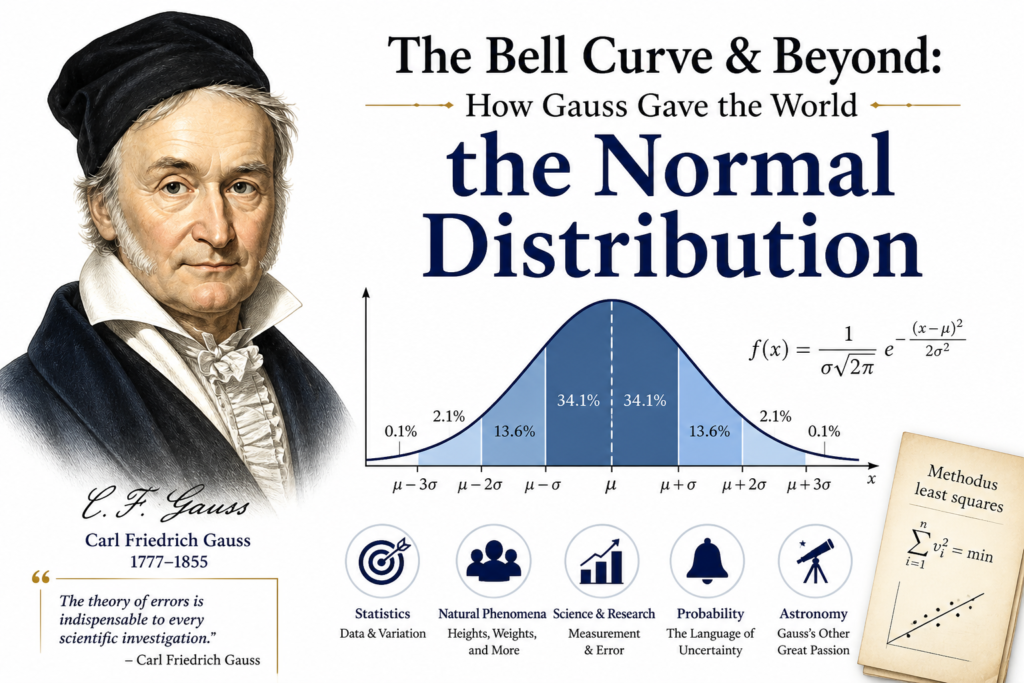

Imagine trying to predict tomorrow’s stock market, the height of a stranger, or the outcome of a national election. The world feels chaotic and random. Yet hidden inside that chaos is a beautiful, predictable pattern that looks like a bell. This pattern is the normal distribution, and it is arguably the single most important concept in statistics. Quality control in factories, the analysis of medical trials, and the scoring of standardized tests all lean on it. This is the story of how a German genius, the prince of mathematics, looked at random errors and saw a universal law. We will explore the mathematics behind the curve, how it solved real-world problems like finding a lost planet, and why every aspiring data scientist must master this concept. From the classroom to the frontiers of artificial intelligence, the normal distribution remains the quiet engine of scientific discovery.

The Problem of Random Errors

Before the 19th century, scientists had a massive headache. When they measured the distance between two stars or the length of a metal bar, they never got the same answer twice. Air temperature, human eyesight, and tiny vibrations all created “errors.” The big question was: how do you find the “true” value from a pile of slightly different measurements? Most early scientists simply took the average, but they could not prove why this was mathematically correct. They lacked a rigorous model for randomness. Enter Carl Friedrich Gauss, a man obsessed with precision. He did not just want a practical trick; he wanted a mathematical argument that explained the structure of uncertainty. His solution would give the world the normal distribution.

The Mathematical Genius Behind the Curve

What exactly is the normal distribution? Technically, it is a probability density function that describes how the values of a continuous variable are distributed. Most of the observations cluster around the middle (the mean), while fewer and fewer observations appear as you move further away from the center. The famous bell-shaped curve is actually the graph of a specific exponential equation. The formula looks intimidating, but its logic is pure elegance:

$$
f(x) = \frac{1}{\sigma\sqrt{2\pi}} \, e^{-\frac{(x-\mu)^2}{2\sigma^2}}
$$

In this equation, the Greek letter μ (mu) represents the mean, or the center of the distribution. The Greek letter σ (sigma) represents the standard deviation, which measures the spread of the data. A small sigma produces a tall, skinny bell curve, meaning very little variation. A large sigma produces a flat, wide curve, meaning high variation. The constant π (pi) and Euler’s number e both appear in the equation, showing how the normal distribution ties geometry, calculus, and probability into one beautiful package. This equation sits at the heart of introductory statistics and is a cornerstone of probability theory.
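As a rough illustration of how μ and σ shape the curve, here is a minimal Python sketch of the density function. The function name `normal_pdf` and the two sigma values are chosen for this example only; they are not from any particular library.

```python
import math

def normal_pdf(x, mu, sigma):
    """Density of a normal distribution with mean mu and standard deviation sigma."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    z = (x - mu) / sigma  # distance from the mean in units of sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

# The peak of the bell sits at the mean; its height is 1 / (sigma * sqrt(2 * pi)).
narrow_peak = normal_pdf(0.0, mu=0.0, sigma=0.5)  # small sigma: tall, skinny bell
wide_peak = normal_pdf(0.0, mu=0.0, sigma=2.0)    # large sigma: flat, wide bell

print(f"peak height with sigma=0.5: {narrow_peak:.4f}")
print(f"peak height with sigma=2.0: {wide_peak:.4f}")
```

Because the total area under the curve is always 1, squeezing the curve horizontally (smaller sigma) has to push the peak upward, which is what the two printed values show.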

How Gauss Discovered the Bell Curve

The discovery did not happen in a vacuum. While studying astronomy, Carl Friedrich Gauss needed a way to predict the orbits of celestial bodies. He knew that every measurement contained errors, but he assumed that small errors were more likely than large errors. He also assumed that errors were symmetric (equally likely to be positive or negative). By applying calculus and the principle of maximizing the likelihood of the observed data, he derived the normal distribution. He published this work in 1809 in his treatise Theoria Motus Corporum Coelestium (Theory of the Motion of Heavenly Bodies). The same treatise gave his probabilistic justification for the method of least squares, which Legendre had published as a computational technique in 1805. Gauss showed that, under his assumptions, the normal distribution is not just a convenient guess; it is the only error law that makes the arithmetic mean the most probable true value.
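The link between the normal error law, the arithmetic mean, and least squares can be sketched in a few lines. This is a simplified modern restatement, not Gauss's original argument, and it assumes n independent measurements x_1, ..., x_n of one true value μ with a common standard deviation σ (the symbols n and x_i are introduced here for illustration):

$$
\begin{aligned}
L(\mu) &= \prod_{i=1}^{n} \frac{1}{\sigma\sqrt{2\pi}} \, e^{-\frac{(x_i-\mu)^2}{2\sigma^2}} \\
\ln L(\mu) &= -n \ln\!\left(\sigma\sqrt{2\pi}\right) - \frac{1}{2\sigma^2} \sum_{i=1}^{n} (x_i-\mu)^2 \\
\frac{d}{d\mu} \ln L(\mu) &= \frac{1}{\sigma^2} \sum_{i=1}^{n} (x_i-\mu) = 0
\quad\Longrightarrow\quad
\hat{\mu} = \frac{1}{n} \sum_{i=1}^{n} x_i
\end{aligned}
$$

Maximizing the likelihood is the same as minimizing the sum of squared deviations in the second line, which is why the most probable value under normal errors is both the least squares estimate and the plain average.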

The Central Limit Theorem: The Hidden Magic

Here is the truly astonishing fact about the normal distribution: it shows up everywhere, even when the original data is not normal. This is due to a mathematical result called the Central Limit Theorem. In simple terms, if you take many independent random samples from almost any population with finite variance and calculate the average of each sample, those averages will be approximately normally distributed, and the approximation improves as the sample size grows. This is the secret engine of inferential statistics. It allows pollsters to estimate election results by asking only a thousand people. It allows pharmaceutical companies to test a drug on 500 patients and draw conclusions about a much larger population, provided the sample is representative. The Central Limit Theorem explains why the normal distribution is normal. It is the natural resting state of aggregated randomness. You do not need to force nature to fit the bell curve; nature naturally rings the bell when you listen correctly.
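A small simulation can make the theorem concrete. The sketch below is only an illustration: the exponential population, the sample size of 50, the 5000 repetitions, and the seed are all assumptions picked for this example. It draws samples from a strongly skewed population and checks that the sample averages behave the way a normal curve would predict.

```python
import random
import statistics

random.seed(42)  # fixed seed so the sketch is reproducible

SAMPLE_SIZE = 50     # observations per sample (assumed for this example)
NUM_SAMPLES = 5000   # how many sample averages to collect (assumed)

# Exponential(1) is heavily right-skewed, with mean 1 and standard deviation 1.
sample_means = []
for _ in range(NUM_SAMPLES):
    sample = [random.expovariate(1.0) for _ in range(SAMPLE_SIZE)]
    sample_means.append(statistics.fmean(sample))

center = statistics.fmean(sample_means)
spread = statistics.stdev(sample_means)
predicted_spread = 1.0 / SAMPLE_SIZE ** 0.5  # sigma / sqrt(n)

# A normal curve puts roughly 68% of its area within one standard deviation.
within_one = sum(abs(m - 1.0) <= predicted_spread for m in sample_means) / NUM_SAMPLES

print(f"mean of sample means:   {center:.3f}")
print(f"spread of sample means: {spread:.3f} (theory: {predicted_spread:.3f})")
print(f"share within one sd:    {within_one:.1%}")
```

The raw exponential data looks nothing like a bell, yet the averages cluster symmetrically around 1 with a spread close to σ/√n.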

Gauss vs. Laplace: The Race for the Curve

It would be unfair to mention Carl Friedrich Gauss without acknowledging Pierre-Simon Laplace. While Gauss derived the normal distribution from the theory of errors (looking for the most accurate measurement), Laplace arrived at it through an early form of the Central Limit Theorem (looking at sums of random variables). Both giants of mathematics were working on these ideas in the early 1800s. The curve is usually called the “Gaussian distribution” in physics and engineering, and it sometimes appears as the “Laplace-Gauss curve” in older statistical literature. However, history generally credits Gauss with the wider application because he connected the normal distribution directly to error analysis and astronomy. His work on Ceres (relocating the lost dwarf planet after astronomers had lost track of it) demonstrated the practical power of careful error analysis so dramatically that the scientific world never looked back.

Real World Applications: From IQ to Quality Control

Once you learn to recognize the normal distribution, you see it everywhere. Human characteristics such as adult height are approximately bell-shaped within a given population, and IQ tests are deliberately scaled so that scores follow a bell curve. In a large population, most people score near the average, while very few are geniuses or have significant intellectual disabilities. In manufacturing, the normal distribution is the basis for Six Sigma quality control. If a factory produces thousands of screws, the lengths will vary randomly around a target value. By estimating the mean and standard deviation from a sample, engineers can predict what fraction of screws will fall outside tolerance without measuring every one. In finance, the normal distribution helps calculate risk. However, finance also shows the limits of the curve (real markets have “fat tails,” meaning extreme events occur more often than the bell curve predicts). Despite this, the normal distribution remains the starting point for statistical modeling and regression analysis.
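To show what the screw calculation might look like, here is a minimal sketch using Python's standard `statistics.NormalDist`. The target length, standard deviation, tolerance band, and batch size are all made-up numbers for illustration.

```python
from statistics import NormalDist

# Hypothetical process: target length 20.00 mm, standard deviation 0.05 mm.
lengths = NormalDist(mu=20.00, sigma=0.05)

# Hypothetical tolerance band: anything outside 19.90 to 20.10 mm is defective.
LOWER_SPEC, UPPER_SPEC = 19.90, 20.10
BATCH_SIZE = 10_000

# Area under the bell curve between the two limits is the share of good screws.
fraction_ok = lengths.cdf(UPPER_SPEC) - lengths.cdf(LOWER_SPEC)
fraction_defective = 1.0 - fraction_ok
expected_defects = fraction_defective * BATCH_SIZE

print(f"fraction defective: {fraction_defective:.4f}")
print(f"expected defects per {BATCH_SIZE} screws: {expected_defects:.0f}")
```

Here the limits sit two standard deviations from the target, so about 4.6% of screws are expected to fall outside them. Tightening the process (a smaller sigma) is what pushes that share toward the very low defect rates Six Sigma aims for.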

The Fast Fourier Transform Connection

There is a deep mathematical connection between the normal distribution and signal processing. The fast Fourier transform (FFT) is an algorithm that converts a signal from its original domain (often time or space) to a representation in the frequency domain; Gauss himself described an early form of it in unpublished work around 1805. Interestingly, the Fourier transform of a Gaussian function is another Gaussian function. This property is sometimes called “self-reciprocity.” It is one reason Gaussian blur is such a common blurring filter in image editing software like Photoshop. When you apply it, each pixel is replaced by a weighted average of its neighbors, with weights taken from the normal curve. This smooths out noise and reduces detail in a statistically natural way. Fourier methods more generally are central to modern digital photography and MRI medical scans.
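The blurring idea can be sketched in one dimension. The code below is a simplified illustration, not how any particular image editor implements its filter; the helper names `gaussian_kernel` and `blur`, the kernel radius, and the edge handling are assumptions made for this example.

```python
import math

def gaussian_kernel(sigma, radius):
    """Weights sampled from the normal curve, normalized so they sum to 1."""
    weights = [math.exp(-(k * k) / (2.0 * sigma * sigma))
               for k in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def blur(signal, kernel):
    """Replace each sample with a Gaussian-weighted average of its neighbors."""
    radius = len(kernel) // 2
    last = len(signal) - 1
    smoothed = []
    for i in range(len(signal)):
        acc = 0.0
        for offset, weight in enumerate(kernel, start=-radius):
            j = min(max(i + offset, 0), last)  # clamp to the nearest edge sample
            acc += weight * signal[j]
        smoothed.append(acc)
    return smoothed

kernel = gaussian_kernel(sigma=1.0, radius=3)
spike = [0.0] * 5 + [1.0] + [0.0] * 5  # a single bright "pixel"
smoothed = blur(spike, kernel)

print([round(v, 3) for v in smoothed])
```

The single spike is spread into a small bell shape while the total brightness stays the same. A 2D image blur applies the same idea along rows and then along columns.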

Common Misconceptions About the Bell Curve

Despite its widespread use, the normal distribution is often misunderstood. First, not everything is normally distributed. Income distribution, for example, is heavily skewed to the right (most people earn little, a few earn a lot). Second, the normal distribution is defined for continuous values that range from negative to positive infinity, but real-world quantities like human height cannot be negative, so the curve can only ever be an approximation for them. Third, people often confuse the bell-shaped curve with the shape of a population pyramid. A true normal distribution is symmetric; the mean and median are exactly the same. If the median is far from the mean, the data is not normal. Understanding these limitations matters in both introductory statistics and advanced data science. The normal distribution is a model, not a law of nature, but it is an incredibly useful one.

The Gaussian Legacy in Modern Science

Today, the influence of Carl Friedrich Gauss extends far beyond the bell curve. His work on magnetism with Wilhelm Weber led to the Gauss-Weber telegraph, a precursor to modern communication. His mathematical work on curved surfaces gave us Gaussian curvature, part of the geometric groundwork that Einstein later drew on for general relativity. His foundational texts on number theory remain essential background for cryptography. However, the normal distribution is arguably his most democratic legacy. You do not need a Ph.D. in mathematics to understand the bell curve. A high school student can calculate a Z-score to see how they performed relative to the class. A business owner can use sampling distribution theory to understand customer satisfaction. Every time you see a grade curve, a political poll, or a “control chart” in a factory, you are seeing the ghost of Gauss.

Frequently Asked Questions (FAQs)

Why is it called the Normal Distribution?

It is called the normal distribution because it was initially thought to be the “normal” or natural state for many types of data. The term was popularized by the statistician Karl Pearson around the turn of the 20th century. Before that, it was known as the “Laplace-Gauss curve” or the “law of errors.” The word “normal” in this context does not mean “ideal” or “healthy”; it means “standard” or “the usual model.”

How is the Normal Distribution used in grading?

In education, “grading on a curve” uses the normal distribution to assign letter grades. The professor calculates the mean score and the standard deviation of the exam. Students who score one standard deviation above the mean might receive an A, while those one standard deviation below might get a C. This method ensures that even if an exam is too hard or too easy, the distribution of grades fits a predetermined pattern (e.g., 10% As, 20% Bs, etc.). However, the expected percentages only hold if the student scores are actually close to normally distributed.
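As a minimal sketch of this kind of curve, the snippet below converts scores to Z-scores and maps them to letters. The exam scores and the cutoffs (A at one standard deviation above the mean, B above the mean, C down to one standard deviation below, D otherwise) are hypothetical; real grading schemes vary widely.

```python
from statistics import NormalDist, fmean, pstdev

# Hypothetical exam scores for a small class.
scores = [52, 61, 64, 68, 70, 71, 73, 75, 78, 82, 85, 91]

mean = fmean(scores)
sd = pstdev(scores)  # population standard deviation of this class

def z_score(score):
    """How many standard deviations a score lies above or below the class mean."""
    return (score - mean) / sd

def letter_grade(score):
    """Map a score to a letter using assumed Z-score cutoffs."""
    z = z_score(score)
    if z >= 1.0:
        return "A"
    if z >= 0.0:
        return "B"
    if z >= -1.0:
        return "C"
    return "D"

for s in scores:
    # Percentile assumes the scores are roughly normal, which may not hold.
    percentile = NormalDist().cdf(z_score(s))
    print(f"score {s}: z = {z_score(s):+.2f}, grade {letter_grade(s)}, "
          f"approx. percentile {percentile:.0%}")
```

With only twelve scores the percentiles are rough at best, which is the practical face of the caveat above: the curve is only as trustworthy as the normality of the underlying scores.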

What is the difference between a Gaussian and a Normal Distribution?

There is no difference. “Gaussian distribution” and “normal distribution” are two names for the exact same thing. “Gaussian” honors Carl Friedrich Gauss (the prince of mathematics), while “normal” is the descriptive term used in social sciences and introductory textbooks. In physics and engineering, you will often hear “Gaussian.” In psychology and business statistics, you will hear “normal.” Both refer to the same bell-shaped curve defined by the mean and standard deviation.

Can the Normal Distribution predict extreme events?

This is a weakness of the model. The normal distribution predicts that extreme events (like a stock market crash or a 1000-year flood) are incredibly rare. In reality, many natural and financial systems have “fat tails,” meaning extreme events happen more frequently than the bell curve predicts. This is why financial risk managers often turn to heavy-tailed distributions (such as Student's t, Cauchy, or Lévy distributions) for risk analysis. For moderate, everyday variation, the normal distribution often works very well. For catastrophic, rare events, it can be dangerously misleading.
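To give a feel for how different the tails can be, the sketch below compares the chance of landing more than k units from the center under a standard normal and a standard Cauchy distribution. The helper names are invented for this example, and the comparison is only indicative: the Cauchy distribution has no finite standard deviation, so its scale unit is not directly equivalent to a sigma.

```python
import math
from statistics import NormalDist

def normal_two_sided_tail(k):
    """P(|Z| > k) for a standard normal variable."""
    return 2.0 * NormalDist().cdf(-k)

def cauchy_two_sided_tail(k):
    """P(|X| > k) for a standard Cauchy variable (location 0, scale 1)."""
    return 1.0 - (2.0 / math.pi) * math.atan(k)

for k in (1, 2, 3, 5):
    normal_p = normal_two_sided_tail(k)
    cauchy_p = cauchy_two_sided_tail(k)
    print(f"k = {k}: normal {normal_p:.2e}, Cauchy {cauchy_p:.2e}")
```

The normal tail collapses extremely fast as k grows, while the Cauchy tail shrinks only slowly. A model that assumes the former when reality looks more like the latter will badly underestimate how often extremes occur.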

How do I know if my data is normally distributed?

There are several ways to check for normality. The easiest visual check is to create a histogram. If the bars form a symmetric, bell-shaped curve with one peak in the middle, the data is plausibly normal. You can also use a Q-Q plot (quantile-quantile plot). If your data points fall roughly along a straight diagonal line, the data is consistent with a normal distribution. Common numerical tests include the Shapiro-Wilk test and the Kolmogorov-Smirnov test. In data science, checking for normality is often the first step before running parametric statistical tests.
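The Q-Q idea can be reduced to a single number: how straight is the line you would see on the plot? The sketch below, using only Python's standard library, computes the correlation between the sorted data and the matching normal quantiles. The function name `qq_correlation`, the plotting positions, and the simulated samples are assumptions for this example, and this is an informal check rather than a formal test like Shapiro-Wilk.

```python
import math
import random
from statistics import NormalDist, fmean

def qq_correlation(data):
    """Pearson correlation between sorted data and standard normal quantiles."""
    ordered = sorted(data)
    n = len(ordered)
    # Plotting positions (i + 0.5) / n stay strictly between 0 and 1.
    theoretical = [NormalDist().inv_cdf((i + 0.5) / n) for i in range(n)]
    mean_x, mean_y = fmean(theoretical), fmean(ordered)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(theoretical, ordered))
    var_x = sum((x - mean_x) ** 2 for x in theoretical)
    var_y = sum((y - mean_y) ** 2 for y in ordered)
    return cov / math.sqrt(var_x * var_y)

random.seed(7)  # fixed seed so the sketch is reproducible
normal_sample = [random.gauss(100.0, 15.0) for _ in range(500)]
skewed_sample = [random.expovariate(1.0) for _ in range(500)]

print(f"normal-looking data: r = {qq_correlation(normal_sample):.4f}")
print(f"right-skewed data:   r = {qq_correlation(skewed_sample):.4f}")
```

A value very close to 1 means the points would hug the diagonal on a Q-Q plot; a visibly lower value signals curvature, as with the skewed sample here. For a decision with a p-value, a dedicated statistical package is the better tool.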

Conclusion

The normal distribution is more than a mathematical equation; it is a lens for seeing the world. It reveals order inside disorder. It turns uncertainty into probability. Carl Friedrich Gauss did not just give us a curve; he gave us a method to measure the unmeasurable. From the orbit of Ceres to the noise in a fiber optic cable, the bell curve provides a shared language between physics, biology, and economics. While no real-world dataset perfectly fits a normal distribution (there are always tiny discrepancies), the model is so powerful and so often close to reality that it has become a foundation of modern statistics. The next time you look at a graph of test scores or a population chart, remember the prince of mathematics and his beautiful bell. The universe may be random, but thanks to Gauss, that randomness has a rhythm. Just as ancient Greek scientists shaped modern science by introducing logic and geometry, Gauss extended their journey by adding the mathematics of uncertainty, bridging the ancient quest for order with the modern age of probability and data.