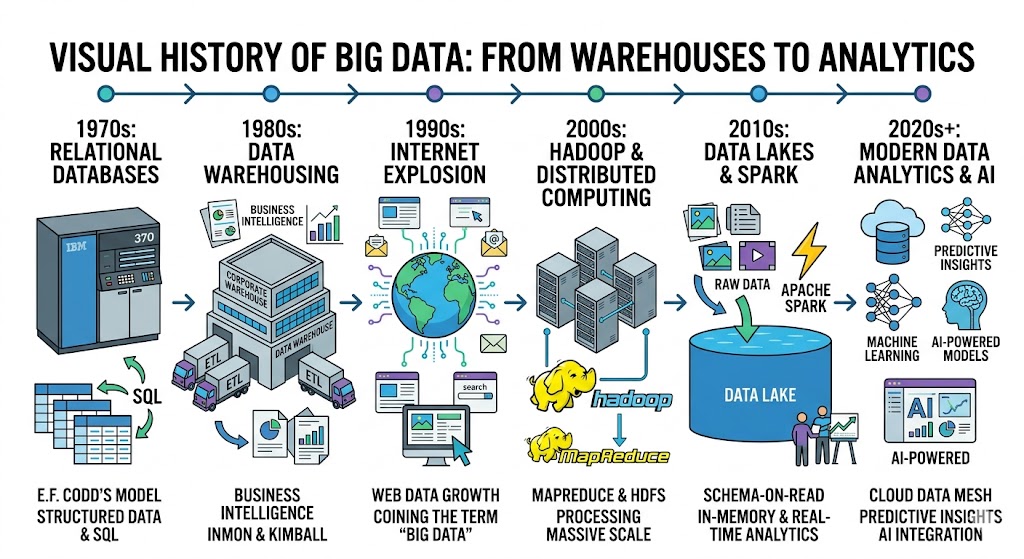

The history of big data is a journey through the evolution of computing, data management, and analytics. In the early days of computing, organizations stored limited amounts of structured information using punch card systems and mainframes. Over time, the rapid growth of digital information created new challenges and opportunities in storing, managing, and analyzing large volumes of data.

Today, big data powers modern businesses, research institutions, and governments through advanced analytics, predictive models, and cloud computing platforms. Understanding the history of big data helps us appreciate how technologies such as relational databases, distributed computing, and modern analytics systems evolved to handle enormous datasets.

This technological journey is closely connected to advancements in computer hardware, networking, and storage, which are discussed in articles like history of computers, history of computer hardware, and history of computer networking.

The Dawn of Data Collection and Early Foundations

The earliest stage of big data history began long before modern computing. In the late 19th and early 20th centuries, organizations relied on punch card systems to store and process information; Herman Hollerith's tabulating machines, for example, were used to process the 1890 US census. These systems handled census data, financial records, and government statistics.

With the introduction of mainframe computing in the 1950s and 1960s, businesses started using electronic systems for data storage and processing. Although these systems could handle more information than manual methods, they were still limited in scalability and flexibility.

During this period, structured data dominated computing environments, laying the groundwork for modern data management systems.

The Birth of the Relational Model and Early Data Warehouses

The development of the relational database management system (RDBMS) during the 1970s transformed the way organizations stored and managed data. These systems organize structured data into tables of rows and columns that can be linked through shared keys.

This innovation became a key milestone in big data history, enabling companies to store larger datasets and retrieve information more effectively.

Modern database concepts were also deeply influenced by programming and software development methodologies discussed in history of programming languages and history of software engineering.

The Codd Revolution – 1970

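A major breakthrough in big data history occurred in 1970, when E. F. Codd, then at IBM, published "A Relational Model of Data for Large Shared Data Banks" and introduced the relational model for databases. In 1985 he followed it with what became known as Codd's 12 rules, which describe what a database system must do to qualify as relational.

The relational model paved the way for Structured Query Language (SQL), developed at IBM in the early 1970s by Donald Chamberlin and Raymond Boyce under the original name SEQUEL. SQL went on to become the standard language for retrieving and manipulating data in relational databases.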

Codd’s ideas formed the foundation for modern database systems used in business intelligence and analytics.
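The declarative style of SQL is easiest to see in a small example. The following is a minimal sketch using Python's built-in `sqlite3` module; the `orders` table and its rows are hypothetical and exist only for illustration.

```python
import sqlite3

# In-memory database; the table name and rows are made up for illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer, amount) VALUES (?, ?)",
    [("alice", 120.0), ("bob", 75.5), ("alice", 30.0)],
)

# Declarative query: it states which result is wanted,
# not how the database should locate and combine the rows.
totals = conn.execute(
    "SELECT customer, SUM(amount) FROM orders "
    "GROUP BY customer ORDER BY customer"
).fetchall()
conn.close()

print(totals)
```

The query names the desired result (total spend per customer) and leaves the access strategy to the database engine, which is the core idea the relational model made practical.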

The Concept of the Data Warehouse – 1980s

In the 1980s, organizations began to separate operational databases from analytical systems. This led to the development of data warehouses, which were designed specifically for large-scale analysis and reporting.

Data warehouses relied on extract, transform, load (ETL) processes to collect data from multiple source systems, convert it into a consistent format, and load it into the warehouse for analysis.

This stage of big data history marked the beginning of structured analytical systems used by enterprises worldwide.
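The three ETL steps can be sketched in a few lines. This is a simplified illustration, not a production pipeline: the two CSV strings stand in for hypothetical operational systems, and the `sales` table, its columns, and the function names are assumptions made for the example.

```python
import csv
import io
import sqlite3

# Hypothetical exports from two operational systems.
STORE_CSV = "sku,qty,price\nA1,2,9.99\nB2,1,24.50\n"
WEB_CSV = "sku,qty,price\nA1,1,9.99\nC3,4,5.00\n"


def extract(text):
    """Extract: parse raw CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows, channel):
    """Transform: cast types, compute revenue, and tag the source channel."""
    out = []
    for r in rows:
        qty = int(r["qty"])
        out.append((channel, r["sku"], qty, round(qty * float(r["price"]), 2)))
    return out


def load(conn, rows):
    """Load: append the cleaned rows to the warehouse table."""
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?, ?)", rows)


warehouse = sqlite3.connect(":memory:")
warehouse.execute(
    "CREATE TABLE sales (channel TEXT, sku TEXT, qty INTEGER, revenue REAL)"
)
for channel, text in (("store", STORE_CSV), ("web", WEB_CSV)):
    load(warehouse, transform(extract(text), channel))

# Analytical query over the combined data.
row_count = warehouse.execute("SELECT COUNT(*) FROM sales").fetchone()[0]
units_by_sku = dict(
    warehouse.execute("SELECT sku, SUM(qty) FROM sales GROUP BY sku")
)
print(row_count, units_by_sku)
```

Keeping the three steps as separate functions mirrors how warehouse pipelines separate source access, cleaning rules, and loading, so each can change independently.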

Defining the Architecture of Big Data – 1990s

During the 1990s, the architecture of modern data systems began to take shape. Concepts like business intelligence (BI) and online analytical processing (OLAP) enabled organizations to analyze historical data for strategic decision-making.

Two important developments shaped warehouse design during this period:

- The Inmon and Kimball methodologies: Bill Inmon advocated a centralized, normalized enterprise warehouse built top-down, while Ralph Kimball advocated a bottom-up approach based on dimensional models

- The use of data marts for departmental analysis

These innovations significantly improved analytical capabilities and helped organizations move toward modern data analytics.
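A core OLAP operation is the roll-up, which aggregates a measure along chosen dimensions. The sketch below is a plain-Python illustration of the idea rather than how an OLAP engine is implemented; the fact rows, dimension names, and the `roll_up` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical fact rows: (year, region, product, revenue).
FACTS = [
    (1998, "EU", "widgets", 100),
    (1998, "US", "widgets", 150),
    (1999, "EU", "gadgets", 80),
    (1999, "US", "widgets", 200),
]
DIMENSIONS = ("year", "region", "product")


def roll_up(facts, keep):
    """Sum revenue over every dimension not listed in `keep`."""
    idx = [DIMENSIONS.index(d) for d in keep]
    totals = defaultdict(int)
    for row in facts:
        key = tuple(row[i] for i in idx)
        totals[key] += row[-1]  # the last column is the revenue measure
    return dict(totals)


by_year = roll_up(FACTS, ["year"])
by_region_product = roll_up(FACTS, ["region", "product"])
print(by_year)
print(by_region_product)
```

Choosing different `keep` lists corresponds to viewing the same facts at different levels of detail, which is what BI tools expose as drill-down and roll-up.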

The Internet Explosion and Big Data – 2000s

The rapid expansion of the internet in the early 2000s dramatically changed the data landscape. Online platforms, e-commerce websites, and digital services generated massive amounts of data every second.

This growth in digital information is closely related to the development of the internet itself, as explained in history of internet.

Organizations suddenly faced new challenges in storing and processing enormous datasets.

The Rise of Unstructured Data

Unlike traditional databases, modern systems began handling unstructured data such as images, videos, emails, and social media posts. Semi-structured formats such as JSON and XML also became common.

This new data environment forced companies to rethink traditional data architectures and adopt more scalable solutions.

The Coining of the Term – 2005

Around 2005, the term "big data" became widely used. It is commonly characterized by the three Vs (the volume, velocity, and variety of the information being generated), a framing that traces back to a 2001 report by analyst Doug Laney.

This concept captured the challenges of handling extremely large datasets that could not be processed using traditional database systems.

The Hadoop Era and Distributed Computing – 2006

A major milestone in big data history occurred with the development of distributed computing frameworks. These systems allowed data to be processed across multiple machines simultaneously.

This shift toward distributed computing helped organizations analyze massive datasets efficiently.

Google's GFS and MapReduce – 2003–2004

Before Hadoop became popular, Google developed the Google File System (GFS) and the MapReduce framework, describing them in papers published in 2003 and 2004 respectively.

These technologies demonstrated how distributed systems could process massive datasets across thousands of servers.
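The MapReduce programming model can be illustrated with the classic word-count example. This is a single-process sketch of the map, shuffle, and reduce phases, not Google's implementation; in a real cluster each phase runs in parallel across many machines. The function names and input documents are illustrative.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine the values for one key into a single result."""
    return key, sum(values)


documents = ["big data is big", "data moves fast"]

# Each document could be mapped on a different machine.
mapped = [pair for doc in documents for pair in map_phase(doc)]
# Each key could be reduced on a different machine.
word_counts = dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())
print(word_counts)
```

Because map calls are independent per document and reduce calls are independent per key, the work can be spread over as many servers as there are input splits and keys.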

The Apache Hadoop Revolution – 2006

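Inspired by Google's research, the Apache Hadoop project was introduced in 2006 by Doug Cutting and Mike Cafarella. Hadoop used the Hadoop Distributed File System (HDFS) to store large datasets across clusters of computers, together with an open-source implementation of MapReduce to process them.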

This technology became a cornerstone of big data history, enabling organizations to process petabytes of data efficiently.

The Rise of Data Lakes and Modern Data Analytics – 2010s

As data volumes continued to grow, organizations adopted data lakes to store raw datasets in their native formats.

The debate of data lakes vs. data warehouses became central to modern data architecture discussions.

This stage of big data history also coincided with advancements in analytics and data science, as explored in history of data science.

The Data Lake

A data lake allows organizations to store structured, semi-structured, and unstructured data in a single repository. Unlike traditional warehouses, data lakes store information in raw form until it is needed for analysis.
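Storing data raw and interpreting it only when it is read is often called schema-on-read. The sketch below illustrates the idea with JSON lines of varying shape; the event records, field names, and the `read_purchases` helper are hypothetical.

```python
import json

# Raw events kept exactly as they arrived; shapes differ between records.
RAW_EVENTS = [
    '{"type": "click", "user": "u1", "page": "/home"}',
    '{"type": "purchase", "user": "u2", "amount": "19.90"}',
    '{"type": "purchase", "user": "u1", "amount": "5.10", "coupon": "SPRING"}',
]


def read_purchases(lines):
    """Apply a schema at read time: keep purchases, cast amount to float."""
    for line in lines:
        record = json.loads(line)
        if record.get("type") == "purchase":
            yield {"user": record["user"], "amount": float(record["amount"])}


purchases = list(read_purchases(RAW_EVENTS))
print(purchases)
```

A warehouse would instead enforce the purchase schema before loading, rejecting or reshaping the click event up front; the lake defers that decision to each consumer.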

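Apache Spark: In-Memory Speed – 2014

Apache Spark began as a research project at UC Berkeley's AMPLab in 2009, became a top-level Apache project in 2014, and reached version 1.0 the same year. It emerged as a powerful framework for large-scale analytics and near-real-time stream processing.

By keeping intermediate results in memory instead of writing them to disk between steps, Spark substantially improved processing speed over Hadoop MapReduce for many workloads.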

Cloud Migration and Big Data – 2015–Present

In recent years, big data has been shaped by the migration of analytics platforms to the cloud. Modern systems rely on cloud-native data platforms that provide scalable infrastructure for data storage and processing.

This transformation is closely linked to cloud computing technologies discussed in history of cloud storage.

Cloud Data Warehousing

Cloud-based systems such as Snowflake and BigQuery allow organizations to analyze massive datasets without maintaining physical infrastructure.

These platforms enable faster analytics, improved scalability, and lower operational costs.

The Rise of NoSQL

To manage large-scale datasets efficiently, developers created NoSQL databases that support flexible data models and horizontal scaling. Systems such as MongoDB and Apache Cassandra gained prominence in the late 2000s.

These systems complement traditional relational databases in modern data architectures.
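The two properties mentioned above, flexible data models and horizontal scaling, can be sketched with a toy document store that hash-partitions keys across shards. This is an illustration of the general idea only, not the design of any particular NoSQL product; the class name, keys, and documents are hypothetical.

```python
import hashlib


class ShardedDocumentStore:
    """Toy key-document store that spreads keys across in-memory shards."""

    def __init__(self, num_shards):
        # Each dict stands in for a separate server.
        self.shards = [{} for _ in range(num_shards)]

    def _shard_for(self, key):
        # A stable hash sends the same key to the same shard every time.
        digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
        return self.shards[int(digest, 16) % len(self.shards)]

    def put(self, key, document):
        self._shard_for(key)[key] = document

    def get(self, key):
        return self._shard_for(key).get(key)


store = ShardedDocumentStore(num_shards=3)
# Documents need not share the same fields (flexible data model).
store.put("user:1", {"name": "Ada", "tags": ["admin"]})
store.put("user:2", {"name": "Lin", "last_login": "2024-01-01"})

print(store.get("user:1"))
```

Simple modulo hashing reshuffles most keys when the shard count changes, which is why real systems typically use techniques such as consistent hashing instead.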

Present: AI, LLMs, and Modern Data Analytics – 2020s

Today, big data has reached a stage where data analytics powers advanced technologies such as artificial intelligence, machine learning, and large language models.

These systems rely on enormous datasets to train predictive models and automate decision-making processes.

The Convergence of Big Data and AI

Modern enterprises combine big data with predictive analytics and machine learning (ML) to generate actionable insights.

These technologies are driving innovation in modern artificial intelligence applications, enabling smarter automation and intelligent decision-making systems.

The Data Mesh and Data Fabric

Emerging architectures like data mesh and data fabric aim to decentralize data management and improve scalability across organizations.

These models allow teams to manage their own datasets while maintaining unified data governance.

Summary of the Technological Evolution

| Era | Key Innovation | Impact |
|---|---|---|
| Late 1800s–1960s | Punch cards & mainframes | Early mechanical and electronic data processing |
| 1970 | Relational database model | Foundation of modern databases |
| 1980s | Data warehouses | Structured analytics systems |
| 2000s | Internet data explosion | Massive data growth |
| 2006 | Hadoop ecosystem | Distributed big data processing |
| 2014 | Apache Spark | Fast in-memory analytics |
| 2020s | AI + big data | Intelligent data-driven systems |

Frequently Asked Questions (FAQs)

What is big data?

Big data refers to extremely large datasets characterized by volume, velocity, and variety that require advanced technologies for processing and analysis.

When did big data become popular?

The term became widely used around 2005 as internet companies began dealing with massive datasets.

What technologies are used in big data systems?

Technologies include Hadoop, Spark, data lakes, NoSQL databases, and cloud-native analytics platforms.

Why is big data important today?

Big data enables organizations to perform predictive analytics, improve decision-making, and power modern AI systems.

Conclusion: The Future of Big Data

The history of big data demonstrates how data management evolved from simple punch cards to sophisticated distributed systems and cloud-based analytics platforms. Over decades, innovations such as relational databases, Hadoop clusters, and in-memory analytics frameworks transformed how organizations collect and analyze information.

As technology continues to evolve, the future of big data will likely involve deeper integration with artificial intelligence, advanced automation, and decentralized data architectures. With the growth of cloud computing, edge computing, and intelligent analytics platforms, big data will remain one of the most powerful drivers of innovation in the digital age.