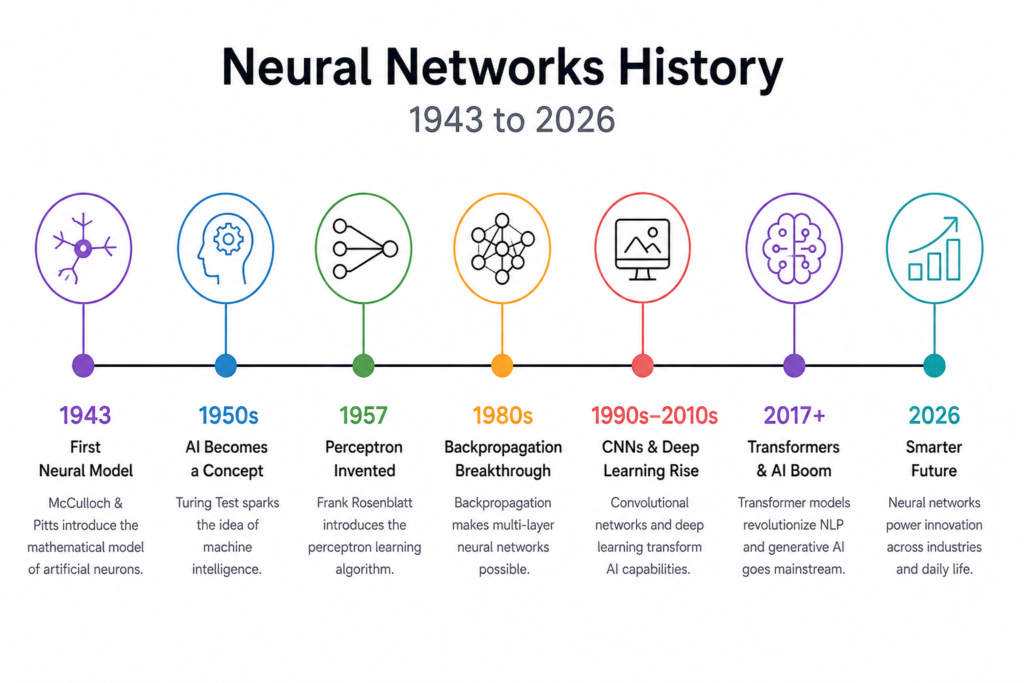

Artificial intelligence has transformed the modern world, and one technology stands at the center of this revolution: the neural network. From simple mathematical ideas in the 1940s to advanced AI systems in 2026, neural networks have reshaped science, healthcare, robotics, language processing, and predictive modeling.

Today, artificial neural networks (ANNs) power voice assistants, recommendation systems, autonomous vehicles, and image recognition tools. These systems loosely imitate the structure of the human brain through artificial neurons, node connections, hidden layers, activation functions, and synaptic weights.

The history of neural networks is filled with breakthroughs, failures, controversies, and remarkable innovation. Scientists spent decades trying to build machines that could learn from data the way humans do. This long evolution eventually led to deep learning architectures, transformers, and generative AI systems.

In this article, we will explore the complete neural network timeline from 1943 to 2026 and understand how computational neuroscience changed technology forever.

The Beginning of Neural Networks (1943 – 1950)

The history of neural networks begins in 1943, when Warren McCulloch and Walter Pitts introduced the first mathematical model of artificial neurons.

Their paper, "A Logical Calculus of the Ideas Immanent in Nervous Activity," described how networks of simple binary neurons could compute logical functions. It was one of the first attempts to describe the activity of biological neurons in mathematical terms.

The McCulloch-Pitts model used:

- Binary inputs

- A binary output

- Threshold activation function

- Logical computations

This early design laid the foundation for connectionism, a theory suggesting that intelligence emerges through interconnected nodes.
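
The threshold unit described above can be sketched in a few lines. This is a simplified illustration, not the original 1943 formulation: it assumes unweighted excitatory inputs only and leaves out inhibitory inputs, and the names `mcculloch_pitts`, `logic_and`, and `logic_or` are invented for this example.

```python
def mcculloch_pitts(inputs, threshold):
    """Fire (return 1) when the count of active binary inputs reaches the threshold."""
    return 1 if sum(inputs) >= threshold else 0


def logic_and(a, b):
    # Both inputs must be active for the unit to fire.
    return mcculloch_pitts([a, b], threshold=2)


def logic_or(a, b):
    # A single active input is enough for the unit to fire.
    return mcculloch_pitts([a, b], threshold=1)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND:", logic_and(a, b), "OR:", logic_or(a, b))
```

Changing only the threshold turns the same unit from an AND gate into an OR gate, which is the sense in which these neurons perform logical computations.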

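The McCulloch-Pitts model itself had fixed connections and did not learn. Its lasting contribution was the idea that networks of simple units can compute, which later researchers extended with adjustable synaptic weights so that machines could learn patterns. Even though computers were primitive, the concept inspired future AI researchers.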

Alan Turing and Machine Intelligence (1950 – 1956)

In 1950, Alan Turing proposed the imitation game, now known as the Turing Test, in his paper “Computing Machinery and Intelligence.”

Turing asked a revolutionary question:

“Can machines think?”

This question changed computer science forever.

The Turing Test is a milestone in the neural network timeline because it pushed scientists to build machines capable of intelligent behavior.

During this period, researchers believed machine intelligence could eventually replicate the human brain using artificial neurons and adaptive learning systems.

Although neural networks were still simple, this era inspired future breakthroughs in AI evolution and computational neuroscience.

The Perceptron Revolution (1957 – 1969)

The next major breakthrough came from Frank Rosenblatt in 1957.

Rosenblatt invented the Perceptron, one of the earliest learning algorithms in AI history.

The perceptron model introduced:

- Weight optimization

- Learning from examples

- Input and output mapping

- Error correction

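Mathematically, the perceptron used:

$$y = f\left(\sum_{i} w_i x_i + b\right)$$

Where:

- $w_i$ = synaptic weights

- $x_i$ = inputs

- $b$ = bias

- $f$ = threshold activation function, producing the output $y$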

This formula became one of the most important equations in deep learning architecture.
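
To make the learning step concrete, here is a minimal sketch of the perceptron's error-correction rule trained on the logical AND function. It assumes a step activation over the weighted sum of inputs plus bias, integer weights starting at zero, and a learning rate of 1 so the arithmetic stays exact. The names `predict`, `train_perceptron`, and `AND_DATA` are illustrative, not Rosenblatt's.

```python
def predict(weights, bias, x):
    """Step activation applied to the weighted sum of the inputs plus the bias."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total >= 0 else 0


def train_perceptron(samples, epochs=20, lr=1):
    """Error-correction learning: nudge the weights only when a prediction is wrong."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA)

for x, target in AND_DATA:
    print(x, "target:", target, "predicted:", predict(weights, bias, x))
```

Because AND is linearly separable, the rule settles on weights that classify all four examples correctly after a few passes over the data.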

The perceptron generated huge excitement because scientists believed intelligent machines were near.

The Perceptron Controversy and First AI Winter (1969 – 1980)

In 1969, Marvin Minsky and Seymour Papert published Perceptrons, a book that analyzed what these models could and could not compute.

They proved that single-layer perceptrons cannot represent functions that are not linearly separable, such as XOR.

This created the famous perceptron controversy.

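Funding for neural network research declined sharply in the following years, a downturn often described as part of the first AI winter. Many scientists abandoned neural networks, partly because of these theoretical limits and partly because computers lacked the processing power to train larger models.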

This period slowed AI evolution for years.

However, some researchers still believed multi-layer perceptron systems could overcome these limitations.
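
The XOR limitation and the multi-layer workaround can both be seen in a small experiment. The sketch below first searches a coarse grid of weights for a single threshold unit that reproduces XOR, then shows a hand-wired two-layer network that does. The grid search is only an illustration of the result, not a proof, and the grid, the weights, and the function names are all assumptions chosen for this example.

```python
from itertools import product

XOR = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}
GRID = [i / 2 for i in range(-6, 7)]  # candidate values from -3.0 to 3.0


def unit(weights, bias, x):
    """A single threshold unit: fires when the weighted sum plus bias is non-negative."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total >= 0 else 0


def single_unit_solves_xor(grid):
    """Try every (w1, w2, b) on the grid; True if any combination reproduces XOR."""
    for w1, w2, b in product(grid, repeat=3):
        if all(unit((w1, w2), b, x) == y for x, y in XOR.items()):
            return True
    return False


def two_layer_xor(x):
    """Hidden OR and NAND units feed an AND unit, which together compute XOR."""
    h_or = unit((1, 1), -1, x)     # fires if at least one input is 1
    h_nand = unit((-1, -1), 1, x)  # fires unless both inputs are 1
    return unit((1, 1), -2, (h_or, h_nand))


print("single unit solves XOR:", single_unit_solves_xor(GRID))
print("two-layer outputs:", [two_layer_xor(x) for x in XOR])
```

The hidden layer re-describes the inputs so that the final unit faces a linearly separable problem, which is the intuition behind multi-layer perceptrons.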

The rivalry between Minsky and Rosenblatt became one of the most famous conflicts in the history of artificial intelligence.

Hebbian Learning and Synaptic Adaptation

One of the most important ideas in the history of neural networks came earlier, from the psychologist Donald Hebb and his 1949 book The Organization of Behavior.

Hebb's learning principle is often summarized by a later paraphrase:

“Neurons that fire together wire together.”

This idea became the basis of the Hebbian learning rule.

Hebbian learning explained how synaptic weights strengthen during repeated activation.

Mathematically:

$$\Delta w_{ij} = \eta \, x_i \, y_j$$

Where:

- $\Delta w_{ij}$ = weight change

- $\eta$ = learning rate

- $x_i$ = input neuron

- $y_j$ = output neuron

This concept influenced later learning rules and remains a reference point for biologically inspired approaches to model training.
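
A minimal sketch of the Hebbian rule, assuming the plain product form in which each weight grows by the learning rate times the activity of its input neuron times the activity of its output neuron. The function name `hebbian_update` and the nested-list weight layout (`weights[i][j]` connects input `i` to output `j`) are assumptions made for this example.

```python
def hebbian_update(weights, x, y, lr=0.1):
    """Return new weights after one Hebbian step: w[i][j] += lr * x[i] * y[j]."""
    return [
        [w + lr * xi * yj for w, yj in zip(row, y)]
        for row, xi in zip(weights, x)
    ]


weights = [[0.0, 0.0], [0.0, 0.0]]
# Input 0 is active and input 1 is silent; both output neurons fire.
weights = hebbian_update(weights, x=[1, 0], y=[1, 1])
print(weights)
```

Only the connections from the active input are strengthened, and the silent input's weights stay unchanged. Note that this plain form only ever increases weights between co-active neurons, so practical variants usually add some kind of normalization or decay.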

Backpropagation Changes Everything (1980 – 1995)

Neural networks entered a new era in the 1980s, when backpropagation became practical. The method was popularized in 1986 by David Rumelhart, Geoffrey Hinton, and Ronald Williams.

Researchers showed that multi-layer perceptrons could learn efficiently using gradient descent, with the gradients computed by applying the chain rule of calculus layer by layer.

The backpropagation algorithm adjusts synaptic weights by minimizing prediction error.

The core formula:

$$w \leftarrow w - \eta \frac{\partial E}{\partial w}$$

Where:

- $w$ = a synaptic weight

- $E$ = error function

- $\eta$ = learning rate

Backpropagation allowed neural networks to train across hidden layers, making deep learning architecture possible.
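
The following sketch trains a tiny network with one hidden layer on XOR using full-batch gradient descent. It is an illustration under stated assumptions rather than a reference implementation: sigmoid activations, a squared-error loss, three hidden units, and a fixed random seed are all choices made for this example, and the names `forward`, `mean_loss`, and `train` are hypothetical.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Return the hidden activations and the network output for one input."""
    w1, b1, w2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(w1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(w2, hidden)) + b2)
    return hidden, output


def mean_loss(params, data):
    return sum(0.5 * (forward(params, x)[1] - y) ** 2 for x, y in data) / len(data)


def train(data, hidden_size=3, lr=0.5, steps=2000, seed=0):
    rng = random.Random(seed)
    n_in, n = len(data[0][0]), len(data)
    w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(hidden_size)]
    b1 = [0.0] * hidden_size
    w2 = [rng.uniform(-1, 1) for _ in range(hidden_size)]
    b2 = 0.0
    losses = []
    for _ in range(steps):
        params = (w1, b1, w2, b2)
        losses.append(mean_loss(params, data))
        g_w1 = [[0.0] * n_in for _ in range(hidden_size)]
        g_b1 = [0.0] * hidden_size
        g_w2 = [0.0] * hidden_size
        g_b2 = 0.0
        for x, y in data:
            hidden, out = forward(params, x)
            # Error signal at the output, then propagated back to each hidden unit.
            delta_out = (out - y) * out * (1 - out)
            g_b2 += delta_out
            for j in range(hidden_size):
                delta_h = delta_out * w2[j] * hidden[j] * (1 - hidden[j])
                g_w2[j] += delta_out * hidden[j]
                g_b1[j] += delta_h
                for i in range(n_in):
                    g_w1[j][i] += delta_h * x[i]
        # Gradient descent step: move each weight against its averaged gradient.
        w1 = [[w - lr * g / n for w, g in zip(wr, gr)] for wr, gr in zip(w1, g_w1)]
        b1 = [b - lr * g / n for b, g in zip(b1, g_b1)]
        w2 = [w - lr * g / n for w, g in zip(w2, g_w2)]
        b2 -= lr * g_b2 / n
    params = (w1, b1, w2, b2)
    losses.append(mean_loss(params, data))
    return params, losses


XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
params, losses = train(XOR_DATA)
print("loss before training:", losses[0])
print("loss after training: ", losses[-1])
```

The two `delta` lines are the heart of backpropagation: the output error is computed once and then reused to obtain the error signal of each hidden unit, so every weight in the network gets a gradient from a single backward pass.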

Scientists realized that artificial neural networks could perform predictive modeling tasks far beyond earlier expectations.

The Rise of Convolutional Neural Networks (1989 – 2010)

In the late 1980s and 1990s, Yann LeCun and his colleagues developed convolutional neural networks (CNNs).

CNNs became revolutionary for computer vision tasks.

These systems introduced:

- Feature extraction

- Shared weights

- Pooling layers

- Image classification

LeNet, applied to handwritten digit recognition, became one of the first successful CNN models.
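
Shared weights and pooling are easier to see on a toy example. The sketch below slides one small kernel over a 5x5 image (the same weights are reused at every position) and then applies 2x2 max pooling. It is a plain-Python illustration, not LeNet itself, and the image, the kernel, and the function names are made up for this example.

```python
def conv2d(image, kernel):
    """Valid 2D cross-correlation: one shared kernel is applied at every position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def max_pool(feature_map, size=2):
    """Non-overlapping max pooling; rows or columns that do not fill a window are dropped."""
    return [
        [
            max(feature_map[r + i][c + j] for i in range(size) for j in range(size))
            for c in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for r in range(0, len(feature_map) - size + 1, size)
    ]


# Dark region on the left, bright region on the right.
IMAGE = [[0, 0, 1, 1, 1] for _ in range(5)]
# Responds where brightness increases from left to right.
VERTICAL_EDGE = [[-1, 1], [-1, 1]]

feature_map = conv2d(IMAGE, VERTICAL_EDGE)
pooled = max_pool(feature_map)
print(feature_map)
print(pooled)
```

The feature map is non-zero only in the column where the edge sits, and pooling keeps that response while halving the resolution. In a trained CNN the kernel values are learned rather than written by hand.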

CNNs eventually powered facial recognition, medical imaging, and self-driving technology.

The architecture was loosely inspired by how the visual cortex processes patterns in biological brains.

Recurrent Neural Networks and LSTM Models (1990 – 2015)

Traditional neural networks struggled with sequential data like speech and language.

Researchers developed recurrent neural networks (RNNs) to solve this issue.

RNNs introduced memory into neural computation through feedback loops.

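However, they suffered from the vanishing gradient problem: error signals shrink as they are propagated back through many time steps, so long-range dependencies are hard to learn.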
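
The effect can be reproduced with a one-neuron recurrent network. The sketch below assumes a scalar hidden state updated by a tanh of the weighted previous state plus the weighted input, and tracks how strongly the state at each step still depends on the initial state. The weights and the function name `rnn_gradient_norms` are illustrative choices.

```python
import math


def rnn_gradient_norms(w, u, inputs, h0=0.0):
    """Run h_t = tanh(w * h_prev + u * x_t) and track |d h_t / d h_0| at each step."""
    h = h0
    grad = 1.0
    history = []
    for x in inputs:
        h = math.tanh(w * h + u * x)
        # Chain rule: each step multiplies the gradient by w * (1 - h_t^2).
        grad *= w * (1.0 - h * h)
        history.append(abs(grad))
    return history


history = rnn_gradient_norms(w=0.5, u=1.0, inputs=[1.0] * 30)
print("after 1 step:  ", history[0])
print("after 30 steps:", history[-1])
```

Every step multiplies the gradient by a factor smaller than one, so after a few dozen steps the influence of early inputs on the error signal is effectively zero.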

To solve this issue, Sepp Hochreiter and Jürgen Schmidhuber created Long Short-Term Memory (LSTM) networks in 1997.
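
A rough sketch of a single LSTM step with scalar state shows how gating lets information persist. It follows the commonly taught variant with a forget gate, which was a later addition and not part of the original 1997 design. The parameter layout, the `REMEMBER` settings, and the name `lstm_step` are assumptions for this example.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, p):
    """One scalar LSTM step; p maps each gate name to (input weight, recurrent weight, bias)."""

    def gate(name, squash):
        w_x, w_h, b = p[name]
        return squash(w_x * x + w_h * h_prev + b)

    f = gate("forget", sigmoid)       # how much of the old cell state to keep
    i = gate("input", sigmoid)        # how much new information to write
    o = gate("output", sigmoid)       # how much of the cell state to expose
    g = gate("candidate", math.tanh)  # the new information itself
    c = f * c_prev + i * g
    h = o * math.tanh(c)
    return h, c


# Hand-picked parameters: forget gate almost fully open, input gate almost closed.
REMEMBER = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (0.0, 0.0, 0.0),
}

h, c = 0.0, 1.0
for _ in range(50):
    h, c = lstm_step(0.0, h, c, REMEMBER)
final_c = c
print("cell state after 50 steps:", final_c)
```

The cell state is updated additively and scaled by a forget gate that can stay close to one, so the stored value barely decays over 50 steps. That additive path is what lets gradients survive across long sequences.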

LSTMs transformed:

- Speech recognition

- Language translation

- Sequence prediction

- Chatbots

Deep Learning Explosion (2012 – 2020)

The true deep learning revolution started in 2012.

A neural network called AlexNet, developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, shocked the AI community by winning the ImageNet competition by a wide margin.

AlexNet used:

- GPUs for training

- ReLU activation function

- Dropout regularization

- Deep convolutional layers

This breakthrough launched the modern era of deep learning architecture.
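
Two of the ingredients listed above, ReLU and dropout, are simple enough to sketch directly. The dropout shown here is the "inverted" variant common in current frameworks, which rescales the surviving activations during training. That is an assumption of this example and differs in detail from the original AlexNet setup, which adjusted outputs at test time instead.

```python
import random


def relu(values):
    """Rectified linear unit: negative activations become zero, positive ones pass through."""
    return [max(0.0, v) for v in values]


def dropout(values, rate, rng, training=True):
    """Inverted dropout: zero each value with probability `rate` and rescale the survivors."""
    if not training or rate == 0.0:
        return list(values)
    keep = 1.0 - rate
    return [v / keep if rng.random() < keep else 0.0 for v in values]


rng = random.Random(0)
activations = relu([-2.0, 0.0, 3.5, 1.0])
print(activations)
print(dropout(activations, rate=0.5, rng=rng))
print(dropout(activations, rate=0.5, rng=rng, training=False))
```

ReLU keeps gradients from shrinking for positive activations, and dropout discourages units from relying too heavily on one another. At inference time the dropout layer is switched off, which the `training=False` call imitates.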

Companies invested billions into machine intelligence research.

Researchers like Geoffrey Hinton, Yoshua Bengio, and Yann LeCun became known as the Godfathers of Deep Learning and shared the 2018 Turing Award.

Their work changed AI forever.

Transformer Neural Networks (2017 – 2026)

In 2017, researchers at Google introduced the transformer architecture.

The famous paper “Attention Is All You Need” transformed natural language processing.

Transformers replaced the recurrence of traditional sequence models with self-attention, a mechanism that lets every token in a sequence weigh every other token directly.
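
Here is a minimal sketch of scaled dot-product attention, the core operation of self-attention. To stay short it uses the token vectors directly as queries, keys, and values. A real transformer first passes them through learned linear projections and uses several attention heads, both of which are omitted here. The `TOKENS` data and the function names are invented for this example.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to one."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of all value vectors.
        outputs.append(
            [sum(w * v[d] for w, v in zip(weights, values)) for d in range(len(values[0]))]
        )
    return outputs


TOKENS = [[1.0, 0.0], [0.0, 1.0]]
for row in attention(TOKENS, TOKENS, TOKENS):
    print(row)
```

Each token's output mixes information from every token in one step, with larger weights going to the tokens it matches most closely. Because the rows are computed independently of each other, the whole sequence can be processed in parallel.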

Advantages included:

- Faster training

- Better context understanding

- Improved scalability

- Superior language generation

Transformer systems eventually powered:

- Large language models

- AI chatbots

- Image generators

- Generative neural networks

Modern AI assistants rely heavily on transformer-based deep learning architecture.

Neural Networks in Healthcare and Robotics

Artificial neural networks now impact nearly every industry.

Applications include:

Medicine

AI systems detect diseases using predictive modeling and computer vision.

Neural networks assist doctors with:

- Cancer detection

- MRI analysis

- Drug discovery

- Personalized medicine

Self-Driving Cars

Autonomous vehicles use CNNs and transformers for real-time navigation.

Neural systems analyze:

- Traffic signs

- Pedestrians

- Road conditions

- Motion prediction

Speech Recognition

Speech assistants use recurrent and transformer models for natural communication.

The Role of GPUs in AI Evolution

One major reason for AI success was GPU acceleration.

GPUs made large-scale model training possible by running many computations in parallel.

Without GPU computing, modern deep learning would not be practical at its current scale.

Neural Networks vs the Human Brain

Many researchers compare artificial intelligence with biological intelligence.

The human brain contains approximately 86 billion neurons connected through complex synaptic pathways.

Artificial neural networks simplify this process using:

- Artificial neurons

- Input layer

- Hidden layers

- Output layer

- Activation function

Although AI systems are powerful, they still lack true human consciousness.

Neural Networks in 2026

By 2026, neural networks have become more advanced than ever.

Modern systems can:

- Generate videos

- Write software

- Create music

- Analyze scientific data

- Control robots

Companies like OpenAI and DeepMind continue pushing AI boundaries.

The competition between these organizations is a frequent topic of debate.

Researchers are also exploring:

- Spiking neural networks

- Quantum AI

- Energy-efficient AI chips

- Brain-inspired computing

Today, even free AI tools rely heavily on neural network technology.

The future of machine intelligence looks incredibly powerful.

Frequently Asked Questions (FAQs)

What are neural networks in simple words?

Neural networks are AI systems inspired by the human brain. They use artificial neurons and connected layers to learn patterns from data.

Who invented neural networks?

The earliest neural network model was developed by Warren McCulloch and Walter Pitts in 1943.

Why are neural networks important?

Neural networks power modern AI applications like image recognition, language translation, recommendation systems, robotics, and medical diagnosis.

What is deep learning?

Deep learning is a branch of machine learning that uses neural networks with many hidden layers to process complex information.

What is the difference between CNN and RNN?

CNNs are mainly used for image processing, while RNNs and LSTMs are designed for sequential data like language and speech.

Conclusion

The history of neural networks is one of the most fascinating journeys in science and technology. From the first mathematical neuron in 1943 to advanced transformer models in 2026, artificial intelligence has evolved beyond what its pioneers imagined.

Researchers overcame failures, AI winters, and technical limitations to build systems capable of learning, reasoning, and generating human-like content. Today, artificial neural networks influence medicine, transportation, robotics, communication, and nearly every major industry.

As computational neuroscience and deep learning architecture continue advancing, the next chapter of AI evolution may completely transform civilization. The future of neural networks is not just about smarter machines. It is about redefining the relationship between humans and intelligence itself.