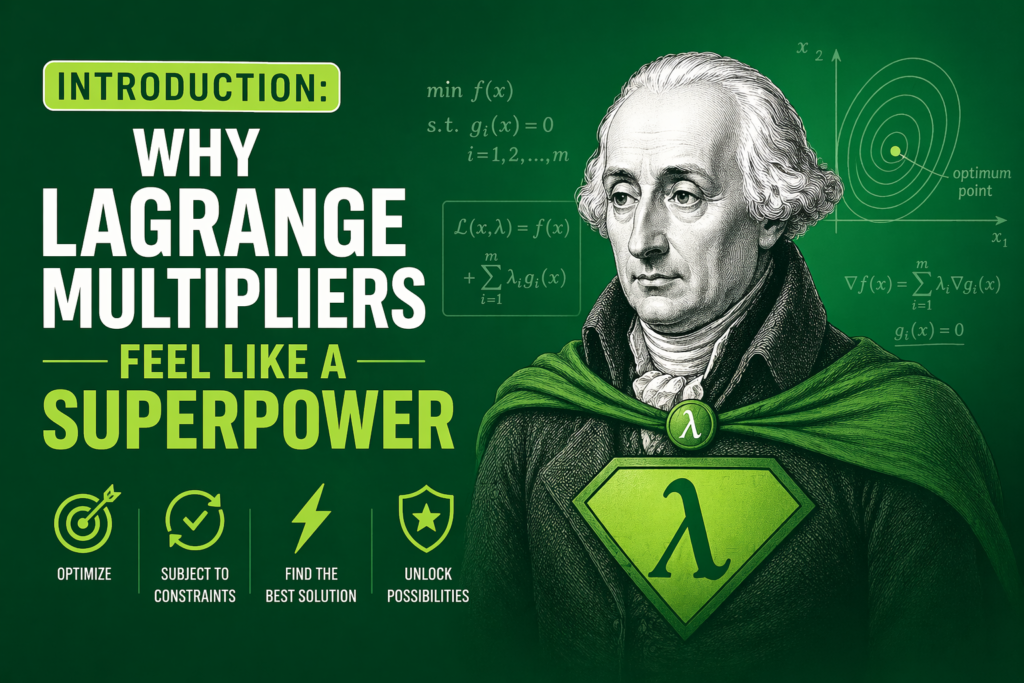

Introduction: Why Lagrange Multipliers Feel Like a Superpower

Imagine you have a limited budget but you want maximum happiness. Or you have a fixed amount of metal but you need the strongest possible container. These are constrained optimization problems. They appear everywhere in engineering, economics, physics, and even daily life. The classic method for solving them comes from Joseph-Louis Lagrange, the mathematician introduced in our previous article.

The tool he invented is called the method of Lagrange multipliers. It turns a problem with restrictions into a system of equations with no side conditions: you add one new variable, lambda (λ), for each constraint and solve for all the unknowns together. That is the core idea.

In this article, we will explain Lagrange multipliers step by step. You will see the formula, the logic, and fully worked examples. We will also touch on related concepts like Lagrange points and Lagrangian mechanics, which use similar thinking. By the end, you will understand why Lagrange multipliers are one of the most elegant tools in multivariable calculus.

What Exactly Are Lagrange Multipliers? The Core Concept

Let us start with a simple truth. Most real-world problems are not free. You cannot maximize profit without a budget limit. You cannot minimize fuel without a time limit. These limits are called constraints. The thing you want to optimize (maximize or minimize) is called the objective function.

The method of Lagrange multipliers says: at a constrained optimum, the gradient of the objective function is parallel to the gradient of the constraint function, provided the constraint gradient is not zero there. In plain English, the direction of steepest increase for your goal points straight across the constraint curve, so no small step along the curve can improve the objective. This allows us to introduce a scaling factor λ, the multiplier.

Here is the Lagrange multipliers formula for a two-variable problem with one equality constraint:

You want to optimize f(x, y) subject to g(x, y) = c.

You define the Lagrangian function:

L(x, y, λ) = f(x, y) + λ (c − g(x, y))

Then you take partial derivatives with respect to x, y, and λ and set them equal to zero. The solutions of that system are the candidates for a maximum or minimum. This is the heart of the method of Lagrange multipliers.

The Mathematical Derivation in Simple Steps

Let us walk through the logic carefully. Suppose you have an objective function f(x, y) and a constraint g(x, y) = c. At the optimum, the level curve of f is tangent to the constraint curve. Why? Because if they crossed, you could move along the constraint and increase f further.

When two curves are tangent, their normal vectors are parallel. The gradient ∇f is perpendicular to the level curve of f. The gradient ∇g is perpendicular to the constraint curve. So at the optimum, ∇f = λ ∇g for some scalar λ. That λ is the Lagrange multiplier.

This gives us a system of equations:

∂f/∂x = λ ∂g/∂x

∂f/∂y = λ ∂g/∂y

g(x, y) = c

You now have three equations for three unknowns (x, y, λ). Solving them yields stationary points. Then you check which ones give the highest or lowest f. The same idea carries over to the calculus of variations, a field that Joseph-Louis Lagrange helped create, where the unknown is a whole function rather than a few numbers.
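
The parallel-gradient condition is easy to check numerically. The sketch below is only an illustration, not part of the derivation: it assumes a small problem chosen for this purpose (maximize f(x, y) = x + y on the circle x² + y² = 2, whose maximum sits at (1, 1)), and the helper names `grad` and `cross` are hypothetical.

```python
def grad(func, x, y, h=1e-6):
    """Central-difference approximation of the gradient of func at (x, y)."""
    dfdx = (func(x + h, y) - func(x - h, y)) / (2 * h)
    dfdy = (func(x, y + h) - func(x, y - h)) / (2 * h)
    return dfdx, dfdy

def cross(u, v):
    """2D cross product; it is zero exactly when u and v are parallel."""
    return u[0] * v[1] - u[1] * v[0]

# Illustrative problem (an assumption for this sketch).
f = lambda x, y: x + y
g = lambda x, y: x**2 + y**2      # constraint: g(x, y) = 2

# At the constrained maximum (1, 1) the two gradients are parallel.
at_optimum = cross(grad(f, 1.0, 1.0), grad(g, 1.0, 1.0))

# At another feasible point, (sqrt(2), 0), they are not.
r = 2 ** 0.5
elsewhere = cross(grad(f, r, 0.0), grad(g, r, 0.0))

# The multiplier is the ratio of matching gradient components.
lam = grad(f, 1.0, 1.0)[0] / grad(g, 1.0, 1.0)[0]

print(at_optimum, elsewhere, lam)
```

The first number should be essentially zero, the second clearly nonzero, and the multiplier close to 0.5.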

How to Use Lagrange Multipliers: A Step by Step Method

Let me give you a reliable workflow for any constrained optimization problem using Lagrange multipliers.

Step 1: Identify the objective function f and the constraint g(x, y) = c.

Step 2: Write the Lagrangian: L = f(x, y) + λ (c − g(x, y)).

Step 3: Compute all first partial derivatives:

∂L/∂x = 0

∂L/∂y = 0

∂L/∂λ = 0

Step 4: Solve the system for x, y, and λ.

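Step 5: Evaluate f at each solution and compare the values. If the constraint set is closed and bounded and f is continuous, the largest and smallest values are the global maximum and minimum. Otherwise, also check how f behaves toward the ends of the constraint set.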

That is the complete method. Now let us apply it to real numbers.
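
Steps 2 to 4 can be sanity-checked with a few lines of Python. This is a minimal sketch under stated assumptions: the function names `lagrangian_gradient` and `is_stationary` are hypothetical, derivatives are approximated by central differences, and the test problem (minimize x² + y² subject to x + y = 2) is chosen here only for illustration.

```python
def lagrangian_gradient(f, g, c, x, y, lam, h=1e-6):
    """Approximate (dL/dx, dL/dy, dL/dlam) for L = f + lam * (c - g)."""
    def L(x, y, lam):
        return f(x, y) + lam * (c - g(x, y))
    dLdx = (L(x + h, y, lam) - L(x - h, y, lam)) / (2 * h)
    dLdy = (L(x, y + h, lam) - L(x, y - h, lam)) / (2 * h)
    dLdlam = (L(x, y, lam + h) - L(x, y, lam - h)) / (2 * h)
    return dLdx, dLdy, dLdlam

def is_stationary(f, g, c, x, y, lam, tol=1e-5):
    """True when all three partial derivatives are numerically zero."""
    return all(abs(d) < tol for d in lagrangian_gradient(f, g, c, x, y, lam))

# Illustrative problem: minimize x^2 + y^2 subject to x + y = 2.
f = lambda x, y: x**2 + y**2
g = lambda x, y: x + y

print(is_stationary(f, g, 2.0, 1.0, 1.0, 2.0))   # candidate found by hand
print(is_stationary(f, g, 2.0, 2.0, 0.0, 2.0))   # feasible, but not stationary
```

A checker like this does not solve the system for you, but it catches algebra slips before you move on to Step 5.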

Example 1: Maximizing Area with a Fixed Perimeter

This is a classic problem. You have 100 meters of fencing. You want to enclose the largest possible rectangle. What should the dimensions be?

We know the objective function is area A = x * y.

The constraint is the perimeter: 2x + 2y = 100, or x + y = 50.

Using Lagrange multipliers, we set:

f(x, y) = x*y

g(x, y) = x + y

c = 50

The Lagrangian is:

L = x*y + λ (50 − x − y)

Take partial derivatives:

∂L/∂x = y − λ = 0 → y = λ

∂L/∂y = x − λ = 0 → x = λ

∂L/∂λ = 50 − x − y = 0

From the first two, x = y = λ. Plug into the third: 50 − λ − λ = 0 → 50 − 2λ = 0 → λ = 25.

Thus x = 25, y = 25.

The rectangle is a square of side 25 meters. Area = 625 square meters. This shows how Lagrange multipliers find the balance point without guessing.
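
If you want to double-check the answer without calculus, you can eliminate y through the constraint and scan over x. The sketch below does that and also plugs x = y = λ = 25 back into the three equations; the function name `area_on_constraint` and the 0.5 m step size are choices made for this illustration.

```python
def area_on_constraint(x, half_perimeter=50.0):
    """Area x * y with y eliminated through x + y = 50."""
    y = half_perimeter - x
    return x * y

# Scan x from 0 to 50 in steps of 0.5 and keep the best value.
candidates = [i * 0.5 for i in range(101)]
best_x = max(candidates, key=area_on_constraint)
best_area = area_on_constraint(best_x)

# Residuals of the three stationarity equations at x = y = lam = 25.
x, y, lam = 25.0, 25.0, 25.0
residuals = (y - lam, x - lam, 50.0 - x - y)

print(best_x, best_area, residuals)
```

The scan and the multiplier method should agree on the 25 by 25 square.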

Example 2: Minimizing Cost for a Production Target

A manufacturer must produce 100 units of product using two machines. Machine 1 costs $2 per unit and Machine 2 costs $3 per unit. The total cost function is C = 2x + 3y, where x units come from Machine 1 and y units come from Machine 2. Constraint: x + y = 100.

We want to minimize cost. Our objective function is C = 2x + 3y.

Constraint g(x, y) = x + y = 100.

The Lagrangian: L = 2x + 3y + λ (100 − x − y)

Partial derivatives:

∂L/∂x = 2 − λ = 0 → λ = 2

∂L/∂y = 3 − λ = 0 → λ = 3

These two equations contradict each other: λ cannot be 2 and 3 at the same time. The system has no solution, so the cost has no stationary point anywhere on the line x + y = 100. That makes sense: along that line the cost is C = 300 − x, which keeps falling as x grows. The minimum is therefore set by limits the Lagrangian left out, namely x ≥ 0 and y ≥ 0. Since Machine 1 is cheaper, we set y = 0 and x = 100. Cost = $200. This is a valuable lesson: when the Lagrange system has no solution, look for a corner solution on the boundary of the feasible region.
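
A quick enumeration makes the corner solution visible. This sketch assumes whole-unit production and the bounds x ≥ 0 and y ≥ 0, which the example implies but does not state; the names `cost` and `TARGET` are illustrative.

```python
def cost(x, y):
    """Total cost from the example: $2 per unit on Machine 1, $3 on Machine 2."""
    return 2 * x + 3 * y

TARGET = 100

# Along x + y = 100 the cost equals 300 - x, a straight line with no turning
# point, so the extremes can only occur at the ends of the feasible segment.
feasible = [(x, TARGET - x) for x in range(TARGET + 1)]
best = min(feasible, key=lambda p: cost(*p))
worst = max(feasible, key=lambda p: cost(*p))

print(best, cost(*best))     # all production on Machine 1
print(worst, cost(*worst))   # all production on Machine 2
```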

Lagrange Multipliers for Functions of Three Variables

The method extends naturally. For f(x, y, z) subject to g(x, y, z) = c, we have:

L = f(x, y, z) + λ (c − g(x, y, z))

Then solve:

∂L/∂x = 0, ∂L/∂y = 0, ∂L/∂z = 0, ∂L/∂λ = 0

For example, you can maximize the volume V = x*y*z of a box with no lid, given a fixed surface area x*y + 2*x*z + 2*y*z. The same logic works. These optimization problems appear in fluid dynamics, structural engineering, and even machine learning.
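
To make the open-box problem concrete, here is a small check under assumed numbers. The surface area S = 12 is a value picked for this sketch, and the candidate x = y = 2, z = 1, λ = 0.5 comes from solving the four equations by hand for that S. The code verifies the candidate and compares it with randomly generated feasible boxes.

```python
import random

S = 12.0  # assumed surface area of the open-top box (base plus four sides)

def volume(x, y, z):
    return x * y * z

def surface(x, y, z):
    """Base x*y plus four side walls; there is no lid."""
    return x * y + 2 * x * z + 2 * y * z

# Candidate obtained by hand for S = 12, with L = xyz + lam * (S - surface).
x, y, z, lam = 2.0, 2.0, 1.0, 0.5
residuals = (
    y * z - lam * (y + 2 * z),      # dL/dx
    x * z - lam * (x + 2 * z),      # dL/dy
    x * y - lam * (2 * x + 2 * y),  # dL/dz
    S - surface(x, y, z),           # dL/dlam
)

# Random feasible boxes: pick a base a x b, then solve the constraint for
# the height h = (S - a*b) / (2*(a + b)), keeping only positive heights.
random.seed(0)
best_random = 0.0
for _ in range(10_000):
    a, b = random.uniform(0.1, 5.0), random.uniform(0.1, 5.0)
    h = (S - a * b) / (2 * (a + b))
    if h > 0:
        best_random = max(best_random, volume(a, b, h))

print(residuals, volume(x, y, z), best_random)
```

All four residuals vanish, and no random box should beat the candidate's volume of 4.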

The Connection to Lagrange Points and Lagrangian Mechanics

The ideas behind Lagrange multipliers extend far beyond simple rectangles. In orbital mechanics, Lagrange points are the positions where, in a frame rotating with two large bodies, the gravitational pulls and the centrifugal force balance. They are stationary points of an effective potential, so finding them also comes down to setting a gradient to zero. Similarly, Lagrangian mechanics uses a function called the Lagrangian (L = T − V) to derive equations of motion without dealing with forces directly. That approach relies on the Euler–Lagrange equation, which is the stationarity condition for a whole path rather than a single point, and constraints on the motion can be handled there with multipliers as well.

So when you learn Lagrange multipliers, you are also opening a door to Lagrangian mechanics, optimization theory, and even modern control theory. (Lagrange interpolation carries the same name but is a separate topic.) Every engineer and physicist thanks Joseph-Louis Lagrange for this gift.

Handling Inequality Constraints: A Quick Look

Sometimes constraints are not equalities. You might have x ≥ 0 or y ≤ 10. These are inequality constraints. The method extends to them through the Karush–Kuhn–Tucker (KKT) conditions, which add sign conditions on the multipliers and a complementary slackness condition. For a minimization problem with a constraint g(x) ≤ 0 and Lagrangian f + λ g, the conditions are λ ≥ 0 and λ g(x) = 0: either the constraint is active (g(x) = 0) or its multiplier is zero. This is the basis of duality in optimization, a key concept in machine learning and economics. In economics, λ is the shadow price: it tells you approximately how much the optimal objective value would improve if you relaxed the constraint by one unit.
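
The KKT conditions are easiest to absorb on a one-variable problem. The sketch below assumes an illustrative problem, minimize (x − 2)² subject to x ≤ bound, and the common convention g(x) = x − bound ≤ 0 with L = f + λ g. That sign convention and the function name `kkt_satisfied` are choices made for this example.

```python
def kkt_satisfied(x, lam, bound, tol=1e-9):
    """Check the KKT conditions for: minimize (x - 2)^2 subject to x <= bound.

    Convention assumed here: g(x) = x - bound <= 0 and L = f + lam * g.
    """
    g = x - bound
    stationarity = abs(2 * (x - 2) + lam) < tol   # dL/dx = 0
    primal_feasible = g <= tol                    # g(x) <= 0
    dual_feasible = lam >= -tol                   # lam >= 0
    slackness = abs(lam * g) < tol                # lam * g(x) = 0
    return stationarity and primal_feasible and dual_feasible and slackness

# Active constraint: x <= 1 cuts off the unconstrained minimum at x = 2.
print(kkt_satisfied(x=1.0, lam=2.0, bound=1.0))

# Inactive constraint: with x <= 3 the unconstrained minimum is feasible.
print(kkt_satisfied(x=2.0, lam=0.0, bound=3.0))

# A feasible point that is not optimal fails stationarity.
print(kkt_satisfied(x=0.0, lam=0.0, bound=1.0))
```

Notice the pattern: when the constraint binds, λ is positive; when it does not, λ drops to zero.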

Common Mistakes When Using Lagrange Multipliers

Beginners often forget to check the boundary separately. Lagrange multipliers only find stationary points on the constraint set itself. If extra bounds such as x ≥ 0 cut the constraint curve short, you must test its endpoints too, and if the constraint set is not closed and bounded, you must examine how f behaves in the limit. Another mistake is ignoring the sign of λ. With inequality constraints, λ must be nonnegative under the usual sign convention. Also, some problems have several solutions with different λ values. Each corresponds to a different candidate. Always plug back into the objective function to compare.

Lagrange Multipliers in Real World Applications

Let me give you three powerful examples where Lagrange multipliers save the day.

1. Economics: A consumer maximizes utility U(x, y) subject to the budget p_x x + p_y y = I. The Lagrange multiplier λ represents the marginal utility of income. If λ = 5, one extra dollar increases utility by about 5 units.

2. Machine Learning: Support Vector Machines (SVMs) use Lagrange multipliers to find the maximum-margin hyperplane. The support vectors are exactly the training points whose multipliers satisfy λ > 0.

3. Engineering: Minimizing heat loss from a pipe given a fixed cross-sectional area. If heat loss grows with the pipe's perimeter, the task becomes minimizing perimeter for a given area, and Lagrange multipliers lead to the famous isoperimetric solution: a circle.

Solving a Nonlinear Example Step by Step

Let us work through a tougher one. Maximize f(x, y) = x*y^2 subject to x^2 + y^2 = 3.

We set:

L = x*y^2 + λ (3 − x^2 − y^2)

Partial derivatives:

∂L/∂x = y^2 − 2λx = 0 → (1) y^2 = 2λx

∂L/∂y = 2xy − 2λy = 0 → (2) 2y(x − λ) = 0

∂L/∂λ = 3 − x^2 − y^2 = 0

From (2), either y = 0 or x = λ.

Case 1: y = 0. The constraint gives x^2 = 3 → x = ±√3. Then (1) reads 0 = 2λx, and since x ≠ 0, we get λ = 0. At both points f = 0.

Case 2: x = λ. Then (1) becomes y^2 = 2x^2. Substitute into constraint: x^2 + 2x^2 = 3 → 3x^2 = 3 → x^2 = 1 → x = ±1. Then y^2 = 2 → y = ±√2.

Now f = x*y^2 = (±1)*(2) = ±2, with the sign set by x. If x = 1, f = 2, which is larger than the 0 from Case 1. If x = −1, f = −2, which is the minimum. So the maximum is 2, attained at (1, √2) and (1, −√2), and the minimum is −2, attained at (−1, √2) and (−1, −√2). Comparing f across all candidates is how we tell maxima from minima; the Lagrange system itself only finds the candidates.
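
Because the constraint is a circle, you can verify the result by brute force: walk around the circle with x = √3 cos t, y = √3 sin t and record f. The sketch below does this and also checks the three equations at (1, √2) with λ = 1. The sample count `N` is an arbitrary choice for this illustration.

```python
import math

def f(x, y):
    return x * y**2

R = math.sqrt(3.0)   # the constraint x^2 + y^2 = 3 is a circle of radius sqrt(3)

# Walk around the circle and record f at each sample point.
N = 100_000
values = []
for i in range(N):
    t = 2 * math.pi * i / N
    values.append(f(R * math.cos(t), R * math.sin(t)))

# Residuals of equations (1), (2), and the constraint at (1, sqrt(2)), lam = 1.
x, y, lam = 1.0, math.sqrt(2.0), 1.0
residuals = (y**2 - 2 * lam * x, 2 * x * y - 2 * lam * y, 3 - x**2 - y**2)

print(max(values), min(values), residuals)
```

The sampled maximum and minimum should land very close to 2 and −2, matching Case 2.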

The Concept of Duality and Shadow Prices

There is a beautiful idea called duality in optimization. Every constrained problem has a dual problem. The Lagrange multiplier λ is the link between them. In economics, λ is the shadow price. It tells you the rate at which the optimal value changes if the constraint is relaxed. For example, if your budget increases by $1 and λ = 3, your maximum utility increases by about 3 units. This is invaluable for policy making and resource allocation.
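
You can see the shadow price at work in the fencing example from earlier, where λ came out as 25. The sketch below uses the closed-form optimum x = y = c/2 for the constraint x + y = c. Note that c is the sum x + y, so one unit of c corresponds to two extra meters of fencing. The function name `max_area` is illustrative.

```python
def max_area(c):
    """Largest rectangle area when x + y = c; the optimum is x = y = c / 2."""
    return (c / 2) ** 2

c = 50.0
lam = c / 2   # multiplier from the fencing example: lam = x = y = 25

# Relax the constraint by one full unit, then by a much smaller amount.
gain_one_unit = max_area(c + 1) - max_area(c)
rate_small_step = (max_area(c + 1e-4) - max_area(c)) / 1e-4

print(lam, gain_one_unit, rate_small_step)
```

The one-unit gain is close to λ but not equal to it, while the small-step rate is almost exactly λ. That is why λ is best read as a rate of change rather than an exact per-unit gain.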

Frequently Asked Questions (FAQs)

1. Can Lagrange multipliers handle multiple constraints?

Yes. You introduce one multiplier per constraint. For two constraints g = c and h = d, you use L = f + λ₁(c − g) + λ₂(d − h). Then take partial derivatives for each variable and each λ.
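
Here is a small instance with two multipliers. The problem is an assumption chosen for this sketch: minimize x² + y² + z² subject to x + y + z = 6 and x − y = 2. The candidate below was found by solving the linear system by hand, and the code only confirms that all five partial derivatives vanish.

```python
# Illustrative problem (an assumption for this sketch):
# minimize f = x^2 + y^2 + z^2 subject to x + y + z = 6 and x - y = 2.
# L = f + lam1 * (6 - (x + y + z)) + lam2 * (2 - (x - y))

def lagrangian_residuals(x, y, z, lam1, lam2):
    """All five partial derivatives of L; each is zero at a stationary point."""
    return (
        2 * x - lam1 - lam2,   # dL/dx
        2 * y - lam1 + lam2,   # dL/dy
        2 * z - lam1,          # dL/dz
        6 - (x + y + z),       # dL/dlam1
        2 - (x - y),           # dL/dlam2
    )

# Candidate (x, y, z, lam1, lam2) found by hand.
solution = (3.0, 1.0, 2.0, 4.0, 2.0)
print(lagrangian_residuals(*solution))
```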

2. What if the Lagrange multiplier is zero?

λ = 0 means the constraint is not restricting the objective at that point: it would be a stationary point of f even without the constraint. This often happens with inequality constraints when the solution lies strictly inside the feasible region.

3. Are Lagrange multipliers only for equality constraints?

No. For inequality constraints like g(x) ≤ 0, you add the conditions λ ≥ 0 and λ g(x) = 0. This is the KKT extension. It is used extensively in machine learning and economics.

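4. How do Lagrange multipliers relate to gradient descent?

In machine learning, you sometimes need to optimize a loss function subject to constraints. Lagrange multipliers fold the constraints into a single function, the Lagrangian. Its stationary points are saddle points rather than minima, so plain gradient descent on all variables does not work. A common approach is gradient descent on the original variables combined with gradient ascent on λ, or a penalty or augmented Lagrangian method.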
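
As a rough illustration of gradient methods applied to a Lagrangian, the sketch below runs descent on x and y and ascent on λ, since the solution is a saddle point of L rather than a minimum. The problem (minimize x² + y² subject to x + y = 2), the step size, and the iteration count are all assumptions chosen so that this toy case converges; real training setups need more care.

```python
def descent_ascent(steps=2000, lr=0.05):
    """Gradient descent in (x, y) and gradient ascent in lam on
    L = x^2 + y^2 + lam * (2 - x - y)."""
    x, y, lam = 0.0, 0.0, 0.0
    for _ in range(steps):
        dx = 2 * x - lam          # dL/dx
        dy = 2 * y - lam          # dL/dy
        dlam = 2 - x - y          # dL/dlam
        # Simultaneous update: step down in x and y, step up in lam.
        x, y, lam = x - lr * dx, y - lr * dy, lam + lr * dlam
    return x, y, lam

x, y, lam = descent_ascent()
print(round(x, 6), round(y, 6), round(lam, 6))
```

The iterates should settle near x = y = 1 and λ = 2, the same answer you get by solving the three equations by hand.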

5. Why do we square the constraint sometimes?

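You should not replace a constraint g = c with its squared form (g − c)² = 0. Squaring alters the derivative and can introduce false solutions. Worse, the squared form has zero gradient at every feasible point, so the condition ∇f = λ ∇g can no longer be satisfied. A constraint that naturally contains squares, like x² + y² = R², is fine as it stands. Always keep the constraint as given.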
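
One way to see the trouble with squaring is to compare gradients at a known optimum. The sketch below reuses the fencing problem (f = x*y, x + y = 50, optimum at (25, 25)); the squared constraint `g_squared` and the helper `grad` are constructions made for this illustration.

```python
def grad(func, x, y, h=1e-6):
    """Central-difference gradient of func at (x, y)."""
    return (
        (func(x + h, y) - func(x - h, y)) / (2 * h),
        (func(x, y + h) - func(x, y - h)) / (2 * h),
    )

f = lambda x, y: x * y                       # objective from the fencing example
g = lambda x, y: x + y                       # original constraint: g = 50
g_squared = lambda x, y: (x + y - 50) ** 2   # squared form: g_squared = 0

point = (25.0, 25.0)   # the known optimum

print(grad(f, *point))          # nonzero
print(grad(g, *point))          # nonzero, so grad f = lam * grad g is solvable
print(grad(g_squared, *point))  # essentially zero, so no lam can work
```

With the squared constraint, the right-hand side of ∇f = λ ∇g vanishes while the left-hand side does not, so the method finds no candidate at all.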

Conclusion

Lagrange multipliers are one of the most brilliant tools ever created. They take a messy constrained optimization problem and turn it into a clean system of equations. From Lagrange points in space to Lagrangian mechanics on Earth, from the Euler–Lagrange equation in physics to Lagrange interpolation in numerical analysis, the spirit of Lagrange's work is everywhere. Even the calculus of variations owes its foundation to the same thinking.

Just as ancient Greek scientists changed modern science by asking geometric questions, Joseph-Louis Lagrange changed mathematics by asking algebraic ones. Whether you are an engineer, economist, or data scientist, mastering Lagrange multipliers gives you a superpower. You will see constraints not as barriers but as guides. Now go solve your own optimization problems with confidence.