Understanding Transfer Learning in Deep Learning

Imagine learning to play the guitar. After months of practice, you can strum chords, fingerpick melodies, and understand music theory. Now you want to learn the ukulele. Do you start from zero? Of course not. You transfer your knowledge. The finger positions are different, but rhythm, timing, and musical concepts carry over. This is the essence of transfer learning, a remarkably powerful technique that has revolutionized how we build artificial intelligence systems.

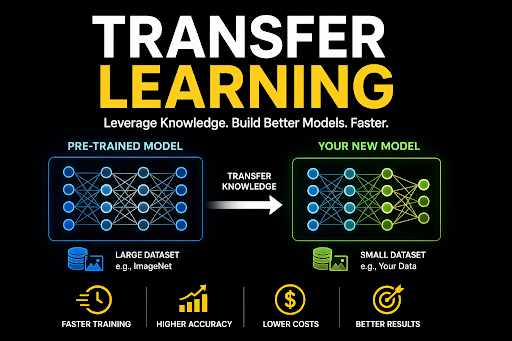

Transfer learning allows AI models to leverage knowledge gained from one task to excel at another related task. Instead of training every new model from scratch, which requires massive datasets and enormous computational resources, transfer learning starts with a pre-trained model that already understands basic patterns. This pre-trained model is then adapted to the specific task at hand with minimal additional training.

The impact of transfer learning on artificial intelligence is hard to overstate. It has democratized access to state-of-the-art AI, enabling small teams and individual researchers to build sophisticated models without billion-dollar computing budgets. Transfer learning has significantly accelerated the rise of modern machine learning by making AI development faster, cheaper, and more accessible.

The Core Concept: Knowledge Reuse in AI

At its heart, transfer learning is about knowledge reuse. A neural network trained on a large, general-purpose image dataset learns to detect edges, textures, shapes, and patterns. These low-level features are broadly reusable. Whether you are identifying cats in photos or tumors in X-rays, the ability to detect edges and textures is valuable.

When you apply transfer learning, you take a pre-trained neural network and repurpose it for a new task. The early layers, which detect basic features, are often kept frozen because they have already learned useful representations. Only the later layers, which make task-specific predictions, are retrained on your new data.

This approach works because neural networks learn hierarchical representations. Early layers detect simple patterns like edges and corners. Middle layers combine these into shapes and object parts. Later layers assemble these into complete objects. The early and middle layers are broadly useful. Only the final layers need to be adapted for specific tasks.

Why Use Transfer Learning Instead of Training from Scratch?

Training a deep neural network from scratch is challenging. It can require millions of labeled examples, weeks of computation on expensive GPUs, and careful hyperparameter tuning. For many organizations, this is simply not feasible. Transfer learning offers a practical alternative.

The first reason to use transfer learning is data efficiency. Many real-world problems lack large labeled datasets. Medical imaging, rare species identification, and specialized manufacturing inspection all have limited examples. Transfer learning can achieve high accuracy with small datasets by leveraging knowledge learned from larger, related datasets.

The second reason is computational efficiency. Training a model from scratch can cost thousands of dollars in cloud computing resources. Transfer learning can reduce this cost by 90 percent or more, depending on the model and task. The pre-trained model has already done the heavy lifting, so you only need a fraction of the training time to adapt it to your task.

The third reason is performance. Counterintuitively, transfer learning often produces better results than training from scratch, especially when target data is limited. With very large target datasets the gap tends to narrow, but a pre-trained model usually still converges faster. The pre-trained model has learned features from a diverse dataset, and these often generalize better than features learned from a narrow, task-specific dataset.

Key Techniques for Implementing Transfer Learning

There are several ways to implement transfer learning, each with different tradeoffs. The choice depends on the size of your dataset and the similarity between the source and target tasks.

Feature Extraction vs. Fine-Tuning

Feature extraction is the simplest form of transfer learning. You take a pre-trained model, remove its final classification layer, and use the remaining layers as a fixed feature extractor. You then train a new classifier, often a simple linear layer or a support vector machine, on top of these features.

Feature extraction works well when your target dataset is very small. The pre-trained model’s weights are frozen, so no backpropagation through the large network is needed. This is computationally efficient and reduces the risk of overfitting, because only the small new classifier is trained.
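As a rough illustration of feature extraction, here is a minimal sketch in plain Python. Everything in it is an assumption made for the example: `frozen_features` is a hypothetical stand-in for the frozen layers of a real pre-trained network, `FROZEN_W` holds made-up weights, and the four-example dataset is a toy. Only the small logistic-regression head is trained.

```python
import math

# Hypothetical stand-in for a frozen pre-trained network: a fixed,
# non-trainable mapping from raw inputs to features (made-up weights).
FROZEN_W = [[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]]

def frozen_features(x):
    """Apply the fixed 'pre-trained' layer (linear map + ReLU). Never updated."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_head(data, epochs=200, lr=0.5):
    """Train only a logistic-regression head on top of the frozen features."""
    w, b = [0.0] * len(FROZEN_W), 0.0
    # Features are computed once, because the extractor never changes.
    cached = [(frozen_features(x), y) for x, y in data]
    for _ in range(epochs):
        for f, y in cached:
            p = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b)
            g = p - y  # gradient of the log loss with respect to the logit
            w = [wi - lr * g * fi for wi, fi in zip(w, f)]
            b -= lr * g
    return w, b

def predict(x, w, b):
    f = frozen_features(x)
    return 1 if sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) >= 0.5 else 0

# Tiny toy target dataset, made up for illustration.
data = [([1.0, 0.0], 1), ([0.9, 0.1], 1), ([0.0, 1.0], 0), ([0.1, 0.9], 0)]
frozen_before = [row[:] for row in FROZEN_W]
w, b = train_head(data)
accuracy = sum(predict(x, w, b) == y for x, y in data) / len(data)
```

Because the extractor is fixed, its outputs can be cached once and reused every epoch, which is where much of the compute saving comes from. With a real model, the same pattern would apply with the pre-trained network in place of `frozen_features`.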

Fine-tuning is more powerful but also riskier. In fine-tuning, you unfreeze some or all of the pre-trained layers and continue training on your target dataset. This allows the model to adapt its features to the specific nuances of your task.

The distinction between fine-tuning and transfer learning is subtle. Fine-tuning is a specific technique within the broader transfer learning framework. Many practitioners use the terms interchangeably, but fine-tuning specifically refers to continued training of pre-trained weights.

When fine-tuning, it is common to freeze the early layers and only fine-tune the later layers. The early layers detect general features that rarely need adjustment. The later layers are more task-specific and benefit most from fine-tuning.
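The freezing pattern described here can be sketched without any particular framework. In the example below, the `Layer` class, the layer names, the weights, and the dummy gradients are all hypothetical. Deep learning libraries expose the same idea through their own mechanisms, such as a per-parameter trainable flag.

```python
from dataclasses import dataclass

@dataclass
class Layer:
    name: str
    weights: list
    trainable: bool = True

def freeze_all_but_last(layers, n_trainable):
    """Mark only the last n_trainable layers as trainable; freeze the rest."""
    cutoff = len(layers) - n_trainable
    for i, layer in enumerate(layers):
        layer.trainable = i >= cutoff

def sgd_step(layers, grads, lr):
    """Apply one gradient step, skipping frozen layers entirely."""
    for layer in layers:
        if layer.trainable:
            g = grads[layer.name]
            layer.weights = [w - lr * gi for w, gi in zip(layer.weights, g)]

# Hypothetical four-layer model, ordered from early to late layers.
model = [
    Layer("edges", [0.2, -0.1]),
    Layer("shapes", [0.5, 0.3]),
    Layer("objects", [-0.4, 0.7]),
    Layer("classifier", [0.1, 0.1]),
]

freeze_all_but_last(model, n_trainable=2)
grads = {layer.name: [1.0, 1.0] for layer in model}  # dummy gradients
sgd_step(model, grads, lr=0.01)
```

After the step, the two early layers keep their original weights while the two later layers have moved. Raising `n_trainable` unfreezes progressively earlier layers.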

Choosing the Right Pre-trained Model (ResNet, VGG, BERT)

Selecting the right pre-trained model is critical for successful transfer learning. For computer vision tasks, models trained on ImageNet are the standard choice. The ImageNet subset commonly used for pre-training (ILSVRC 2012) contains about 1.28 million training images across 1,000 categories, providing a rich set of learned features.

ResNet and VGG architectures are popular choices. ResNet, short for Residual Network, uses skip connections that allow very deep networks to train effectively. ResNet50, with 50 layers, is a common starting point. VGG is simpler and older but still effective for many tasks.

For natural language processing, fine-tuning typically starts with BERT or one of its variants. BERT, or Bidirectional Encoder Representations from Transformers, is pre-trained on massive text corpora using masked language modeling. Its representations capture context, grammar, and semantics.

The Hugging Face model hub provides access to thousands of pre-trained models for text, image, audio, and multimodal tasks. This has made transfer learning accessible to practitioners without deep expertise in model architecture.

The Role of Domain Adaptation

Domain adaptation addresses a common challenge in transfer learning. The source domain, where the pre-trained model was trained, may differ from the target domain where you want to apply it. An ImageNet model was trained on natural photographs, while your target domain might be medical X-rays or satellite imagery.

Domain adaptation techniques help bridge this gap. They adjust the model to account for differences in data distribution between source and target domains. Simple approaches include data augmentation that makes target images more similar to source images. More sophisticated approaches use adversarial training to learn domain-invariant features.
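One very simple way to make target data look more like source data is to match basic statistics. The sketch below uses moment matching, which is a technique chosen for this illustration rather than one the article prescribes. It shifts and scales a single feature so that its mean and standard deviation match assumed source-domain values. The function name and all numbers are hypothetical.

```python
import statistics

def align_to_source(target_values, source_mean, source_std):
    """Shift and scale one feature so its mean and std match the source statistics."""
    t_mean = statistics.fmean(target_values)
    t_std = statistics.pstdev(target_values)
    if t_std == 0:
        # Constant feature: only the mean can be matched.
        return [source_mean for _ in target_values]
    return [(v - t_mean) / t_std * source_std + source_mean for v in target_values]

# Hypothetical statistics of a pixel-intensity feature in the source domain.
source_mean, source_std = 0.45, 0.22

# Hypothetical target-domain values that are brighter and less varied.
target_pixels = [0.80, 0.85, 0.90, 0.95]

aligned = align_to_source(target_pixels, source_mean, source_std)
```

This only corrects first- and second-order differences for one feature at a time. Larger gaps between domains generally call for the more sophisticated approaches mentioned above.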

When source and target domains are very different, transfer learning may still provide benefits, but the gains are smaller. Understanding the relationship between your source and target domains is essential for setting realistic expectations.

Practical Benefits of Transfer Learning

The advantages of transfer learning extend beyond technical convenience. They have real business and research implications.

Drastically Reducing Training Time and Compute Costs

Reducing training time is one of the most tangible benefits of transfer learning. Training ResNet50 from scratch on ImageNet can take days to weeks on a single GPU, depending on the hardware. With transfer learning, you can fine-tune the same model for a new task in hours or even minutes.

This reduction in training time translates directly to cost savings. Cloud GPU time is expensive. By using transfer learning, organizations can experiment with more models, iterate faster, and deploy solutions sooner.

For researchers with limited computing resources, transfer learning opens doors. A graduate student with a single GPU can now fine-tune state-of-the-art models that would have required far more compute to train from scratch.

Achieving High Accuracy with Small Datasets

Few-shot learning techniques are closely related to transfer learning. Both address the challenge of learning from limited data. Transfer learning excels when you have dozens or hundreds of examples per class, rather than the thousands or millions needed for training from scratch.

In medical imaging, labeled datasets are often small because expert radiologists are expensive and time-constrained. Transfer learning can enable accurate disease detection from chest X-rays or MRI scans using just hundreds of labeled examples.

In manufacturing, defect detection models can be built with transfer learning using limited examples of defective products. This allows quality control systems to be deployed quickly without waiting months to collect large datasets.

Improving Model Generalization

Models trained from scratch on small datasets tend to overfit. They memorize the training examples rather than learning generalizable patterns. Transfer learning improves generalization because the pre-trained model has already learned robust features from a large, diverse dataset.

The pre-trained weights act as an implicit regularizer. With a small learning rate and limited training, the fine-tuned model tends to stay close to its starting point. This inductive bias reduces overfitting and often leads to better performance on test data.
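The idea of staying close to the original weights can also be made explicit by adding a penalty on the distance from the pre-trained values. The sketch below is one way to express that under stated assumptions. The function names, the `strength` value, and the weights are all hypothetical, and the article itself does not say that such a penalty is required.

```python
def l2_to_pretrained(weights, pretrained, strength):
    """Penalty that grows as fine-tuned weights drift from the pre-trained ones."""
    return strength * sum((w - p) ** 2 for w, p in zip(weights, pretrained))

def penalized_loss(task_loss, weights, pretrained, strength=0.1):
    """Task loss plus the distance-to-pretrained penalty."""
    return task_loss + l2_to_pretrained(weights, pretrained, strength)

pretrained = [0.5, -0.3, 0.8]      # hypothetical pre-trained weights
close = [0.5, -0.25, 0.8]          # small drift after gentle fine-tuning
far = [1.5, 0.7, -0.2]             # large drift after aggressive fine-tuning

loss_close = penalized_loss(1.0, close, pretrained)
loss_far = penalized_loss(1.0, far, pretrained)
```

For the same task loss, the weights that drifted further incur a larger total loss, so the optimizer is nudged back toward the pre-trained solution.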

Transfer learning can also make models more robust to distribution shift. If your deployment environment differs slightly from your training environment, a model built on pre-trained features is more likely to maintain its performance.

Common Challenges and Best Practices

While transfer learning is powerful, it is not magic. There are common pitfalls to avoid.

How to Avoid Negative Transfer

Negative transfer occurs when transfer learning hurts performance instead of helping it. This typically happens when the source task and target task are too different. Using an ImageNet model for medical X-ray classification might still work, but using a language model pre-trained on text for image classification would likely fail.

To avoid negative transfer, evaluate the similarity between your source and target domains. If they are very different, consider training from scratch or using a different source model. You can also use domain adaptation techniques to bridge the gap.

A related failure mode is fine-tuning too aggressively on a very small target dataset. The model may overfit to the target data and lose the beneficial features learned from the source domain. Use smaller learning rates and freeze more layers when target data is limited.

Matching Source and Target Domains Effectively

The success of transfer learning depends on the relationship between source and target domains. Ideally, the source domain should be broader and more diverse than the target domain. The standard ImageNet pre-training set covers 1,000 object categories, making it a good source for many vision tasks.

When selecting a pre-trained model, consider the data it was trained on. For satellite imagery, a model trained on aerial photographs might be better than one trained on natural photographs. For medical imaging, models trained on medical datasets are increasingly available.

Sometimes, you can use multiple source domains. This is called multi-source transfer learning. By combining knowledge from several pre-trained models, you can sometimes achieve better performance than with any single source.

Fine-Tuning Hyperparameters for Optimal Performance

Fine-tuning requires careful hyperparameter selection. The learning rate should be smaller than when training from scratch, typically 10 to 100 times smaller. A learning rate that is too high can destroy the pre-trained features. One that is too low will slow or prevent adaptation.
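The 10 to 100 times rule of thumb is simple arithmetic, sketched below. The helper names, the from-scratch learning rate of 0.1, and the gradient value are assumptions for illustration, not recommended settings.

```python
def fine_tune_lr_range(scratch_lr, min_factor=10, max_factor=100):
    """Return (lowest, highest) fine-tuning learning rates for the 10-100x rule."""
    return scratch_lr / max_factor, scratch_lr / min_factor

def update_size(grad, lr):
    """Magnitude of a single gradient-descent update for a given gradient."""
    return abs(lr * grad)

scratch_lr = 0.1                 # hypothetical from-scratch learning rate
low, high = fine_tune_lr_range(scratch_lr)

grad = 2.0                       # hypothetical gradient on one pre-trained weight
step_scratch = update_size(grad, scratch_lr)
step_fine_tune = update_size(grad, high)
```

With a from-scratch rate of 0.1, the suggested fine-tuning range is roughly 0.001 to 0.01. For the same gradient, each update then moves a pre-trained weight at least ten times less, which is what protects the learned features.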

The number of layers to fine-tune depends on dataset size. With very small datasets, fine-tune only the final layer. With larger datasets, fine-tune more layers. With abundant data, you can fine-tune the entire network.
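This heuristic can be written as a small helper. The thresholds of 1,000 and 100,000 examples and the choice of unfreezing half the layers for mid-sized datasets are hypothetical placeholders, not values from the article. The right cutoffs depend on the model and the task.

```python
def layers_to_fine_tune(n_examples, n_layers, small=1_000, large=100_000):
    """Suggest how many of the last layers to unfreeze, based on dataset size."""
    if n_examples < small:
        return 1                      # very small dataset: final layer only
    if n_examples >= large:
        return n_layers               # abundant data: the entire network
    return max(1, n_layers // 2)      # in between: assumed to be the later half

suggestions = {
    n: layers_to_fine_tune(n, n_layers=50)
    for n in (200, 10_000, 1_000_000)
}
```

In practice it is worth treating the number of unfrozen layers as a hyperparameter and checking a few settings against validation data.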

Early stopping is essential. Monitor validation performance and stop fine-tuning when it plateaus or begins to degrade. This prevents overfitting to the target dataset.
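A minimal sketch of early stopping on validation loss follows. The `patience` and `min_delta` parameters and the loss values are assumptions for illustration. A real training loop would compute the loss after each epoch and restore the weights saved at the best epoch.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a list of per-epoch validation losses."""
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            # Validation improved: remember this epoch and reset the counter.
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    # Never ran out of patience: training went through every epoch.
    return len(val_losses) - 1, best_epoch

# Hypothetical validation losses: improving, then starting to overfit.
val_losses = [0.90, 0.70, 0.60, 0.58, 0.59, 0.61, 0.65]
stop_epoch, best_epoch = early_stopping_epoch(val_losses)
```

Here training stops at epoch 5 (counting from 0), after two epochs without improvement, and the best weights are those from epoch 3.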

Frequently Asked Questions

1. What is transfer learning in simple terms?

Transfer learning means taking a model trained on one task and reusing it as a starting point for a different but related task, saving time and data.

2. When should I use transfer learning instead of training from scratch?

Use transfer learning when you have limited labeled data, limited computational resources, or when a suitable pre-trained model exists for a related task.

3. What is the difference between feature extraction and fine-tuning?

Feature extraction keeps the pre-trained weights frozen and trains only a new classifier on top of them. Fine-tuning continues training the pre-trained weights on the new task.

4. How do I choose the right pre-trained model?

Choose a model trained on data similar to your target domain. For images, ResNet or VGG trained on ImageNet are good starting points. For text, BERT or its variants are standard.

5. What is negative transfer?

Negative transfer happens when using a pre-trained model hurts performance instead of helping it, usually because the source and target tasks are too different.

6. Can transfer learning work with very small datasets?

Yes, transfer learning excels with small datasets. Feature extraction with a pre-trained model can achieve good results with just dozens of examples per class.

Conclusion

Transfer learning has fundamentally changed how artificial intelligence systems are built. It enables developers to achieve state-of-the-art performance with a fraction of the data and computational resources previously required. From medical imaging to natural language processing, transfer learning has democratized access to advanced AI.

The core insight of transfer learning is simple. Knowledge learned from one task can be reused for another. This mirrors how humans learn, building on previous experience rather than starting from zero each time. In that sense, transfer learning represents a shift toward more efficient, more human-like learning.

For practitioners, transfer learning offers a practical path to building better models faster. In healthcare, it has enabled diagnostic tools that help save lives. Modern AI applications, from autonomous vehicles to recommendation systems, increasingly rely on transfer learning to deliver performance with efficiency.

To deepen your understanding of related AI techniques, explore the history of AI agents and how autonomous systems leverage learned knowledge. Self-supervised learning is also worth studying as a complementary approach for learning from unlabeled data.

Whether you are a researcher pushing the boundaries of transfer learning or a practitioner building real-world applications, mastering the technique is essential. The field continues to advance, with new techniques and pre-trained models emerging regularly. By understanding the fundamentals and following best practices, you can harness the power of transfer learning to build better AI models faster.