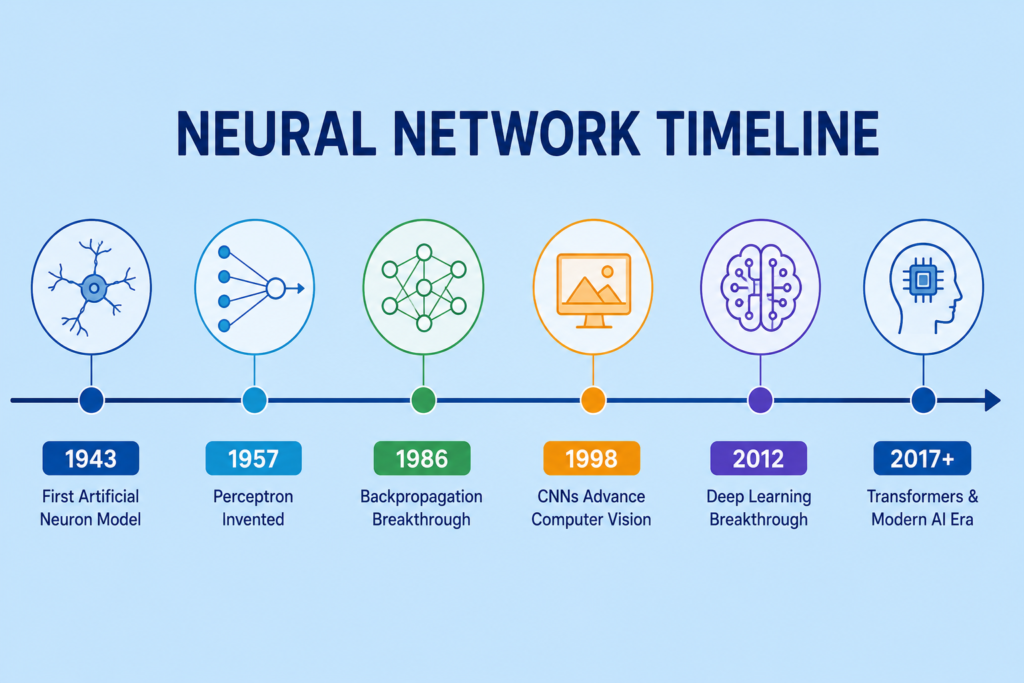

The neural network timeline is one of the most exciting stories in the history of modern technology. From simple mathematical neuron models in the 1940s to advanced generative AI systems in 2026, neural networks have transformed science, computing, healthcare, robotics, and communication.

Today, artificial intelligence systems can recognize speech, generate realistic images, drive cars, and even write code. These breakthroughs did not happen overnight. The complete neural network timeline is filled with scientific discovery, foundational research, technological progress, and major paradigm shifts.

This timeline also reflects the growth of computing power, data availability, and hardware accelerators. Scientists spent decades trying to imitate the learning ability of the human brain with artificial neurons.

In this article, we will explore the complete neural network timeline and explain every major milestone that shaped artificial intelligence from 1943 to 2026.

The Birth of Artificial Neurons (1943)

The first major event in the neural network timeline happened in 1943.

Warren McCulloch and Walter Pitts introduced the McCulloch-Pitts model, one of the earliest mathematical representations of artificial neurons.

This system simulated basic neural behavior using logic and binary computation.

The McCulloch-Pitts model introduced:

- Artificial neurons

- Binary activation

- Logical operations

- Simple node connections

Their work became the foundation of modern neural architecture and connectionism.
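As a rough illustration of the ideas listed above, a McCulloch-Pitts style unit can be sketched in a few lines of Python. The function names, weights, and thresholds here are illustrative assumptions, and the negative weight used for NOT is a common simplification rather than the exact inhibition mechanism of the 1943 paper.

```python
def mcculloch_pitts(inputs, weights, threshold):
    """Return 1 (fire) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def logical_and(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return mcculloch_pitts([a, b], [1, 1], threshold=2)


def logical_or(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mcculloch_pitts([a, b], [1, 1], threshold=1)


def logical_not(a):
    # Simplification: a negative weight with threshold 0 inverts the input.
    return mcculloch_pitts([a], [-1], threshold=0)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

The unit has no learning rule: the weights and thresholds are chosen by hand, which is the gap the Perceptron later filled.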

The model showed that networks of simple threshold units could, in principle, compute logical functions, which suggested that machines might imitate aspects of biological intelligence using mathematics.

Alan Turing and Intelligent Machines (1950)

Another critical milestone in the neural network timeline came from Alan Turing in 1950.

Turing proposed the imitation game, now known as the Turing Test, in his paper “Computing Machinery and Intelligence.”

He asked a revolutionary question:

“Can machines think?”

This question became central to the future of machine intelligence and the broader history of AI.

Turing’s ideas inspired researchers to explore cognitive simulation, algorithmic learning, and intelligent systems.

His early work also influenced later developments in neural computation and symbolic AI.

The Perceptron Era Begins (1957 – 1969)

The next giant step in the neural network timeline occurred in 1957 when Frank Rosenblatt invented the Perceptron.

The Perceptron became one of the first machine learning systems capable of learning from examples.

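The mathematical formula, with $f$ denoting the step activation function:

$$y = f\left(\sum_{i} w_i x_i + b\right)$$

Where:

- $w_i$ = weights

- $x_i$ = inputs

- $b$ = bias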

This breakthrough became one of the most important events in the neural network timeline because it introduced trainable artificial neurons.
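To make "trainable" concrete, here is a minimal sketch of the classic perceptron learning rule applied to the logical AND function. The function names, the learning rate of 1.0, and the choice of AND as training data are assumptions made for illustration; this is not a reconstruction of Rosenblatt's original hardware or code.

```python
def predict(weights, bias, inputs):
    """Step activation applied to the weighted sum of inputs plus bias."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation >= 0 else 0


def train_perceptron(samples, learning_rate=1.0, epochs=20):
    """Nudge weights toward the target every time a prediction is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Truth table for logical AND (illustrative training data).
AND_DATA = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

weights, bias = train_perceptron(AND_DATA)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_DATA]
print(weights, bias, predictions)
```

Because AND is linearly separable, the rule settles on weights that classify all four examples correctly within a few passes over the data.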

Researchers believed perceptrons could eventually create intelligent machines capable of reasoning and recognition.

The Perceptron era also encouraged new academic papers on learning systems and adaptive computation.

The First AI Winter and Research Collapse (1969 – 1980)

The excitement surrounding neural networks suddenly slowed during the late 1960s and 1970s.

Marvin Minsky and Seymour Papert criticized perceptrons in their famous book Perceptrons.

They showed that single-layer perceptrons cannot represent functions that are not linearly separable, such as XOR (exclusive OR).
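The limitation can be illustrated with a small brute-force search. The sketch below tries every integer weight and bias in a small range (an arbitrary choice for illustration) and checks whether a single step unit reproduces a truth table. A search like this is not a proof, but it matches the known result: AND has a solution, while XOR has none because no single line separates its outputs.

```python
from itertools import product


def step_unit(w1, w2, b, x1, x2):
    """Single-layer perceptron with two inputs and a step activation."""
    return 1 if w1 * x1 + w2 * x2 + b >= 0 else 0


def solvable(truth_table, values=range(-5, 6)):
    """Return True if some (w1, w2, b) in the grid reproduces the truth table."""
    for w1, w2, b in product(values, repeat=3):
        if all(step_unit(w1, w2, b, x1, x2) == target
               for (x1, x2), target in truth_table.items()):
            return True
    return False


AND = {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1}
XOR = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

print("AND solvable:", solvable(AND))
print("XOR solvable:", solvable(XOR))
```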

This criticism contributed to major funding cuts and slowed neural network research dramatically.

This period became known as the first AI winter.

Progress on neural networks nearly stopped during this era, in part because computing power was limited and datasets were too small.

However, foundational research continued quietly in universities and research laboratories.

Hebbian Learning and Adaptive Intelligence

One important concept that predates the Perceptron and outlasted the AI winter was Hebbian learning.

In 1949, Donald Hebb proposed an idea that is often summarized as:

“Neurons that fire together wire together.”

This theory became the basis of the Hebbian learning rule.

Hebbian learning explained how neural systems strengthen connections through repeated activation.
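A common textbook form of this rule increases each weight in proportion to the product of input and output activity. The sketch below assumes that simple form; the function name, learning rate, and activity pattern are illustrative only.

```python
def hebbian_update(weights, inputs, output, learning_rate=0.1):
    """Strengthen each connection in proportion to input * output activity."""
    return [w + learning_rate * x * output for w, x in zip(weights, inputs)]


weights = [0.0, 0.0, 0.0]
pattern = [1, 1, 0]  # the first two inputs are active, the third is silent

for _ in range(5):
    output = 1  # assumption: the receiving neuron fires alongside the pattern
    weights = hebbian_update(weights, pattern, output)

print(weights)
```

Connections from inputs that are repeatedly active together with the output grow, while the connection from the silent input stays at zero.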

This idea strongly influenced modern machine learning, pattern recognition, and neural optimization methods.

Later neural network research would depend heavily on adaptive weight learning techniques that echo Hebb’s work.

The Connectionist Revival (1980 – 1995)

The 1980s marked a major revival in the neural network timeline.

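Researchers showed that multi-layer neural networks could overcome the limitations of single-layer perceptrons when trained with backpropagation, an approach popularized in 1986 by David Rumelhart, Geoffrey Hinton, and Ronald Williams.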

Backpropagation allowed neural networks to adjust weights efficiently during training.

The formula used:

$$w_{\text{new}} = w_{\text{old}} - \eta \frac{\partial E}{\partial w}$$
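The following sketch applies this update rule to a deliberately tiny network: one input, one hidden sigmoid unit, and one sigmoid output, with a squared error $E$ and learning rate $\eta$. The architecture, starting weights, and variable names are assumptions chosen to keep the chain rule visible, not a description of any historical implementation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(w1, w2, x):
    """One input -> one hidden sigmoid unit -> one sigmoid output."""
    h = sigmoid(w1 * x)
    y = sigmoid(w2 * h)
    return h, y


def loss(w1, w2, x, target):
    """Squared error E = 0.5 * (y - target)^2."""
    _, y = forward(w1, w2, x)
    return 0.5 * (y - target) ** 2


def backprop(w1, w2, x, target):
    """Apply the chain rule from the output back to each weight."""
    h, y = forward(w1, w2, x)
    delta_out = (y - target) * y * (1 - y)        # dE/d(output pre-activation)
    grad_w2 = delta_out * h                       # dE/dw2
    delta_hidden = delta_out * w2 * h * (1 - h)   # dE/d(hidden pre-activation)
    grad_w1 = delta_hidden * x                    # dE/dw1
    return grad_w1, grad_w2


w1, w2, x, target, eta = 0.5, -0.3, 1.0, 1.0, 0.5
grad_w1, grad_w2 = backprop(w1, w2, x, target)

# One gradient descent step: w_new = w_old - eta * dE/dw
new_w1 = w1 - eta * grad_w1
new_w2 = w2 - eta * grad_w2

print("loss before:", loss(w1, w2, x, target))
print("loss after: ", loss(new_w1, new_w2, x, target))
```

Real networks repeat this step over many weights and many examples, but the update applied to each weight has the same form.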

This breakthrough became one of the biggest neural network breakthroughs in AI history.

The connectionist revival transformed machine learning research and restarted interest in neural computation.

Scientists realized that deep neural systems could solve far more complex tasks than earlier perceptrons.

The neural network timeline entered a completely new phase during this period.

Convolutional Neural Networks and Computer Vision (1989 – 2010)

The rise of convolutional neural networks changed the future of computer vision forever.

Yann LeCun developed CNN architectures capable of recognizing visual patterns efficiently.

CNNs introduced:

- Feature extraction

- Shared weights

- Pooling layers

- Hierarchical learning
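Two of the ideas listed above, shared weights and pooling, can be sketched without any libraries. In the example below the same small kernel slides over every position of the image, and max pooling then keeps the strongest response in each region. The image, the hand-written edge-detecting kernel, and the function names are illustrative assumptions; in a real CNN the kernel values are learned during training.

```python
def conv2d(image, kernel):
    """Slide one shared kernel over the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def max_pool(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    rows = range(0, len(feature_map) - size + 1, size)
    cols = range(0, len(feature_map[0]) - size + 1, size)
    return [
        [
            max(feature_map[r + i][c + j]
                for i in range(size) for j in range(size))
            for c in cols
        ]
        for r in rows
    ]


# 5x5 image with a vertical edge between the second and third columns.
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hand-written kernel that responds to dark-to-bright vertical edges.
kernel = [[-1, 1],
          [-1, 1]]

features = conv2d(image, kernel)
pooled = max_pool(features)
print(features)
print(pooled)
```

The feature map responds only where the edge sits, and the pooled output keeps that response at a lower resolution.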

LeNet became one of the first successful convolutional neural networks.

This era became a major milestone in the neural network timeline because machines achieved strong performance on practical image recognition tasks such as handwritten digit recognition.

The development of CNNs also accelerated industry adoption of AI technologies.

Recurrent Networks and Sequential Learning (1990 – 2015)

Another major chapter in the neural network timeline involved recurrent neural networks (RNNs).

Unlike CNNs, RNNs processed sequential data such as speech and language.

In 1997, Sepp Hochreiter and Jürgen Schmidhuber introduced Long Short-Term Memory (LSTM) networks to address the vanishing gradient problem, which limited how long simple RNNs could retain information.
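The vanishing gradient problem can be seen in a one-unit recurrent network. In the sketch below, the hidden state is updated as `h = tanh(w_x * x + w_h * h + b)`, and the gradient that flows back through time is the product of the local derivatives `w_h * (1 - h^2)`. The scalar setup, the weight values, and the function names are simplifying assumptions made for illustration.

```python
import math


def rnn_forward(inputs, w_x, w_h, b=0.0):
    """Run a one-unit recurrent network and return every hidden state."""
    h = 0.0
    states = []
    for x in inputs:
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


def gradient_through_time(states, w_h):
    """Magnitude of d h_t / d h_0 after each step (product of local derivatives)."""
    grad = 1.0
    magnitudes = []
    for h in states:
        grad *= w_h * (1.0 - h * h)
        magnitudes.append(abs(grad))
    return magnitudes


# A single signal at the first step followed by 49 steps of silence.
inputs = [1.0] + [0.0] * 49
states = rnn_forward(inputs, w_x=1.0, w_h=0.5)
magnitudes = gradient_through_time(states, w_h=0.5)

print("after 1 step:  ", magnitudes[0])
print("after 10 steps:", magnitudes[9])
print("after 50 steps:", magnitudes[-1])
```

With a recurrent weight of 0.5, each step shrinks the gradient by at least half, so the influence of early inputs on learning fades quickly. LSTM gating was designed to keep that signal alive over longer spans.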

LSTMs became essential for:

- Language translation

- Speech recognition

- Time-series prediction

- Chatbots

Sequential learning systems dramatically improved natural language processing capabilities.

The Deep Learning Explosion (2012 – 2016)

The modern deep learning era took off in 2012.

AlexNet shocked the AI community by dominating the ImageNet competition using GPU acceleration and deep convolutional networks.

This breakthrough completely changed the neural network timeline.

Key factors behind the success included:

- Massive datasets

- Hardware accelerators

- Improved GPUs

- Better optimization algorithms

- Large-scale model training

The AI industry rapidly expanded after this achievement.

Researchers like Geoffrey Hinton, Yann LeCun, and Yoshua Bengio became famous as the Godfathers of Deep Learning.

The neural network timeline entered its fastest growth phase during these years.

Transformer Revolution and Generative AI (2017 – 2026)

One of the biggest revolutions in the neural network timeline started in 2017.

Researchers at Google introduced transformer neural networks through the famous paper “Attention Is All You Need.”

Transformers replaced the recurrence used in older sequence models with self-attention, which lets every position in a sequence attend directly to every other position.
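A minimal sketch of scaled dot-product self-attention is shown below. For simplicity it assumes the token vectors serve directly as queries, keys, and values. Real transformers first apply learned projection matrices and use multiple attention heads, which are omitted here. The function names and the toy token vectors are illustrative.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of all value vectors.
        output = [sum(w * v[j] for w, v in zip(weights, values))
                  for j in range(len(values[0]))]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors (assumption: used as queries, keys, and values).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of weights shows how strongly one token attends to every token in the sequence, and all rows are computed independently, which is what makes the operation easy to parallelize.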

This innovation dramatically improved:

- Language understanding

- Text generation

- Translation systems

- AI reasoning

The Transformer revolution eventually powered:

- Chatbots

- Image generators

- Voice assistants

- Coding assistants

- Generative AI systems

Companies such as OpenAI, Google DeepMind, and Anthropic accelerated the modern AI race.

Today, even many free AI tools depend heavily on transformer-based models.

The neural network timeline continues evolving rapidly as generative AI becomes more advanced every year.

Neural Networks in Real Industries

The neural network timeline is not only about academic research. Neural systems now power real-world industries.

Healthcare

Neural networks assist doctors in disease diagnosis, medical imaging, and treatment planning.

Self Driving Cars

Autonomous vehicles use neural systems for object detection and navigation.

Speech Recognition

Modern assistants use neural networks for natural conversation and voice understanding.

The neural network timeline now impacts nearly every modern technology sector.

The Future of Neural Networks (2026 and Beyond)

The future of neural networks looks even more promising.

Researchers are currently exploring:

- Spiking neural networks

- Quantum AI systems

- Brain-inspired computing

- Energy-efficient neural chips

- Artificial general intelligence

Future AI systems may eventually surpass human performance in many specialized tasks.

However, ethical concerns surrounding privacy, security, and employment continue to grow.

The next decade may become the most important chapter in the entire neural network timeline.

Frequently Asked Questions (FAQs)

What is the neural network timeline?

The neural network timeline is the chronological history of neural network development from early artificial neurons in 1943 to modern generative AI systems.

Who invented neural networks?

Warren McCulloch and Walter Pitts introduced one of the earliest artificial neuron models in 1943.

Why was the Perceptron important?

The Perceptron was one of the first machine learning systems capable of learning from examples using adjustable weights.

What caused the AI winters?

AI winters happened because early systems failed to meet expectations, and computers lacked sufficient processing power.

Why are transformers important?

Transformer neural networks revolutionized natural language processing through self-attention mechanisms and large-scale learning.

Conclusion

The neural network timeline represents one of humanity’s greatest technological journeys. From the McCulloch-Pitts model in 1943 to transformer neural networks in 2026, neural systems have transformed how machines learn, reason, and interact with the world.

Every era introduced new paradigm shifts, scientific discoveries, and technological progress that pushed AI closer to human-like intelligence. The Perceptron era, AI winters, connectionist revival, Deep Learning era, and Transformer revolution all played essential roles in shaping modern artificial intelligence.

Today, neural networks power industries, scientific research, healthcare, robotics, communication, and generative AI systems. As computing power and data availability continue improving, the future of neural networks may become even more revolutionary than anything we have seen before.