The perceptron controversy remains one of the most dramatic events in artificial intelligence history. During the 1950s and 1960s, scientists believed neural networks might eventually create machines capable of human-like intelligence. The invention of the perceptron generated enormous excitement across universities, research laboratories, and government agencies.

However, one academic critique nearly destroyed the entire neural network movement.

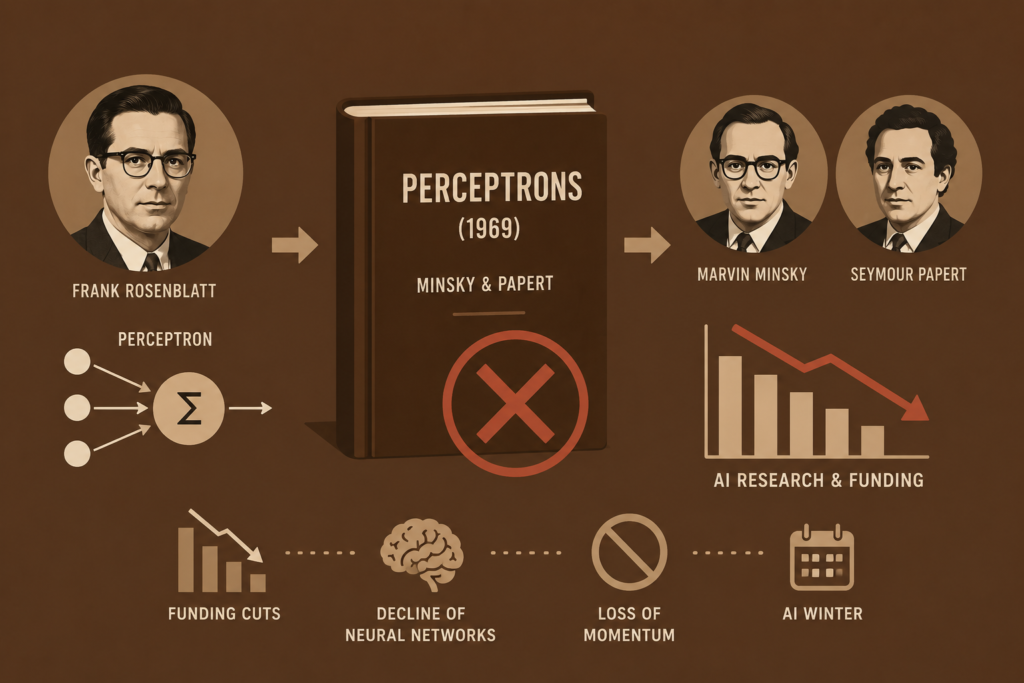

The perceptron controversy began after a famous 1969 book called Perceptrons criticized the limitations of early neural networks. The book, written by Marvin Minsky and Seymour Papert, dramatically changed research priorities in artificial intelligence.

In the years that followed, funding for neural network research was cut, connectionism went into decline, and symbolic AI dominated the field for many years.

The story of the perceptron controversy is not only about mathematics and computational geometry. It is also a story of academic debate, institutional impact, scientific setbacks, and the struggle between competing visions of machine intelligence.

In this article, we will explore the complete history of the perceptron controversy, why the perceptron became controversial, how one book nearly killed AI research, and how neural networks eventually returned stronger than ever.

The Rise of the Perceptron (1957 – 1969)

To understand the perceptron controversy, we must first understand why the perceptron became so important.

In 1957, Frank Rosenblatt invented the perceptron while working at Cornell Aeronautical Laboratory.

The perceptron was one of the first machines that could learn a classification rule from example data.

It used:

- Weighted inputs

- Binary classification

- Signal detection

- Adaptive learning

- Single-layer neural computation

The perceptron represented a huge breakthrough in early machine intelligence research.

Scientists believed the system might eventually:

- Recognize speech

- Understand images

- Translate language

- Simulate human cognition

This excitement shaped the early history of AI.

The perceptron became one of the biggest AI milestones of the twentieth century.

The Foundations of Neural Network Research

The perceptron itself was built upon earlier neural network theories.

In 1943, Warren McCulloch and Walter Pitts introduced the first artificial neuron model.

Their model became the starting point for later neural network research.

In 1949, Donald Hebb proposed what is now called the Hebbian learning rule: connections between neurons strengthen when the neurons are active together.

Together, these ideas helped launch neural network research as a field.

Researchers believed artificial neurons could eventually imitate biological learning systems.

This growing excitement laid the foundation for the future perceptron controversy.

How the Perceptron Worked

The perceptron controversy centered around the mathematical limitations of the perceptron itself.

The perceptron operated as a single-layer neural network.

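Its basic equation:

$$y = f\left(\sum_{i=1}^{n} w_i x_i + b\right)$$

Where:

- $x_i$ = input values

- $w_i$ = weights

- $b$ = bias

- $f$ = a step function that outputs 1 when its argument is positive and 0 otherwise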

The perceptron learned through weight adjustments.

This learning system became one of the earliest forms of supervised machine learning.

The perceptron could learn any linearly separable problem, meaning any task where a single straight line (or, in higher dimensions, a flat plane) divides the two classes.
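The learning rule can be illustrated with a minimal sketch. It assumes a step activation that fires when the weighted sum is positive, a learning rate of 1, and the logical AND function as a linearly separable toy dataset. The names `predict`, `train_perceptron`, and `AND_DATA` are hypothetical and chosen only for this example; this is not Rosenblatt's original implementation.

```python
def predict(weights, bias, x):
    """Step activation over the weighted sum of the inputs."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron rule: shift each weight by lr * (target - output) * input."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in samples:
            delta = target - predict(weights, bias, x)
            if delta != 0:
                errors += 1
                weights = [w + lr * delta * xi for w, xi in zip(weights, x)]
                bias += lr * delta
        if errors == 0:  # a full pass with no mistakes: training has converged
            break
    return weights, bias


# Logical AND is linearly separable, so the rule settles on a valid boundary.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
and_weights, and_bias = train_perceptron(AND_DATA)
and_outputs = [predict(and_weights, and_bias, x) for x, _ in AND_DATA]
print(and_outputs)  # [0, 0, 0, 1]
```

Weights change only when the prediction is wrong, which is why training stops as soon as one full pass over the data produces no errors.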

However, hidden weaknesses eventually triggered the perceptron controversy.

Marvin Minsky and Seymour Papert

The major turning point in the perceptron controversy came from Marvin Minsky and Seymour Papert.

Both researchers were leading figures in symbolic AI research.

Unlike connectionist researchers, Minsky and Papert believed intelligence should be built through symbolic reasoning and logical manipulation rather than neural learning systems.

Their criticism of perceptrons eventually became one of the biggest academic debates in AI history.

This conflict is often remembered as Minsky versus Rosenblatt.

The Perceptrons Book (1969)

In 1969, Minsky and Papert published the book Perceptrons.

This book became the center of the perceptron controversy.

The authors mathematically proved that single-layer perceptrons could not solve certain computational problems.

One of the most famous examples was the XOR problem.

The XOR function is not linearly separable.

This meant a simple perceptron could not classify XOR outputs correctly.

The book used mathematical proofs and computational geometry to demonstrate serious limitations in perceptron systems.

The criticism shocked the AI research community.

The XOR Problem Explained

The XOR problem became one of the most important parts of the perceptron controversy.

XOR stands for “exclusive OR.”

Its output logic:

| Input A | Input B | XOR Output |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

No single straight decision boundary can separate the inputs that produce 1 from the inputs that produce 0.

Because perceptrons relied on linear classification, they failed on XOR tasks.
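The failure can be checked with a few lines of algebra. The sketch below assumes a perceptron with two weights $w_1$ and $w_2$ and a bias $b$ that outputs 1 exactly when $w_1 A + w_2 B + b > 0$. These symbols are introduced here for illustration. Each row of the XOR truth table then imposes one condition:

$$
\begin{aligned}
(A, B) = (0, 0) \mapsto 0 &: \quad b \le 0 \\
(A, B) = (0, 1) \mapsto 1 &: \quad w_2 + b > 0 \\
(A, B) = (1, 0) \mapsto 1 &: \quad w_1 + b > 0 \\
(A, B) = (1, 1) \mapsto 0 &: \quad w_1 + w_2 + b \le 0
\end{aligned}
$$

Adding the second and third conditions gives $w_1 + w_2 + 2b > 0$, so $w_1 + w_2 + b > -b \ge 0$ by the first condition. That contradicts the fourth condition, so no choice of weights and bias satisfies all four rows at once.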

This limitation became a major argument against early neural networks.

The perceptron controversy spread rapidly throughout universities and research institutions after this discovery.

Why the Criticism Became So Powerful

The perceptron controversy became devastating because the AI field was still young and fragile.

Research funding depended heavily on optimistic predictions.

When Minsky and Papert exposed the limitations of perceptrons, many government agencies and institutions lost confidence in neural network research.

This led to:

- Funding reductions from agencies such as ARPA (later renamed DARPA)

- Research limitations

- Institutional skepticism

- Declining academic interest

Neural network research suddenly appeared weak compared to symbolic AI systems.

The perceptron controversy dramatically changed research priorities across computer science.

The Death of Connectionism

One of the biggest consequences of the perceptron controversy was the decline of connectionism.

Connectionism focused on learning systems inspired by biological neural networks.

After the Perceptrons book, many researchers abandoned neural approaches completely.

Instead, symbolic AI became dominant.

Researchers focused on:

- Rule-based systems

- Expert systems

- Logical reasoning

- Symbolic computation

The neural network community nearly disappeared during the 1970s.

This period is often associated with the first AI winter.

Was Minsky Completely Wrong?

The perceptron controversy is still debated today because Minsky and Papert were not wrong about the mathematics.

Their mathematical proofs were accurate.

Single-layer perceptrons truly cannot solve problems that are not linearly separable, such as XOR.

However, critics argue the book unintentionally discouraged research into multi-layer neural systems.

At the time, researchers had no practical method for training multi-layer neural networks.

The real issue was not neural networks themselves, but the technological limitations of the era.

The perceptron controversy may have delayed AI progress by decades.

The Return of Neural Networks (1980 – 2010)

Although the perceptron controversy nearly destroyed neural network research, connectionism eventually returned.

During the 1980s, researchers developed and popularized backpropagation algorithms for training multi-layer neural networks.

This breakthrough is a central chapter in the history of backpropagation and of the multilayer perceptron.

Scientists such as Geoffrey Hinton helped revive neural network research.

Multi-layer systems successfully solved many problems impossible for single-layer perceptrons.
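XOR itself shows why an extra layer helps. The sketch below wires a two-layer network by hand rather than training it: the weights and thresholds are assumptions picked for clarity, and the names `step` and `xor_two_layer` are hypothetical. One hidden unit computes OR, another computes AND, and the output unit fires when OR is true but AND is not.

```python
def step(value):
    """Threshold activation: output 1 only when the input is positive."""
    return 1 if value > 0 else 0


def xor_two_layer(a, b):
    """XOR as a two-layer network with hand-picked weights (no training)."""
    h_or = step(a + b - 0.5)    # hidden unit 1: fires when at least one input is 1
    h_and = step(a + b - 1.5)   # hidden unit 2: fires only when both inputs are 1
    return step(h_or - h_and - 0.5)  # output unit: OR but not AND


xor_outputs = [xor_two_layer(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(xor_outputs)  # [0, 1, 1, 0]
```

Each unit here is still an ordinary linear threshold unit. The hidden layer maps the four inputs to points that a single line can separate, which is what a single-layer perceptron could not do on the raw inputs.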

The world finally realized neural networks still had enormous potential.

The Deep Learning Revolution

The modern deep learning revolution completely changed perceptions surrounding the perceptron controversy.

Advanced neural systems eventually powered:

- Speech recognition

- Computer vision

- Robotics

- Generative AI

- Autonomous vehicles

This success belongs to the broader history of deep learning, including transformer neural networks.

Modern AI systems proved neural learning approaches could become incredibly powerful with enough computing power and data.

Even today’s free AI tools rely heavily on advanced neural architectures descended from the perceptron.

The Historical Importance of the Controversy

The perceptron controversy became one of the most instructive episodes in the history of AI.

It showed how:

- Academic critique can reshape entire industries

- Technological limitations can slow innovation

- Research funding affects scientific progress

- Early failures do not always mean permanent failure

The controversy also demonstrated the importance of long-term scientific vision.

Neural networks eventually succeeded because later researchers continued exploring ideas abandoned during the AI winters.

Frequently Asked Questions (FAQs)

What was the perceptron controversy?

The perceptron controversy was a major debate in AI after the 1969 book Perceptrons criticized the limitations of single-layer neural networks.

Who created the perceptron?

Frank Rosenblatt invented the perceptron in 1957.

What was the XOR problem?

The XOR problem showed that single-layer perceptrons cannot learn classification tasks that are not linearly separable.

Did the perceptron controversy stop AI research?

It severely slowed neural network research and contributed to the first AI winter.

Why did neural networks return later?

Researchers later developed multi-layer neural systems and backpropagation algorithms capable of solving more complex problems.

Conclusion

The perceptron controversy remains one of the most influential turning points in artificial intelligence history. The publication of Perceptrons in 1969 exposed important limitations in early neural network systems and contributed to more than a decade of reduced funding, academic skepticism, and declining connectionist research.

Although the criticism was mathematically correct, it also unintentionally slowed the development of neural networks during a critical period in AI history. Eventually, researchers learned to train multi-layer architectures with backpropagation, which overcame many early limitations.

Today, the legacy of the perceptron controversy serves as a powerful reminder that scientific setbacks do not always represent permanent failure. Modern deep learning systems, generative AI, and advanced neural networks all evolved from ideas that once seemed impossible.