The history of the perceptron is one of the most important chapters in artificial intelligence and machine learning. Long before modern deep learning systems, transformers, and generative AI existed, a scientist named Frank Rosenblatt introduced a learning machine that changed how researchers thought about computing.

The perceptron was one of the first practical artificial neural systems capable of learning from data. This breakthrough helped lay the foundation for neural networks, supervised learning, computer vision, and modern AI research.

Today, the history of the perceptron remains essential because nearly every modern neural network builds on ideas found in Rosenblatt’s original design. From image recognition systems to autonomous robots, the influence of the perceptron can still be seen in today’s machine intelligence technologies.

The story of the perceptron combines neuroscience, mathematics, hardware-based AI, optical sensors, and early robotics research. It also reflects the rise, fall, and rebirth of neural network research during the twentieth century.

In this article, we will explore the history of the perceptron: Frank Rosenblatt’s invention, the scientific ideas behind it, and how it ultimately shaped the future of artificial intelligence.

The Scientific World Before the Perceptron (1943 – 1957)

Before tracing the history of the perceptron, it is important to examine the scientific foundations that came earlier.

In 1943, Warren McCulloch and Walter Pitts introduced the first mathematical artificial neuron model.

Their research became a cornerstone of later neural network development.

The McCulloch-Pitts neuron showed that networks of simple threshold units could, in principle, compute logical functions.

At the same time, researchers explored:

- Threshold logic

- Binary signals

- Neural activity modeling

- Signal detection

- Computational neuroscience

Scientists began wondering whether machines could learn directly from experience instead of relying only on fixed programming.

These ideas laid the foundation for the perceptron.

Who Was Frank Rosenblatt?

Frank Rosenblatt was an American psychologist and computer scientist deeply interested in machine intelligence and human cognition.

Rosenblatt believed machines could imitate biological learning systems.

His work combined:

- Psychology

- Neuroscience

- Mathematics

- Computer engineering

- Cognitive modeling

He worked at the Cornell Aeronautical Laboratory, where he conducted Navy-supported research on artificial intelligence and pattern recognition.

Rosenblatt is one of the central figures in any account of who invented neural networks.

His invention of the perceptron transformed the future of machine learning forever.

The Invention of the Perceptron (1958)

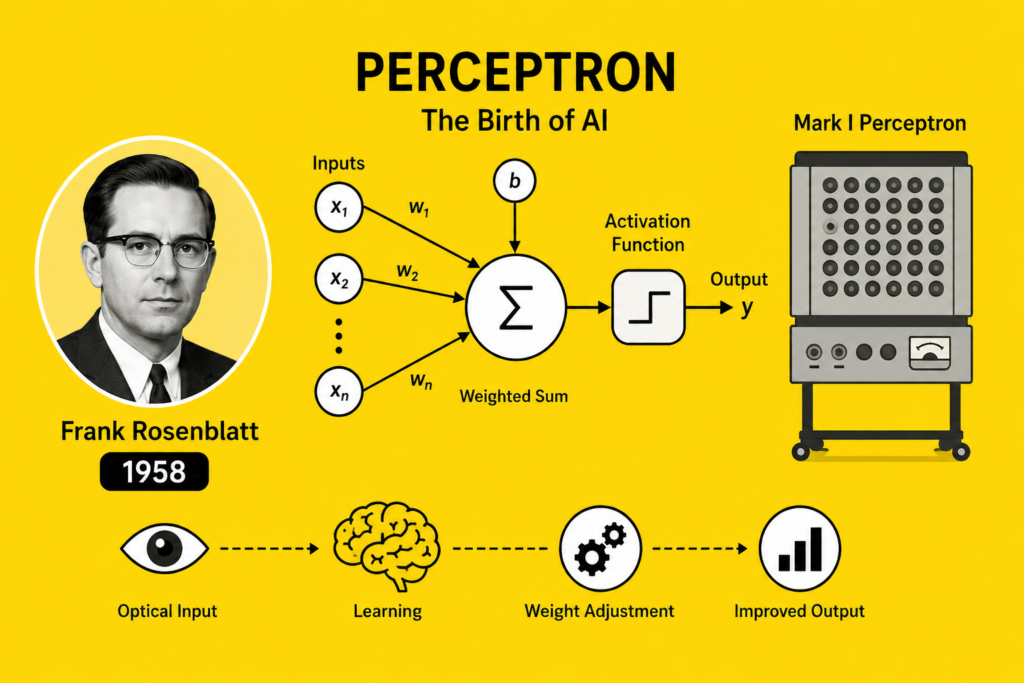

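The major breakthrough in the history of the perceptron came in 1958.

Frank Rosenblatt introduced the perceptron, which was first demonstrated as a simulation on an IBM 704 computer and then built as dedicated hardware, the Mark I Perceptron, one of the first learning machines capable of binary classification.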

The Mark I Perceptron used:

- Optical sensors

- Weighted inputs

- Step function activation

- Adaptive learning

- Hardware-based AI

The system attempted to simulate biological neural processing.

Unlike earlier static neuron models, the perceptron could adjust internal weights during training.

This was revolutionary.

The perceptron became one of the first machine learning systems capable of improving performance through experience.

The perceptron is widely regarded as one of the origins of supervised learning in artificial intelligence.

How the Perceptron Worked

The history of the perceptron cannot be understood without examining the perceptron’s mathematical structure.

The perceptron operated as a single-layer neural network.

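The basic formula:

$$y = f\left(\sum_{i=1}^{n} w_i x_i + b\right)$$

Where:

- $x_i$ = input signals

- $w_i$ = adjustable weights

- $b$ = bias

- $y$ = output

- $f$ = step activation function, which outputs 1 when its argument reaches the threshold and 0 otherwise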
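The weighted-sum-and-threshold computation described above can be sketched in a few lines of Python. This is a minimal illustration, not Rosenblatt’s implementation: it assumes a step function that fires when the weighted sum is at or above zero, and the names `step` and `perceptron_output` as well as the hand-picked weights (chosen so the unit behaves like a logical AND) are hypothetical.

```python
def step(z):
    """Step activation: 1 when the weighted sum is at or above zero, else 0."""
    return 1 if z >= 0 else 0


def perceptron_output(inputs, weights, bias):
    """Compute the weighted sum of the inputs plus the bias, then threshold it."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return step(z)


# Hand-picked (hypothetical) weights that make the unit act like logical AND.
weights = [1.0, 1.0]
bias = -1.5

patterns = [(0, 0), (0, 1), (1, 0), (1, 1)]
outputs = [perceptron_output(p, weights, bias) for p in patterns]
print(outputs)  # [0, 0, 0, 1]
```

Only the pattern (1, 1) pushes the weighted sum above zero, so it is the only input classified as 1.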

The perceptron learned through weight adjustments.

When errors occurred, the system updated weights to improve future predictions.

The learning rule:

$$w_i \leftarrow w_i + \eta \, (t - y) \, x_i$$

Where:

- $\eta$ = learning rate

- $t$ = target output

- $y$ = predicted output

This learning process became a foundational concept in modern neural network training.
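As a rough sketch of this error-driven update, the following Python code trains a single perceptron on the logical OR function. The function names, the zero initial weights, the learning rate of 0.1, and the fixed number of passes are all assumptions made for illustration; the bias is updated with the same error term as the weights, as if it were a weight on a constant input of 1.

```python
def predict(inputs, weights, bias):
    """Step-activated weighted sum: returns 1 or 0."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if z >= 0 else 0


def train(samples, learning_rate=0.1, epochs=20):
    """Apply the perceptron update once per sample, for a fixed number of passes."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(inputs, weights, bias)  # -1, 0, or +1
            # Nudge each weight in proportion to the error and its input.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Logical OR is linearly separable, so the rule can learn it.
or_samples = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]
weights, bias = train(or_samples)
predictions = [predict(x, weights, bias) for x, _ in or_samples]
print(predictions)  # [0, 1, 1, 1]
```

When a prediction is already correct the error is zero and nothing changes, so the weights move only in response to mistakes.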

The perceptron was one of the earliest practical examples of machine learning.

Why the Perceptron Was Revolutionary

The perceptron was revolutionary because Rosenblatt’s system could actually learn.

Earlier systems relied on fixed logical programming.

The perceptron demonstrated:

- Adaptive behavior

- Pattern recognition

- Signal classification

- Learning mechanisms

Rosenblatt believed perceptrons might eventually:

- Recognize speech

- Translate language

- Understand images

- Mimic human cognition

At the time, these predictions seemed almost unbelievable.

The perceptron became one of the biggest breakthroughs in early computer vision and AI research.

It also helped spur wider interest in neural network research.

The Mark I Perceptron Machine

The Mark I Perceptron was not merely a theoretical idea.

It was an actual machine built with hardware components and photoreceptor cells.

The system included:

- Electrical circuits

- Motors

- Sensor arrays

- Analog hardware

The U.S. Navy supported much of this research because scientists believed intelligent machines could become useful for defense systems and robotics.

The Mark I Perceptron represented one of the earliest examples of hardware-based AI in history.

This achievement became a major milestone in the history of neural networks.

The Perceptron Convergence Theorem

One major contribution in the history of the perceptron was the Perceptron Convergence Theorem.

The theorem proved that if the training data is linearly separable, the perceptron learning rule finds weights that classify it correctly after a finite number of updates.

This concept became central to:

- Linear classifiers

- Pattern recognition

- Neural optimization

- Signal processing

However, the guarantee applies only to linearly separable data, which points to an important limitation.

A single-layer perceptron cannot solve problems that are not linearly separable.
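The convergence behavior can be observed with a small experiment. The sketch below, written under assumed settings (zero initial weights, a learning rate of 1.0, and a cap of 100 passes), keeps training until one full pass over the data produces no mistakes. The function name `train_until_converged` is hypothetical, and the number of passes needed depends on the data order and starting weights.

```python
def predict(inputs, weights, bias):
    """Step-activated weighted sum: returns 1 or 0."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if z >= 0 else 0


def train_until_converged(samples, learning_rate=1.0, max_epochs=100):
    """Train until a full pass makes no mistakes; return None for epochs if the cap is hit."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for epoch in range(1, max_epochs + 1):
        mistakes = 0
        for inputs, target in samples:
            error = target - predict(inputs, weights, bias)
            if error != 0:
                mistakes += 1
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        if mistakes == 0:
            return weights, bias, epoch
    return weights, bias, None


# Logical AND is linearly separable, so training stops after a finite number of passes.
and_samples = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias, epochs_needed = train_until_converged(and_samples)
print("converged:", epochs_needed is not None)
print([predict(x, weights, bias) for x, _ in and_samples])
```

For data that is not linearly separable, the same loop would run until `max_epochs` and return `None` for the pass count, since some sample is always misclassified.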

This weakness later caused major controversy in AI research.

The Perceptron Controversy (1969)

The most dramatic event in the history of the perceptron happened in 1969.

Marvin Minsky and Seymour Papert published the famous book Perceptrons.

The book demonstrated serious limitations of single-layer perceptrons.

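A single-layer perceptron cannot solve certain logical problems such as XOR classification, because no single straight line separates the inputs that should output 1 from those that should output 0.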
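One way to get a feel for the XOR limitation is a brute-force search. The sketch below tries every combination of two weights and a bias on a coarse grid (an arbitrary choice of values from -5.0 to 5.0 in steps of 0.5) and counts how many combinations classify all four input patterns correctly. The helper names are hypothetical, and a grid search is only an illustration, not a proof. The underlying reason is algebraic: XOR would require $b < 0$, $w_1 + b \ge 0$, $w_2 + b \ge 0$, and $w_1 + w_2 + b < 0$ at the same time, and adding the middle two conditions contradicts the last.

```python
from itertools import product


def classifies_all(samples, w1, w2, b):
    """True if a single step unit with these parameters gets every sample right."""
    return all((1 if w1 * x1 + w2 * x2 + b >= 0 else 0) == target
               for (x1, x2), target in samples)


def count_solutions(samples, grid):
    """Count parameter triples (w1, w2, b) on the grid that classify all samples."""
    return sum(1 for w1, w2, b in product(grid, repeat=3)
               if classifies_all(samples, w1, w2, b))


# Candidate values -5.0, -4.5, ..., 5.0 (an arbitrary illustrative grid).
grid = [i / 2 for i in range(-10, 11)]

and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
xor_samples = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

print(count_solutions(and_samples, grid) > 0)  # True: AND has many solutions
print(count_solutions(xor_samples, grid))      # 0: no single unit computes XOR
```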

This criticism triggered the famous perceptron controversy.

Funding for neural network research declined dramatically.

Many researchers moved away from neural systems.

This period is often associated with the first AI winter.

Perceptron research entered a difficult phase during the 1970s.

The Revival of Neural Networks (1980 – 2010)

Although perceptrons lost popularity for a time, the history of the perceptron did not end.

Researchers later realized that multi-layer neural systems could overcome perceptron limitations.

This breakthrough is closely tied to:

- The history of backpropagation

- The history of the multilayer perceptron

Scientists like Geoffrey Hinton helped revive neural network research during the 1980s.

Backpropagation allowed networks to train across multiple hidden layers efficiently.
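To illustrate why an extra layer removes the XOR limitation, the sketch below wires three step units into a two-layer network. Note that the weights here are chosen by hand rather than learned by backpropagation, so this shows only what a multi-layer network can represent, not how it is trained. The function names and the specific weights (an OR unit and a NAND unit feeding an AND unit) are illustrative assumptions.

```python
def unit(inputs, weights, bias):
    """A single step-activated unit, the same building block as a perceptron."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if z >= 0 else 0


def xor_network(x1, x2):
    """Two hidden units (OR and NAND) feed one output unit (AND)."""
    h_or = unit([x1, x2], [1.0, 1.0], -0.5)      # fires if at least one input is 1
    h_nand = unit([x1, x2], [-1.0, -1.0], 1.5)   # fires unless both inputs are 1
    return unit([h_or, h_nand], [1.0, 1.0], -1.5)  # fires only if both hidden units fire


results = [xor_network(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(results)  # [0, 1, 1, 0]
```

The hidden layer re-encodes the inputs so that the output unit faces a linearly separable problem, which is the kind of problem a single perceptron can handle.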

Modern deep learning systems eventually evolved from these ideas.

The perceptron became recognized as the ancestor of all modern neural architectures.

The Perceptron’s Influence on Modern AI

The influence of the perceptron can still be seen everywhere today.

Modern AI systems use principles first introduced by Rosenblatt:

- Weighted learning

- Pattern classification

- Neural adaptation

- Layered processing

These ideas eventually powered:

- Speech recognition

- Computer vision

- Robotics

- Self-driving vehicles

- Generative AI

Even today’s most widely used AI tools depend heavily on neural learning systems inspired by the perceptron.

The history of the perceptron remains one of the most important stories in computer science.

Frank Rosenblatt’s Lasting Legacy

Frank Rosenblatt’s ideas were far ahead of their time.

Although his work faced criticism, modern AI eventually proved that neural learning systems could become extremely powerful.

Today, Rosenblatt is recognized as one of the true pioneers of machine intelligence.

His contributions influenced:

- Deep learning

- Neural optimization

- Cognitive computing

- Pattern recognition

Without Rosenblatt, modern AI systems might never have evolved into their current form.

The history of the perceptron is ultimately the story of how one scientist changed technology forever.

Frequently Asked Questions (FAQs)

What is a perceptron?

A perceptron is a simple artificial neural network that performs binary classification and is trained with supervised learning.

Who invented the perceptron?

Frank Rosenblatt invented the perceptron in 1958 at Cornell Aeronautical Laboratory.

Why was the perceptron important?

The perceptron became one of the first machine learning systems capable of learning from data automatically.

What caused the perceptron controversy?

Marvin Minsky and Seymour Papert showed that single-layer perceptrons could not solve certain logical problems, such as XOR.

Is the perceptron still important today?

Yes. Modern neural networks and deep learning systems evolved directly from perceptron concepts.

Conclusion

The history of the perceptron represents one of the most important revolutions in the history of artificial intelligence. Frank Rosenblatt’s 1958 invention showed that machines could learn from data through adaptive neural systems, laying the foundations of machine learning and neural computation.

Although the perceptron faced criticism during the AI winters, its ideas survived and later became the basis of deep learning, modern neural networks, and generative AI systems. The concepts of weighted learning, pattern recognition, and supervised training continue driving modern artificial intelligence today.

From early hardware-based AI systems to modern transformer models, the influence of the perceptron remains visible throughout the entire AI revolution.