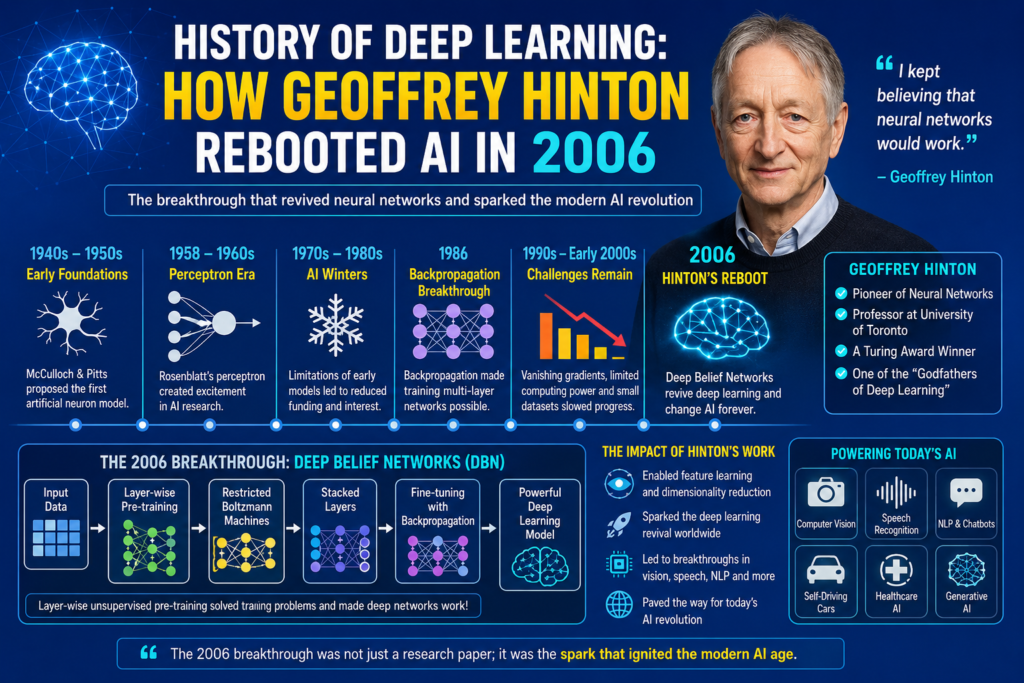

The history of deep learning changed forever in 2006, when Geoffrey Hinton and his research team shocked the artificial intelligence world with a revolutionary breakthrough. At a time when many scientists believed neural networks had failed, Hinton introduced new training methods that revived deep neural networks and opened the door to modern AI.

Today, deep learning powers voice assistants, image recognition, chatbots, recommendation systems, self-driving technology, and generative AI. However, before 2006, neural networks were considered slow, unreliable, and nearly impossible to train effectively.

The turning point came with Deep Belief Networks (DBN), layer-wise pre-training, and Restricted Boltzmann Machines. Hinton’s famous 2006 Science paper showed that deep neural networks could finally be trained effectively. This moment became the true birth of modern AI.

The deep learning revival transformed the future of machine learning and completely changed the direction of artificial intelligence research worldwide.

Early Neural Network Dreams (1940 – 1980)

To understand the history of deep learning, we must first explore the early foundations of neural networks.

In 1943, Warren McCulloch and Walter Pitts introduced the first mathematical model of an artificial neuron. Their work became the basis for computational neuroscience and machine intelligence.

The McCulloch-Pitts neuron showed that networks of simple threshold units could, in principle, compute logical functions, loosely similar to the way biological neurons combine signals.
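
To make the idea concrete, here is a minimal sketch of a McCulloch-Pitts style threshold unit. It assumes a simplified modern reading of the 1943 model: binary inputs, hand-fixed weights, and a firing threshold, with inhibition modeled as a negative weight. The helper names `mp_neuron`, `and_gate`, `or_gate`, and `not_gate` are hypothetical, and nothing here is learned.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) if the weighted sum of binary inputs reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def and_gate(a, b):
    # Both excitatory inputs must be active to reach a threshold of 2.
    return mp_neuron([a, b], [1, 1], threshold=2)


def or_gate(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mp_neuron([a, b], [1, 1], threshold=1)


def not_gate(a):
    # One inhibitory (negative) weight with threshold 0 inverts the input.
    return mp_neuron([a], [-1], threshold=0)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND:", and_gate(a, b), "OR:", or_gate(a, b))
```

Changing only the threshold turns the same unit from AND into OR. That is the sense in which simple threshold neurons can implement logic.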

Later, Frank Rosenblatt invented the perceptron in 1958. This system inspired huge excitement because researchers believed intelligent machines were finally possible.

The perceptron could learn simple patterns through training, but only patterns whose classes can be separated by a straight line (more generally, a hyperplane). Despite these limitations, neural network research expanded rapidly during the 1960s.
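
The perceptron's training procedure can be sketched as follows. This is a simplified version of the perceptron learning rule under stated assumptions: 0/1 targets, a step activation, weights and bias starting at zero, and a learning rate of 1. `predict` and `train_perceptron` are hypothetical helper names, not Rosenblatt's original implementation.

```python
def predict(weights, bias, x):
    # Step activation: output 1 if the weighted sum plus bias is positive.
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Nudge weights toward the target only when a sample is misclassified."""
    n = len(samples[0][0])
    weights, bias = [0.0] * n, 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            if error:
                errors += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if errors == 0:  # a full pass with no mistakes: training has converged
            break
    return weights, bias


OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
w, b = train_perceptron(OR_DATA)
print([predict(w, b, x) for x, _ in OR_DATA])  # [0, 1, 1, 1]
```

On OR, the rule stops after a few passes because OR is linearly separable. For such data, the perceptron convergence theorem guarantees it eventually stops making mistakes.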

Many scientists believed neural systems could eventually imitate human thinking and learning.

The First AI Winter and Neural Network Collapse (1970 – 1985)

Excitement around neural networks collapsed after Marvin Minsky and Seymour Papert published their 1969 book Perceptrons, which sharply criticized the approach.

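Their book showed that single-layer perceptrons could not solve certain simple tasks, including the XOR (exclusive-or) problem, because no single straight line can separate XOR's positive examples from its negative ones.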
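
The XOR limitation can be checked directly. A threshold unit outputs 1 when $w_1 x_1 + w_2 x_2 + b > 0$. XOR would require all four of these conditions:

- $b \le 0$
- $w_1 + b > 0$
- $w_2 + b > 0$
- $w_1 + w_2 + b \le 0$

Adding the middle two gives $w_1 + w_2 + b > -b \ge 0$, which is a contradiction. The minimal sketch below uses a simplified perceptron learning rule (hypothetical helper names, capped at 1,000 passes over the data). It never completes a pass over XOR without a mistake.

```python
def predict(weights, bias, x):
    # Step activation on a single linear threshold unit.
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train_perceptron(samples, epochs=1000, lr=1.0):
    """Return (weights, bias, converged) after at most `epochs` passes."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)
            if error:
                errors += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if errors == 0:
            return weights, bias, True   # every sample classified correctly
    return weights, bias, False          # never got all samples right


XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
w, b, converged = train_perceptron(XOR_DATA)
correct = sum(predict(w, b, x) == t for x, t in XOR_DATA)
print(converged, correct)  # converged is False; at most 3 of the 4 points are right
```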

This criticism contributed to the first AI winter, a period in which AI funding and research declined dramatically.

Many researchers abandoned neural networks entirely. Symbolic AI systems became more popular because they appeared more practical and easier to control.

During this period, depth itself became a serious challenge. There was no widely used method for training the hidden layers of multi-layer networks, and computers were too slow for large experiments.

Even though some researchers continued exploring neural learning, deep architectures remained largely ignored.

Backpropagation Revived Neural Research (1986)

The next major breakthrough arrived in 1986 when Geoffrey Hinton, David Rumelhart, and Ronald Williams popularized backpropagation.

Backpropagation allowed neural networks to adjust weights efficiently across multiple layers. It uses the chain rule to pass error gradients backward, from the output layer to earlier layers.

The training formula used gradient descent to minimize prediction errors:

$$w \leftarrow w - \eta \frac{\partial E}{\partial w}$$

Where:

- $w$ = network weights

- $\eta$ = learning rate

- $E$ = error function
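
Here is a worked example of the update rule $w \leftarrow w - \eta \, \partial E / \partial w$ on a toy problem. It assumes a single weight and the hypothetical error function $E(w) = (w - 3)^2$, whose gradient is $2(w - 3)$. Starting from $w = 0$ with $\eta = 0.1$, the first update gives $0 - 0.1 \times (-6) = 0.6$. Repeated updates approach the minimum at $w = 3$.

```python
def error(w):
    # Hypothetical toy error function with its minimum at w = 3.
    return (w - 3.0) ** 2


def grad(w):
    # dE/dw = 2 (w - 3)
    return 2.0 * (w - 3.0)


def gradient_descent(w, eta=0.1, steps=50):
    for _ in range(steps):
        w = w - eta * grad(w)  # w <- w - eta * dE/dw
    return w


w_final = gradient_descent(w=0.0)
print(w_final)  # very close to 3.0
```

With this learning rate, the distance to the minimum shrinks by a factor of $1 - 2\eta = 0.8$ per step. Any $\eta$ above 1 would make this particular example diverge.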

This innovation became central to modern neural training.

With backpropagation, researchers finally had a practical learning mechanism for multi-layer networks.

Backpropagation allowed multilayer perceptrons to solve more complicated tasks than earlier perceptrons.
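
What backpropagation computes can be sketched on a tiny network. The example assumes a hypothetical 2-2-1 network with sigmoid units, squared error $E = \tfrac{1}{2}(y - t)^2$, and arbitrary fixed weights. It applies the chain rule from the output back to the first layer. It then checks the first-layer gradients against a finite-difference estimate, a standard sanity check that is not part of training itself.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Tiny 2-2-1 network with sigmoid units. params = (W1, b1, w2, b2)."""
    W1, b1, w2, b2 = params
    h = [sigmoid(W1[j][0] * x[0] + W1[j][1] * x[1] + b1[j]) for j in range(2)]
    y = sigmoid(w2[0] * h[0] + w2[1] * h[1] + b2)
    return h, y


def loss(params, x, t):
    """Squared error E = 0.5 * (y - t)^2 for one training example."""
    _, y = forward(params, x)
    return 0.5 * (y - t) ** 2


def backprop(params, x, t):
    """Gradients of E with respect to every parameter, via the chain rule."""
    W1, b1, w2, b2 = params
    h, y = forward(params, x)
    # Output layer: dE/dy = (y - t), and the sigmoid derivative is y * (1 - y).
    delta2 = (y - t) * y * (1.0 - y)
    grad_w2 = [delta2 * h[j] for j in range(2)]
    grad_b2 = delta2
    # Hidden layer: send delta2 backward through w2 and each hidden sigmoid.
    delta1 = [delta2 * w2[j] * h[j] * (1.0 - h[j]) for j in range(2)]
    grad_W1 = [[delta1[j] * x[i] for i in range(2)] for j in range(2)]
    grad_b1 = delta1
    return grad_W1, grad_b1, grad_w2, grad_b2


def numeric_grad_W1(params, x, t, j, i, eps=1e-6):
    """Central finite-difference estimate of dE/dW1[j][i], used only as a check."""
    W1, b1, w2, b2 = params

    def shifted(delta):
        W = [row[:] for row in W1]
        W[j][i] += delta
        return (W, b1, w2, b2)

    return (loss(shifted(eps), x, t) - loss(shifted(-eps), x, t)) / (2 * eps)


# Arbitrary, hypothetical weights and a single training example.
PARAMS = ([[0.5, -0.4], [0.3, 0.8]], [0.1, -0.2], [0.7, -0.6], 0.05)
X, T = [1.0, 0.5], 1.0

grad_W1 = backprop(PARAMS, X, T)[0]
for j in range(2):
    for i in range(2):
        print(j, i, grad_W1[j][i], numeric_grad_W1(PARAMS, X, T, j, i))
```

During training, these gradients would feed the gradient-descent update for every weight. Backpropagation obtains all of them in one backward pass, while finite differences need two extra forward passes per weight. That difference is why backpropagation scales to large networks.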

However, training deep networks still remained extremely difficult.

The Major Problems Before 2006

Even with backpropagation, deep neural networks struggled badly during the 1990s and early 2000s.

Several problems slowed progress:

- Vanishing gradients

- Poor weight initialization

- Limited computational power

- Insufficient datasets

- Slow hardware

- Overfitting issues

The vanishing gradient problem became one of the biggest obstacles in neural network research.

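In a deep network, backpropagation multiplies many per-layer derivatives together. With saturating activations such as the sigmoid, these factors are usually smaller than one. As a result, gradients shrank as they flowed backward, and the earliest layers barely learned.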

This led many researchers to avoid deep architectures altogether.
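
The shrinking effect is easy to reproduce. The sketch below assumes a hypothetical chain of one-unit sigmoid layers with every weight set to 1. It multiplies the local derivatives exactly as backpropagation would. Because the sigmoid's derivative is at most 0.25, the gradient reaching the input is bounded by $0.25^{\text{depth}}$ in this setup.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def chain_gradient(depth, x=0.0, weight=1.0):
    """d(output)/d(input) for a chain of one-unit layers h_{k+1} = sigmoid(weight * h_k)."""
    h, grad = x, 1.0
    for _ in range(depth):
        out = sigmoid(weight * h)
        grad *= weight * out * (1.0 - out)  # chain rule: multiply by this layer's local derivative
        h = out
    return grad


for depth in (1, 5, 10, 20):
    print(depth, chain_gradient(depth))
```

Activations such as ReLU, whose derivative is exactly 1 for positive inputs, avoid this particular shrinking factor.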

At the same time, support vector machines and other statistical learning methods became dominant because they often performed better in practice and were easier to train reliably.

Many scientists believed neural networks were outdated technology.

Geoffrey Hinton Never Gave Up

While most researchers abandoned neural networks, Geoffrey Hinton continued to believe deep learning would eventually succeed.

Hinton worked at the University of Toronto and became one of the leading voices defending neural computation.

Accounts of his career often highlight his persistence during AI’s darkest years.

Hinton believed the human brain itself was evidence that multi-layer learning systems could work.

Instead of giving up, he focused on solving the training challenges that prevented deep networks from succeeding.

This determination eventually changed AI history forever.

The Revolutionary 2006 Breakthrough

The most important moment in the history of deep learning arrived in 2006.

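Geoffrey Hinton and Ruslan Salakhutdinov published a groundbreaking paper in the journal Science titled:

“Reducing the Dimensionality of Data with Neural Networks.”

This research, together with a companion paper by Hinton, Simon Osindero, and Yee-Whye Teh, “A Fast Learning Algorithm for Deep Belief Nets” (Neural Computation, 2006), introduced Deep Belief Networks (DBN) and layer-wise unsupervised pre-training.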

Instead of training deep networks all at once, Hinton trained them layer by layer.

This approach dramatically improved weight initialization and optimization stability.

The method used Restricted Boltzmann Machines to learn hidden representations gradually.
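
For reference, these are the standard equations for a binary RBM:

- $\mathbf{v}$: visible units, with biases $a_i$
- $\mathbf{h}$: hidden units, with biases $b_j$
- $W_{ij}$: weights between visible unit $i$ and hidden unit $j$
- $\sigma$: the logistic sigmoid
- $\eta$: a learning rate

Here $E(\mathbf{v}, \mathbf{h})$ is the energy of a configuration, not the backpropagation error function. The last line is the one-step contrastive divergence (CD-1) approximation used to train each RBM during pre-training.

$$p(\mathbf{v}, \mathbf{h}) \propto \exp\big(-E(\mathbf{v}, \mathbf{h})\big), \qquad E(\mathbf{v}, \mathbf{h}) = -\sum_i a_i v_i - \sum_j b_j h_j - \sum_{i,j} v_i W_{ij} h_j$$

$$p(h_j = 1 \mid \mathbf{v}) = \sigma\Big(b_j + \sum_i v_i W_{ij}\Big), \qquad p(v_i = 1 \mid \mathbf{h}) = \sigma\Big(a_i + \sum_j W_{ij} h_j\Big)$$

$$\Delta W_{ij} = \eta \left( \langle v_i h_j \rangle_{\text{data}} - \langle v_i h_j \rangle_{\text{recon}} \right)$$

An RBM has no hidden-to-hidden connections, so the hidden units are conditionally independent given $\mathbf{v}$. This is what makes the layer-by-layer updates cheap to compute.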

For the first time, deep, fully connected networks could be trained reliably instead of getting stuck in poor solutions.

This breakthrough is often described as Hinton’s reboot of deep learning.

How Deep Belief Networks Worked

Deep Belief Networks used stacked Restricted Boltzmann Machines.

Each layer learned feature representations from the previous layer.

The process looked like this:

- Train the first layer unsupervised

- Freeze its weights

- Train the second layer using outputs from the first

- Continue stacking layers

- Fine-tune the entire network with backpropagation
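
The five steps above can be sketched in plain Python. This minimal illustration makes several assumptions:

- each layer is a binary RBM, trained with one step of contrastive divergence (CD-1) per example;
- hidden-unit probabilities are passed up as the next layer's input;
- the dataset is a toy, hypothetical one;
- the final fine-tuning step is omitted.

`RBM`, `pretrain_stack`, and `encode` are hypothetical names; this is not Hinton's original code.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class RBM:
    """Minimal binary Restricted Boltzmann Machine trained with CD-1."""

    def __init__(self, n_visible, n_hidden, rng):
        self.rng = rng
        # Small random weights; zero biases (a = visible, b = hidden).
        self.W = [[rng.gauss(0.0, 0.01) for _ in range(n_hidden)] for _ in range(n_visible)]
        self.a = [0.0] * n_visible
        self.b = [0.0] * n_hidden

    def hidden_probs(self, v):
        # p(h_j = 1 | v) = sigmoid(b_j + sum_i v_i W_ij)
        return [sigmoid(self.b[j] + sum(v[i] * self.W[i][j] for i in range(len(v))))
                for j in range(len(self.b))]

    def visible_probs(self, h):
        # p(v_i = 1 | h) = sigmoid(a_i + sum_j W_ij h_j)
        return [sigmoid(self.a[i] + sum(self.W[i][j] * h[j] for j in range(len(h))))
                for i in range(len(self.a))]

    def cd1_update(self, v0, lr):
        ph0 = self.hidden_probs(v0)                                # statistics from the data
        h0 = [1.0 if self.rng.random() < p else 0.0 for p in ph0]  # sample binary hidden states
        v1 = self.visible_probs(h0)                                # one-step reconstruction
        ph1 = self.hidden_probs(v1)                                # statistics from the reconstruction
        for i in range(len(v0)):
            for j in range(len(ph0)):
                self.W[i][j] += lr * (v0[i] * ph0[j] - v1[i] * ph1[j])
            self.a[i] += lr * (v0[i] - v1[i])
        for j in range(len(ph0)):
            self.b[j] += lr * (ph0[j] - ph1[j])


def pretrain_stack(data, layer_sizes, epochs=50, lr=0.1, seed=0):
    """Greedy layer-wise pre-training: train one RBM, freeze it, feed its outputs upward."""
    rng = random.Random(seed)
    layers, inputs = [], data
    for n_hidden in layer_sizes:
        rbm = RBM(len(inputs[0]), n_hidden, rng)
        for _ in range(epochs):                         # train this layer without labels
            for v in inputs:
                rbm.cd1_update(v, lr)
        layers.append(rbm)                              # frozen: never updated again
        inputs = [rbm.hidden_probs(v) for v in inputs]  # training data for the next layer
    return layers


def encode(layers, v):
    """Pass one input up through the frozen stack of RBMs."""
    for rbm in layers:
        v = rbm.hidden_probs(v)
    return v


# Toy, hypothetical binary data: two groups of 6-pixel patterns.
DATA = [
    [1.0, 1.0, 1.0, 0.0, 0.0, 0.0],
    [1.0, 0.0, 1.0, 0.0, 0.0, 0.0],
    [1.0, 1.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 1.0, 1.0, 1.0],
    [0.0, 0.0, 0.0, 1.0, 0.0, 1.0],
    [0.0, 0.0, 0.0, 0.0, 1.0, 1.0],
]

LAYERS = pretrain_stack(DATA, layer_sizes=[4, 2])
print(encode(LAYERS, DATA[0]))
```

Step 5 would then fine-tune all weights with backpropagation. For a classifier, that means adding a supervised output layer. The Science paper instead unrolled the stack into a deep autoencoder.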

This layer-wise pre-training solved major optimization issues.

The network could learn hierarchical features automatically.

For example:

- Early layers learned edges

- Middle layers learned shapes

- Deeper layers learned complex objects

This ability became essential for image recognition and speech systems.

Why the 2006 Paper Changed Everything

The 2006 Science paper shocked the AI community because it showed that deep networks could outperform older methods, such as principal component analysis, at reducing the dimensionality of data.

Researchers suddenly realized that training deep neural networks was not impossible after all.

The paper triggered a massive research pivot toward deep learning.

Universities, tech companies, and AI labs rapidly returned to neural network research.

This moment became one of the greatest academic breakthroughs in AI history.

The impact spread into:

- Computer vision

- Speech recognition

- Language processing

- Recommendation systems

- Robotics

- Generative neural networks

The deep learning revolution had officially begun.

The Rise of Modern Deep Learning (2006 – 2012)

After Hinton’s breakthrough, deep learning research accelerated rapidly.

Several factors helped fuel growth:

GPU Computing

Researchers discovered GPUs could train neural networks much faster than CPUs.

GPU computing became critical for large-scale neural network training.

Massive parallel processing allowed deeper models to train efficiently.

Bigger Datasets

The internet made it possible to collect enormous labeled datasets, such as ImageNet.

More data allowed deep networks to generalize better.

Better Architectures

Researchers refined and scaled up neural architectures first proposed in earlier decades, including CNNs, RNNs, and LSTMs.

Convolutional neural networks (CNNs) became especially important for computer vision.
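
The core operation of a CNN layer can be sketched as a small 2-D convolution. In this minimal example, the toy image and the 2x2 vertical-edge kernel are hypothetical and chosen by hand; a trained CNN would learn its kernel values from data. Like most deep learning libraries, the function computes cross-correlation, meaning the kernel is not flipped. The strong response in one output column marks where dark pixels meet bright ones, the kind of edge feature that early layers tend to detect.

```python
def conv2d(image, kernel):
    """'Valid' 2-D cross-correlation (no padding, stride 1), as in a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Toy 4x5 image: dark (0) on the left, bright (1) on the right.
IMAGE = [
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
]

# Hand-picked vertical-edge kernel: responds to dark-to-bright changes left to right.
VERTICAL_EDGE = [
    [-1, 1],
    [-1, 1],
]

print(conv2d(IMAGE, VERTICAL_EDGE))  # [[0, 0, 2, 0], [0, 0, 2, 0], [0, 0, 2, 0]]
```

The same small kernel is reused at every image position (weight sharing). This is why CNNs need far fewer parameters than fully connected layers on images.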

Improved Optimization

New activation functions, regularization methods, and initialization techniques improved stability.
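
As one example of these initialization techniques, the sketch below shows Glorot (also called Xavier) uniform initialization, proposed in 2010. This is our choice of example, not a technique singled out in this history. It scales the random range to a layer's fan-in and fan-out so that signals keep a roughly similar variance from layer to layer. The function name `xavier_uniform` is hypothetical; libraries such as PyTorch provide their own versions.

```python
import math
import random


def xavier_uniform(fan_in, fan_out, rng):
    """Glorot/Xavier uniform initialization for a fan_in x fan_out weight matrix.

    Weights come from U(-limit, limit) with limit = sqrt(6 / (fan_in + fan_out)),
    which gives each weight a variance of 2 / (fan_in + fan_out).
    """
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)] for _ in range(fan_in)]


rng = random.Random(42)
W = xavier_uniform(256, 256, rng)
flat = [w for row in W for w in row]
mean = sum(flat) / len(flat)
variance = sum((w - mean) ** 2 for w in flat) / len(flat)
print(variance, 2.0 / (256 + 256))  # the two numbers should be close
```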

These advances allowed deep learning to outperform traditional machine learning systems.

AlexNet and the Deep Learning Explosion (2012)

Although the 2006 breakthrough restarted neural research, the true public explosion happened in 2012.

Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton created AlexNet.

AlexNet won the 2012 ImageNet Large Scale Visual Recognition Challenge (ILSVRC) by a wide margin over previous systems.

Many researchers consider AlexNet the moment deep learning conquered computer vision.

AlexNet used:

- Deep convolutional layers

- GPU acceleration

- ReLU activation

- Dropout regularization
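
Two items from this list are simple enough to sketch directly.

- ReLU outputs $\max(0, x)$. Its derivative is 1 for positive inputs, so it does not shrink gradients the way a sigmoid does.
- Dropout randomly silences units during training. AlexNet used it with probability 0.5 and multiplied outputs by 0.5 at test time.

The minimal sketch below uses the common "inverted" dropout variant instead. It rescales surviving units during training, so no change is needed at test time. The function names are hypothetical.

```python
import random


def relu(xs):
    """Rectified linear unit: max(0, x) applied element-wise."""
    return [max(0.0, x) for x in xs]


def dropout(xs, p, rng, training=True):
    """Inverted dropout: zero each unit with probability p during training and
    scale survivors by 1 / (1 - p) so the expected activation is unchanged."""
    if not training or p == 0.0:
        return list(xs)
    keep = 1.0 - p
    return [x / keep if rng.random() < keep else 0.0 for x in xs]


rng = random.Random(0)
hidden = relu([-2.0, -0.5, 0.0, 1.5, 3.0])
print(hidden)  # [0.0, 0.0, 0.0, 1.5, 3.0]
print(dropout(hidden, p=0.5, rng=rng))
```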

In the ILSVRC-2012 competition, a variant of the model achieved a winning top-5 test error rate of 15.3%, compared with 26.2% for the second-best entry.

This victory convinced the technology industry that deep learning was the future.

In the years that followed, companies like Google, Facebook, Microsoft, and OpenAI invested heavily in neural AI.

Geoffrey Hinton Became an AI Legend

After 2012, Geoffrey Hinton became internationally recognized as one of the “Godfathers of Deep Learning.”

His persistence during decades of skepticism finally paid off.

Today, Hinton is frequently mentioned alongside Yann LeCun and Yoshua Bengio as pioneers of modern AI. The three shared the 2018 ACM A.M. Turing Award for their work on deep learning.

Their work shaped nearly every major neural technology used today.

Many accounts of this era treat Hinton’s 2006 breakthrough as the most important turning point in modern neural AI.

Without his research, today’s generative AI systems might never have existed.

Deep Learning’s Impact on Modern AI

Modern AI systems depend heavily on ideas born during the deep learning revival.

These systems include:

- ChatGPT

- Image generators

- Speech assistants

- Medical AI

- Translation systems

- Self-driving cars

- Robotics

The growing worldwide interest in deep learning reflects how strongly this technology influences society today.

Deep learning also transformed industries such as healthcare, finance, transportation, and education.

Researchers continue improving architectures through transformers, reinforcement learning, and multimodal AI systems.

The Legacy of Geoffrey Hinton

The legacy of Geoffrey Hinton goes beyond one research paper.

He helped restore confidence in neural computation during a period when most scientists had abandoned it.

His work inspired thousands of researchers worldwide.

Modern transformer neural networks, sequence models, and generative AI systems all build upon ideas strengthened during the 2006 revival.

The Toronto AI research community became one of the most influential AI hubs in the world because of Hinton’s influence.

His persistence demonstrated that scientific breakthroughs often require decades of patience and belief.

Deep Learning Beyond 2026

Deep learning continues to evolve rapidly.

Researchers are now exploring:

- Spiking neural networks

- Multimodal AI

- Autonomous reasoning systems

- Artificial general intelligence

- Efficient neural architectures

Modern AI systems also combine deep learning with reinforcement learning and generative modeling.

Many of today’s free AI tools rely on neural architectures inspired by Hinton’s work.

The influence of deep learning now reaches almost every part of digital technology.

Frequently Asked Questions (FAQs)

Who rebooted deep learning in 2006?

Geoffrey Hinton and his research team rebooted deep learning in 2006 through Deep Belief Networks and unsupervised pre-training methods.

What was Geoffrey Hinton’s 2006 paper about?

The paper introduced methods for training deep neural networks using layer-wise pre-training and Restricted Boltzmann Machines.

Why was deep learning difficult before 2006?

Deep networks suffered from vanishing gradients, weak computational power, and unstable optimization methods.

What are Deep Belief Networks?

Deep Belief Networks are layered generative models built by stacking Restricted Boltzmann Machines and training them one layer at a time with unsupervised learning.

Why is Geoffrey Hinton important in AI?

Hinton helped revive neural network research and became one of the key pioneers of modern deep learning.

How did AlexNet help deep learning?

AlexNet demonstrated that deep neural networks could dominate image recognition tasks with far higher accuracy than older systems.

Conclusion

The history of deep learning is a story of persistence, scientific courage, and revolutionary innovation. During a period when most researchers abandoned neural networks, Geoffrey Hinton continued to believe deep architectures could eventually work.

His 2006 work, including the Science paper, transformed AI forever through Deep Belief Networks, unsupervised pre-training, and layer-wise learning methods. This academic breakthrough restarted global interest in neural AI and paved the way for modern machine learning systems.

Today, deep learning powers everything from image recognition to conversational AI. Its influence stretches across medicine, robotics, language processing, and autonomous systems.

The rise of deep learning remains deeply connected to the history of backpropagation, the vanishing gradient problem, the evolution of CNNs, the success of AlexNet, and the work of the researchers known as the godfathers of deep learning.

As artificial intelligence continues evolving, Geoffrey Hinton’s reboot of AI in 2006 will always remain one of the most important moments in technological history.