The history of sequence-to-sequence models is one of the most fascinating chapters in artificial intelligence and natural language processing. Before modern AI translation systems became powerful, computers struggled to understand language structure, sentence meaning, and variable-length sequences. Early machine translation systems were rigid and rule-based, and they often produced robotic translations with poor linguistic structure.

The arrival of sequence-to-sequence models transformed neural machine translation (NMT). These systems allowed neural networks to read one sequence, encode its meaning into a learned representation, and generate another sequence in a different language. This breakthrough became a foundation for improvements to Google Translate, as well as for chatbots, summarization systems, speech recognition, and modern NLP models.

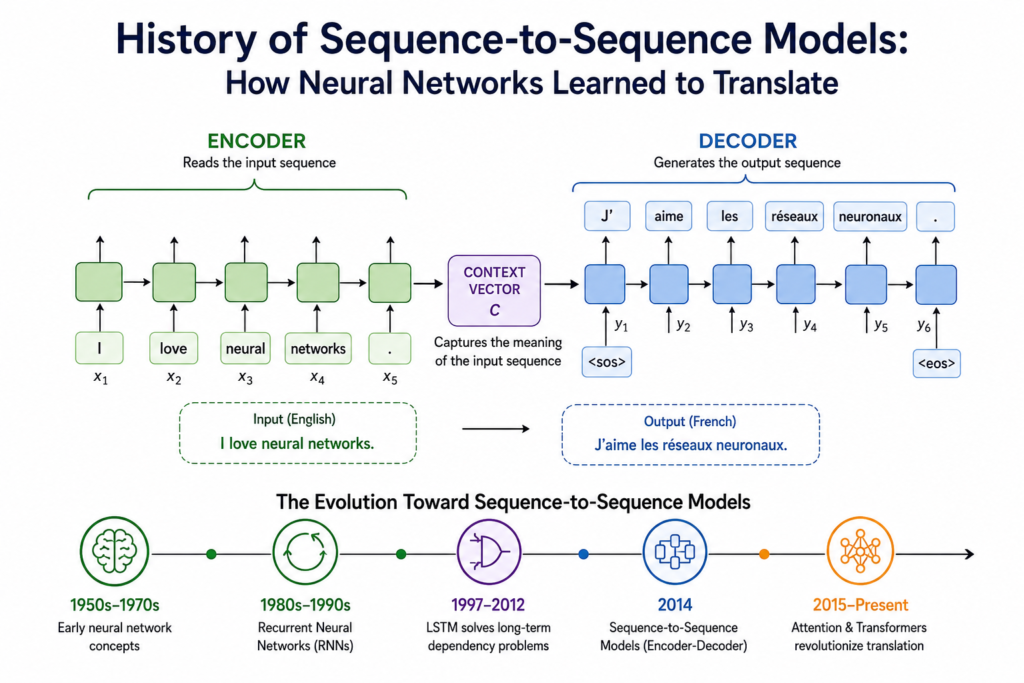

The rise of encoder-decoder architecture changed how machines processed text. Instead of translating word by word, neural networks learned hidden vectors and context vectors that captured sentence meaning. Researchers such as Ilya Sutskever and Oriol Vinyals played a major role in turning these ideas into practical systems.

Today, sequence-to-sequence learning influences almost every generative AI technology. From better translation quality to transformer neural networks, this innovation reshaped deep learning.

The Early Foundations of Neural Language Processing (1940s – 1980)

The roots of sequence learning can be traced back to early neural network research. Scientists wanted machines to process human language, but computers lacked the ability to handle variable-length sequences and recursive processing.

During the 1940s and 1950s, researchers explored simple artificial neurons inspired by the human brain. The 1943 neuron model of Warren McCulloch and Walter Pitts became an important milestone in computational neuroscience and AI theory. It showed that networks of simple threshold units could, in principle, compute logical functions.

Later, in the late 1950s, Frank Rosenblatt introduced the perceptron, which inspired decades of neural network research. However, these early models were too limited for language translation, because language contains complex semantic relationships and long-term dependencies.

Researchers also explored symbolic machine translation systems. These rule-based systems required manually programmed grammar rules and huge dictionaries. Although useful for narrow tasks, they struggled with flexible language mapping and contextual meaning.

In the following decades, research on recurrent neural networks (RNNs) slowly laid the foundation for future sequence learning models. Recurrent networks introduced the idea that information from previous inputs could influence future outputs.

Recurrent Neural Networks and Sequential Learning (1980 – 1995)

Recurrent neural networks became a major breakthrough because they could process sequential data step by step. Unlike traditional feedforward networks, RNNs contained loops that allowed information to persist over time.

This ability made RNNs suitable for language processing, speech recognition, and time-series prediction. Instead of treating every word independently, an RNN could take word order and earlier context into account.

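However, these systems suffered from severe training problems. When errors were propagated backward through many time steps (backpropagation through time), the gradient was multiplied by a factor at every step, so over long sequences it tended to vanish or explode. Because of this limitation, RNNs often forgot earlier words in long sentences.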

The famous vanishing gradient problem became one of the biggest obstacles in neural language research. Scientists struggled to train deep sequential models effectively.
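To make the problem concrete, here is a minimal sketch in Python, assuming a toy one-dimensional RNN $h_t = \tanh(w h_{t-1} + x)$ with a single hypothetical weight `w` and a constant input `x`. By the chain rule, the gradient of the last state with respect to the first is a product of one factor $w(1 - h_t^2)$ per time step; when every factor is below 1, the product shrinks exponentially with sequence length.

```python
import math

def gradient_through_time(w, steps, h0=0.5, x=0.0):
    """Return |dh_T / dh_0| for the toy scalar RNN h_t = tanh(w * h_{t-1} + x)."""
    h = h0
    grad = 1.0
    for _ in range(steps):
        h = math.tanh(w * h + x)
        # Chain rule: dh_t / dh_{t-1} = w * (1 - h_t ** 2), since tanh'(z) = 1 - tanh(z) ** 2
        grad *= w * (1.0 - h * h)
    return abs(grad)

for steps in (1, 10, 50):
    print(steps, gradient_through_time(w=0.9, steps=steps))
```

With `w = 0.9`, every factor is at most 0.9, so after 50 steps the gradient is below $0.9^{50} \approx 0.005$: the first word has almost no influence on how the weights are updated.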

Researchers experimented with different hidden-state representations and with training on paired examples to improve translation quality, but results remained weak. Machine translation systems still produced unnatural and confusing output.

Despite these problems, RNNs pointed toward end-to-end learning. Instead of manually programming language rules, a neural network could learn directly from text data.

This idea would later become the foundation of sequence-to-sequence learning.

The Rise of LSTM Networks (1997 – 2012)

A huge breakthrough arrived in 1997 when Sepp Hochreiter and Jürgen Schmidhuber introduced Long Short-Term Memory networks, commonly known as LSTMs.

LSTM models largely overcame the memory limitations of standard RNNs by adding a memory cell and gates that controlled the flow of information. These gates allowed networks to preserve important information across long sequences.
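The gating idea fits in a few lines of Python. This is a minimal, untrained, one-dimensional LSTM cell in the now-standard form with input, forget, and output gates (the forget gate was a later addition to the 1997 design); the parameter layout and the hand-picked values below are hypothetical and chosen only for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, params):
    """One step of a scalar LSTM cell with input, forget, and output gates.

    params maps each of "i", "f", "o", "c" to a hypothetical
    (weight on x, weight on h_prev, bias) triple.
    """
    def pre(gate):
        w_x, w_h, b = params[gate]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre("i"))          # input gate: how much new information to write
    f = sigmoid(pre("f"))          # forget gate: how much of the old cell state to keep
    o = sigmoid(pre("o"))          # output gate: how much of the cell state to expose
    c_tilde = math.tanh(pre("c"))  # candidate value to write
    c = f * c_prev + i * c_tilde   # additive cell-state update
    h = o * math.tanh(c)           # new hidden state
    return h, c

# Hand-picked values: input gate almost closed, forget gate almost fully open
params = {
    "i": (0.0, 0.0, -10.0),
    "f": (0.0, 0.0, 10.0),
    "o": (0.0, 0.0, 0.0),
    "c": (0.0, 0.0, 0.0),
}
h, c = 0.0, 1.0  # start with a value stored in the cell
for _ in range(100):
    h, c = lstm_step(0.0, h, c, params)
print(c)
```

Because the cell state is updated additively (`c = f * c_prev + i * c_tilde`) instead of being squashed through a nonlinearity at every step, a forget gate close to 1 lets the stored value survive 100 steps almost unchanged, which is exactly what the scalar RNN above could not do.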

Over the following years, LSTMs dramatically improved speech recognition and handwriting generation, and they later became central to neural machine translation.

Researchers realized that these networks could finally manage long-term dependencies in sentences. LSTMs became especially important for language tasks because understanding one word often depends on earlier sentence context.

For example, translating a sentence from English to French may require remembering words that appeared many steps earlier. Standard RNNs often failed at this task, but LSTMs handled it far more effectively.

By the late 2000s, deep learning research was accelerating rapidly. GPU computing made neural network training much faster, and large datasets became widely available.

These improvements prepared the perfect environment for the birth of sequence-to-sequence models.

The Birth of Sequence-to-Sequence Models (2014)

The real revolution happened in 2014 when researchers at Google published a groundbreaking paper called “Sequence to Sequence Learning with Neural Networks.”

The system was developed by Ilya Sutskever, Oriol Vinyals, and Quoc Le. Their LSTM-based encoder-decoder architecture transformed machine translation.

The idea was surprisingly elegant:

- The encoder network reads the input sentence.

- It converts the sentence into a context vector.

- The decoder network generates the translated sentence from that vector.

For example:

Input: “I love neural networks.”

Output: “J’aime les réseaux neuronaux.”

Instead of translating word by word, the network learned a semantic representation of the entire sentence.

This was revolutionary because the system could handle variable-length sequences naturally. The encoder compressed sentence meaning into hidden vectors, while the decoder reconstructed meaning in another language.

The model learned directly from data pairs without handcrafted grammar rules.
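In probabilistic terms, "learning from data pairs" can be written as follows; this is a sketch in standard notation, with symbols chosen here for illustration. The model factorizes the probability of an output sentence $y_1, \ldots, y_{T'}$ given an input sentence $x_1, \ldots, x_T$ word by word, conditioning each word on the context vector $c$ and the words generated so far:

$$p(y_1, \ldots, y_{T'} \mid x_1, \ldots, x_T) = \prod_{t=1}^{T'} p(y_t \mid c, y_1, \ldots, y_{t-1})$$

Training then maximizes the average log-probability of the correct translation over the training set $S$ of sentence pairs:

$$\max \; \frac{1}{|S|} \sum_{(x,\,y) \in S} \log p(y \mid x)$$

Because the output length $T'$ is independent of the input length $T$, nothing in this objective forces the two sentences to have the same number of words.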

This innovation marked one of the greatest moments in the history of deep learning research.

How Encoder-Decoder Architecture Worked

The encoder-decoder architecture became the heart of neural machine translation.

Encoder Phase

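The encoder processed words one by one, updating its hidden state at each step:

$$h_t = f(h_{t-1}, x_t)$$

Where:

- $h_t$ = hidden state

- $x_t$ = current input word

- $f$ = neural activation function (in practice a recurrent unit, such as the LSTM cell used in the 2014 system)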

The final hidden state represented the entire sentence meaning.
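A minimal Python sketch of the encoder phase, assuming the common choice $f(h_{t-1}, x_t) = \tanh(W_x x_t + W_h h_{t-1})$ (bias omitted) with small random, untrained weights and random stand-in word vectors; the 2014 system used stacked LSTMs instead. Whatever the input length, the final state has the same size.

```python
import math
import random

HIDDEN = 4  # size of the hidden state (and of the context vector)
EMBED = 3   # size of each word vector
rng = random.Random(0)

def rand_matrix(rows, cols):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]

W_x = rand_matrix(HIDDEN, EMBED)   # input-to-hidden weights (untrained)
W_h = rand_matrix(HIDDEN, HIDDEN)  # hidden-to-hidden weights (untrained)

def encode(word_vectors):
    """Apply h_t = tanh(W_x x_t + W_h h_{t-1}) to each word in turn; return the final state h_T."""
    h = [0.0] * HIDDEN
    for x in word_vectors:
        h = [math.tanh(a + b) for a, b in zip(matvec(W_x, x), matvec(W_h, h))]
    return h

# Two "sentences" of different lengths, made of random stand-in word vectors
short_sentence = [[rng.uniform(-1, 1) for _ in range(EMBED)] for _ in range(3)]
long_sentence = [[rng.uniform(-1, 1) for _ in range(EMBED)] for _ in range(12)]
print(len(encode(short_sentence)), len(encode(long_sentence)))
```

Squeezing every sentence into the same fixed-size vector is what made the design elegant, and it is also the bottleneck that attention later addressed.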

Decoder Phase

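The decoder generated output words sequentially, feeding each predicted word back in as the next input:

$$s_t = f(s_{t-1}, y_{t-1}, c), \qquad p(y_t \mid y_1, \ldots, y_{t-1}, c) = g(s_t)$$

Where:

- $y_t$ = predicted output word

- $c$ = context vector

- $s_t$ = decoder hidden state

- $f$ = the decoder's recurrent update (same form as the encoder's, with its own weights)

- $g$ = output layer that turns $s_t$ into a probability distribution over the target vocabulary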

The decoder relied on semantic representation stored inside the context vector.
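The decoder loop can be sketched the same way. This minimal Python example assumes a made-up six-word vocabulary, random untrained weights, a context vector fed in at every step (one common variant; the 2014 system instead used it to initialize the decoder), and greedy decoding, which always picks the most probable next word (the 2014 paper used beam search). Because the weights are random, the words it emits are meaningless; the point is the control flow: update the state, predict a word, feed it back, and stop at an end-of-sentence token or a length limit.

```python
import math
import random

VOCAB = ["<eos>", "j'", "aime", "les", "réseaux", "neuronaux"]  # hypothetical toy vocabulary
HIDDEN = 4
rng = random.Random(1)

def rand_matrix(rows, cols):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]

W_s = rand_matrix(HIDDEN, HIDDEN)      # weights on the previous decoder state (untrained)
W_y = rand_matrix(HIDDEN, len(VOCAB))  # weights on the previous output word, one-hot (untrained)
W_c = rand_matrix(HIDDEN, HIDDEN)      # weights on the context vector (untrained)
W_o = rand_matrix(len(VOCAB), HIDDEN)  # output layer g (untrained)

def softmax(z):
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]

def greedy_decode(c, max_len=10):
    s = [0.0] * HIDDEN
    prev = [0.0] * len(VOCAB)  # an all-zero vector stands in for a start token
    out = []
    for _ in range(max_len):
        # s_t = f(s_{t-1}, y_{t-1}, c)
        s = [math.tanh(a + b + d)
             for a, b, d in zip(matvec(W_s, s), matvec(W_y, prev), matvec(W_c, c))]
        probs = softmax(matvec(W_o, s))  # distribution over VOCAB = g(s_t)
        k = max(range(len(VOCAB)), key=probs.__getitem__)  # greedy: most probable word
        if VOCAB[k] == "<eos>":
            break
        out.append(VOCAB[k])
        prev = [0.0] * len(VOCAB)
        prev[k] = 1.0  # feed the chosen word back in as a one-hot vector
    return out

print(greedy_decode([0.2, -0.1, 0.4, 0.0]))
```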

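Because both the encoder and the decoder run step by step, the same architecture could map an input sequence of one length to an output sequence of a different length, something earlier neural models with fixed-size inputs and outputs could not do.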

Why Sequence-to-Sequence Models Changed Translation Forever

Earlier machine translation systems relied on hand-written rules or, later, on statistical models built from phrase tables. Sequence-to-sequence models replaced these multi-component pipelines with a single neural network trained end to end.

The benefits were enormous:

- Better translation quality

- More natural sentence structure

- Improved semantic understanding

- Support for variable-length sequences

- End-to-end learning capabilities

Google Translate switched to neural translation in 2016 because it produced far more fluent output than earlier systems.

BLEU, a metric that scores n-gram overlap between a system's output and human reference translations, showed substantial improvements over statistical translation systems.
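BLEU combines clipped n-gram precisions (how many of the candidate's 1- to 4-word sequences also appear in the reference) with a brevity penalty for output that is too short: $\text{BLEU} = \text{BP} \cdot \exp\big(\tfrac{1}{4}\sum_{n=1}^{4} \log p_n\big)$, where $\text{BP} = 1$ if the candidate is longer than the reference and $e^{1 - r/c}$ otherwise. Below is a minimal Python sketch of a simplified sentence-level version, assuming a single reference, uniform weights, no smoothing, and space-separated tokens; published results are normally computed at the corpus level with standard tools such as sacreBLEU, so this is for intuition only.

```python
import math
from collections import Counter

def ngrams(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def sentence_bleu(candidate, reference, max_n=4):
    """Simplified BLEU: one reference, uniform weights, no smoothing."""
    log_precisions = []
    for n in range(1, max_n + 1):
        cand = ngrams(candidate, n)
        ref = ngrams(reference, n)
        # Clipped counts: each candidate n-gram is credited at most as often as it occurs in the reference
        overlap = sum(min(count, ref[g]) for g, count in cand.items())
        total = sum(cand.values())
        if total == 0 or overlap == 0:
            return 0.0  # without smoothing, any zero precision makes the score 0
        log_precisions.append(math.log(overlap / total))
    # Brevity penalty: punish candidates that are not longer than the reference
    c, r = len(candidate), len(reference)
    bp = 1.0 if c > r else math.exp(1 - r / c)
    return bp * math.exp(sum(log_precisions) / max_n)

reference = "j' aime les réseaux neuronaux".split()
print(sentence_bleu(reference, reference))
print(sentence_bleu("j' aime les réseaux".split(), reference))
```

An exact copy of the reference scores 1.0. Dropping the last word keeps every n-gram precision at 1, but the brevity penalty $e^{1 - 5/4} \approx 0.78$ lowers the score.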

Instead of relying on manually written dictionaries, neural networks learned directly from large collections of translated sentence pairs.

This breakthrough also expanded beyond translation into:

- Speech recognition

- Text summarization

- Question answering

- Conversational AI

- Generative neural networks

Seq2seq models quickly became a standard tool across NLP.

Attention Mechanisms Improved Everything (2015 – 2017)

Although early sequence-to-sequence systems were powerful, they still had a major weakness.

Compressing an entire sentence into one context vector caused information loss for long sentences.

Researchers addressed this problem with attention mechanisms.

Attention allowed the decoder to focus on relevant parts of the input sequence during translation.

Instead of depending on one hidden vector, the decoder dynamically accessed multiple encoder states.

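Mathematically, attention weights were calculated as:

$$\alpha_{tj} = \frac{\exp(e_{tj})}{\sum_{k=1}^{T} \exp(e_{tk})}, \qquad e_{tj} = \operatorname{score}(s_{t-1}, h_j)$$

and the decoder received a separate context vector at each output step:

$$c_t = \sum_{j=1}^{T} \alpha_{tj} h_j$$

where $h_j$ is the encoder hidden state for input word $j$, $s_{t-1}$ is the previous decoder state, and $\operatorname{score}$ is a learned function measuring how well the two match.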

This innovation dramatically improved translation quality and contextual understanding.
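The attention step can be sketched in Python. This minimal example assumes a plain dot product as the scoring function, a simpler choice than the small feedforward network used in the original attention work of Bahdanau and colleagues, and uses hand-picked two-dimensional vectors rather than learned states. It scores each encoder state against the current decoder state, turns the scores into weights that sum to 1 with a softmax, and returns the weighted average of the encoder states as that step's context vector.

```python
import math

def softmax(z):
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]

def attend(decoder_state, encoder_states):
    """Return (attention weights, context vector) for one decoder step."""
    # Score each encoder state against the decoder state (here: a plain dot product)
    scores = [sum(a * b for a, b in zip(decoder_state, h)) for h in encoder_states]
    alphas = softmax(scores)
    # Context vector = weighted sum of the encoder states
    dim = len(encoder_states[0])
    context = [sum(alpha * h[k] for alpha, h in zip(alphas, encoder_states)) for k in range(dim)]
    return alphas, context

encoder_states = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # one toy state per input word
alphas, context = attend([2.0, 0.0], encoder_states)
print([round(a, 3) for a in alphas], [round(v, 3) for v in context])
```

Because the decoder state points in the same direction as the first encoder state, that state receives the largest weight and the context vector leans toward it; a different decoder state at the next step would shift the weights to other input words.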

Attention mechanisms later inspired transformer neural networks, which eventually dominated NLP research.

Sequence-to-Sequence Models and Transformers

Sequence-to-sequence learning directly influenced transformer architecture development.

Transformers removed recurrence entirely and relied fully on self-attention mechanisms.

Because every position in a sentence can be processed in parallel rather than one step at a time, this shift made training faster and more scalable.
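The core transformer operation, scaled dot-product attention, computes $\operatorname{softmax}(QK^\top / \sqrt{d})\,V$. A minimal Python sketch of self-attention, assuming a single head and no learned projections, so that queries, keys, and values are all just the input vectors `X`; real transformers add learned projection matrices, multiple heads, and positional information. Unlike an RNN, no position's output depends on another position's output, so all positions can be computed at once.

```python
import math

def softmax(z):
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]

def self_attention(X):
    """Single-head scaled dot-product self-attention with Q = K = V = X (no learned projections)."""
    d = len(X[0])
    out = []
    for q in X:  # iterations are independent of each other, so they could run in parallel
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in X]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, X)) for j in range(d)])
    return out

X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy "word" vectors
Y = self_attention(X)
for row in Y:
    print([round(v, 3) for v in row])
```

Reversing the order of `X` simply reverses the rows of the output, which is why transformers need positional encodings to know word order.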

Modern AI systems such as ChatGPT, translation engines, and generative text models all inherit concepts originally developed during sequence-to-sequence research.

The transition from RNNs to transformers became a critical step in the evolution of AI language systems.

Researchers studying transformer neural networks often trace their origins back to encoder-decoder seq2seq systems.

Without sequence-to-sequence learning, modern generative AI would likely have developed very differently.

Influence on Modern Artificial Intelligence

Today, sequence-to-sequence systems influence nearly every major AI application.

These include:

- Smart assistants

- Voice recognition

- Automatic subtitles

- AI chatbots

- Medical NLP systems

- Self-driving communication systems

Seq2seq systems hold an important place in the history of AI because they helped the field move beyond narrow classification tasks toward generating language.

Modern deep learning systems also combine seq2seq learning with reinforcement learning, transformers, and multimodal AI architectures.

The technology continues evolving rapidly.

The Legacy of Sutskever and Vinyals

Ilya Sutskever and Oriol Vinyals became widely recognized figures in AI research, in part because of their contributions to neural machine translation.

Their encoder-decoder architecture influenced:

- OpenAI language systems

- Google Translate AI

- Modern chatbots

- Large language models

- Generative neural networks

Their work showed that deep learning could learn complex language mapping tasks, such as translation, directly from data.

Many researchers now consider seq2seq learning one of the defining breakthroughs in modern AI history.

The ideas behind hidden vectors, context vectors, and end-to-end learning remain central to NLP research today.

The Future of Sequence-to-Sequence Learning

Although transformers dominate today, sequence-to-sequence principles remain essential.

Researchers continue improving:

- Context awareness

- Translation quality

- Multilingual systems

- Real-time speech translation

- Low-resource language learning

Future AI systems may combine seq2seq learning with reasoning models and multimodal architectures.

As AI advances further, understanding sequence relationships will remain a core challenge.

Even many free AI tools available today rely on seq2seq-inspired architectures for translation, summarization, and conversation generation.

The influence of sequence-to-sequence learning is far from over.

Frequently Asked Questions (FAQs)

What is a sequence-to-sequence model?

A sequence-to-sequence model is a neural network architecture that converts one sequence into another sequence. It is commonly used in translation, summarization, and speech recognition.

Who invented sequence-to-sequence learning?

The modern seq2seq architecture was introduced in 2014 by Ilya Sutskever, Oriol Vinyals, and Quoc Le at Google.

Why were sequence-to-sequence models important?

They enabled neural machine translation systems to learn directly from data instead of relying on handcrafted language rules.

What is the role of the encoder-decoder architecture?

The encoder converts input data into a semantic representation, while the decoder generates output sequences from that representation.

How are transformers related to sequence-to-sequence models?

Transformers evolved from seq2seq concepts and improved them using self-attention mechanisms instead of recurrent processing.

Are sequence-to-sequence models still used today?

Yes. Even modern transformer systems still use encoder-decoder principles inspired by early sequence-to-sequence research.

Conclusion

The history of sequence-to-sequence models is one of the great breakthrough stories in artificial intelligence. From early RNN experiments to advanced neural machine translation systems, seq2seq learning changed how machines process language.

The encoder-decoder architecture introduced by Sutskever, Vinyals, and Le transformed machine translation and paved the way for modern generative AI systems. These innovations improved translation quality, enabled semantic representation learning, and inspired transformer neural networks.

Today, seq2seq ideas continue to influence NLP models, conversational AI, and intelligent systems around the world. Their legacy is tied to the wider history of AI: recurrent neural networks, LSTMs, the long struggle with the vanishing gradient problem, and the rise of transformer neural networks.

As artificial intelligence evolves further, the impact of sequence-to-sequence learning will continue shaping the future of language understanding and communication technology.