The history of backpropagation is one of the most important stories in artificial intelligence and computer science. Modern deep learning systems, generative AI, speech recognition, and computer vision all depend heavily on one revolutionary learning algorithm: backpropagation.

Without backpropagation, modern neural networks would likely not have reached their current power.

Backpropagation marked the turning point that revived neural network research after years of decline during the AI winters. It allowed researchers to train multi-layer neural networks efficiently and finally overcome many limitations of early perceptron systems.

The story of backpropagation combines mathematics, neuroscience, optimization theory, and scientific persistence. Researchers spent decades trying to discover how neural systems could learn complex patterns across multiple hidden layers.

Today, nearly every advanced AI system relies on concepts connected to backpropagation and gradient-based learning.

In this article, we will explore the complete history of backpropagation, its mathematical foundations, the scientists who developed it, and why it became the algorithm that made deep learning possible.

Neural Networks Before Backpropagation (1943 – 1970)

Before understanding the history of backpropagation, we must examine the early history of neural networks.

The first artificial neuron model appeared in 1943 through Warren McCulloch and Walter Pitts.

Their model is now known as the McCulloch-Pitts neuron.

In 1949, Donald Hebb introduced adaptive neural learning through the Hebbian learning rule.

In 1957, Frank Rosenblatt invented the perceptron, one of the earliest machine learning systems.

The perceptron became central to:

- Pattern recognition

- Binary classification

- Neural learning

- Early AI systems

However, single-layer perceptrons had serious limitations.

Marvin Minsky and Seymour Papert's 1969 book Perceptrons highlighted these limitations, which triggered the famous perceptron controversy and contributed to the first AI winter.

Researchers needed a way to train deeper neural systems.

That challenge became the heart of the history of backpropagation.

The Problem With Multi-Layer Neural Networks

The major obstacle before backpropagation was training multi-layer networks.

Single-layer networks could only solve linearly separable problems. They cannot, for example, represent the XOR function.

Complex tasks required hidden layers and deeper neural architectures.
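The limitation of single-layer networks can be seen in a small experiment. The sketch below trains a perceptron with the classic error-correction rule on the AND function (linearly separable) and on XOR (not linearly separable). The function names, the number of epochs, and the learning rate are illustrative assumptions, not part of any historical implementation.

```python
# Minimal perceptron sketch; names and hyperparameters are illustrative assumptions.

def train_perceptron(samples, epochs=25, lr=1.0):
    """Train a single-layer perceptron with the error-correction rule."""
    w1, w2, b = 0.0, 0.0, 0.0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            prediction = 1 if w1 * x1 + w2 * x2 + b > 0 else 0
            error = target - prediction  # -1, 0, or +1
            w1 += lr * error * x1
            w2 += lr * error * x2
            b += lr * error
    return w1, w2, b


def count_correct(samples, weights):
    """Count how many samples the trained perceptron classifies correctly."""
    w1, w2, b = weights
    correct = 0
    for (x1, x2), target in samples:
        prediction = 1 if w1 * x1 + w2 * x2 + b > 0 else 0
        correct += int(prediction == target)
    return correct


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_correct = count_correct(AND_DATA, train_perceptron(AND_DATA))
xor_correct = count_correct(XOR_DATA, train_perceptron(XOR_DATA))

print("AND correct:", and_correct, "of 4")  # all four cases are learned
print("XOR correct:", xor_correct, "of 4")  # always fewer than 4
```

No single straight line separates the XOR classes, so the perceptron can never classify all four cases correctly, however long it trains.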

However, researchers did not know how to adjust weights efficiently inside multiple layers.

The key question became:

How can error signals travel backward through a network?

Without an effective learning algorithm, deep neural systems remained impractical.

The history of backpropagation began as scientists searched for a solution to this learning problem.

Early Mathematical Foundations (1960 – 1974)

The foundations of backpropagation lie in mathematical optimization research.

Scientists explored:

- Partial derivatives

- Chain rule

- Gradient-based learning

- Reverse mode differentiation

- Error correction

One of the earliest important contributors was Paul Werbos.

In 1974, Werbos described how reverse mode differentiation could train neural networks efficiently.

His doctoral thesis introduced concepts closely related to modern backpropagation.

Although his work received little attention initially, it later became foundational to the history of backpropagation.
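To make the idea of reverse-mode differentiation more concrete, here is a minimal sketch. It is not Werbos's formulation; `Var` and `backward` are hypothetical names invented for this illustration, and only addition and multiplication are supported. Each value records how it was computed, and a single backward sweep then yields the derivative of the output with respect to every input.

```python
# Minimal reverse-mode differentiation sketch.
# `Var` and `backward` are hypothetical names used only for this illustration.

class Var:
    """A scalar value that remembers which values it was computed from."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # pairs of (parent Var, local derivative)
        self.grad = 0.0

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(
            self.value * other.value,
            ((self, other.value), (other, self.value)),
        )


def backward(output):
    """Fill in d(output)/d(node) for every node with one backward sweep."""
    order, seen = [], set()

    def visit(node):
        # Depth-first search so that parents are listed before their children.
        if id(node) in seen:
            return
        seen.add(id(node))
        for parent, _ in node.parents:
            visit(parent)
        order.append(node)

    visit(output)
    output.grad = 1.0
    # Walk from the output back toward the inputs, accumulating gradients.
    for node in reversed(order):
        for parent, local_derivative in node.parents:
            parent.grad += local_derivative * node.grad


a = Var(2.0)
b = Var(3.0)
c = a * b + a  # dc/da = b + 1, dc/db = a
backward(c)
print(a.grad, b.grad)  # prints 4.0 2.0
```

The cost of the backward sweep is proportional to the cost of the forward computation, regardless of how many inputs there are. That property is what makes the approach attractive for networks with many weights.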

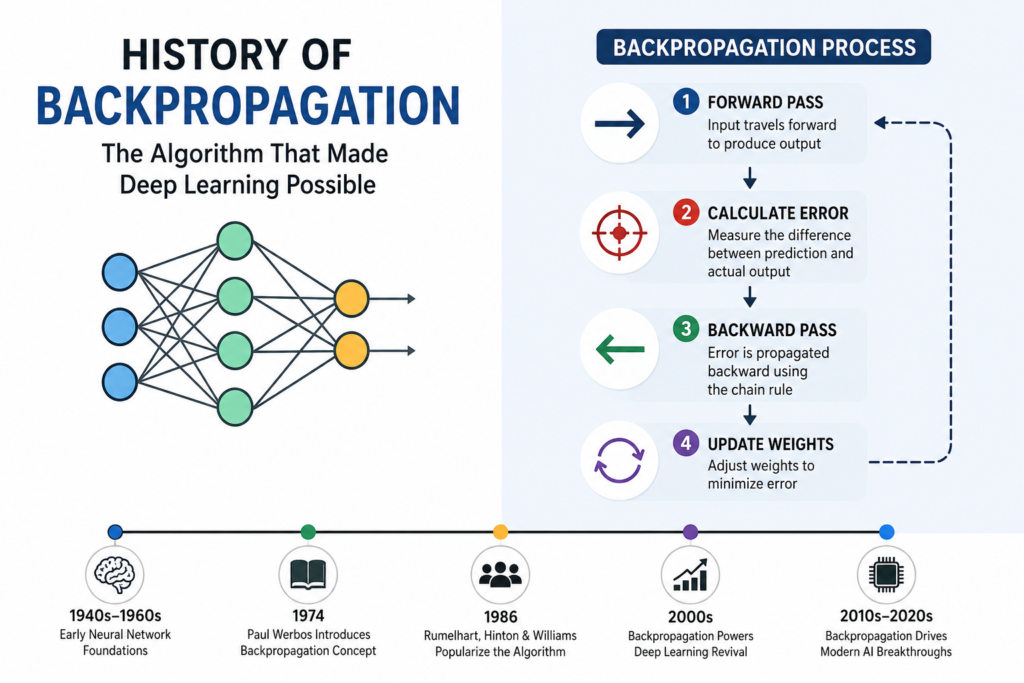

What Is Backpropagation?

To understand the history of backpropagation, we must understand how the algorithm works.

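Backpropagation trains neural networks by computing how much each weight contributes to the prediction error, so that gradient descent can adjust the weights to reduce that error.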

The process involves:

- Forward pass

- Error calculation

- Backward error propagation

- Weight update

The network predicts an output.

The error is calculated using a cost function, for example the squared error:

$$E = \frac{1}{2} \sum_i (y_i - \hat{y}_i)^2$$

Where:

- $y_i$ = actual value

- $\hat{y}_i$ = predicted value

Backpropagation then computes derivatives using the chain rule.

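Weights update according to:

$$w \leftarrow w - \eta \frac{\partial E}{\partial w}$$

Where:

- $\eta$ = learning rate

- $\frac{\partial E}{\partial w}$ = gradient of the error $E$ with respect to the weight $w$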
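The four steps can be sketched on the smallest possible multi-layer network: one input, one tanh hidden unit, and one linear output. Everything specific here is an assumption made for illustration: the squared-error cost $E = \frac{1}{2}(y - \hat{y})^2$, the single training example, the starting weights, and the learning rate.

```python
import math

# Hypothetical training example and starting weights, chosen only for illustration.
x, y = 1.0, 0.5
w1, w2 = 0.8, -0.4
learning_rate = 0.1


def forward(w1, w2):
    """Forward pass: one tanh hidden unit followed by a linear output."""
    h = math.tanh(w1 * x)
    y_hat = w2 * h
    return h, y_hat


def cost(y_hat):
    """Squared-error cost for a single example."""
    return 0.5 * (y - y_hat) ** 2


initial_cost = cost(forward(w1, w2)[1])

for step in range(200):
    # 1. Forward pass: compute the hidden activation and the prediction.
    h, y_hat = forward(w1, w2)
    # 2. Error calculation: derivative of the cost with respect to the prediction.
    d_y_hat = y_hat - y
    # 3. Backward error propagation: apply the chain rule layer by layer.
    grad_w2 = d_y_hat * h
    d_h = d_y_hat * w2
    grad_w1 = d_h * (1.0 - h * h) * x  # tanh'(z) = 1 - tanh(z)^2
    # 4. Weight update: step against the gradient.
    w1 -= learning_rate * grad_w1
    w2 -= learning_rate * grad_w2

final_cost = cost(forward(w1, w2)[1])
print("cost before:", initial_cost)
print("cost after: ", final_cost)
```

The error signal `d_y_hat` is computed once at the output and then reused to obtain the gradient of the hidden-layer weight, which is the "backward" part of the algorithm.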

This process became central to the entire history of backpropagation and modern deep learning.

The 1986 Revival (1980 – 1990)

The biggest breakthrough in the history of backpropagation happened in 1986.

David Rumelhart, Geoffrey Hinton, and Ronald Williams published a landmark paper in Nature, "Learning representations by back-propagating errors", explaining practical backpropagation training for multi-layer neural networks.

This work became one of the most important moments in AI history.

The algorithm successfully trained networks across hidden layers.

This breakthrough revived neural network research after the AI winter.

The 1986 revival transformed the entire history of backpropagation and reignited interest in connectionism.

Why Backpropagation Was Revolutionary

Backpropagation was revolutionary because it solved one of AI’s biggest problems: how to train networks with hidden layers.

Backpropagation enabled:

- Training multi-layer networks

- Complex pattern recognition

- Efficient weight optimization

- Deep learning architectures

Neural systems could finally learn much more complicated relationships.

This breakthrough eventually led to major advances in:

- Computer vision

- Speech recognition

- Language translation

- Robotics

- Generative AI

The history of backpropagation changed artificial intelligence forever.

The Role of the Chain Rule

One of the most important concepts in the history of backpropagation is the chain rule from calculus.

The chain rule allows error signals to move backward through neural layers.

For nested functions $y = f(g(x))$:

$$\frac{dy}{dx} = f'(g(x)) \cdot g'(x)$$

This mathematical principle became the foundation of neural learning.

Backpropagation applies the chain rule repeatedly, layer by layer, from the output back to the input.

Without the chain rule, deep learning would not function effectively.
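As a small check of this idea, the sketch below differentiates three nested functions by multiplying their local derivatives from the outermost function inward, then compares the result with a numerical estimate. The particular functions and the evaluation point are arbitrary choices made for illustration.

```python
import math


def composite(x):
    """y = f3(f2(f1(x))) with f1(x) = 3x + 1, f2(u) = tanh(u), f3(v) = v**2."""
    return math.tanh(3.0 * x + 1.0) ** 2


def chain_rule_derivative(x):
    # Forward pass: keep each intermediate value.
    u = 3.0 * x + 1.0
    v = math.tanh(u)
    # Backward pass: multiply local derivatives from the outermost function inward.
    dy_dv = 2.0 * v      # derivative of v**2
    dv_du = 1.0 - v * v  # derivative of tanh(u)
    du_dx = 3.0          # derivative of 3x + 1
    return dy_dv * dv_du * du_dx


def numerical_derivative(func, x, eps=1e-6):
    """Central finite-difference estimate, used only as a sanity check."""
    return (func(x + eps) - func(x - eps)) / (2.0 * eps)


x0 = 0.2
analytic = chain_rule_derivative(x0)
numeric = numerical_derivative(composite, x0)
print("chain rule:", analytic)
print("numerical: ", numeric)
```

The two values agree up to the accuracy of the finite-difference estimate. In a neural network each layer plays the role of one of these nested functions.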

The history of backpropagation is deeply connected to mathematical optimization and calculus.

Challenges and Limitations

Although revolutionary, backpropagation also faced many challenges.

Early neural systems suffered from:

- Local minima

- Slow convergence

- Vanishing gradients

- Limited hardware

- Computational inefficiency

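The vanishing gradient problem became especially important in deep neural systems: as the error signal is multiplied by one derivative after another on its way back through many layers, it can shrink toward zero, so the earliest layers learn very slowly.

Researchers later developed improved activation functions and architectures, such as ReLU activations and residual connections, to mitigate these issues.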
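A rough numerical illustration of vanishing gradients follows. It multiplies the same local derivative once per layer and deliberately ignores the weights (all assumed to be 1), so it is a simplified sketch rather than a model of any real network. ReLU is used here as one example of a later activation function, and the pre-activation values are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_derivative(z):
    s = sigmoid(z)
    return s * (1.0 - s)  # never larger than 0.25


def relu_derivative(z):
    return 1.0 if z > 0 else 0.0


def backward_factor(derivative, pre_activation, depth):
    """Product of `depth` identical local derivatives (all weights assumed to be 1)."""
    factor = 1.0
    for _ in range(depth):
        factor *= derivative(pre_activation)
    return factor


for depth in (1, 5, 10, 20):
    sigmoid_factor = backward_factor(sigmoid_derivative, 0.0, depth)
    relu_factor = backward_factor(relu_derivative, 1.0, depth)
    print(depth, sigmoid_factor, relu_factor)
```

Even at its steepest point the sigmoid contributes a factor of 0.25 per layer, so the signal drops below one millionth after ten layers, while an active ReLU unit passes the signal through unchanged.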

The algorithm required decades of refinement before modern deep learning became practical.

Backpropagation and Deep Learning (2000 – 2026)

The modern AI revolution depends heavily on backpropagation.

Backpropagation now powers:

- Convolutional neural networks

- Recurrent neural networks

- Transformers

- Generative AI

- Large language models

This progress strongly connects to:

- History of deep learning

- History of CNNs

- Transformer neural networks

Advanced GPUs dramatically improved training speed.

This breakthrough became part of GPU history in AI.

Modern neural systems can now train billions of parameters using optimized backpropagation algorithms.

Geoffrey Hinton and the Deep Learning Revolution

Geoffrey Hinton became one of the most important figures in the history of backpropagation.

Hinton strongly supported neural networks even during periods of skepticism.

His work helped revive neural network research in the 1980s and again in the 2000s.

Hinton’s contributions strongly connect with:

- Geoffrey Hinton biography

- Godfathers of deep learning

Without his persistence, neural network research might have remained forgotten after the AI winters.

Applications of Backpropagation Today

The influence of backpropagation can now be seen everywhere.

Modern applications include:

- Medical imaging

- Voice assistants

- Self-driving vehicles

- Facial recognition

- Chatbots

- AI art generation

Even today’s best free AI tools rely heavily on backpropagation-based neural systems.

The algorithm became the engine powering modern artificial intelligence.

Why Backpropagation Still Matters

Backpropagation matters because it solved one of the greatest problems in machine learning:

How can deep neural systems learn efficiently?

Backpropagation transformed neural networks from theoretical ideas into practical technologies.

It enabled:

- Deep architectures

- Efficient learning

- Massive pattern recognition

- Modern AI systems

The algorithm remains one of the most important inventions in computer science history.

Frequently Asked Questions (FAQs)

What is backpropagation?

Backpropagation is a learning algorithm that trains neural networks by propagating errors backward through layers.

Who invented backpropagation?

Paul Werbos introduced early ideas, while Rumelhart, Hinton, and Williams popularized practical neural training in 1986.

Why is backpropagation important?

It enabled efficient training of deep neural networks and made modern deep learning possible.

What mathematical principle powers backpropagation?

The algorithm relies heavily on the chain rule from calculus.

Does modern AI still use backpropagation?

Yes. Most deep learning systems still rely on backpropagation and gradient-based optimization.

Conclusion

Backpropagation represents one of the greatest breakthroughs in artificial intelligence history. By solving the problem of training multi-layer neural networks, it transformed neural learning from a theoretical concept into a practical technology capable of powering modern AI systems.

From early mathematical theories to the 1986 revival of neural network research, the algorithm opened the door to speech recognition, computer vision, generative AI, robotics, and large language models. Today, nearly every advanced neural system relies on principles connected to backpropagation and gradient-based learning.

The legacy of backpropagation continues to shape the future of artificial intelligence, showing that one mathematical breakthrough can transform the entire world of technology.