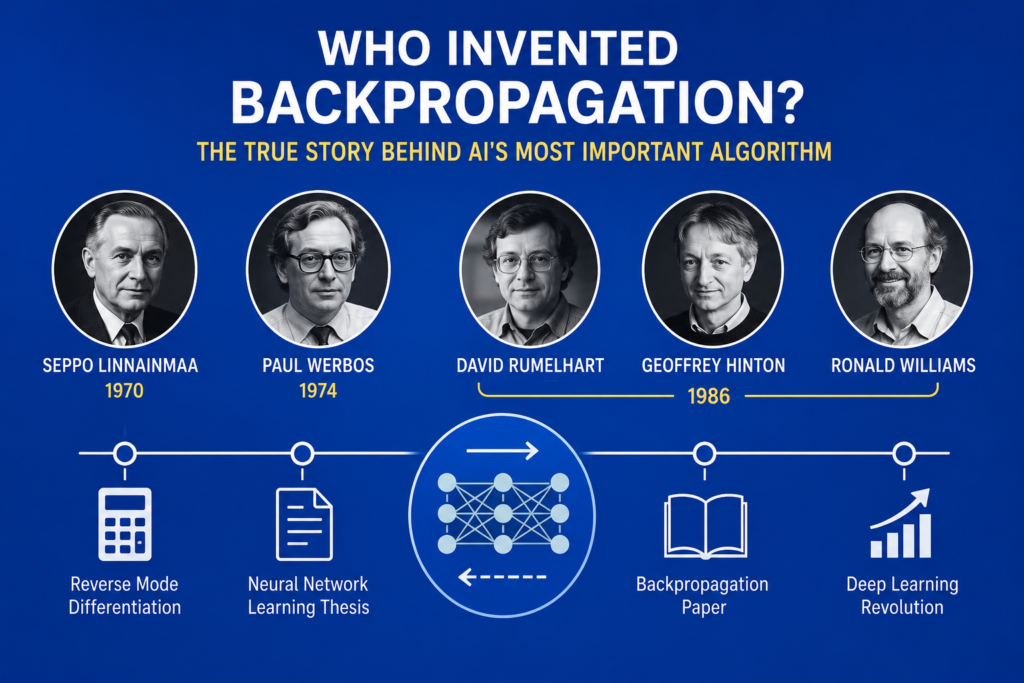

The question of who invented backpropagation is one of the most important and controversial topics in the history of artificial intelligence. Modern deep learning systems, generative AI, speech recognition, and neural networks all rely on backpropagation, yet many people still debate who truly deserves credit for this revolutionary algorithm.

The story of who invented backpropagation is not simple. Unlike many scientific inventions created by a single person, backpropagation evolved through multiple discoveries, mathematical refinements, and independent researchers working across different decades.

Today, the answer to who invented backpropagation involves mathematicians, psychologists, computer scientists, and neural network pioneers. Their combined work transformed artificial intelligence forever and helped launch the modern deep learning revolution.

It is a history of scientific attribution debates and academic credit disputes, of peer-reviewed research, and of the evolution of automatic differentiation theory.

In this article, we will explore the complete story of who invented backpropagation, the scientists involved, the mathematical foundations behind the algorithm, and why backpropagation became the engine powering modern artificial intelligence.

Neural Networks Before Backpropagation (1943 – 1970)

To understand who invented backpropagation, we must first examine the history of neural networks before backpropagation existed.

In 1943, Warren McCulloch and Walter Pitts introduced the first artificial neuron model, a simple binary threshold unit.

Their model became a foundation for later neural network research.

In 1949, Donald Hebb proposed that connections between neurons strengthen when the neurons are active together, an idea now known as the Hebbian learning rule.
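Hebb stated the idea in words rather than as an equation. A common modern formalization, written here with assumed symbols (input activity $x_i$, output activity $y$, connection weight $w_i$, learning rate $\eta$), strengthens a connection in proportion to how strongly its two ends are active together:

$$\Delta w_i = \eta\, x_i\, y$$

Unlike backpropagation, this update uses no error signal at all: it strengthens correlations rather than correcting mistakes.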

Frank Rosenblatt then invented the perceptron in 1957, one of the earliest machine learning systems.

These breakthroughs shaped the early history of neural network research.

However, early neural systems faced a major problem.

Researchers could not efficiently train deep multi-layer networks.

Solving this problem is the heart of the backpropagation story.

The Problem With Multi-Layer Neural Networks

Before backpropagation, neural networks struggled with hidden layers.

Single-layer perceptrons could solve only simple linearly separable tasks.
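A small sketch makes this limitation concrete. The Python below implements the classic perceptron learning rule (a threshold unit whose weights are nudged after every mistake) and trains it on two toy datasets. The datasets, learning rate, epoch count, and the `step` and `train_perceptron` names are illustrative assumptions. AND is linearly separable, so the rule converges; XOR is not, so no single straight line can separate its classes and at least one example stays misclassified however long training runs.

```python
# A minimal sketch of perceptron learning on AND vs. XOR.
# Datasets, learning rate, and epoch count are illustrative choices.

def step(z: float) -> int:
    """Threshold activation: output 1 if the weighted sum is positive, else 0."""
    return 1 if z > 0 else 0


def train_perceptron(data, epochs=100, lr=1.0):
    """Train a single-layer perceptron; return (weights, bias, error_count)."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), target in data:
            pred = step(w[0] * x1 + w[1] * x2 + b)
            err = target - pred  # -1, 0, or +1
            # Perceptron rule: move the weights toward the correct answer.
            w[0] += lr * err * x1
            w[1] += lr * err * x2
            b += lr * err
    errors = sum(
        1 for (x1, x2), target in data
        if step(w[0] * x1 + w[1] * x2 + b) != target
    )
    return w, b, errors


AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

if __name__ == "__main__":
    print("AND errors:", train_perceptron(AND)[2])  # linearly separable
    print("XOR errors:", train_perceptron(XOR)[2])  # not linearly separable
```

Adding a hidden layer removes this limit, but only if there is a way to train the hidden weights, which is exactly the gap backpropagation later filled.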

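Harder problems required multi-layer networks with hidden layers.

However, scientists lacked an efficient learning algorithm for adjusting the weights inside hidden layers, because there was no practical way to work out how much each hidden weight contributed to the final error.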

Researchers needed a method for:

- Error correction

- Gradient calculation

- Weight optimization

- Efficient learning

Without such a system, deep neural networks remained impractical.

The search for a solution eventually led to the discovery of backpropagation.

Seppo Linnainmaa and Automatic Differentiation (1970)

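One important part of the answer involves Seppo Linnainmaa.

In 1970, in his master's thesis at the University of Helsinki, Linnainmaa described what is now called reverse-mode automatic differentiation.

His method computes the derivatives of a computation's output with respect to all of its inputs in a single backward pass, at a cost comparable to running the computation itself.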
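To illustrate the reverse-mode idea, here is a modern toy sketch in Python, not a reproduction of Linnainmaa's original formulation. Each arithmetic operation records its inputs together with its local derivative; a single backward sweep then applies the chain rule to accumulate the derivative of the output with respect to every input. The `Var` class and the `sin` and `backward` helpers are hypothetical names chosen for this example, and only addition, multiplication, and sine are supported.

```python
import math


class Var:
    """A scalar node in a computation graph that remembers how it was built."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # sequence of (parent_node, local_derivative)
        self.grad = 0.0

    def __add__(self, other):
        # d(a + b)/da = 1, d(a + b)/db = 1
        return Var(self.value + other.value, [(self, 1.0), (other, 1.0)])

    def __mul__(self, other):
        # d(a * b)/da = b, d(a * b)/db = a
        return Var(self.value * other.value,
                   [(self, other.value), (other, self.value)])


def sin(x):
    """Sine with its local derivative cos(x) recorded for the backward sweep."""
    return Var(math.sin(x.value), [(x, math.cos(x.value))])


def backward(output):
    """One reverse sweep: visit nodes from output to inputs, applying the chain rule."""
    order, seen = [], set()

    def visit(node):
        # Depth-first topological sort so each node is processed after its users.
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)

    visit(output)
    output.grad = 1.0
    for node in reversed(order):
        for parent, local in node.parents:
            parent.grad += node.grad * local


if __name__ == "__main__":
    x, y = Var(2.0), Var(3.0)
    f = x * y + sin(x)   # f(x, y) = x*y + sin(x)
    backward(f)          # one sweep fills in every .grad
    print(x.grad)        # df/dx = y + cos(x)
    print(y.grad)        # df/dy = x, i.e. 2.0
```

For $f(x, y) = xy + \sin x$, one backward sweep yields both $\partial f/\partial x = y + \cos x$ and $\partial f/\partial y = x$. When a computation has many inputs and one output, as a neural network's loss does, this single sweep is far cheaper than differentiating with respect to each input separately.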

This mathematical breakthrough later became foundational to backpropagation.

Although Linnainmaa was not directly studying neural networks, his work provided critical mathematical foundations for the algorithm.

The history of automatic differentiation became deeply connected to the future of deep learning.

Many historians now consider Linnainmaa one of the earliest contributors in the story of who invented backpropagation.

Paul Werbos and the 1974 Thesis

Many accounts give the strongest early claim to Paul Werbos.

In 1974, Werbos completed his doctoral thesis at Harvard University, describing how reverse-mode differentiation could be used to train neural networks.

His work introduced many core concepts behind modern backpropagation.

The thesis explained:

- Error propagation

- Gradient-based learning

- Multi-layer training

- Neural optimization

Werbos became one of the first researchers to connect automatic differentiation directly to neural network learning.

However, his work initially received little attention.

At the time, neural network research was declining because of the perceptron controversy and the first AI winter.

Even though his ideas were revolutionary, the AI community largely ignored them during the 1970s.

Rumelhart, Hinton, and Williams (1986)

The biggest turning point in the backpropagation story came in 1986.

David Rumelhart, Geoffrey Hinton, and Ronald Williams published a landmark paper in Nature that popularized backpropagation for training neural networks.

Their work demonstrated practical supervised training for multi-layer neural systems.

The paper showed how neural networks could finally learn complex patterns across hidden layers.

This breakthrough revived neural network research after years of decline.

The 1986 paper became one of the most important moments in the broader history of backpropagation and modern AI.

How Backpropagation Works

To understand why these contributions matter, it helps to examine how the algorithm works mathematically.

Backpropagation involves four major steps:

- Forward pass

- Error calculation

- Backward error propagation

- Weight update
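The four steps can be sketched in pure Python for a tiny network with two inputs, two sigmoid hidden units, and one sigmoid output, trained on a single example with the squared-error cost $E = \tfrac{1}{2}(y - \hat{y})^2$. The architecture, starting weights, learning rate, and the names `forward`, `loss`, `backprop`, and `update` are illustrative assumptions; practical frameworks vectorize this work and derive the gradients automatically.

```python
import math


def sigmoid(z):
    """Logistic activation, squashing any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Step 1, forward pass: inputs -> sigmoid hidden layer -> sigmoid output."""
    W1, b1, W2, b2 = params
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    y_hat = sigmoid(sum(w * hj for w, hj in zip(W2, h)) + b2)
    return h, y_hat


def loss(params, x, y):
    """Step 2, error calculation: E = 0.5 * (y - y_hat)^2 for one example."""
    _, y_hat = forward(params, x)
    return 0.5 * (y - y_hat) ** 2


def backprop(params, x, y):
    """Step 3, backward pass: chain rule from the output error back to every weight."""
    W2 = params[2]  # output weights carry the error back to the hidden layer
    h, y_hat = forward(params, x)
    # Output error term: dE/dz_out = (y_hat - y) * sigmoid'(z_out)
    delta_out = (y_hat - y) * y_hat * (1.0 - y_hat)
    dW2 = [delta_out * hj for hj in h]
    db2 = delta_out
    # Hidden error terms: reuse delta_out, passed back through W2 and each sigmoid
    delta_h = [delta_out * w * hj * (1.0 - hj) for w, hj in zip(W2, h)]
    dW1 = [[d * xi for xi in x] for d in delta_h]
    db1 = delta_h
    return dW1, db1, dW2, db2


def update(params, grads, lr=0.5):
    """Step 4, weight update: gradient descent, w <- w - lr * dE/dw."""
    W1, b1, W2, b2 = params
    dW1, db1, dW2, db2 = grads
    W1 = [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(W1, dW1)]
    b1 = [b - lr * g for b, g in zip(b1, db1)]
    W2 = [w - lr * g for w, g in zip(W2, dW2)]
    return W1, b1, W2, b2 - lr * db2


if __name__ == "__main__":
    # Hypothetical starting weights: 2 inputs, 2 hidden units, 1 output.
    params = ([[0.1, -0.2], [0.4, 0.3]], [0.0, 0.1], [0.5, -0.6], 0.05)
    x, y = [1.0, 0.0], 1.0
    before = loss(params, x, y)
    params = update(params, backprop(params, x, y))
    after = loss(params, x, y)
    print(f"error before: {before:.4f}, after one step: {after:.4f}")
```

Note how `delta_h` reuses `delta_out` instead of re-deriving the output error separately for each hidden weight; that reuse is what keeps backpropagation efficient as networks get deeper.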

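The algorithm minimizes prediction error using a cost function, such as the squared error:

$$E = \frac{1}{2}\sum_{i}\left(y_i - \hat{y}_i\right)^2$$

Where:

- $y_i$ = actual value

- $\hat{y}_i$ = predicted value

Weights update using gradients:

$$w \leftarrow w - \eta\,\frac{\partial E}{\partial w}$$

where $\eta$ is the learning rate.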

This process relies heavily on:

- Chain rule

- Partial derivatives

- Mathematical optimization

- Computational efficiency
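As a worked illustration of the chain rule here (notation assumed for this example, not taken from a specific paper), take a single sigmoid output unit with inputs $h_j$, weights $w_j$, bias $b$, pre-activation $z = \sum_j w_j h_j + b$, output $\hat{y} = \sigma(z)$, and cost $E = \tfrac{1}{2}(y - \hat{y})^2$. The gradient for one weight factors into three local derivatives:

$$
\frac{\partial E}{\partial w_j}
= \underbrace{\frac{\partial E}{\partial \hat{y}}}_{\hat{y} - y}
\cdot \underbrace{\frac{\partial \hat{y}}{\partial z}}_{\hat{y}(1 - \hat{y})}
\cdot \underbrace{\frac{\partial z}{\partial w_j}}_{h_j}
= (\hat{y} - y)\,\hat{y}\,(1 - \hat{y})\,h_j
$$

For example, with $y = 1$, $\hat{y} = 0.8$, $h_j = 1$, and learning rate $\eta = 0.5$:

$$
\frac{\partial E}{\partial w_j} = (0.8 - 1)(0.8)(0.2)(1) = -0.032,
\qquad
w_j \leftarrow w_j - 0.5 \times (-0.032) = w_j + 0.016
$$

The negative gradient raises the weight, which pushes $\hat{y}$ up toward the target. In a multi-layer network, the same factorization repeats layer by layer, with each layer reusing the error term computed for the layer above it.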

The algorithm became one of the most important inventions in machine learning history.

Why Backpropagation Was Revolutionary

The answer to who invented backpropagation matters because the algorithm completely transformed AI.

Backpropagation enabled:

- Deep neural architectures

- Multi-layer training

- Advanced pattern recognition

- Modern deep learning

Without backpropagation, training today's deep neural networks would be impractical, and most modern AI systems could not exist in their current form.

The algorithm eventually powered:

- Speech recognition

- Computer vision

- Language translation

- Robotics

- Generative AI

Backpropagation changed the future of technology forever.

The Debate Over Academic Credit

The question of who invented backpropagation remains controversial because several researchers contributed important ideas independently.

Some historians credit:

- Seppo Linnainmaa for reverse-mode automatic differentiation

- Paul Werbos for neural learning applications

- Rumelhart, Hinton, and Williams for practical implementation

The debate touches on broader issues of:

- Scientific attribution

- Multiple discovery

- Independent researchers

- Academic credit

The algorithm evolved gradually rather than appearing through one single invention.

The debate surrounding who invented backpropagation continues even today.

Geoffrey Hinton and the Deep Learning Revival

Geoffrey Hinton became one of the most influential figures in the backpropagation story.

Hinton strongly defended neural networks during periods of skepticism.

His work helped revive connectionism during the 1980s and 1990s.

Hinton’s persistence helped transform backpropagation from an academic concept into the foundation of modern AI.

Backpropagation and Modern Deep Learning

The answer to who invented backpropagation matters even more today because nearly every modern AI breakthrough depends on the algorithm.

Backpropagation powers:

- Convolutional neural networks

- Recurrent neural networks

- Transformers

- Large language models

- Generative AI systems

This progress is also closely tied to transformer architectures and to the rise of GPUs in AI.

Modern hardware accelerators now train massive neural systems using optimized backpropagation techniques.

Even today’s free AI tools rely heavily on neural networks trained with backpropagation.

The Legacy of Backpropagation

The story of who invented backpropagation ultimately reflects how scientific breakthroughs often emerge from multiple contributors across different generations.

The algorithm combined:

- Mathematical foundations

- Computational theory

- Neural learning research

- Algorithm refinement

Backpropagation transformed artificial intelligence from a struggling research field into one of the most powerful technologies in history.

Its legacy continues shaping science, medicine, robotics, education, and communication worldwide.

Frequently Asked Questions (FAQs)

Who invented backpropagation?

Several researchers contributed, including Seppo Linnainmaa, Paul Werbos, David Rumelhart, Geoffrey Hinton, and Ronald Williams.

What did Paul Werbos contribute?

Werbos connected reverse differentiation directly to neural network learning in his 1974 thesis.

Why is Geoffrey Hinton important?

Hinton helped popularize practical backpropagation training and revive neural network research.

Why is backpropagation important?

Backpropagation enabled efficient training of deep neural networks and made modern AI possible.

Does AI still use backpropagation today?

Yes. Most deep learning systems still rely heavily on backpropagation algorithms.

Conclusion

The story of who invented backpropagation is one of the most fascinating journeys in artificial intelligence history. Rather than being created by a single inventor, backpropagation emerged through decades of mathematical discovery, neural research, and scientific collaboration.

Researchers such as Seppo Linnainmaa, Paul Werbos, David Rumelhart, Geoffrey Hinton, and Ronald Williams all played critical roles in transforming backpropagation into the algorithm that powers modern deep learning systems today.

From neural networks and computer vision to generative AI and language models, the legacy of backpropagation continues to shape the future of artificial intelligence and technological innovation worldwide.