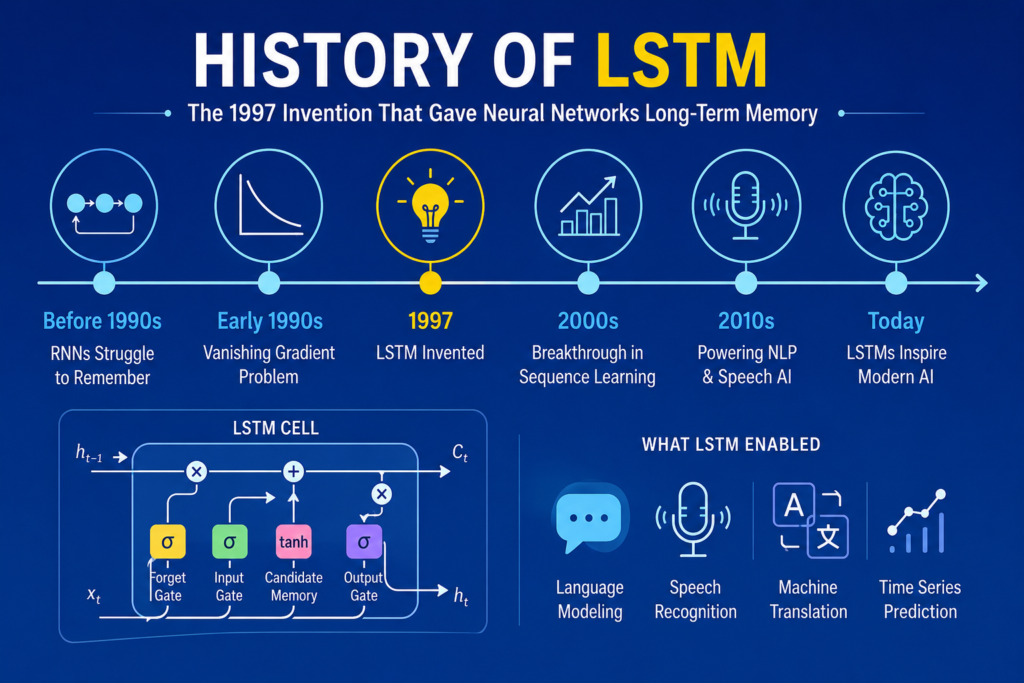

The history of LSTM is the story of one of the most important breakthroughs in artificial intelligence. Before Long Short-Term Memory networks existed, recurrent neural networks struggled to retain information over long sequences.

These networks could handle short sequences, but they quickly lost track of earlier information.

That limitation created massive problems for:

- Language understanding

- Speech recognition

- Translation systems

- Text generation

- Sequential prediction

Then LSTM networks changed everything.

LSTMs gave neural networks the ability to retain important information across long stretches of a sequence.

This breakthrough transformed modern AI systems worldwide.

Today, LSTMs influence:

- Natural language processing

- Voice assistants

- Machine translation

- Speech recognition

- Time-series prediction

- Generative AI

In this article, we will explore the history of LSTM, how Long Short-Term Memory networks solved neural memory problems, and why they became revolutionary.

Neural Networks Before LSTM (1943 – 1990)

To understand the history of LSTM, we first need to look at earlier neural network research.

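The first mathematical model of an artificial neuron was proposed in 1943 by Warren McCulloch and Walter Pitts.

Their work became a foundation for later research on artificial neural networks.

Later, Frank Rosenblatt introduced the perceptron in the late 1950s.

The perceptron was one of the first neural network models that could learn its weights from examples.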

Although these models evolved gradually, they had no mechanism for remembering earlier inputs.

The Rise of Recurrent Neural Networks

One major milestone on the road to LSTM was the recurrent neural network (RNN).

RNNs introduced feedback loops: the network's hidden state from one time step is fed back in at the next step, allowing information to persist over time.

Recurrent neural networks became useful for:

- Sequential data

- Language modeling

- Time-series prediction

- Speech synthesis

However, standard RNNs struggled with long-term dependencies: information from many steps earlier had little influence on later outputs.
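The feedback loop can be sketched with a single scalar hidden state. This is a minimal illustration, not a production RNN: the weights `w_h` and `w_x` and the helper names `rnn_step` and `run_rnn` are hypothetical choices. Feeding one nonzero input followed by zeros shows how that input's influence on the hidden state fades step by step.

```python
import math

def rnn_step(h_prev, x, w_h=0.5, w_x=1.0, b=0.0):
    """One step of a minimal scalar RNN (hypothetical weights).

    The new hidden state combines the previous hidden state (the feedback
    loop) with the current input, squashed by tanh.
    """
    return math.tanh(w_h * h_prev + w_x * x + b)

def run_rnn(inputs, h0=0.0):
    """Feed a sequence through the RNN and collect every hidden state."""
    h = h0
    states = []
    for x in inputs:
        h = rnn_step(h, x)
        states.append(h)
    return states

# A single "event" at the first step, then silence.
states = run_rnn([1.0, 0.0, 0.0, 0.0])
for t, h in enumerate(states):
    print(t, round(h, 4))
```

With these weights the hidden state shrinks at every step after the first input, which is the behavior that made long-term dependencies hard for standard RNNs.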

The Vanishing Gradient Problem

One of the main motivations for LSTM was a training limitation of recurrent neural networks.

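During training with backpropagation through time, the error signal is multiplied by a local derivative at every time step it travels backward. When those factors are smaller than 1, the gradient shrinks exponentially across long sequences.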

This issue became known as the vanishing gradient problem.

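When gradients vanished, the network received almost no learning signal from early time steps, so it could not learn to connect later outputs with information from far back in the sequence.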
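A small numerical sketch shows why the gradient vanishes. For a simplified scalar tanh RNN $h_t = \tanh(w_h h_{t-1} + x)$ with a constant input `x` (a hypothetical model chosen for illustration), the chain rule gives $\partial h_t / \partial h_{t-1} = w_h (1 - h_t^2)$, and the gradient reaching the start of the sequence is the product of one such factor per step. The function name `gradient_through_time` and the weight values are assumptions.

```python
import math

def gradient_through_time(w_h, steps, x=0.5):
    """Return d h_T / d h_0 for the scalar RNN h_t = tanh(w_h * h_{t-1} + x).

    By the chain rule this is the product of the per-step derivatives
    w_h * (1 - h_t**2), accumulated while running the network forward.
    """
    h = 0.0
    grad = 1.0
    for _ in range(steps):
        h = math.tanh(w_h * h + x)
        grad *= w_h * (1.0 - h * h)  # derivative of this step w.r.t. h_{t-1}
    return grad

for steps in (1, 10, 50):
    print(steps, gradient_through_time(w_h=0.9, steps=steps))
```

Because every factor here is below 1 (the weight is 0.9 and $1 - h_t^2 \le 1$), the product shrinks geometrically. After 50 steps it is many orders of magnitude smaller than after one step, so early inputs contribute almost nothing to the weight updates.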

This created major limitations for:

- Language translation

- Speech recognition

- Sequential memory

- Long text generation

Researchers needed a revolutionary solution.

Sepp Hochreiter and Jürgen Schmidhuber (1997)

The defining breakthrough came from Sepp Hochreiter and Jürgen Schmidhuber.

In 1997, they introduced Long Short-Term Memory networks.

The architecture addressed the vanishing gradient problem with memory cells whose contents are protected and controlled by multiplicative gates.

Their invention transformed sequential AI forever.

What Is LSTM?

To understand why LSTM mattered, we need to look at how LSTM networks work.

LSTM stands for Long Short-Term Memory.

Unlike traditional RNNs, LSTMs contain memory cells capable of preserving information across long sequences.

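The original 1997 architecture introduced:

- Memory cells

- Input gates

- Output gates

Forget gates were added shortly afterward by Felix Gers, Jürgen Schmidhuber, and Fred Cummins (first published in 1999), and most LSTM implementations today include them.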

These mechanisms controlled information flow inside the network.

The Constant Error Carousel

One key concept in the original LSTM was the Constant Error Carousel (CEC).

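The CEC is a memory cell with a linear self-connection whose weight is fixed at 1.0. Because the local derivative along that connection is exactly 1, error signals can flow backward through the cell across many time steps without vanishing.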

This directly addressed the vanishing gradient problem, one of the biggest obstacles in recurrent learning.
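The difference between the carousel and an ordinary decaying connection can be sketched with a single linear unit $c_t = w \cdot c_{t-1} + \text{input}_t$, whose local derivative $\partial c_t / \partial c_{t-1}$ is simply $w$. In the CEC, $w$ is fixed at 1.0; the 0.9 comparison value and the function name `carousel_gradient` are hypothetical.

```python
def carousel_gradient(self_weight, steps):
    """Error scaling after flowing back `steps` steps through the linear unit
    c_t = self_weight * c_{t-1} + input_t.

    Each step multiplies the error by the local derivative, which is just
    self_weight, so the total factor is self_weight ** steps.
    """
    grad = 1.0
    for _ in range(steps):
        grad *= self_weight
    return grad

print(carousel_gradient(1.0, 100))  # constant error carousel: prints 1.0
print(carousel_gradient(0.9, 100))  # a self-weight below 1.0 decays toward zero
```

In the full LSTM, the gates around the carousel decide when information is written and read, so the cell does not simply accumulate every input.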

The innovation dramatically improved:

- Gradient flow

- Long-term dependencies

- Sequence learning stability

This breakthrough became one of the greatest achievements in neural network history.

Understanding LSTM Gates

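Another defining feature of LSTM is its gating mechanism. Each gate is a sigmoid unit that outputs a value between 0 and 1, which scales how much information passes through.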

Forget Gate

The forget gate decides which information should be removed.

Input Gate

The input gate determines which new information should enter memory.

Output Gate

The output gate controls what information becomes visible externally.

Together, these gates allow LSTMs to remember important information while ignoring irrelevant data.
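The three gates can be combined into a single cell update. The sketch below is a scalar version of the common forget-gate LSTM variant, written in plain Python for readability; real implementations use vectors and weight matrices. The parameter names (`w_*`, `u_*`, `b_*`), the helper names, and the hand-picked values are hypothetical, chosen so that the input gate opens only for a strong input and the forget gate stays almost fully open.

```python
import math

def sigmoid(z):
    """Squash any real number into (0, 1) so it can act as a soft on/off gate."""
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell with forget, input, and output gates."""
    f = sigmoid(p["w_f"] * x + p["u_f"] * h_prev + p["b_f"])    # forget gate: keep old memory?
    i = sigmoid(p["w_i"] * x + p["u_i"] * h_prev + p["b_i"])    # input gate: write new content?
    o = sigmoid(p["w_o"] * x + p["u_o"] * h_prev + p["b_o"])    # output gate: expose memory?
    g = math.tanh(p["w_g"] * x + p["u_g"] * h_prev + p["b_g"])  # candidate content
    c = f * c_prev + i * g      # memory cell update
    h = o * math.tanh(c)        # externally visible output
    return h, c

def run(inputs, p):
    """Run the cell over a sequence, starting from empty memory."""
    h, c = 0.0, 0.0
    for x in inputs:
        h, c = lstm_step(x, h, c, p)
    return h, c

# Hypothetical hand-set parameters (normally these are learned).
params = {
    "w_f": 0.0, "u_f": 0.0, "b_f": 10.0,   # forget gate biased open: keep memory
    "w_i": 10.0, "u_i": 0.0, "b_i": -5.0,  # input gate opens only for a strong input
    "w_o": 0.0, "u_o": 0.0, "b_o": 10.0,   # output gate biased open
    "w_g": 1.0, "u_g": 0.0, "b_g": 0.0,
}

sequence = [1.0] + [0.0] * 20
_, c_keep = run(sequence, params)
_, c_forget = run(sequence, dict(params, b_f=-10.0))  # forget gate biased closed
print(c_keep, c_forget)
```

With the forget gate open, the value written at the first step is still in the cell after 20 silent steps; biasing the forget gate closed erases it almost immediately.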

The Mathematics Behind LSTM

LSTM's behavior is defined by a compact set of gate and memory equations.

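LSTM gates use sigmoid functions such as:

$$f_t = \sigma(W_f x_t + U_f h_{t-1} + b_f), \qquad \sigma(z) = \frac{1}{1 + e^{-z}}$$

Memory updates involve equations like:

$$c_t = f_t \odot c_{t-1} + i_t \odot \tilde{c}_t$$

Where:

- $c_t$ = memory state

- $f_t$ = forget gate

- $i_t$ = input gate

- $\tilde{c}_t$ = candidate memory content

- $x_t$, $h_{t-1}$ = current input and previous hidden state

- $W_f$, $U_f$, $b_f$ = learned forget-gate weights and bias

- $\odot$ = element-wise multiplication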

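Because the memory state is updated by addition rather than being repeatedly squashed, information can stay stable across many time steps while the forget gate remains close to 1.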
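A worked example with scalar values (chosen purely for illustration) shows how the cell-state update $c_t = f_t \odot c_{t-1} + i_t \odot \tilde{c}_t$ behaves, where $\tilde{c}_t$ is the candidate content proposed at step $t$. For scalars, $\odot$ is ordinary multiplication. With $c_{t-1} = 2.0$, $f_t = 0.9$, $i_t = 0.5$, and $\tilde{c}_t = 0.8$:

$$c_t = 0.9 \times 2.0 + 0.5 \times 0.8 = 1.8 + 0.4 = 2.2$$

Most of the old memory (1.8 of the original 2.0) survives, and a smaller amount of new information (0.4) is added. Setting $f_t$ near 0 instead would erase the old value almost entirely.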

Why LSTMs Became Revolutionary

LSTM's importance came from making long-range sequence learning practical.

Traditional RNNs struggled to remember information across many time steps.

LSTMs successfully handled:

- Long sentences

- Speech patterns

- Sequential dependencies

- Temporal information

This breakthrough transformed artificial intelligence dramatically.

LSTMs and Natural Language Processing

The rise of LSTM strongly influenced natural language processing.

LSTMs improved:

- Text prediction

- Machine translation

- Chatbots

- Language generation

- Sequence learning

This work led directly to sequence-to-sequence models, in which one LSTM encodes an input sentence and another LSTM decodes the output sentence.

Many early neural machine translation systems relied heavily on LSTM architectures.

Speech Recognition and LSTMs

Another major LSTM success came in speech recognition.

Human speech depends heavily on temporal relationships.

LSTMs became highly effective for:

- Voice recognition

- Audio prediction

- Speech synthesis

- Sequential sound modeling

This progress was part of a broader shift toward neural network approaches to speech recognition.

Modern voice assistants evolved partly from LSTM research.

LSTM vs Traditional RNNs

A useful way to see LSTM's contribution is to compare it with standard recurrent neural networks.

Traditional RNNs:

- Forget long sequences

- Suffer from vanishing gradients

- Lose temporal information

LSTMs:

- Preserve memory

- Handle long dependencies

- Maintain gradient flow

This difference transformed sequence modeling forever.

LSTMs and Deep Learning

The rise of LSTM was closely tied to the broader deep learning revolution.

Researchers such as Geoffrey Hinton, Yoshua Bengio, and Yann LeCun helped advance neural learning systems.

LSTMs became foundational to modern sequence AI.

GPU Computing and LSTM Expansion

Another important factor in LSTM's spread was GPU acceleration.

GPUs enabled:

- Faster matrix computation

- Parallel computation across batches of sequences

- Large-scale sequential learning

Without GPUs, modern LSTM systems would likely remain computationally impractical.

LSTMs and Generative AI

LSTM's influence extends into generative AI systems.

LSTMs helped pioneer:

- Text generation

- Music generation

- Conversational AI

- Sequential prediction

Many early neural chatbot systems depended heavily on LSTM architectures.

Even many of today's AI tools indirectly build on ideas developed in LSTM research.

LSTMs and Modern AI Applications

Today, LSTM's impact appears across many industries.

LSTM systems have been used in:

- Voice assistants

- Financial forecasting

- Medical monitoring

- Robotics

- Language translation

Their ability to handle temporal information remains extremely valuable.

Transformers and the Evolution Beyond LSTM

Although transformers later became dominant, the history of LSTM remains important for understanding modern sequence models.

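Transformers, introduced in 2017, improved long-range sequence handling with attention mechanisms, which let every position in a sequence connect directly to every other position instead of passing information step by step.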
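The core attention operation can be sketched for a single query in plain Python. This follows the scaled dot-product form used in the original Transformer, but leaves out learned projections, multiple heads, positional encodings, and masking; the function names and toy vectors are hypothetical. The point is that every position is scored against the query directly, so the first position is as reachable as the last.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector.

    Each key is compared with the query directly (no recurrence), and the
    output is the attention-weighted average of the value vectors.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
    return out, weights

# Toy sequence of 5 positions; only position 0 matches the query.
keys = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]]
values = [[10.0], [0.0], [0.0], [0.0], [0.0]]
query = [4.0, 0.0]
out, weights = attention(query, keys, values)
print([round(w, 3) for w in weights], out)
```

Nothing in this computation depends on how far apart the positions are, which is why attention handles long-range dependencies more directly than a recurrent network that must carry information through every intermediate step.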

However, LSTMs laid the foundation for modern sequence AI.

Many transformer ideas evolved partly from limitations discovered in recurrent systems.

Why LSTMs Changed AI Forever

Several reasons explain LSTM's importance.

Solved Long-Term Memory Problems

LSTMs preserved information across long sequences.

Improved Language Processing

AI systems could model temporal dependencies far better.

Enabled Modern NLP

Many language technologies became practical because of LSTMs.

Influenced Future Architectures

Transformers and modern sequence systems evolved partly from LSTM research.

Together, these breakthroughs transformed artificial intelligence forever.

Frequently Asked Questions (FAQs)

What is LSTM?

LSTM stands for Long Short-Term Memory, a recurrent neural network architecture.

Who invented LSTM?

Sepp Hochreiter and Jürgen Schmidhuber invented LSTM in 1997.

Why was LSTM important?

It solved long-term memory problems in recurrent neural networks.

What are LSTM gates?

Forget gates, input gates, and output gates control memory flow inside the network.

Are LSTMs still used today?

Yes. LSTMs remain important in speech recognition, forecasting, and sequence modeling.

Conclusion

The history of LSTM is the story of one of the greatest breakthroughs in artificial intelligence. By solving long-term memory problems inside recurrent neural networks, LSTMs transformed natural language processing, speech recognition, sequence modeling, and modern deep learning systems.

Their memory cells and gating mechanisms allowed recurrent networks to preserve information across long sequences far more reliably than earlier designs. This breakthrough launched major advances in translation systems, conversational AI, speech technology, and generative models.

Today, the legacy of LSTM continues to shape artificial intelligence systems across the world.