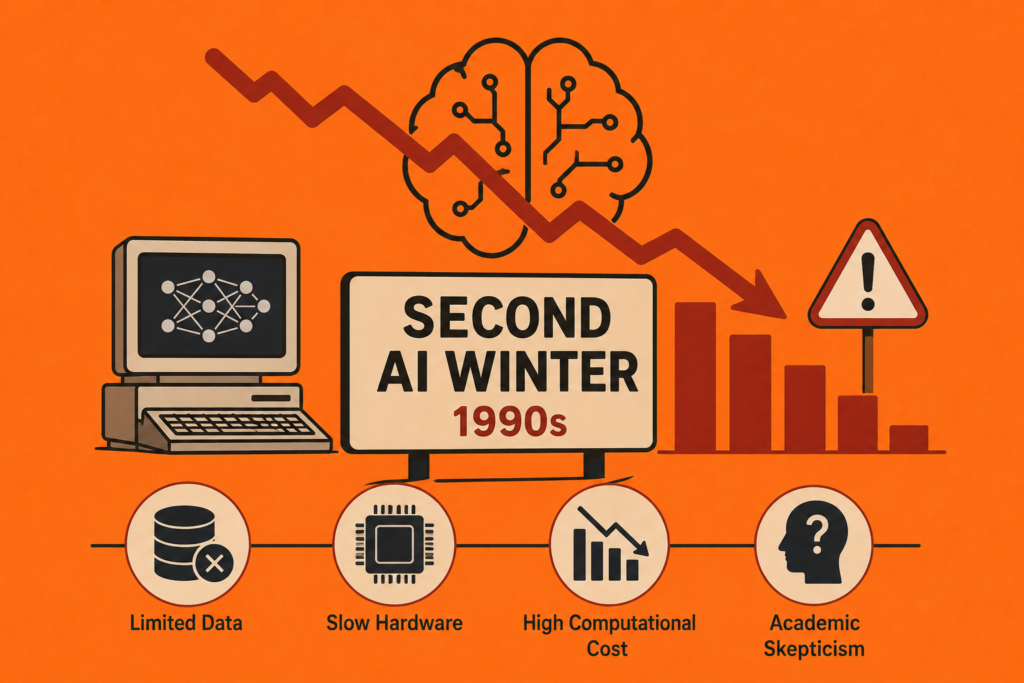

The second AI winter was one of the most difficult periods in modern artificial intelligence history. Just as neural networks began recovering from the first AI winter during the 1980s, researchers faced another major slowdown in the 1990s.

Many scientists believed deep learning and neural networks would finally transform computing after the success of backpropagation. However, technological limitations, hardware bottlenecks, lack of large datasets, and growing competition from alternative algorithms caused another major decline in neural network enthusiasm.

The story of the second AI winter is important because it explains why deep learning nearly disappeared again before coming to dominate the field during the 2010s.

This era involved scientific skepticism, industrial stagnation, algorithmic limits, high computational cost, and the rise of statistical learning theory approaches like Support Vector Machines.

In this article, we will explore the complete history of the second AI winter: why neural networks struggled again during the 1990s, what caused researchers to abandon deep learning temporarily, and how AI eventually recovered.

The Neural Network Revival Before the Second AI Winter (1980 – 1990)

Before the second AI winter, neural networks experienced a major comeback during the 1980s.

Researchers such as Geoffrey Hinton, David Rumelhart, and Ronald Williams helped revive neural networks by popularizing backpropagation in 1986.

This revival became central to the history of backpropagation and of deep learning.

Backpropagation allowed multi-layer neural systems to learn complex patterns through gradient-based optimization.
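
To make the idea concrete, here is a minimal sketch of backpropagation on a toy network with one hidden sigmoid unit feeding one sigmoid output unit. The network shape, the squared-error loss, the function names, and the starting weights are all illustrative assumptions, not a reconstruction of any historical system. The sketch applies the chain rule layer by layer and compares the result with a finite-difference estimate.

```python
import math

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def forward(params, x):
    """Toy network (assumed for illustration): x -> hidden sigmoid -> output sigmoid."""
    w1, b1, w2, b2 = params
    h = sigmoid(w1 * x + b1)
    y = sigmoid(w2 * h + b2)
    return h, y

def loss(params, x, target):
    """Squared-error loss for a single training example."""
    _, y = forward(params, x)
    return 0.5 * (y - target) ** 2

def backprop(params, x, target):
    """Gradient of the loss with respect to [w1, b1, w2, b2] via the chain rule."""
    w1, b1, w2, b2 = params
    h, y = forward(params, x)
    # Output layer: dL/dz2 = (y - target) * sigmoid'(z2), where sigmoid'(z2) = y * (1 - y)
    delta2 = (y - target) * y * (1.0 - y)
    # Hidden layer: push the error back through w2 and the hidden sigmoid
    delta1 = delta2 * w2 * h * (1.0 - h)
    return [delta1 * x, delta1, delta2 * h, delta2]

def numerical_gradient(params, x, target, eps=1e-6):
    """Central finite differences, used only to sanity-check backprop()."""
    grads = []
    for i in range(len(params)):
        up = list(params)
        down = list(params)
        up[i] += eps
        down[i] -= eps
        grads.append((loss(up, x, target) - loss(down, x, target)) / (2 * eps))
    return grads

def train(params, x, target, lr=0.5, steps=200):
    """Plain gradient descent on one example, using the backprop gradients."""
    params = list(params)
    for _ in range(steps):
        grads = backprop(params, x, target)
        params = [p - lr * g for p, g in zip(params, grads)]
    return params

initial = [0.5, -0.3, 0.8, 0.1]  # hypothetical starting weights
print("backprop gradient: ", backprop(initial, 1.0, 1.0))
print("numerical gradient:", numerical_gradient(initial, 1.0, 1.0))
print("loss before:", loss(initial, 1.0, 1.0))
print("loss after: ", loss(train(initial, 1.0, 1.0), 1.0, 1.0))
```

The two gradient lists should agree closely, and the loss should fall after training. Real networks of the period had many units per layer, but the backward pass follows the same pattern.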

Scientists believed deep learning might finally overcome earlier neural limitations from the 1960s and 1970s.

The revival created enormous excitement across the broader AI community.

However, several underlying problems remained unresolved before the arrival of the second AI winter.

The Legacy of the First AI Winter

The second AI winter cannot be understood without examining the earlier collapse of neural research during the 1970s.

The first AI winter followed the perceptron controversy, sparked by Marvin Minsky and Seymour Papert’s 1969 book Perceptrons, which damaged confidence in neural systems.

During that period:

- Funding declined

- Neural research slowed

- Symbolic AI dominated

- Academic skepticism increased

Researchers eventually revived neural networks during the 1980s, but many scientists remained cautious.

The memory of past failures influenced the scientific atmosphere leading into the second AI winter.

Why Neural Networks Struggled Again (1990 – 2005)

The second AI winter happened because neural networks still faced enormous practical challenges during the 1990s.

Major problems included:

- Slow CPUs

- High computational cost

- Lack of large datasets

- Training instability

- Hardware bottlenecks

Deep neural systems required far more computing power than available technology could provide.

Modern GPUs did not yet exist in forms suitable for large-scale deep learning.

This limitation became one of the biggest causes of the second AI winter.

The Vanishing Gradient Problem

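One major issue during the second AI winter involved the vanishing gradient problem.

As neural networks became deeper, the gradients that backpropagation passed to the earlier layers often shrank toward zero, because each layer multiplies the error signal by its weights and by the derivative of its activation function.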

This caused:

- Slow learning

- Training instability

- Poor convergence

- Weak performance

Deep neural networks struggled to train effectively.

Researchers found multi-layer architectures extremely difficult to optimize.

The vanishing gradient problem became one of the biggest technical barriers of the second AI winter.
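
The shrinkage can be illustrated with a few lines of arithmetic. The sketch below assumes a deliberately simplified chain of sigmoid units, one per layer, all sharing the same weight and pre-activation (hypothetical values chosen for illustration). Since the sigmoid's derivative never exceeds 0.25, each layer scales the backward signal by at most 0.25 times the weight.

```python
import math

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_derivative(x: float) -> float:
    s = sigmoid(x)
    return s * (1.0 - s)  # peaks at 0.25 when x == 0

def gradient_scale(depth: int, pre_activation: float = 0.0, weight: float = 1.0) -> float:
    """Factor by which the error signal is scaled after passing back through
    `depth` layers of a one-unit-per-layer chain (an illustrative assumption)."""
    scale = 1.0
    for _ in range(depth):
        scale *= weight * sigmoid_derivative(pre_activation)
    return scale

for depth in (1, 5, 10, 20):
    print(f"depth={depth:2d}  gradient scale={gradient_scale(depth):.3e}")
```

With a weight of 1.0, the factor after ten layers is 0.25 raised to the tenth power, roughly one millionth of the original signal, so the earliest layers receive almost no learning signal.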

Lack of Large Datasets

Another major reason for the second AI winter involved limited data availability.

Modern deep learning systems depend on enormous datasets.

However, during the 1990s:

- Internet data remained limited

- Digital storage was expensive

- Large labeled datasets barely existed

Without massive datasets, neural systems could not achieve high performance.

This data scarcity severely restricted deep learning progress during the second AI winter.

The Rise of Support Vector Machines

One of the biggest competitors during the second AI winter was Support Vector Machines (SVMs).

SVMs became extremely popular during the 1990s because they often outperformed neural networks on smaller datasets.

Researchers preferred SVMs because they offered:

- Better mathematical guarantees

- Strong statistical learning theory

- Reliable performance

- Lower computational requirements

The rise of kernel methods and SVMs shifted research priorities away from neural networks.

Many scientists believed neural systems were too inefficient compared to alternative algorithms.

This research shift deepened the second AI winter.

Symbolic AI and Statistical Learning Dominance

During the second AI winter, many researchers moved toward symbolic AI and statistical methods.

Popular approaches included:

- Expert systems

- Bayesian methods

- Decision trees

- Kernel methods

- Probabilistic models

Connectionism once again lost momentum.

The broader neural network movement entered another difficult phase.

Many universities reduced emphasis on neural research during this period.

Geoffrey Hinton’s Persistence

One of the most important figures during the second AI winter was Geoffrey Hinton.

Geoffrey Hinton’s career became closely tied to the survival of deep learning.

While many researchers abandoned neural systems, Hinton continued exploring:

- Distributed representations

- Boltzmann Machines

- Deep belief networks

- Neural optimization

His persistence helped keep deep learning alive during one of its most difficult eras.

Without researchers like Hinton, neural networks might never have recovered after the second AI winter.

The Failure of 1990s Neural Nets

The neural systems of the 1990s struggled for several reasons during the second AI winter.

Limited Hardware

CPUs lacked the power necessary for large neural training.

Slow Training

Training could take days or weeks, even for modest tasks.

Poor Optimization

Training deep architectures remained unstable.

Limited Applications

Neural networks had not yet demonstrated massive commercial success.

These problems created widespread academic skepticism toward deep learning.

Industrial Stagnation and Venture Capital

The second AI winter also affected industry investment.

Many companies viewed neural networks as:

- Expensive

- Slow

- Unreliable

- Difficult to scale

Venture capital shifted toward more practical software systems instead of experimental AI.

Industrial stagnation further reduced momentum for deep learning research.

The AI field once again experienced declining enthusiasm during the second AI winter.

The Slow Return of Deep Learning (2000 – 2012)

Although the second AI winter slowed neural research significantly, deep learning eventually recovered.

Several major changes helped revive AI:

- Faster GPUs

- Larger datasets

- Internet growth

- Improved algorithms

- Better optimization methods

The rise of GPUs became especially important in the history of deep learning.

Researchers finally gained enough computational power to train deep neural systems effectively.

AlexNet and the End of the Second AI Winter (2012)

The defining moment ending the second AI winter came in 2012.

Geoffrey Hinton and his students Alex Krizhevsky and Ilya Sutskever introduced AlexNet, a deep convolutional neural network that won the ImageNet competition by a wide margin.

This breakthrough ties together the histories of AlexNet, ImageNet, and convolutional neural networks (CNNs).

AlexNet dramatically outperformed traditional machine learning systems.

The AI community suddenly realized deep learning could achieve extraordinary performance.

The second AI winter effectively ended after this breakthrough.

The Deep Learning Explosion

After the second AI winter, deep learning rapidly transformed artificial intelligence.

Modern neural systems now power:

- Speech recognition

- Computer vision

- Autonomous vehicles

- Generative AI

- Large language models

Even today’s free AI tools depend heavily on neural systems once considered impractical during the 1990s.

The success of deep learning proved many earlier neural ideas were simply ahead of available technology.

Lessons From the Second AI Winter

The second AI winter taught the AI community several critical lessons.

Hardware Matters

Deep learning depends heavily on powerful computing infrastructure.

Data Is Essential

Large datasets are necessary for neural learning success.

Scientific Patience Is Important

Breakthroughs may require decades before becoming practical.

Early Failure Does Not Mean Permanent Failure

Neural networks eventually succeeded despite repeated setbacks.

These lessons continue influencing AI research today.

Frequently Asked Questions (FAQs)

What was the second AI winter?

The second AI winter was a slowdown in neural network research during the 1990s caused by hardware limits, lack of data, and competing algorithms.

Why did neural networks struggle in the 1990s?

They suffered from slow hardware, vanishing gradients, expensive training, and limited datasets.

What replaced neural networks during the second AI winter?

Support Vector Machines and statistical learning methods became more popular.

How did deep learning recover?

Faster GPUs, larger datasets, and improved algorithms revived deep learning during the 2000s and early 2010s.

What ended the second AI winter?

AlexNet’s success in the 2012 ImageNet competition helped end the second AI winter.

Conclusion

The second AI winter remains one of the most important periods in artificial intelligence history. During the 1990s, neural networks once again struggled because of hardware bottlenecks, data scarcity, optimization problems, and competition from statistical learning methods like Support Vector Machines.

Although many researchers abandoned deep learning during this period, pioneers such as Geoffrey Hinton continued pushing neural network research forward. Their persistence eventually helped launch the modern AI revolution through GPUs, larger datasets, and deep neural architectures.

Today, the legacy of the second AI winter serves as a powerful reminder that scientific breakthroughs often require decades of patience, technological progress, and unwavering belief in long-term ideas.