Gradient descent is one of the most important concepts in artificial intelligence and machine learning. Nearly every modern neural network and deep learning model relies on gradient descent, or one of its variants, to learn patterns, improve predictions, and reduce errors.

Without gradient-based optimization, modern neural networks could not be trained effectively.

Gradient descent draws on calculus, optimization theory, and computer science. It is the core mechanism that allows AI systems to improve gradually through repeated learning.

Today, gradient descent powers:

- Deep learning

- Computer vision

- Speech recognition

- Generative AI

- Large language models

The idea behind gradient descent may sound complex, but the core principle is simple:

Neural networks learn by slowly reducing mistakes step by step.

In this article, we explore gradient descent in depth, including its mathematical foundations, the learning process, optimization methods, the main types of gradient descent, and why it became essential to modern AI systems.

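The Origins of Gradient Descent in AI (1940 – 1980)

The method itself is much older than AI: Augustin-Louis Cauchy described gradient descent in 1847. To understand how it became central to machine learning, however, we must first examine the rise of neural networks.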

Early AI researchers wanted machines capable of adaptive learning.

Scientists realized that neural systems needed a mathematical method for improving predictions automatically.

This challenge eventually led researchers toward optimization theory and gradient-based learning.

The growth of neural systems strongly influenced the broader field of artificial intelligence.

Gradient descent eventually became the foundation of modern deep learning.

What Is Gradient Descent?

The simplest way to understand gradient descent is to imagine walking down a mountain in heavy fog.

You cannot see the entire landscape.

You only know which way the ground slopes beneath your feet.

So you take a step downhill, check the slope again, and repeat until you reach the lowest point you can find.

That is exactly how gradient descent works.

Neural networks adjust internal parameters gradually to minimize prediction error.

The algorithm repeatedly updates weights to reduce mistakes during training.

The goal is to find the parameter values that minimize a loss function.

This optimization process is the heart of gradient descent and of modern machine learning.
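To make the foggy-mountain picture concrete, here is a minimal sketch in Python. It assumes a toy one-variable loss, f(x) = (x - 3)², whose lowest point is at x = 3, and a hand-picked learning rate of 0.1; the function names are illustrative, not taken from any library.

```python
def gradient_descent_1d(grad, x0, learning_rate=0.1, steps=100):
    """Minimize a one-variable function, given its derivative `grad`."""
    x = x0
    for _ in range(steps):
        x = x - learning_rate * grad(x)  # step downhill, against the slope
    return x


# Toy loss f(x) = (x - 3)**2: minimum at x = 3, derivative 2 * (x - 3)
def loss(x):
    return (x - 3) ** 2


def loss_grad(x):
    return 2 * (x - 3)


x_min = gradient_descent_1d(loss_grad, x0=0.0)
print(round(x_min, 4))  # 3.0
```

Each step moves x a fixed fraction of the way toward 3, because the slope 2(x - 3) shrinks as the bottom of the valley gets closer.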

The Role of Loss Functions

Gradient descent needs something to minimize: a loss function.

A loss function measures how wrong a neural network’s predictions are.

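One common example is Mean Squared Error (MSE):

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2$$

Where:

- $y_i$ = actual value

- $\hat{y}_i$ = predicted value

- $n$ = number of examples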

The neural network tries to reduce this error during training.
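As a minimal illustration, Mean Squared Error can be computed in a few lines of plain Python: average the squared differences between actual and predicted values. The function name and the sample values below are hypothetical, chosen only to show the arithmetic.

```python
def mean_squared_error(actual, predicted):
    """Average of the squared differences between actual and predicted values."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    return sum((y - y_hat) ** 2 for y, y_hat in zip(actual, predicted)) / len(actual)


actual = [3.0, -0.5, 2.0, 7.0]
predicted = [2.5, 0.0, 2.0, 8.0]
print(mean_squared_error(actual, predicted))  # 0.375
```

Squaring makes every error positive and penalizes large mistakes more heavily than small ones: the single error of 1.0 above contributes twice as much as the two errors of 0.5 combined.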

Gradient descent adjusts the weights in directions that decrease the loss function.

This process allows AI systems to improve performance gradually.

Understanding Gradients

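Another key idea in gradient descent is the gradient itself.

A gradient measures how quickly a function changes, and it points in the direction of steepest increase.

Mathematically, the gradient is the vector of partial derivatives of a function with respect to each of its inputs, such as each weight in a network.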

If a slope is steep:

- The gradient is large

If the surface becomes flat:

- The gradient becomes small

Neural networks use gradients to determine how weights should change during training.
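A gradient can also be estimated numerically by nudging each input slightly and measuring how much the output changes. The sketch below uses central finite differences on a hypothetical two-weight bowl, f(w1, w2) = w1² + 10·w2²; the helper names are illustrative. Far from the bottom the gradient is large, and near the bottom it becomes small.

```python
def numerical_gradient(f, params, h=1e-6):
    """Estimate each partial derivative of f at params with central differences."""
    grad = []
    for i in range(len(params)):
        plus = list(params)
        minus = list(params)
        plus[i] += h
        minus[i] -= h
        grad.append((f(plus) - f(minus)) / (2 * h))
    return grad


def bowl(p):
    # f(w1, w2) = w1**2 + 10 * w2**2: steeper along w2 than along w1
    return p[0] ** 2 + 10 * p[1] ** 2


print(numerical_gradient(bowl, [1.0, 1.0]))    # roughly [2, 20]: steep slope
print(numerical_gradient(bowl, [0.01, 0.01]))  # roughly [0.02, 0.2]: nearly flat
```

Deep learning frameworks compute gradients with backpropagation (automatic differentiation) instead, since finite differences would be far too slow for millions of weights; the numerical version is mainly useful for checking gradients by hand.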

This mathematical process powers nearly every modern AI system.

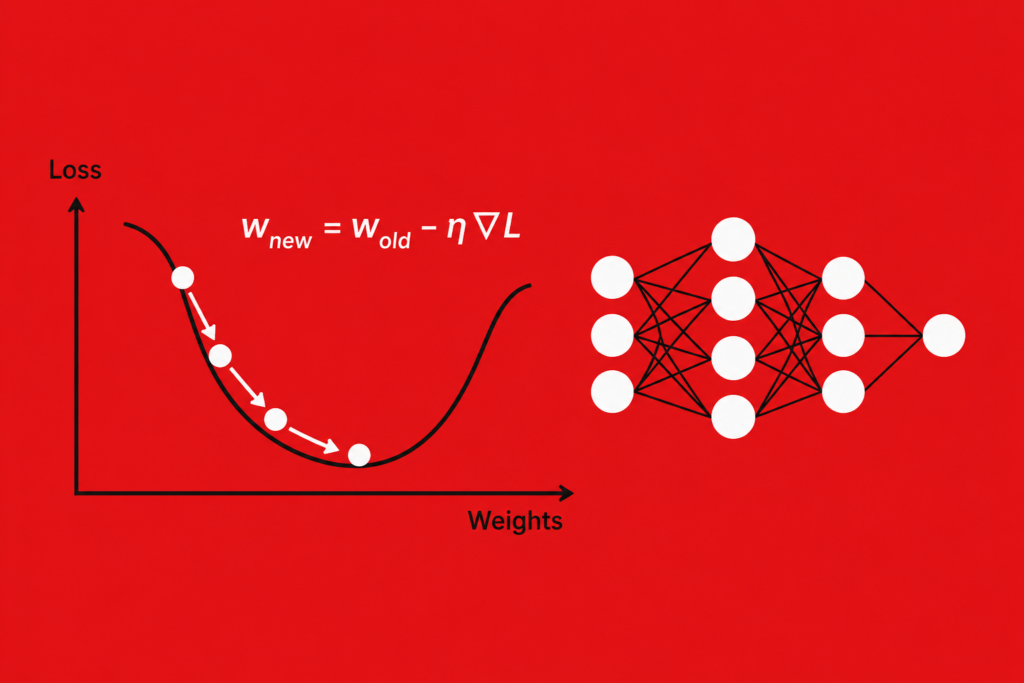

The Gradient Descent Formula

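The most important equation in gradient descent is the weight update rule:

$$w \leftarrow w - \eta \frac{\partial L}{\partial w}$$

Where:

- $w$ = network weight

- $\eta$ = learning rate

- $\frac{\partial L}{\partial w}$ = gradient of the loss $L$ with respect to $w$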

This formula updates weights step by step.

The update moves in the opposite direction of the gradient because the gradient points uphill, toward increasing error.

This process repeats thousands or millions of times during model training.
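The update rule can be sketched on the smallest possible model: a single weight w and bias b fitted to hypothetical, noise-free data generated from y = 2x + 1, with Mean Squared Error as the loss. The learning rate of 0.05 and the 2,000 steps are assumptions chosen for this toy problem, not recommended settings.

```python
# Hypothetical toy data generated from y = 2x + 1 (no noise)
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = 0.0, 0.0  # weight and bias, starting from zero
learning_rate = 0.05
n = len(xs)

for _ in range(2000):
    # Gradients of the mean squared error with respect to w and b
    errors = [(w * x + b) - y for x, y in zip(xs, ys)]
    grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
    grad_b = 2 / n * sum(errors)
    # Update rule: move each parameter against its gradient
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print(round(w, 3), round(b, 3))  # 2.0 1.0
```

Because the gradients use the whole dataset at every step, this is batch gradient descent in the terms used later in this article.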

Learning Rate and Step Size

A critical concept in gradient descent is the learning rate.

The learning rate controls how large each update step becomes.

Small Learning Rate

- Stable learning

- Slower convergence

Large Learning Rate

- Faster updates

- Risk of overshooting

Choosing a suitable learning rate is extremely important for neural network training.

A learning rate that is too large can make the loss oscillate or diverge, while one that is too small can make training impractically slow.
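The effect of the step size is easy to see on the bowl f(x) = x², whose derivative is 2x. In this hypothetical experiment (the function name and settings are illustrative), a learning rate of 0.01 barely moves x in 20 steps, 0.4 converges almost to zero, and 1.1 overshoots so far that each step lands farther from the minimum than the last.

```python
def descend_parabola(learning_rate, x0=1.0, steps=20):
    """Run gradient descent on f(x) = x**2 (derivative 2x) and return the final x."""
    x = x0
    for _ in range(steps):
        x -= learning_rate * 2 * x
    return x


for lr in (0.01, 0.4, 1.1):
    print(f"learning rate {lr}: final x = {descend_parabola(lr):.4g}")
```

For this particular function, any learning rate above 1.0 diverges, because each update multiplies x by (1 - 2 × learning rate), whose magnitude then exceeds 1.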

Types of Gradient Descent

Gradient descent comes in several variants that differ in how much training data is used to compute each update.

Batch Gradient Descent

Uses the entire dataset during each update.

Advantages:

- Stable learning

- Accurate gradients

Disadvantages:

- Slow on large datasets

Stochastic Gradient Descent (SGD)

Updates weights using one training example at a time.

Advantages:

- Faster learning

- Lower memory use

Disadvantages:

- Noisy optimization

Mini-Batch Gradient Descent

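Uses small groups of training examples (mini-batches) for each update.

This became the most popular method in deep learning because it balances the stability of batch updates with the speed of SGD and makes efficient use of parallel hardware such as GPUs.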

The rise of SGD and mini-batch optimization transformed modern AI systems.
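The three variants differ only in how many examples feed each update, so a single sketch can cover all of them. The hypothetical function below fits y ≈ w·x + b with Mean Squared Error: setting batch_size to the dataset size gives batch gradient descent, 1 gives SGD, and values in between give mini-batch gradient descent. The data, learning rate, and epoch count are illustrative assumptions.

```python
import random


def minibatch_sgd(xs, ys, batch_size, learning_rate=0.02, epochs=1000, seed=0):
    """Fit y ~ w*x + b by gradient descent on the MSE.

    batch_size=len(xs) is batch gradient descent, batch_size=1 is SGD,
    and anything in between is mini-batch gradient descent.
    """
    rng = random.Random(seed)
    indices = list(range(len(xs)))
    w, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(indices)  # visit examples in a new random order each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            m = len(batch)
            errors = [(w * xs[i] + b) - ys[i] for i in batch]
            grad_w = 2 / m * sum(e * xs[i] for e, i in zip(errors, batch))
            grad_b = 2 / m * sum(errors)
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


# Hypothetical noise-free data from y = 2x + 1
xs = [x / 10 for x in range(50)]
ys = [2 * x + 1 for x in xs]
for size in (len(xs), 1, 8):
    w, b = minibatch_sgd(xs, ys, batch_size=size)
    print(f"batch_size={size}: w={w:.3f}, b={b:.3f}")
```

On this noise-free data all three settings should end up close to w = 2 and b = 1; they differ in how many updates they make per pass over the data and how noisy each update is.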

Backpropagation and Gradient Descent

The connection between gradient descent and backpropagation is extremely important.

Backpropagation computes gradients.

Gradient descent uses those gradients to update weights.


Without backpropagation, gradient descent could not train deep neural networks effectively.

Together, these methods power modern AI learning.
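The division of labor between the two algorithms shows up clearly on a tiny network with one hidden unit, y = w2 · tanh(w1 · x), trained on a single hypothetical example with squared-error loss. The backward pass below applies the chain rule by hand, which is what backpropagation automates for networks with millions of weights; the gradient descent loop then consumes those gradients. All names and values are illustrative.

```python
import math


def forward_backward(w1, w2, x, target):
    """Tiny network y = w2 * tanh(w1 * x) with squared-error loss.

    Returns the loss and its gradients with respect to w1 and w2,
    computed with the chain rule (backpropagation by hand).
    """
    # Forward pass: keep intermediate values for the backward pass
    z = w1 * x
    h = math.tanh(z)
    y = w2 * h
    loss = (y - target) ** 2

    # Backward pass: apply the chain rule from the loss back to each weight
    dloss_dy = 2 * (y - target)
    dloss_dw2 = dloss_dy * h
    dloss_dh = dloss_dy * w2
    dloss_dz = dloss_dh * (1 - h ** 2)  # derivative of tanh(z) is 1 - tanh(z)**2
    dloss_dw1 = dloss_dz * x
    return loss, dloss_dw1, dloss_dw2


# Gradient descent uses the gradients that backpropagation produced
w1, w2 = 0.5, 0.5
x, target = 1.0, 0.8
learning_rate = 0.1
for _ in range(200):
    loss, g1, g2 = forward_backward(w1, w2, x, target)
    w1 -= learning_rate * g1
    w2 -= learning_rate * g2
print(f"final loss: {loss:.6f}")
```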

Global Minima vs Local Minima

One major challenge in gradient descent involves the shape of the optimization landscape.

The loss surfaces of neural networks often contain many valleys and hills.

Global Minimum

The absolute lowest error point.

Local Minimum

A smaller valley that is not the best overall solution.

Gradient descent sometimes becomes trapped inside local minima.

Researchers developed advanced optimization methods to reduce this problem.
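The trap is easy to reproduce on a one-dimensional toy function, f(x) = x⁴ - 3x² + x, which has a deep valley near x ≈ -1.30 and a shallower one near x ≈ 1.13. This function is an illustrative assumption, not a real neural network loss: plain gradient descent started at +2 settles in the shallower local minimum, while the same algorithm started at -2 finds the global minimum.

```python
def f(x):
    # A bumpy one-dimensional "loss surface" with two valleys
    return x ** 4 - 3 * x ** 2 + x


def df(x):
    # Derivative of f
    return 4 * x ** 3 - 6 * x + 1


def descend(x, learning_rate=0.01, steps=1000):
    """Plain gradient descent from starting point x."""
    for _ in range(steps):
        x -= learning_rate * df(x)
    return x


for start in (-2.0, 2.0):
    end = descend(start)
    print(f"start {start:+.1f} -> x = {end:.3f}, loss = {f(end):.3f}")
```

Both runs stop where the slope is zero, so the algorithm alone cannot tell that the valley found from +2 is not the deepest one.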

Momentum and Advanced Optimization

Modern AI systems improve on basic gradient descent using advanced techniques.

Momentum

Momentum keeps a decaying sum of past gradients, so updates continue moving smoothly in a consistent direction instead of changing course abruptly.

It reduces oscillation during learning.
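Here is a minimal sketch of classical (heavy-ball) momentum, compared with plain gradient descent on a hypothetical elongated bowl where one direction is 50 times steeper than the other. The learning rate of 0.03, momentum coefficient of 0.9, and step count are illustrative assumptions. Plain gradient descent is limited by the steep direction and crawls along the gentle one; momentum builds up speed along the gentle direction and ends with a much lower loss.

```python
def grad(p):
    # Elongated bowl f(x, y) = 0.5 * (x**2 + 50 * y**2): gentle along x, steep along y
    x, y = p
    return (x, 50 * y)


def loss(p):
    x, y = p
    return 0.5 * (x ** 2 + 50 * y ** 2)


def optimize(beta, learning_rate=0.03, steps=300):
    """Gradient descent with classical (heavy-ball) momentum; beta=0 is plain GD."""
    p = [1.0, 1.0]
    velocity = [0.0, 0.0]
    for _ in range(steps):
        g = grad(p)
        for i in range(2):
            velocity[i] = beta * velocity[i] + g[i]  # decaying sum of past gradients
            p[i] -= learning_rate * velocity[i]
    return p


print("plain GD:", loss(optimize(beta=0.0)))
print("momentum:", loss(optimize(beta=0.9)))
```

Libraries implement several variants of momentum (for example, Nesterov momentum), so this should be read as one common formulation rather than the only one.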

Adam Optimizer

Adam combines momentum with adaptive, per-parameter learning rates.

It became one of the most popular optimizers in deep learning.
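The Adam update can be sketched for a single parameter as follows. The decay rates beta1 = 0.9 and beta2 = 0.999 and eps = 1e-8 are the defaults proposed by Kingma and Ba; the learning rate of 0.1 is larger than the usual default of 0.001 and is an assumption chosen so that this toy problem, minimizing (x - 3)², converges quickly. The function name is illustrative.

```python
import math


def adam(grad, x0, learning_rate=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=1000):
    """Minimize a one-variable function with the Adam update rule."""
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1 - beta1) * g      # moving average of gradients (momentum)
        v = beta2 * v + (1 - beta2) * g * g  # moving average of squared gradients
        m_hat = m / (1 - beta1 ** t)         # bias corrections for the zero start
        v_hat = v / (1 - beta2 ** t)
        x -= learning_rate * m_hat / (math.sqrt(v_hat) + eps)
    return x


print(adam(lambda x: 2 * (x - 3), x0=0.0))
```

The division by the square root of v_hat is what makes the step size adaptive: parameters with consistently large gradients take proportionally smaller steps.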

RMSProp

Adjusts each parameter's learning rate dynamically by dividing its updates by a running average of recent squared gradients.
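A minimal one-parameter sketch of RMSProp on the toy problem of minimizing (x - 3)² looks like this. The decay rate of 0.9, learning rate of 0.01, step count, and function name are illustrative assumptions. Because each step is scaled by the recent gradient size, RMSProp with a constant learning rate can end up hovering close to the minimum rather than settling exactly on it.

```python
import math


def rmsprop(grad, x0, learning_rate=0.01, rho=0.9, eps=1e-8, steps=1000):
    """Minimize a one-variable function with the RMSProp update rule."""
    x, avg_sq = x0, 0.0
    for _ in range(steps):
        g = grad(x)
        avg_sq = rho * avg_sq + (1 - rho) * g * g           # running average of squared gradients
        x -= learning_rate * g / (math.sqrt(avg_sq) + eps)  # step scaled by recent gradient size
    return x


print(rmsprop(lambda x: 2 * (x - 3), x0=0.0))
```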

These improvements dramatically increased neural training efficiency.

Gradient Descent and Deep Learning (2000 – 2026)

Modern deep learning depends heavily on gradient descent.

The algorithm powers:

- Convolutional neural networks

- Recurrent neural networks

- Transformers

- Generative AI systems


Modern GPUs accelerated gradient calculations dramatically.

This computational power helped launch the deep learning revolution.

Why Gradient Descent Matters

The importance of gradient descent can hardly be overstated.

The algorithm allows neural networks to:

- Learn patterns

- Reduce mistakes

- Improve predictions

- Optimize performance

Without gradient-based optimization:

- Deep learning as we know it would not be practical

- Large neural networks could not be trained efficiently

- Modern AI would look very different

It became one of the most important mathematical ideas in machine learning history.

Real World Applications

The influence of gradient descent can now be seen everywhere.

Applications include:

- Speech recognition

- Medical AI systems

- Self-driving vehicles

- Recommendation systems

- Chatbots

- AI image generation

Even many free AI tools rely heavily on gradient-based optimization.

The algorithm quietly powers much of the modern digital world.

Gradient Descent and the Future of AI

The future of AI still depends heavily on gradient descent.

Researchers continue to improve optimization, aiming for:

- Faster training

- Better convergence

- Lower computational cost

- Improved stability

New architectures and optimization strategies continue evolving rapidly.

However, gradient descent remains the mathematical heart of neural learning.

Frequently Asked Questions (FAQs)

What is gradient descent in AI?

Gradient descent is an optimization algorithm that reduces neural network error by updating weights step by step.

Why is gradient descent important?

It allows neural networks to learn patterns and improve predictions during training.

What is the role of the learning rate?

The learning rate controls how large each weight update becomes during optimization.

What is stochastic gradient descent?

SGD updates weights using one training example at a time instead of the full dataset. In practice, the term is also commonly used for mini-batch training.

Does deep learning still use gradient descent?

Yes. Nearly all modern deep learning systems rely on gradient descent optimization.

Conclusion

Gradient descent represents one of the greatest mathematical breakthroughs in the history of artificial intelligence. By allowing neural networks to reduce errors gradually through optimization, gradient descent transformed machine learning from a theoretical concept into a practical technology capable of powering modern AI systems.

From speech recognition and computer vision to generative AI and large language models, gradient descent remains the engine behind deep learning success. Combined with backpropagation, powerful GPUs, and large datasets, it helped launch the modern AI revolution.

Today, the legacy of gradient descent continues to shape the future of artificial intelligence, showing that mathematical optimization lies at the center of machine learning and neural intelligence.