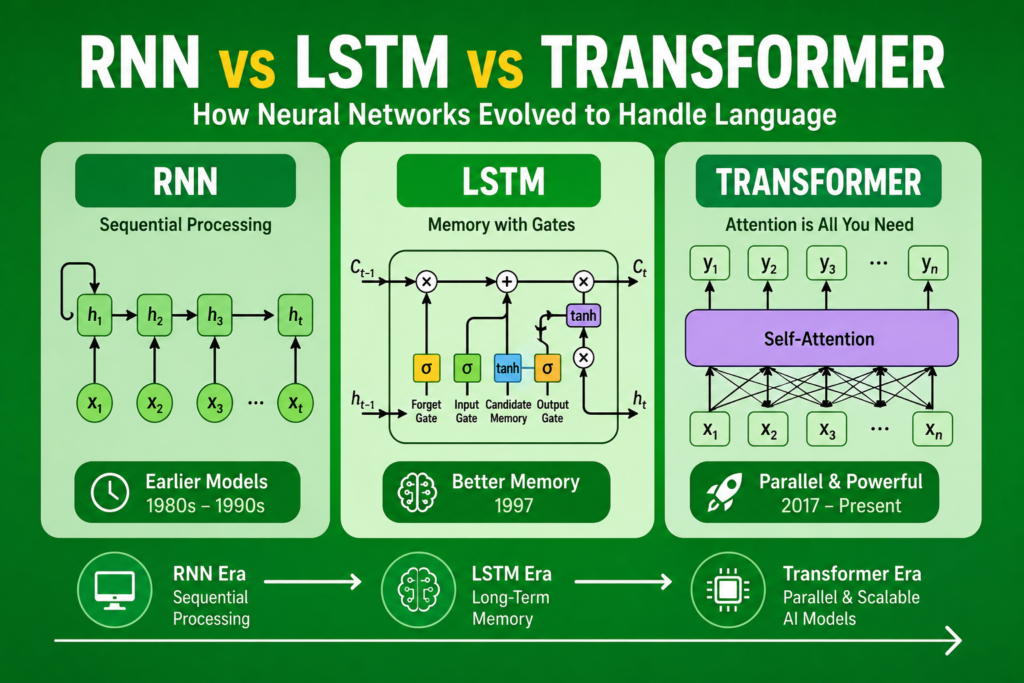

The progression from recurrent neural networks (RNNs) to Long Short-Term Memory networks (LSTMs) to transformers is one of the most important architectural evolutions in the history of artificial intelligence. Over several decades, researchers repeatedly redesigned neural network architectures to help machines process human language more effectively.

At first, AI systems struggled badly with memory and context.

Then recurrent neural networks appeared.

Later, Long Short-Term Memory networks solved major memory limitations.

Finally, transformers revolutionized language modeling completely.

Together, these architectures transformed:

- Natural language processing

- Machine translation

- AI chatbots

- Speech recognition

- Text generation

- Conversational AI

Modern systems such as ChatGPT, Google Translate, and advanced AI assistants all evolved from this sequence of breakthroughs.

In this article, we will explore how RNNs, LSTMs, and transformers evolved, how each architecture improved language understanding, and why transformers eventually came to dominate the field.

Neural Networks Before Language AI (1943 – 1980)

Before comparing RNNs, LSTMs, and transformers, we must first examine early neural network development.

The first artificial neuron model appeared in 1943 in the work of Warren McCulloch and Walter Pitts, which became foundational to later neural network research.

Later, Frank Rosenblatt introduced the perceptron in the late 1950s.

Although early neural systems recognized simple patterns, they lacked memory and contextual understanding.

Why Language Is Difficult for AI

One major reason these architectures kept evolving is the complexity of human language.

Language depends heavily on:

- Context

- Word order

- Sequential meaning

- Long-range dependencies

For example:

“The boy who played football yesterday scored today.”

Understanding the sentence requires remembering earlier words: to know who scored, a model must connect “scored” back to “The boy” several words earlier.

Traditional feedforward networks could not handle such sequential relationships effectively.

Researchers needed architectures capable of memory.

The Rise of Recurrent Neural Networks (1980 – 1995)

The first major breakthrough came through recurrent neural networks.

RNNs introduced feedback loops allowing information to persist over time.

Unlike traditional neural systems, recurrent networks processed:

- Current input

- Previous hidden state

- Temporal dependencies

This gave the network a primitive form of memory.

How RNNs Work

To compare the three architectures, we must first examine how recurrent neural networks operate.

RNNs process sequences step-by-step.

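A hidden state stores previous information. In its standard form, the update is:

$$h_t = \tanh(W_h h_{t-1} + W_x x_t + b)$$

Where:

- $h_t$ = current hidden state

- $h_{t-1}$ = previous hidden state

- $x_t$ = current input

- $W_h$, $W_x$, $b$ = learned weight matrices and bias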

This feedback mechanism allowed AI systems to remember earlier sequence information.
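The step-by-step processing described above can be sketched in a few lines of plain Python. This is a minimal illustration, assuming the common tanh update `h_t = tanh(W_h h_{t-1} + W_x x_t + b)`; the function names `rnn_step` and `run_rnn` and the toy weights are hypothetical and chosen by hand rather than trained.

```python
import math

def rnn_step(h_prev, x_t, W_h, W_x, b):
    """One recurrent step: h_t = tanh(W_h @ h_prev + W_x @ x_t + b)."""
    h_t = []
    for i in range(len(b)):
        total = b[i]
        total += sum(W_h[i][j] * h_prev[j] for j in range(len(h_prev)))
        total += sum(W_x[i][k] * x_t[k] for k in range(len(x_t)))
        h_t.append(math.tanh(total))
    return h_t

def run_rnn(inputs, W_h, W_x, b):
    """Feed a sequence through the cell, carrying the hidden state forward."""
    h = [0.0] * len(b)  # initial hidden state: all zeros
    states = []
    for x_t in inputs:
        h = rnn_step(h, x_t, W_h, W_x, b)
        states.append(h)
    return states

# Toy, hand-picked weights (hypothetical values, not trained).
W_h = [[0.5, 0.0], [0.0, 0.5]]
W_x = [[1.0], [-1.0]]
b = [0.0, 0.0]

# A single input "pulse" followed by two silent steps.
sequence = [[1.0], [0.0], [0.0]]
states = run_rnn(sequence, W_h, W_x, b)
for t, h in enumerate(states):
    print(t, h)
```

Even though the input is zero at the second and third steps, the hidden state stays non-zero: the pulse from the first step is still "remembered", although it fades at every step.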

Problems With RNNs

Although revolutionary, RNNs created major limitations.

One serious limitation was the vanishing gradient problem.

During training, the gradient is multiplied by an extra factor at every time step, so across long sequences it can shrink toward zero.

As a result, RNNs struggled to remember information over long distances.

This created problems for:

- Long sentences

- Translation tasks

- Speech processing

- Sequential prediction

Researchers needed a better architecture.
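The shrinking gradient can be seen numerically. The sketch below assumes a deliberately tiny scalar RNN, `h_t = tanh(w * h_{t-1})`, with a hypothetical weight `w = 0.9`; it applies the chain rule across time steps to compute how much the final state depends on the initial one.

```python
import math

def gradient_through_time(w, h0, steps):
    """Return d h_T / d h_0 for the scalar RNN h_t = tanh(w * h_{t-1})."""
    h = h0
    grad = 1.0
    for _ in range(steps):
        h = math.tanh(w * h)
        # Chain rule: d tanh(w * h_prev) / d h_prev = w * (1 - tanh(...)^2)
        grad *= w * (1.0 - h * h)
    return grad

for steps in (1, 10, 50):
    print(steps, gradient_through_time(w=0.9, h0=0.5, steps=steps))
```

Each step multiplies the gradient by a factor smaller than 0.9 in this setup, so after 50 steps almost no learning signal reaches the start of the sequence.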

The Invention of LSTM (1997)

The next major breakthrough came through Long Short-Term Memory networks.

Sepp Hochreiter and Jürgen Schmidhuber introduced LSTMs in 1997.

Their invention largely overcame the long-term memory limitations of earlier recurrent networks.

How LSTMs Improved RNNs

LSTMs introduced specialized memory mechanisms.

These included:

- Forget gates

- Input gates

- Output gates

- Memory cells

These structures allowed networks to preserve important information while discarding irrelevant data.

This dramatically improved sequence learning.

The Mathematics of LSTM Memory

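The key equation behind LSTM memory is the cell-state update:

$$c_t = f_t \odot c_{t-1} + i_t \odot \tilde{c}_t$$

Where:

- $c_t$ = memory state

- $f_t$ = forget gate

- $i_t$ = input gate

- $\tilde{c}_t$ = candidate memory computed from the current input and previous hidden state

- $\odot$ = element-wise multiplication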

These mechanisms stabilized gradient flow and preserved long-term dependencies.
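To make the gating concrete, here is a minimal sketch of one LSTM step in plain Python, assuming the standard formulation in which each gate is a sigmoid of an affine function of the previous hidden state and the current input. The helper names (`affine`, `lstm_step`) and the hand-set parameters are hypothetical; the parameters are chosen so that the forget gate stays near 1 and the input gate near 0.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def affine(W, b, v):
    """Return W @ v + b for a list-of-lists matrix W."""
    return [sum(w * x for w, x in zip(row, v)) + b_i for row, b_i in zip(W, b)]

def lstm_step(x_t, h_prev, c_prev, params):
    """One LSTM step; params maps 'f', 'i', 'o', 'g' to (W, b) pairs."""
    z = h_prev + x_t  # list concatenation: [h_{t-1}, x_t]
    f = [sigmoid(a) for a in affine(*params["f"], z)]    # forget gate
    i = [sigmoid(a) for a in affine(*params["i"], z)]    # input gate
    o = [sigmoid(a) for a in affine(*params["o"], z)]    # output gate
    g = [math.tanh(a) for a in affine(*params["g"], z)]  # candidate memory
    # Memory update: keep part of the old cell state, add part of the candidate.
    c_t = [f_k * c_k + i_k * g_k for f_k, c_k, i_k, g_k in zip(f, c_prev, i, g)]
    h_t = [o_k * math.tanh(c_k) for o_k, c_k in zip(o, c_t)]
    return h_t, c_t

# Hand-set parameters (hypothetical): hidden size 1, input size 1.
params = {
    "f": ([[0.0, 0.0]], [10.0]),   # forget gate close to 1: keep old memory
    "i": ([[0.0, 0.0]], [-10.0]),  # input gate close to 0: ignore candidate
    "o": ([[0.0, 0.0]], [0.0]),
    "g": ([[0.0, 0.0]], [0.0]),
}

h, c = [0.0], [1.0]
for _ in range(100):
    h, c = lstm_step([0.0], h, c, params)
print(c)
```

With these settings the cell state is carried across 100 steps almost unchanged, which is the behaviour a plain tanh RNN struggles to achieve. In a trained network the gate values are learned rather than fixed.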

LSTMs and Natural Language Processing

Progress accelerated because LSTMs became highly effective for language tasks.

LSTMs improved:

- Machine translation

- Speech recognition

- Text generation

- Language prediction

- Sequence learning

This progress was closely tied to sequence-to-sequence models and neural speech recognition systems.

Many early AI translation systems depended heavily on LSTM architectures.

The Limits of LSTMs

Despite their success, LSTMs still faced limitations.

Problems included:

- Sequential processing speed

- Limited parallelization

- High computational cost

- Difficulty with extremely long contexts

Training large LSTM systems remained slow.

Researchers searched for a more scalable architecture.

The Transformer Revolution (2017)

The biggest breakthrough came in 2017.

Researchers at Google introduced transformers through the famous paper:

“Attention Is All You Need”

This architecture transformed natural language processing forever.

What Makes Transformers Different?

Transformers abandoned recurrence entirely.

Instead, they introduced:

- Self-attention

- Parallel processing

- Positional encoding

- Multi-head attention

This architectural shift dramatically improved training efficiency and scalability.

Understanding Self-Attention

The defining innovation of the transformer is self-attention.

Self-attention allows models to evaluate relationships between all words simultaneously.

Instead of processing words one-by-one like RNNs and LSTMs, transformers analyze complete sequences in parallel.

This improved:

- Context understanding

- Long-range dependencies

- Training speed

- Model scalability
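The idea of relating every word to every other word can be sketched as scaled dot-product attention. The version below is a simplified illustration in plain Python: it uses the toy token vectors directly as queries, keys, and values, whereas a real transformer would first apply learned projections and run several attention heads. The function names and example vectors are hypothetical.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def self_attention(Q, K, V):
    """Scaled dot-product attention over lists of vectors.

    Returns (outputs, weights); weights[i][j] is how strongly
    position i attends to position j.
    """
    d_k = len(K[0])
    outputs, weights = [], []
    for q in Q:
        scores = [dot(q, k) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        # Each output is a weighted average of all value vectors.
        out = [sum(w_j * v[c] for w_j, v in zip(w, V)) for c in range(len(V[0]))]
        weights.append(w)
        outputs.append(out)
    return outputs, weights

# Three toy token vectors (hypothetical values).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(X, X, X)
for row in weights:
    print([round(w, 3) for w in row])
```

No position depends on the result of another position, so the per-query loop here could run in any order. In practice the whole computation is written as a few matrix products, which is what makes it easy to parallelize.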

Positional Encoding

Since transformers process sequences in parallel, they require positional encoding.

Positional encoding helps models understand word order.

Without positional encoding, transformers could not distinguish sentence structure properly.

This became a critical innovation in modern language modeling.
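One concrete scheme is the sinusoidal encoding used in “Attention Is All You Need”, where each position receives a vector of sines and cosines at different frequencies. The sketch below assumes that scheme; other models use learned or relative position encodings instead, and the function name `positional_encoding` is hypothetical.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal positional encodings, one d_model-sized vector per position."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share the same frequency.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            # Even dimensions use sine, odd dimensions use cosine.
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

pe = positional_encoding(seq_len=4, d_model=6)
for pos, row in enumerate(pe):
    print(pos, [round(v, 3) for v in row])
```

Each row is added to the embedding of the token at that position, so identical words at different positions end up with different input vectors.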

Transformers and Large Language Models

Adoption accelerated dramatically because transformers made large language models practical.

Transformers became foundational to:

- BERT

- GPT

- Gemini

- Claude

- Modern chatbots

This breakthrough transformed generative AI completely.

Transformers vs RNNs and LSTMs

Several major differences separate RNNs, LSTMs, and transformers.

RNNs

- Simple recurrent memory

- Weak long-term learning

- Sequential processing

LSTMs

- Improved memory handling

- Better long-range learning

- Slower training

Transformers

- Self-attention mechanisms

- Parallel processing

- Massive scalability

Transformers eventually became dominant because of efficiency and contextual understanding.

GPU Computing and Transformers

Another important factor in the rise of transformers was GPU acceleration.

Transformers require enormous computational power.

GPUs enabled:

- Parallel matrix operations

- Large-scale model training

- Massive language learning

Without GPUs, training transformers at today's scale would likely be impractical.

Transformers and Modern AI Applications

Today, transformers power countless AI systems worldwide.

Applications include:

- Chatbots

- AI assistants

- Image generation

- Language translation

- Coding assistants

- Search engines

Modern AI development now revolves heavily around transformer architectures.

RNNs and LSTMs Still Matter

Although transformers dominate modern NLP, RNNs and LSTMs remain valuable.

They continue helping with:

- Time-series forecasting

- Embedded systems

- Audio modeling

- Lightweight AI applications

The earlier architectures still influence modern research deeply.

The Evolution of NLP

The complete story of these three architectures mirrors the evolution of natural language processing itself.

The progression looked like this:

- RNNs introduced memory

- LSTMs solved long-term dependencies

- Transformers enabled massive contextual learning

Each generation improved AI language understanding dramatically.

Modern Generative AI

The rise of generative AI builds directly on this lineage.

Modern systems now generate:

- Human-like text

- Images

- Code

- Speech

- Video

Even many free AI tools today rely heavily on transformer-based architectures, which built on lessons from earlier recurrent research.

Frequently Asked Questions (FAQs)

What is the difference between RNN and LSTM?

LSTMs improve RNN memory handling using gates and memory cells.

Why are transformers better than LSTMs?

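Transformers process sequences in parallel, which speeds up training, and self-attention lets them relate distant words directly instead of passing information through many recurrent steps.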

What is self-attention?

Self-attention helps transformers analyze relationships between all words simultaneously.

Are RNNs still used today?

Yes. RNNs remain useful for lightweight sequential tasks and forecasting.

What powers ChatGPT?

ChatGPT uses transformer-based neural network architectures.

Conclusion

The progression from RNNs to LSTMs to transformers represents one of the greatest evolutions in artificial intelligence history. From simple recurrent memory systems to advanced transformer architectures, neural networks gradually learned to handle language, context, and long-range relationships more effectively.

RNNs introduced sequence memory, LSTMs largely solved long-term dependency problems, and transformers revolutionized large-scale language understanding through self-attention and parallel processing.

Today, this legacy continues to power modern AI systems, chatbots, language models, speech recognition platforms, and generative artificial intelligence across the world.