The Hebb learning rule is one of the most influential ideas in the history of neuroscience and artificial intelligence. Long before modern deep learning systems, transformer models, and generative AI existed, Canadian psychologist Donald Hebb introduced a revolutionary theory explaining how learning happens inside the brain.

Today, the Hebb learning rule still influences neural networks, machine learning algorithms, cognitive science, and computational neuroscience. Modern AI systems rely heavily on ideas connected to neural adaptation, synaptic strength, and associative learning.

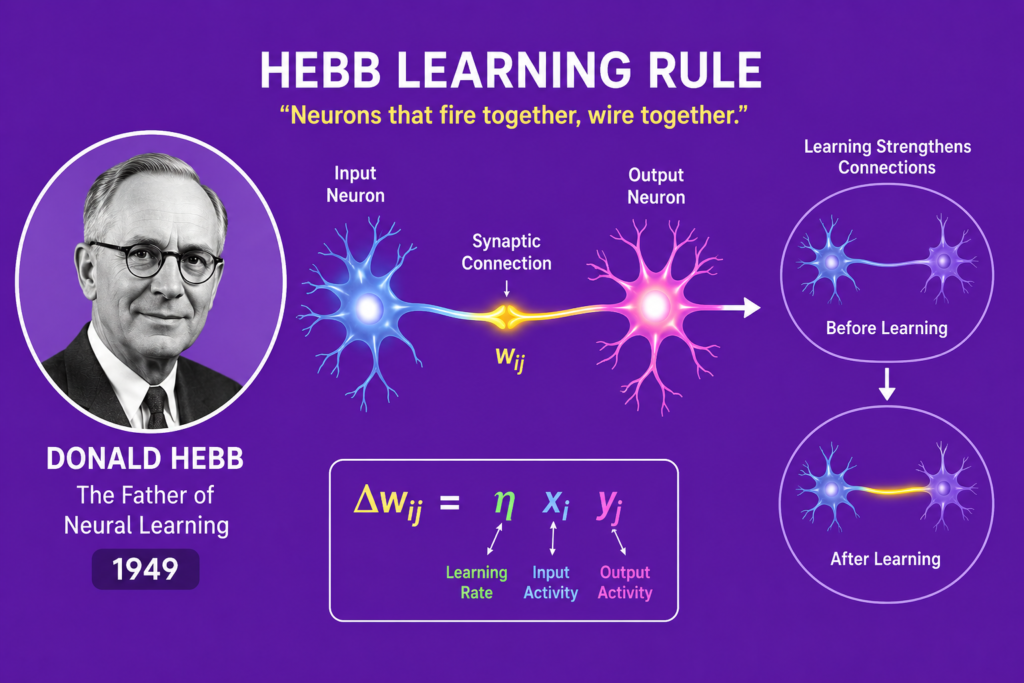

Donald Hebb’s principle is often summarized by a later paraphrase of his postulate:

“Neurons that fire together, wire together”

It became one of the most important concepts in the history of artificial intelligence.

The story of the Hebb learning rule combines behavioral psychology, neuroplasticity, biological learning, memory formation, and neural firing research. Hebb’s work helped scientists understand how experiences strengthen connections between neurons over time.

In this article, we will explore the history of the Hebb learning rule, Donald Hebb’s groundbreaking theory from 1949, its scientific foundations, its mathematical principles, and why neural networks still rely on Hebbian ideas today.

The Scientific World Before Hebb (1930 – 1949)

Before the Hebb learning rule was introduced, scientists struggled to understand how learning and memory actually worked inside the brain.

Researchers explored:

- Behavioral psychology

- Early neuroscience

- Neural signaling

- Reflex behavior

- Associative memory

- Brain function

However, there was no clear explanation for how experiences physically changed neural systems.

Scientists knew neurons communicated through electrical impulses, but they did not fully understand how repeated learning strengthened connections.

This mystery became one of the biggest challenges in early neuroscience.

The broader history of AI and machine learning would later depend heavily on answers to this problem.

Who Was Donald Hebb?

Donald Hebb was a Canadian psychologist and neuroscientist deeply interested in intelligence, behavior, and biological learning.

Hebb studied:

- Experimental psychology

- Neurobiology

- Learning systems

- Cognitive behavior

- Brain organization

He became fascinated by how memories form inside neural systems.

Hebb believed intelligence emerged from changing neural connections rather than fixed brain structures.

This revolutionary idea later became the foundation of the Hebb learning rule.

His research strongly influenced both neuroscience and artificial intelligence development.

The Organization of Behavior (1949)

The major breakthrough behind the Hebb learning rule came in 1949, when Donald Hebb published his famous book:

The Organization of Behavior

This book introduced a revolutionary explanation for learning and memory formation.

Hebb proposed that repeated neural activity strengthens synaptic connections between neurons.

The idea became known as Hebbian learning.

The famous principle:

“Neurons that fire together, wire together”

summarizes the core concept of the Hebb learning rule.

More precisely, Hebb argued that when one neuron repeatedly takes part in firing another, the connection from the first neuron to the second becomes more effective.

This idea transformed neuroscience forever.

How the Hebb Learning Rule Works

The Hebb learning rule explains learning through changes in synaptic weight.

When two neurons activate together repeatedly, the connection between them strengthens.

Mathematically, Hebbian learning can be expressed as:

$$\Delta w = \eta \, x \, y$$

Where:

- $\Delta w$ = change in synaptic weight

- $\eta$ = learning rate

- $x$ = input neuron activity

- $y$ = output neuron activity

This equation became one of the most important formulas in neural network research.

The Hebb learning rule introduced one of the earliest forms of unsupervised learning theory in artificial intelligence.

Unlike supervised systems, Hebbian learning does not require labeled training data.

Instead, learning emerges naturally through repeated neural activity.
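The update described above, in which the weight change is the learning rate times the input activity times the output activity, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names `hebbian_update` and `neuron_output`, the linear neuron model, the starting weights, and the learning rate of 0.1 are all hypothetical choices made for the example, not part of Hebb's original work.

```python
def neuron_output(weights, x):
    """Output of a simple linear neuron: the weighted sum of its inputs (an assumption)."""
    return sum(w * xi for w, xi in zip(weights, x))


def hebbian_update(weights, x, y, eta=0.1):
    """Return new weights after one Hebbian step: w_i <- w_i + eta * x_i * y."""
    return [w + eta * xi * y for w, xi in zip(weights, x)]


# Illustrative starting point: three inputs with equal small weights.
weights = [0.1, 0.1, 0.1]

# Inputs 0 and 1 are active together; input 2 stays silent.
pattern = [1.0, 1.0, 0.0]

# Present the same pattern repeatedly, with no labels or error signal.
for step in range(5):
    y = neuron_output(weights, pattern)
    weights = hebbian_update(weights, pattern, y, eta=0.1)

print(weights)
```

After a few repetitions the weights of the two co-active inputs have grown, while the weight of the silent input is unchanged. Note that this plain form of the rule only ever strengthens connections between co-active units, so weights keep growing with repetition; practical variants usually add some form of normalization or decay.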

Cell Assemblies and Memory Formation

One of Hebb’s most important contributions involved the concept of cell assemblies.

According to the Hebb learning rule, groups of neurons repeatedly activated together form stable neural patterns called cell assemblies.

These assemblies became central to:

- Associative memory

- Memory formation

- Cognitive processing

- Learning mechanisms

Hebb believed memories were stored through strengthened neural connections rather than isolated brain cells.

This idea strongly influenced modern neuroscience and cognitive modeling.

The concept of neuroplasticity later supported many of Hebb’s theories.
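One way to get a feel for the idea that memories live in strengthened connections is a toy associative memory. The sketch below is an assumption-laden illustration, not Hebb's own model: it uses units with values of +1 or -1, stores one pattern by strengthening connections between units that are active together, and then recovers the full pattern from a damaged cue. The names `train_hebbian` and `recall` are hypothetical.

```python
def train_hebbian(patterns):
    """Build a weight matrix by adding p[i] * p[j] for every stored pattern p."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    w[i][j] += p[i] * p[j]
    return w


def recall(w, cue, steps=5):
    """Repeatedly update every unit from the weighted sum of the others."""
    state = list(cue)
    n = len(state)
    for _ in range(steps):
        state = [
            1 if sum(w[i][j] * state[j] for j in range(n)) >= 0 else -1
            for i in range(n)
        ]
    return state


stored = [1, 1, 1, -1, -1, -1]   # the "assembly" to remember
w = train_hebbian([stored])

cue = [1, 1, -1, -1, -1, -1]     # same pattern with one unit flipped
completed = recall(w, cue)

print(completed == stored)
```

In this small example the network restores the flipped unit, which mirrors the claim that a group of mutually strengthened neurons can reactivate as a whole from a partial trigger.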

Biological Inspiration Behind Neural Networks

The Hebb learning rule became critically important in artificial intelligence because it provided biological inspiration for machine learning systems.

Researchers developing artificial neural networks realized that AI systems might learn similarly to biological brains.

The theory strongly influenced:

- Synaptic adaptation

- Weight optimization

- Neural learning

- Pattern recognition

This connection later became central to discussions comparing neural networks with the human brain.

Modern machine learning still relies heavily on concepts related to neural reinforcement and adaptive learning.

Hebbian Learning and Artificial Intelligence

The influence of the Hebb learning rule spread throughout computer science and AI research.

Scientists began exploring how artificial neurons could imitate biological learning processes.

Researchers realized that adaptive neural systems could potentially learn from data automatically.

The Hebbian approach inspired later neural architectures and machine learning algorithms.

Without Hebb’s theories, modern neural networks might have developed very differently.

Connection to the Perceptron Era (1957 – 1969)

The Hebb learning rule strongly influenced Frank Rosenblatt’s perceptron research in the late 1950s.

Rosenblatt developed systems capable of adjusting weights based on experience.

The perceptron represented one of the first practical implementations of adaptive neural learning.

Hebbian ideas helped inspire machine learning systems capable of recognizing patterns and improving through training.

The perceptron era marked a major milestone in neural computation history.
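To make "adjusting weights based on experience" concrete, here is a minimal sketch of the classic perceptron learning rule trained on the logical AND function. The function names, the choice of AND as the task, the learning rate of 1.0, and the 20 training passes are assumptions made for illustration. Unlike a purely Hebbian update, this rule changes weights only when the prediction is wrong.

```python
def predict(weights, bias, x):
    """Threshold unit: output 1 if the weighted sum is positive, else 0."""
    s = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1 if s > 0 else 0


def train_perceptron(samples, eta=1.0, epochs=20):
    """Perceptron rule: w <- w + eta * (target - prediction) * x."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            weights = [w + eta * error * xi for w, xi in zip(weights, x)]
            bias += eta * error
    return weights, bias


# Training data for logical AND: ((input_1, input_2), target)
and_gate = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

weights, bias = train_perceptron(and_gate)

for x, target in and_gate:
    print(x, predict(weights, bias, x), target)
```

Because AND is linearly separable, the weights settle after a handful of passes and the unit reproduces all four targets.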

Hebbian Learning vs Backpropagation

The Hebb learning rule differs significantly from modern backpropagation systems.

Hebbian Learning

- Local learning mechanism

- Based on neuron co-activation

- Unsupervised learning

- Biologically inspired

Backpropagation

- Error-based optimization

- Gradient calculations

- Supervised learning

- Mathematical optimization

Backpropagation is closely tied to gradient descent, the optimization method it relies on to adjust weights.

Although modern AI uses backpropagation extensively, Hebbian principles remain foundational in neuroscience and neural learning theory.
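The contrast can be written side by side for a single linear neuron. This is a simplified sketch: the target symbol $t$ and the squared-error loss $L$ are assumptions introduced for illustration, and full backpropagation applies the same gradient idea across many layers.

$$\text{Hebbian:} \quad \Delta w_i = \eta \, x_i \, y$$

$$\text{Gradient descent:} \quad \Delta w_i = -\eta \, \frac{\partial L}{\partial w_i} = \eta \, (t - y) \, x_i, \qquad L = \tfrac{1}{2}(t - y)^2, \quad y = \sum_i w_i x_i$$

Here $\eta$ is the learning rate, $x_i$ is the activity of input $i$, and $y$ is the output activity. The Hebbian update uses only quantities available at the connection itself, while the gradient update needs an error signal $(t - y)$ that depends on a desired output.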

Hebb’s Influence on Deep Learning

The influence of the Hebb learning rule remains visible even in modern deep learning systems.

Today’s neural networks still rely on:

- Synaptic weights

- Neural adaptation

- Pattern reinforcement

- Learning mechanisms

Scientists such as Geoffrey Hinton, Yann LeCun, and Yoshua Bengio built advanced AI systems upon principles originally inspired by biological learning.

Modern AI breakthroughs in speech recognition, image analysis, and generative systems still reflect Hebbian concepts indirectly.

The Hebb learning rule remains one of the hidden foundations of modern machine intelligence.

Hebbian Learning in Modern Neuroscience

Modern neuroscience continues to validate many ideas connected to the Hebb learning rule.

Research into long-term potentiation showed that repeated neural activity can physically strengthen synaptic connections.

Scientists discovered evidence supporting Hebb’s theories about:

- Neuroplasticity

- Synaptic reinforcement

- Associative learning

- Neural memory storage

Hebb’s ideas helped bridge the gap between biology and artificial intelligence.

The relationship between biological learning and machine learning continues to grow stronger today.

AI Applications Influenced by Hebbian Principles

The Hebb learning rule influenced many modern AI technologies.

Applications include:

- Speech recognition systems

- Computer vision

- Robotics

- Pattern classification

- Recommendation systems

The theory also inspired research into:

- Spiking neural networks

- Neuromorphic computing

- Brain-inspired AI

Even many of the best free AI tools rely on neural systems that trace back, at least indirectly, to Hebbian principles.

Hebb’s work continues to shape AI innovation worldwide.

Why Donald Hebb Still Matters Today

Donald Hebb’s ideas remain relevant because they addressed one of the most important scientific questions:

How does learning happen?

The Hebb learning rule provided one of the first biologically plausible explanations for adaptive learning and memory formation.

Hebb’s work influenced:

- Neuroscience

- Psychology

- Artificial intelligence

- Cognitive science

- Machine learning

His contributions became foundational to both brain science and AI development.

Today, Hebb is recognized as one of the greatest pioneers in neural learning research.

Frequently Asked Questions (FAQs)

What is the Hebb learning rule?

The Hebb learning rule is a theory stating that neural connections strengthen when neurons activate together repeatedly.

Who created Hebbian learning?

Donald Hebb introduced Hebbian learning in his 1949 book The Organization of Behavior.

Why is Hebbian learning important?

Hebbian learning helped explain memory formation, neural adaptation, and biological learning processes.

Is Hebbian learning still used in AI?

Yes. Many neural learning concepts in AI remain influenced by Hebbian principles and adaptive weight systems.

What does “neurons that fire together wire together” mean?

It means repeated simultaneous neural activity strengthens synaptic connections between neurons over time.

Conclusion

The Hebb learning rule remains one of the most powerful and influential theories in the history of neuroscience and artificial intelligence. Donald Hebb’s 1949 ideas about synaptic strengthening and associative learning transformed how scientists understand memory, intelligence, and neural adaptation.

From early neuroscience to modern deep learning systems, Hebbian principles continue to influence machine learning, neural networks, and brain-inspired computing. The concepts of synaptic weight adjustment, neuroplasticity, and associative memory remain central to both biological and artificial intelligence research.

Today, the legacy of the Hebb learning rule continues to shape modern AI systems, showing that Donald Hebb’s revolutionary theory was far ahead of its time.