The history of the multilayer perceptron marks one of the most important turning points in artificial intelligence and machine learning. Modern deep learning systems, image recognition models, and generative AI all depend on a simple but revolutionary idea:

Neural networks become far more powerful when they contain multiple layers.

Early neural systems could only solve simple tasks. However, once researchers discovered how to stack layers together and train them effectively, artificial intelligence entered an entirely new era.

The evolution of the multilayer perceptron changed neural networks from basic linear classifiers into powerful systems capable of learning complex patterns in speech, images, and language.

Today, multilayer perceptrons remain foundational to:

- Deep learning

- Feedforward neural networks

- AI optimization

- Neural architectures

- Pattern recognition

In this article, we will explore the history of the multilayer perceptron: how neural networks gained hidden layers, why multi-layer systems became revolutionary, and how they eventually launched the modern AI revolution.

Early Neural Networks Before Layers (1943 – 1957)

Before understanding multilayer perceptron history, we must examine the earliest neural systems.

The first artificial neuron model appeared in 1943 through Warren McCulloch and Walter Pitts.

Their mathematical neuron used binary signals and logical operations inspired by biological neurons.
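To make the idea concrete, here is a minimal sketch of a McCulloch-Pitts style unit. The function names and the threshold values are illustrative assumptions, not taken from the original 1943 paper.

```python
def mcp_neuron(inputs, threshold):
    """Fire (return 1) when the number of active binary inputs reaches the threshold."""
    return 1 if sum(inputs) >= threshold else 0

def logical_and(a, b):
    # Both inputs must be active for the unit to fire.
    return mcp_neuron([a, b], threshold=2)

def logical_or(a, b):
    # A single active input is enough for the unit to fire.
    return mcp_neuron([a, b], threshold=1)

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

Note that nothing here is learned: the threshold is fixed by hand, which is one reason these early units were so limited.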

Later, in 1949, Donald Hebb introduced adaptive neural learning through the Hebbian learning rule.

These discoveries created the foundation for future neural systems.

However, early networks still remained extremely limited.

The Single Layer Perceptron Era (1957 – 1969)

The next major step in multilayer perceptron history came through Frank Rosenblatt.

In 1957, Frank Rosenblatt invented the perceptron.

The perceptron worked as a single-layer neural network capable of simple pattern recognition.

It used:

- Weighted sums

- Input vectors

- Output predictions

- Binary classification

The system learned by adjusting weights during training.
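The weight adjustment can be sketched with the classic perceptron learning rule. This is a simplified illustration on the logical AND task, assuming a learning rate of 1.0 and zero initial weights; it is not a reconstruction of Rosenblatt's original hardware.

```python
def predict(weights, bias, x):
    """Weighted sum followed by a hard threshold (binary classification)."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0

def train_perceptron(samples, epochs=20, lr=1.0):
    """Nudge the weights toward the target whenever a prediction is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias

# Logical AND is linearly separable, so the rule converges on it.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA)
and_predictions = [predict(weights, bias, x) for x, _ in AND_DATA]
print(weights, bias, and_predictions)
```

The update only changes the weights when the prediction is wrong, which is why training stops changing anything once every sample is classified correctly.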

At the time, researchers believed perceptrons might eventually create intelligent machines.

However, single-layer systems soon encountered major limitations.

The Problem With Single Layer Networks

A major turning point in multilayer perceptron history involved the weaknesses of single-layer perceptrons.

Single-layer systems could only solve linearly separable problems.

They failed on more complex tasks involving non-linear relationships.

One famous issue involved the XOR problem.

The perceptron could not correctly solve XOR classification because the data was not linearly separable.
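One rough way to see this is to search over many candidate weight settings for a single threshold unit and count how many XOR cases each one gets right. The grid below is an arbitrary illustrative choice, and a finite search is a demonstration rather than a proof.

```python
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

def predict(w1, w2, bias, x):
    """A single-layer unit: one weighted sum and one hard threshold."""
    return 1 if w1 * x[0] + w2 * x[1] + bias > 0 else 0

# Candidate values from -2.0 to 2.0 in steps of 0.25.
grid = [k / 4 for k in range(-8, 9)]

best_correct = 0
for w1 in grid:
    for w2 in grid:
        for bias in grid:
            correct = sum(predict(w1, w2, bias, x) == t for x, t in XOR_DATA)
            best_correct = max(best_correct, correct)

print(f"best single-layer result on XOR: {best_correct} of 4 correct")
```

No setting in the grid classifies all four cases, which matches the geometric argument: no single straight line separates the two XOR classes.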

This limitation became central to the perceptron controversy.

Researchers realized neural systems required hidden layers capable of performing non-linear mapping.
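As a small illustration of why a hidden layer helps, the sketch below wires up a two-layer network by hand that computes XOR. The weights are chosen manually for clarity (an assumption of this example); nothing is trained here.

```python
def step(total):
    """Hard threshold activation."""
    return 1 if total > 0 else 0

def xor_network(a, b):
    # Hidden layer: one unit behaves like OR, the other like NAND.
    hidden_or = step(a + b - 0.5)
    hidden_nand = step(-a - b + 1.5)
    # Output layer: AND of the two hidden units.
    return step(hidden_or + hidden_nand - 1.5)

xor_outputs = [xor_network(a, b) for a in (0, 1) for b in (0, 1)]
print(xor_outputs)
```

Each hidden unit draws its own straight line through the input space, and the output unit combines the two regions, which a single-layer network cannot do.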

That insight became the foundation of the multilayer perceptron.

Minsky, Papert, and the AI Crisis (1969)

In 1969, Marvin Minsky and Seymour Papert published the famous book Perceptrons.

The book mathematically criticized single-layer perceptrons.

The criticism dramatically slowed neural network research during the 1970s.

Many researchers abandoned neural systems entirely.

However, some scientists believed the solution was not abandoning neural networks but adding more layers.

This idea eventually revived neural research and shaped the future of multilayer perceptron history.

The Birth of Hidden Layers

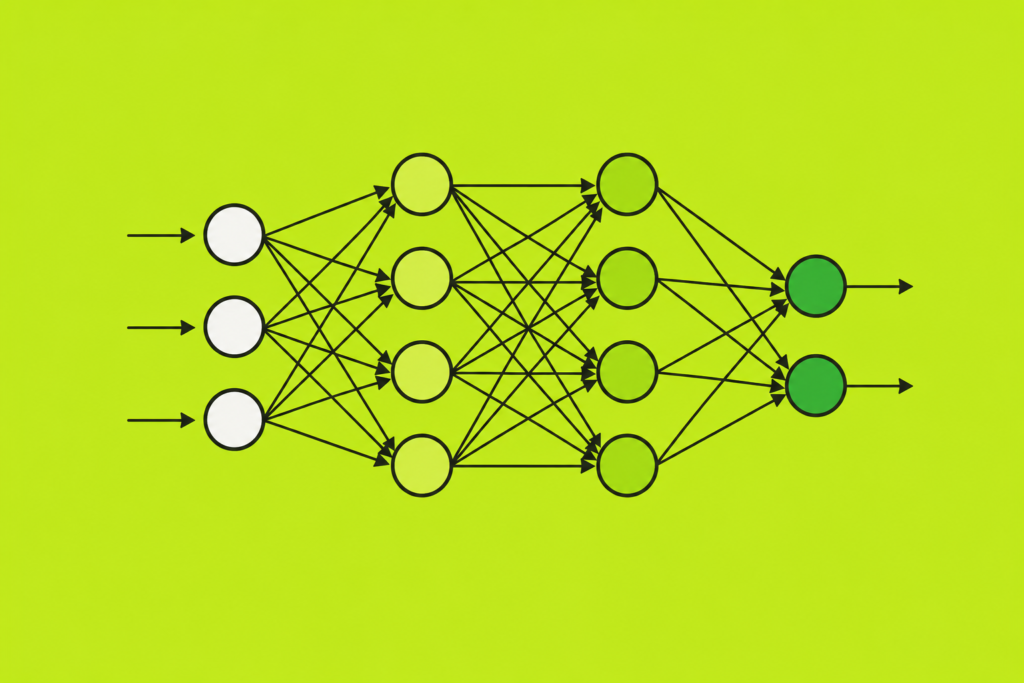

The defining breakthrough in multilayer perceptron history involved hidden layers.

Researchers realized networks could become more powerful by stacking neurons into multiple layers:

- Input layer

- Hidden layers

- Output layer

Hidden layers allowed networks to learn hierarchical representations.

This dramatically increased neural network capability.

The introduction of hidden layers enabled:

- Non-linear activation

- Complex pattern recognition

- Advanced decision boundaries

- Rich feature extraction

This breakthrough transformed neural networks forever.

Feedforward Neural Networks

The rise of feedforward neural systems became a major part of multilayer perceptron history.

In feedforward networks:

- Information flows forward only

- No loops exist

- Signals move layer by layer

In fully connected layers, every neuron connects to every neuron in the adjacent layers.
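A forward pass through such a network can be sketched in a few lines. The layer sizes (2 inputs, 3 hidden neurons, 1 output), the weight values, and the choice of a sigmoid activation are all illustrative assumptions.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases):
    """Fully connected layer: each output neuron sees every input."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def forward(x, layers):
    """Pass the signal through the layers in order, with no loops."""
    activation = list(x)
    for weights, biases in layers:
        activation = [sigmoid(z) for z in dense(activation, weights, biases)]
    return activation

# Hypothetical weights: one row per neuron, one column per incoming connection.
layers = [
    ([[0.5, -0.4], [0.3, 0.8], [-0.6, 0.1]], [0.0, 0.1, -0.1]),  # input -> hidden
    ([[0.7, -0.2, 0.4]], [0.05]),                                 # hidden -> output
]

output = forward([1.0, 0.0], layers)
print(output)
```

Adding another hidden layer only means appending another `(weights, biases)` pair whose row length matches the size of the layer before it.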

These architectures became the foundation for modern deep learning systems.

Feedforward multilayer perceptrons later powered many early AI applications.

Activation Functions and Non-Linearity

A major reason multilayer systems succeeded involved activation functions.

Without non-linear activation, stacked layers would collapse into a single linear transformation, no more expressive than a one-layer linear model.
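The point can be checked numerically. In the sketch below, two linear layers applied one after the other give exactly the same result as one layer whose weight matrix is their product. The matrices are arbitrary integer examples and biases are omitted for brevity.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def matmul(a, b):
    """Multiply matrix a by matrix b."""
    columns = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in columns] for row in a]

W1 = [[1, 2], [3, 4], [5, 6]]     # first layer: 2 inputs -> 3 units
W2 = [[1, 0, -1], [2, 1, 0]]      # second layer: 3 units -> 2 outputs
x = [1, -2]

stacked = matvec(W2, matvec(W1, x))     # two linear layers in sequence
collapsed = matvec(matmul(W2, W1), x)   # one equivalent linear layer

print(stacked, collapsed)
```

Inserting a non-linear function between the two layers breaks this equivalence, which is what gives depth its extra expressive power.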

Important activation functions included:

- Sigmoid functions

- Tanh

- ReLU

These functions enabled neural systems to perform non-linear mapping across hidden layers.
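For reference, the three functions listed above can be written with the standard library alone. This is a plain scalar sketch; real frameworks apply the same formulas to whole arrays at once.

```python
import math

def sigmoid(z):
    """Squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def tanh(z):
    """Squashes any real number into the range (-1, 1)."""
    return math.tanh(z)

def relu(z):
    """Passes positive values through and clips negatives to zero."""
    return max(0.0, z)

for z in (-2.0, 0.0, 2.0):
    print(f"z={z:+.1f}  sigmoid={sigmoid(z):.3f}  tanh={tanh(z):+.3f}  relu={relu(z):.1f}")
```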

Activation functions therefore became critical to the evolution of the multilayer perceptron.

Backpropagation and Neural Learning (1980 – 1990)

The biggest breakthrough in multilayer perceptron history occurred during the 1980s.

In 1986, David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized backpropagation as an efficient training method for multi-layer neural networks.

Backpropagation allowed error signals to travel backward through hidden layers.

Weights are updated using gradient descent optimization.
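In its common textbook form, the update can be written as shown here. The symbols are notation assumed for this illustration rather than taken from the article: $w$ is any weight in the network, $E$ is the training error, and $\eta$ is the learning rate.

```latex
w \leftarrow w - \eta \, \frac{\partial E}{\partial w}
```

Backpropagation is the procedure that supplies the partial derivative for every weight, including those in hidden layers.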

This training method transformed multilayer perceptrons into practical AI systems.
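The following is a minimal sketch of backpropagation for a network with one hidden layer, trained on XOR. The hidden size, learning rate, random seed, squared-error loss, and epoch count are assumptions chosen for illustration; depending on the starting weights, a run may or may not reach a perfect XOR solution.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Return hidden activations and the network output for one input."""
    w1, b1, w2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(w2, hidden)) + b2)
    return hidden, output

def mean_squared_error(params, data):
    return sum((forward(params, x)[1] - t) ** 2 for x, t in data) / len(data)

def backprop_step(params, data, lr):
    """One full-batch gradient descent step using backpropagated errors."""
    w1, b1, w2, b2 = params
    grad_w1 = [[0.0] * len(row) for row in w1]
    grad_b1 = [0.0] * len(b1)
    grad_w2 = [0.0] * len(w2)
    grad_b2 = 0.0
    for x, target in data:
        hidden, y = forward(params, x)
        # Error signal at the output unit (loss derivative w.r.t. its weighted sum).
        delta_out = 2.0 * (y - target) * y * (1.0 - y) / len(data)
        grad_b2 += delta_out
        for j, h in enumerate(hidden):
            grad_w2[j] += delta_out * h
            # Error signal passed backward to hidden unit j.
            delta_hidden = delta_out * w2[j] * h * (1.0 - h)
            grad_b1[j] += delta_hidden
            for i, xi in enumerate(x):
                grad_w1[j][i] += delta_hidden * xi
    # Gradient descent: move every parameter against its gradient.
    new_w1 = [[w - lr * g for w, g in zip(row, grow)]
              for row, grow in zip(w1, grad_w1)]
    new_b1 = [b - lr * g for b, g in zip(b1, grad_b1)]
    new_w2 = [w - lr * g for w, g in zip(w2, grad_w2)]
    new_b2 = b2 - lr * grad_b2
    return new_w1, new_b1, new_w2, new_b2

XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

random.seed(0)
HIDDEN = 3
params = (
    [[random.uniform(-1, 1) for _ in range(2)] for _ in range(HIDDEN)],
    [0.0] * HIDDEN,
    [random.uniform(-1, 1) for _ in range(HIDDEN)],
    0.0,
)

loss_before = mean_squared_error(params, XOR_DATA)
for _ in range(5000):
    params = backprop_step(params, XOR_DATA, lr=0.5)
loss_after = mean_squared_error(params, XOR_DATA)
print(f"loss before: {loss_before:.4f}, after: {loss_after:.4f}")
```

The key step is `delta_hidden`: the output error is scaled by the connecting weight and the hidden unit's own slope, which is how hidden neurons receive a training signal even though they have no target of their own.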

The revival dramatically accelerated neural network research worldwide.

Geoffrey Hinton and Neural Network Revival

One of the most important figures in multilayer perceptron history was Geoffrey Hinton.

Hinton's career became closely connected to the revival of neural networks.

Hinton strongly supported connectionism during periods of skepticism.

His work on deep architectures and distributed representations helped revive multilayer neural research.

Without researchers like Hinton, multilayer perceptrons might never have succeeded.

Universal Approximation Theorem

One of the most important discoveries in multilayer perceptron history was the Universal Approximation Theorem.

The theorem showed:

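A feedforward network with at least one hidden layer and a suitable non-linear activation can approximate any continuous function on a bounded input region to arbitrary accuracy, given enough hidden neurons.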
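One common way to state the result, following the form usually attributed to George Cybenko (1989), is sketched here. The notation is assumed for this illustration: $f$ is a continuous function on a compact set $K \subset \mathbb{R}^n$, $\sigma$ is a sigmoidal activation, and $N$ is the number of hidden neurons.

```latex
\forall \varepsilon > 0 \;\; \exists N,\ \alpha_i, b_i \in \mathbb{R},\ w_i \in \mathbb{R}^n :
\quad
\sup_{x \in K} \left| f(x) - \sum_{i=1}^{N} \alpha_i \, \sigma\!\left(w_i^{\top} x + b_i\right) \right| < \varepsilon
```

The statement guarantees that suitable weights exist; it does not say how many neurons are needed or how to find the weights by training.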

This result proved multilayer perceptrons possessed enormous theoretical power.

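The classical versions of the theorem, proved around 1989 by George Cybenko and by Kurt Hornik and colleagues, concern the width of a single hidden layer. Later work explored how added depth can represent some functions more efficiently.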

This theorem helped restore confidence in neural networks after the AI winters.

The Rise of Deep Learning (2000 – 2026)

Modern deep learning grew directly from multilayer perceptron history.

Researchers expanded multilayer architectures into:

- Convolutional neural networks

- Recurrent neural networks

- Transformers

This evolution is closely tied to the broader history of deep learning, of convolutional neural networks (CNNs), and of AlexNet.

Advanced GPUs allowed networks to train on massive datasets.

The rise of modern AI became possible because multilayer perceptrons introduced deep layered learning.

Why Layers Matter in Neural Networks

The key lesson from multilayer perceptron history is simple:

More layers allow neural networks to learn more abstract representations.

For example:

- Early layers detect edges

- Middle layers detect shapes

- Deep layers detect objects

This layered hierarchy became one of the biggest breakthroughs in AI history.

Some modern deep learning systems contain hundreds of layers, and experimental networks have exceeded a thousand.

Applications of Multilayer Perceptrons

The influence of multilayer perceptron history appears throughout modern AI.

Applications include:

- Medical diagnosis

- Speech recognition

- Image classification

- Financial prediction

- Robotics

- Generative AI

Even today's freely available AI tools rely heavily on layered neural architectures descended from multilayer perceptrons.

Multilayer Perceptrons and the Future

The future of AI still depends heavily on ideas from multilayer perceptron history.

Researchers continue exploring:

- Larger neural systems

- Better optimization

- More efficient architectures

- Neuromorphic hardware

Although architectures evolve, the core idea of layered neural learning remains central to artificial intelligence.

Frequently Asked Questions (FAQs)

What is a multilayer perceptron?

A multilayer perceptron is a feedforward neural network containing one or more hidden layers.

Why were hidden layers important?

Hidden layers allowed neural networks to solve non-linear and complex problems.

What problem did multilayer perceptrons solve?

They solved limitations of single-layer perceptrons, including the XOR problem.

How are multilayer perceptrons trained?

They are trained using backpropagation and gradient descent optimization.

Do modern AI systems still use multilayer perceptrons?

Yes. Many modern deep learning systems evolved directly from multilayer perceptron architectures.

Conclusion

The history of the multilayer perceptron represents one of the most important breakthroughs in artificial intelligence. By introducing hidden layers and non-linear learning, researchers transformed neural networks from simple linear classifiers into powerful systems capable of deep learning and complex pattern recognition.

From early perceptrons and backpropagation to modern transformers and generative AI, multilayer perceptrons became the foundation of modern neural computing. Their layered structure enabled neural networks to process information hierarchically and learn increasingly abstract representations.

Today, the legacy of the multilayer perceptron continues to shape the future of artificial intelligence, showing that adding layers turned neural networks into one of the most powerful technologies ever created.