The history of CNNs is one of the most fascinating stories in artificial intelligence and computer vision. Convolutional Neural Networks, commonly called CNNs, gave computers the ability to recognize faces, objects, handwriting, medical images, and even the road environments that self-driving vehicles navigate.

Today, CNNs power some of the most advanced AI applications in the world. However, the path to modern CNNs spans decades of scientific struggle, mathematical innovation, and technological breakthroughs.

From the early Neocognitron model created in Japan to the revolutionary success of AlexNet in 2012, convolutional neural networks reshaped artificial intelligence forever.

Along the way, CNN research introduced concepts such as:

- Local receptive fields

- Shared weights

- Pooling layers

- Feature maps

- Hierarchical vision processing

These ideas helped neural networks process visual information in a way loosely modeled on the visual cortex.

In this article, we will explore the history of CNNs, the scientists behind convolutional neural networks, their mathematical foundations, and how CNNs became the backbone of modern computer vision.

Neural Networks Before CNNs (1943 – 1979)

Before looking at CNNs themselves, we must first examine the earlier development of neural networks.

Warren McCulloch and Walter Pitts proposed the first mathematical model of an artificial neuron in 1943.

Later, in the late 1950s, Frank Rosenblatt introduced the perceptron, an early neural network that could learn its weights from examples.

Although perceptrons could recognize simple patterns, they struggled with complex image processing tasks.

Researchers realized traditional neural networks lacked efficient visual understanding mechanisms.

The challenge became:

How can machines recognize visual patterns the way humans do?

This question eventually led to the development of CNNs.

The Inspiration From the Human Brain

Much of the inspiration for CNNs came from neuroscience.

Neuroscientists, most notably David Hubel and Torsten Wiesel in their studies of the cat visual cortex, found that the visual cortex processes images hierarchically.

Different neurons respond to:

- Edges

- Shapes

- Motion

- Orientation

- Complex objects

Scientists wanted artificial neural systems that could imitate this biological structure.

The concept of hierarchical vision processing became foundational to convolutional neural networks.

Kunihiko Fukushima and the Neocognitron (1980)

The story of CNNs proper begins in 1980 with Kunihiko Fukushima.

Fukushima created the Neocognitron, one of the earliest convolutional neural architectures.

The Neocognitron introduced several revolutionary concepts:

- Local receptive fields

- Layered processing

- Shift invariance

- Hierarchical feature extraction

The design was inspired by biological visual systems.

Although the Neocognitron was not trained with backpropagation, it introduced many ideas later used in CNNs.

This breakthrough was one of the most important moments in the history of CNNs.

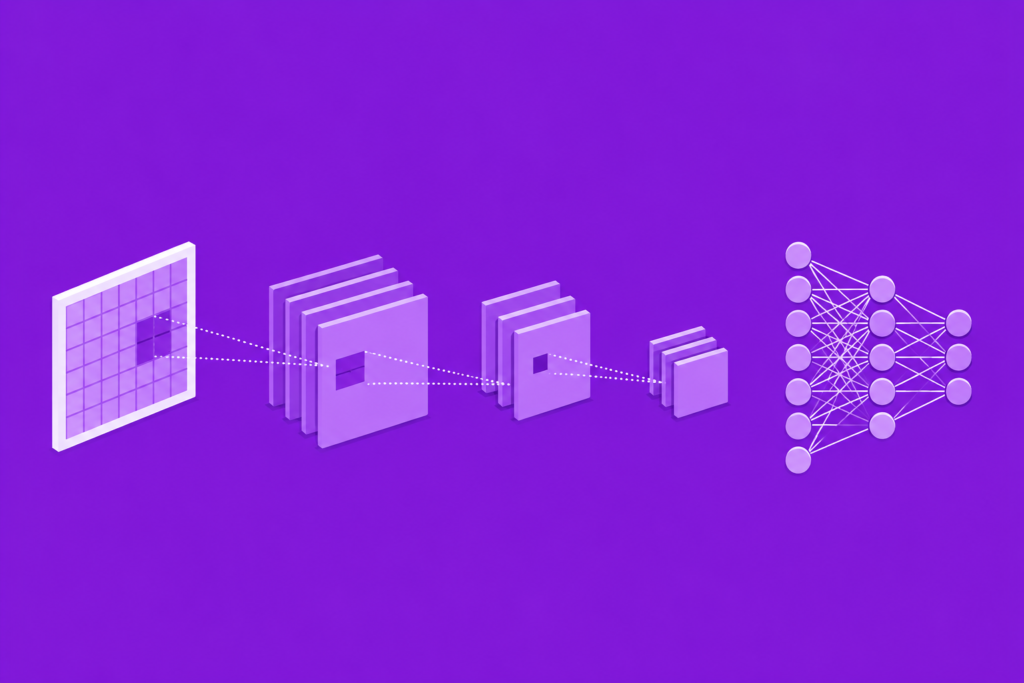

Understanding Convolutional Neural Networks

To follow the rest of this history, it helps to understand how CNNs work.

Convolutional neural networks process images through multiple layers.

Key components include:

Convolution Layers

These layers slide small filters, called kernels, across the image.

The convolution operation extracts patterns such as:

- Edges

- Shapes

- Textures
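The sliding-kernel idea can be sketched in a few lines of plain Python. This is a minimal illustration, not how any particular library implements it: the function name `conv2d`, the 4x4 image, and the hand-picked vertical-edge kernel are all assumptions made for the example. In a trained CNN the kernel values are learned rather than chosen by hand.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map.

    Like most deep learning code, this does not flip the kernel,
    so strictly speaking it computes a cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the image patch currently under the kernel.
            total = sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            row.append(total)
        feature_map.append(row)
    return feature_map


# Hypothetical 4x4 image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked kernel that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d(image, vertical_edge)
for row in feature_map:
    print(row)
```

The resulting feature map is nonzero only in the middle column, which is where the dark-to-bright edge sits in the image.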

Feature Maps

Convolution operations create feature maps that highlight important visual information.

Pooling Layers

Pooling reduces image dimensions while preserving essential patterns.
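As a rough sketch of the idea, the following plain-Python function performs 2x2 max pooling, one common pooling variant. The function name `max_pool2d` and the sample values are assumptions for illustration only.

```python
def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling: keep the largest value in each size x size block.

    Rows or columns that do not fill a complete block are dropped.
    """
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(
                feature_map[i * size + di][j * size + dj]
                for di in range(size)
                for dj in range(size)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# Hypothetical 4x4 feature map.
feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 1, 5, 6],
    [2, 2, 7, 3],
]

pooled = max_pool2d(feature_map)
print(pooled)  # [[4, 2], [2, 7]]
```

The 4x4 map shrinks to 2x2, yet the strongest response in each region survives.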

Fully Connected Layers

These layers combine the extracted features to make the final prediction.

This architecture allowed CNNs to process visual data far more efficiently than traditional neural systems.

The Importance of Shared Weights

One major breakthrough in the history of CNNs involved shared weights.

Fully connected networks need a separate weight for every connection between a pixel and a neuron, which leads to massive numbers of parameters for image processing.

CNNs solved this problem by reusing kernels across image regions.

Advantages included:

- Reduced memory usage

- Faster training

- Better spatial recognition

- Improved computational efficiency

Shared weights became one of the defining innovations in convolutional neural networks.
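A quick back-of-the-envelope calculation shows how large the saving can be. The sketch below compares a fully connected layer with a convolutional layer on the same input. The 224x224 RGB image size, the 64 units or filters, and the 3x3 kernel are hypothetical values chosen for illustration, and the helper names are not from any library.

```python
def dense_params(in_h, in_w, in_c, units):
    """Weights plus biases when every input value connects to every unit."""
    return in_h * in_w * in_c * units + units


def conv_params(kernel_h, kernel_w, in_c, filters):
    """Weights plus biases when each filter is reused at every image position."""
    return kernel_h * kernel_w * in_c * filters + filters


# Hypothetical layer sizes, chosen only for illustration.
dense = dense_params(224, 224, 3, units=64)
conv = conv_params(3, 3, 3, filters=64)

print(f"fully connected: {dense:,} parameters")  # 9,633,856
print(f"convolutional:   {conv:,} parameters")   # 1,792
```

The convolutional layer's parameter count does not depend on the image size at all, because the same small kernels are reused at every position.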

Backpropagation and CNN Training (1980 – 1990)

The rise of backpropagation dramatically accelerated the development of CNNs.

Backpropagation allowed neural systems to learn by minimizing prediction errors.

CNNs could now automatically adjust kernels and weights during training.

The learning process relies on gradient-based optimization: each weight is adjusted in the direction that reduces the prediction error.
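One standard form of such an update is the gradient descent rule, shown here as a sketch. The symbols are assumptions introduced for this example rather than notation from the article: $w$ is any trainable weight or kernel entry, $L$ is the loss that measures prediction error, and $\eta$ is the learning rate.

```latex
w \leftarrow w - \eta \, \frac{\partial L}{\partial w}
```

Backpropagation is the procedure that computes $\partial L / \partial w$ efficiently for every weight in the network by applying the chain rule layer by layer.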

This training method transformed CNNs into practical image recognition systems.

Yann LeCun and LeNet (1989 – 1998)

Another defining moment in the history of CNNs involved Yann LeCun.

LeCun developed LeNet, one of the earliest successful convolutional neural networks.

LeNet achieved impressive handwritten digit recognition performance.

Banks later used CNN systems for reading checks automatically.

LeNet demonstrated that convolutional neural networks could solve real-world computer vision problems.

Why CNNs Struggled During the 1990s

Despite promising results, CNN research faced serious obstacles during the 1990s.

Major problems included:

- Slow CPUs

- Limited datasets

- High computational cost

- Lack of GPU acceleration

During this difficult period, Support Vector Machines and other statistical learning methods became more popular than neural networks.

Many researchers temporarily abandoned CNN research.

The GPU Revolution (2000 – 2012)

The revival of CNNs became possible because of GPUs.

Modern graphics processors dramatically accelerated matrix operations and convolution calculations.

At the same time, the internet created enormous image datasets.

Researchers finally had:

- Large datasets

- Faster hardware

- Improved optimization

- Better neural architectures

The conditions for deep learning success were finally ready.

ImageNet and the CNN Revolution

One of the most important developments in the history of CNNs was ImageNet.

ImageNet is a massive image classification dataset containing millions of labeled images.

ImageNet allowed researchers to train large neural systems at unprecedented scale.

The dataset became essential to modern deep learning progress.

AlexNet Changes Everything (2012)

The defining moment in the history of CNNs occurred in 2012.

Alex Krizhevsky, Ilya Sutskever, and their advisor Geoffrey Hinton created AlexNet at the University of Toronto.

AlexNet won the 2012 ImageNet competition (ILSVRC) by a wide margin, dramatically outperforming traditional computer vision systems.

The model used:

- Deep convolutional layers

- ReLU activation

- GPU acceleration

- Dropout regularization
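Two of these ingredients, ReLU and dropout, are simple enough to sketch in plain Python. This is an illustration under stated assumptions, not AlexNet's actual code: the function names are hypothetical, and the dropout shown is the "inverted" variant common in current frameworks, which rescales the surviving values during training and may differ from the original AlexNet formulation.

```python
import random


def relu(values):
    """ReLU activation: pass positive values through, clamp negatives to zero."""
    return [max(0.0, v) for v in values]


def dropout(values, rate, rng):
    """Inverted dropout (an assumed variant): zero each value with
    probability `rate` and rescale survivors by 1 / (1 - rate)."""
    keep = 1.0 - rate
    return [v / keep if rng.random() < keep else 0.0 for v in values]


# Hypothetical pre-activation values.
pre_activations = [-2.0, -0.5, 0.0, 1.5, 3.0]

activations = relu(pre_activations)
print(activations)  # [0.0, 0.0, 0.0, 1.5, 3.0]

# A seeded generator keeps the random mask reproducible for this sketch.
rng = random.Random(0)
dropped = dropout(activations, rate=0.5, rng=rng)
print(dropped)
```

Dropout is applied only during training. At prediction time the layer passes all values through unchanged.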

The AI community suddenly realized deep learning could outperform older machine learning approaches.

AlexNet launched the modern deep learning revolution.

CNNs and Modern Computer Vision

The impact of CNNs can now be seen everywhere.

CNNs power:

- Facial recognition

- Self-driving vehicles

- Medical imaging

- Satellite analysis

- Security systems

- Robotics

Convolutional neural networks became the foundation of modern computer vision.

CNN Architectures After AlexNet

After AlexNet, researchers created even more advanced CNN architectures.

These included:

- VGGNet

- GoogLeNet

- ResNet

Modern CNN systems became deeper, faster, and more accurate.

These architectures also solved earlier optimization problems. ResNet's skip connections, for example, made it practical to train much deeper networks.

CNNs and Deep Learning Today

CNNs continue to influence modern AI systems today.

CNNs now work alongside:

- Transformers

- Recurrent neural networks

- Generative AI systems

Although newer architectures emerged, CNNs remain essential for visual processing tasks.

Many modern AI tools still depend on convolutional neural networks for visual tasks.

Frequently Asked Questions (FAQs)

What is a CNN in AI?

A CNN is a Convolutional Neural Network designed primarily for image and visual data processing.

Who invented CNNs?

Kunihiko Fukushima introduced early CNN ideas through the Neocognitron, while Yann LeCun helped popularize CNNs with LeNet.

Why are CNNs important?

CNNs revolutionized computer vision by enabling machines to recognize images efficiently.

What made AlexNet special?

AlexNet combined deep CNN layers, GPU acceleration, and improved training techniques to dominate ImageNet in 2012.

Are CNNs still used today?

Yes. CNNs remain essential in computer vision, medical imaging, robotics, and autonomous vehicles.

Conclusion

The history of CNNs is the story of one of the greatest breakthroughs in artificial intelligence. From Kunihiko Fukushima’s Neocognitron to Yann LeCun’s LeNet and the AlexNet model built by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, convolutional neural networks transformed machines into powerful visual recognition systems.

By introducing local receptive fields, shared weights, pooling layers, and hierarchical feature extraction, CNNs solved many challenges that traditional neural networks could not handle effectively. Their success launched the deep learning revolution and reshaped computer vision forever.

Today, the legacy of CNNs continues to power self-driving cars, medical diagnostics, robotics, image generation, and modern AI applications across the world.