The story of ResNet is the story of one of the most important breakthroughs in artificial intelligence and deep learning. Before ResNet appeared in 2015, many researchers believed extremely deep neural networks were impractical to train effectively.

As neural networks grew deeper, they faced serious problems:

- Vanishing gradients

- Training instability

- Accuracy degradation

- Optimization failure

Many researchers believed there was a practical limit to neural network depth.

Then everything changed.

A revolutionary neural architecture called ResNet showed the world that neural networks could become dramatically deeper while also becoming more accurate.

The rise of ResNet transformed computer vision, deep learning, and modern AI research.

Today, ResNet principles influence:

- Computer vision systems

- Self-driving cars

- Medical imaging

- Image recognition

- Deep learning research

In this article, we will explore the history of ResNet, how Microsoft’s research team overcame the limits on network depth, and why residual learning became revolutionary.

Neural Networks Before ResNet (1943 – 2014)

Before turning to ResNet itself, we must first examine earlier neural network development.

The first artificial neuron model appeared in 1943 through Warren McCulloch and Walter Pitts.

Later, Frank Rosenblatt introduced the perceptron during the 1950s.

Neural systems evolved gradually over decades, eventually leading to deep learning architectures.

The Rise of Deep Learning

The foundations of ResNet are closely tied to the deep learning revolution.

Researchers such as Geoffrey Hinton, Yann LeCun, and Yoshua Bengio helped revive neural network research.

These three researchers are often called the "godfathers of deep learning."

Deep convolutional neural networks began dominating image recognition tasks during the 2010s.

However, deeper networks introduced new problems.

The Problem With Deep Neural Networks

One of the main motivations behind ResNet was the difficulty of training very deep neural networks.

Researchers discovered that increasing neural layers did not always improve performance.

Instead, deeper models often performed worse, and not simply because of overfitting: their error on the training data was higher too.

This issue became known as the degradation problem.

Major challenges included:

- Vanishing gradients

- Optimization instability

- Training saturation

- Weak convergence

The vanishing gradient problem became especially serious in very deep architectures.

Researchers needed a revolutionary solution.

AlexNet and the Deep Learning Explosion (2012)

The rise of ResNet is closely tied to AlexNet.

In 2012, AlexNet shocked the AI community by dominating the ImageNet competition.

AlexNet proved deep convolutional neural networks could outperform traditional computer vision systems dramatically.

The success launched enormous AI investment worldwide.

However, researchers quickly realized deeper networks remained difficult to train effectively.

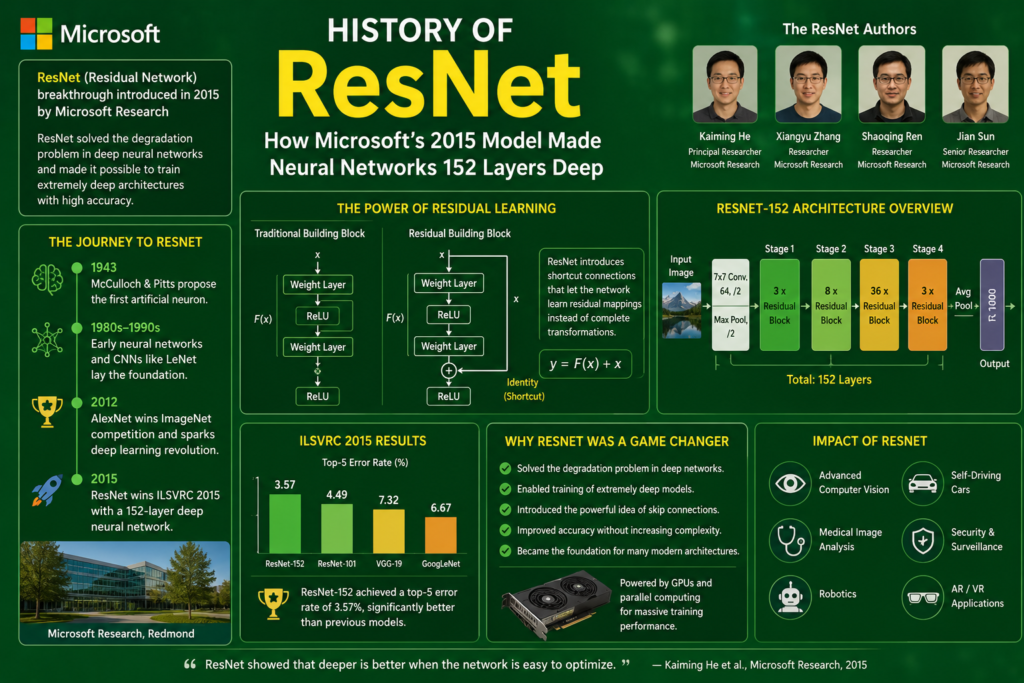

The Microsoft Research Team (2015)

The defining breakthrough came from researchers at Microsoft Research.

The main team included:

- Kaiming He

- Xiangyu Zhang

- Shaoqing Ren

- Jian Sun

Their research introduced Residual Networks, commonly called ResNet.

The architecture transformed deep learning forever.

What Is ResNet?

To fully understand ResNet's place in history, we must examine what made it revolutionary.

ResNet introduced a new concept called residual learning.

Instead of forcing neural layers to learn entire transformations directly, the network learned residual mappings.

The architecture used:

- Skip connections

- Identity mapping

- Residual blocks

These innovations dramatically improved training stability.

Understanding Skip Connections

The most important innovation in ResNet was the skip connection.

Traditional neural networks pass information sequentially from one layer to the next.

ResNet introduced shortcut pathways allowing information to bypass layers.

Mathematically:

$$y = F(x) + x$$

Where:

- $F(x)$ = learned transformation

- $x$ = original input

- $y$ = output of the residual block

This simple idea solved many deep learning problems.
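
The skip-connection idea, output equals learned transformation plus original input, can be sketched in a few lines of plain Python. This is a simplified illustration rather than the original implementation: the helper names (`relu`, `linear`, `residual_block`) are hypothetical, small dense layers stand in for convolutions, and batch normalization and the final activation are left out.

```python
def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, a) for a in v]

def linear(weights, v):
    """Multiply a square weight matrix (list of rows) by a vector."""
    return [sum(w * a for w, a in zip(row, v)) for row in weights]

def residual_block(x, w1, w2):
    """Return F(x) + x, where F is two linear layers with a ReLU between them."""
    fx = linear(w2, relu(linear(w1, x)))   # learned transformation F(x)
    return [f + a for f, a in zip(fx, x)]  # skip connection adds the input back

x = [1.0, -2.0, 0.5]
zeros = [[0.0] * 3 for _ in range(3)]
# With all-zero weights, F(x) is zero and the block reduces to the identity.
print(residual_block(x, zeros, zeros))  # [1.0, -2.0, 0.5]
```

The zero-weight case shows why extra residual blocks should not hurt in principle: a block that has learned nothing simply passes its input through unchanged.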

Why Skip Connections Worked

The brilliance of ResNet came from solving optimization difficulties elegantly.

Skip connections helped:

- Preserve gradients

- Improve information flow

- Reduce degradation

- Stabilize training

Residual learning allowed extremely deep networks to train successfully.

Researchers suddenly realized neural systems could become much deeper than previously believed.
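
A toy calculation can make the "preserve gradients" point concrete. The sketch below assumes a deliberately simplified model in which every layer is a scalar multiplication by the same small weight `w`; real networks use matrices and nonlinearities, so this illustrates the mechanism only. In a plain chain each layer multiplies the gradient by `w`, while in a residual chain each layer multiplies it by `w + 1`, because the derivative of `w * x + x` with respect to `x` is `w + 1`.

```python
def plain_chain_gradient(w, depth):
    """d(output)/d(input) for `depth` stacked scalar layers y = w * x."""
    grad = 1.0
    for _ in range(depth):
        grad *= w          # each layer scales the gradient by w
    return grad

def residual_chain_gradient(w, depth):
    """Same chain, but each layer is y = w * x + x, so its derivative is w + 1."""
    grad = 1.0
    for _ in range(depth):
        grad *= (w + 1.0)  # the "+ 1" comes from the skip connection
    return grad

w, depth = 0.01, 50
print(plain_chain_gradient(w, depth))     # vanishingly small (about 1e-100)
print(residual_chain_gradient(w, depth))  # stays of order 1 (about 1.64)
```

With 50 layers and a weight of 0.01, the plain chain's gradient all but disappears, while the residual chain's gradient stays close to 1 thanks to the identity path.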

ResNet 152 Layers Deep

The defining achievement of the ResNet work was ResNet-152.

The architecture successfully trained a neural network containing 152 layers.

At the time, this depth seemed almost impossible.

The system dramatically outperformed earlier architectures.

ResNet won the ImageNet classification competition (ILSVRC) in 2015, where an ensemble of residual networks reached a top-5 error of 3.57% on the test set.

This breakthrough shocked the AI community.
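
The figure of 152 can be checked with simple arithmetic. The sketch below assumes the commonly published stage configurations of the bottleneck-based ResNet variants (the per-stage block counts are not stated in this article) and counts only the initial convolution, the three convolutions inside each block, and the final fully connected layer. Shortcut projection layers are not counted, and the function name is hypothetical.

```python
# Bottleneck blocks per stage, as commonly reported for these variants.
STAGE_BLOCKS = {
    "ResNet-50": [3, 4, 6, 3],
    "ResNet-101": [3, 4, 23, 3],
    "ResNet-152": [3, 8, 36, 3],
}

def count_weighted_layers(blocks_per_stage, convs_per_block=3):
    """Initial conv + convs inside all blocks + final fully connected layer."""
    return 1 + convs_per_block * sum(blocks_per_stage) + 1

for name, blocks in STAGE_BLOCKS.items():
    print(name, count_weighted_layers(blocks))
```

For ResNet-152 this gives 1 + 3 × 50 + 1 = 152, which matches the name.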

The Role of Bottleneck Design

Another major innovation in ResNet was the bottleneck architecture.

Bottleneck blocks reduced computational complexity while preserving performance.

These blocks used:

- 1×1 convolutions

- 3×3 convolutions

- Feature compression

This design improved efficiency dramatically.

Without bottleneck structures, extremely deep networks would have become computationally impractical.
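
A rough parameter count shows where the savings come from. The sketch below assumes a 256-channel input compressed to 64 channels inside the block, and compares it with two full-width 3×3 convolutions. That comparison is chosen here for illustration only, biases and normalization parameters are ignored, and the function names are hypothetical.

```python
def conv_params(in_channels, out_channels, kernel_size):
    """Weight count of a square 2-D convolution, ignoring biases."""
    return in_channels * out_channels * kernel_size * kernel_size

def bottleneck_params(width, reduced):
    """1x1 reduce -> 3x3 at reduced width -> 1x1 restore."""
    return (conv_params(width, reduced, 1)
            + conv_params(reduced, reduced, 3)
            + conv_params(reduced, width, 1))

def plain_block_params(width):
    """Two stacked 3x3 convolutions at full width."""
    return 2 * conv_params(width, width, 3)

print(bottleneck_params(256, 64))  # 69632
print(plain_block_params(256))     # 1179648
```

Under these assumptions the bottleneck block needs roughly 17 times fewer weights, because the expensive 3×3 convolution runs on the compressed 64-channel features.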

ResNet and Computer Vision

The impact of ResNet on computer vision was enormous.

ResNet dramatically improved:

- Image recognition

- Object detection

- Medical imaging

- Facial recognition

- Scene understanding

Modern vision systems still rely heavily on residual architectures.

ResNet and GPU Computing

Another critical factor in ResNet's success was GPU acceleration.

Training 152-layer neural systems required enormous computational power.

Modern GPUs enabled:

- Faster matrix operations

- Parallel computation

- Deep CNN optimization

Without GPUs, ResNet training would likely have been impossible.

ResNet and Modern Deep Learning

The influence of ResNet spread rapidly across AI research.

Residual learning inspired:

- DenseNet

- EfficientNet

- Transformer architectures

- Advanced CNN systems

ResNet principles became foundational to modern neural network design.

Researchers realized skip connections could stabilize extremely deep learning systems.

The Academic Impact of ResNet

The release of ResNet created massive academic impact worldwide.

Researchers rapidly adopted residual learning for:

- Speech recognition neural networks

- Medical AI

- Reinforcement learning

- Generative AI

Residual architectures quickly became standard across deep learning research.

Why ResNet Became Revolutionary

Several reasons explain the importance of ResNet.

Solved Vanishing Gradients

Residual connections preserved gradients effectively.

Enabled Extreme Depth

Neural networks could finally exceed 100 layers successfully.

Improved Accuracy

Deeper networks achieved dramatically better performance.

Influenced Modern AI

Residual learning inspired many later architectures.

Together, these breakthroughs transformed deep learning forever.

ResNet and the Future of AI

The legacy of ResNet continues to shape artificial intelligence today.

Modern systems influenced by ResNet include:

- Autonomous vehicles

- Medical diagnosis systems

- Image generators

- Robotics

- Vision-language models

Even many of today's free AI tools depend on residual learning principles.

The architecture continues influencing new AI breakthroughs worldwide.

Frequently Asked Questions (FAQs)

What is ResNet?

ResNet is a deep neural network architecture introduced by Microsoft Research in 2015.

Why was ResNet important?

It solved deep learning problems using residual learning and skip connections.

What are skip connections?

Skip connections allow information to bypass layers and improve gradient flow.

How deep was ResNet?

ResNet-152 successfully trained a network containing 152 layers.

Did ResNet win ImageNet?

Yes. ResNet won the ImageNet competition in 2015 with remarkable accuracy.

Conclusion

The story of ResNet represents one of the greatest breakthroughs in the history of artificial intelligence. By introducing residual learning and skip connections, Microsoft’s research team overcame major deep learning limitations that had blocked progress for years.

ResNet proved neural networks could become dramatically deeper while remaining trainable and highly accurate. Its success transformed computer vision, influenced modern AI architectures, and accelerated the global deep learning revolution.

Today, the legacy of ResNet continues to power medical imaging, self-driving vehicles, image recognition systems, and advanced AI applications across the world.