The history of LeNet is the story of one of the great breakthroughs in artificial intelligence and computer vision. Long before modern AI systems could generate images, recognize faces, or power self-driving cars, one neural network quietly changed the direction of machine learning.

That neural network was LeNet.

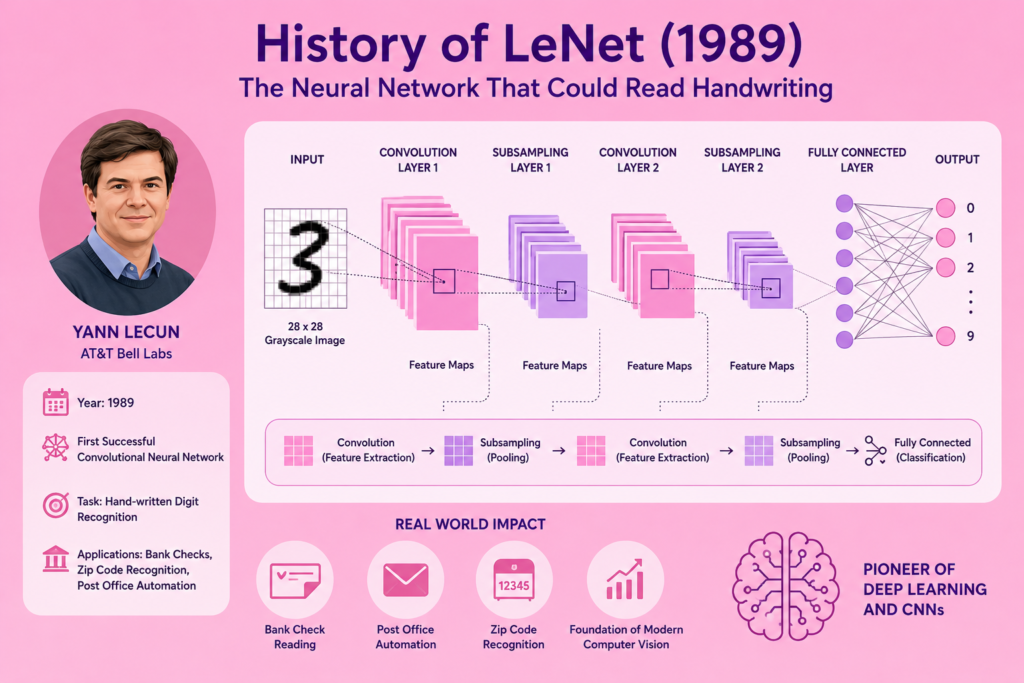

Created by Yann LeCun during the late 1980s, LeNet became one of the first successful convolutional neural networks capable of reading handwritten digits accurately.

The rise of LeNet helped move neural networks from experimental research projects into practical industrial systems.

LeNet brought together several ideas that are now standard, including:

- Convolutional architecture

- Feature extraction

- Shared weights

- Pooling layers

- Gradient-based learning

These innovations eventually became the foundation of modern deep learning and computer vision systems.

In this article, we will explore the history of LeNet, how it worked, why it became revolutionary, and how it helped launch the modern AI era.

Neural Networks Before LeNet (1943 – 1980)

Before turning to the history of LeNet, we must first examine the earlier development of neural networks.

The first artificial neuron model was proposed in 1943 by Warren McCulloch and Walter Pitts. Their work became foundational to later neural network research.

Later, in the late 1950s, Frank Rosenblatt introduced the perceptron.

Although perceptrons could solve simple tasks, they struggled badly with image processing and handwriting recognition.

Researchers needed more advanced neural architectures capable of handling visual data.

This challenge eventually led to LeNet.

The Early CNN Foundations

Another important step in the history of LeNet came from Kunihiko Fukushima.

In 1980, Fukushima introduced the Neocognitron.

This early neural system introduced important CNN concepts such as:

- Local receptive fields

- Hierarchical image processing

- Shift invariance

The Neocognitron became a major influence on later convolutional neural networks, including LeNet.

However, it was not trained with backpropagation and still lacked an efficient, general-purpose learning method.

Researchers still needed a practical neural architecture that could learn automatically and solve real-world problems.

Yann LeCun and the Bell Labs Revolution (1980 – 1989)

The defining breakthrough in the history of LeNet came through Yann LeCun.

During the 1980s, neural networks still faced lingering skepticism after the limitations of perceptrons became clear.

Many scientists believed neural systems were impractical.

However, LeCun continued researching gradient-based neural learning at AT&T Bell Labs.

His work focused on:

- Handwritten digit recognition

- Character recognition

- Image processing

- Neural optimization

LeCun strongly believed neural systems could eventually outperform traditional algorithms for visual tasks.

The Birth of LeNet (1989)

The most important moment in the history of LeNet occurred in 1989.

LeCun introduced LeNet, one of the earliest successful convolutional neural networks.

The system became revolutionary because it combined:

- Convolution layers

- Pooling layers

- Backpropagation

- Gradient descent optimization

LeNet processed grayscale images and learned patterns automatically.

This breakthrough transformed computer vision forever.

How LeNet Worked

To fully understand the history of LeNet, we must examine the architecture itself.

LeNet processed images through multiple neural layers.

Convolution Layers

These layers slid small learned kernels (also called filters) across the image to detect local visual patterns.

The system identified:

- Edges

- Shapes

- Curves

- Pixel structures
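As a rough illustration of how a kernel detects a pattern, the sketch below slides a 2×2 kernel over a tiny image. The image, the kernel values, and the function name `conv2d_valid` are hypothetical choices for this example; LeNet learned its kernels during training rather than using hand-picked ones.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # The same kernel weights are reused at every position (shared weights).
            total = sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            row.append(total)
        feature_map.append(row)
    return feature_map


# Toy 4x4 grayscale image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked kernel that responds to a dark-to-bright vertical edge (assumption).
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d_valid(image, vertical_edge)
for row in feature_map:
    print(row)  # each row is [0, 2, 0]: the response peaks where the edge sits
```

The feature map is zero over the flat regions and non-zero only in the column where the brightness changes, which is the sense in which a convolution layer "detects" an edge.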

Pooling Layers

Pooling reduced image size while preserving important information.

This improved model efficiency and reduced computational requirements.
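The size reduction can be sketched with a simple 2×2 average pool. This is a simplified stand-in: the function name `avg_pool_2x2` and the sample values are assumptions, and LeNet's own subsampling layers may have differed in detail.

```python
def avg_pool_2x2(feature_map):
    """Average each non-overlapping 2x2 block, halving height and width."""
    pooled = []
    for r in range(0, len(feature_map) - 1, 2):
        row = []
        for c in range(0, len(feature_map[0]) - 1, 2):
            block_sum = (
                feature_map[r][c] + feature_map[r][c + 1]
                + feature_map[r + 1][c] + feature_map[r + 1][c + 1]
            )
            row.append(block_sum / 4)
        pooled.append(row)
    return pooled


# Hypothetical 4x4 feature map.
feature_map = [
    [1, 3, 0, 0],
    [5, 7, 0, 4],
    [2, 2, 8, 8],
    [2, 2, 8, 8],
]

pooled = avg_pool_2x2(feature_map)
print(pooled)  # [[4.0, 1.0], [2.0, 8.0]]
```

Sixteen values become four, so every later layer has a quarter as many inputs to process, while strong responses in a region still show up in the pooled output.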

Fully Connected Layers

These layers combined the extracted features to produce the final prediction, with one output score per digit class.

LeNet became one of the earliest examples of deep layered feature extraction.
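To see how the three layer types fit together, the sketch below traces feature-map sizes through a LeNet-style stack. The specific sizes (a 32×32 input, 5×5 kernels, 6 and then 16 feature maps, 2×2 pooling) are taken from the later LeNet-5 variant and are not stated in this article, so treat them as assumptions. The names `LAYERS` and `trace_shapes` are hypothetical.

```python
# (kind, number of feature maps, kernel or window size), LeNet-5 style (assumption).
LAYERS = [
    ("conv", 6, 5),
    ("pool", 6, 2),
    ("conv", 16, 5),
    ("pool", 16, 2),
]


def trace_shapes(input_size=32):
    """Return (kind, maps, side length) after each layer for a square input."""
    size = input_size
    shapes = []
    for kind, maps, k in LAYERS:
        if kind == "conv":
            size = size - k + 1   # unpadded convolution, stride 1
        else:
            size = size // k      # non-overlapping pooling window
        shapes.append((kind, maps, size))
    return shapes


shapes = trace_shapes()
for kind, maps, size in shapes:
    print(f"{kind}: {maps} maps of {size}x{size}")

# Values handed to the fully connected layers after flattening.
_, last_maps, last_size = shapes[-1]
flattened = last_maps * last_size * last_size
print("flattened features:", flattened)  # 400

# Shared weights keep the first convolution small: 6 kernels of 5x5 plus 6 biases.
conv1_params = 6 * (5 * 5 + 1)
print("first conv layer parameters:", conv1_params)  # 156
```

Under these assumptions a 1,024-pixel image is reduced to 400 features before the fully connected layers make the final 10-way digit prediction.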

The Mathematics Behind LeNet

The success of LeNet depended heavily on mathematical optimization.

LeNet used:

- Weighted sums

- Feature maps

- Gradient calculations

- Backpropagation

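The network updated each weight by taking a small step against the gradient of the training error, using the standard gradient descent rule:

$$w \leftarrow w - \eta \, \frac{\partial E}{\partial w}$$

Here $w$ is a weight, $\eta$ is the learning rate, and $E$ is the error measured on the training examples.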

This allowed LeNet to improve predictions automatically during training.
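A minimal sketch of this kind of weight update, assuming a single weight, a squared-error loss, and a made-up dataset. LeNet applied the same idea to many weights at once through backpropagation; the function name, learning rate, and samples here are hypothetical.

```python
def train_single_weight(samples, learning_rate=0.1, epochs=50):
    """Fit y = w * x by gradient descent on the squared error 0.5 * (w*x - y)**2."""
    w = 0.0
    for _ in range(epochs):
        for x, y in samples:
            prediction = w * x
            error = prediction - y
            gradient = error * x            # derivative of the loss with respect to w
            w -= learning_rate * gradient   # step against the gradient
    return w


# Hypothetical data generated by the rule y = 2 * x.
samples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

w = train_single_weight(samples)
print(round(w, 4))  # 2.0
```

Each pass nudges the weight toward the value that makes the predictions match the targets, which is what "improving automatically during training" means in practice.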

LeNet and Handwriting Recognition

The biggest achievement in the history of LeNet involved handwritten digit recognition.

LeNet became highly effective at reading handwritten numbers on checks and documents.

Applications included:

- Bank check processing

- Postal mail sorting

- Zip code recognition

- Document automation

This became one of the first successful industrial uses of neural networks.

LeNet proved AI systems could solve real-world commercial problems.

The Role of the MNIST Dataset

Another important part of the history of LeNet involved training datasets.

LeNet later became strongly associated with the MNIST handwritten digit dataset.

The dataset contains 70,000 grayscale images of handwritten digits, 60,000 for training and 10,000 for testing, each 28×28 pixels.

MNIST became one of the most important benchmarks in machine learning history.

It allowed researchers to compare neural architectures fairly.

LeNet performed extremely well on handwritten digit classification tasks.

Hardware Constraints in 1989

Despite its success, LeNet faced major technological limitations.

During the late 1980s and early 1990s:

- CPUs were slow

- Memory was expensive

- GPUs for general-purpose computing did not yet exist

- Large datasets remained limited

Training neural networks required enormous computational effort.

These hardware bottlenecks slowed neural network progress dramatically.

Why Neural Networks Struggled Again

Even after LeNet’s success, neural networks entered another difficult period.

Alternative algorithms such as Support Vector Machines became more popular during the 1990s.

Many researchers temporarily abandoned deep learning research.

However, LeNet’s architecture continued influencing future CNN development quietly behind the scenes.

Geoffrey Hinton and the Deep Learning Revival

The survival of deep learning owes much to Geoffrey Hinton.

Hinton's career became closely tied to the neural network revival.

Hinton continued supporting:

- Deep learning

- Neural optimization

- Distributed representations

His persistence helped preserve neural research until hardware improved.

Without pioneers like Hinton and LeCun, modern AI might never have emerged.

AlexNet and the Validation of LeNet (2012)

The greatest validation of LeNet's approach came in 2012.

Geoffrey Hinton and his students Alex Krizhevsky and Ilya Sutskever introduced AlexNet.

AlexNet used many CNN principles pioneered by LeNet:

- Convolutional layers

- Pooling

- Hierarchical features

- Gradient-based optimization

The success of AlexNet proved LeCun’s earlier ideas were correct.

CNNs suddenly became dominant in artificial intelligence.

LeNet’s Influence on Modern AI

The influence of LeNet can now be seen everywhere.

Modern CNN systems power:

- Facial recognition

- Self-driving vehicles

- Medical imaging

- Robotics

- AI cameras

- Security systems

LeNet became the foundation of modern computer vision.

Yann LeCun’s Legacy

Today, Yann LeCun is recognized as one of the most influential AI researchers in history.

His work helped shape:

- Computer vision

- Deep learning

- Neural architectures

- AI research leadership

LeCun later received the 2018 Turing Award alongside Geoffrey Hinton and Yoshua Bengio.

The trio became widely known as the "godfathers of deep learning".

Their research transformed artificial intelligence forever.

LeNet and the Future of AI

The story of LeNet continues to influence AI development today.

Many modern AI tools that work with images still rely on CNN concepts pioneered by LeNet.

Although transformers and newer architectures now exist, convolutional neural networks remain essential for image processing.

The core ideas introduced by LeNet continue shaping the future of artificial intelligence.

Frequently Asked Questions (FAQs)

What is LeNet?

LeNet is one of the earliest successful convolutional neural networks, created by Yann LeCun.

Why was LeNet important?

LeNet proved neural networks could solve real-world handwriting recognition problems.

What was LeNet used for?

It was used for bank check reading, postal automation, and digit recognition.

Who created LeNet?

Yann LeCun developed LeNet at AT&T Bell Labs.

Is LeNet still important today?

Yes. Modern CNN systems evolved directly from LeNet’s architecture and ideas.

Conclusion

The history of LeNet represents one of the most important milestones in artificial intelligence. By combining convolution layers, pooling, gradient-based learning, and feature extraction, Yann LeCun created a neural architecture capable of solving real-world handwriting recognition tasks long before modern deep learning became popular.

LeNet proved neural networks could process visual information effectively and laid the foundation for modern computer vision systems. Later breakthroughs such as AlexNet and deep CNN architectures expanded these ideas even further, transforming artificial intelligence forever.

Today, the legacy of LeNet continues to power image recognition, robotics, medical AI, self-driving systems, and modern computer vision applications across the world.