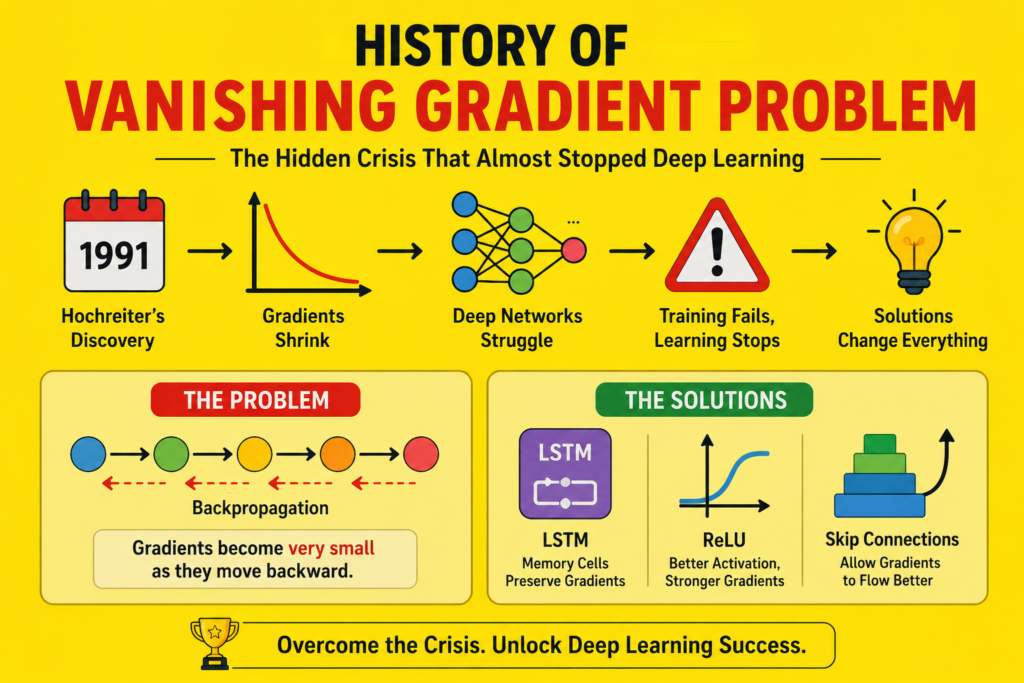

The vanishing gradient problem was one of the most serious obstacles in the history of artificial intelligence. As researchers built deeper neural networks, they ran into a hidden mathematical issue: the error signals used for training shrank as they traveled backward through the layers, so the earliest layers barely learned at all.

For years, this crisis slowed neural network progress dramatically.

Many researchers believed deep learning might never work properly.

The vanishing gradient problem nearly stopped progress in:

- Recurrent neural networks

- Deep neural systems

- Language processing

- Speech recognition

- Sequence learning

- Computer vision

Fortunately, researchers eventually discovered revolutionary solutions that transformed artificial intelligence forever.

In this article, we will explore the complete history of the vanishing gradient problem, why it became so dangerous, and how scientists solved one of the greatest crises in deep learning history.

Neural Networks Before the Crisis (1943 – 1980)

Before understanding the vanishing gradient problem, we must first examine early neural network history.

The first artificial neuron model was proposed in 1943 by Warren McCulloch and Walter Pitts.

Their work became foundational to later neural network research.

Later, in the late 1950s, Frank Rosenblatt introduced the perceptron, a model that learned its weights from labeled examples.

Early neural systems successfully recognized simple patterns, but deeper architectures remained extremely difficult to train.

The Rise of Backpropagation

A major milestone that set the stage for the vanishing gradient problem was backpropagation, popularized in 1986 by David Rumelhart, Geoffrey Hinton, and Ronald Williams.

Backpropagation computes how much each weight contributed to the prediction error, so every weight can be adjusted to reduce that error.

The training process relied heavily on gradient descent.

Understanding Gradient Descent

To fully understand the vanishing gradient problem, we must first examine gradient descent.

Gradient descent adjusts neural weights using derivatives:

$$w \leftarrow w - \eta \frac{\partial L}{\partial w}$$

Where:

- $w$ = neural weight

- $\eta$ = learning rate

- $L$ = loss function

This update rule became the foundation of neural network training.
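To make the update rule concrete, here is a minimal Python sketch that applies $w \leftarrow w - \eta\, \partial L / \partial w$ to a single weight. The quadratic loss $L(w) = (w - 3)^2$, its hand-written derivative, the starting weight, and the learning rate $\eta = 0.1$ are all illustrative assumptions, not part of any particular network.

```python
def loss(w: float) -> float:
    """Illustrative quadratic loss with its minimum at w = 3 (an assumption for this sketch)."""
    return (w - 3.0) ** 2


def grad(w: float) -> float:
    """Derivative dL/dw of the quadratic loss above."""
    return 2.0 * (w - 3.0)


def gradient_descent(w: float, learning_rate: float, steps: int) -> float:
    """Repeatedly apply w <- w - eta * dL/dw and return the final weight."""
    for _ in range(steps):
        w = w - learning_rate * grad(w)
    return w


w_final = gradient_descent(w=0.0, learning_rate=0.1, steps=100)
print(f"final weight: {w_final:.4f}, loss: {loss(w_final):.6f}")
```

Each step multiplies the distance to the minimum by $1 - 2\eta = 0.8$, so after 100 steps the weight is within about $10^{-9}$ of 3.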

However, deep architectures introduced unexpected mathematical problems.

What Is the Vanishing Gradient Problem?

The vanishing gradient problem occurs when gradients become extremely small during backpropagation.

As gradients move backward through many layers, the chain rule multiplies them by one factor per layer. When those factors are smaller than one, the gradient shrinks exponentially with depth.
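In the simplest case, a chain of scalar units $h_k = f(w_k h_{k-1})$ (notation assumed here for illustration), the chain rule makes this multiplication explicit:

$$\frac{\partial L}{\partial h_1} = \frac{\partial L}{\partial h_n} \prod_{k=2}^{n} \frac{\partial h_k}{\partial h_{k-1}} = \frac{\partial L}{\partial h_n} \prod_{k=2}^{n} w_k\, f'(w_k h_{k-1})$$

If every factor satisfies $|w_k\, f'(w_k h_{k-1})| \le \gamma < 1$, then $\left|\partial L / \partial h_1\right| \le \gamma^{\,n-1} \left|\partial L / \partial h_n\right|$, which decays exponentially in the depth $n$.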

Eventually, early layers stop learning entirely.

This creates severe optimization failure.

Deep networks become extremely difficult or impossible to train.

Why Sigmoid Functions Created Problems

A major cause of the vanishing gradient problem was the widespread use of sigmoid activation functions.

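The sigmoid equation is:

$$\sigma(x) = \frac{1}{1 + e^{-x}}$$

Its derivative is small everywhere and never exceeds 0.25:

$$\sigma'(x) = \sigma(x)\bigl(1 - \sigma(x)\bigr) \le \frac{1}{4}$$

Multiplying many of these small derivatives together, one per layer, drove gradients toward zero in deep networks.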
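The effect is easy to reproduce. This minimal sketch multiplies the sigmoid derivative once per layer; to isolate the activation's contribution, it assumes (hypothetically) that every weight equals 1 and every unit sees the same pre-activation.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid: 1 / (1 + e^(-x))."""
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_derivative(x: float) -> float:
    """sigma'(x) = sigma(x) * (1 - sigma(x)); its maximum is 0.25 at x = 0."""
    s = sigmoid(x)
    return s * (1.0 - s)


def gradient_scale(depth: int, pre_activation: float = 0.0) -> float:
    """Product of one sigmoid derivative per layer.

    Assumes every weight equals 1 and every unit sees the same
    pre-activation, so only the activation's derivative shrinks the gradient.
    """
    scale = 1.0
    for _ in range(depth):
        scale *= sigmoid_derivative(pre_activation)
    return scale


for depth in (1, 5, 10, 20):
    print(f"{depth:>2} layers: gradient scaled by {gradient_scale(depth):.2e}")
```

Even at $x = 0$, where the derivative is largest, ten layers scale the gradient by $0.25^{10} \approx 9.5 \times 10^{-7}$.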

This became one of the biggest obstacles in neural network history.

Hochreiter’s 1991 Discovery

One defining moment in the history of the vanishing gradient problem came from Sepp Hochreiter.

In his 1991 thesis, Hochreiter mathematically analyzed why recurrent neural networks struggled with long-term dependencies.

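He showed that:

- Error signals propagated backward through time shrink or grow exponentially with the number of steps

- Information from distant inputs has almost no effect on weight updates

- Learning long-term dependencies becomes extremely difficult with standard gradient training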

This discovery became one of the most important findings in deep learning history.

Deep Networks Struggled to Learn

The vanishing gradient problem created severe training bottlenecks.

Researchers discovered:

- Early neural layers stopped updating

- Learning became extremely slow

- Deep networks performed poorly

- Optimization failed frequently

As a result, many researchers turned away from deep neural networks during the 1990s.

The Crisis in Recurrent Neural Networks

The vanishing gradient problem was especially damaging for recurrent neural networks (RNNs).

RNNs process sequential information over time.

During Backpropagation Through Time (BPTT), the network is unrolled across the sequence, so the gradient is multiplied by one factor per time step.

This caused long-term memory to disappear rapidly.

RNNs could not remember distant information effectively.
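A scalar RNN makes the BPTT product easy to see. This minimal sketch assumes a hypothetical one-unit network $h_t = \tanh(w h_{t-1} + u x_t)$ with made-up weights; it runs the forward pass, then multiplies the local derivative $w\,(1 - h_t^2)$ once per time step, as backpropagation through time does.

```python
import math


def bptt_gradient(w: float, u: float, inputs: list[float], h0: float = 0.0) -> float:
    """Return dh_T/dh_0 for the scalar RNN h_t = tanh(w * h_{t-1} + u * x_t).

    The forward pass stores each hidden state; the backward pass multiplies
    one local derivative w * (1 - h_t**2) per time step.
    """
    # Forward pass: unroll the recurrence and keep every hidden state.
    states = []
    h = h0
    for x in inputs:
        h = math.tanh(w * h + u * x)
        states.append(h)

    # Backward pass: chain rule through time, one factor per step.
    grad = 1.0
    for h_t in reversed(states):
        grad *= w * (1.0 - h_t ** 2)
    return grad


# Hypothetical settings: recurrent weight 0.9, input weight 0.5, constant input.
for steps in (5, 20, 50):
    g = bptt_gradient(w=0.9, u=0.5, inputs=[1.0] * steps)
    print(f"{steps:>2} steps back: dh_T/dh_0 = {g:.2e}")
```

Because every factor here is smaller than $|w| = 0.9$, the gradient from 50 steps back is below $0.9^{50} \approx 5 \times 10^{-3}$, and in practice far smaller.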

Long-Term Dependency Failure

One major effect of the vanishing gradient problem was the failure to learn long-term dependencies.

For example:

“The boy who went to school yesterday played football today.”

To link “played” back to its subject “The boy”, a model must carry information across the intervening clause.

Traditional RNNs failed to preserve information over long sequences.

This severely limited:

- Language processing

- Translation systems

- Sequential prediction

- Speech recognition

Gradient Explosion

Another major issue closely related to the vanishing gradient problem was exploding gradients.

When the repeated factors were larger than one, gradients grew exponentially instead of shrinking.

This caused:

- Numerical instability

- Unstable training

- Weight explosion

- Optimization collapse

Researchers struggled with both mathematical extremes simultaneously.
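Both extremes come from the same repeated multiplication. In this minimal sketch, a hypothetical linear recurrence $h_t = w\, h_{t-1}$ contributes exactly one factor of $w$ per step, so the gradient after $T$ steps is $w^T$.

```python
def recurrent_gradient(w: float, steps: int) -> float:
    """dh_T/dh_0 for the linear recurrence h_t = w * h_{t-1}: one factor of w per step."""
    grad = 1.0
    for _ in range(steps):
        grad *= w
    return grad


# Hypothetical recurrent weights just below, at, and just above 1.
for w in (0.9, 1.0, 1.1):
    print(f"w = {w}: after 100 steps, gradient = {recurrent_gradient(w, 100):.3e}")
```

A weight only 10% away from 1 changes the gradient by more than four orders of magnitude over 100 steps, in one direction or the other.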

The Neural Network Decline of the 1990s

The vanishing gradient problem contributed indirectly to a broad loss of interest in neural networks.

Many researchers believed neural networks were fundamentally flawed.

Funding declined sharply.

Other approaches, such as support vector machines, dominated machine learning research for years.

Deep learning nearly disappeared from mainstream AI research.

The LSTM Solution (1997)

The first major solution to the vanishing gradient problem came from Long Short-Term Memory (LSTM) networks.

Sepp Hochreiter and Jürgen Schmidhuber introduced LSTMs in 1997.

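Their architecture addressed gradient decay using:

- Memory cells

- Input gates

- Output gates

- Constant Error Carousel

Forget gates, now a standard LSTM component, were added shortly afterward by Felix Gers, Jürgen Schmidhuber, and Fred Cummins (1999–2000).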

These innovations stabilized gradient flow dramatically.

How LSTMs Solved the Problem

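LSTMs preserved information using specialized memory structures.

The Constant Error Carousel, a memory cell whose self-connection has a fixed weight of 1.0, lets error signals flow backward through time without shrinking, while the gates learn when to write to and read from the cell.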

This allowed neural systems to learn:

- Long sequences

- Speech patterns

- Temporal information

- Language dependencies

LSTMs transformed sequence learning forever.
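The difference shows up in the per-step factor along the memory cell. This minimal sketch looks only at the direct cell-state path and ignores the gates' own dependence on earlier states; the gate pre-activation and the plain-RNN factor of 0.5 are hypothetical values chosen for illustration.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid used for LSTM gates."""
    return 1.0 / (1.0 + math.exp(-x))


def path_gradient(per_step_factors: list[float]) -> float:
    """Multiply one local derivative per time step along a single path, as BPTT does."""
    grad = 1.0
    for factor in per_step_factors:
        grad *= factor
    return grad


steps = 100

# Original 1997 CEC: the cell's self-connection has fixed weight 1.0,
# so dc_t/dc_{t-1} = 1 and the error signal is carried unchanged.
cec = path_gradient([1.0] * steps)

# Later LSTMs with a forget gate: c_t = f_t * c_{t-1} + i_t * g_t, so the
# direct-path factor is f_t. A gate held open (hypothetical pre-activation 5.0)
# keeps f_t close to 1.
forget_open = path_gradient([sigmoid(5.0)] * steps)

# A plain recurrent path whose per-step factor is 0.5 (hypothetical value).
plain_rnn = path_gradient([0.5] * steps)

print(f"CEC:              {cec:.3e}")
print(f"open forget gate: {forget_open:.3e}")
print(f"plain RNN path:   {plain_rnn:.3e}")
```

Over 100 steps the CEC path keeps the signal intact, an open forget gate keeps about half of it, and the plain path loses roughly 30 orders of magnitude.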

ReLU Activation Functions

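Another revolutionary solution to the vanishing gradient problem was the ReLU (rectified linear unit) activation function.

The ReLU equation is:

$$\mathrm{ReLU}(x) = \max(0, x)$$

Unlike the sigmoid, ReLU has a derivative of exactly 1 for every positive input (and 0 for negative inputs), so gradients passing through active units are not shrunk at each layer.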

This dramatically improved deep network training.
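To compare the two activations, this minimal sketch multiplies each one's derivative across 20 layers. The pre-activation of 1.0 at every layer is a hypothetical choice, and weights are ignored, so only the activation's contribution is measured.

```python
import math


def sigmoid_derivative(x: float) -> float:
    """Derivative of the logistic sigmoid; never larger than 0.25."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s)


def relu_derivative(x: float) -> float:
    """Derivative of ReLU(x) = max(0, x): 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0


def activation_product(derivative, pre_activations: list[float]) -> float:
    """Multiply the activation derivative at each layer's pre-activation."""
    product = 1.0
    for x in pre_activations:
        product *= derivative(x)
    return product


# Hypothetical pre-activations: 20 layers whose units all receive the input 1.0.
layers = [1.0] * 20
print(f"sigmoid: {activation_product(sigmoid_derivative, layers):.3e}")
print(f"ReLU:    {activation_product(relu_derivative, layers):.3e}")
```

The same code also shows ReLU's trade-off: any layer whose input is negative contributes a factor of 0 and blocks the gradient on that path.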

GPUs and Deep Learning Revival

Progress on these solutions accelerated with the rise of GPU computing.

GPUs enabled:

- Faster matrix operations

- Large-scale training

- Deep architecture optimization

Combined with ReLU and LSTM innovations, deep learning finally became practical.

CNNs and Stable Deep Architectures

Another important milestone in solving the vanishing gradient problem came from research on deep convolutional neural networks.

ResNet, introduced in 2015 by Kaiming He and colleagues, added skip (residual) connections that pass each block's input directly to its output. These identity paths give gradients a direct route backward across many layers.

This allowed neural networks to grow extremely deep.
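Real ResNet blocks are convolutional layers operating on tensors; this scalar sketch assumes a hypothetical layer $F(x) = 0.5 \tanh(x)$ just to isolate what the skip connection does to the per-layer derivative, turning $F'(x)$ into $1 + F'(x)$.

```python
import math


def layer(x: float) -> float:
    """Hypothetical small transformation F(x) = 0.5 * tanh(x)."""
    return 0.5 * math.tanh(x)


def layer_derivative(x: float) -> float:
    """dF/dx = 0.5 * (1 - tanh(x)**2), which is at most 0.5."""
    return 0.5 * (1.0 - math.tanh(x) ** 2)


def stack_gradient(depth: int, residual: bool, x: float = 1.0) -> float:
    """Gradient of the final output with respect to the input of a deep stack.

    Plain layer:    h_k = F(h_{k-1})            -> factor F'(h_{k-1})
    Residual layer: h_k = h_{k-1} + F(h_{k-1})  -> factor 1 + F'(h_{k-1})
    """
    grad = 1.0
    h = x
    for _ in range(depth):
        # Local derivative at this layer's input, then the forward step.
        local = layer_derivative(h)
        grad *= (1.0 + local) if residual else local
        h = (h + layer(h)) if residual else layer(h)
    return grad


print(f"plain, 50 layers:    {stack_gradient(50, residual=False):.3e}")
print(f"residual, 50 layers: {stack_gradient(50, residual=True):.3e}")
```

Here the residual product stays of order 1, while the plain stack's product falls below $0.5^{50} \approx 9 \times 10^{-16}$.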

Transformers and Modern AI

The influence of the vanishing gradient problem still appears in modern AI systems.

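Transformers, introduced in 2017, replaced recurrence with self-attention, which connects every position in a sequence directly to every other position. Information no longer has to survive a long chain of per-step multiplications, which removes many of the sequential learning limitations of RNNs.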

Modern AI architectures evolved partly because researchers spent decades solving gradient instability problems.
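The core operation, scaled dot-product attention, can be sketched for a single query in plain Python. The vectors below are hypothetical, and real Transformers add learned projections, multiple heads, residual connections, and layer normalization. The point of the sketch is that the first token reaches the output through one weighted sum rather than a chain of per-step multiplications.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(
    query: list[float], keys: list[list[float]], values: list[list[float]]
) -> tuple[list[float], list[float]]:
    """Scaled dot-product attention for a single query.

    Every key position is scored directly, however far back it is.
    Returns the attention weights and the weighted sum of the values.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, output


# Hypothetical 2-d vectors for a 6-token sequence; the first token's key
# matches the query, so the query can attend to it directly.
keys = [[4.0, 0.0]] + [[0.0, 1.0]] * 5
values = [[1.0, 0.0]] + [[0.0, 1.0]] * 5
query = [4.0, 0.0]

weights, output = attention(query, keys, values)
print("weights:", [round(w, 3) for w in weights])
print("output:", [round(x, 3) for x in output])
```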

Deep Learning Finally Succeeds

The eventual solutions to the vanishing gradient problem transformed artificial intelligence completely.

Modern systems now achieve breakthroughs in:

- Natural language processing

- Computer vision

- Speech recognition

- Generative AI

- Autonomous systems

Today, even everyday AI tools depend heavily on solutions developed to overcome gradient instability.

Why the Vanishing Gradient Problem Was So Important

Several reasons explain the importance of the vanishing gradient problem.

Nearly Stopped Deep Learning

Researchers lost confidence in neural networks for years.

Revealed Mathematical Limits

The problem exposed weaknesses inside deep architectures.

Inspired Revolutionary Solutions

LSTMs, ReLU, and ResNet emerged partly because of this crisis.

Enabled Modern AI

Solving gradient instability made deep learning practical.

Together, these breakthroughs transformed artificial intelligence forever.

Frequently Asked Questions (FAQs)

What is the vanishing gradient problem?

It occurs when gradients become extremely small during backpropagation in deep neural networks.

Why was the vanishing gradient problem dangerous?

It prevented deep networks from learning effectively.

Who discovered the vanishing gradient problem?

Sepp Hochreiter formally analyzed the problem in 1991.

How was the problem solved?

Solutions included LSTM networks, ReLU activation functions, and residual connections.

Does the vanishing gradient problem still exist?

Yes, but modern architectures reduce its effects significantly.

Conclusion

The story of the vanishing gradient problem represents one of the most important crises in artificial intelligence history. This hidden mathematical issue nearly stopped deep learning progress by making deep neural networks impossible to train effectively.

For years, researchers struggled with gradient decay, unstable optimization, and long-term dependency failures. However, groundbreaking innovations such as LSTMs, ReLU activation functions, GPU acceleration, and residual networks eventually solved many of these limitations.

Today, the legacy of the vanishing gradient problem continues shaping modern AI systems, deep learning architectures, language models, and neural network research across the world.